Create a Hologres internal table in the DataWorks console to store and query MaxCompute data. Internal tables physically store data in Hologres, which delivers faster query performance than external tables.

To create a Hologres internal table using SQL instead, see CREATE TABLE.

Prerequisites

Before you begin, make sure you have:

A Hologres computing resource added to your workspace and associated with DataStudio. For details, see DataStudio (old version): Associate a Hologres computing resource.

The Workspace Manager or Development role. For details, see Manage permissions on workspace-level services.

Region support

Creating a Hologres internal table in the DataWorks console is supported only in the China (Shanghai) and China (Beijing) regions.

Internal tables vs. external tables

Hologres tables come in two types:

| Type | Stores MaxCompute data | Best for |
|---|---|---|
| Internal table | Yes | Fast OLAP queries and analysis |
| External table | No (maps to MaxCompute data in place) | Queries without data import overhead |

Use internal tables when query speed matters. Use external tables when you want to avoid copying data into Hologres.

Create a Hologres internal table

Step 1: Create a workflow (optional)

Skip this step if you already have a workflow.

Hover over the icon and select Create Workflow.

In the Create Workflow dialog box, enter a Workflow Name.

Click Create.

Step 2: Create the table

Hover over the

icon and choose Create Table > Hologres > Table.

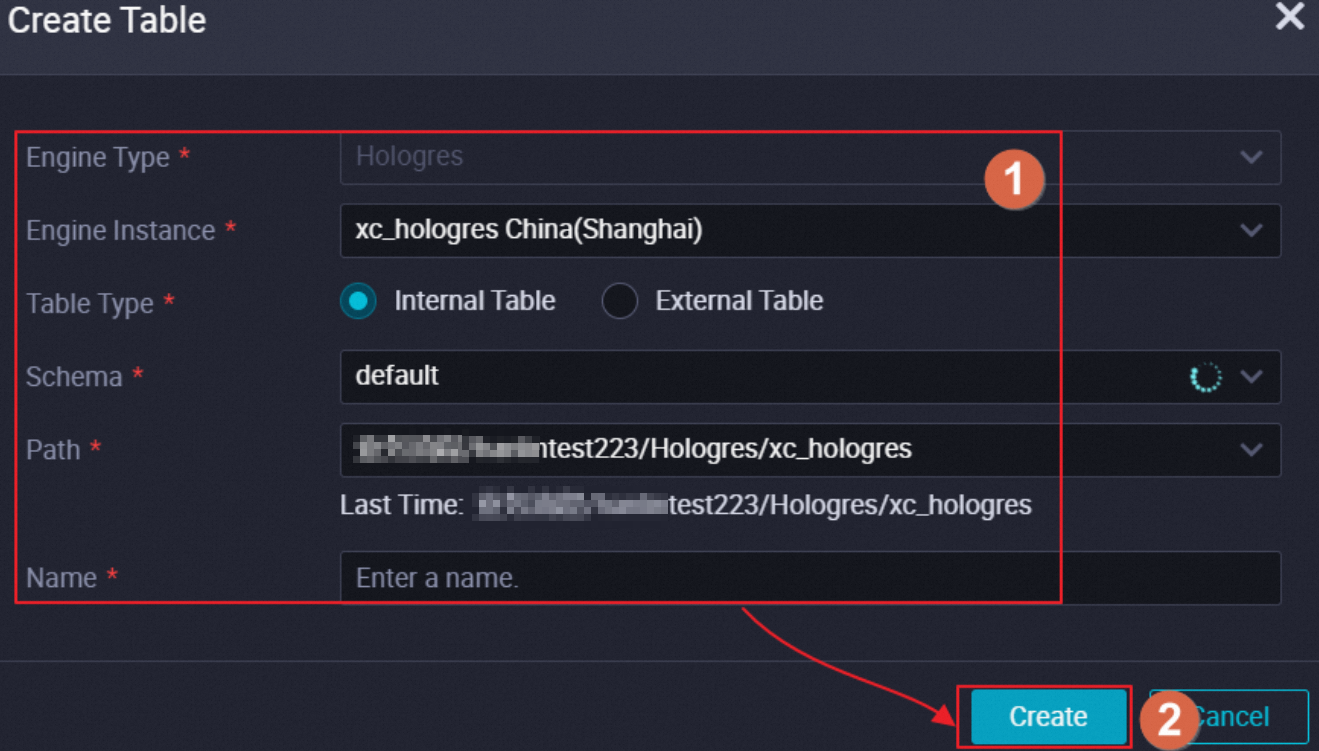

icon and choose Create Table > Hologres > Table.In the Create Table dialog box, set Table Type to Internal Table and configure Engine Instance, Path, and Name.

Step 3: Configure the table

On the table configuration tab, set parameters across three sections.

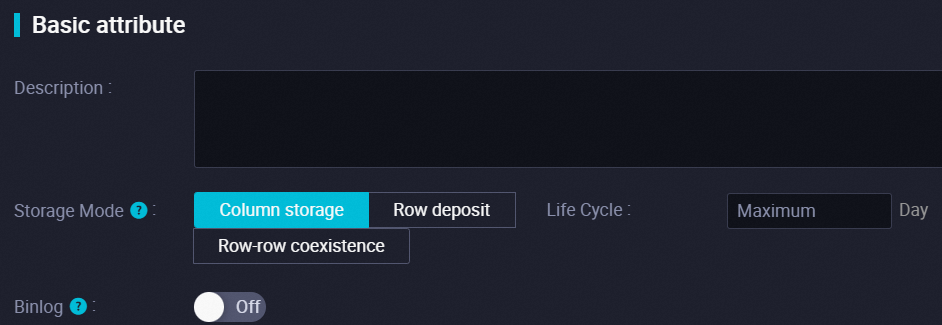

General

| Parameter | Description |
|---|---|
| Storage mode | The storage layout of the table. Default: Column storage (columnar storage). See details below. |
| Life cycle | The time-to-live (TTL) for the table data, in seconds. Default: permanent. The TTL countdown starts when data is first written. Expired data is deleted at some point after the TTL elapses, not at the exact expiry time. |
| Binlog | Enables binary logging for the table. When enabled, configure Binlog Lifecycle (default: permanent). Only Hologres V0.9 and later support subscription to the binary logs of a single table. For details, see Subscribe to Hologres binary logs. |

Storage mode options:

Column storage (columnar storage): Optimized for online analytical processing (OLAP). Supports complex queries, joins, scans, filtering, and aggregations. Insert and update performance is lower than row-oriented storage.

Row storage (row-oriented storage): Optimized for key-value lookups and supports point queries and scans based on primary keys. Insert and update performance is higher than columnar storage.

Row-column coexistence (hybrid row-column storage): Supports both point queries and OLAP. Incurs higher storage costs and internal synchronization overhead.

For details on storage modes, see the orientation parameter in CREATE TABLE.
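As a rough illustration of what the General settings amount to, the sketch below turns them into the `CALL set_table_property` statements that the SQL route would use. The helper names, the `public.orders` table, and the short mode keys are hypothetical. The property names (`orientation`, `time_to_live_in_seconds`, `binlog.level`, `binlog.ttl`) are assumed from the Hologres CREATE TABLE reference and should be checked against your instance version. It also shows the day-to-second conversion that the Life cycle field requires.

```python
# Hypothetical helper: maps the console's General settings to the Hologres
# table properties they are assumed to correspond to.
SECONDS_PER_DAY = 24 * 60 * 60

# Short mode key -> value of the `orientation` property (assumed mapping).
ORIENTATION = {
    "column": "column",      # columnar storage (default)
    "row": "row",            # row-oriented storage
    "hybrid": "row,column",  # hybrid row-column storage
}


def days_to_ttl_seconds(days):
    """Convert a retention period in days to the seconds the TTL expects."""
    if days <= 0:
        raise ValueError("retention must be a positive number of days")
    return days * SECONDS_PER_DAY


def general_properties(table, storage_mode="column", ttl_days=None,
                       binlog=False, binlog_ttl_days=None):
    """Return CALL set_table_property statements for the General settings."""
    props = [("orientation", ORIENTATION[storage_mode])]
    if ttl_days is not None:  # None keeps the default: permanent
        props.append(("time_to_live_in_seconds",
                      str(days_to_ttl_seconds(ttl_days))))
    if binlog:
        props.append(("binlog.level", "replica"))
        if binlog_ttl_days is not None:  # None keeps the default: permanent
            props.append(("binlog.ttl",
                          str(days_to_ttl_seconds(binlog_ttl_days))))
    return [
        f"CALL set_table_property('{table}', '{name}', '{value}');"
        for name, value in props
    ]


# Example: hybrid storage, 30-day data TTL, binlog kept for 7 days.
for statement in general_properties("public.orders", "hybrid", ttl_days=30,
                                    binlog=True, binlog_ttl_days=7):
    print(statement)
```

With these inputs, the Life cycle value is 30 × 86,400 = 2,592,000 seconds. In Hologres SQL, such calls are normally issued in the same transaction as the CREATE TABLE statement.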

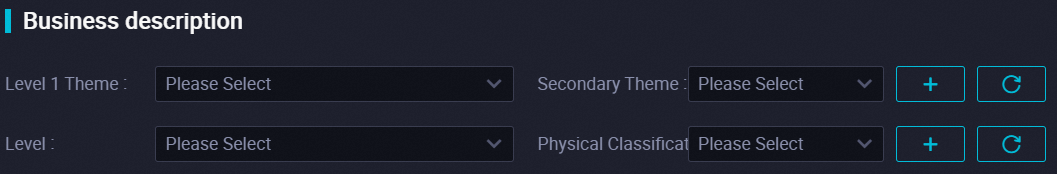

Physical model

Physical model settings are for table management and organization only. They do not affect the underlying table logic.

| Parameter | Description |
|---|---|
| Theme | The level-1 and level-2 folders that categorize the table by business domain. Folders are managed in DataWorks. |
| Layer | The data layer the table belongs to (for example, data import, data sharing, or data analysis). For details on managing data layers, see Manage settings for tables. |
| Category | The business category of the table (for example, basic service, advanced service). For details on managing categories, see Manage settings for tables. |

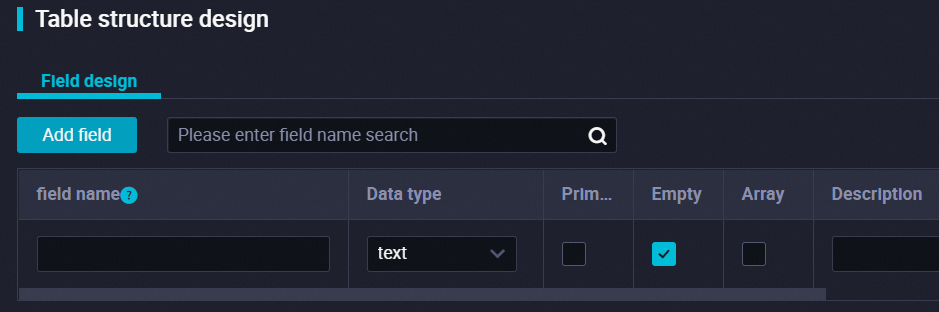

Schema

| Tab | Description |
|---|---|
| Field design | Add and define table fields. For supported data types, see Data types. |
| Storage design | Configure how data is physically stored and indexed. See storage design options below. |
| Partition | Define a partition field. If the table has a primary key, the primary key must include the partition field. |

Storage design options:

Distribution column: The distribution key used to distribute data across shards for computing and scanning.

Segmented column: A time-type column used as the event time column. When a query filters on this column, Hologres uses it to locate the files that store the matching data. Suitable for logs, traffic data, and time-series workloads.

Cluster column: Columns for which Hologres builds clustered indexes. Data is sorted on these columns, which accelerates range and filter queries.

Dictionary encoding column: Columns for which Hologres builds dictionary mappings. The mappings turn string comparisons into numeric comparisons, which speeds up GROUP BY and filter operations.

Bitmap column: Columns for which Hologres builds bitmap indexes. Bitmap indexes quickly locate the rows in a stored file that equal a specified value. We recommend setting columns used in equality filters as bitmap columns.

For details on all storage options, see CREATE TABLE.
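The Schema settings can be sketched the same way. The example below checks the partition rule (a primary key must include the partition field) and renders the storage design options as `CALL set_table_property` statements. The function names, table name, and column names are hypothetical. The property names (`distribution_key`, `event_time_column`, `clustering_key`, `dictionary_encoding_columns`, `bitmap_columns`) are assumed from the Hologres CREATE TABLE reference, so verify them for your version before relying on them.

```python
def check_partition(primary_key, partition_column):
    """Raise if a primary key exists but does not include the partition field."""
    if partition_column and primary_key and partition_column not in primary_key:
        raise ValueError(
            f"primary key {list(primary_key)} must include "
            f"partition field {partition_column!r}"
        )


def storage_design_statements(table, distribution=(), segment=(), cluster=(),
                              dictionary=(), bitmap=()):
    """Return one CALL statement per storage design option that is set."""
    # Console option -> assumed Hologres table property.
    settings = [
        ("distribution_key", distribution),         # Distribution column
        ("event_time_column", segment),             # Segmented column
        ("clustering_key", cluster),                # Cluster column
        ("dictionary_encoding_columns", dictionary),  # Dictionary encoding column
        ("bitmap_columns", bitmap),                 # Bitmap column
    ]
    statements = []
    for prop, columns in settings:
        if not columns:
            continue  # option left empty: emit nothing, Hologres applies its default
        value = ",".join(columns)
        statements.append(
            f"CALL set_table_property('{table}', '{prop}', '{value}');"
        )
    return statements


# Example: a table partitioned by `ds` whose primary key includes `ds`.
check_partition(primary_key=["order_id", "ds"], partition_column="ds")
for statement in storage_design_statements(
    "public.orders",
    distribution=["order_id"],
    segment=["created_at"],
    bitmap=["status"],
):
    print(statement)
```

Options left empty produce no statement, which mirrors leaving the corresponding field blank in the console.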

Step 4: Commit and deploy

After configuration, commit the table to make it available in the Hologres database.

In a workspace running in basic mode, commit to the production environment only. For details on basic and standard mode workspaces, see Differences between workspaces in basic mode and workspaces in standard mode.

| Operation | Description |
|---|---|
| Commit to development environment | Creates the table in the Hologres database in the development environment. The table schema then appears in the Hologres folder of the workflow in DataStudio, which is the folder you specified when you created the table. |
| Load from development environment | Loads table configuration from the development environment to the current page, overwriting any unsaved changes. Available only after the table is committed to the development environment. |
| Commit to production environment | Creates the table in the Hologres database in the production environment. |
| Load from production environment | Loads table configuration from the production environment to the current page, overwriting any unsaved changes. Available only after the table is committed to the production environment. |

What's next

After creating the internal table, you can:

Query and develop data: Create a Hologres SQL node to run queries. See Create a Hologres SQL node and CREATE TABLE.

Import MaxCompute data: Load data from MaxCompute into the internal table at scheduled intervals:

Using SQL commands: Import data from MaxCompute to Hologres by executing SQL statements

Using the DataWorks console: Create a node to synchronize MaxCompute data with a few clicks