Use a CDH (Cloudera's Distribution Including Apache Hadoop) MapReduce (MR) node in DataWorks DataStudio to run MapReduce jobs against ultra-large datasets stored in your CDH cluster.

Prerequisites

Before you begin, ensure that you have:

A workflow in DataStudio. All nodes in DataStudio belong to a workflow. See Create a workflow.

A CDH cluster registered to your DataWorks workspace. See Register a CDH or CDP cluster to DataWorks.

A serverless resource group that is purchased, associated with your workspace, and configured with network settings. See Create and use a serverless resource group.

(RAM users only) Your RAM user added to the workspace with the Development or Workspace Administrator role assigned. See Add workspace members and assign roles to them.

The Workspace Administrator role grants broader permissions than needed for task development. Assign it only when strictly necessary.

Limitations

CDH MR tasks run on serverless resource groups or old-version exclusive resource groups for scheduling. We recommend that you use serverless resource groups.

Step 1: Create a CDH MR node

Log on to the DataWorks console. In the top navigation bar, select a region. In the left-side navigation pane, choose Data Development and O&M > Data Development. Select your workspace and click Go to Data Development.

On the DataStudio page, find your workflow, right-click its name, and choose Create Node > CDH > CDH MR.

In the Create Node dialog box, set the Engine Instance, Path, and Name parameters.

Click Confirm.

Step 2: Create and reference a CDH JAR resource

CDH MR nodes run JAR files uploaded to DataStudio as CDH JAR resources. Create the resource first, then reference it from your node.

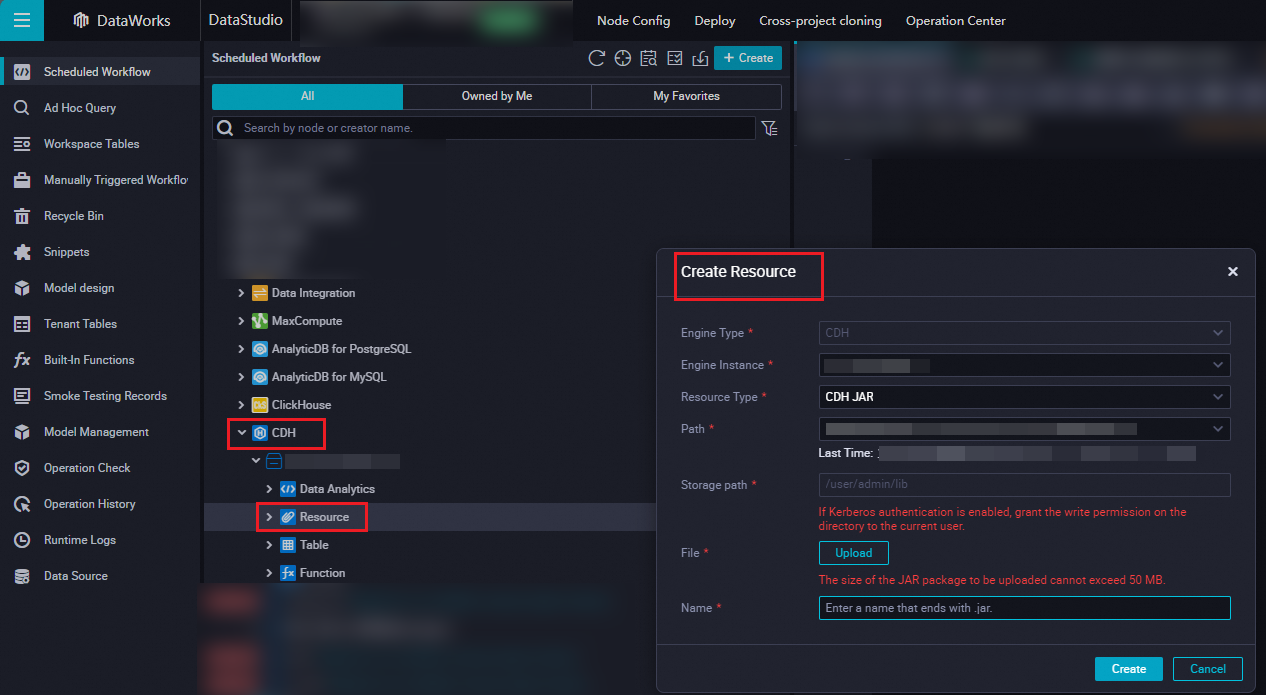

Create the CDH JAR resource

In the DataStudio file tree, find your workflow and click CDH.

Right-click Resource and choose Create Resource > CDH JAR.

In the Create Resource dialog box, click Upload and select your JAR file.

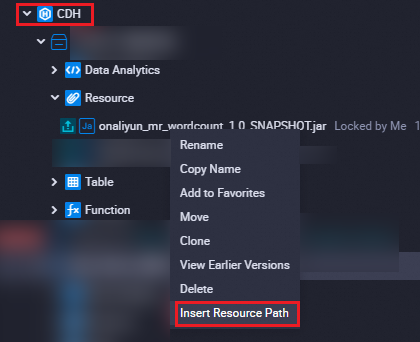

Reference the CDH JAR resource in your node

Open the configuration tab of your CDH MR node.

Under Resource in the CDH folder, right-click your resource name and select Insert Resource Path. A clause in the ##@resource_reference{""} format appears at the top of the editor, confirming the resource is referenced.

Write your job command below the resource reference clause. The following example runs a word count job:

    ##@resource_reference{"onaliyun_mr_wordcount-1.0-SNAPSHOT.jar"}
    onaliyun_mr_wordcount-1.0-SNAPSHOT.jar cn.apache.hadoop.onaliyun.examples.EmrWordCount oss://onaliyun-bucket-2/cdh/datas/wordcount02/inputs oss://onaliyun-bucket-2/cdh/datas/wordcount02/outputs

The command uses these parameters:

| Parameter | Description | Example |
| --- | --- | --- |
| JAR file name | Name of the uploaded CDH JAR resource | onaliyun_mr_wordcount-1.0-SNAPSHOT.jar |
| Main class | Fully qualified name of the MapReduce main class | cn.apache.hadoop.onaliyun.examples.EmrWordCount |
| Input path | OSS path to the input data directory | oss://onaliyun-bucket-2/cdh/datas/wordcount02/inputs |
| Output path | OSS path where the job writes results | oss://onaliyun-bucket-2/cdh/datas/wordcount02/outputs |

Replace the JAR file name, main class, and input/output paths with your actual values.
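The way the four parameters combine into node code can be sketched in a few lines of Python. This is only an illustration, not a DataWorks API: the function name `build_cdh_mr_node_code` is hypothetical, and the sketch assumes the resource reference clause sits on its own line above the job command, as the steps above describe.

```python
def build_cdh_mr_node_code(jar_name: str, main_class: str,
                           input_path: str, output_path: str) -> str:
    """Return CDH MR node code: the reference clause, then the job command.

    Hypothetical helper for illustration; DataWorks does not provide it.
    """
    fields = {
        "jar_name": jar_name,
        "main_class": main_class,
        "input_path": input_path,
        "output_path": output_path,
    }
    for label, value in fields.items():
        # The command is space-separated, so an empty value or embedded
        # whitespace would shift the remaining arguments.
        if not value or any(ch.isspace() for ch in value):
            raise ValueError(f"{label} must be non-empty and contain no whitespace")

    # Assumption: the clause occupies the first line of the editor.
    reference = '##@resource_reference{"' + jar_name + '"}'
    command = " ".join([jar_name, main_class, input_path, output_path])
    return reference + "\n" + command


if __name__ == "__main__":
    print(build_cdh_mr_node_code(
        "onaliyun_mr_wordcount-1.0-SNAPSHOT.jar",
        "cn.apache.hadoop.onaliyun.examples.EmrWordCount",
        "oss://onaliyun-bucket-2/cdh/datas/wordcount02/inputs",
        "oss://onaliyun-bucket-2/cdh/datas/wordcount02/outputs",
    ))
```

The JAR file name appears twice in the result: once inside the reference clause and once as the first token of the command.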

Do not add comments to CDH MR node code.
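Before pasting node code into the editor, a quick local check can catch common slips. The following Python sketch is an assumption-laden illustration, not part of DataWorks: the function name `check_node_code` is hypothetical, and it assumes the node code consists of exactly one resource reference clause followed by one command line whose first token is the referenced JAR, as in the word count example. Any extra line, such as a comment, is reported as a problem.

```python
import re
from typing import List

# Matches a clause such as ##@resource_reference{"my-job.jar"}
REFERENCE_PATTERN = re.compile(r'^##@resource_reference\{"([^"]+)"\}$')


def check_node_code(node_code: str) -> List[str]:
    """Return a list of problems; an empty list means the checks passed.

    Hypothetical helper; the rules below mirror the word count example only.
    """
    problems: List[str] = []
    lines = [line.strip() for line in node_code.splitlines() if line.strip()]

    # Assumption: one reference clause plus one command, and nothing else.
    if len(lines) != 2:
        problems.append("expected exactly two non-empty lines: "
                        "a resource reference clause and a job command")
        return problems

    match = REFERENCE_PATTERN.match(lines[0])
    if match is None:
        problems.append("first line is not a resource reference clause")
        return problems

    tokens = lines[1].split()
    if len(tokens) < 2:
        problems.append("command needs at least a JAR file name and a main class")
    if tokens[0] != match.group(1):
        problems.append("command does not start with the referenced JAR file name")
    return problems
```

A job that references several resources or takes no arguments would need different rules, so treat the checks as a starting point to adapt.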

Step 3: Configure scheduling properties

To have DataWorks run the task on a schedule, click Properties in the right-side navigation pane and configure the following:

Basic properties: See Configure basic properties.

Scheduling cycle and dependencies: See Configure time properties and Configure same-cycle scheduling dependencies.

Resource group: See Configure the resource property. If the node needs to access the internet or a virtual private cloud (VPC), select a resource group for scheduling that has the required network connectivity. See Network connectivity solutions.

Configure both Rerun and Parent Nodes on the Properties tab before committing the task.

Step 4: Debug the task

(Optional) Select a resource group and configure parameters. In the toolbar, click the icon that opens the Parameters dialog box. In the Parameters dialog box, select the resource group to use for debugging. If your code uses scheduling parameters, assign values to those parameters for the debug run. See Differences in scheduling parameter value assignment among run modes.

Save and run the task. Click the save icon, then click the run icon.

(Optional) Run smoke testing. Smoke testing verifies the task in the development environment before or after you commit it. See Perform smoke testing.

What's next

Commit and deploy the task:

Click the save icon to save the task.

Click the commit icon to commit the task.

In the Submit dialog box, enter a Change description and click Confirm.

If your workspace is in standard mode, deploy the task to the production environment after committing. Click Deploy in the top navigation bar. See Deploy tasks.

Monitor the task in Operation Center:

Click Operation Center in the upper-right corner of the node's configuration tab to view the task in the production environment. See View and manage auto triggered tasks.

To view more information about the task, click Operation Center in the top navigation bar of the DataStudio page. See Operation Center overview.