This topic covers common errors that cause node task failures in DataWorks, grouped by node type.

Quick reference

| Error | Category | Fix |
|---|---|---|
| Task Run Timed Out, Killed by System!!! | Configuration | Restart manually; timeout failures do not trigger auto-rerun |
| OSError: [Errno 7] Argument list too long | Configuration | Split the SQL code into smaller chunks |
| ODPS-0420095: Access Denied | Infrastructure | Top up your account or resume the project in the MaxCompute console |
| ODPS-0420061: Fetched data is larger than the rendering limitation | Configuration | Add a LIMIT clause; use Tunnel to export data over 10,000 rows |
| Out-of-order data with multiple threads | Configuration | Add ORDER BY after synchronization |
| AnalyticDB for MySQL task fails on a public resource group | Infrastructure | Switch to an exclusive resource group connected to a Virtual Private Cloud (VPC) |
| sql execute failed! Unsupported jdbc driver | Configuration | Recreate the MySQL data source using connection string mode |
| error in your condition run fail (branch node) | Configuration | Use Python-syntax conditions; quote string variables |
| None Ftp connection info!! | Configuration | Fix or create the FTP data source |
| Connect Failed (FTP Check) | Infrastructure | Verify FTP server connectivity with telnet |
| The current time has exceeded the end-check time point! | Configuration | Set a later Check Stop Time |
| File not Exists or exceeded the end-check time point! | Expected | Expected behavior; downstream tasks are not triggered |
| no available machine resources under the task resource group | Infrastructure | Switch the task to a different resource group |

General

A task does not rerun after failing with Task Run Timed Out, Killed by System!!!

Even when Rerun is set to Rerun Regardless of Status or Rerun upon Failure in Scheduling Configuration > Time Properties, a task that exceeds its configured timeout is terminated by the system and will not trigger auto-rerun. This is by design — the auto-rerun mechanism applies only to task logic failures, not to timeout terminations.

Restart the task manually from Operation Center.

A task fails with OSError: [Errno 7] Argument list too long

The SQL code submitted to the node exceeds the 128 KB size limit. Split the SQL code into smaller segments and run the task again.

A single node cannot contain more than 200 SQL commands.
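The splitting step can be sketched in Python as follows. This is a minimal illustration, not a DataWorks tool: the function name `split_sql_script` is hypothetical, the 128 KB limit is assumed to be measured in UTF-8 bytes, and statements are split naively on semicolons, so it will mis-split scripts that contain semicolons inside string literals or comments.

```python
MAX_BYTES = 128 * 1024   # size limit stated above (assumed to be UTF-8 bytes)
MAX_STATEMENTS = 200     # per-node SQL command limit stated above


def split_sql_script(script, max_bytes=MAX_BYTES, max_statements=MAX_STATEMENTS):
    """Group the statements of `script` into chunks that respect both limits."""
    # Naive split: assumes no semicolons inside string literals or comments.
    statements = [s.strip() + ";" for s in script.split(";") if s.strip()]

    chunks, current, current_size = [], [], 0
    for stmt in statements:
        size = len(stmt.encode("utf-8")) + 1  # +1 for the joining newline
        if size > max_bytes:
            raise ValueError("a single statement exceeds the size limit")
        # Start a new chunk when adding this statement would break either limit.
        if current and (current_size + size > max_bytes
                        or len(current) >= max_statements):
            chunks.append("\n".join(current))
            current, current_size = [], 0
        current.append(stmt)
        current_size += size
    if current:
        chunks.append("\n".join(current))
    return chunks
```

Each returned chunk would then go into its own node, in the original order, so that dependencies between statements are preserved.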

MaxCompute nodes

ODPS-0420095: Access Denied - Authorization Failed [4093]

Full error: You have NO privilege to do the restricted operation on {acs:odps:*:projects/xxxx}. Access Mode is AllDenied.

The project has been disabled. There are two possible causes:

- Overdue payment or expired subscription: Check whether your account has an overdue payment or the default computing quota subscription has expired. Top up your account or renew the subscription. The project status then changes to Normal automatically. Recovery takes 2 to 30 minutes depending on the number of orders and projects.

- Manually disabled: Go to Project Management in the MaxCompute console and resume the project.

ODPS-0420061: Fetched data is larger than the rendering limitation

Full error: Invalid parameter in HTTP request - Fetched data is larger than the rendering limitation. Please try to reduce your limit size or column number

Add a LIMIT clause to your query to reduce the result set. To export larger datasets, use Tunnel, which is required for results of more than 10,000 rows.

Out-of-order data when running a node task with multiple threads

Data in MaxCompute tables is stored and read in non-sequential order. Without explicit sorting, query results are also unordered.

After synchronization, add an ORDER BY clause to your SQL statement to sort the data. For example:

SELECT * FROM your_table ORDER BY your_column LIMIT n;

AnalyticDB for MySQL nodes

AnalyticDB for MySQL task fails on a public resource group

Activate an exclusive resource group for scheduling, connect it to the VPC where your AnalyticDB for MySQL instance runs, then rerun the task. For setup steps, see Test the connectivity of a data source.

MySQL nodes

sql execute failed! Unsupported jdbc driver

This error occurs when a MySQL data source was created without using connection string mode. Create a new MySQL data source using connection string mode. For details, see Configure a MySQL data source.

To check how an existing data source was created, go to Data Source Management, find the data source, click Edit in the Actions column, and check the mode shown on the Edit Data Source page.

General nodes

View logs for for-each, do-while, and PAI nodes in Operation Center

Find the instance in Operation Center, right-click it, and select the option to view its inner nodes.

A branch node fails with error in your condition run fail

Branch conditions must follow Python syntax. If an upstream assignment node outputs a string, enclose the referenced variable in quotation marks in the branch condition.
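The quoting rule is easier to see with a small simulation. The sketch below assumes that a referenced variable is substituted into the condition as raw text before the condition is evaluated as a Python expression. The `${status}` placeholder form and the helper names are illustrative assumptions, not the DataWorks implementation.

```python
def render_condition(template, variables):
    """Hypothetical stand-in for variable substitution: ${name} -> raw value."""
    for name, value in variables.items():
        template = template.replace("${" + name + "}", str(value))
    return template


def evaluate(template, variables):
    rendered = render_condition(template, variables)
    # eval() is used here only to demonstrate Python expression semantics
    # on trusted, hard-coded input.
    return eval(rendered, {"__builtins__": {}}, {})


upstream = {"status": "success"}  # string output of an upstream assignment node

# Quoted: renders to 'success' == 'success', a valid string comparison.
quoted = evaluate("'${status}' == 'success'", upstream)

# Unquoted: renders to success == 'success', where success is an undefined name.
try:
    evaluate("${status} == 'success'", upstream)
    unquoted_error = None
except NameError as exc:
    unquoted_error = exc
```

Numeric outputs do not need quotation marks under the same assumption, because a condition such as `${count} > 10` renders to a valid numeric comparison.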

FTP Check nodes

None Ftp connection info!!

The FTP Check node cannot retrieve data source information because the FTP data source is configured incorrectly or does not exist.

Go to Data Source Management and verify that the FTP data source is configured correctly. If no FTP data source exists, configure an FTP data source first.

Connect Failed

The FTP Check node cannot connect to the FTP server. Run telnet <IP> <port> to verify that the server is reachable — use the IP address and port from your FTP data source configuration. To find these values, log in to the DataWorks console and go to Data Source Management.

For more information about navigating to Data Source Management, see Data Source Management.
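If telnet is not installed on the machine you are testing from, the same reachability check can be approximated with Python's standard `socket` module. This is a minimal sketch: the helper name `can_connect` is hypothetical, and it only confirms that a TCP connection opens. It does not verify FTP credentials or the FTP protocol handshake.

```python
import socket


def can_connect(host, port, timeout=5.0):
    """Return True if a TCP connection to host:port opens within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name resolution failures.
        return False


# Example call, using the IP address and port from your FTP data source:
#   can_connect("<IP>", 21)
```

Run the check from a machine in the same network as the resource group that runs the node, because reachability from your own workstation does not guarantee reachability from the resource group.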

The current time has exceeded the end-check time point!

The check ran past the configured Check Stop Time for the Done file in the FTP data source, so the task failed immediately. Set a later Check Stop Time in the FTP Check node configuration. For details, see Configure a check policy.

File not Exists or exceeded the end-check time point!

This is expected behavior. It means the FTP Check node did not find the Done file in the FTP data source before the Check Stop Time elapsed. When this occurs, DataWorks does not trigger the downstream tasks of the FTP Check node.

Resource groups

no available machine resources under the task resource group

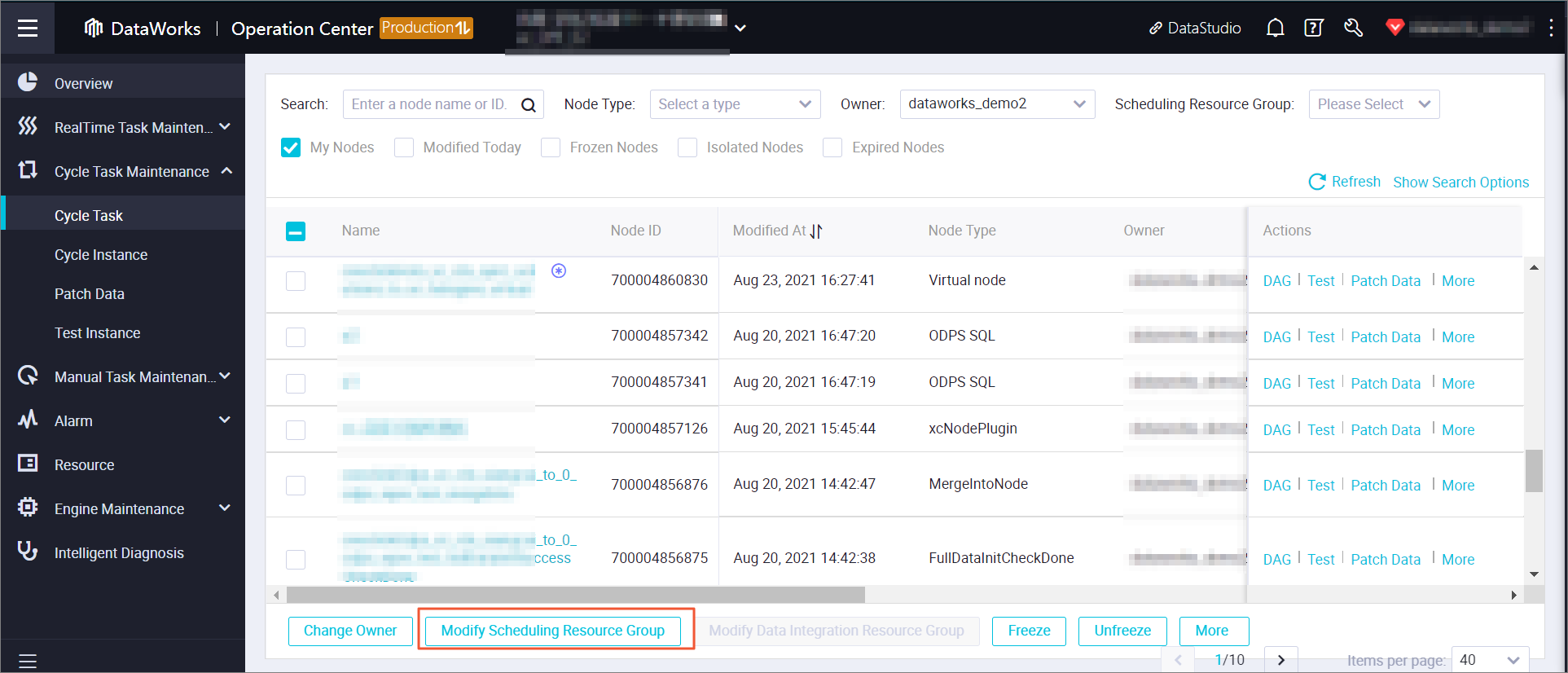

Operation Center cannot allocate a machine for the task. In the left navigation pane of Operation Center, choose Auto triggered task O&M > Auto triggered task and switch the task to a different resource group for scheduling.