This topic describes how to migrate data from an ApsaraDB RDS for PPAS instance to a PolarDB for Oracle cluster by using Data Transmission Service (DTS). DTS supports schema migration, full data migration, and incremental data migration. When you configure a data migration task, you can select all of the supported migration types to ensure service continuity.

Prerequisites

- A PolarDB for Oracle cluster is created. For more information, see Create a PolarDB for PostgreSQL (Compatible with Oracle) cluster.

- The available storage space of the PolarDB for Oracle cluster is larger than the total size of the data in the ApsaraDB RDS for PPAS instance.

- If the names of the source database, tables, or fields in the ApsaraDB RDS for PPAS instance contain uppercase letters, you must enclose those names in double quotation marks (") when you create the corresponding objects in the PolarDB for Oracle cluster.

- To migrate incremental data from an ApsaraDB RDS for PPAS instance, you must grant the permissions of the superuser role to the database account.
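The storage and naming prerequisites above can be illustrated with a short SQL sketch. The first statement sums the size of all non-template databases in the source instance, so that you can compare the result with the free storage of the cluster. The second statement creates a table whose names contain uppercase letters. The names "OrderItems", "ItemId", and "Qty" are hypothetical. PostgreSQL folds unquoted identifiers to lowercase, and this sketch assumes that the destination cluster behaves the same way.

```sql
-- Run on the source ApsaraDB RDS for PPAS instance:
-- total size of all non-template databases.
SELECT pg_size_pretty(sum(pg_database_size(datname))::bigint) AS total_size
FROM pg_database
WHERE NOT datistemplate;

-- Run on the destination PolarDB for Oracle cluster:
-- hypothetical table; the double quotation marks preserve the uppercase letters.
CREATE TABLE "OrderItems" (
    "ItemId" integer PRIMARY KEY,
    "Qty"    integer NOT NULL
);
```

Without the quotation marks, the table in this sketch would be created as orderitems, which would no longer match the name used in the source database.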

Limits

- DTS uses read and write resources of the source and destination databases during full data migration. This may increase the loads of the database servers. If the database performance is poor, the instance specifications are low, or the data volume is large, database services may become unavailable. For example, DTS occupies a large amount of read and write resources in the following cases: a large number of slow SQL queries are performed on the source database, the tables have no primary keys, or a deadlock occurs in the destination database. Before you migrate data, evaluate the impact of data migration on the performance of the source and destination databases. We recommend that you migrate data during off-peak hours, for example, when the CPU utilization of the source and destination databases is less than 30%.

- The tables to be migrated in the source database must have PRIMARY KEY or UNIQUE constraints and all fields must be unique. Otherwise, the destination database may contain duplicate data records.

- A single data migration task can migrate data from only one database. To migrate data from multiple databases, you must create a data migration task for each database.

- If a data migration task fails, DTS automatically resumes the task. Before you switch your workloads to the destination cluster, stop or release the data migration task. Otherwise, the data in the source database overwrites the data in the destination cluster after the task is resumed.

- After your workloads are switched to the destination database, newly written sequences do not increment from the maximum value of the sequences in the source database. Therefore, you must query the maximum value of the sequences in the source database before you switch your workloads to the destination database. Then, you must specify the queried maximum value as the initial value of the sequences in the destination database. You can run the following command in the source database. It reads the last value of each sequence and prints, as a notice, a setval statement that you can run in the destination database.

```sql
do language plpgsql $$
declare
  nsp name;
  rel name;
  val int8;
begin
  -- Loop over every sequence (relkind = 'S') and its schema.
  for nsp, rel in
    select nspname, relname
    from pg_class t2, pg_namespace t3
    where t2.relnamespace = t3.oid and t2.relkind = 'S'
  loop
    -- Read the last value of the sequence.
    execute format($_$select last_value from %I.%I$_$, nsp, rel) into val;
    -- Print the setval statement to run in the destination database.
    raise notice '%', format($_$select setval('%I.%I'::regclass, %s);$_$, nsp, rel, val+1);
  end loop;
end;
$$;
```

- When you migrate data from your ApsaraDB RDS for PPAS instance to a PolarDB for Oracle cluster, take note of the following items:

- Make sure that the specifications of the PolarDB for Oracle cluster are higher than or equal to those of the ApsaraDB RDS for PPAS instance. Otherwise, slow SQL queries or out of memory (OOM) errors may occur because of insufficient CPU or memory resources after you migrate the data to the PolarDB for Oracle cluster. For more information about the recommended specifications of PolarDB for Oracle clusters, see Mapping between specifications of ApsaraDB RDS for PPAS instances and recommended specifications of PolarDB for Oracle clusters.

- If you have business requirements for the number of connections and IOPS after migration, select appropriate specifications based on your business requirements. For more information, see Specifications of compute nodes.

- If you use a cluster endpoint to connect your application to a PolarDB for Oracle cluster, read/write splitting is enabled. Then, the system forwards read requests to read-only PolarDB for Oracle nodes. This reduces the load on the PolarDB for Oracle cluster. For more information about how to obtain a cluster endpoint, see View or apply for an endpoint.
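The limit on PRIMARY KEY or UNIQUE constraints listed above can be checked before you create the task. The following query is a minimal sketch, not part of DTS, that lists ordinary tables in the source database that have neither constraint. It relies only on the standard pg_class, pg_namespace, and pg_constraint catalogs. The list of excluded schemas is an assumption that you may need to adjust for your instance.

```sql
-- Run on the source database: tables without a PRIMARY KEY or UNIQUE constraint.
SELECT n.nspname AS schema_name,
       c.relname AS table_name
FROM pg_class c
JOIN pg_namespace n ON n.oid = c.relnamespace
WHERE c.relkind = 'r'                                   -- ordinary tables only
  AND n.nspname NOT IN ('pg_catalog', 'information_schema', 'sys')
  AND NOT EXISTS (
        SELECT 1
        FROM pg_constraint con
        WHERE con.conrelid = c.oid
          AND con.contype IN ('p', 'u')                 -- primary key or unique
      )
ORDER BY schema_name, table_name;
```

A table that has a unique index but no declared constraint still appears in the result, so treat the output as a list of candidates to review rather than a definitive verdict.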

Mapping between specifications of ApsaraDB RDS for PPAS instances and recommended specifications of PolarDB for Oracle clusters

Make sure that the specifications of the PolarDB for Oracle cluster are higher than or equal to the specifications of your ApsaraDB RDS for PPAS instance. Otherwise, slow SQL queries or OOM errors may occur because of insufficient CPU or memory resources after you migrate the data to the PolarDB for Oracle cluster. The following table lists the recommended specifications of PolarDB for Oracle clusters.

| ApsaraDB RDS for PPAS instance type | PPAS CPU and memory | Recommended PolarDB for Oracle instance type | PolarDB CPU and memory |
|---|---|---|---|
| rds.ppas.t1.small | 1 core, 1 GB | polar.o.x4.medium | 2 cores, 8 GB |
| ppas.x4.small.2 | 1 core, 4 GB | polar.o.x4.medium | 2 cores, 8 GB |
| ppas.x4.medium.2 | 2 cores, 8 GB | polar.o.x4.medium | 2 cores, 8 GB |
| ppas.x8.medium.2 | 2 cores, 16 GB | polar.o.x4.large | 4 cores, 16 GB |
| ppas.x4.large.2 | 4 cores, 16 GB | polar.o.x4.large | 4 cores, 16 GB |
| ppas.x8.large.2 | 4 cores, 32 GB | polar.o.x4.xlarge | 8 cores, 32 GB |
| ppas.x4.xlarge.2 | 8 cores, 32 GB | polar.o.x4.xlarge | 8 cores, 32 GB |
| ppas.x8.xlarge.2 | 8 cores, 64 GB | polar.o.x8.xlarge | 8 cores, 64 GB |
| ppas.x4.2xlarge.2 | 16 cores, 64 GB | polar.o.x8.2xlarge | 16 cores, 128 GB |
| ppas.x8.2xlarge.2 | 16 cores, 128 GB | polar.o.x8.2xlarge | 16 cores, 128 GB |
| ppas.x4.4xlarge.2 | 32 cores, 128 GB | polar.o.x8.4xlarge | 32 cores, 256 GB |
| ppas.x8.4xlarge.2 | 32 cores, 256 GB | polar.o.x8.4xlarge | 32 cores, 256 GB |
| rds.ppas.st.h43 | 60 cores, 470 GB | polar.o.x8.8xlarge | 64 cores, 512 GB |

Migration types

| Migration type | Description |
|---|---|
| Schema migration | DTS migrates the schemas of the required objects from the source database to the destination PolarDB cluster. DTS supports schema migration for the following types of objects: table, view, synonym, trigger, stored procedure, stored function, package, and user-defined type. Important: DTS does not support triggers for this migration type. If an object contains triggers, data inconsistency between the source and destination databases may occur. |
| Full data migration | DTS migrates the historical data of the required objects from the source database to the destination PolarDB cluster. Important: During schema migration and full data migration, do not perform DDL operations on the objects to be migrated. Otherwise, the objects may fail to be migrated. |
| Incremental data migration | DTS retrieves redo log files from the source database. Then, DTS migrates incremental data from the source database to the destination PolarDB cluster. DTS can synchronize DML operations, such as INSERT, UPDATE, and DELETE. DTS cannot synchronize DDL operations. Incremental data migration allows you to ensure service continuity when you perform data migration. |

Billing

| Migration type | Task configuration fee | Internet traffic fee |
|---|---|---|
| Schema migration and full data migration | Free of charge. | Charged only when data is migrated from Alibaba Cloud over the Internet. For more information, see Billing overview. |
| Incremental data migration | Charged. For more information, see Billing overview. | Charged only when data is migrated from Alibaba Cloud over the Internet. For more information, see Billing overview. |

Permissions required for database accounts

Log on to the source database in the ApsaraDB RDS for PPAS instance, create an account for data collection, and grant permissions to the account.

| Database | Schema migration | Full data migration | Incremental data migration |
|---|---|---|---|
| ApsaraDB RDS for PPAS instance | Read permissions | Read permissions | Permissions of the superuser role |
| PolarDB for Oracle cluster | Permissions of the schema owner | Permissions of the schema owner | Permissions of the schema owner |

To create a database account and grant permissions to the database account, perform the following operation:

- To create a database account in a PolarDB for Oracle cluster, follow the instructions in Create database accounts.
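The read permissions that the table above requires on the source database can be sketched in standard PostgreSQL syntax. This is an illustration under assumptions, not a documented procedure: the account name dts_reader, the password, the database name mydb, and the schema public are all hypothetical, and on a managed instance you may have to create accounts in the console instead.

```sql
-- Run on the source database as a sufficiently privileged account.
-- All names and the password below are placeholders.
CREATE ROLE dts_reader LOGIN PASSWORD 'ChangeMe_123';

-- Read permissions for schema migration and full data migration.
GRANT CONNECT ON DATABASE mydb TO dts_reader;
GRANT USAGE ON SCHEMA public TO dts_reader;
GRANT SELECT ON ALL TABLES IN SCHEMA public TO dts_reader;

-- Incremental data migration requires the permissions of the superuser role.
-- Only an existing superuser can run the following statement, and it may not
-- be available on your instance:
-- ALTER ROLE dts_reader SUPERUSER;
```

Repeat the schema-level grants for every schema that contains objects to be migrated.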

Procedure

- Log on to the DTS console. Note: If you are redirected to the Data Management (DMS) console, you can click the icon in the lower-right corner to go to the previous version of the DTS console.

- In the left-side navigation pane, click Data Migration.

- At the top of the Migration Tasks page, select the region where the destination cluster resides.

- In the upper-right corner of the page, click Create Migration Task.

- Configure the source and destination databases.

| Section | Parameter | Description |
|---|---|---|
| N/A | Task Name | The task name that DTS automatically generates. We recommend that you specify a descriptive name that makes it easy to identify the task. You do not need to specify a unique task name. |
| Source Database | Instance Type | Select User-Created Database Connected Over Express Connect, VPN Gateway, or Smart Access Gateway. You cannot select ApsaraDB RDS for PPAS as the instance type. |
| Source Database | Instance Region | Select the region in which the ApsaraDB RDS for PPAS instance resides. |
| Source Database | Peer VPC | Select the ID of the virtual private cloud (VPC) that is connected to the source database in the ApsaraDB RDS for PPAS instance. |
| Source Database | Database Type | Select PPAS. |
| Source Database | Version | Select the database engine version of the ApsaraDB RDS for PPAS instance. |
| Source Database | Hostname or IP Address | Enter the private IP address of the ApsaraDB RDS for PPAS instance. |
| Source Database | Port Number | Enter the service port number of the ApsaraDB RDS for PPAS instance. The default port number is 3433. |
| Source Database | Database Name | Enter the name of the source database in the ApsaraDB RDS for PPAS instance. |
| Source Database | Database Account | Enter the username of the database account that is used to connect to the source database in the ApsaraDB RDS for PPAS instance. For information about the permissions that are required for the database account, see Permissions required for database accounts. |
| Source Database | Database Password | Enter the password of the database account. Note: After you specify the information about the source database, you can click Test Connectivity next to Database Password to check whether the information is valid. If the information is valid, the Passed message appears. If the Failed message appears, click Check next to Failed. Then, modify the information based on the check results. |
| Destination Database | Instance Type | Select PolarDB. |
| Destination Database | Instance Region | Select the region in which the destination PolarDB for Oracle cluster resides. |
| Destination Database | PolarDB Instance ID | Select the ID of the destination PolarDB for Oracle cluster. |
| Destination Database | Database Name | Enter the name of the destination database. |
| Destination Database | Database Account | Enter the username of the database account that is used to connect to the destination database in the PolarDB for Oracle cluster. For information about the permissions that are required for the database account, see Permissions required for database accounts. |
| Destination Database | Database Password | Enter the password of the database account. Note: After you specify the information about the destination database, you can click Test Connectivity next to Database Password to check whether the information is valid. If the information is valid, the Passed message appears. If the Failed message appears, click Check next to Failed. Then, modify the information based on the check results. |

- In the lower-right corner of the page, click Set Whitelist and Next. Warning: If the CIDR blocks of DTS servers are automatically or manually added to the whitelist of the database or instance, or to the ECS security group rules, security risks may arise. Therefore, before you use DTS to migrate data, you must understand and acknowledge the potential risks and take preventive measures, including but not limited to the following measures: enhance the security of your username and password, limit the ports that are exposed, authenticate API calls, regularly check the whitelist or ECS security group rules and forbid unauthorized CIDR blocks, or connect the database to DTS by using Express Connect, VPN Gateway, or Smart Access Gateway.

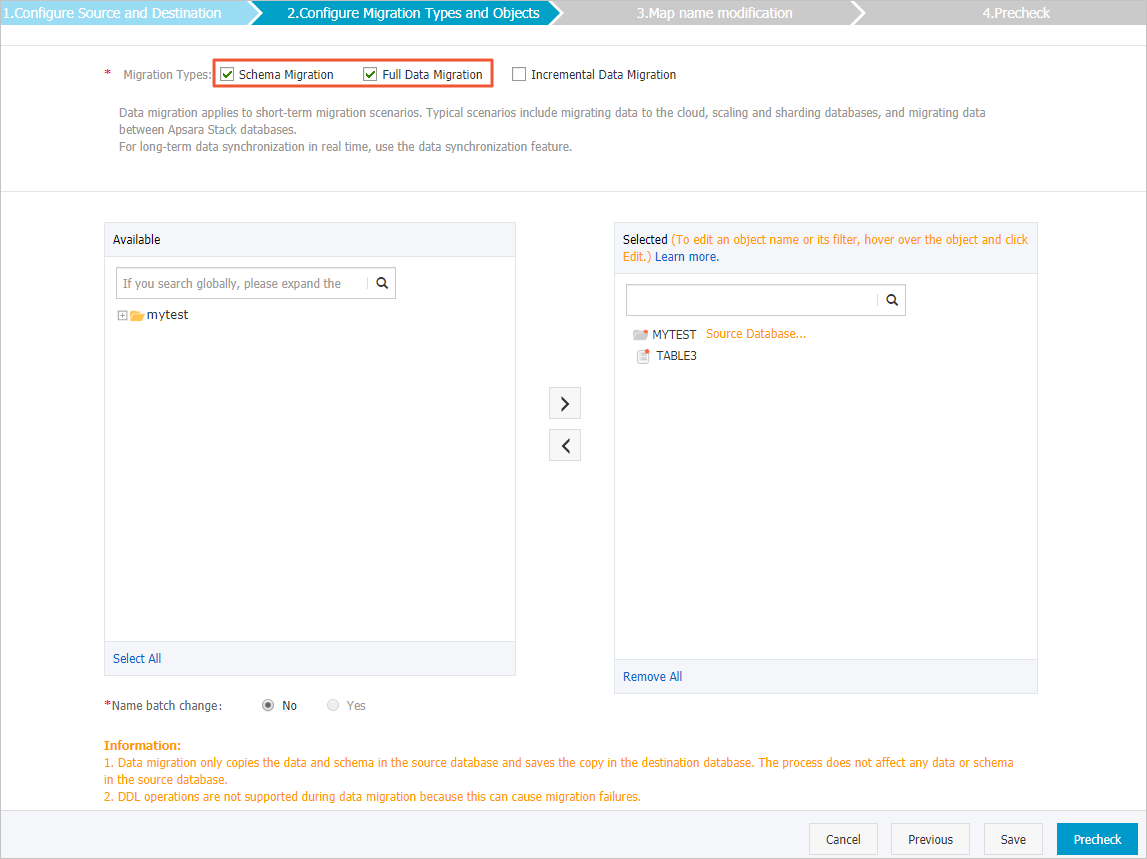

- Select the migration types and the objects to be migrated.

| Setting | Description |
|---|---|
| Select migration types | To perform only full data migration, select Schema Migration and Full Data Migration. To ensure service continuity during data migration, select Schema Migration, Full Data Migration, and Incremental Data Migration. Important: If Incremental Data Migration is not selected, we recommend that you do not write data to the source database during full data migration. This ensures data consistency between the source and destination databases. During schema migration and full data migration, do not perform DDL operations on the objects to be migrated. Otherwise, the objects may fail to be migrated. |
| Select the objects that you want to migrate | Select one or more objects from the Source Objects section and click the icon to add the objects to the Selected Objects section. Note: You can select columns, tables, or databases as the objects to migrate. By default, after an object is migrated to the destination database, the name of the object remains unchanged. You can use the object name mapping feature to rename the objects that are migrated to the destination database. For more information, see Object name mapping. If you use the object name mapping feature to rename an object, other objects that are dependent on the object may fail to be migrated. |
| Specify whether to rename objects | You can use the object name mapping feature to rename the objects that are migrated to the destination cluster. For more information, see Object name mapping. |
| Specify the retry time range for failed connections to the source or destination database | By default, if DTS fails to connect to the source and destination databases, DTS retries within the following 12 hours. You can specify the retry time range based on your business requirements. If DTS is reconnected to the source and destination databases within the specified time range, DTS resumes the data migration task. Otherwise, the data migration task fails. Note: Within the time range in which DTS attempts to reconnect to the source and destination databases, you are charged for the DTS instance. We recommend that you specify the retry time range based on your business requirements. You can also release the DTS instance at the earliest opportunity after the source and destination databases are released. |

- In the lower-right corner of the page, click Precheck. Note:

- Before you can start the data migration task, DTS performs a precheck. You can start the data migration task only after the task passes the precheck.

- If the task fails to pass the precheck, you can click the icon next to each failed item to view details.

- You can troubleshoot the issues based on the causes and run a precheck again.

- If you do not need to troubleshoot the issues, you can ignore failed items and run a precheck again.

- After the task passes the precheck, click Next.

- In the Confirm Settings dialog box, specify the Channel Specification parameter and select Data Transmission Service (Pay-As-You-Go) Service Terms.

- Click Buy and Start to start the data migration task.

- Schema migration and full data migration

We recommend that you do not manually stop the task during full data migration. Otherwise, the data migrated to the destination database may be incomplete. You can wait until the data migration task automatically stops.

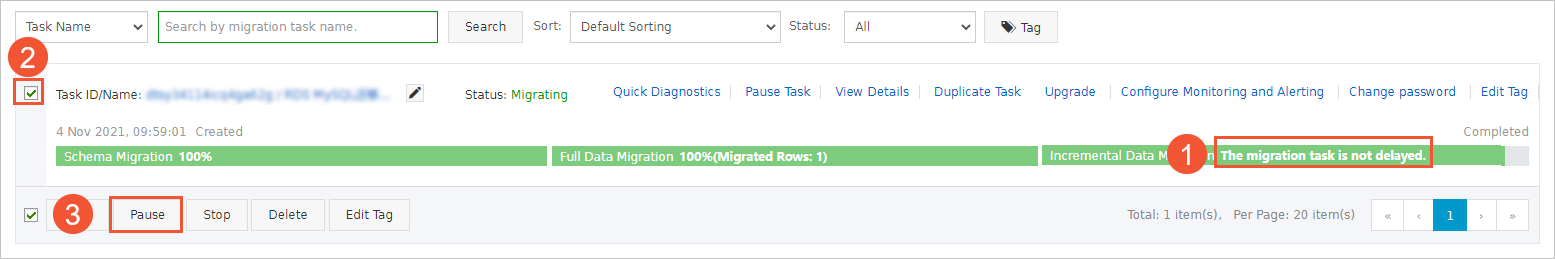

- Schema migration, full data migration, and incremental data migration

An incremental data migration task does not automatically stop. You must manually stop the task.

Important: We recommend that you select an appropriate time to manually stop the data migration task. For example, you can stop the task during off-peak hours or before you switch your workloads to the destination cluster.

- Wait until Incremental Data Migration and The migration task is not delayed appear in the progress bar of the migration task. Then, stop writing data to the source database for a few minutes. The latency of incremental data migration may be displayed in the progress bar.

- Wait until the status of incremental data migration changes to The migration task is not delayed again. Then, manually stop the migration task.
