When a Tair (Redis OSS-compatible) instance runs out of memory or shows performance degradation, large keys — keys with oversized values or element counts — are a common cause. Cache analysis scans Redis Database (RDB) backup files to identify these large keys and show you their memory consumption, distribution, and expiration time, so you can pinpoint and resolve the root cause.

Scope

The instance must be connected to Database Autonomy Service (DAS) and in the Normal Access state.

Cache analysis is unavailable for Tair (Enterprise Edition) instances that use Enterprise SSDs (ESSDs) or SSDs.

Cache analysis supports Redis data structures and the following Tair self-developed data structures: TairString, TairHash, TairGIS, TairBloom, TairDoc, TairCpc, and TairZset. Other Tair self-developed data structures are not supported.

If you change the specifications of an instance, you cannot analyze backup files generated before the change.

Cache analysis is based on RDB persistence and is available for Tair (Redis OSS-compatible) 7.0 instances.

Run cache analysis

Start an analysis task

Log on to the DAS console.

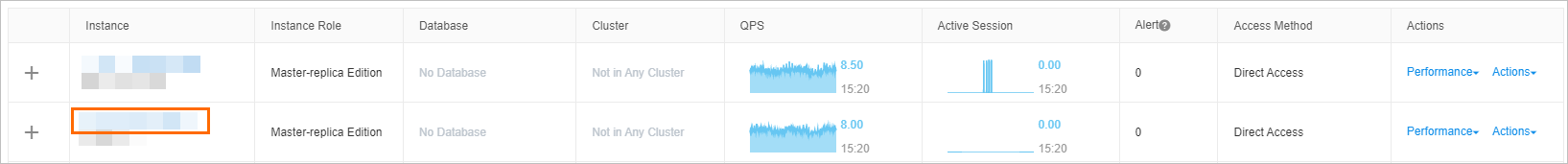

In the left-side navigation pane, choose Intelligent O&M Center > Instance Monitoring.

Find the instance and click its ID to open the instance details page.

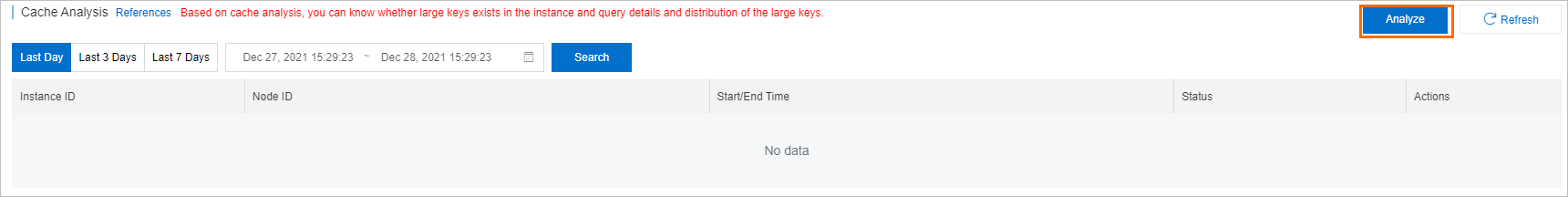

In the left-side navigation pane, choose Request Analysis > Cache Analysis.

In the upper-right corner, click Analyze.

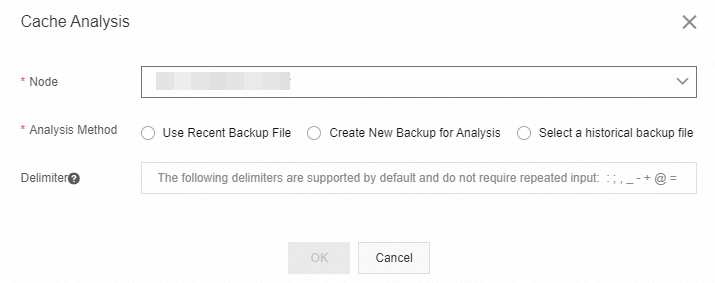

In the Cache Analysis dialog box, configure the following parameters.

| Parameter | Description |
|---|---|
| Node | The ID of the node on which you want to perform cache analysis. You can select an instance or a node for analysis. |
| Analysis Method | The backup file to use for analysis. Valid values: Use Recent Backup File (analyzes the most recent backup file); Create New Backup for Analysis (triggers an immediate backup and analyzes it, reflecting the current state of the instance); Select a historical backup file (analyzes a backup file you choose). Note: If you use an existing backup file, verify that its creation time meets your requirements. |
| Delimiter | The characters used to identify key prefixes. The default delimiters are `:;,_-+@=\|#`. Leave this blank to use the defaults. |

Click OK.
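
To illustrate how delimiters relate to key prefixes, the following is a minimal sketch that groups key names by prefix. It assumes that a prefix is the part of the key before the first delimiter character; the exact rule that cache analysis applies is not stated here, and `key_prefix`, `count_by_prefix`, and the sample keys are hypothetical names used only for illustration.

```python
from collections import Counter

# Default delimiters quoted from the Delimiter parameter description.
DEFAULT_DELIMITERS = ":;,_-+@=|#"


def key_prefix(key: str, delimiters: str = DEFAULT_DELIMITERS) -> str:
    """Return the part of `key` before its first delimiter character.

    Assumption: the prefix ends at the first delimiter. The rule used by
    cache analysis itself may differ.
    """
    for index, char in enumerate(key):
        if char in delimiters:
            return key[:index]
    return key  # no delimiter found: the whole key acts as its own prefix


def count_by_prefix(keys, delimiters: str = DEFAULT_DELIMITERS) -> Counter:
    """Count how many keys share each prefix; a blank value falls back to the defaults."""
    active = delimiters or DEFAULT_DELIMITERS
    return Counter(key_prefix(key, active) for key in keys)


# Hypothetical sample keys.
sample_keys = ["user:1001", "user:1002", "session_abc", "order#77", "config"]
prefix_counts = count_by_prefix(sample_keys)
```

Under this assumption, keys that follow a consistent naming scheme such as `user:<id>` collapse into a single prefix group, which is what makes a per-prefix distribution readable.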

View analysis results

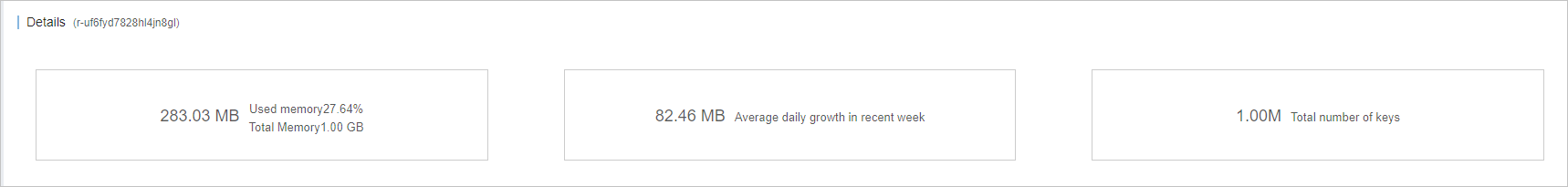

After the task completes, click Details in the Actions column. The results page has three sections:

Basic information: Summary details for this analysis run, including the instance ID and the analysis method used.

Relevant nodes: For cluster instances, the memory usage of each node, including total memory and key statistics.

Note: This section is displayed only when you analyze a Redis Open-Source Edition cluster instance.

Details: A breakdown of the instance's memory usage and key statistics, including:

| Metric | Description |
|---|---|
| Daily growth within seven days | Memory growth trend for the past seven days |
| Total number of keys | Total key count in the analyzed scope |
| Memory usage of keys | Memory consumed by keys |
| Distribution of keys | How keys are distributed across prefixes and types |
| Memory usage of elements | Memory consumed by element values |
| Distribution of elements | How elements are distributed |
| Distribution of key expiration time (memory) | Expiration time distribution weighted by memory |
| Distribution of key expiration time (quantity) | Expiration time distribution by key count |

The Details section also lists the top 100 large keys ranked by memory usage, number of keys, and prefixes.

FAQ

Why does the memory shown on the Details page appear lower than the actual memory usage?

Cache analysis calculates memory based on serialized key-value data in the RDB file, which covers only part of actual memory consumption. The reported value does not include:

Struct data, pointers, and byte alignment overhead. For an instance with 250 million keys, this overhead can add approximately 2–3 GB.

Client output buffers, query buffers, append-only file (AOF) rewrite buffers, and replication backlogs from primary/secondary replication.
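
As a rough worked example of the first item, the figure of 2–3 GB for 250 million keys corresponds to about 8 to 12 bytes of structural overhead per key. The sketch below scales that figure to other key counts. It assumes decimal gigabytes and overhead that grows linearly with the number of keys; both are simplifications, and the function names are hypothetical.

```python
# Figures quoted from the answer above: about 2-3 GB at 250 million keys.
REFERENCE_KEYS = 250_000_000
REFERENCE_OVERHEAD_GB = (2.0, 3.0)
BYTES_PER_GB = 10**9  # assumption: decimal gigabytes


def overhead_bytes_per_key():
    """Return the (low, high) structural overhead per key, in bytes."""
    low_gb, high_gb = REFERENCE_OVERHEAD_GB
    return (
        low_gb * BYTES_PER_GB / REFERENCE_KEYS,
        high_gb * BYTES_PER_GB / REFERENCE_KEYS,
    )


def estimate_overhead_gb(key_count: int):
    """Estimate the (low, high) unreported overhead in GB for `key_count` keys.

    Assumption: overhead scales linearly with the number of keys.
    """
    low_gb, high_gb = REFERENCE_OVERHEAD_GB
    scale = key_count / REFERENCE_KEYS
    return (low_gb * scale, high_gb * scale)


per_key_low, per_key_high = overhead_bytes_per_key()
```

The estimate covers only the per-key structural overhead. Buffers and replication backlogs depend on client and replication activity and are not modeled here.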

Why is the memory usage for Stream keys several times larger than expected?

Stream is a complex data structure that uses radix trees and listpacks internally. Cache analysis cannot accurately measure memory for such structures, which causes the reported values to exceed actual usage. This deviation is statistical and does not affect instance functionality.

Why is the element count the same as element length for String type keys?

String keys have only one element, so element count equals element length — both represent the actual byte size of the key value.

Why does the number of elements differ from the actual element count for cluster instances?

For cluster instances, the Statistics section shows data only from the nodes that contain the relevant data, not all nodes. This produces statistical deviations that do not affect instance functionality.

What do I do if I see `decode rdbfile error: rdb: invalid file format`?

This error indicates the selected backup file is invalid. Check whether the instance specifications changed after the backup was created, or whether transparent data encryption (TDE) is enabled — encrypted backup files cannot be analyzed.

What do I do if I see `decode rdbfile error: rdb: unknown object type 116 for key XX`?

This error means the instance has Bloom keys, which are not supported by cache analysis. Delete these keys from the instance, or upgrade to Tair (Enterprise Edition) and migrate the Bloom structure to TairBloom.

What do I do if I see `decode rdbfile error: rdb: unknown module type`?

The backup file contains self-developed Tair data structures that cache analysis does not support.

What do I do if I see `XXX backup failed` when using Create New Backup for Analysis?

A BGSAVE or BGREWRITEAOF command is running on the instance, which prevents a new backup from being created. Run the analysis during off-peak hours, or switch to Use Recent Backup File or Select a historical backup file.
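
Before you choose Create New Backup for Analysis, you can check whether one of these commands is still running. The following is a minimal sketch that inspects the `rdb_bgsave_in_progress` and `aof_rewrite_in_progress` fields, which open-source Redis reports in the output of `INFO persistence`. Whether your instance exposes these fields is an assumption, and `persistence_job_running` is a hypothetical helper name.

```python
def persistence_job_running(persistence_info: dict) -> bool:
    """Return True if a BGSAVE or an AOF rewrite is reported as in progress.

    `persistence_info` is the parsed output of `INFO persistence`, for example
    the dict returned by redis-py's `Redis.info("persistence")`.
    Missing fields are treated as 0 (not running).
    """
    bgsave = int(persistence_info.get("rdb_bgsave_in_progress", 0))
    aof_rewrite = int(persistence_info.get("aof_rewrite_in_progress", 0))
    return bool(bgsave or aof_rewrite)


# Usage with redis-py (not executed here; the endpoint is a placeholder):
#   import redis
#   client = redis.Redis(host="<instance-endpoint>", port=6379)
#   busy = persistence_job_running(client.info("persistence"))

# Hypothetical INFO snapshots for illustration.
idle_snapshot = {"rdb_bgsave_in_progress": 0, "aof_rewrite_in_progress": 0}
saving_snapshot = {"rdb_bgsave_in_progress": 1, "aof_rewrite_in_progress": 0}
```

If the helper returns True, waiting until the job finishes or analyzing an existing backup file avoids the failure described above.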

See also

Cache analysis is also available in the Tair (Redis OSS-compatible) console. For more information, see Use the offline key analysis feature.

API reference

| Operation | Description |
|---|---|
| CreateCacheAnalysisJob | Creates a cache analysis task |
| DescribeCacheAnalysisJob | Queries the details of a cache analysis task |
| DescribeCacheAnalysisJobs | Queries cache analysis tasks |
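
A typical way to combine these operations is to create a task and then query it until it finishes. The following sketch shows only that control flow. The request and response fields of the operations are not described in this topic, so `create_job` and `job_finished` are hypothetical callables that you would implement around CreateCacheAnalysisJob and DescribeCacheAnalysisJob with your SDK of choice.

```python
import time
from typing import Callable


def run_analysis(
    create_job: Callable[[], str],
    job_finished: Callable[[str], bool],
    poll_interval_s: float = 5.0,
    max_polls: int = 60,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Create an analysis task, poll until it finishes, and return its ID.

    `create_job` (hypothetical) wraps CreateCacheAnalysisJob and returns a task ID.
    `job_finished` (hypothetical) wraps DescribeCacheAnalysisJob and returns True
    once the task has completed.
    """
    job_id = create_job()
    for _ in range(max_polls):
        if job_finished(job_id):
            return job_id
        sleep(poll_interval_s)  # wait before querying the task again
    raise TimeoutError(f"task {job_id} did not finish after {max_polls} polls")
```

Passing the API calls in as functions keeps the polling logic independent of any particular SDK version and makes it easy to test with stand-in functions.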