Release 25.11 adds multi-architecture support, upgrades PyTorch to 2.9, and delivers a 20% end-to-end throughput improvement for 8B LLM training.

What's new

Multi-architecture support

The image now supports both amd64 and aarch64 architectures, with two CUDA variants:

-

CUDA 13.0.2 — requires NVIDIA driver ≥ 580; supports amd64 and aarch64

-

CUDA 12.8 — requires NVIDIA driver ≥ 575; supports amd64 only

Core component upgrades

| Component | Version | Notes |

|---|---|---|

| PyTorch | 2.9 | |

| Transformers | 4.57.1 | |

| DeepSpeed | 0.18.1 | |

| TransformerEngine | 2.8 | Adds Qwen3-VL support |

| vLLM | 0.11.2 |

This release contains no bug fixes.

Image contents

The image ships in two CUDA variants. Choose the variant that matches your NVIDIA driver version.

CUDA 13.0 image (amd64 and aarch64)

Requirements: NVIDIA driver ≥ 580

| Component | Version |

|---|---|

| Ubuntu | 24.04 |

| Python | 3.12.7+gc |

| CUDA | 13.0 |

| torch | 2.9.0+ali.10.nv25.10 |

| triton | 3.5.0 |

| transformer_engine | 2.9.0+70f53666 |

| deepspeed | 0.18.1+ali |

| flash_attn | 2.8.3 |

| transformers | 4.57.1+ali |

| grouped_gemm | 1.1.4 |

| accelerate | 1.11.0+ali |

| diffusers | 0.34.0 |

| mmengine | 0.10.3 |

| mmcv | 2.1.0 |

| mmdet | 3.3.0 |

| opencv-python-headless | 4.11.0.86 |

| ultralytics | 8.3.96 |

| timm | 1.0.22 |

| vllm | 0.11.2+cu130 |

| flashinfer-python | 0.5.2 |

| pytorch-dynamic-profiler | 0.24.11 |

| peft | 0.16.0 |

| ray | 2.52.0 |

| megatron-core | 0.14.0 |

| perf | 5.4.30 |

| gdb | 15.0.50 |

CUDA 12.8 image (amd64 only)

Requirements: NVIDIA driver ≥ 575

| Component | Version |

|---|---|

| Ubuntu | 24.04 |

| Python | 3.12.7+gc |

| CUDA | 12.8 |

| torch | 2.8.0.9+nv25.3 |

| triton | 3.4.0 |

| transformer_engine | 2.9.0 |

| deepspeed | 0.18.1+ali |

| flash_attn | 2.8.3 |

| flash_attn_3 | 3.0.0b1 |

| transformers | 4.57.1+ali |

| grouped_gemm | 1.1.4 |

| accelerate | 1.11.0+ali |

| diffusers | 0.34.0 |

| mmengine | 0.10.3 |

| mmcv | 2.1.0 |

| mmdet | 3.3.0 |

| opencv-python-headless | 4.11.0.86 |

| ultralytics | 8.3.96 |

| timm | 1.0.22 |

| vllm | 0.11.2 |

| flashinfer-python | 0.5.2 |

| pytorch-dynamic-profiler | 0.24.11 |

| peft | 0.16.0 |

| ray | 2.52.0 |

| megatron-core | 0.14.0 |

| perf | 5.4.30 |

| gdb | 15.0.50 |

Assets

Public images

CUDA 13.0.2 (driver ≥ 580, amd64 and aarch64)

egslingjun-registry.cn-wulanchabu.cr.aliyuncs.com/egslingjun/training-nv-pytorch:25.11-cu130-serverlessCUDA 12.8 (driver ≥ 575, amd64)

egslingjun-registry.cn-wulanchabu.cr.aliyuncs.com/egslingjun/training-nv-pytorch:25.11-cu128-serverlessVPC images

To speed up image pulls from within your virtual private cloud (VPC), replace the standard registry hostname with a region-specific VPC endpoint.

Standard format:

egslingjun-registry.cn-wulanchabu.cr.aliyuncs.com/egslingjun/<image:tag>VPC format:

acs-registry-vpc.<region-id>.cr.aliyuncs.com/egslingjun/<image:tag>Replace the placeholders with actual values:

| Placeholder | Description | Example |

|---|---|---|

<region-id> |

The ID of the region where your ACS service is deployed | cn-beijing, cn-wulanchabu |

<image:tag> |

The image name and tag | training-nv-pytorch:25.11-cu130-serverless |

This image is compatible with standard ACS and the multi-tenant Lingjun environment. It is not compatible with the single-tenant Lingjun environment.

Driver requirements

| CUDA version | Minimum NVIDIA driver |

|---|---|

| CUDA 13.0.2 | 580 |

| CUDA 12.8.0 | 575 |

For a complete driver compatibility matrix, see CUDA Application Compatibility. For information on managing driver versions, see CUDA Compatibility and Upgrades.

Key features and enhancements

PyTorch compiler optimization

torch.compile(), introduced in PyTorch 2.0, works well for single-GPU small-scale training. For LLM training, however, it provides limited benefit or can hurt performance because LLM training relies on GPU memory optimization and distributed frameworks such as Fully Sharded Data Parallel (FSDP) or DeepSpeed.

This release addresses that limitation with two optimizations:

-

Communication granularity control in DeepSpeed — the compiler now receives a complete compute graph over a wider scope, enabling broader compiler optimizations.

-

PyTorch compiler frontend improvements — compilation continues even when a graph break occurs. Mode matching and dynamic shape capabilities are also enhanced to produce more optimized compiled code.

After these optimizations, end-to-end (E2E) throughput increases by 20% when training an 8B LLM.

GPU memory optimization for recomputation

This release integrates automatic recommendation of the optimal number of activation recomputation layers directly into PyTorch. The recommendation is based on profiling models across different cluster configurations and parameter settings, collecting GPU memory utilization metrics, and analyzing the results. This feature is currently supported in the DeepSpeed framework.

End-to-end performance evaluation

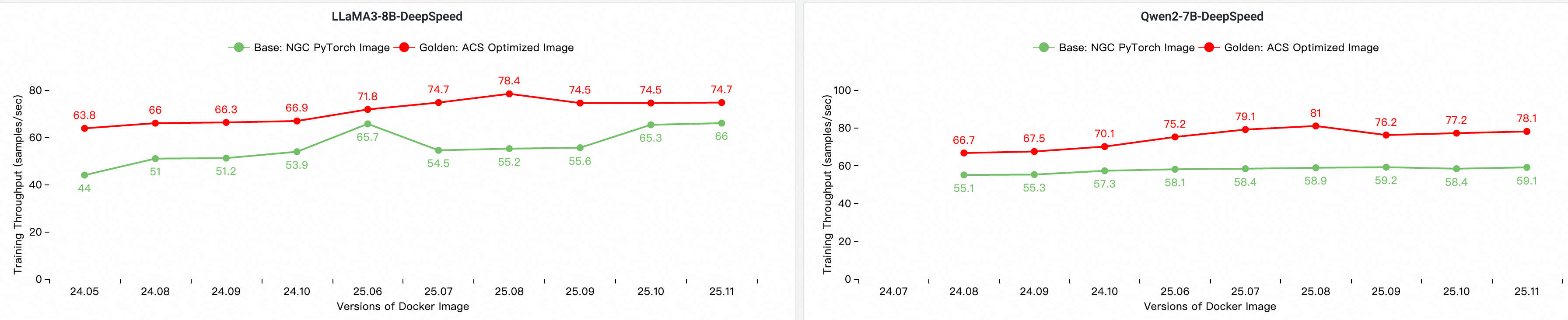

Using CNP (cloud-native AI performance benchmarking tool), we ran a comprehensive E2E performance comparison of this image against a standard base image, using mainstream open-source models and framework configurations.

Image performance evaluation: baseline comparison and iterative analysis

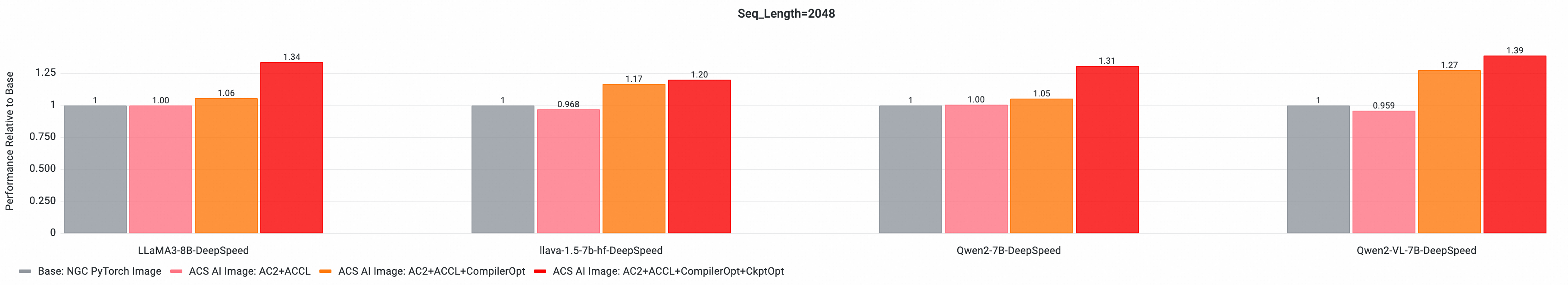

E2E performance contribution of core GPU components

The following ablation study is based on Golden-25.11. All tests ran on a multi-node GPU cluster. The configurations compared are:

-

Base — the standard NGC PyTorch image

-

ACS AI Image (Base + ACCL) — the base image with the ACCL communication library

-

ACS AI Image (AC2 + ACCL) — the Golden image using the AC2 BaseOS, no optimizations enabled

-

ACS AI Image (AC2 + ACCL + CompilerOpt) — the Golden image with only

torch.compileoptimization enabled -

ACS AI Image (AC2 + ACCL + CompilerOpt + CkptOpt) — the Golden image with both

torch.compileand selective gradient checkpointing enabled

Quick start

To use this image in ACS, select it from Artifact Center when creating a workload, or specify the image reference in a YAML manifest.

1. Pull the image

docker pull egslingjun-registry.cn-wulanchabu.cr.aliyuncs.com/egslingjun/training-nv-pytorch:<tag>Replace <tag> with the target image tag, such as 25.11-cu130-serverless or 25.11-cu128-serverless.

2. Enable optimizations

Compiler optimization — enable via the Transformers Trainer API:

Gradient checkpointing optimization — set the following environment variable before starting the container:

export CHECKPOINT_OPTIMIZATION=true3. Start the container and run a training job

The image includes ljperf, a built-in model training and benchmarking tool.

LLMs

# Start the container and enter its shell

docker run --rm -it --ipc=host --net=host --privileged \

egslingjun-registry.cn-wulanchabu.cr.aliyuncs.com/egslingjun/training-nv-pytorch:<tag>

# Run the training demo

ljperf benchmark --model deepspeed/llama3-8bConfiguration notes

This image includes custom modifications to PyTorch, DeepSpeed, and other core libraries. Reinstalling these packages from their upstream sources will overwrite those modifications and disable the performance optimizations.

DeepSpeed configuration:

Leave zero_optimization.stage3_prefetch_bucket_size blank or set it to auto.

NCCL network interface:

Set NCCL_SOCKET_IFNAME based on your GPU count:

| GPU count per pod | NCCL_SOCKET_IFNAME value |

Notes |

|---|---|---|

| 1, 2, 4, or 8 GPUs | eth0 |

Default in this image |

| 16 GPUs (full node) | hpn0 |

Enables the High-Performance Network (HPN) |

Known issues

Compiling fa3 directly in the CUDA 13.0.2 image results in an error. This is a known community issue.