Release notes for the training-nv-pytorch container image, version 25.10.

Announcements

Before upgrading, note the following constraints:

VPC image pull is limited to China (Beijing). Only images in the China (Beijing) region can be pulled over a Virtual Private Cloud (VPC).

Single-tenant Lingjun is not supported. This image is suitable for standard Alibaba Cloud Container Service (ACS) and the multi-tenant Lingjun environment. Do not use it in a single-tenant Lingjun setup.

Do not reinstall core packages. The image includes custom builds of PyTorch and DeepSpeed. Reinstalling these packages overwrites the optimizations.

What's new

Key features

Multi-architecture support: The image now supports both amd64 and aarch64 architectures.

Megatron-Core upgraded to 0.14.0.

Transformer Engine upgraded to 2.4.

vLLM upgraded to 0.11.0.

Bug fixes

No bug fixes in this release.

Contents

The table below lists the core components included in version 25.10 for each architecture.

| Component | aarch64 | amd64 |
|---|---|---|
| Use case | Training / Inference | Training / Inference |
| Framework | PyTorch | PyTorch |
| Requirements | NVIDIA Driver >= 575 | NVIDIA Driver >= 575 |
| Ubuntu | 24.04 | 24.04 |
| CUDA | 12.8 | 12.8 |
| Python | 3.12.7+gc | 3.12.7+gc |
| torch | 2.8.0.9+nv25.3 | 2.8.0.9+nv25.3 |
| accelerate | 1.7.0+ali | 1.7.0+ali |
| deepspeed | 0.16.9+ali | 0.16.9+ali |
| diffusers | 0.34.0 | 0.34.0 |
| flash_attn | 2.8.3 | 2.8.3 |
| flash_attn_3 | 3.0.0b1 | 3.0.0b1 |
| flashinfer-python | 0.2.5 | 0.2.5 |
| gdb | 15.0.50.20240403-git | 15.0.50.20240403-git |
| grouped_gemm | 1.1.4 | 1.1.4 |
| megatron-core | 0.14.0 | 0.14.0 |
| mmcv | 2.1.0 | 2.1.0 |
| mmdet | 3.3.0 | 3.3.0 |
| mmengine | 0.10.3 | 0.10.3 |
| opencv-python-headless | 4.11.0.86 | 4.11.0.86 |
| peft | 0.16.0 | 0.16.0 |
| perf | Not included | 5.4.30 |
| pytorch-dynamic-profiler | 0.24.11 | 0.24.11 |
| pytorch-triton / triton | 3.4.0 | 3.4.0 |
| ray | 2.50.1 | 2.50.1 |
| timm | 1.0.20 | 1.0.20 |
| transformer_engine | 2.4.0+3cd6870c | 2.4.0+3cd6870c |
| transformers | 4.56.1+ali | 4.56.1+ali |
| ultralytics | 8.3.96 | 8.3.96 |
| vllm | 0.11.0 | 0.11.0 |

perf is included only in the amd64 image. On aarch64, pytorch-triton is used; on amd64, triton is used.
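One way to confirm that the custom builds are still in place (for example, after installing extra dependencies) is to compare installed versions against the component table. The following is a minimal sketch, not an official tool: it assumes the distribution names and version strings reported by Python package metadata match the table exactly, and it checks only a subset of packages. The helper names `installed_version` and `find_mismatches` are hypothetical.

```python
from importlib import metadata

# Expected versions copied from the component table (a subset; extend as needed).
# Assumption: metadata reports exactly these strings inside the image.
EXPECTED = {
    "torch": "2.8.0.9+nv25.3",
    "deepspeed": "0.16.9+ali",
    "transformers": "4.56.1+ali",
    "megatron-core": "0.14.0",
    "vllm": "0.11.0",
}


def installed_version(dist_name):
    """Return the installed version of a distribution, or None if it is absent."""
    try:
        return metadata.version(dist_name)
    except metadata.PackageNotFoundError:
        return None


def find_mismatches(expected, lookup=installed_version):
    """Map each package whose version differs to an (expected, found) pair."""
    mismatches = {}
    for name, want in expected.items():
        found = lookup(name)
        if found != want:
            mismatches[name] = (want, found)
    return mismatches


if __name__ == "__main__":
    for name, (want, found) in find_mismatches(EXPECTED).items():
        print(f"{name}: expected {want}, found {found}")
```

A missing `+ali` or `+nv25.3` suffix in the output would suggest that a stock build has replaced the one shipped with the image.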

Driver requirements

Version 25.10 is based on CUDA 12.8.0 and requires NVIDIA driver 575 or later.

Exception — data center GPUs (such as T4): Use one of the following driver versions instead:

470.57 (or a later R470 release)

525.85 (or a later R525 release)

535.86 (or a later R535 release)

545.23 (or a later R545 release)

Drivers not forward-compatible with CUDA 12.8: R418, R440, R450, R460, R510, R520, R530, R545, R555, and R560. Upgrade before using this image.

For the complete compatibility matrix, see CUDA application compatibility. For background on CUDA versioning, see CUDA compatibility and upgrades.
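The driver rules above can be checked programmatically before a job is scheduled. The sketch below is illustrative only: it encodes the general minimum (575) and the four data center exception branches exactly as listed, and it treats any branch not named in this section as unsupported, which is a conservative assumption rather than a statement from the compatibility matrix. The function names are hypothetical; the `nvidia-smi` query flags are the standard ones.

```python
import subprocess

MIN_MAJOR = 575  # general requirement for this image

# Minimum minor release per driver branch for the data center GPU exception.
DATA_CENTER_BRANCH_MIN = {470: 57, 525: 85, 535: 86, 545: 23}


def parse_driver_version(text):
    """Turn a string such as '535.104.05' into (535, 104)."""
    parts = text.strip().split(".")
    major = int(parts[0])
    minor = int(parts[1]) if len(parts) > 1 else 0
    return major, minor


def driver_is_supported(version, data_center_gpu=False):
    """Apply the rules from this section to a driver version string."""
    major, minor = parse_driver_version(version)
    if major >= MIN_MAJOR:
        return True
    if data_center_gpu and major in DATA_CENTER_BRANCH_MIN:
        return minor >= DATA_CENTER_BRANCH_MIN[major]
    # Assumption: branches not named in this section are treated as unsupported.
    return False


def query_driver_version():
    """Read the driver version of the first GPU using nvidia-smi."""
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=driver_version", "--format=csv,noheader"],
        capture_output=True, text=True, check=True,
    )
    return result.stdout.splitlines()[0].strip()
```

On a GPU node, `driver_is_supported(query_driver_version())` applies the general rule; pass `data_center_gpu=True` on hardware such as T4 to apply the exception list.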

Key features and enhancements

PyTorch compiling optimization

torch.compile(), introduced in PyTorch 2.0, works well for single-GPU training. LLM training, however, typically relies on GPU memory optimization and on distributed frameworks such as Fully Sharded Data Parallel (FSDP) or DeepSpeed. In these scenarios, torch.compile() often yields no speedup and can even reduce performance.

This release addresses the issue with two optimizations:

Communication granularity control in DeepSpeed: Regulating communication granularity gives the compiler a more complete compute graph, enabling wider-scope optimization.

Frontend improvements: The PyTorch compiler frontend now handles graph breaks without stopping compilation. Mode matching and dynamic shape support are also improved.

After these changes, end-to-end (E2E) throughput improves by 20% when training an 8B LLM.

GPU memory optimization for recomputation

This release integrates a recommended number of activation recomputation layers into PyTorch. The recommendation is derived from performance tests across clusters and configurations, using collected system metrics such as GPU memory utilization, so users benefit from GPU memory optimization without manual tuning. The feature is available in the DeepSpeed framework.

End-to-end performance evaluation

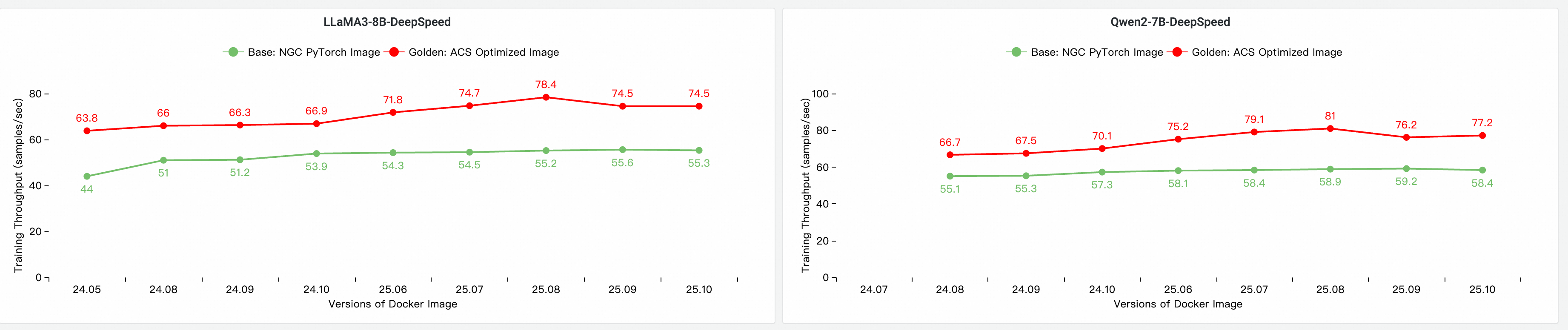

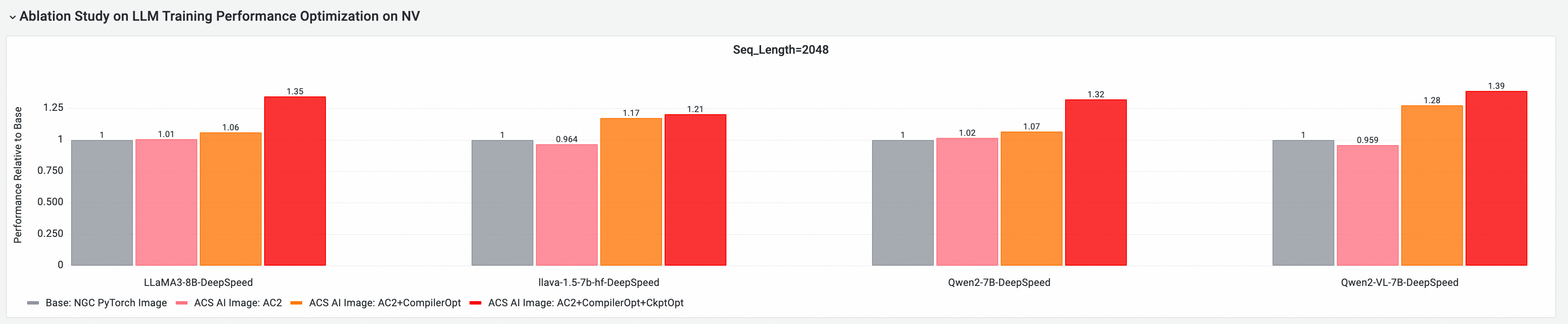

Using the cloud-native AI performance benchmarking tool CNP, this release was evaluated against a standard base image with mainstream open-source models and framework configurations. An ablation study measured the performance contribution of each optimization component.

Image performance evaluation: baseline comparison and iterative analysis

E2E performance contribution of core GPU components

The following tests are based on version 25.10, run on a multi-node GPU cluster. The four configurations compared are:

Base: Standard NGC PyTorch image.

ACS AI Image (AC2): The Golden image using AC2 BaseOS with no optimizations enabled.

ACS AI Image (AC2 + CompilerOpt): The Golden image using AC2 BaseOS with only the torch.compile optimization enabled.

ACS AI Image (AC2 + CompilerOpt + CkptOpt): The Golden image using AC2 BaseOS with both torch.compile and selective gradient checkpointing enabled.

Quick start

The following example pulls the image using Docker and runs a training job.

To use this image in ACS, select it from the Artifact Center in the console when creating a workload, or specify the image reference in a YAML manifest.

1. Pull the image

    docker pull egslingjun-registry.cn-wulanchabu.cr.aliyuncs.com/egslingjun/training-nv-pytorch:[tag]

2. Enable optimizations

Compiler optimization — To enable the compile optimization using the transformers trainer API:
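A minimal sketch, assuming the switch meant here is the standard `torch_compile` flag of the Hugging Face `TrainingArguments` class. The output directory and the helper names `compile_enabled_kwargs` and `build_training_args` are hypothetical and used only for illustration.

```python
def compile_enabled_kwargs(output_dir):
    """Keyword arguments for transformers.TrainingArguments with compilation on."""
    return {
        "output_dir": output_dir,  # hypothetical path; use your own
        "torch_compile": True,     # asks the Trainer to wrap the model with torch.compile()
    }


def build_training_args(output_dir):
    """Create TrainingArguments; transformers is imported lazily because it is
    expected to be present inside the image, not necessarily elsewhere."""
    from transformers import TrainingArguments
    return TrainingArguments(**compile_enabled_kwargs(output_dir))
```

Inside the container, the result of `build_training_args("./output")` would be passed as the `args` parameter of `transformers.Trainer` together with the model and dataset.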

Gradient checkpointing optimization — Set the following environment variable before starting training:

    export CHECKPOINT_OPTIMIZATION=true

3. Start the container and run training

The image includes ljperf, a built-in model training tool. The following example starts a container and runs an LLM training benchmark.

    # Start and enter the container
    docker run --rm -it --ipc=host --net=host --privileged \
        egslingjun-registry.cn-wulanchabu.cr.aliyuncs.com/egslingjun/training-nv-pytorch:[tag]

    # Run the training demo
    ljperf benchmark --model deepspeed/llama3-8b

Configuration notes

| Setting | Recommendation |
|---|---|
| PyTorch / DeepSpeed packages | Do not reinstall. The image ships custom builds; reinstalling overwrites the optimizations. |
| zero_optimization.stage3_prefetch_bucket_size | Leave blank or set to auto. |
| NCCL_SOCKET_IFNAME (1, 2, 4, or 8 GPUs) | Set to eth0. This is the default. |
| NCCL_SOCKET_IFNAME (16 GPUs, full node) | Set to hpn0 to use the High-Performance Network (HPN). |
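The recommendations in the table can be captured in a small launcher helper. This is a sketch under stated assumptions: the function names are hypothetical, GPU counts other than those in the table are rejected because the table gives no guidance for them, and `"stage": 3` is inferred from the `stage3_` prefix. The `"auto"` value is assumed to be resolved by the transformers DeepSpeed integration.

```python
import os


def nccl_socket_ifname(num_gpus):
    """Pick the network interface recommended in the table for a GPU count."""
    if num_gpus in (1, 2, 4, 8):
        return "eth0"  # default interface
    if num_gpus == 16:
        return "hpn0"  # High-Performance Network, full node
    raise ValueError(f"no recommendation for {num_gpus} GPUs")


def apply_nccl_setting(num_gpus, env=os.environ):
    """Write NCCL_SOCKET_IFNAME into the given environment mapping."""
    env["NCCL_SOCKET_IFNAME"] = nccl_socket_ifname(num_gpus)
    return env


def zero3_config():
    """DeepSpeed config fragment with the prefetch bucket size left to 'auto'."""
    return {
        "zero_optimization": {
            "stage": 3,  # assumption: inferred from the stage3_ prefix
            "stage3_prefetch_bucket_size": "auto",
        }
    }
```

Calling `apply_nccl_setting(16)` before the training process starts sets the variable for the current process and any child processes it launches.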

Assets

Version 25.10

Public image:

    egslingjun-registry.cn-wulanchabu.cr.aliyuncs.com/egslingjun/training-nv-pytorch:25.10-serverless

VPC image:

    acs-registry-vpc.{region-id}.cr.aliyuncs.com/egslingjun/{image:tag}

Replace the placeholders with actual values:

| Placeholder | Description | Example |
|---|---|---|
| {region-id} | Region where your ACS is activated | cn-beijing, cn-wulanchabu |
| {image:tag} | Image name and tag | training-nv-pytorch:25.10-serverless |

Only images in the China (Beijing) region can be pulled over a VPC.
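As an illustration of the placeholder substitution, the following sketch fills in the VPC image template. The function name `vpc_image_ref` is hypothetical, and the validation is deliberately minimal.

```python
def vpc_image_ref(region_id, image_tag):
    """Fill the VPC image template with a region ID and an image:tag string."""
    if not region_id:
        raise ValueError("region_id must not be empty")
    if ":" not in image_tag:
        raise ValueError("image_tag must have the form name:tag")
    return f"acs-registry-vpc.{region_id}.cr.aliyuncs.com/egslingjun/{image_tag}"


if __name__ == "__main__":
    print(vpc_image_ref("cn-beijing", "training-nv-pytorch:25.10-serverless"))
```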