If you plan to change the version of an ApsaraDB for ClickHouse Community-compatible Edition cluster, you can use the built-in migration feature in the ApsaraDB for ClickHouse console to migrate data between two Community-compatible Edition clusters. The migration supports full and incremental data, ensuring data integrity throughout the process.

Limitations: This method applies only to Community-compatible Edition clusters in the same Virtual Private Cloud (VPC) and the same region. If hot data exceeds 10 TB, cold data exceeds 1 TB, or the clusters are in different VPCs, use manual migration instead.

Prerequisites

Before you begin, ensure the following:

Both clusters:

- Are Community-compatible Edition clusters. To migrate between a Community-compatible Edition cluster and an Enterprise Edition cluster, see Migrate a ClickHouse Community-compatible Edition cluster to an Enterprise Edition cluster.
- Are in the Running state.
- Have a database account and password configured.
- Have consistent hot and cold tiered storage states.
- Are in the same VPC and the same region, with each cluster's IP addresses added to the other cluster's whitelist. To view the IP addresses of an ApsaraDB for ClickHouse instance, run `SELECT * FROM system.clusters;`. For whitelist configuration, see Set a whitelist. If network connectivity issues exist between the clusters, see How to resolve network connectivity issues between a destination cluster and a data source.
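You can check the whitelist requirement offline before you create the task. The following is a minimal Python sketch. It assumes you have copied two things into lists by hand: the other cluster's node IPs (for example, the `host_address` column of `system.clusters`) and this cluster's whitelist entries. The function name `missing_from_whitelist` and the sample addresses are hypothetical. The sketch only tests whether each address is covered by a whitelist entry; it does not test actual network reachability.

```python
import ipaddress


def missing_from_whitelist(peer_ips, whitelist):
    """Return the peer IPs that no whitelist entry covers.

    peer_ips: IP strings of the *other* cluster's nodes.
    whitelist: entries configured on this cluster, either single IPs
        ("192.168.1.5") or CIDR blocks ("192.168.0.0/24").
    """
    # strict=False also accepts entries such as "192.168.1.5/24" that have host bits set.
    networks = [ipaddress.ip_network(entry.strip(), strict=False) for entry in whitelist]
    missing = []
    for ip in peer_ips:
        addr = ipaddress.ip_address(ip)
        if not any(addr in net for net in networks):
            missing.append(ip)
    return missing


if __name__ == "__main__":
    source_ips = ["192.168.0.11", "192.168.0.12"]   # hypothetical source node IPs
    dest_whitelist = ["192.168.0.0/28", "10.0.0.5"]  # hypothetical destination whitelist
    print(missing_from_whitelist(source_ips, dest_whitelist))  # []
```

Run the check in both directions: source IPs against the destination whitelist, and destination IPs against the source whitelist.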

Source cluster only:

- Each local table has exactly one corresponding distributed table.

Destination cluster only:

- Runs the same version as the source cluster or a later version. For the latest version, see Community-compatible Edition release notes.
- Has unused disk storage space (excluding cold storage) of at least 1.2 times the used disk storage space (excluding cold storage) of the source cluster.
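You can estimate the storage requirement yourself before the precheck runs. The following is a minimal Python sketch. It assumes you have already collected two byte counts, restricted to the hot-storage disk and summed over all nodes:

- Used bytes on the source, for example with `SELECT sum(bytes_on_disk) FROM system.parts WHERE active`.
- Free bytes on the destination, for example with `SELECT free_space FROM system.disks`.

Which disk names hold hot and cold data depends on the cluster configuration, and the console's own check may aggregate differently, so treat the result as an estimate. The function names are hypothetical.

```python
def enough_destination_space(dest_free_bytes: int, source_used_bytes: int) -> bool:
    """True if free hot storage on the destination is at least 1.2x the hot
    storage used on the source (cold storage excluded on both sides)."""
    # Integer form of free >= 1.2 * used, which avoids float rounding at the boundary.
    return dest_free_bytes * 10 >= source_used_bytes * 12


def shortfall_bytes(dest_free_bytes: int, source_used_bytes: int) -> int:
    """Extra free bytes the destination still needs; 0 if the rule is already met."""
    required = -(-source_used_bytes * 12 // 10)  # ceil(1.2 * used) using integers only
    return max(0, required - dest_free_bytes)


if __name__ == "__main__":
    GiB = 1024 ** 3
    # Hypothetical figures: 500 GiB used on the source, 550 GiB free on the destination.
    print(enough_destination_space(550 * GiB, 500 * GiB))  # False
    print(shortfall_bytes(550 * GiB, 500 * GiB) // GiB)    # 50
```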

What gets migrated

The migration copies the following objects:

- Clusters, databases, tables, materialized views, user permissions, and cluster configurations.
- Data dictionaries created using SQL. If a data dictionary accesses an external service, confirm that the service is available and that its whitelist allows access from the destination cluster. If a data dictionary uses an internal ClickHouse table as its data source and its `HOST` parameter is set to an IP address, access may fail after migration because the IP address changes. Note the new `HOST` IP address and manually recreate the data dictionary.

Data dictionaries created using XML are not supported. To check for XML-based dictionaries, run `SELECT * FROM system.dictionaries WHERE (database = '') OR isNull(database);`. If the query returns any rows, XML-based dictionaries exist.
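You can script both dictionary checks once you have the query results. The following is a minimal Python sketch with these assumptions:

- You fetched the `database` column from the `system.dictionaries` query above.
- You fetched the DDL of each SQL dictionary with `SHOW CREATE DICTIONARY`.
- `HOST` appears as a single-quoted literal in that DDL.

The helper names and the sample DDL are illustrative and not part of the migration feature.

```python
import ipaddress
import re

# Assumption: HOST is written as a single-quoted literal, e.g. HOST '10.0.0.1'.
HOST_RE = re.compile(r"\bHOST\s+'([^']*)'", re.IGNORECASE)


def is_xml_dictionary(database):
    """Mirror of the WHERE clause (database = '') OR isNull(database)."""
    return database is None or database == ""


def host_ip_literal(create_ddl: str):
    """Return the HOST value if it is an IP address literal, else None."""
    match = HOST_RE.search(create_ddl)
    if match is None:
        return None  # no HOST parameter in the source definition
    try:
        ipaddress.ip_address(match.group(1))
    except ValueError:
        return None  # a hostname, which does not change with node IPs
    return match.group(1)


if __name__ == "__main__":
    # Hypothetical dictionary DDL as returned by SHOW CREATE DICTIONARY.
    ddl = ("CREATE DICTIONARY db.dict_users (id UInt64, name String) PRIMARY KEY id "
           "SOURCE(CLICKHOUSE(HOST '192.168.0.10' PORT 9000 TABLE 'users' DB 'db')) "
           "LIFETIME(300) LAYOUT(FLAT())")
    print(host_ip_literal(ddl))  # 192.168.0.10 -> recreate after migration
```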

Unsupported objects:

- Kafka and RabbitMQ engine tables: These cannot be migrated. Clear them from the source cluster before migration, then create them in the destination cluster or use different consumer groups.

  Important: If you do not clear Kafka and RabbitMQ engine tables before migration, both clusters may consume from the same queue, which risks splitting data between the source and destination.

- Non-MergeTree tables (such as external tables and Log tables): Only the table schema is migrated, not the business data. To migrate business data from non-MergeTree tables, use the `remote` function.

Migration overview

You perform the entire migration from the destination cluster:

- Create a migration task and configure the source and destination clusters.
- Confirm the migration content and run the precheck.
- Set the write-stop time window and start the migration.
- Monitor migration progress and handle write-stop triggers.
- (Optional) Cancel the migration or adjust the write-stop window if needed.

Usage notes

Migration speed:

- A single node in the destination cluster typically migrates data at more than 20 MB/s.
- If the source cluster's write speed also exceeds 20 MB/s, verify that the destination cluster can keep pace. If it cannot, the migration never catches up with new writes and never completes.

Behavior during migration:

- The destination cluster pauses merge operations during migration; the source cluster does not.
- Source cluster: reads and writes are allowed. Data Definition Language (DDL) operations (adding, deleting, or modifying database and table metadata) are not allowed.
- Write-stop: when the estimated remaining migration time drops to 10 minutes or less, the source cluster automatically pauses writes, but only within the preset write-stop time window. Writes resume when all data is migrated or the time window ends.

Data volume limits:

| Data type | Limit | Action if exceeded |
|---|---|---|
| Cold data | Total cold data must not exceed 1 TB | Clear cold data from the source cluster before migration |
| Hot data | Must not exceed 10 TB | Use manual migration instead |
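You can check the data volume limits against per-disk totals. One way to get them is `SELECT disk_name, sum(bytes_on_disk) FROM system.parts WHERE active GROUP BY disk_name`. This assumes the cold tier shows up as its own disk, and disk names vary by cluster. The following minimal Python sketch maps those totals to the actions in the table. It reads "TB" as binary terabytes (1024^4 bytes), which is an assumption. The names are hypothetical.

```python
TB = 1024 ** 4  # assumption: limits are read as binary terabytes

HOT_LIMIT_BYTES = 10 * TB
COLD_LIMIT_BYTES = 1 * TB


def volume_actions(hot_bytes: int, cold_bytes: int) -> list:
    """Return the actions the data volume limits require; an empty list means none."""
    actions = []
    if cold_bytes > COLD_LIMIT_BYTES:
        actions.append("clear cold data from the source cluster before migration")
    if hot_bytes > HOT_LIMIT_BYTES:
        actions.append("use manual migration instead")
    return actions


if __name__ == "__main__":
    # Hypothetical totals: 4 TB hot, 1.5 TB cold.
    print(volume_actions(4 * TB, 3 * TB // 2))
```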

Potential impacts

Source cluster:

During migration, DDL operations are blocked. The source cluster automatically stops writes when the estimated remaining time reaches 10 minutes, if that moment falls within the preset write-stop window. Writes resume after the window ends or after migration completes.

Destination cluster:

After migration completes, the destination cluster runs frequent merge operations for a period. This increases I/O usage and can raise latency for service requests. Plan for this impact before switching traffic. To estimate how long the merge operations will take, see Calculate the merge duration after migration.

Procedure

Perform all steps on the destination cluster, not the source cluster.

Step 1: Create a migration task

-

Log on to the ApsaraDB for ClickHouse console.

-

On the Clusters page, select the Clusters of Community-compatible Edition tab, and click the ID of the destination cluster.

-

In the left navigation pane, click Data Migration and Synchronization > Migration from ClickHouse.

-

Click Create Migration Task.

-

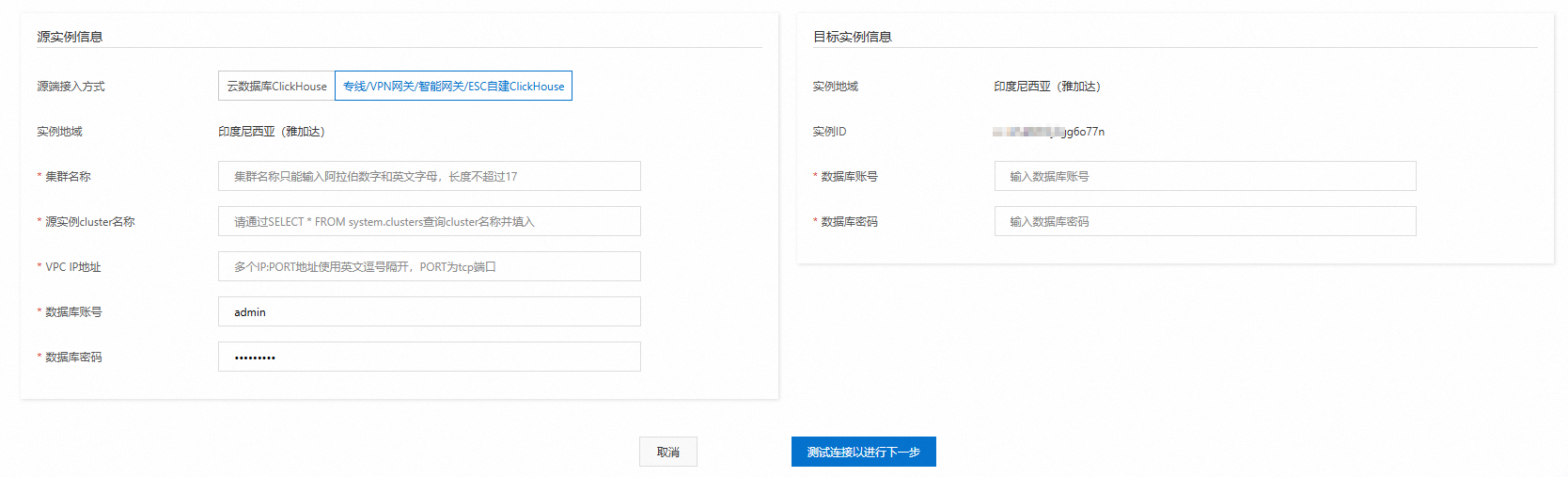

Configure the source and destination instances, then click Test Connectivity and Proceed.

If the connectivity test fails, reconfigure the source and destination instances as prompted.

-

Review the migration content details, then click Next: Pre-detect and Start Synchronization.

-

The system runs a precheck: Instance Status Detection, Storage Space Detection, and Local Table and Distributed Table Detection.

If the precheck passes:

- Review the impact information displayed on the page.
- Set the Time of Stopping Data Writing (the write-stop time window). Keep the following in mind:
  - The source cluster stops writes during the last 10 minutes of migration to ensure data consistency.
  - Set a write-stop window of at least 30 minutes to maximize the success rate.
  - The migration task must end within 5 days of creation, so the end date of Time of Stopping Data Writing must be no later than the current date + 5 days.
  - Set the write-stop window during off-peak hours to minimize business impact.
- Click Completed to create and start the task.
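You can check the write-stop rules before you click Completed. The following is a minimal Python sketch with these assumptions:

- The window is a single start/end pair.
- "Current date + 5 days" means exactly five 24-hour days after task creation. The console may round to calendar dates.

The 30-minute minimum is a recommendation, and the sketch reports it as such. The function and constant names are hypothetical.

```python
from datetime import datetime, timedelta

RECOMMENDED_MIN_WINDOW = timedelta(minutes=30)  # recommendation, not a hard limit
MAX_TASK_LIFETIME = timedelta(days=5)           # task must end within 5 days of creation


def check_write_stop_window(created_at: datetime, start: datetime, end: datetime) -> list:
    """Return a list of problems with a planned write-stop window; empty means none found."""
    problems = []
    if end <= start:
        problems.append("window end must be after window start")
    elif end - start < RECOMMENDED_MIN_WINDOW:
        problems.append("window is shorter than the recommended 30 minutes")
    if end > created_at + MAX_TASK_LIFETIME:
        problems.append("window ends more than 5 days after task creation")
    return problems


if __name__ == "__main__":
    created = datetime(2024, 1, 1, 10, 0)  # hypothetical task creation time
    # Hypothetical off-peak window: 02:00 to 03:00 on the next day.
    print(check_write_stop_window(created, datetime(2024, 1, 2, 2, 0), datetime(2024, 1, 2, 3, 0)))  # []
```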

If the precheck fails, resolve the issues as prompted and retry. The precheck requirements are:

| Check item | Requirement |
|---|---|
| Instance Status Detection | No management tasks (such as scale-out or configuration changes) are running on either cluster |
| Storage Space Detection | Destination storage space >= 1.2x source storage space |
| Local Table and Distributed Table Detection | Each local table in the source cluster has exactly one distributed table. Delete extra distributed tables or create a missing one |
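You can reproduce the Local Table and Distributed Table Detection rule from `system.tables` before you start the task. The following is a minimal Python sketch with these assumptions:

- You fetched rows with `SELECT database, name, engine, engine_full FROM system.tables` on one source node.
- Every MergeTree-family table counts as a local table. The console's exact definition may differ, for example for inner tables of materialized views.
- The first three `Distributed(...)` arguments in `engine_full` are literal names.

The function names and sample rows are hypothetical.

```python
import re
from collections import Counter

# First three arguments of Distributed(cluster, database, table[, sharding_key]).
DIST_ARGS = re.compile(r"^Distributed\(\s*([^,]+),\s*([^,]+),\s*([^,)]+)")


def _unquote(token: str) -> str:
    """Strip whitespace and identifier/string quotes from an engine argument."""
    return token.strip().strip("'\"`")


def distributed_table_issues(rows):
    """rows: (database, name, engine, engine_full) tuples from system.tables.

    Returns {(database, local_table): distributed_count} for every
    MergeTree-family table that does not have exactly one Distributed table.
    """
    counts = Counter()
    local_tables = []
    for database, name, engine, engine_full in rows:
        if engine == "Distributed":
            match = DIST_ARGS.match(engine_full)
            if match:
                _, target_db, target_table = (_unquote(g) for g in match.groups())
                counts[(target_db, target_table)] += 1
        elif engine.endswith("MergeTree"):
            local_tables.append((database, name))
    # 0 means a distributed table must be created; >1 means extras must be deleted.
    return {t: counts[t] for t in local_tables if counts[t] != 1}


if __name__ == "__main__":
    rows = [  # hypothetical system.tables rows
        ("db", "events_local", "MergeTree", "MergeTree ORDER BY ts"),
        ("db", "users_local", "MergeTree", "MergeTree ORDER BY id"),
        ("db", "users_a", "Distributed", "Distributed(default, db, users_local, rand())"),
        ("db", "users_b", "Distributed", "Distributed('default', 'db', 'users_local')"),
    ]
    print(distributed_table_issues(rows))
```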

Step 2: Assess migration feasibility (when source write speed exceeds 20 MB/s)

Skip this step if the source cluster's write speed is less than 20 MB/s.

If the source cluster writes at more than 20 MB/s, its write speed is close to the typical single-node migration speed of the destination cluster, so the migration may not catch up. Verify the destination cluster's actual write speed to confirm that the migration can complete:

- Check the destination cluster's Disk throughput metric. For details, see View monitoring metrics.
- Compare the write speeds:
  - Destination write speed greater than source write speed: the migration has a high success rate. Proceed to Step 3.
  - Destination write speed lower than source write speed: the migration may fail. Cancel the migration task or switch to manual migration.
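A rough estimate shows why the comparison matters. The following minimal Python sketch uses a simplified model that is an assumption, not the console's algorithm: the remaining backlog shrinks at the destination's write speed minus the source's write speed. If that difference is zero or negative, the backlog never shrinks, and the 10-minute write-stop threshold is never reached. The function name and figures are hypothetical, and MB/s is treated as MiB/s.

```python
def estimated_catch_up_hours(backlog_gib: float, dest_mb_per_s: float,
                             source_write_mb_per_s: float):
    """Rough hours until the migration drains its backlog while the source keeps writing.

    Simplified model (assumption): the backlog shrinks at the destination's write
    speed minus the source's write speed. Returns None when it never shrinks.
    """
    net_mb_per_s = dest_mb_per_s - source_write_mb_per_s
    if net_mb_per_s <= 0:
        return None  # destination cannot keep pace: cancel or switch to manual migration
    seconds = backlog_gib * 1024 / net_mb_per_s  # 1 GiB = 1024 MiB
    return seconds / 3600


if __name__ == "__main__":
    # Hypothetical: 500 GiB still to copy, destination 25 MB/s, source 22 MB/s.
    print(round(estimated_catch_up_hours(500, 25, 22), 1))  # 47.4
```

A small margin between the two speeds can still stretch the migration toward the 5-day task limit.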

Step 3: Monitor the migration task

- On the Clusters page, select the Clusters of Community-compatible Edition tab, and click the ID of the destination cluster.
- In the left navigation pane, click Data Migration and Synchronization > Migration from ClickHouse. The migration list shows each task's Migration Status, Running Information, and Data Write-Stop Window.

When the estimated remaining time in Running Information drops to 10 minutes or less and Migration Status is Migrating, the write-stop is triggered. What happens next depends on the timing:

| Condition | Result |
|---|---|
| Trigger time is within the preset write-stop window | The source cluster stops writes |
| Trigger time is outside the preset window and no later than the task creation date + 5 days | Modify the write-stop time window to continue the migration |
| Trigger time is outside the preset window and later than the task creation date + 5 days | The migration fails. Cancel the task, clear the migrated data from the destination cluster, and recreate the task |
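The decision table in this step can be expressed as a small function, for example to check in advance how a planned window interacts with the expected trigger time. The following is a minimal Python sketch. The inclusive window bounds and the reading of "task creation date + 5 days" as exactly 120 hours after creation are assumptions. The function name is hypothetical.

```python
from datetime import datetime, timedelta

TASK_LIFETIME = timedelta(days=5)


def write_stop_outcome(trigger: datetime, window_start: datetime,
                       window_end: datetime, created_at: datetime) -> str:
    """Map the time the write-stop is triggered to the result in the Step 3 decision table."""
    if window_start <= trigger <= window_end:
        return "source cluster stops writes"
    if trigger <= created_at + TASK_LIFETIME:
        return "modify the write-stop time window"
    return "migration fails: cancel, clear destination data, recreate the task"


if __name__ == "__main__":
    created = datetime(2024, 1, 1, 10, 0)  # hypothetical values throughout
    start, end = datetime(2024, 1, 3, 1, 0), datetime(2024, 1, 3, 3, 0)
    print(write_stop_outcome(datetime(2024, 1, 3, 2, 0), start, end, created))
```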

Step 4: Cancel the migration task (optional)

- On the Clusters page, select the Clusters of Community-compatible Edition tab, and click the ID of the destination cluster.
- In the left navigation pane, click Data Migration and Synchronization > Migration from ClickHouse.
- In the Actions column of the migration task, click Cancel Migration.
- In the Cancel Migration dialog box, click OK.

The task list does not update immediately after cancellation. Refresh the page to check the updated status.

After cancellation, Migration Status changes to Completed.

Before starting a new migration, clear the migrated data from the destination cluster to avoid data duplication.

Step 5: Modify the write-stop time window (optional)

- On the Clusters page, select the Clusters of Community-compatible Edition tab, and click the ID of the destination cluster.
- In the left navigation pane, click Data Migration and Synchronization > Migration from ClickHouse.
- In the Actions column of the migration task, click Modify Data Write-Stop Time Window.
- In the Modify Data Write-Stop Time Window dialog box, select a Time of Stopping Data Writing. The same rules that apply when you create the migration task apply here.
- Click OK.

Next steps

To migrate data from a self-managed ClickHouse cluster to ApsaraDB for ClickHouse, see Migrate data from a self-managed ClickHouse cluster to an ApsaraDB for ClickHouse Community-compatible Edition cluster.