ksqlDB is a streaming SQL engine for Apache Kafka. It replaces custom stream processing code with SQL statements that you run against Kafka topics through a built-in GUI editor.

With ksqlDB, you can:

Query streaming data in real time -- run continuous SQL queries against live Kafka topics

Create materialized views -- maintain always-up-to-date query results backed by Kafka

Process streams with SQL -- perform aggregation, joins, windowed operations, and session-based operations without writing application code
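As a rough illustration of the windowed aggregation mentioned above, the following sketch counts payments and sums their amounts per id in one-minute tumbling windows. It assumes a stream named ksql_test_stream with id and amount columns, like the one created later in this guide. The aliases payment_count and total_amount are hypothetical names chosen for the example.

```sql
-- Sketch only: assumes ksql_test_stream exists and exposes id and amount columns.
-- payment_count and total_amount are illustrative aliases, not part of this guide.
SELECT id,
       COUNT(*)    AS payment_count,
       SUM(amount) AS total_amount
FROM ksql_test_stream
WINDOW TUMBLING (SIZE 1 MINUTE)
GROUP BY id
EMIT CHANGES;
```

Because the statement ends with EMIT CHANGES, it runs as a continuous query and emits an updated row whenever a window's aggregate changes.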

ksqlDB consolidates the stream processing engine and connectors into a single system. For more about the underlying architecture, see ksqlDB for Confluent Platform.

Architecture

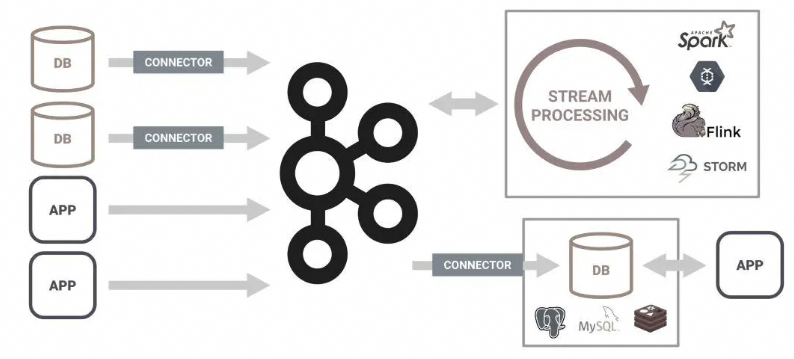

Traditional stream processing requires separate systems for connectors, a processing engine, and a storage layer. ksqlDB merges all three into a single platform.

Traditional stream processing architecture

ksqlDB-based stream processing architecture

Prerequisites

Before you begin, make sure that you have:

An ApsaraMQ for Confluent instance

A topic created for ksqlDB (this guide uses a topic named ksql_test)

A schema configured with Avro validation for the topic

Schema validation enabled on the topic

Appropriate RBAC permissions for the ksqlDB cluster

The following sections walk you through each prerequisite.

Create and configure a topic

Create a topic. This guide uses a topic named ksql_test.

Create a schema. Select Avro as the validation mode and add the following schema definition:

{ "namespace": "io.confluent.examples.clients.basicavro", "type": "record", "name": "Payment", "fields": [ { "name": "id", "type": "string" }, { "name": "amount", "type": "double" } ] }

This schema defines a Payment record with two fields: id (string) and amount (double). All messages produced to ksql_test must conform to this schema.

Enable schema validation for the ksql_test topic.

Set up authorization

ApsaraMQ for Confluent uses role-based access control (RBAC) to manage ksqlDB cluster access. This guide uses a user named test.

Create the test user and assign the following roles. For more information, see Manage users and grant permissions to them.

| Username | Cluster type | Resource type | Role |
|---|---|---|---|
| test | Kafka cluster | Cluster | SystemAdmin |
| test | KSQL | Cluster | ResourceOwner |
| test | Schema Registry | Cluster | SystemAdmin |

Grant read permissions on the ksql_test topic to ksql, the default ksqlDB user:

| Username | Cluster type | Resource type | Role |
|---|---|---|---|
| ksql | Kafka cluster | Topic | DeveloperRead |

Create a stream and query data

With the topic, schema, and permissions in place, open the ksqlDB editor in Control Center to create a stream and run queries.

Open the ksqlDB editor

Log on to the ApsaraMQ for Confluent console. In the left-side navigation pane, click Instances.

In the top navigation bar, select the region where your instance resides. On the Instances page, click the instance name.

In the upper-right corner of the Instance Details page, click Log on to Control Center.

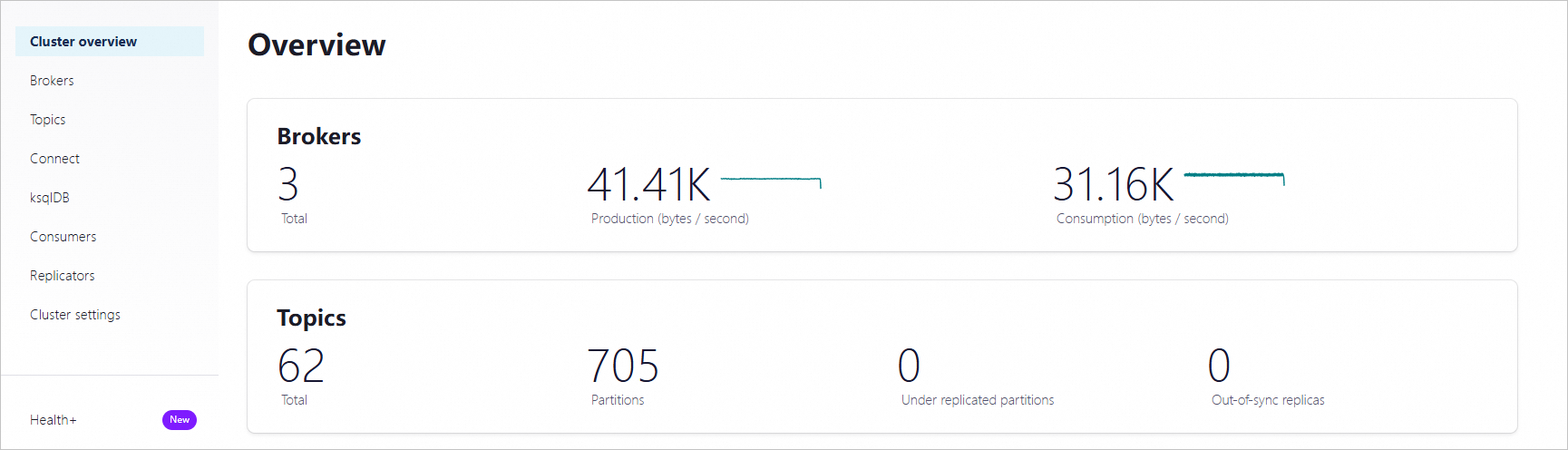

On the Home page of Control Center, click the controlcenter.clusterk card to go to the Cluster overview page.

In the left-side navigation pane, click ksqlDB, then click the name of the ksqlDB cluster.

On the cluster details page, click the Editor tab to open the SQL editor.

Create a stream

Run the following statement in the editor to create a stream backed by the ksql_test topic:

CREATE STREAM ksql_test_stream WITH (KAFKA_TOPIC='ksql_test', VALUE_FORMAT='AVRO');

Each parameter serves a specific purpose:

| Parameter | Description |
|---|---|
| KAFKA_TOPIC | The Kafka topic that backs the stream. The stream reads events from this topic. In this example, ksql_test is the topic created earlier. |
| VALUE_FORMAT | Serialization format for message values. AVRO matches the Avro schema you configured on the topic. Other supported formats include JSON and PROTOBUF. |

For more stream creation options, see Quick Start.

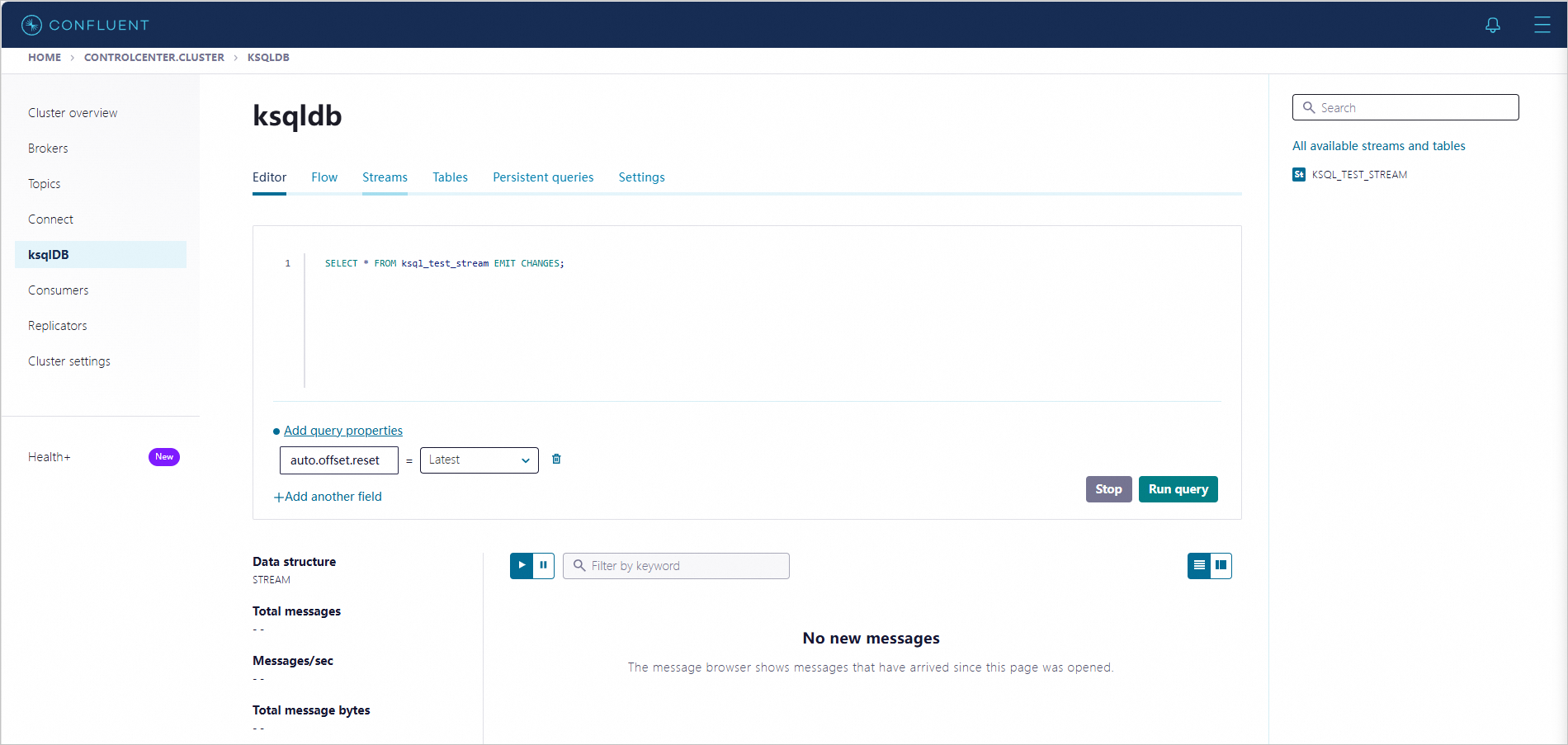
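The CREATE STREAM statement declares no columns, so ksqlDB is expected to infer them from the Avro schema registered for the topic. One way to check the result, sketched here, is to list the streams and describe the new one. The exact output layout depends on the ksqlDB version, and unquoted column names are normally shown in uppercase (for example, ID and AMOUNT).

```sql
-- List all streams registered on this ksqlDB cluster.
SHOW STREAMS;

-- Show the columns and types inferred for the new stream.
DESCRIBE ksql_test_stream;
```

If the columns are missing or differ from the Payment schema, check that the schema is registered for the ksql_test topic before recreating the stream.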

Query the stream

Run a push query to continuously receive new events as they arrive:

SELECT * FROM ksql_test_stream EMIT CHANGES;

This is a push query. It runs continuously and pushes each new event to the client as it arrives on the underlying topic. The query does not terminate until you stop it.

ksqlDB also supports pull queries, which follow a traditional request-response model: they return a point-in-time result from a materialized view and then terminate, similar to a standard database SELECT.
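To make the pull query model concrete, the following sketch first materializes an aggregate of the stream as a table and then looks up one key. The table name payment_totals and the alias total_amount are hypothetical. Creating the table also writes to a new backing topic, so this assumes the ksql user has permissions beyond the read-only role granted earlier in this guide.

```sql
-- Hypothetical materialized view: running total of amount per id.
-- Assumes the ksql user may create and write the table's backing topic.
CREATE TABLE payment_totals AS
  SELECT id,
         SUM(amount) AS total_amount
  FROM ksql_test_stream
  GROUP BY id
  EMIT CHANGES;

-- Pull query: returns the current total for one key, then terminates.
SELECT id, total_amount
FROM payment_totals
WHERE id = 'Tome';
```

The second statement has no EMIT CHANGES clause, which is what makes it a pull query rather than a push query.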

Verify with a test message

To confirm that the stream processes events correctly, produce a test message and observe the push query output.

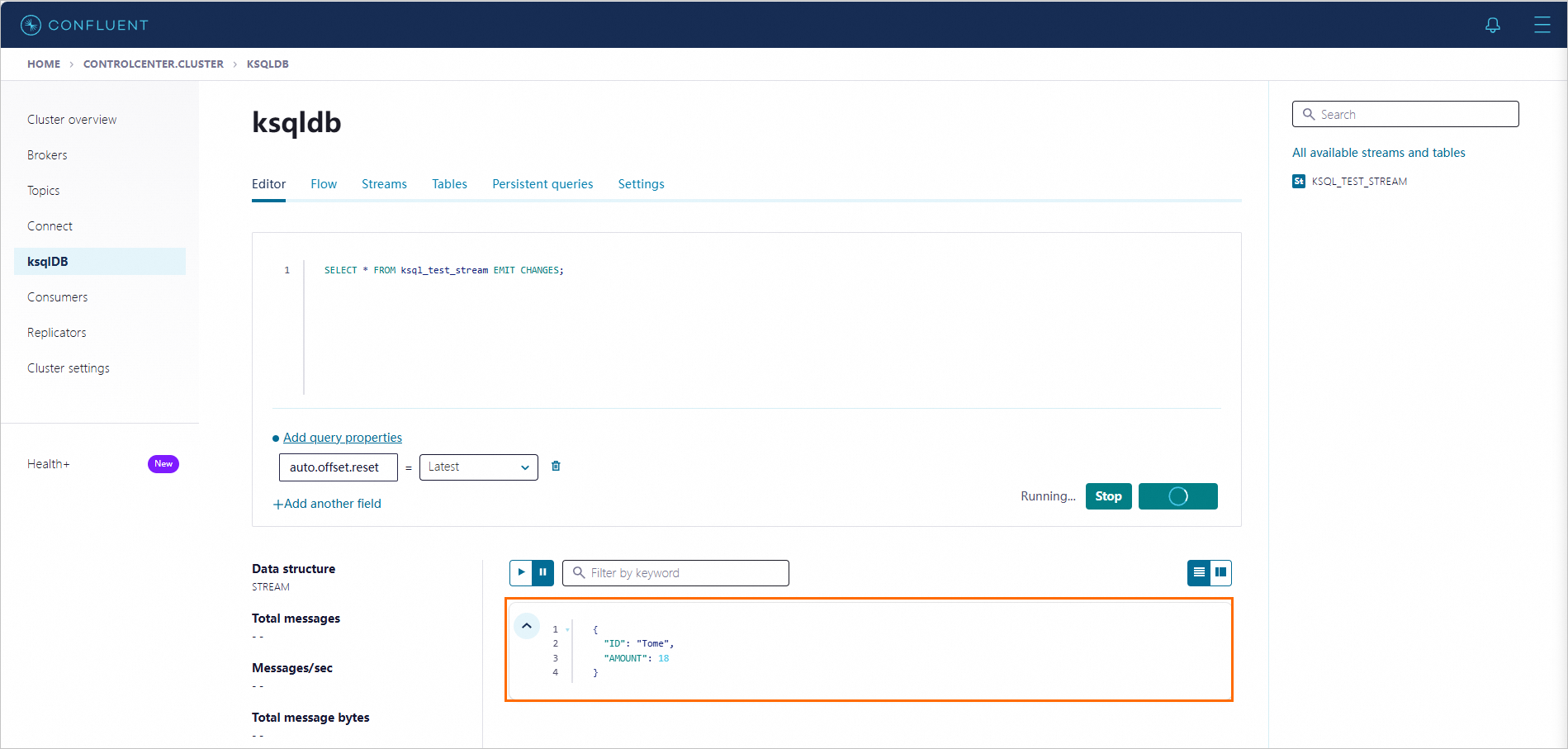

Start the push query. In the ksqlDB Editor tab, enter the following statement and click Run query:

SELECT * FROM ksql_test_stream EMIT CHANGES;

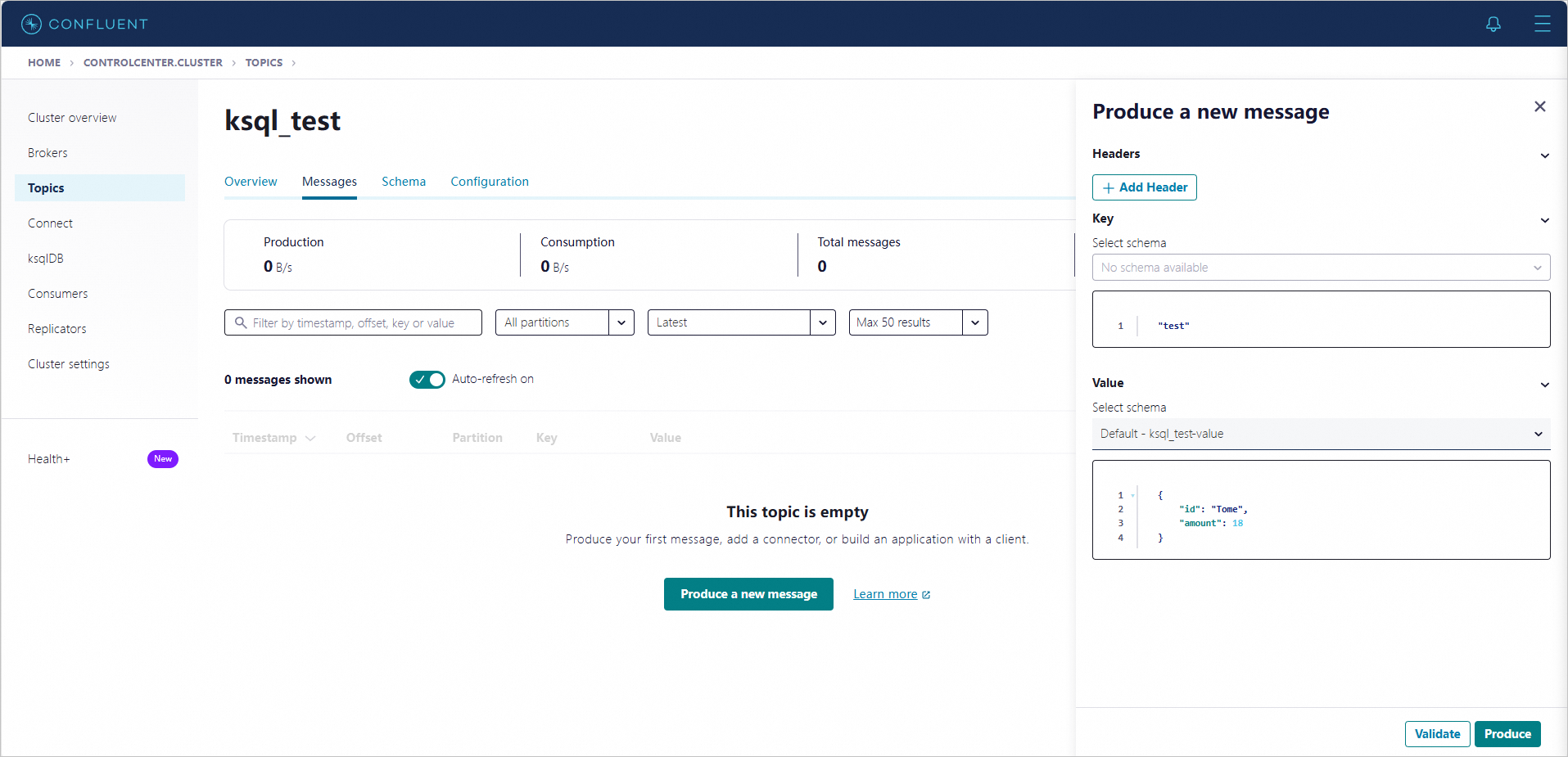

Produce a test message.

Open a new Control Center window.

Navigate to the ksql_test topic details page and click the Messages tab.

Click Produce a new message. In the Produce a new message panel, enter the following message body and click Produce:

{ "id": "Tome", "amount": 18 }

Confirm the result. Switch back to the editor window where the push query is running. The test message appears in the query output.
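If the topic receives other traffic, a filtered variant of the push query can make the test message easier to spot. This is a minimal sketch that assumes the inferred columns are named id and amount and that the test message uses the id value shown above.

```sql
-- Push query limited to the test message's id; runs until you stop it.
SELECT id, amount
FROM ksql_test_stream
WHERE id = 'Tome'
EMIT CHANGES;
```

Like the unfiltered query, this one generally needs to be running before the message is produced, because a push query typically starts reading from the latest offset unless configured otherwise.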