When a complex SQL query runs slowly in AnalyticDB for MySQL, the bottleneck is often a single stage that blocks downstream stages or a task that receives far more data than its peers. The Stages & Tasks tab breaks down every query by stage and task so you can pinpoint the bottleneck, detect data skew, and identify whether time is lost to computation, queuing, or network latency.

Task details are available only for queries that take more than 1 second to complete.

How it works

AnalyticDB for MySQL divides each query into stages that run on worker and executor nodes. Stages that have no dependencies can run in parallel; stages that depend on the output of earlier stages must run in series. Serial dependencies between stages are the most common reason complex SQL queries are slow.

Within a stage, multiple tasks run concurrently across nodes. Each task processes a partition of the stage's input data.

For each stage, AnalyticDB for MySQL tracks:

Input and output volume (rows and bytes)

Peak memory and cumulative CPU time across all operators

Execution status

For each task within a stage, AnalyticDB for MySQL tracks additional metrics that help you identify data skew, long tail issues, and where time is spent—computation versus queuing or network latency.

View stage and task details in the console

Log on to the AnalyticDB for MySQL console. In the upper-left corner, select a region. In the left-side navigation pane, click Clusters. On the Clusters page, click an edition tab, find the cluster, and click the cluster ID.

In the left-side navigation pane, click Diagnostics and Optimization.

On the SQL Queries tab, find the query to diagnose and click Diagnose in the Actions column.

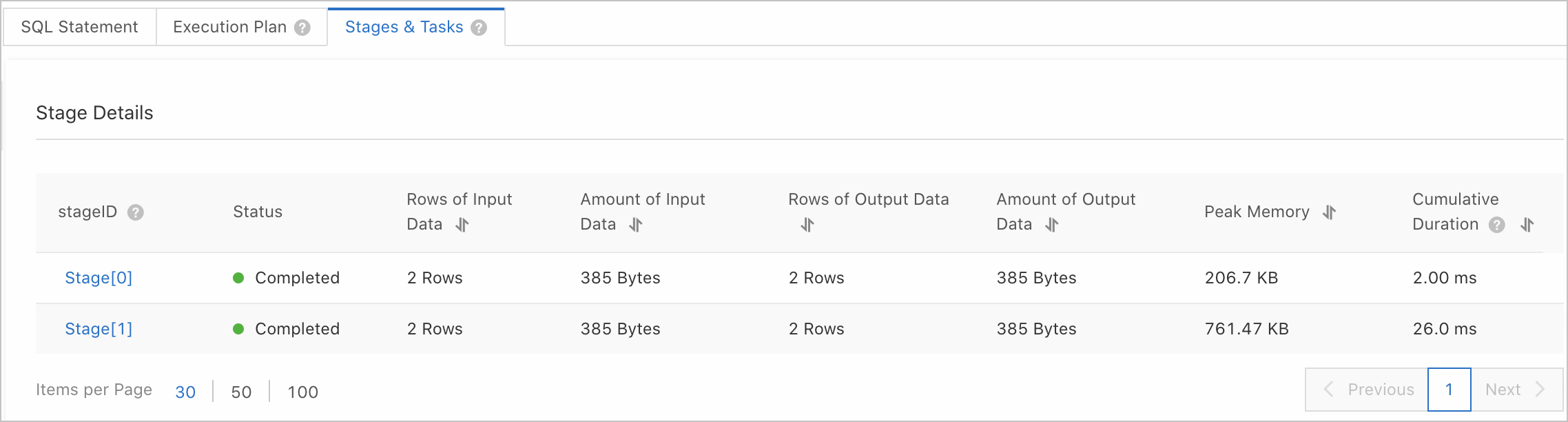

Click the Stages & Tasks tab to see stage-level details. For a description of each column, see Stage parameters

Click a stage ID to drill down into the tasks for that stage. For a description of each column, see Task parameters.

Diagnose common problems

Use the table below to match symptoms to the relevant parameters and next steps.

| Symptom | Where to look | Next step |

|---|---|---|

| Query is slow overall | Stage list: sort by Cumulative duration | Click into the top stage to inspect its tasks |

| One task in a stage is much slower than others (long tail) | Task list: compare Interval between start and end time across tasks | Check Execution node—if the same node is slow across multiple queries, investigate it for resource contention |

| Tasks in a stage receive unequal amounts of data (data skew) | Task list: sort by Amount of input data | Trace back to the upstream stage and review GROUP or JOIN distribution fields |

| A stage reads much more data from the source table than expected | Task list: sort by Amount of read table data | Check distribution field settings in the console. See Data modeling diagnostics |

| A stage uses high memory or queries fail with memory errors | Task list: compare Peak memory across tasks | Unequal peak memory indicates data skew; check input data distribution |

| Most of a task's elapsed time is not spent computing | Task Computing time ratio is low | Look for slow operators in the execution plan |

| A task spends most of its elapsed time computing | Task Computing time ratio is high | Focus on queuing delays and network latency as secondary factors |

Stage parameters

Sort by Cumulative duration to identify the stages that consume the most CPU time. Click into those stages to inspect individual tasks.

| Parameter | Description |

|---|---|

| Stage ID | The unique identifier of the stage. Corresponds to the stage ID in the execution plan tree. |

| Status | The execution status of the stage. Valid values: Completed (all tasks finished), Failed (one or more tasks failed), Running (tasks are still running), Canceled (one or more tasks were canceled). |

| Rows of input data | The number of rows input to the stage. |

| Amount of input data | The amount of data input to the stage. |

| Rows of output data | The number of rows output from the stage. |

| Amount of output data | The amount of data output from the stage. |

| Peak memory | The peak memory usage of the stage. |

| Cumulative duration | The total time consumed to execute all operators in the stage. Use this to identify stages with high CPU cost. When comparing with query duration, account for concurrent execution—a stage with high cumulative duration may still complete quickly if its tasks run in parallel. |

Task parameters

Use the task list to diagnose data skew, long tail issues, and compute efficiency.

| Parameter | Description |

|---|---|

| Task ID | The unique identifier of the task, in the format {stage_id}.{task_id}. For example, 2.24 means task 24 in stage 2. |

| Status | The execution status of the task. Valid values: Completed, Failed, Terminated, Canceled. |

| Amount of input data | The number of rows and the amount of data input to the task. Sort this column across all tasks to check for data skew on the stage's input. If one task receives significantly more data than others, the skew is likely caused by inappropriate GROUP or JOIN distribution fields. Trace back to the upstream stage to investigate. |

| Amount of output data | The number of rows and the amount of data output from the task. To understand why output volume is high, check whether combined fields exist in the GROUP or JOIN clause—for example, a.id = b.id joins on one field, while a.id = b.id AND a.age = b.age joins on two. Inspect the Aggregation or Join node in the operator plan tree for the current stage. |

| Peak memory | The peak memory usage of the task. Peak memory is proportional to input data volume. If queries fail, compare peak memory across tasks to detect unbalanced input data distribution as the root cause. |

| Duration of reading table data | The cumulative time all TableScan operators in the stage spent reading table data. This is a cross-node, cross-thread cumulative value and cannot be directly compared to query duration. Compare it with Cumulative duration to determine what fraction of a stage's compute time is spent on data scans versus other processing. |

| Amount of read table data | The number of rows and the amount of data read by all TableScan operators in the stage. Sort this column to detect data skew on source table reads. If skew exists, check whether it is caused by distribution field settings in the console. For more information, see Data modeling diagnostics. |

| Created at | The time when the task was created. |

| Queuing duration | The time the task waited in the queue before execution started. |

| Ended at | The time when the task ended. |

| Interval between start and end time | The elapsed time between task creation and task completion. Compare this with overall query duration to determine how much this stage contributes to total query time. For example, if query duration is 6 seconds and a task's interval is 4 seconds, that stage is the primary driver of latency. For a worked example, see Calculate task duration and concurrency. |

| Cumulative duration | The total time consumed by all threads across all tasks in the stage. For a worked example, see Calculate task duration and concurrency. |

| Computing time ratio | The ratio of actual compute time to the task lifecycle. Calculated as: (Cumulative duration / Subtask concurrency) / Interval between start and end time. A low ratio means the interval is long relative to compute time—look for slow operators in the execution plan. A high ratio means most time is spent on actual computation—focus on queuing delays and network latency as secondary factors. For a worked example, see Calculate task duration and concurrency. |

| Subtask concurrency | The number of threads concurrently executing the task on a node. |

| Execution node | The IP address of the node running the task. If long tail issues (where some tasks take significantly longer than others) repeatedly occur on the same node across multiple queries, investigate that node for resource contention or hardware issues. |

Calculate task duration and concurrency

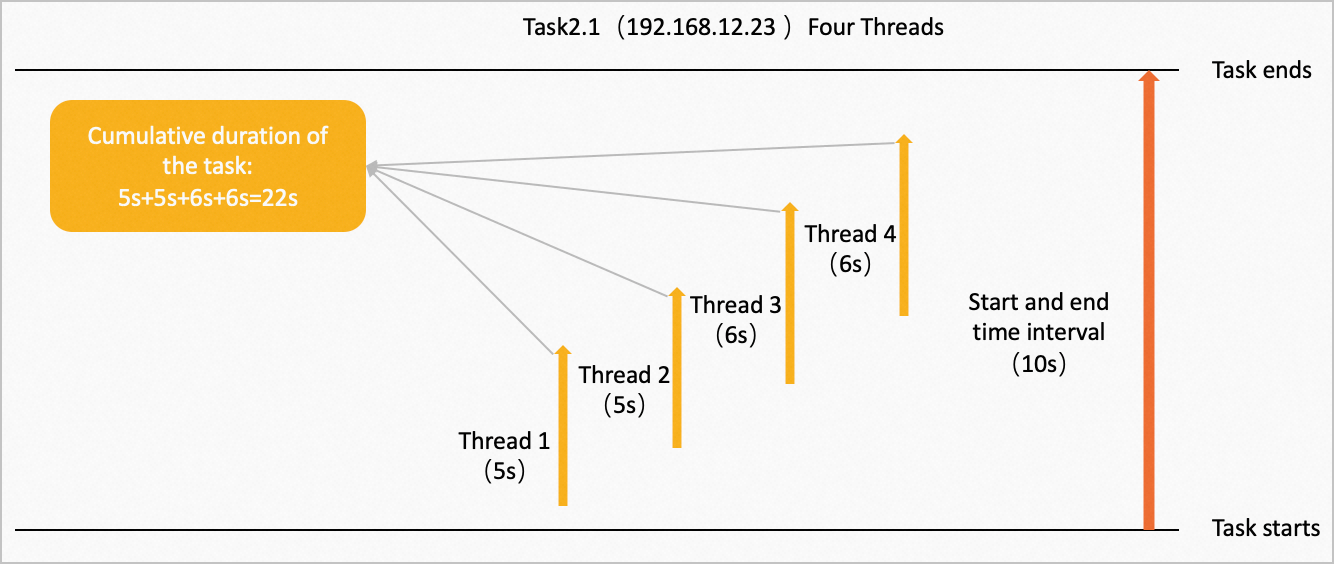

This example walks through computing the interval between start and end time, cumulative duration, computing time ratio, and subtask concurrency for Task 2.1.

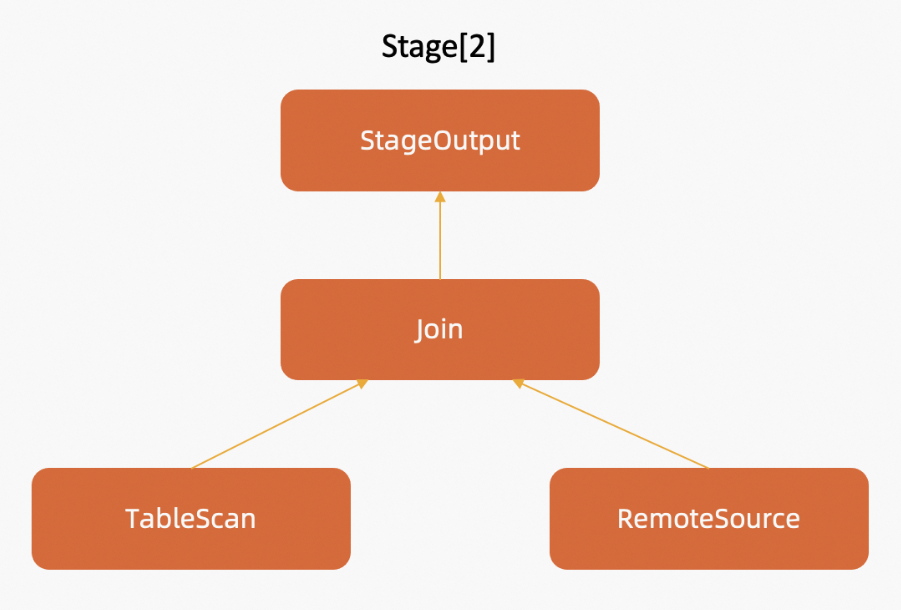

Task 2.1 belongs to Stage 2, which consists of four operators: StageOutput, Join, TableScan, and RemoteSource. The following figure shows the operator tree for Stage 2.

These operators execute concurrently across multiple nodes, in the direction of the arrows. On the node at IP address 192.168.12.23, Task 2.1 runs with four concurrent threads. Each thread takes 5s, 5s, 6s, and 6s respectively to compute data, as shown below.

From these values:

Cumulative duration: 5s + 5s + 6s + 6s = 22s

Interval between start and end time: 10s

Computing time ratio: (22s / 4) / 10s = 0.55

The ratio of 0.55 means about 55% of the task's elapsed time was spent on computation. The remaining 45% reflects queuing, network latency, or synchronization overhead.

API reference

Use the following API operations to retrieve stage and task details programmatically.

| Operation | Description |

|---|---|

| DescribeDiagnosisSQLInfo | Retrieves execution details for an SQL statement. |

| DescribeDiagnosisTasks | Retrieves execution details for distributed tasks in a specific stage of a query. |