This tutorial walks you through creating an AnalyticDB for MySQL cluster and running your first data job—from cluster setup to writing and querying data across a three-layer warehouse pipeline.

By the end of this tutorial, you will have:

Created and configured an AnalyticDB for MySQL cluster

Set up a database account and resource group

Written and queried data using Spark SQL or Xihe BSP SQL

Data Lakehouse Edition is no longer available for new purchases. If you already have a Data Lakehouse Edition cluster, you can still follow this tutorial.

Prerequisites

Before you begin, ensure that you have:

An Alibaba Cloud account. To register, visit the Alibaba Cloud website. If multiple users need access, create Resource Access Management (RAM) users under your root account and assign them the appropriate permissions. For details, see RAM user overview.

(Optional) Familiarity with AnalyticDB for MySQL concepts. If this is your first time using Enterprise Edition, Basic Edition, or Data Lakehouse Edition, see Product introduction.

Billing

Creating a cluster incurs compute and storage costs. For pricing details, see Pricing for Enterprise Edition and Basic Edition and Pricing for Data Lakehouse Edition.

Quick start video guide

This topic uses a Data Lakehouse Edition cluster as an example to show how to create a cluster, create an account, connect to the data warehouse, and run SQL queries. The steps for Enterprise Edition and Basic Edition are similar.

Step 1: Create a cluster

Log on to the AnalyticDB for MySQL console. In the upper-right corner, click Create Cluster.

On the purchase page, configure the key parameters below. Keep all other parameters at their default values. For the full parameter reference, see Create a cluster.

Parameter Description Product Type Pay-as-you-go: Billed hourly. Best for short-term or exploratory workloads. Release the cluster when done to stop charges.<br>Subscription: Pay upfront at cluster creation. Best for steady, long-term workloads. Offers lower per-hour rates than pay-as-you-go, with deeper discounts for longer terms. Edition Enterprise Edition: Multi-replica storage with distributed computing. Provides high availability (HA).<br>Basic Edition: Single-replica storage. Does not provide HA.<br>For a full comparison, see Editions. Deployment Mode Enterprise Edition: Choose single-zone deployment or multi-zone deployment. Multi-zone deployment is available only in China (Hangzhou), China (Shanghai), China (Beijing), China (Zhangjiakou), China (Shenzhen), China (Hong Kong), and Singapore.<br>Basic Edition: Fixed to single-zone deployment. Region The geographic location where the cluster runs. Choose the region closest to your users. The region cannot be changed after purchase. Primary Zone The primary availability zone for the cluster. Secondary Zone The standby zone. If the primary zone fails, traffic automatically switches to this zone—the cluster may briefly become unavailable during the switch. Set this only when Deployment Mode is multi-zone deployment. Virtual private cloud (VPC) / vSwitch in primary zone / vSwitch in secondary zone Select an existing VPC that matches your network plan (for example, the VPC where your ECS instances run). If no suitable VPC exists, use the default VPC and vSwitch or create one. ImportantPlace your AnalyticDB for MySQL cluster in the same VPC as any ECS instances or ApsaraDB RDS instances it needs to communicate with. Cross-VPC communication requires additional configuration and may reduce performance.<br>Set vSwitch in secondary zone only when Deployment Mode is multi-zone deployment.

Reserved resource node specifications Default is 8 ACU per node. Reserved resources power data computation—more ACUs mean faster queries. Each group of reserved resources supports up to 8 TB of hot data storage, billed on actual usage. NoteIf you previously used Data Warehouse Edition or Data Lakehouse Edition, see Mapping between Enterprise Edition and Data Lakehouse Edition or Data Warehouse Edition specifications when selecting Enterprise Edition specifications.

Number of reserved resource nodes Enterprise Edition: Default is 3 nodes; minimum step size is 3. Setting nodes to 0 limits the cluster to external tables only—internal tables require at least 1 node. Nodes can be added later via scale-out.<br>Basic Edition: Default is 1 node; minimum step size is 1. Complete the remaining purchase steps as prompted.

After payment, wait 10–15 minutes. The cluster status on the Clusters page changes to Running when creation is complete.

Step 2: Create a database account

AnalyticDB for MySQL supports two account types:

Privileged account: Manages all databases and standard accounts. Equivalent to the root account in MySQL.

Standard account: Requires explicit permission grants. For a comparison and creation steps, see Privileged and standard accounts.

This tutorial uses a privileged account.

On the Clusters page, click your cluster ID to open the cluster details page.

In the left navigation pane, click Accounts.

On the Database Accounts tab, click Create Account.

Configure the account parameters:

Parameter Description Database Account Name for the privileged account. Account Type Select Privileged Account. New Password Password for the account. Confirm Password Re-enter the password. Description (Optional) Notes to help identify the account later. Click OK.

To grant permissions to a standard account after creation, click Actions next to the account, then click Permissions.

Step 3: Create a resource group

AnalyticDB for MySQL uses resource groups to isolate compute resources, protecting core workloads from resource contention caused by complex queries or traffic spikes.

Three resource group types are available:

| Type | Supported tasks | Common scenarios |

|---|---|---|

| Interactive resource group | XIHE MPP SQL (XIHE engine); Spark SQL (Spark engine) | High-QPS, low-latency online analytics |

| Job resource group | Xihe BSP SQL; Spark SQL; Spark applications | High-throughput offline batch processing |

| AI resource group | MLSQL model execution; Ray-managed compute | Heterogeneous AI/ML workloads |

This tutorial uses a Job resource group. Create one as follows:

On the cluster details page, go to Cluster Management > Resource Management in the left navigation pane.

Click the Resource Groups tab, then click Create Resource Group in the upper-left corner.

Enter a Resource Group Name and set Job Type to Job.

For this tutorial, set Minimum Computing Resources to 0 ACU and Maximum Computing Resources to 8 ACU. These settings are for quick-start testing only.

Click OK.

Step 4: (Optional) Run the built-in test script

To verify the cluster quickly without setting up your own data, load the built-in dataset and run the pre-built SQL scripts.

On the cluster details page, go to Job Development > SQL Development in the left navigation pane.

On the Databases and Tables tab, click Load Built-in Dataset.

On the Scripts tab, double-click a script and run it in the SQLConsole.

For details on the built-in dataset schema, see Built-in dataset table details.

Step 5: Develop jobs

Choose a job type based on your data and compute requirements:

| Job type | Prerequisites |

|---|---|

| External tables — Spark SQL | A Job resource group or an Interactive resource group with Spark engine |

| External tables — Xihe BSP SQL | A Job resource group |

| Internal tables — Spark SQL | A Job resource group or an Interactive resource group with Spark engine. Enterprise Edition reserved resources must be greater than 0 ACU. Data Lakehouse Edition clusters require at least 16 ACU of compute reserved resources and 24 ACU of storage reserved resources. |

| Internal tables — Xihe BSP SQL | A Job resource group. Data Lakehouse Edition clusters require at least 16 ACU of compute reserved resources and 24 ACU of storage reserved resources. |

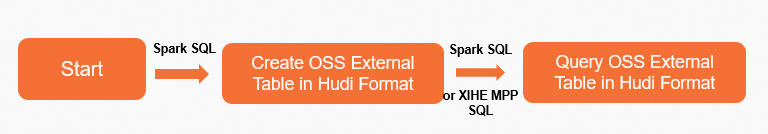

External tables using Spark SQL

External tables let you query data stored in OSS directly, without loading it into AnalyticDB for MySQL. This example uses the Hudi table format.

Create an OSS bucket and directory in the same region as your cluster. See Enable OSS, Create a bucket, and Create a directory.

Create an OSS Hudi external table.

Go to Job Development > SQL Development in the left navigation pane.

In the SQLConsole, select the Spark engine and a Job resource group (or an Interactive resource group with Spark engine).

Create a database to store Hudi data. This example uses the OSS path

oss://testBucketName/adb-test-1/. ``sql CREATE DATABASE spark_external_db LOCATION 'oss://testBucketName/adb-test-1/';``Create a Hudi external table named

spark_hudi_tablein the database. This example uses the pathoss://testBucketName/adb-test-1/spark_external_db/.CREATE TABLE spark_external_db.spark_hudi_table (id int, name string, score int, city string ) using hudi partitioned by (id) tblproperties (primaryKey = 'id', preCombineField = 'city') LOCATION 'oss://testBucketName/adb-test-1/spark_external_db/';Insert sample data.

INSERT OVERWRITE spark_external_db.spark_hudi_table PARTITION(id) VALUES (001,'Anna',99,'London'), (002,'Bob',67,'USA'), (003,'Cindy',78,'Spain'), (004,'Dan',100,'China');

Query the external table.

If your cluster has compute reserved resources (Data Lakehouse Edition or Enterprise Edition), switch to the Xihe engine with an Interactive resource group to query the external table using Xihe MPP SQL online mode.

SELECT * FROM spark_external_db.spark_hudi_table;To view results, go to Job Development > Spark JAR Development. In the Applications list, click Actions next to your SQL query, then click Logs.

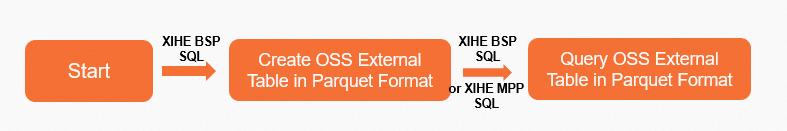

External tables using Xihe BSP SQL

Create an OSS bucket and directory in the same region as your cluster. See Enable OSS, Create a bucket, and Create a directory.

Upload sample data to OSS. This example uploads the file

xihe_oss.txttooss://adb-test-1/test_xihe/. Rows are separated by line feeds; columns are separated by commas.001,Anna,99,London 002,Bob,67,USA 003,Cindy,78,Spain 004,Dan,100,ChinaCreate an OSS external table.

Go to Job Development > SQL Development in the left navigation pane.

In the SQLConsole, select a Job resource group and the Xihe engine.

Create an external database. ``

sql CREATE EXTERNAL DATABASE xihe_external_db;``Create an external table that reads from the uploaded file. ``

sql CREATE EXTERNAL TABLE xihe_external_db.xihe_oss_table ( id int , name string , score int, city string ) ROW FORMAT DELIMITED FIELDS TERMINATED BY ',' STORED AS TEXTFILE LOCATION 'oss://adb-test-1/test_xihe/xihe_oss.txt';``

Query the external table.

If your Data Lakehouse Edition cluster has compute reserved resources, switch to an Interactive resource group to query using Xihe MPP SQL online mode.

SELECT * FROM xihe_external_db.xihe_oss_table;

Internal tables using Spark SQL

Internal tables store data inside AnalyticDB for MySQL. This example follows a three-layer data warehouse architecture:

ODS (Operational Data Store) layer: Raw source data ingested via extract, transform, and load (ETL).

DWD (Data Warehouse Detail) layer: Cleaned and filtered ODS data.

ADS (Application Data Service) layer: Business-ready data for analytics and reporting. ADS tables require indexes for fast query performance.

1. Create the ODS layer table

Go to Job Development > SQL Development in the left navigation pane.

In the SQLConsole, select the Spark engine and a Job resource group.

Create a database named

test_spark_db.CREATE DATABASE test_spark_db;Create an index-free, hash-partitioned ODS table and insert sample data.

CREATE TABLE test_spark_db.adb_spark_ods (id int, name string, age int) USING adb tblproperties ( 'distributeType' = 'HASH', 'distributeColumns' = 'id', 'partitionType' = 'VALUE', 'partitionColumn' = 'age', 'partitionCount' = '200', 'indexAll' = 'false')INSERT OVERWRITE test_spark_db.adb_spark_ods PARTITION(age) VALUES (001,'Anna',18), (002,'Bob',22), (003,'Cindy',12), (004,'Dan',25);

2. Create the DWD layer table

This step reads ODS data, filters out records where id = 002, and writes the result to the DWD layer.

Create an index-free DWD table with the same schema.

CREATE TABLE test_spark_db.adb_spark_dwd ( id int, name string, age int ) USING adb TBLPROPERTIES( 'distributeType'='HASH', 'distributeColumns'='id', 'partitionType'='value', 'partitionColumn'='age', 'partitionCount'='200', 'indexAll'='false')(Optional) Before querying offline hot data, switch to the Xihe engine with the (Interactive)

user_defaultresource group and run the following configuration statements. Skipping this step causes subsequent SQL queries to fail.SET adb_config CSTORE_HOT_TABLE_ALLOW_SINGLE_REPLICA_BUILD=true; SET adb_config ELASTIC_ENABLE_HOT_PARTITION_HAS_HDD_REPLICA=true; SET adb_config ELASTIC_PRODUCT_ENABLE_MIXED_STORAGE_POLICY=true;Switch back to the Spark engine. Filter ODS records where

id != 002and write to the DWD table.INSERT OVERWRITE test_spark_db.adb_spark_dwd partition(age) SELECT id, name, age FROM test_spark_db.adb_spark_ods WHERE id != 002;Query the DWD table.

Spark SQL SELECT results do not display table data in the SQLConsole. To view results, go to Spark JAR development, click Actions next to your query in the Applications list, and click Logs.

SELECT * FROM test_spark_db.adb_spark_dwd;

3. Create the ADS layer table

The ADS table stores filtered DWD data for direct use in business analytics. ADS tables require indexes (indexAll='true') to meet fast query requirements.

Create an indexed ADS table.

CREATE TABLE test_spark_db.adb_spark_ads ( id int, name string, age int ) USING adb TBLPROPERTIES( 'distributeType'='HASH', 'distributeColumns'='id', 'partitionType'='value', 'partitionColumn'='age', 'partitionCount'='200', 'indexAll'='true')Write records from the DWD table where

age > 15to the ADS table.INSERT OVERWRITE test_spark_db.adb_spark_ads partition(age) SELECT id, name, age FROM test_spark_db.adb_spark_dwd WHERE age > 15;

4. Query ADS data in real time

AnalyticDB for MySQL supports both offline queries (Spark SQL or Xihe BSP SQL) and online real-time queries (Xihe MPP SQL). For real-time results, use Xihe MPP SQL.

In the SQLConsole, select the Xihe engine and the (Interactive)

user_defaultresource group.Query the ADS table.

SELECT * FROM test_spark_db.adb_spark_ads;Expected results:

+------+-------+------+ | id | name | age | +------+-------+------+ | 4 | Dan | 25 | | 1 | Anna | 18 | +------+-------+------+

Internal tables using Xihe BSP SQL

This example follows the same three-layer architecture (ODS → DWD → ADS) using Xihe BSP SQL.

1. Create the ODS layer table

Go to Job Development > SQL Development in the left navigation pane.

In the SQLConsole, select the Xihe engine and a Job resource group.

Create a database named

test_xihe_db.CREATE DATABASE test_xihe_db;Create an index-free ODS table and insert sample data.

CREATE TABLE test_xihe_db.adb_xihe_ods (id int, name string, age int)DISTRIBUTED BY HASH (id)PARTITION BY VALUE (age)LIFECYCLE 4INDEX_ALL='N';INSERT INTO test_xihe_db.adb_xihe_ods(id,name,age) VALUES(001,'Anna',18),(002,'Bob',22),(003,'Cindy',12),(004,'Dan',25);

2. Create the DWD layer table

This step filters out records where id = 002 from the ODS table and writes the result to the DWD table.

Create an index-free DWD table.

CREATE TABLE test_xihe_db.adb_xihe_dwd( id int, name string, age int)DISTRIBUTED BY HASH (id)PARTITION BY VALUE (age)LIFECYCLE 4INDEX_ALL = 'N';Read from the ODS table, filter, and write to the DWD table.

INSERT INTO test_xihe_db.adb_xihe_dwd SELECT id, name, age FROM test_xihe_db.adb_xihe_ods where id != 002;Query the DWD table.

SELECT * FROM test_xihe_db.adb_xihe_dwd;Expected results:

+------+-------+------+ | id | name | age | +------+-------+------+ | 4 | Ban | 25 | | 1 | Anna | 18 | | 3 | Cindy | 12 | +------+-------+------+

3. Create the ADS layer table

Create an indexed ADS table.

CREATE TABLE test_xihe_db.adb_xihe_ads (id int, name string, age int)DISTRIBUTED BY HASH (id)PARTITION BY VALUE (age)LIFECYCLE 4;Write records from the DWD table where

age > 15to the ADS table.INSERT INTO test_xihe_db.adb_xihe_ads SELECT id, name, age FROM test_xihe_db.adb_xihe_dwd WHERE age > 15;

4. Query ADS data in real time

AnalyticDB for MySQL Data Lakehouse Edition clusters support both offline data queries (using Spark SQL or Xihe BSP SQL) and online real-time queries (using Xihe MPP SQL). For real-time results, use Xihe MPP SQL.

In the SQLConsole, select the Xihe engine and the (Interactive)

user_defaultresource group.Query the ADS table.

SELECT * FROM test_xihe_db.adb_xihe_ads;Expected results:

+------+-------+------+ | id | name | age | +------+-------+------+ | 4 | Ban | 25 | | 1 | Anna | 18 | +------+-------+------+

What's next

Now that your cluster is running and your first job is complete, here are some common next steps based on what you want to do:

Load production data: See Data import for supported ingestion methods, including batch load, real-time sync, and connector-based imports.

Scale your cluster: Adjust reserved resources to meet production workload requirements. See Scaling for Enterprise Edition and Basic Edition.

Manage resource groups: Add or configure resource groups to isolate workloads by job type or team. See Create and manage resource groups.