Model configuration

In the fine-ranking stage, PAI-Rec generally obtains scores by invoking an algorithm model service deployed on Elastic Algorithm Service (EAS). EAS supports deploying many types of models, and PAI-Rec can invoke several of them, including EasyRec, TorchEasyRec, TensorFlow, PMML, PS, and Alink models. The model configuration corresponds to AlgoConfs in the configuration overview.

The EasyRec code is open source. Following the EasyRec documentation, you can train and export a model and then deploy it as an EAS service with the EasyRec processor. PAI-Rec can then invoke the service for scoring.

TorchEasyRec is the PyTorch version of EasyRec. Following the TorchEasyRec documentation, you can train and export a model and then deploy it as an EAS service with the TorchEasyRec processor. PAI-Rec can then invoke the service for scoring.

Configuration example:

```json
{
  "AlgoConfs": [
    {
      "Name": "room3_mt_v2",
      "Type": "EAS",
      "EasConf": {
        "Processor": "EasyRec",
        "Timeout": 300,
        "ResponseFuncName": "easyrecMutValResponseFunc",
        "Url": "http://xxx.pai-eas.aliyuncs.com/api/predict/xxx",
        "EndpointType": "DIRECT",
        "Auth": ""
      }
    },
    {
      "Name": "room3_mt_v2_torch",
      "Type": "EAS",
      "EasConf": {
        "Processor": "EasyRec",
        "Timeout": 300,
        "ResponseFuncName": "torchrecMutValResponseFunc",
        "Url": "http://xxx.pai-eas.aliyuncs.com/api/predict/xxx",
        "EndpointType": "DIRECT",
        "Auth": ""
      }
    },
    {
      "Name": "tf",
      "Type": "EAS",
      "EasConf": {
        "Processor": "TensorFlow",
        "ResponseFuncName": "tfMutValResponseFunc",
        "Url": "http://xxx.pai-eas.aliyuncs.com/api/predict/xxx",
        "Auth": ""
      }
    },
    {
      "Name": "ps_smart",
      "Type": "EAS",
      "EasConf": {
        "Processor": "PMML",
        "ResponseFuncName": "pssmartResponseFunc",
        "Url": "http://xxx.pai-eas.aliyuncs.com/api/predict/xxx",
        "Auth": ""
      }
    },
    {
      "Name": "fm",
      "Type": "EAS",
      "EasConf": {
        "Processor": "ALINK_FM",
        "ResponseFuncName": "alinkFMResponseFunc",
        "Url": "http://xxx.pai-eas.aliyuncs.com/api/predict/xxx",
        "Auth": ""
      }
    }
  ]
}
```

| Field name | Type | Required | Description |
| --- | --- | --- | --- |
| Name | string | Yes | Custom name for this model configuration; referenced in RankConf. |
| Type | string | Yes | Model deployment type (EAS in the example above). |

EasConf

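| Field name | Type | Required | Description |
| --- | --- | --- | --- |
| Processor | string | Yes | Model type. The example above uses the values EasyRec, TensorFlow, PMML, and ALINK_FM. |
| Timeout | int | No | Timeout for requests to the model. |
| ResponseFuncName | string | Yes | Function used to parse the returned data; it must match the Processor type. The example above uses easyrecMutValResponseFunc, torchrecMutValResponseFunc, tfMutValResponseFunc, pssmartResponseFunc, and alinkFMResponseFunc. |
| Url | string | Yes | Address of the model service. |
| EndpointType | string | No | Enumeration values: DOCKER and DIRECT. If both the engine and the model are deployed on EAS, you can set this to DIRECT so that the engine connects to the model directly, which gives higher performance. |
| Auth | string | Yes | Token of the model service. |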

The response functions easyrecMutValResponseFunc, torchrecMutValResponseFunc, and tfMutValResponseFunc parse responses that can contain prediction values for multiple targets. You can combine these values by configuring an expression in PAI-Rec (RankScore, described under Algorithm configuration). For example, all returned values can be summed to produce the final ranking score.
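As a minimal illustration of the summation case, the Go sketch below adds up every target value returned for one item. It assumes the parsed response for an item is available as a map from output name to value; that layout and the sumTargets helper are hypothetical, not PAI-Rec's internal representation.

```go
package main

import (
	"fmt"
	"sort"
)

// sumTargets adds up all target values returned for one item.
// Keys are sorted so the floating-point addition order is deterministic.
func sumTargets(outputs map[string]float64) float64 {
	names := make([]string, 0, len(outputs))
	for name := range outputs {
		names = append(names, name)
	}
	sort.Strings(names)
	total := 0.0
	for _, name := range names {
		total += outputs[name]
	}
	return total
}

func main() {
	// Hypothetical output names and values for a single item.
	item := map[string]float64{"probs_ctr": 0.25, "probs_view": 0.5, "y_view_time": 1.25}
	fmt.Println(sumTargets(item)) // 2
}
```

In practice, a weighted expression such as the scene_name2 RankScore in the next section is usually more useful than a plain sum, because targets like watch time and click probability live on different scales.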

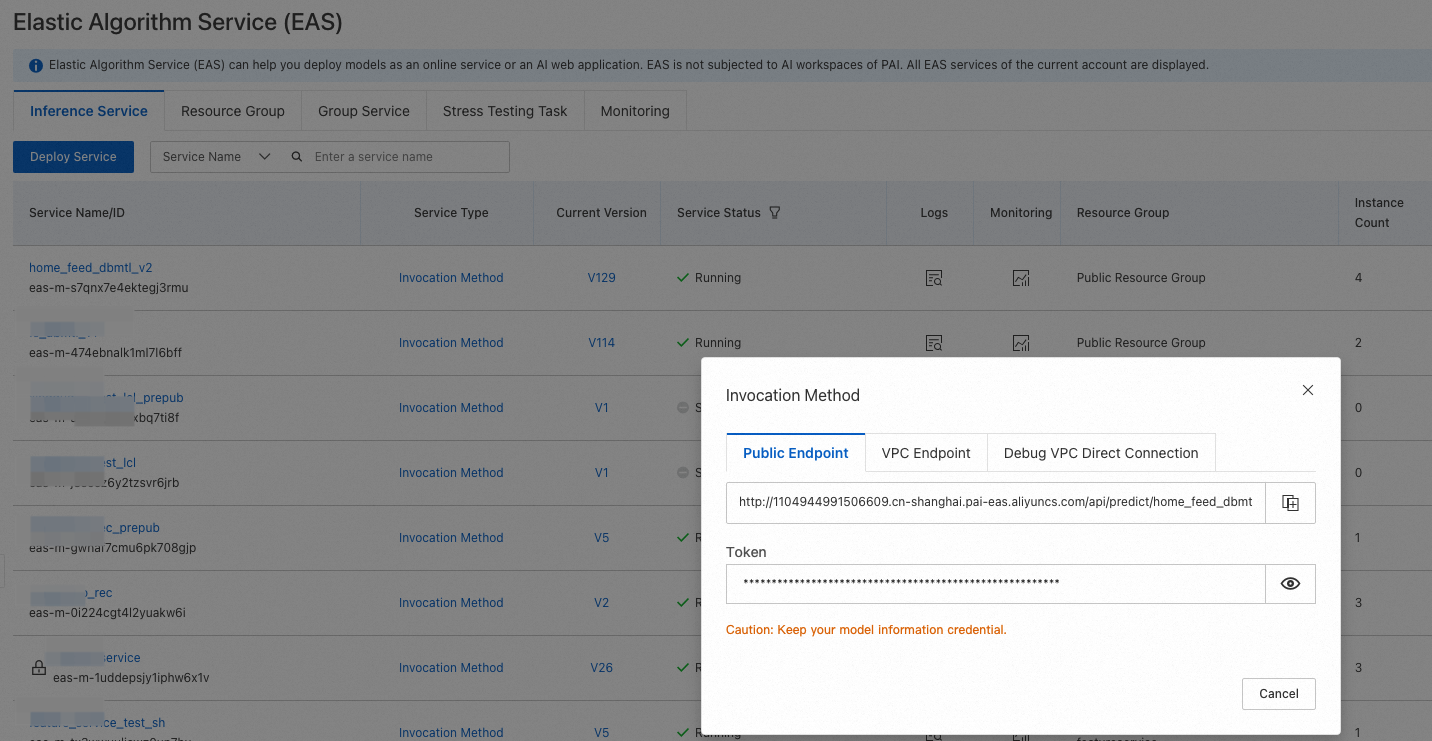

If the model is deployed on EAS, both Url and Auth can be found in the EAS console.

Algorithm configuration

The algorithm configuration corresponds to RankConf in the configuration overview. RankConf is a map[string]object structure whose keys are scenario names. In the following example, scene_name1 and scene_name2 are the names of two recommendation scenarios. You can select different models for different scenarios, and you must reference each model by the custom name (Name) defined in the model configuration.
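Every name in RankAlgoList must match a Name defined in AlgoConfs, so a configuration loader can check this before serving. The Go sketch below shows one way to do so. The RankConf struct and the checkRankConf helper are hypothetical illustrations, not PAI-Rec's own types or validation logic.

```go
package main

import (
	"fmt"
	"sort"
)

// RankConf is a hypothetical Go mirror of one scenario entry in RankConf.
type RankConf struct {
	RankAlgoList []string
	RankScore    string
	Processor    string
}

// checkRankConf lists, as "scene: model", every RankAlgoList entry whose
// model name is not defined in AlgoConfs. The result is sorted because Go
// map iteration order is random.
func checkRankConf(rank map[string]RankConf, algoNames []string) []string {
	known := make(map[string]bool, len(algoNames))
	for _, n := range algoNames {
		known[n] = true
	}
	var missing []string
	for scene, rc := range rank {
		for _, algo := range rc.RankAlgoList {
			if !known[algo] {
				missing = append(missing, scene+": "+algo)
			}
		}
	}
	sort.Strings(missing)
	return missing
}

func main() {
	algoNames := []string{"room3_mt_v2", "room3_mt_v2_torch", "tf", "ps_smart", "fm"}
	rank := map[string]RankConf{
		"scene_name1": {RankAlgoList: []string{"fm"}, RankScore: "${fm}"},
		// dbmtl_v9 is a deliberately undefined, hypothetical model name.
		"scene_name2": {RankAlgoList: []string{"room3_mt_v2", "dbmtl_v9"}, Processor: "EasyRec"},
	}
	fmt.Println(checkRankConf(rank, algoNames)) // [scene_name2: dbmtl_v9]
}
```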

Configuration example:

```json
{
  "RankConf": {
    "scene_name1": {
      "RankAlgoList": [
        "fm"
      ],
      "RankScore": "${fm}"
    },
    "scene_name2": {
      "RankAlgoList": [
        "room3_mt_v2"
      ],
      "RankScore": "${room3_mt_v2_probs_ctr} * (${room3_mt_v2_probs_view} + ${room3_mt_v2_y_view_time} + 0.5 * ${room3_mt_v2_probs_on_wheat} + 0.5 * ${room3_mt_v2_y_on_wheat_time} + 0.25 * ${room3_mt_v2_y_gift} + 0.25 * ${room3_mt_v2_probs_add_friend} + 0.1)",
      "Processor": "EasyRec"
    }
  }
}
```

| Field | Type | Required | Description |
| --- | --- | --- | --- |
| RankAlgoList | []string | Yes | Names of the models, defined in AlgoConfs, that score items in this scenario. |
| RankScore | string | Yes | Expression that fuses the model outputs into the final ranking score. |
| Processor | string | No | Required when the Processor of the referenced model in AlgoConfs is EasyRec; in that case, set it to EasyRec as well. |
| BatchCount | int | No | Number of items sent to the model in each request. Default value: 100. |
| ContextFeatures | []string | No | Applies when the Processor in AlgoConfs is EasyRec. Names of the context features to pass to the processor. Omit this field if no context features need to be passed. |
| ItemFeatures | []string | No | Applies when the Processor in AlgoConfs is EasyRec. Item features to pass to the EasyRec processor instead of the item features cached in the processor. Use `["*"]` to pass all item features, or list specific features in the `["feature1","feature2"]` format. This field can be combined with ContextFeatures. |
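The ItemFeatures rule (`["*"]` for all features, otherwise a named subset) can be illustrated with a small Go sketch. The selectFeatures helper and the map-based item representation are assumptions made for illustration. The sketch only shows the selection logic, not how PAI-Rec actually encodes features for the EasyRec processor.

```go
package main

import (
	"fmt"
	"sort"
)

// selectFeatures picks the item features named in itemFeatures.
// ["*"] selects every feature; an empty list selects none, which in this
// sketch stands for "rely on the features cached in the processor".
func selectFeatures(item map[string]any, itemFeatures []string) map[string]any {
	out := make(map[string]any)
	if len(itemFeatures) == 1 && itemFeatures[0] == "*" {
		for name, value := range item {
			out[name] = value
		}
		return out
	}
	for _, name := range itemFeatures {
		if value, ok := item[name]; ok {
			out[name] = value
		}
	}
	return out
}

func main() {
	item := map[string]any{"feature1": 3, "feature2": "music", "feature3": 0.7}
	fmt.Println(len(selectFeatures(item, []string{"*"}))) // 3

	picked := selectFeatures(item, []string{"feature1", "feature2"})
	names := make([]string, 0, len(picked))
	for name := range picked {
		names = append(names, name)
	}
	sort.Strings(names)
	fmt.Println(names) // [feature1 feature2]
}
```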

RankScore is an expression that fuses the prediction values of multiple targets. Each variable is a model name and one of that model's output names, joined by an underscore and wrapped in ${}. For example, in ${dbmtl_v1_probs_ctr}, dbmtl_v1 is a model name defined in AlgoConfs and probs_ctr is an output returned by that model service.

The RankScore expression supports the following arithmetic operators: addition (+), subtraction (-), multiplication (*), division (/), exponentiation (^), and modulo (%). The RankScore expressions in the preceding examples are for reference only. Example of the modulo operation: ${dbmtl_v1_probs_ctr}%2. Example of the exponentiation operation: ${dbmtl_v1_probs_ctr}^2.

Multi-model fusion scoring works the same way. For example, with two models dbmtl_v1 and dbmtl_v2, set RankAlgoList to ["dbmtl_v1","dbmtl_v2"] and RankScore to ${dbmtl_v1_probs_ctr}+${dbmtl_v2_y_gift}. Each variable joins a model name with one of that model's targets. Here, probs_ctr and y_gift are used as examples.
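The naming rule for multiple models can be sketched in Go. The helper below flattens the outputs of several models into one variable map, keyed by the model name and output name joined with an underscore. That is the form RankScore references. The nested-map input and the flattenOutputs helper are hypothetical.

```go
package main

import "fmt"

// flattenOutputs turns per-model outputs into RankScore variable names of
// the form <model name>_<output name>, for example dbmtl_v1_probs_ctr.
func flattenOutputs(perModel map[string]map[string]float64) map[string]float64 {
	vars := make(map[string]float64)
	for model, outputs := range perModel {
		for name, value := range outputs {
			vars[model+"_"+name] = value
		}
	}
	return vars
}

func main() {
	vars := flattenOutputs(map[string]map[string]float64{
		"dbmtl_v1": {"probs_ctr": 0.5},
		"dbmtl_v2": {"y_gift": 0.25},
	})
	// Sum of one target from each model.
	fmt.Println(vars["dbmtl_v1_probs_ctr"] + vars["dbmtl_v2_y_gift"]) // 0.75
}
```

Because names are joined with a plain underscore, two different pairs can map to the same variable. A model a_b with output c and a model a with output b_c both yield a_b_c, so model names that could collide this way are best avoided.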

BatchCount is the number of items PAI-Rec sends to the model service in each request. For example, if the candidate set for ranking contains 1,000 item_ids and BatchCount is 100, PAI-Rec sends 10 requests of 100 items each. Adjust this value based on the resources of the model inference service.
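The batching arithmetic can be sketched in Go as follows. The splitBatches helper is hypothetical and only reproduces the split described above. Its fallback to 100 for a non-positive value mirrors the documented default and is an assumption about how an unset value is treated.

```go
package main

import "fmt"

// splitBatches cuts the candidate item IDs into consecutive batches of at
// most batchCount IDs. A non-positive batchCount falls back to 100, the
// documented BatchCount default.
func splitBatches(itemIDs []string, batchCount int) [][]string {
	if batchCount <= 0 {
		batchCount = 100
	}
	var batches [][]string
	for start := 0; start < len(itemIDs); start += batchCount {
		end := start + batchCount
		if end > len(itemIDs) {
			end = len(itemIDs)
		}
		// Full slice expression: capacity is capped so appending to one
		// batch cannot overwrite the next one.
		batches = append(batches, itemIDs[start:end:end])
	}
	return batches
}

func main() {
	ids := make([]string, 1000)
	for i := range ids {
		ids[i] = fmt.Sprintf("item_%d", i)
	}
	batches := splitBatches(ids, 100)
	fmt.Println(len(batches), len(batches[0])) // 10 100
}
```

When the candidate count is not a multiple of BatchCount, the last batch is simply smaller. With 250 items and BatchCount 100, the sketch produces batches of 100, 100, and 50.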