This topic walks you through creating a stateless NGINX Deployment on an ACK Serverless cluster using the ACK console — from configuring a container image to exposing the application via a Service and Ingress.

Prerequisites

Before you begin, ensure that you have:

- An ACK Serverless cluster. For more information, see Create an ACK Serverless cluster.

Step 1: Configure basic settings

- Log on to the ACK console. In the left-side navigation pane, click Clusters.

- On the Clusters page, find the cluster you want to manage and click its name. In the left-side navigation pane, choose Workloads > Deployments.

- In the upper-right corner of the Deployments page, click Create from Image.

- On the Basic Information wizard page, select a namespace at the top of the page. This example uses the default namespace.

- Configure the basic settings.

  | Parameter | Description |
  |---|---|
  | Name | Enter a name for the application. |
  | Replicas | Specify the number of pods to provision. |
  | Type | Select the resource type: Deployment, StatefulSet, Job, or CronJob. |
  | Label | Add labels to identify the application. |
  | Annotations | Add annotations to the application. |

- Click Next to go to the Container step.
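The basic settings above correspond to the top-level fields of a Kubernetes Deployment. The following is a minimal sketch of that mapping, not the manifest the console actually generates. The application name `nginx-demo` and the label `app: nginx` are hypothetical, and reusing the application label as the pod selector is an assumption made for illustration.

```python
import json


def build_deployment(name, replicas, labels, annotations=None, namespace="default"):
    """Return a Deployment manifest (apps/v1) as a plain dict."""
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {
            "name": name,
            "namespace": namespace,
            "labels": dict(labels),
            "annotations": dict(annotations or {}),
        },
        "spec": {
            "replicas": replicas,
            # Kubernetes requires the selector to match the pod template labels.
            "selector": {"matchLabels": dict(labels)},
            "template": {
                "metadata": {"labels": dict(labels)},
                # Containers are added in the Container step.
                "spec": {"containers": []},
            },
        },
    }


# Hypothetical values, chosen only for illustration.
deployment = build_deployment("nginx-demo", replicas=2, labels={"app": "nginx"})
print(json.dumps(deployment, indent=2))
```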

Step 2: Configure the container

In the Container step, configure the container image, computing resources, ports, environment variables, health checks, lifecycle, and volumes.

Click Add Container at the top of the Container step to add more containers to the application.

Configure the image

In the General section, configure the following settings:

| Parameter | Description |
|---|---|
| Image Name | Select or enter a container image. See Choose an image source below. |
| Required Resources | Reserve CPU and memory for the container. Reserving resources prevents the application from being disrupted when other workloads compete for compute. It is also required for HPA to work. |
| Container Start Parameter | stdin: keeps standard input open so that input from the ACK console can be passed to the container. tty: allocates a virtual terminal (TTY) for the container. |
| Init Containers | Select this option to create an init container for pod management. For more information, see Init containers. |

Choose an image source

Click Select images to choose from the following sources:

- Container Registry Enterprise Edition: images stored in a Container Registry Enterprise Edition instance. Select the region and instance. No Secret is required to pull images from Enterprise Edition.

- Container Registry Personal Edition: images stored in a Container Registry Personal Edition instance. Select the region and instance.

- Artifact Center: base OS images, base language images, and AI- and big data-related images updated and patched by Alibaba Cloud or OpenAnolis. This example uses an NGINX image. For more information, see Overview of the artifact center.

  For questions or additional image requests, join the DingTalk group (ID 33605007047) to request technical support.

- Private registry: enter the image address in the format domainname/namespace/imagename:tag.
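To illustrate the private registry address format, the following sketch splits an address into its four parts and rejects input that does not match. The function name and the sample address `registry.example.com/demo/nginx:1.25` are hypothetical. The console may accept other forms that this strict check would reject.

```python
def parse_image_address(address):
    """Split 'domainname/namespace/imagename:tag' into its four parts."""
    repository, sep, tag = address.rpartition(":")
    # A '/' after the last ':' means the colon belonged to a registry port,
    # so the address has no tag.
    if not sep or not tag or "/" in tag:
        raise ValueError("expected a ':tag' suffix")
    parts = repository.split("/")
    if len(parts) != 3 or not all(parts):
        raise ValueError("expected domainname/namespace/imagename:tag")
    domain, namespace, image = parts
    return {"domain": domain, "namespace": namespace, "image": image, "tag": tag}


# Hypothetical address used only to show the shape of the result.
parsed = parse_image_address("registry.example.com/demo/nginx:1.25")
print(parsed)
```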

Image pull policy

| Policy | Behavior |
|---|---|
| IfNotPresent | Use a locally cached image if available; pull from the registry otherwise. |
| Always | Pull from the registry every time the application deploys or scales. |
| Never | Use only locally cached images; never pull from the registry. |

If no policy is selected, no image pull policy is applied by default.
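The three policies can be read as a small decision function. The sketch below is a simplified model of the table above, not the actual pull logic. The function name is hypothetical, and treating Never with no cached image as an error is an assumption about how a missing image surfaces.

```python
def should_pull(policy, cached):
    """Return True if the image would be pulled from the registry."""
    if policy == "Always":
        return True
    if policy == "IfNotPresent":
        return not cached
    if policy == "Never":
        if not cached:
            # Assumption: without a cached image the container cannot start.
            raise RuntimeError("image is not cached and pulling is disabled")
        return False
    raise ValueError(f"unknown image pull policy: {policy}")


for policy, cached in [("IfNotPresent", True), ("IfNotPresent", False), ("Always", True)]:
    print(policy, cached, should_pull(policy, cached))
```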

Set Image Pull Secret

To pull images from a private registry (such as Container Registry Personal Edition), click Set Image Pull Secret and select a Secret. For more information, see Manage Secrets. No Secret is required for Container Registry Enterprise Edition.

Configure ports

In the Ports section, click Add to add a container port.

| Field | Description |
|---|---|
| Name | Enter a name for the port. |
| Container Port | Enter a port number (1–65535). |
| Protocol | Select TCP or UDP. |

Configure environment variables

In the Environments section, click Add to configure environment variables.

To use ConfigMaps or Secrets as environment variable sources, create them first. See Manage ConfigMaps and Manage Secrets.

Set Type to one of: Custom, ConfigMaps, Secrets, Value/ValueFrom, or ResourceFieldRef. Selecting ConfigMaps or Secrets passes all data in the selected resource to the container as environment variables.

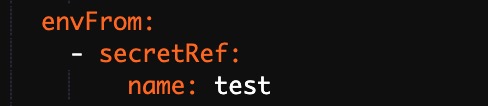

This example uses Secrets: select Secrets from the Type list, then select a Secret from Value/ValueFrom. All data in the selected Secret is passed to the environment variables, and the application's YAML will reference the Secret accordingly.
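In Kubernetes, passing every key of a Secret to a container as environment variables is expressed with `envFrom` and a `secretRef`. The sketch below shows what such a reference could look like in the container spec. It assumes the console emits this form, and the container name, image, and Secret name `app-secret` are hypothetical.

```python
import json


def container_with_secret_env(name, image, secret_name):
    """Return a container spec that imports all keys of a Secret as env vars."""
    return {
        "name": name,
        "image": image,
        # Each key in the Secret becomes one environment variable.
        "envFrom": [{"secretRef": {"name": secret_name}}],
    }


# Hypothetical names, used only for illustration.
container = container_with_secret_env("nginx", "nginx:latest", "app-secret")
print(json.dumps(container, indent=2))
```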

Configure health checks

In the Health Check section, enable probes as needed. The three probe types serve different purposes and work together to keep your application reliable:

| Probe | Purpose | When to use |
|---|---|---|
| Liveness | Detects whether the container is running correctly; restarts it if not. | Always configure for long-running containers. |
| Readiness | Determines whether the container is ready to receive traffic. | Configure when your app needs warm-up time before serving requests. |
| Startup | Checks whether the application has finished starting; disables liveness and readiness checks until it succeeds. | Use for applications that take a long time to initialize on first start. |

Startup probes require Kubernetes 1.18 or later.

Each probe supports the following request types:

HTTP — sends an HTTP request to check container health.

| Parameter | Description | Default |
|---|---|---|
| Protocol | HTTP or HTTPS. | - |
| Path | HTTP path on the server. | - |
| Port | Port number or name exposed by the container (1–65535). | - |
| HTTP Header | Custom headers in key-value pairs; duplicates allowed. | - |
| Initial Delay (s) | Wait time after the container starts before the first probe runs (initialDelaySeconds). Set this long enough for the container to finish starting. | HTTP: 3; TCP: 15; Command: 5 |
| Period (s) | Interval between probes (periodSeconds); minimum 1. Lower values detect failures faster but increase probe overhead. | 10 |
| Timeout (s) | Time before a probe times out (timeoutSeconds); minimum 1. | 1 |
| Healthy Threshold | Consecutive successes required to consider the container healthy. Must be 1 for liveness probes. | 1 |
| Unhealthy Threshold | Consecutive failures required to consider the container unhealthy. Higher values tolerate transient failures; lower values trigger restarts sooner. | 3 |

TCP — opens a TCP socket to check container health. Supports Port, Initial Delay, Period, Timeout, Healthy Threshold, and Unhealthy Threshold (same parameters as HTTP, minus Protocol, Path, and HTTP Header).

Command — runs a command inside the container to check health. Supports Command, Initial Delay, Period, Timeout, Healthy Threshold, and Unhealthy Threshold.
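The probe parameters interact: the period, timeout, and unhealthy threshold together determine how long a failing container goes unnoticed. The following sketch maps the console parameters to Kubernetes probe fields and gives a rough estimate of that detection time. The function names are hypothetical, and the estimate is a simplification that assumes the failure begins right after a successful probe.

```python
def http_probe(path, port, initial_delay, period=10, timeout=1,
               success_threshold=1, failure_threshold=3, scheme="HTTP"):
    """Build an HTTP probe dict using Kubernetes probe field names."""
    if period < 1 or timeout < 1:
        raise ValueError("period and timeout must be at least 1 second")
    return {
        "httpGet": {"path": path, "port": port, "scheme": scheme},
        "initialDelaySeconds": initial_delay,
        "periodSeconds": period,
        "timeoutSeconds": timeout,
        "successThreshold": success_threshold,
        "failureThreshold": failure_threshold,
    }


def seconds_until_unhealthy(probe):
    """Rough upper bound on the time to reach the unhealthy threshold."""
    # failureThreshold consecutive probes must fail, one per period,
    # and the last one may take up to timeoutSeconds to report.
    return probe["failureThreshold"] * probe["periodSeconds"] + probe["timeoutSeconds"]


probe = http_probe("/", 80, initial_delay=3)
print(seconds_until_unhealthy(probe))  # 3 * 10 + 1 = 31
```

With a period of 10 seconds and an unhealthy threshold of 3, a broken container can serve errors for about half a minute before it is restarted. Lowering either value shortens that window at the cost of more probe traffic and less tolerance for transient failures.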

Configure the lifecycle

In the Lifecycle section, configure commands that run at specific container lifecycle events:

- Start: runs before the container starts.

- Post Start: runs after the container starts.

- Pre Stop: runs before the container stops.

For more information, see Configure the lifecycle of a container.
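As an illustration of the lifecycle settings, the sketch below builds a container spec with a start command and `postStart` and `preStop` exec hooks. It assumes that Start corresponds to the container `command` field and that the two hooks correspond to the Kubernetes `lifecycle` field. The commands shown are examples for an NGINX container, not values required by the console.

```python
import json


def lifecycle_hooks(post_start=None, pre_stop=None):
    """Build a Kubernetes lifecycle dict from optional exec commands."""
    hooks = {}
    if post_start:
        hooks["postStart"] = {"exec": {"command": list(post_start)}}
    if pre_stop:
        hooks["preStop"] = {"exec": {"command": list(pre_stop)}}
    return hooks


container = {
    "name": "nginx",
    "image": "nginx:latest",
    # Assumption: the Start setting maps to the container command.
    "command": ["nginx", "-g", "daemon off;"],
    "lifecycle": lifecycle_hooks(
        post_start=["/bin/sh", "-c", "echo started > /tmp/started"],
        # Ask NGINX to finish in-flight requests before the container stops.
        pre_stop=["nginx", "-s", "quit"],
    ),
}
print(json.dumps(container, indent=2))
```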

Configure volumes

In the Volume section, add storage volumes. The following types are supported:

- On-premises storage volume

- PVC (PersistentVolumeClaim)

- NAS (File Storage NAS)

- Disk

For more information, see Use a statically provisioned disk volume, Use a dynamically provisioned disk volume, and Mount a statically provisioned NAS volume.

Configure log collection

In the Log section, configure log collection parameters. For more information, see Create an application from an image and configure Simple Log Service to collect application logs.

Click Next to proceed to the advanced settings.

Step 3: Configure advanced settings

In the Advanced step, configure access control, scaling, labels, and annotations.

Configure access control

In the Access Control section, choose how to expose the application's pods.

Internal applications: create a ClusterIP or NodePort Service to enable communication within the cluster.

External applications: expose the application to the internet using one of the following methods:

- LoadBalancer Service: set the Service type to Server Load Balancer. Select or create a Server Load Balancer (SLB) instance to route external traffic.

- Ingress: use an Ingress resource to route HTTP/HTTPS traffic to the Service. For more information, see Ingress.

This example creates a ClusterIP Service and an Ingress to expose an NGINX application.

Create a Service

Click Create next to Services and configure the following parameters:

| Parameter | Description |
|---|---|
| Name | Enter a name for the Service. This example uses nginx-svc. |
| Type | Select the Service type. This example uses Cluster IP. Options: Cluster IP, Server Load Balancer, Node Port. |
| Port Mapping | Map a Service port to a container port. This example uses Service Port 80 and Container Port 80. |
| External Traffic Policy | Local: routes traffic only to pods on the node that receives it. Cluster: can route traffic to pods on other nodes. Applies to Node Port and Server Load Balancer types only. |
| Annotations | Add SLB configuration annotations. For example, service.beta.kubernetes.io/alicloud-loadbalancer-bandwidth:20 limits bandwidth to 20 Mbit/s. See Use annotations to configure CLB instances. |
| Label | Add labels to identify the Service. |

When using SLB instances:

- Listeners on an existing SLB instance overwrite the Service's listeners.

- An SLB instance created with a Service cannot be reused by other Services. Only SLB instances manually created in the SLB console or via API can be shared across multiple Services.

- Services sharing the same SLB instance must use different frontend ports to avoid conflicts.

- Do not modify listener names or vServer group names on shared SLB instances, as ACK uses them as unique identifiers.

- SLB instances cannot be shared across clusters.
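The Service parameters above map onto a Kubernetes Service manifest. The following sketch builds the ClusterIP Service that this example pairs with an Ingress, named nginx-svc with port 80 mapped to container port 80. The selector label `app: nginx` is hypothetical, and the mapping of the console type Server Load Balancer to the Kubernetes type `LoadBalancer` follows the access control description earlier in this step.

```python
import json

VALID_TYPES = {"ClusterIP", "NodePort", "LoadBalancer"}


def build_service(name, selector, port, target_port, service_type="ClusterIP",
                  external_traffic_policy=None, annotations=None):
    """Return a Service manifest (v1) as a plain dict."""
    if service_type not in VALID_TYPES:
        raise ValueError(f"unknown Service type: {service_type}")
    spec = {
        "type": service_type,
        "selector": dict(selector),
        "ports": [{"port": port, "targetPort": target_port, "protocol": "TCP"}],
    }
    if external_traffic_policy is not None:
        # The policy applies to NodePort and LoadBalancer Services only.
        if service_type == "ClusterIP":
            raise ValueError("externalTrafficPolicy does not apply to ClusterIP")
        spec["externalTrafficPolicy"] = external_traffic_policy
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name, "annotations": dict(annotations or {})},
        "spec": spec,
    }


# The selector label is a hypothetical value for illustration.
service = build_service("nginx-svc", {"app": "nginx"}, port=80, target_port=80)
print(json.dumps(service, indent=2))
```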

Create an Ingress

Click Create next to Ingresses and configure the following parameters:

| Parameter | Description |
|---|---|
| Name | Enter a name for the Ingress. This example uses nginx-ingress. |
| Rule | Define routing rules: Domain (this example uses the test domain foo.bar.com), Path (default /), Services (select nginx-svc), and EnableTLS. |
| Weight | Set the traffic weight for each Service in the path. Default: 100. |
| Canary Release | Enable or disable the canary release feature. We recommend that you select Open Source Solution. |
| Ingress Class | Specify the Ingress class. |
| Annotations | Add Ingress annotations. See Annotations. |
| Labels | Add labels to describe the Ingress. |

When creating an application from an image, each Service can have only one Ingress. This example uses a virtual host name as a test domain. To get the Ingress IP address, go to the application details page and click the Access Method tab. The IP address is in the External Endpoint column. Add the following entry to your hosts file so that the domain resolves to the Ingress IP address:

101.37.xx.xx foo.bar.com # Replace with the actual Ingress IP address

In production, use a domain name with an Internet Content Provider (ICP) filing.

After you create the Service and Ingress, they appear in the Access Control section. Click Update or Delete to modify them.
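The routing rule in this example (domain foo.bar.com, path /, Service nginx-svc) can be written as a Kubernetes Ingress manifest. The sketch below is one possible rendering under the `networking.k8s.io/v1` API. The `Prefix` path type and Service port 80 are assumptions, and the console may generate additional fields or annotations.

```python
import json


def build_ingress(name, host, service_name, service_port, path="/", ingress_class=None):
    """Return an Ingress manifest (networking.k8s.io/v1) as a plain dict."""
    rule = {
        "host": host,
        "http": {
            "paths": [{
                "path": path,
                # Assumption: match the path and everything below it.
                "pathType": "Prefix",
                "backend": {
                    "service": {"name": service_name, "port": {"number": service_port}}
                },
            }]
        },
    }
    spec = {"rules": [rule]}
    if ingress_class:
        spec["ingressClassName"] = ingress_class
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "Ingress",
        "metadata": {"name": name},
        "spec": spec,
    }


ingress = build_ingress("nginx-ingress", "foo.bar.com", "nginx-svc", 80)
print(json.dumps(ingress, indent=2))
```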

Configure auto scaling

In the Scaling section, configure Horizontal Pod Autoscaler (HPA) or CronHPA.

To enable HPA, configure resource requests for the container in the Required Resources field in Step 2. HPA does not work without resource requests.

HPA scales the number of pods automatically based on CPU or memory usage:

| Parameter | Description |
|---|---|
| Metric | Select CPU Usage or Memory Usage. Must match the resource type configured in Required Resources. |
| Condition | Set the usage threshold that triggers scale-out. For example, a CPU threshold of 70% means HPA adds pods when average CPU usage exceeds 70%. Setting the threshold too high delays scaling and may cause performance degradation; setting it too low causes unnecessary scale-out. |
| Max. Replicas | Maximum number of pods the application can scale to. |
| Min. Replicas | Minimum number of pods that must always run. |

CronHPA is also supported for scheduling-based scaling.
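To see how the threshold and replica limits interact, the sketch below applies the replica calculation described in the Kubernetes HPA documentation: the desired count is the current count scaled by the ratio of observed to target usage, rounded up, then clamped to the configured minimum and maximum. It is a simplified model that ignores stabilization windows, pod readiness, and missing metrics. The function name is hypothetical, and the 10% tolerance is the Kubernetes default rather than a console setting.

```python
import math


def desired_replicas(current_replicas, current_usage, target_usage,
                     min_replicas, max_replicas, tolerance=0.1):
    """Estimate the replica count HPA would aim for (simplified)."""
    ratio = current_usage / target_usage
    if abs(ratio - 1.0) <= tolerance:
        # Usage is close enough to the target; keep the current count.
        desired = current_replicas
    else:
        desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


print(desired_replicas(2, 90, 70, 1, 10))   # scale out: ceil(2 * 90 / 70) = 3
print(desired_replicas(4, 35, 70, 2, 10))   # scale in: ceil(4 * 35 / 70) = 2
print(desired_replicas(5, 210, 70, 1, 10))  # 15 wanted, capped at Max. Replicas = 10
```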

Add labels and annotations

In the Labels,Annotations section, click Add to add labels and annotations to the pod.

- Pod Labels: labels to identify the pod.

- Pod Annotations: annotations for the pod.

Click Create.

Step 4: Verify the deployment

After the application is created, verify that the Deployment, Service, and application are all running correctly.

Check the Deployment

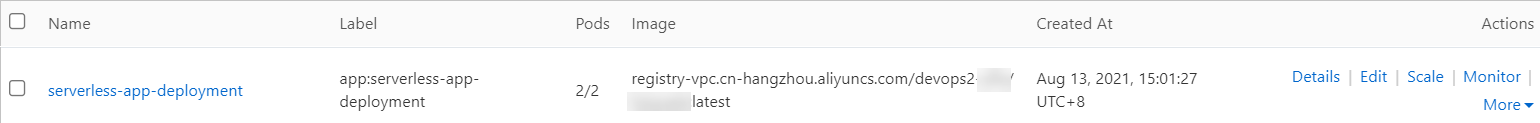

In the Complete step, click View Details. On the Deployments page, confirm that the application named serverless-app-svc appears.

Check the Service

In the left-side navigation pane, choose Network > Services. Confirm that the Service you created (nginx-svc in this example) appears in the list.

Access the application

Open the external endpoint or domain name in a browser to visit the NGINX welcome page.

- A Service has an external endpoint that you can open in a browser only if its type is Server Load Balancer.

- If you are using the domain name foo.bar.com, make sure you have added the Ingress IP address to your hosts file as described in Create an Ingress.
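As an alternative to a browser, the Ingress can be checked from a script by sending the request to the Ingress IP address with an explicit Host header, which works without editing the hosts file. The sketch below uses only the Python standard library. The address `203.0.113.10` is a documentation placeholder, not a real endpoint, and must be replaced with the IP address shown in the External Endpoint column.

```python
import urllib.request


def build_ingress_request(ingress_ip, host, path="/"):
    """Build a request to the Ingress IP that carries the test domain as Host."""
    url = f"http://{ingress_ip}{path}"
    return urllib.request.Request(url, headers={"Host": host})


def fetch_status(request, timeout=5):
    """Send the request and return the HTTP status code (needs network access)."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status


# Placeholder IP address; replace it with the actual Ingress IP address.
request = build_ingress_request("203.0.113.10", "foo.bar.com")
print(request.full_url, request.get_header("Host"))
```

Calling `fetch_status(request)` against a working deployment should return 200 for the NGINX welcome page. The call is left out of the example because it requires a reachable Ingress.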