ACK One multi-cluster gateways let you build zone-level disaster recovery for applications across multiple Kubernetes clusters without managing separate load balancer IP addresses per cluster or installing Ingress controllers in each cluster. This topic walks through two recovery modes — active zone-redundancy and primary/secondary — using a sample application deployed via GitOps to two ACK clusters in different availability zones (AZs) of the China (Hong Kong) region.

Multi-cluster gateways handle Layer 7 traffic failover only. Data disaster recovery is outside the scope of this feature.

How it works

ACK One multi-cluster gateways are built on managed Microservices Engine (MSE) Ingresses. Combined with ACK One GitOps (Argo CD), the setup works as follows:

- Deploy your application to multiple ACK clusters in different AZs using Argo CD.

- Create a multi-cluster gateway (MseIngressConfig) in the fleet instance. The gateway provisions a single Server Load Balancer (SLB) IP address at the region level and automatically discovers Ingress resources with the specified ingressClass across all associated clusters.

- Create Ingress objects in the fleet instance to define traffic routing rules. The gateway routes requests to backend Services in the associated clusters and automatically fails over traffic if a cluster becomes unhealthy.

Key components:

| Component | Role |
|---|---|
| ACK One fleet instance | Control plane for managing multi-cluster resources; where you create the gateway and Ingress objects |
| MSE Ingress (MseIngressConfig) | Cloud-native gateway that handles Layer 7 routing, load balancing, and failover across clusters |
| Argo CD (GitOps) | Deploys and syncs the application to multiple ACK clusters from a Git repository |
| ACK clusters (Cluster 1, Cluster 2) | Workload clusters in separate AZs that run the application; added to the gateway as backends |

Recovery modes

Both modes protect against AZ-level failures. The difference is in how traffic is distributed under normal conditions.

| Mode | Normal traffic distribution | Failover behavior | Best for |
|---|---|---|---|
| Active zone-redundancy | Load-balanced across all clusters by replica ratio | Auto-reroutes to healthy clusters | Stateless applications that can scale horizontally |
| Primary/secondary | All traffic goes to the primary cluster | Auto-reroutes to secondary when primary is unhealthy | Applications with stateful backends (databases, caches) where active-active adds complexity |

Prerequisites

Before you begin, ensure that you have:

- Enabled the Fleet management feature

- Associated two ACK clusters with the fleet instance in the same Virtual Private Cloud (VPC); see Associate clusters

- Downloaded the kubeconfig file for the fleet instance from the ACK One console and connected kubectl to it

- Enabled the multi-cluster gateway feature; see Billing rules for pricing

- Created a gateway-demo namespace in the fleet instance (must match the namespace where the application is deployed in the associated clusters)
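The last prerequisite can be checked and satisfied from the command line. The following is a minimal sketch, assuming the current kubectl context points at the fleet instance; the function name ensure_namespace is hypothetical and only `kubectl get namespace` and `kubectl create namespace` are used.

```shell
# Create the namespace only if it does not exist yet.
# Assumption: kubectl's current context is the fleet instance.
ensure_namespace() {
  ns="$1"
  if kubectl get namespace "$ns" >/dev/null 2>&1; then
    echo "namespace $ns already exists"
  else
    kubectl create namespace "$ns"
  fi
}

# Usage: ensure_namespace gateway-demo
```

Checking before creating keeps the helper safe to run repeatedly, for example from a setup script.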

Step 1: Deploy the application to multiple clusters

Use Argo CD to deploy the web-demo application to both Cluster 1 and Cluster 2. The application consists of a Deployment and a Service.

Choose either the Argo CD UI or CLI.

Deploy using the Argo CD UI

-

Log on to the ACK One console. In the left-side navigation pane, choose Fleet > Multi-cluster Applications.

-

On the Multi-cluster GitOps page, click GitOps Console.

If GitOps is not yet enabled, click Enable GitOps. To access GitOps over the public network, see Enable public access to Argo CD.

-

Add the application repository.

-

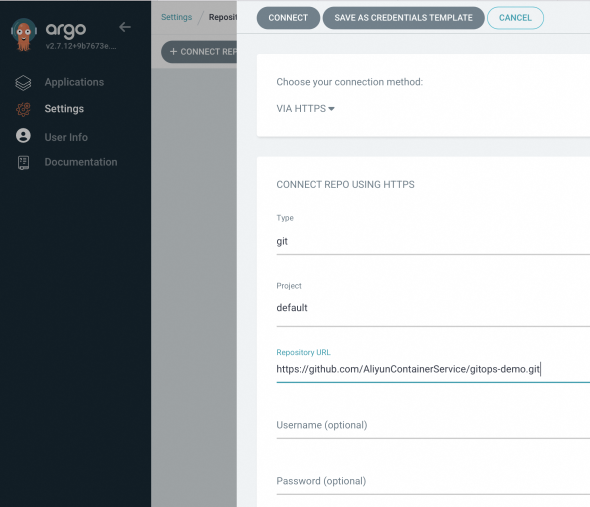

In the Argo CD left-side navigation pane, click Settings, then choose Repositories > + Connect Repo.

-

Configure the following parameters and click CONNECT.

Section Parameter Value Choose your connection method — VIA HTTP/HTTPS CONNECT REPO USING HTTP/HTTPS Type git Project default Repository URL https://github.com/AliyunContainerService/gitops-demo.gitSkip server verification Select this checkbox

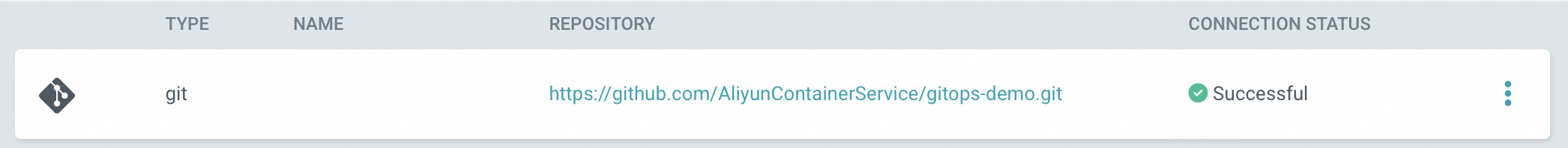

When the connection succeeds, CONNECTION STATUS shows Successful.

-

-

Create an application for each cluster. On the Applications page, click + NEW APP and configure the following parameters. Repeat this step for Cluster 2, substituting the cluster URL and

envClustervalue accordingly.Section Parameter Value GENERAL Application Name A unique name for the application Project Name defaultSYNC POLICY Manual (sync on demand) or Automatic (Argo CD checks the Git repository every 3 minutes and deploys changes automatically) SYNC OPTIONS — Select AUTO-CREATE NAMESPACESOURCE Repository URL https://github.com/AliyunContainerService/gitops-demo.gitRevision Branches: gateway-demoPath manifests/helm/web-demoDESTINATION Cluster URL Select the URL for Cluster 1 (or Cluster 2 for the second app) Namespace gateway-demoHelm > Parameters envClustercluster-demo-1for Cluster 1,cluster-demo-2for Cluster 2

Deploy using the Argo CD CLI

- Add the Git repository.

      argocd repo add https://github.com/AliyunContainerService/gitops-demo.git --name ackone-gitops-demos

  Expected output:

      Repository 'https://github.com/AliyunContainerService/gitops-demo.git' added

- Verify the repository was added and confirm both clusters are registered.

      argocd repo list

  Expected output:

      TYPE  NAME  REPO                                                       INSECURE  OCI    LFS    CREDS  STATUS      MESSAGE  PROJECT
      git         https://github.com/AliyunContainerService/gitops-demo.git  false     false  false  false  Successful           default

      argocd cluster list

  Expected cluster list output:

      SERVER                          NAME                    VERSION  STATUS      MESSAGE                                                  PROJECT
      https://1.1.XX.XX:6443          c83f3cbc90a****-temp01  1.22+    Successful
      https://2.2.XX.XX:6443          c83f3cbc90a****-temp02  1.22+    Successful
      https://kubernetes.default.svc  in-cluster                       Unknown     Cluster has no applications and is not being monitored.

- Create the application manifest. Replace repoURL with your actual repository URL, and replace ${cluster1_url} and ${cluster2_url} with the cluster API server URLs from the previous step.

  apps-web-demo.yaml

      apiVersion: argoproj.io/v1alpha1
      kind: Application
      metadata:
        name: app-demo-cluster1
        namespace: argocd
      spec:
        destination:
          namespace: gateway-demo
          # https://1.1.XX.XX:6443
          server: ${cluster1_url}
        project: default
        source:
          helm:
            releaseName: "web-demo"
            parameters:
            - name: envCluster
              value: cluster-demo-1
            valueFiles:
            - values.yaml
          path: manifests/helm/web-demo
          repoURL: https://github.com/AliyunContainerService/gitops-demo.git
          targetRevision: gateway-demo
        syncPolicy:
          syncOptions:
          - CreateNamespace=true
      ---
      apiVersion: argoproj.io/v1alpha1
      kind: Application
      metadata:
        name: app-demo-cluster2
        namespace: argocd
      spec:
        destination:
          namespace: gateway-demo
          server: ${cluster2_url}
        project: default
        source:
          helm:
            releaseName: "web-demo"
            parameters:
            - name: envCluster
              value: cluster-demo-2
            valueFiles:
            - values.yaml
          path: manifests/helm/web-demo
          repoURL: https://github.com/AliyunContainerService/gitops-demo.git
          targetRevision: gateway-demo
        syncPolicy:
          syncOptions:
          - CreateNamespace=true

- Deploy the applications.

      kubectl apply -f apps-web-demo.yaml

- Verify both applications are synced and healthy.

      argocd app list

  Expected output:

      NAME                      CLUSTER                  NAMESPACE  PROJECT  STATUS  HEALTH   SYNCPOLICY  CONDITIONS  REPO                                                       PATH                     TARGET
      argocd/web-demo-cluster1  https://10.1.XX.XX:6443             default  Synced  Healthy  Auto        <none>      https://github.com/AliyunContainerService/gitops-demo.git  manifests/helm/web-demo  main
      argocd/web-demo-cluster2  https://10.1.XX.XX:6443             default  Synced  Healthy  Auto        <none>      https://github.com/AliyunContainerService/gitops-demo.git  manifests/helm/web-demo  main

  The sample output is illustrative. The application names and target revision in your output come from your own manifest (for example, argocd/app-demo-cluster1 and gateway-demo).
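Instead of reading the table by eye, the check can be scripted. The sketch below assumes the argocd CLI is logged in and that a sync has already been triggered (automatic sync policy, or a manual `argocd app sync`); the function name wait_for_apps is hypothetical. It relies on `argocd app wait` with its `--sync`, `--health`, and `--timeout` flags.

```shell
# Block until each named Argo CD application is synced and healthy.
# Returns non-zero as soon as one application does not converge in time.
wait_for_apps() {
  timeout_seconds="$1"
  shift
  for app in "$@"; do
    if ! argocd app wait "$app" --sync --health --timeout "$timeout_seconds"; then
      echo "application $app did not become Synced and Healthy" >&2
      return 1
    fi
  done
  echo "all applications are Synced and Healthy"
}

# Usage: wait_for_apps 300 app-demo-cluster1 app-demo-cluster2
```

Waiting per application keeps the failure message specific to the cluster whose deployment did not converge.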

Step 2: Create the multi-cluster gateway

Create an MseIngressConfig resource in the fleet instance to provision the gateway and associate both clusters as backends.

- Get the vSwitch IDs for the fleet instance (see Obtain a vSwitch ID).

- Create the gateway manifest.

  Replace ${vsw-id} with a vSwitch ID from the previous step, and ${cluster1} and ${cluster2} with the IDs of the associated clusters. To use a second vSwitch, add another entry under vSwitches. For each associated cluster, configure the inbound rules of its security group to allow access from all IP addresses and ports in the vSwitch CIDR block.

  | Parameter | Description |
  |---|---|
  | mse.alibabacloud.com/remote-clusters | Comma-separated IDs of the clusters to add to the gateway. Must be clusters already associated with the fleet instance. |
  | spec.name | Name of the gateway instance. |
  | spec.common.instance.spec | (Optional) Instance type. Default: 4c8g. |
  | spec.common.instance.replicas | (Optional) Number of gateway replicas. Default: 3. |
  | spec.ingress.local.ingressClass | (Optional) The Ingress class name to listen on. The gateway listens to all Ingress resources in the fleet instance where ingressClass is set to mse. |

  gateway.yaml

      apiVersion: mse.alibabacloud.com/v1alpha1
      kind: MseIngressConfig
      metadata:
        annotations:
          mse.alibabacloud.com/remote-clusters: ${cluster1},${cluster2}
        name: ackone-gateway-hongkong
      spec:
        common:
          instance:
            replicas: 3
            spec: 2c4g
          network:
            vSwitches:
            - ${vsw-id}
        ingress:
          local:
            ingressClass: mse
        name: mse-ingress

- Deploy the gateway.

      kubectl apply -f gateway.yaml

- Wait for the gateway to reach Listening status. The Pending phase, during which the cloud-native gateway is being created, may take about 3 minutes.

      kubectl get mseingressconfig ackone-gateway-hongkong

  Expected output:

      NAME                      STATUS      AGE
      ackone-gateway-hongkong   Listening   3m15s

  Gateway status values:

  | Status | Description |
  |---|---|
  | Pending | Gateway is being provisioned (~3 minutes) |
  | Running | Gateway is created and running |
  | Listening | Gateway is running and watching for Ingress resources |
  | Failed | Gateway is invalid; check the Status field for details |

- Confirm both clusters were added successfully.

      kubectl get mseingressconfig ackone-gateway-hongkong -ojsonpath="{.status.remoteClusters}"

  Expected output:

      [{"clusterId":"c7fb82****"},{"clusterId":"cd3007****"}]

  Both cluster IDs appear with no Failed message, confirming the clusters are connected to the gateway.
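Waiting for the Listening status can be automated with a small polling loop. This is a sketch under stated assumptions: the function name wait_for_gateway is hypothetical, and it assumes `kubectl get mseingressconfig` prints the NAME, STATUS, and AGE columns in that order, as in the expected output in this step.

```shell
# Poll the gateway until the STATUS column reads Listening.
# Stops early on Failed and gives up after max_attempts polls.
wait_for_gateway() {
  name="$1"
  max_attempts="${2:-30}"   # default: 30 polls
  interval="${3:-10}"       # default: 10 seconds between polls
  attempt=1
  while [ "$attempt" -le "$max_attempts" ]; do
    # --no-headers leaves a single row; the second field is assumed to be STATUS.
    status=$(kubectl get mseingressconfig "$name" --no-headers 2>/dev/null | awk '{print $2}')
    case "$status" in
      Listening) echo "gateway $name is Listening"; return 0 ;;
      Failed)    echo "gateway $name is Failed" >&2; return 1 ;;
    esac
    attempt=$((attempt + 1))
    sleep "$interval"
  done
  echo "gateway $name did not reach Listening in time" >&2
  return 1
}

# Usage: wait_for_gateway ackone-gateway-hongkong
```

With the defaults the loop waits up to about five minutes, which leaves headroom over the roughly three-minute Pending phase.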

Step 3: Configure zone-disaster recovery using Ingress

The multi-cluster gateway uses Ingress resources defined in the fleet instance to route traffic across clusters. Create the Ingress objects in the gateway-demo namespace — the same namespace where the application is deployed.

The gateway-demo namespace must exist in the fleet instance before you create Ingress resources.

Choose the recovery mode that fits your application:

Active zone-redundancy

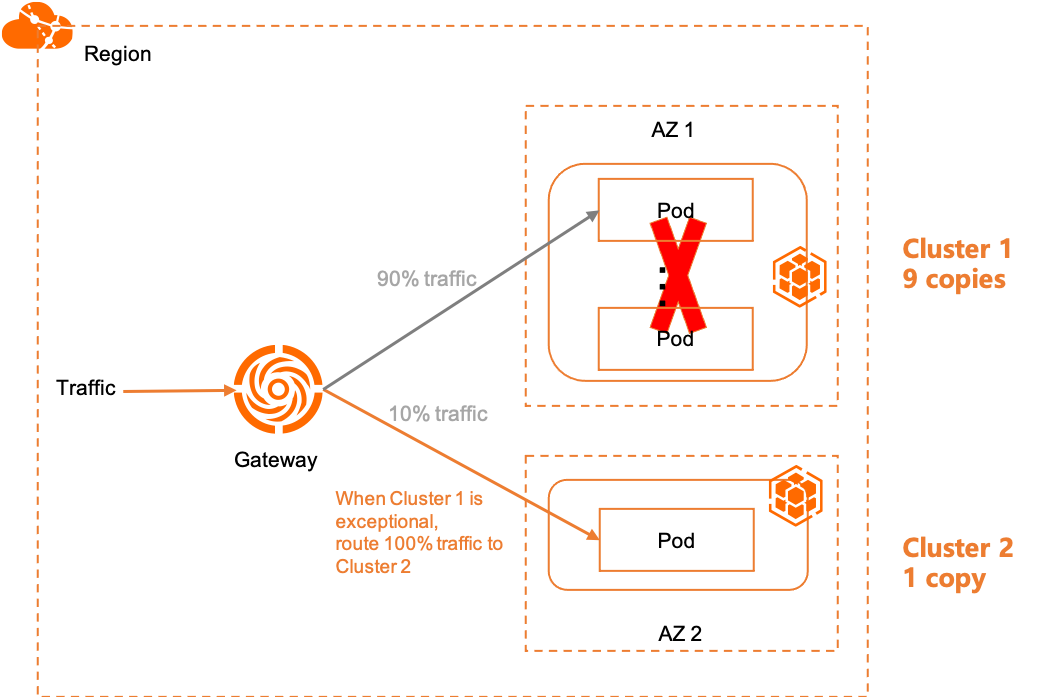

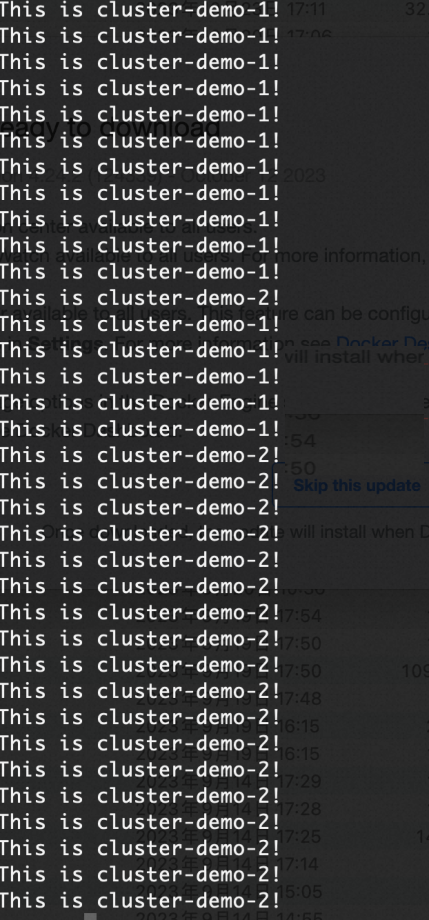

In active zone-redundancy mode, traffic is load-balanced across all cluster backends by replica ratio. If a cluster becomes unhealthy, the gateway automatically reroutes its traffic share to the remaining healthy clusters.

Example: With 9 replicas in Cluster 1 and 1 replica in Cluster 2, 90% of traffic goes to Cluster 1 and 10% to Cluster 2 by default. If all Cluster 1 backends fail, 100% of traffic shifts to Cluster 2.
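The arithmetic behind this example can be sketched as a small helper. The function name traffic_share is hypothetical, and the calculation only illustrates the replica-ratio rule described above; it does not query the gateway. Percentages use integer division, so uneven ratios are rounded down for Cluster 1.

```shell
# Print the expected share of traffic per cluster, in whole percent,
# given the number of healthy replicas in Cluster 1 and Cluster 2.
traffic_share() {
  c1="$1"
  c2="$2"
  total=$((c1 + c2))
  if [ "$total" -eq 0 ]; then
    echo "no healthy replicas" >&2
    return 1
  fi
  p1=$((c1 * 100 / total))
  echo "cluster1=${p1}% cluster2=$((100 - p1))%"
}

traffic_share 9 1   # prints: cluster1=90% cluster2=10%
traffic_share 0 1   # prints: cluster1=0% cluster2=100%
```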

Create an Ingress for active zone-redundancy

Create an Ingress that routes traffic to service1 under the domain example.com. The gateway distributes requests across the same-named Service in both clusters.

ingress-demo.yaml

    apiVersion: networking.k8s.io/v1
    kind: Ingress
    metadata:
      name: web-demo
    spec:
      ingressClassName: mse
      rules:
      - host: example.com
        http:
          paths:
          - path: /svc1
            pathType: Exact
            backend:
              service:
                name: service1
                port:
                  number: 80

Deploy the Ingress in the fleet instance.

    kubectl apply -f ingress-demo.yaml -n gateway-demo

Verify active zone-redundancy

-

Get the public IP address of the multi-cluster gateway.

kubectl get ingress web-demo -n gateway-demo -ojsonpath="{.status.loadBalancer}" -

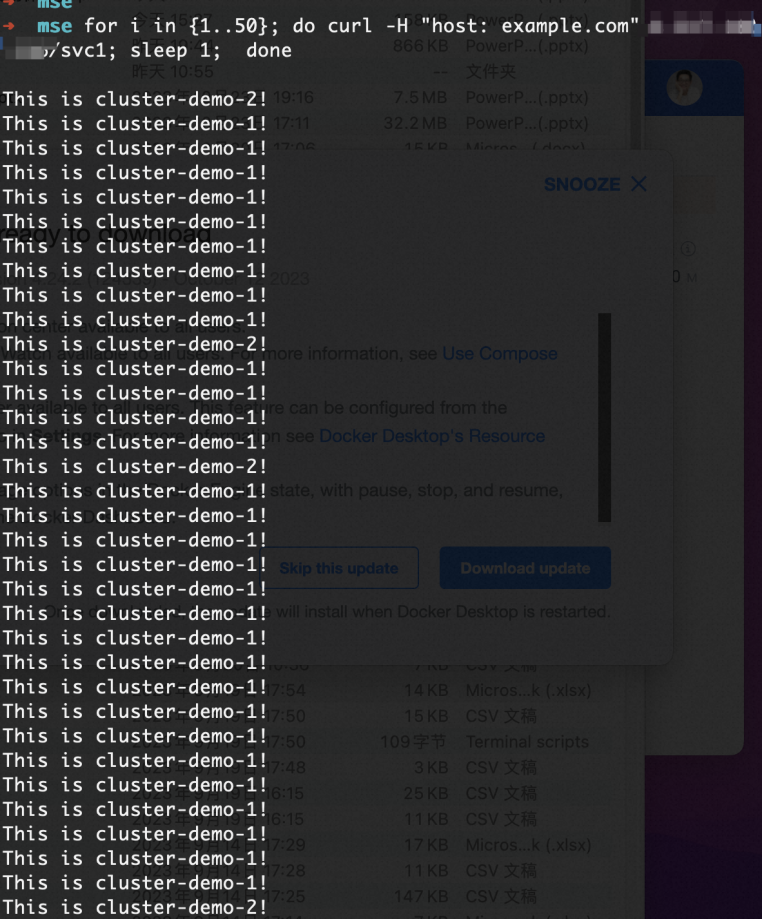

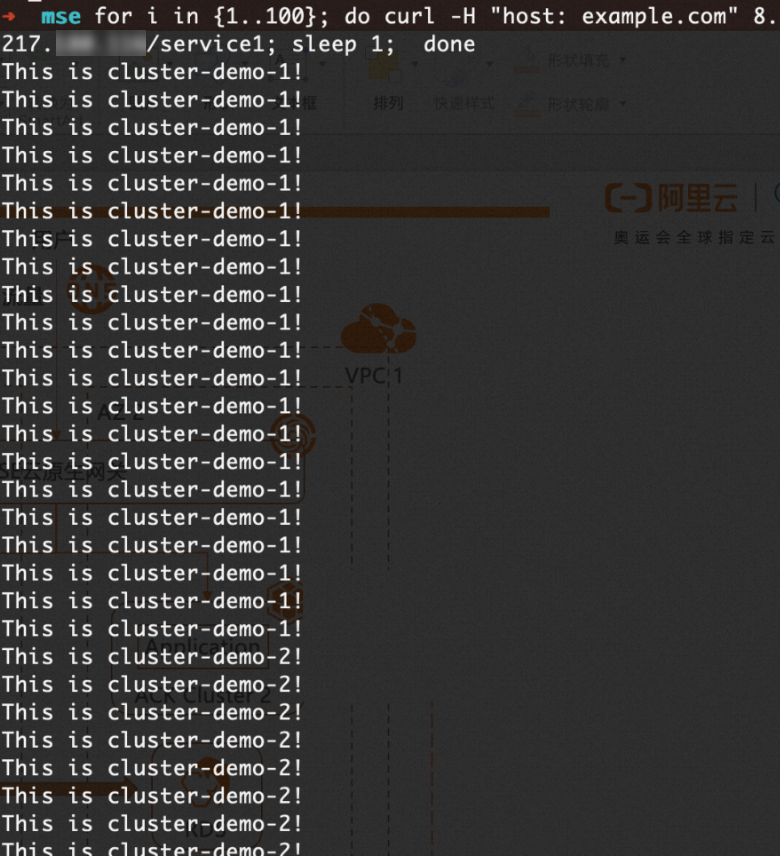

Send 100 requests and observe the traffic distribution. Replace

XX.XX.XX.XXwith the gateway IP address.for i in {1..100}; do curl -H "host: example.com" XX.XX.XX.XX; doneExpected result: Traffic is distributed between Cluster 1 and Cluster 2 at a 9:1 ratio.

-

Simulate a cluster failure by scaling the Deployment replicas in Cluster 1 to 0. All traffic automatically reroutes to Cluster 2.

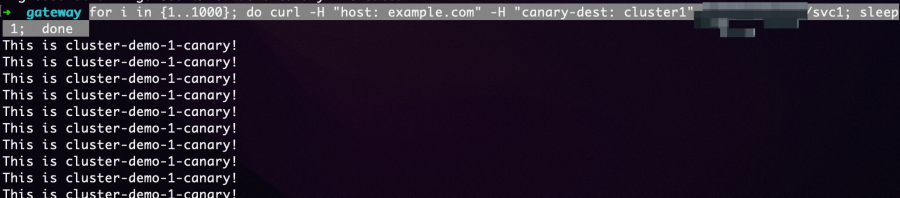
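To turn the raw responses from the request loop into numbers, the output can be piped through a tally. This is a minimal sketch: the function name tally_responses is hypothetical, and it assumes each response is a single line that identifies the serving cluster (for example, the envCluster value), which depends on the sample application.

```shell
# Count how often each distinct response line occurs on standard input,
# most frequent first. Output format: "<count> <response>".
tally_responses() {
  sort | uniq -c | sort -rn |
    awk '{count = $1; $1 = ""; sub(/^ /, ""); print count, $0}'
}

# Usage (XX.XX.XX.XX is the gateway IP address):
#   for i in $(seq 1 100); do curl -s -H "host: example.com" XX.XX.XX.XX/svc1; done | tally_responses
```

Running the same tally before and after scaling Cluster 1 to 0 makes the shift of traffic to Cluster 2 directly comparable.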

Run canary releases with header-based routing

In active zone-redundancy mode, you can test a canary release in one cluster without affecting live traffic. Deploy the canary version as a separate Service and Deployment, then use an Ingress annotation to route requests with a specific header to it.

-

Deploy the canary application in Cluster 1. new-app.yaml

apiVersion: v1 kind: Service metadata: name: service1-canary-1 namespace: gateway-demo spec: ports: - port: 80 protocol: TCP targetPort: 8080 selector: app: web-demo-canary-1 sessionAffinity: None type: ClusterIP --- apiVersion: apps/v1 kind: Deployment metadata: name: web-demo-canary-1 namespace: gateway-demo spec: replicas: 1 selector: matchLabels: app: web-demo-canary-1 template: metadata: labels: app: web-demo-canary-1 spec: containers: - env: - name: ENV_NAME value: cluster-demo-1-canary image: 'registry-cn-hangzhou.ack.aliyuncs.com/acs/web-demo:0.6.0' imagePullPolicy: Always name: web-demokubectl apply -f new-app.yaml -

Create a header-based canary Ingress in the fleet instance. Requests with the header

canary-dest: cluster1are routed to the canary Service. new-ingress.yamlAnnotation Description nginx.ingress.kubernetes.io/canarySet to "true"to enable header-based routing for this Ingressnginx.ingress.kubernetes.io/canary-by-headerThe header key to match ( canary-dest)nginx.ingress.kubernetes.io/canary-by-header-valueThe header value to match ( cluster1)apiVersion: networking.k8s.io/v1 kind: Ingress metadata: name: web-demo-canary-1 namespace: gateway-demo annotations: nginx.ingress.kubernetes.io/canary: "true" nginx.ingress.kubernetes.io/canary-by-header: "canary-dest" nginx.ingress.kubernetes.io/canary-by-header-value: "cluster1" spec: ingressClassName: mse rules: - host: example.com http: paths: - path: /svc1 pathType: Exact backend: service: name: service1-canary-1 port: number: 80kubectl apply -f new-ingress.yaml -

Verify that requests with the header are routed to the canary version.

for i in {1..100}; do curl -H "host: example.com" -H "canary-dest: cluster1" XX.XX.XX.XX/svc1; sleep 1; doneExpected result: All requests with

canary-dest: cluster1are handled by the canary release in Cluster 1.

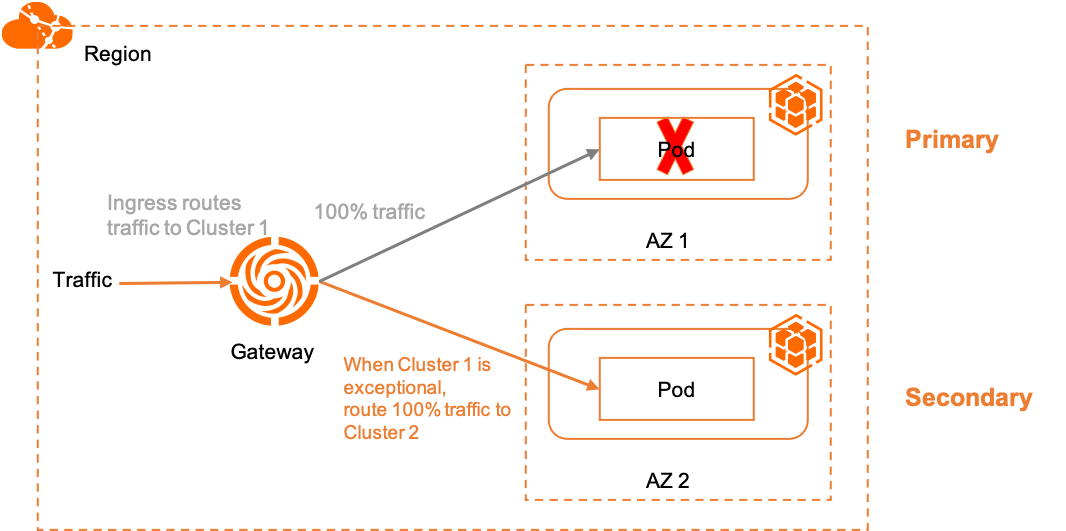
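Comparing requests with and without the canary header is easier with one parameterized probe. The sketch below is an assumption-laden convenience wrapper around curl: the function name probe_gateway and its argument order are hypothetical, and only the curl options `-s` and `-H` are used.

```shell
# Send a number of requests through the gateway and print each response body.
# An optional fourth argument adds a request header such as "canary-dest: cluster1".
probe_gateway() {
  count="$1"
  host="$2"
  url="$3"
  header="${4:-}"
  i=1
  while [ "$i" -le "$count" ]; do
    if [ -n "$header" ]; then
      curl -s -H "host: $host" -H "$header" "$url"
    else
      curl -s -H "host: $host" "$url"
    fi
    i=$((i + 1))
  done
}

# Usage (XX.XX.XX.XX is the gateway IP address):
#   probe_gateway 10 example.com XX.XX.XX.XX/svc1
#   probe_gateway 10 example.com XX.XX.XX.XX/svc1 "canary-dest: cluster1"
```

Running both forms back to back shows whether only the requests carrying the header reach the canary release.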

Primary/secondary disaster recovery

In primary/secondary mode, all traffic goes to Cluster 1 (primary) under normal conditions. If Cluster 1 becomes unhealthy, the gateway automatically reroutes traffic to Cluster 2 (secondary).

This mode uses two MSE-specific annotations to pin Ingress routes to a specific cluster:

| Annotation | Description |
|---|---|
| mse.ingress.kubernetes.io/service-subset | A readable label for the service subset. Use a name that indicates the target cluster. |
| mse.ingress.kubernetes.io/subset-labels | The cluster ID to route to, using the topology.istio.io/cluster label. |

For a complete list of MSE Ingress annotations, see Annotations supported by MSE Ingress gateways.

Create an Ingress for primary/secondary disaster recovery

- Create the primary Ingress that pins traffic to Cluster 1. Replace ${cluster1-id} with the actual cluster ID.

  ingress-demo-cluster-one.yaml

      apiVersion: networking.k8s.io/v1
      kind: Ingress
      metadata:
        annotations:
          mse.ingress.kubernetes.io/service-subset: cluster-demo-1
          mse.ingress.kubernetes.io/subset-labels: |
            topology.istio.io/cluster ${cluster1-id}
        name: web-demo-cluster-one
      spec:
        ingressClassName: mse
        rules:
        - host: example.com
          http:
            paths:
            - path: /service1
              pathType: Exact
              backend:
                service:
                  name: service1
                  port:
                    number: 80

      kubectl apply -f ingress-demo-cluster-one.yaml -n gateway-demo
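The placeholder can be filled in without editing the file by hand. The following sketch uses sed to substitute a literal ${...} placeholder in a manifest read from standard input; the function name render_cluster_id is hypothetical, and it assumes the cluster ID contains no characters that are special in a sed replacement (such as | or &).

```shell
# Replace a literal placeholder such as ${cluster1-id} in a manifest read from
# standard input with a real cluster ID, writing the result to standard output.
render_cluster_id() {
  placeholder="$1"   # placeholder name without ${ }, for example: cluster1-id
  cluster_id="$2"    # the ID of the associated cluster
  # \\\$ becomes \$ for sed, which matches a literal dollar sign.
  sed "s|\\\${${placeholder}}|${cluster_id}|g"
}

# Usage (CLUSTER1_ID is a variable you set to your cluster ID):
#   render_cluster_id cluster1-id "$CLUSTER1_ID" < ingress-demo-cluster-one.yaml |
#     kubectl apply -n gateway-demo -f -
```

Piping the rendered manifest to `kubectl apply -f -` leaves the template file unchanged, so it can be reused for other clusters.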

Run cluster-level canary releases

Use a header-based canary Ingress alongside the primary Ingress to route specific requests to Cluster 2 for validation, without changing the default traffic path.

-

Create the canary Ingress targeting Cluster 2. Replace

${cluster2-id}with the actual cluster ID. ingress-demo-cluster-gray.yamlapiVersion: networking.k8s.io/v1 kind: Ingress metadata: annotations: mse.ingress.kubernetes.io/service-subset: cluster-demo-2 mse.ingress.kubernetes.io/subset-labels: | topology.istio.io/cluster ${cluster2-id} nginx.ingress.kubernetes.io/canary: "true" nginx.ingress.kubernetes.io/canary-by-header: "app-web-demo-version" nginx.ingress.kubernetes.io/canary-by-header-value: "gray" name: web-demo-cluster-gray spec: ingressClassName: mse rules: - host: example.com http: paths: - path: /service1 pathType: Exact backend: service: name: service1 port: number: 80kubectl apply -f ingress-demo-cluster-gray.yaml -n gateway-demo

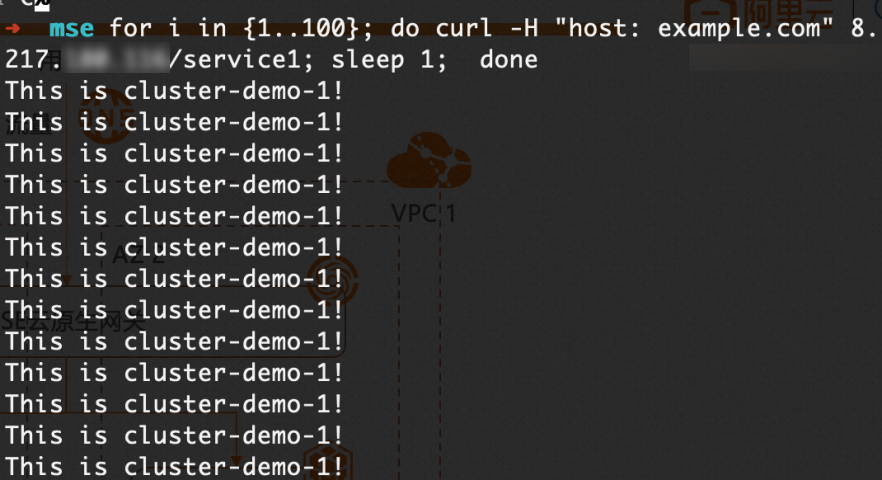

Verify primary/secondary disaster recovery

-

Get the public IP address of the multi-cluster gateway.

kubectl get ingress web-demo -n gateway-demo -ojsonpath="{.status.loadBalancer}" -

Confirm default traffic goes to Cluster 1.

for i in {1..100}; do curl -H "host: example.com" XX.XX.XX.XX/service1; sleep 1; doneExpected result: All default traffic is handled by Cluster 1.

-

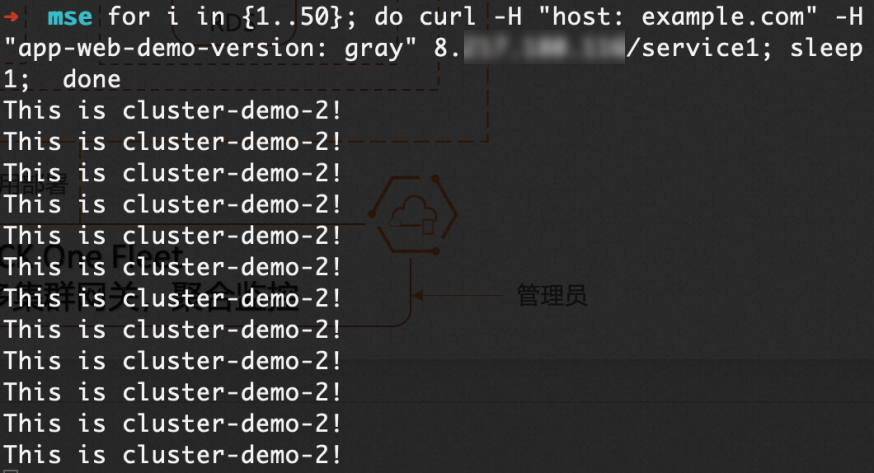

Confirm canary traffic goes to Cluster 2.

for i in {1..50}; do curl -H "host: example.com" -H "app-web-demo-version: gray" XX.XX.XX.XX/service1; sleep 1; doneExpected result: All requests with

app-web-demo-version: grayare handled by Cluster 2.

-

Simulate a Cluster 1 failure by scaling the Deployment replicas to 0. All default traffic automatically reroutes to Cluster 2.
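The failure simulation can be scripted with `kubectl scale`. This is a sketch, not the documented procedure: the function name scale_workload is hypothetical, the Deployment name must be taken from your own Helm release, and the command has to run against the workload cluster's kubeconfig rather than the fleet instance's. If the Argo CD application uses automatic sync with self-heal, Argo CD may restore the replica count.

```shell
# Scale a Deployment in one workload cluster, for example to 0 to simulate a
# failure or back to its original count to recover.
scale_workload() {
  kubeconfig="$1"    # path to the kubeconfig of the cluster to change
  deployment="$2"    # Deployment name (hypothetical; use the name from your release)
  replicas="$3"
  kubectl --kubeconfig "$kubeconfig" scale deployment "$deployment" \
    --replicas="$replicas" -n gateway-demo
}

# Usage (path and Deployment name are placeholders):
#   scale_workload ./cluster1.kubeconfig <deployment-name> 0
```

Keeping the replica count as a parameter lets the same helper restore Cluster 1 after the test.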

What's next

- Manage north-south traffic: explore the full traffic management capabilities of ACK One multi-cluster gateways

- Quick start for GitOps: learn more about deploying applications with Argo CD on ACK One