Run DeepSpeed distributed training jobs with Arena and visualize results using TensorBoard.

Prerequisites

Before you begin, make sure you have:

- An ACK cluster with GPU-accelerated nodes. See Create an ACK cluster with GPU-accelerated nodes.

- The cloud-native AI suite installed, with the ack-arena CLI at version 0.9.10 or later. See Deploy the cloud-native AI suite.

- The Arena client installed at version 0.9.10 or later. See Configure the Arena client.

- Persistent volume claims (PVCs) created in the cluster. See Configure a shared NAS volume.

How it works

A DeepSpeed job in ACK runs as a launcher-worker topology:

- The launcher node coordinates the distributed training run. It does not use a GPU.

- Worker nodes run the actual training. Each worker receives one or more GPUs and executes the DeepSpeed training script.

Because communication between nodes uses SSH, all images must have OpenSSH installed.

Training jobs are accessed exclusively through passwordless SSH. Protect Kubernetes Secrets in production environments.

Sample setup

This guide uses a sample that trains a masked language model. The sample code and dataset from microsoft/DeepSpeedExamples are prepackaged in the image registry.cn-beijing.aliyuncs.com/acs/deepspeed:hello-deepspeed. The sample uses a PVC named training-data (backed by a shared NAS volume) to store training results.

Use a custom image

To bring your own training code, choose one of the following approaches:

- Build from the ACK DeepSpeed base image: registry.cn-beijing.aliyuncs.com/acs/deepspeed:v072_base

- Build from your own base image: follow the Dockerfile and install OpenSSH in the image.
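Because every image in the job needs OpenSSH, it can help to check a custom image before submitting. The following is a minimal sketch, not part of Arena: check_openssh is a hypothetical helper, and it assumes that both the ssh client and the sshd server must be on PATH inside the image.

```shell
# Hypothetical helper (not part of Arena): report which OpenSSH binaries
# are missing from PATH. Prints one line per missing tool and returns 0
# only when both ssh and sshd are found.
check_openssh() {
    missing=0
    for tool in ssh sshd; do
        if ! command -v "$tool" >/dev/null 2>&1; then
            echo "missing: $tool"
            missing=1
        fi
    done
    return "$missing"
}
```

One way to use it is to paste the function into a shell started inside the image and run check_openssh there. If sshd is installed outside PATH in your image, adjust the check accordingly.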

Sync code from a private Git repository

Pass --sync-mode=git and --sync-source to pull training code at runtime. Arena uses git-sync to synchronize the repository. Set credentials using GIT_SYNC_USERNAME and GIT_SYNC_PASSWORD environment variables.

Submit and monitor a DeepSpeed job

Step 1: Check available GPU resources

arena top node

Expected output:

NAME IPADDRESS ROLE STATUS GPU(Total) GPU(Allocated)

cn-beijing.192.1xx.x.xx 192.1xx.x.xx <none> Ready 0 0

cn-beijing.192.1xx.x.xx 192.1xx.x.xx <none> Ready 0 0

cn-beijing.192.1xx.x.xx 192.1xx.x.xx <none> Ready 1 0

cn-beijing.192.1xx.x.xx 192.1xx.x.xx <none> Ready 1 0

cn-beijing.192.1xx.x.xx 192.1xx.x.xx <none> Ready 1 0

---------------------------------------------------------------------------------------------------

Allocated/Total GPUs In Cluster:

0/3 (0.0%)

Three GPU-accelerated nodes are available.
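To turn this check into a number a script can act on, the output can be summed with awk. This is a sketch under an assumption: it relies on the six-column layout shown above, where the fifth and sixth fields are GPU(Total) and GPU(Allocated). Other Arena versions or GPU-sharing setups may print different columns. The name free_gpus is illustrative.

```shell
# Sum unallocated GPUs from `arena top node` output read on stdin.
# Assumes the six-column layout shown above: fields 5 and 6 are
# GPU(Total) and GPU(Allocated). Only rows whose STATUS is "Ready"
# are counted; header, separator, and summary lines are skipped.
free_gpus() {
    awk '$4 == "Ready" && $5 ~ /^[0-9]+$/ && $6 ~ /^[0-9]+$/ { free += $5 - $6 }
         END { print free + 0 }'
}

# Usage (requires the Arena client):
#   arena top node | free_gpus
```

Comparing the result with the value you plan to pass to --workers (times --gpus) gives a quick indication of whether the job can be scheduled right away.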

Step 2: Submit the DeepSpeed job

The following command submits a job named deepspeed-helloworld with one launcher node and three worker nodes, where each worker uses one GPU:

arena submit deepspeedjob \

--name=deepspeed-helloworld \

--gpus=1 \

--workers=3 \

--image=registry.cn-beijing.aliyuncs.com/acs/deepspeed:hello-deepspeed \

--data=training-data:/data \

--tensorboard \

--logdir=/data/deepspeed_data \

"deepspeed /workspace/DeepSpeedExamples/HelloDeepSpeed/train_bert_ds.py --checkpoint_dir /data/deepspeed_data"

Parameters

| Parameter | Required | Description | Default |
|---|---|---|---|
| --name | Yes | Globally unique job name | N/A |
| --image | Yes | Container image for the runtime | N/A |
| --gpus | No | GPUs allocated to each worker node | 0 |
| --workers | No | Number of worker nodes | 1 |
| --data | No | Mounts a PVC into the runtime. Format: <pvc-name>:<mount-path>. Run arena data list to view available PVCs. | N/A |
| --tensorboard | No | Enables a TensorBoard service. Must be used together with --logdir. | N/A |
| --logdir | No | Path TensorBoard reads event files from. Must be used together with --tensorboard. | /training_logs |
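The pairing rules in the table can be checked before a job is submitted. The sketch below is a hypothetical pre-flight helper (check_submit_args is not an Arena command). It only recognizes the flag spellings used in this guide, and it assumes that the mount path in --data is absolute.

```shell
# Hypothetical pre-flight check for an `arena submit deepspeedjob` argument list.
# Returns 0 when --tensorboard and --logdir are both present or both absent
# and every --data value looks like <pvc-name>:<mount-path>.
# Assumption: the mount path is absolute (starts with "/").
check_submit_args() {
    tb=0
    logdir=0
    for arg in "$@"; do
        case "$arg" in
            --tensorboard) tb=1 ;;
            --logdir=?*)   logdir=1 ;;
            --data=?*:/*)  ;;  # well-formed <pvc-name>:<mount-path>
            --data=*)      echo "bad --data value: $arg" >&2; return 1 ;;
        esac
    done
    if [ "$tb" -ne "$logdir" ]; then
        echo "--tensorboard and --logdir must be used together" >&2
        return 1
    fi
}

# Usage:
#   check_submit_args --name=deepspeed-helloworld --tensorboard \
#       --logdir=/data/deepspeed_data --data=training-data:/data \
#     && echo "arguments look consistent"
```

The check is deliberately narrow: it validates only the constraints stated in the table and leaves everything else to Arena itself.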

To sync code from a private Git repository instead of bundling it in the image, add these flags:

arena submit deepspeedjob \

...

--sync-mode=git \

--sync-source=<private-git-repo-url> \

--env=GIT_SYNC_USERNAME=<username> \

--env=GIT_SYNC_PASSWORD=<password> \

"deepspeed /workspace/DeepSpeedExamples/HelloDeepSpeed/train_bert_ds.py --checkpoint_dir /data/deepspeed_data"

Expected output after submission:

trainingjob.kai.alibabacloud.com/deepspeed-helloworld created

INFO[0007] The Job deepspeed-helloworld has been submitted successfully

INFO[0007] You can run `arena get deepspeed-helloworld --type deepspeedjob` to check the job status

Step 3: Verify the job is running

List all Arena training jobs:

arena list

Expected output:

NAME STATUS TRAINER DURATION GPU(Requested) GPU(Allocated) NODE

deepspeed-helloworld RUNNING DEEPSPEEDJOB 3m 3 3 192.168.9.69

Check GPU usage by job:

arena top job

Expected output:

NAME STATUS TRAINER AGE GPU(Requested) GPU(Allocated) NODE

deepspeed-helloworld RUNNING DEEPSPEEDJOB 4m 3 3 192.168.9.69

Total Allocated/Requested GPUs of Training Jobs: 3/3

Check cluster-wide GPU usage:

arena top node

Expected output:

NAME IPADDRESS ROLE STATUS GPU(Total) GPU(Allocated)

cn-beijing.192.1xx.x.xx 192.1xx.x.xx <none> Ready 0 0

cn-beijing.192.1xx.x.xx 192.1xx.x.xx <none> Ready 0 0

cn-beijing.192.1xx.x.xx 192.1xx.x.xx <none> Ready 1 1

cn-beijing.192.1xx.x.xx 192.1xx.x.xx <none> Ready 1 1

cn-beijing.192.1xx.x.xx 192.1xx.x.xx <none> Ready 1 1

---------------------------------------------------------------------------------------------------

Allocated/Total GPUs In Cluster:

3/3 (100%)

Step 4: Get job details and the TensorBoard URL

arena get deepspeed-helloworld

Expected output:

Name: deepspeed-helloworld

Status: RUNNING

Namespace: default

Priority: N/A

Trainer: DEEPSPEEDJOB

Duration: 6m

Instances:

NAME STATUS AGE IS_CHIEF GPU(Requested) NODE

---- ------ --- -------- -------------- ----

deepspeed-helloworld-launcher Running 6m true 0 cn-beijing.192.1xx.x.x

deepspeed-helloworld-worker-0 Running 6m false 1 cn-beijing.192.1xx.x.x

deepspeed-helloworld-worker-1 Running 6m false 1 cn-beijing.192.1xx.x.x

deepspeed-helloworld-worker-2 Running 6m false 1 cn-beijing.192.1xx.x.x

Your tensorboard will be available on:

http://192.1xx.x.xx:31870

The last two lines appear only when TensorBoard is enabled.

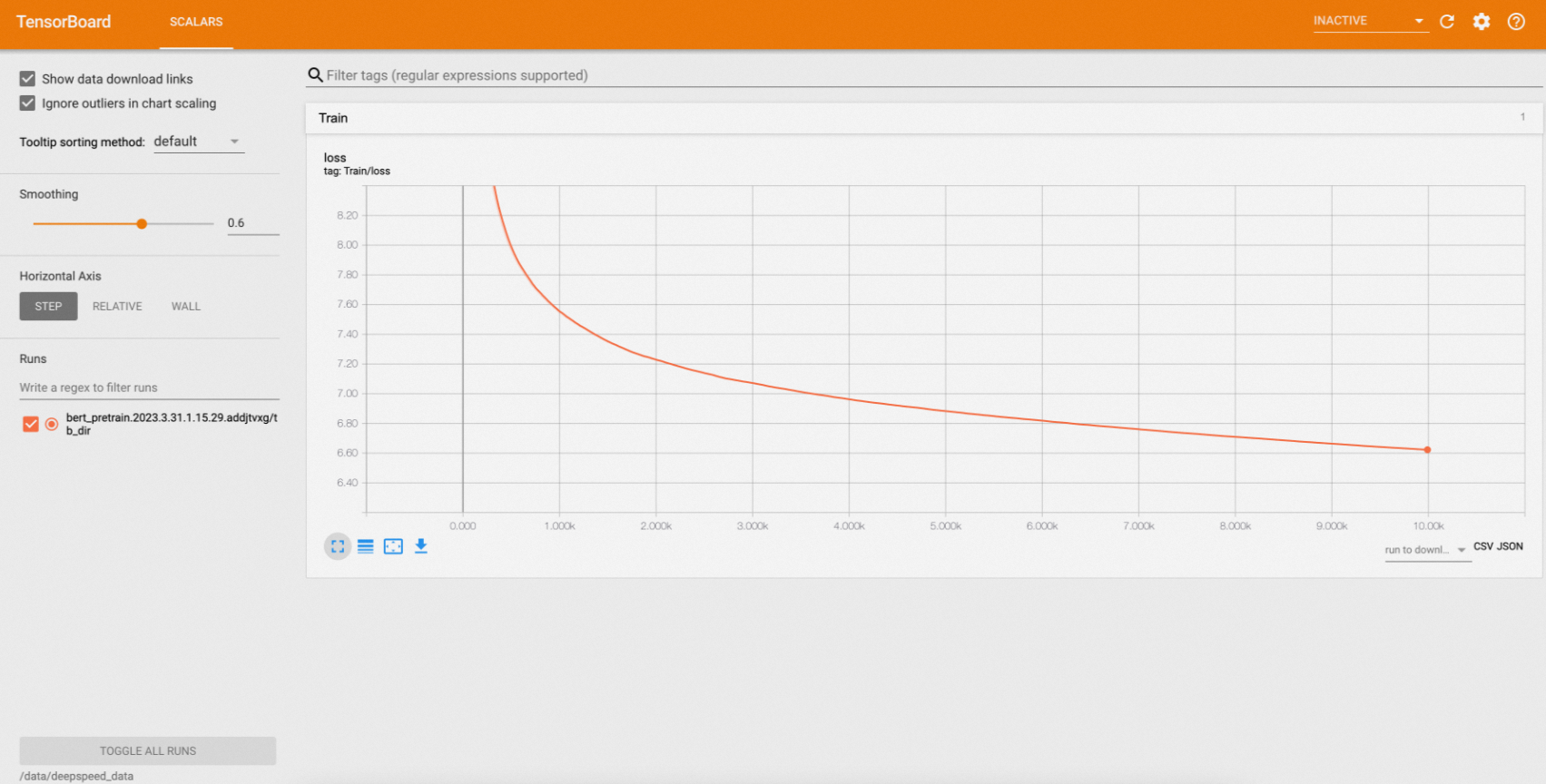
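For scripting, the job status and the TensorBoard URL can be pulled out of the arena get output. This is a sketch that assumes the text layout shown above, with a "Status:" line and a URL on the line after the TensorBoard banner. The function names job_status and tensorboard_url are illustrative.

```shell
# Print the Status field from `arena get <job>` output read on stdin.
job_status() {
    awk '$1 == "Status:" { print $2; exit }'
}

# Print the TensorBoard URL, or nothing when TensorBoard is not enabled.
# Assumes the URL is the first http(s) line after the
# "Your tensorboard will be available on:" banner shown above.
tensorboard_url() {
    awk '/^Your tensorboard will be available on:/ { seen = 1; next }
         seen && $1 ~ /^https?:\/\// { print $1; exit }'
}

# Usage (requires the Arena client):
#   arena get deepspeed-helloworld | job_status
#   arena get deepspeed-helloworld | tensorboard_url
```

An empty result from tensorboard_url is a quick way to tell that the job was submitted without --tensorboard.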

Step 5: View training results in TensorBoard

- Forward the TensorBoard service to a local port:

  kubectl port-forward svc/deepspeed-helloworld-tensorboard 9090:6006

- Open localhost:9090 in a browser.

Step 6: View training logs

Print all logs from the job:

arena logs deepspeed-helloworld

Expected output:

deepspeed-helloworld-worker-0: [2023-03-31 08:38:11,201] [INFO] [logging.py:68:log_dist] [Rank 0] step=7050, skipped=24, lr=[0.0001], mom=[(0.9, 0.999)]

deepspeed-helloworld-worker-0: [2023-03-31 08:38:11,254] [INFO] [timer.py:198:stop] 0/7050, RunningAvgSamplesPerSec=142.69733028759384, CurrSamplesPerSec=136.08094834473613, MemAllocated=0.06GB, MaxMemAllocated=1.68GB

deepspeed-helloworld-worker-0: 2023-03-31 08:38:11.255 | INFO | __main__:log_dist:53 - [Rank 0] Loss: 6.7574

deepspeed-helloworld-worker-0: [2023-03-31 08:38:13,103] [INFO] [logging.py:68:log_dist] [Rank 0] step=7060, skipped=24, lr=[0.0001], mom=[(0.9, 0.999)]

deepspeed-helloworld-worker-0: [2023-03-31 08:38:13,134] [INFO] [timer.py:198:stop] 0/7060, RunningAvgSamplesPerSec=142.69095076844823, CurrSamplesPerSec=151.8552037291255, MemAllocated=0.06GB, MaxMemAllocated=1.68GB

deepspeed-helloworld-worker-0: 2023-03-31 08:38:13.136 | INFO | __main__:log_dist:53 - [Rank 0] Loss: 6.7570

deepspeed-helloworld-worker-0: [2023-03-31 08:38:14,924] [INFO] [logging.py:68:log_dist] [Rank 0] step=7070, skipped=24, lr=[0.0001], mom=[(0.9, 0.999)]

deepspeed-helloworld-worker-0: [2023-03-31 08:38:14,962] [INFO] [timer.py:198:stop] 0/7070, RunningAvgSamplesPerSec=142.69048436022115, CurrSamplesPerSec=152.91029839772997, MemAllocated=0.06GB, MaxMemAllocated=1.68GB

deepspeed-helloworld-worker-0: 2023-03-31 08:38:14.963 | INFO | __main__:log_dist:53 - [Rank 0] Loss: 6.7565

To stream logs in real time, use -f. To print only the last N lines, use -t N:

# Stream logs in real time

arena logs deepspeed-helloworld -f

# Print the last 5 lines

arena logs deepspeed-helloworld -t 5

Expected output for -t 5:

deepspeed-helloworld-worker-0: [2023-03-31 08:47:08,694] [INFO] [launch.py:318:main] Process 80 exits successfully.

deepspeed-helloworld-worker-2: [2023-03-31 08:47:08,731] [INFO] [launch.py:318:main] Process 44 exits successfully.

deepspeed-helloworld-worker-1: [2023-03-31 08:47:08,946] [INFO] [launch.py:318:main] Process 44 exits successfully.

/opt/conda/lib/python3.8/site-packages/apex/pyprof/__init__.py:5: FutureWarning: pyprof will be removed by the end of June, 2022

warnings.warn("pyprof will be removed by the end of June, 2022", FutureWarning)

Run arena logs --help to see all available log options.
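To follow the loss without TensorBoard, the log lines can be reduced to step and loss pairs. The sketch below assumes the log format of this sample, where a "step=N" line from rank 0 comes before the matching "Loss:" line. Other training scripts log differently, and loss_by_step is an illustrative name.

```shell
# Print "<step> <loss>" pairs from `arena logs <job>` output read on stdin.
# Assumes the sample's format: a "step=N" line precedes each
# "[Rank 0] Loss: X" line, and the loss is the last field on its line.
loss_by_step() {
    awk '
        match($0, /step=[0-9]+/) { step = substr($0, RSTART + 5, RLENGTH - 5) }
        /\[Rank 0\] Loss: /      { print step, $NF }
    '
}

# Usage (requires the Arena client):
#   arena logs deepspeed-helloworld | loss_by_step
```

The two-column output can be redirected to a file and plotted with any tool that reads whitespace-separated values.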