ML training jobs that run across multiple pods need a single, consistent view of training data. Apsara File Storage NAS (NAS) provides a shared Network File System (NFS) file system that multiple pods can mount simultaneously, making it well suited for this scenario. This topic walks you through creating a NAS file system, registering it as a Kubernetes persistent volume (PV) and persistent volume claim (PVC) in your ACK cluster, and populating it with training data for use with Arena.

Before you begin

Before you begin, make sure you have:

An ACK cluster up and running

Sufficient permissions to create NAS file systems and manage ACK storage resources

An Elastic Compute Service (ECS) instance in the same Virtual Private Cloud (VPC) as your ACK cluster, used to mount and populate the NAS file system

Considerations

Review the following constraints before you start:

VPC co-location required. The NAS file system, its mount target, and your ACK cluster must all reside in the same VPC.

Protocol version affects performance. NFSv3 delivers better performance than NFSv4 when multiple ECS instances do not need to write to the same file simultaneously. Extreme NAS supports NFSv3 only.

PV capacity is used for matching only. The capacity value set on the PV and PVC is used only for PVC-to-PV matching — it does not cap the actual usable storage. The actual storage limit is determined by the NAS file system specifications.

Static PVs do not support `archiveOnDelete`. If you set the reclaim policy to Delete on a static PV, the PV and NAS data are not deleted when the PVC is deleted. Use Retain (the default) unless you are using dynamically provisioned NAS volumes.

Step 1: Create a NAS file system

Create a General-purpose NAS file system in the same region and VPC as your ACK cluster.

For step-by-step instructions, see Create a General-purpose NAS file system using the console.

When configuring the file system, use the following required values:

| Parameter | Required value |
|---|---|
| File system type | General-purpose NAS |
| Region | Same region as your ACK cluster |
| VPC | Same VPC as your ACK cluster |
| Protocol type | NFS |

Step 2: Mount the file system to an ECS instance

Mount the NAS file system to an ECS instance so you can later populate it with training data. This step uses the one-click mount feature in the NAS console. For other mount methods, see Scenarios for mounting a file system.

Mount the NAS file system

Log on to the NAS console. In the left navigation pane, choose File System > File System List.

In the top navigation bar, select the resource group and region where your file system resides.

Find the file system you created and click Mount in the Actions column.

The first time you use the mount feature, NAS prompts you to assign the AliyunServiceRoleForNasEcsHandler service-linked role. Follow the on-screen instructions to complete the authorization. For details, see Service-linked roles of NAS.

In the Mount panel, configure the mount settings:

Select a mount target and click Next.

Select an ECS instance and click Next. The ECS instance must be in the same VPC as the file system. If you cannot find the instance in the list, refresh the page and try again.

> Note: To mount a NAS file system on multiple ECS instances at once, see Mount an NFS file system on multiple ECS instances at a time.

Configure the mount parameters:

| Parameter | Description | Required | Default |
|---|---|---|---|
| Mount path | The local directory on the ECS instance where the file system will be mounted. | Yes | - |
| Automatic mount | When enabled, the file system remounts automatically when the ECS instance restarts. | No | Enabled |
| Protocol type | The NFS protocol version. General-purpose NAS supports NFSv3 and NFSv4; Extreme NAS supports NFSv3 only. Use NFSv3 for optimal performance when multiple ECS instances do not need to write to the same file simultaneously. | Yes | - |
| NAS directory | The directory in the NAS file system to mount. Enter `/` for the root directory or a subdirectory such as `/abc`. If the directory does not exist, select Confirm New Directory. You must then also set the UID, GID, and POSIX permissions for the new directory. | No | `/` (root) |
| Mount parameters | NFS mount flags. For the full parameter reference, see Mount an NFS file system on a Linux ECS instance. | No | Default mount parameters |

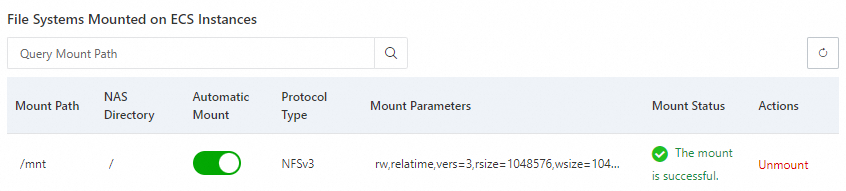

Click Complete. The mount operation takes about 1 to 2 minutes. When the mount status shows Mounted, the NAS file system is ready. If the status shows Failed, use the built-in troubleshooting tool. For guidance, see Mount an NFS file system on a Linux ECS instance.
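For reference, the one-click mount corresponds roughly to a manual NFS mount on the ECS instance. The following is a minimal sketch under stated assumptions, not the exact command the console runs: it only assembles and prints a mount command so that you can review it before running it as root. The helper name `nas_mount_cmd` and the address `your-mount-target.example.com` are hypothetical placeholders, and the options are the NFSv3 flags listed as the Mount options example in this topic.

```shell
#!/bin/sh
# Sketch: print (do not run) a manual NFSv3 mount command for a NAS mount target.
# nas_mount_cmd is an illustrative helper, not part of any Alibaba Cloud tool.

# Usage: nas_mount_cmd <mount-target-domain> <nas-directory> <local-path>
nas_mount_cmd() {
  # NFSv3 flags taken from the Mount options example in this topic.
  opts="vers=3,nolock,tcp,noresvport"
  printf 'mount -t nfs -o %s %s:%s %s\n' "$opts" "$1" "$2" "$3"
}

# Hypothetical mount target address; replace it with your own.
nas_mount_cmd "your-mount-target.example.com" "/" "/mnt"
```

Printing the command first keeps the sketch safe to run anywhere. After you replace the placeholder with your own mount target address, you can run the printed command with root privileges.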

Verify the mount

Connect to the ECS instance and run the following commands to confirm the file system is accessible:

    mkdir /mnt/dir1
    mkdir /mnt/dir2
    touch /mnt/file1
    echo 'some file content' > /mnt/file2
    ls /mnt

The output lists dir1, dir2, file1, and file2.

To view mount details or check the file system capacity, run mount -l or df -h.
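If you prefer a repeatable check over the manual commands above, a small write-and-read-back probe can confirm that a directory is usable. This is only a sketch: `check_rw` is a hypothetical helper name, and the demonstration runs against a temporary directory so that it does not depend on your mount path.

```shell
#!/bin/sh
# Sketch: confirm a directory is writable and readable, then clean up.
# check_rw is an illustrative helper name.

# Usage: check_rw <directory>
# Prints "ok" on success and returns non-zero on failure.
check_rw() {
  probe="$1/.nas-rw-probe.$$"            # $$ keeps the probe name unique per shell
  echo 'some file content' > "$probe" || return 1
  read_back=$(cat "$probe") || return 1
  rm -f "$probe"
  [ "$read_back" = 'some file content' ] && echo ok
}

# Demonstrated on a temporary directory; pass /mnt (or your own mount path) instead.
demo_dir=$(mktemp -d)
check_rw "$demo_dir"
rmdir "$demo_dir"
```

Running `check_rw /mnt` on the ECS instance exercises the same write and read path as the manual commands, without leaving test files behind.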

Each NAS file system requires a mount target to connect to an ECS instance. To add mount targets or look up existing mount target addresses, see Manage mount targets. When adding a mount target, set Mount target type to VPC and select the same VPC and vSwitch as your ACK cluster.

Step 3: Create a PV and a PVC

Register the NAS file system in your ACK cluster as a PV, then create a PVC so your pods can claim storage from it.

Create a PV

Log on to the ACK console. In the left navigation pane, click Clusters.

On the Clusters page, click the name of the target cluster. In the left navigation pane, choose Volumes > Persistent Volumes.

On the Persistent Volumes page, click Create. Configure the parameters in the dialog box and click OK.

| Parameter | Description | Required | Default | Example |
|---|---|---|---|---|
| PV type | Select NAS. | Yes | - | NAS |
| Name | A unique name for the PV within the cluster. | Yes | - | pv-nas |
| Capacity | Used only for PVC matching. It does not cap the actual usable storage. The actual storage limit is determined by the NAS file system specifications. For details, see General-purpose NAS file systems and Extreme NAS file systems. | Yes | - | 5 Gi |
| Access mode | ReadWriteMany lets multiple nodes mount the volume as read-write simultaneously. ReadWriteOnce restricts mounting to a single node. | Yes | - | ReadWriteMany |
| Enable CNFS | Whether to use Container Network File System (CNFS) for automated O&M, cache acceleration, and performance monitoring. To manage an existing NAS file system with CNFS, see Create a CNFS to manage a NAS file system (Recommended). | No | Disabled | Disabled |
| Mount target domain name | Available only when CNFS is disabled. The address of the NAS mount target. Select from previously added mount targets, or enter a custom domain name. To look up the address, see Manage mount targets. | Yes (when CNFS is disabled) | - | 0c47****-mpk25.cn-shenzhen.nas.aliyuncs.co |
| Mount path (Advanced) | The subdirectory of the NAS file system to mount. For Extreme NAS, the path must start with `/share` (for example, `/share/data`). If the directory does not exist, it is created automatically. | No | `/` (root) | `/data` |
| Reclaim policy | Retain (default): when the PVC is deleted, the PV and NAS data are preserved and must be cleaned up manually. Delete: must be used with `archiveOnDelete`. Static PVs do not support `archiveOnDelete`, so the PV and data are not deleted even with this policy set. For `archiveOnDelete` configuration, see Use dynamically provisioned NAS volumes. | No | Retain | Retain |
| Mount options | The NFS protocol version and mount flags. NFSv3 delivers better performance for ML training workloads where multiple clients read from the same dataset without concurrent writes. | No | - | nolock,tcp,noresvport<br>vers=3 |
| Label | Labels to attach to the PV. | No | - | pv-nas |

After the PV is created, it appears on the Persistent Volumes page.
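If you manage cluster resources as manifests rather than through the console, the PV settings in this step can be approximated in YAML. The sketch below writes such a manifest to `pv-nas.yaml` and does not apply it. Several values are assumptions that do not come from this topic and should be checked against your cluster: the CSI driver name `nasplugin.csi.alibabacloud.com`, the label key `alicloud-pvname`, and the placeholder mount target address.

```shell
#!/bin/sh
# Sketch: write a static NAS PV manifest that mirrors the console example values.
# Assumptions (not from this topic): the CSI driver name, the label key, and the
# placeholder mount target address. Verify them before applying the manifest.

MOUNT_TARGET="${MOUNT_TARGET:-your-mount-target.example.com}"  # hypothetical placeholder
NAS_PATH="${NAS_PATH:-/data}"   # console example value; use / to expose the whole file system

cat > pv-nas.yaml <<EOF
apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv-nas
  labels:
    alicloud-pvname: pv-nas        # label key is an assumption
spec:
  capacity:
    storage: 5Gi                   # used for PVC matching only
  accessModes:
    - ReadWriteMany
  persistentVolumeReclaimPolicy: Retain
  csi:
    driver: nasplugin.csi.alibabacloud.com   # assumed CSI driver name
    volumeHandle: pv-nas           # kept identical to the PV name
    volumeAttributes:
      server: "${MOUNT_TARGET}"
      path: "${NAS_PATH}"
  mountOptions:
    - nolock,tcp,noresvport
    - vers=3
EOF

echo "Wrote pv-nas.yaml. Review it, then run: kubectl apply -f pv-nas.yaml"
```

Set `MOUNT_TARGET` and `NAS_PATH` in the environment before you run the script to substitute your own values.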

Create a PVC

In the left navigation pane, choose Volumes > Persistent Volume Claims.

On the Persistent Volume Claims page, click Create. Configure the parameters and click OK.

| Parameter | Description | Required | Default | Example |
|---|---|---|---|---|
| PVC type | Select NAS. | Yes | - | NAS |
| Name | A unique name for the PVC within the cluster. | Yes | - | pvc-nas |
| Allocation mode | Select Use Existing Persistent Volume to bind to the PV created above. Alternatively, select Create Volume to provision a new PV inline. | Yes | - | Use Existing Persistent Volume |
| Existing volumes | Select the PV created in the previous step. | Yes (when using existing PV) | - | pv-nas |
| Capacity | The storage capacity to claim. Used only for PVC-to-PV matching and does not limit actual usable capacity. | Yes | - | 5 |
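The equivalent claim can also be sketched as a manifest. The example below writes `pvc-nas.yaml` without applying it. It binds to `pv-nas` by name through the standard Kubernetes `volumeName` field and sets an empty `storageClassName` to skip dynamic provisioning. This assumes that the PV itself has no storage class, which may not hold for a PV created through the console.

```shell
#!/bin/sh
# Sketch: write a PVC manifest that binds statically to the pv-nas volume.
# Assumption: the PV has no storage class, so an empty storageClassName matches it.

cat > pvc-nas.yaml <<'EOF'
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: pvc-nas
spec:
  accessModes:
    - ReadWriteMany
  storageClassName: ""     # empty string: bind to an existing PV, no dynamic provisioning
  volumeName: pv-nas       # the PV to bind to
  resources:
    requests:
      storage: 5Gi         # used for PVC-to-PV matching only
EOF

echo "Wrote pvc-nas.yaml. Review it, then run: kubectl apply -f pvc-nas.yaml"
```

Because neither the manifest nor the `kubectl apply` command specifies a namespace, the claim is created in the current namespace of your kubectl context. Pods can only use a PVC in their own namespace.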

Verify the PV and PVC

After creating the PVC, confirm it is bound to the PV. Connect to any node in the ACK cluster and run:

    kubectl get pvc pvc-nas

The output shows the PVC in Bound state:

    NAME      STATUS   VOLUME   CAPACITY   ACCESS MODES   STORAGECLASS   AGE
    pvc-nas   Bound    pv-nas   5Gi        RWX                           1m

If the status is Pending, check that the PV name in the PVC matches exactly and that the access modes are compatible.
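To script this check, for example before submitting jobs from a pipeline, you can read the claim's phase directly. The following sketch only defines helpers and assumes that `kubectl` is already configured for the cluster. The function names `pvc_phase` and `require_bound`, and the `default` namespace fallback, are illustrative assumptions.

```shell
#!/bin/sh
# Sketch: report whether a PVC is Bound, using kubectl's jsonpath output.
# pvc_phase and require_bound are illustrative helper names.

# Usage: pvc_phase <pvc-name> [namespace]
pvc_phase() {
  kubectl get pvc "$1" -n "${2:-default}" -o jsonpath='{.status.phase}'
}

# Usage: require_bound <pvc-name> [namespace]
# Returns 0 if the PVC is Bound; otherwise prints a hint to stderr and returns 1.
require_bound() {
  phase=$(pvc_phase "$1" "${2:-default}")
  if [ "$phase" = "Bound" ]; then
    echo "$1 is Bound"
  else
    echo "$1 is ${phase:-unknown}; check the PV name and access modes" >&2
    return 1
  fi
}
```

Calling `require_bound pvc-nas` after sourcing the script returns a non-zero status while the claim is still Pending, so a calling script can stop early.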

Step 4: Populate the PVC with data

Because the ACK cluster accesses shared data through the PVC, upload your training data directly to the NAS file system created in Step 1.

Use Workbench to connect to the ECS instance on which you mounted the NAS file system in Step 2. This can be any ECS node in the ACK cluster, provided that the file system is mounted on it. For other connection methods, see Overview of methods for connecting to ECS instances.

The NFS file system is mounted to `/mnt` (from Step 2). Create two directories to store the training datasets:

    cd /mnt/
    mkdir tf_data/
    mkdir pytorch_data/

Download the TensorFlow MNIST dataset:

    cd /mnt/tf_data
    git clone https://code.aliyun.com/xiaozhou/tensorflow-sample-code.git
    mv tensorflow-sample-code/data/* ./ && rm -rf tensorflow-sample-code

Download the PyTorch MNIST dataset:

    cd /mnt/pytorch_data
    git clone https://code.aliyun.com/370272561/mnist-pytorch.git
    mv mnist-pytorch/MNIST ./ && rm -rf mnist-pytorch

The tf_data/ directory now contains the TensorFlow MNIST training data, and pytorch_data/ contains the PyTorch MNIST data. Both directories are accessible to any pod that mounts the pvc-nas claim.
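Before you submit training jobs, it can help to confirm that both dataset directories actually contain files. The sketch below assumes the `/mnt` mount path used in this topic. The helper name `dataset_ready` and the `DATA_ROOT` variable are hypothetical.

```shell
#!/bin/sh
# Sketch: check that the dataset directories exist and are not empty.
# dataset_ready and DATA_ROOT are illustrative names.

# Usage: dataset_ready <directory>
# Succeeds only if the directory exists and contains at least one entry.
dataset_ready() {
  [ -d "$1" ] && [ -n "$(ls -A "$1" 2>/dev/null)" ]
}

# DATA_ROOT defaults to /mnt, the mount path used in this topic.
DATA_ROOT="${DATA_ROOT:-/mnt}"
for name in tf_data pytorch_data; do
  if dataset_ready "$DATA_ROOT/$name"; then
    echo "$name: ready"
  else
    echo "$name: missing or empty"
  fi
done
```

A "missing or empty" result usually means that a `git clone` or `mv` command failed, or that the commands ran in a different directory than intended.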

What's next

Submit an Arena training job that uses the `--data` parameter to mount `pvc-nas`.

To manage additional users, create separate PVCs pointing to different subdirectories of the same NAS file system. For example, the PVCs for User A and User B can point to the same NAS file system but must reference different subdirectories to isolate their working environments.

To enhance NAS performance and automate O&M for production workloads, consider enabling CNFS.
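As an illustration of the first item, an Arena submission that mounts the claim might be wrapped as follows. This is a sketch, not a tested job definition. The helper name `submit_with_nas` and the container path `/data` are assumptions, and the image and training command are left for you to supply.

```shell
#!/bin/sh
# Sketch: wrap an Arena TensorFlow job submission that mounts the pvc-nas claim.
# Assumptions (not from this topic): the helper name submit_with_nas, the
# container path /data, and whatever image and command you pass in.

# Usage: submit_with_nas <job-name> <image> <training-command>
submit_with_nas() {
  # --data takes <pvc-name>:<path-inside-the-container>
  arena submit tf \
    --name="$1" \
    --image="$2" \
    --data=pvc-nas:/data \
    "$3"
}
```

After sourcing the script, you would call the helper as `submit_with_nas <job-name> <image> "<training command>"`. Inside the job, the datasets from Step 4 would then appear under `/data`, assuming the PV mounts the NAS directory that contains them.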