During rolling updates, pod termination and traffic routing rule updates start in parallel—not sequentially. This race condition can cause 502 or 504 errors if a pod stops serving before the ALB Ingress controller finishes removing it from the backend server group. A preStop hook delays the SIGTERM signal long enough for the ALB Ingress controller to complete deregistration first, eliminating service interruptions.

Prerequisites

Before you begin, make sure you have:

- An ACK managed cluster or ACK dedicated cluster running Kubernetes 1.18 or later. See Create an ACK managed cluster or Create an ACK dedicated cluster (discontinued). To upgrade an existing cluster, see Manually upgrade ACK clusters.

- The ALB Ingress controller installed in the cluster. See Manage the ALB Ingress controller.

To use an ALB Ingress with an ACK dedicated cluster, grant the cluster the permissions required by the ALB Ingress controller first. See Authorize an ACK dedicated cluster to access the ALB Ingress controller.

How it works

Pod lifecycle

A pod contains two types of containers:

- Init container: Runs before the main container to complete initialization operations.

- Main container: Runs the application process. After the container starts, the postStart hook runs. During the container's lifetime, the system performs liveness and readiness checks. Before the container terminates, the preStop hook runs.

The following figure shows the pod lifecycle.

Why errors occur: two procedures run in parallel

When you delete a pod, two procedures start simultaneously. This parallel execution is the root cause of 502 and 504 errors during rolling updates.

Pod termination procedure

- kube-apiserver marks the pod as Terminating.

- The system runs the preStop hook, if configured.

- The cluster sends a SIGTERM signal to the container.

- The system waits for the container to stop or for the grace period to expire. The default grace period (terminationGracePeriodSeconds) is 30 seconds.

- If the pod is still running after the grace period, the kubelet waits 2 additional seconds and sends a SIGKILL signal.

- The pod is deleted.

Traffic routing rule update procedure

- kube-apiserver marks the pod as Terminating.

- The endpoint controller removes the pod's IP address from the endpoint.

- The ALB Ingress controller reconciles the backend servers and removes the Service endpoint from the backend server group.

Because these two procedures run in parallel, the ALB Ingress controller may not finish reconciling before the pod shuts down. If that happens, traffic continues routing to a pod that is already terminating.

How a preStop hook prevents errors

Without a preStop hook, you may see the following errors during rolling updates:

| Status code | Cause |
|---|---|
| 504 | The pod was processing a non-idempotent request when it was deleted. |
| 502 | The pod was deleted after receiving the SIGTERM signal, but the ALB Ingress controller had not yet finished reconciling, so the pod still existed in the backend server group when the next request arrived. |

A preStop hook adds a sleep delay that starts as soon as kube-apiserver marks the pod as Terminating. This gives the ALB Ingress controller time to deregister the pod from the backend server group before the pod receives the SIGTERM signal.
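The reasoning above comes down to a comparison between two durations: how long deregistration takes and how long the preStop hook sleeps. The following is a minimal sketch of that comparison, not a measurement tool. The function name error_window and the 6-second deregistration time are hypothetical, and the model assumes the application stops serving as soon as it receives SIGTERM.

```shell
#!/bin/bash
# error_window DEREGISTER_SECONDS PRESTOP_SECONDS
# Prints the number of seconds during which the ALB could still forward
# requests to a pod that has already received SIGTERM.
# Assumption: the application stops serving immediately on SIGTERM.
error_window() {
  local deregister=$1 prestop=$2
  local gap=$((deregister - prestop))
  if [ "$gap" -lt 0 ]; then
    gap=0
  fi
  echo "$gap"
}

# Hypothetical numbers: deregistration takes 6 seconds.
error_window 6 0    # no preStop hook: prints 6
error_window 6 10   # sleep 10 preStop hook: prints 0
```

In this model, any sleep at least as long as the deregistration time closes the window. The real deregistration time depends on your environment, so treat the chosen sleep value as something to verify with a test such as the one in Step 2.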

Understanding the time budget

The preStop sleep and the container's shutdown time share the same grace period (terminationGracePeriodSeconds). For example, with a sleep 10 preStop hook and a program shutdown time of 5 seconds, set terminationGracePeriodSeconds to at least 15 seconds—plus a safety margin. The example in this topic uses sleep 10 and terminationGracePeriodSeconds: 45.

If the sum of the preStop duration and the program shutdown time exceeds terminationGracePeriodSeconds, the graceful shutdown times out. After the grace period ends, the kubelet waits 2 seconds and sends a SIGKILL signal to forcefully terminate the pod. Increase terminationGracePeriodSeconds to ensure enough time for both operations.
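The time budget can be checked with simple arithmetic before you deploy. The sketch below is illustrative only: the function name check_grace_period and the default 5-second safety margin are assumptions, not values prescribed by this topic.

```shell
#!/bin/bash
# check_grace_period PRESTOP_SECONDS SHUTDOWN_SECONDS GRACE_SECONDS [MARGIN_SECONDS]
# Succeeds if the grace period covers the preStop sleep, the program
# shutdown time, and a safety margin (assumed default: 5 seconds).
check_grace_period() {
  local prestop=$1 shutdown=$2 grace=$3 margin=${4:-5}
  local required=$((prestop + shutdown + margin))
  if [ "$grace" -ge "$required" ]; then
    echo "ok: need ${required}s, have ${grace}s"
  else
    echo "too short: need ${required}s, have ${grace}s"
    return 1
  fi
}

# Values from this topic: sleep 10, a 5-second shutdown, and a 45-second grace period.
check_grace_period 10 5 45   # prints "ok: need 20s, have 45s"
```

A grace period that fails this check means the kubelet may send SIGKILL before the application finishes shutting down.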

Step 1: Deploy the application with a preStop hook

- Create tea-service.yaml with the following content. The lifecycle.preStop field configures the hook.

      apiVersion: apps/v1
      kind: Deployment
      metadata:
        name: tea
      spec:
        replicas: 3
        selector:
          matchLabels:
            app: tea
        template:
          metadata:
            labels:
              app: tea
          spec:
            containers:
            - name: tea
              image: registry.cn-hangzhou.aliyuncs.com/acs-sample/nginxdemos:latest
              ports:
              - containerPort: 80
              lifecycle:
                preStop:   # Delay SIGTERM by 10 seconds to allow the ALB Ingress controller
                  exec:    # to finish deregistering the pod from the backend server group.
                    command:
                    - /bin/sh
                    - -c
                    - "sleep 10"
            terminationGracePeriodSeconds: 45  # Must exceed preStop duration + program shutdown time.
      ---
      apiVersion: v1
      kind: Service
      metadata:
        name: tea-svc
      spec:
        ports:
        - port: 80
          targetPort: 80
          protocol: TCP
        selector:
          app: tea
        type: NodePort

- Deploy the Deployment and Service.

      kubectl apply -f tea-service.yaml

- Create tea-ingress.yaml with the following content.

      apiVersion: networking.k8s.io/v1
      kind: Ingress
      metadata:
        name: tea-ingress
      spec:
        ingressClassName: alb
        rules:
        - host: demo.ingress.top
          http:
            paths:
            - path: /
              pathType: Prefix
              backend:
                service:
                  name: tea-svc
                  port:
                    number: 80

- Create the Ingress.

      kubectl apply -f tea-ingress.yaml

- Get the ALB address assigned to the Ingress.

      kubectl get ingress

  Expected output:

      NAME          CLASS   HOSTS              ADDRESS                                              PORTS   AGE
      tea-ingress   alb     demo.ingress.top   alb-110zvs5nhsvfv*****.cn-chengdu.alb.aliyuncs.com   80      7m5s

  Note the ADDRESS value. You will need it in the next step.
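Before running the test, it can be worth confirming that the hook and the grace period actually reached the Deployment spec. The sketch below reads both fields with kubectl's JSONPath output. The function name show_prestop_config and the KUBECTL override variable are hypothetical helpers added here for illustration (the override only makes the function easy to dry-run), and the default name tea assumes the manifest from this step.

```shell
#!/bin/bash
# show_prestop_config [DEPLOYMENT]
# Prints the preStop exec command of the first container, then the pod's
# terminationGracePeriodSeconds, each on its own line.
# KUBECTL is a hypothetical override for dry runs; it defaults to kubectl.
show_prestop_config() {
  local deploy=${1:-tea}
  "${KUBECTL:-kubectl}" get deployment "$deploy" \
    -o jsonpath='{.spec.template.spec.containers[0].lifecycle.preStop.exec.command}{"\n"}{.spec.template.spec.terminationGracePeriodSeconds}{"\n"}'
}
```

Run show_prestop_config tea against the cluster. An empty first line would suggest that the lifecycle.preStop field was not applied, for example because of an indentation mistake in the manifest.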

Step 2: Verify that the preStop hook prevents service interruptions

-

Create

test.shwith the following content. The script sends one request per second to the NGINX service and records each HTTP status code.#!/bin/bash HOST="demo.ingress.top" DNS="alb-110zvs5nhsvfv*****.cn-chengdu.alb.aliyuncs.com" # Replace with your ADDRESS value. printf "Response Code|| TIME \n" >> log.txt while true; do RESPONSE=$(curl -H Host:$HOST -s -o /dev/null -w "%{http_code}" -m 1 http://$DNS/) TIMESTAMP=$(date +%Y-%m-%d_%H:%M:%S) echo "$TIMESTAMP - $RESPONSE" >> log.txt sleep 1 done -

Run the script.

bash test.sh -

Trigger a rolling restart.

kubectl rollout restart deploy tea -

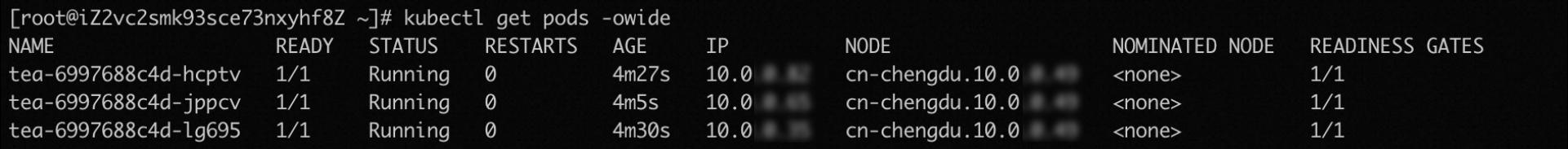

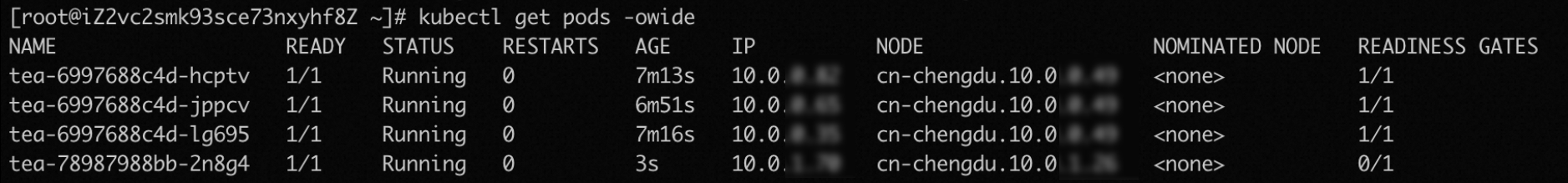

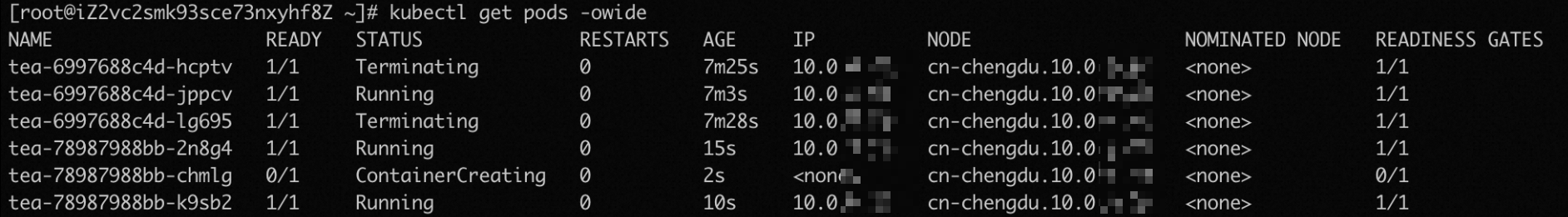

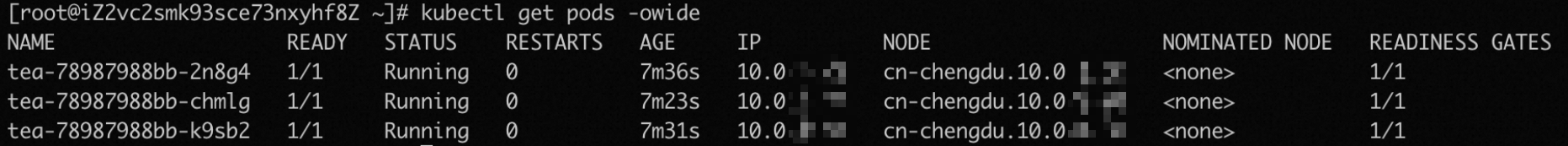

Watch the rollout progress. The following screenshots show the expected pod states during the update. Before the rolling update starts, three replicas are running. When new pods are not yet ready, two old pods continue serving traffic. After the new pods are added to the backend server group, the old pods begin terminating. After all old pods finish the preStop hook (or the grace period expires), the kubelet sends the termination signal and the rolling update completes.

-

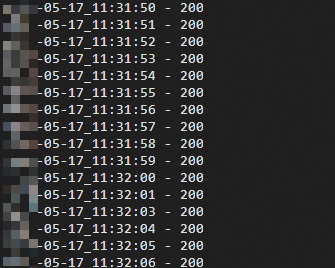

Check the test log. Every request should return 200, confirming no service interruption occurred during the rolling update.

cat log.txtExpected output:
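Scanning a long log by eye is error-prone, so a small filter can help. The sketch below assumes the log format written by test.sh, where each data line ends in " - " followed by a three-digit status code. The function names count_non_200 and list_non_200 are hypothetical.

```shell
#!/bin/bash
# count_non_200 LOGFILE
# Prints how many logged requests returned a status other than 200.
# Lines that do not end in " - <three digits>" (such as the header) are ignored.
count_non_200() {
  awk -F' - ' '$NF ~ /^[0-9][0-9][0-9]$/ && $NF != "200" { n++ } END { print n + 0 }' "$1"
}

# list_non_200 LOGFILE
# Prints the matching log lines so you can see when the failures happened.
list_non_200() {
  awk -F' - ' '$NF ~ /^[0-9][0-9][0-9]$/ && $NF != "200"' "$1"
}
```

Run count_non_200 log.txt after the rollout finishes. A result of 0 means every logged request returned 200. Note that curl reports 000 when a request times out or the connection fails, and these entries are counted as failures too.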

What's next

- For advanced routing scenarios, such as HTTP-to-HTTPS redirects and canary releases, see Advanced ALB Ingress configurations.

- To make sure pods pass readiness checks before they receive traffic during rolling updates, see Use readiness gates to seamlessly launch pods that are associated with ALB Ingresses during rolling updates.

- If you run into issues, see ALB Ingress FAQ and ALB Ingress controller troubleshooting.