Container Service for Kubernetes (ACK) supports GPU scheduling and operations management using the standard Kubernetes extended resource request model. This topic deploys a GPU-accelerated TensorFlow job to verify that your cluster schedules GPU workloads correctly.

Prerequisites

Before you begin, ensure that you have:

- An ACK cluster with at least one GPU node
- kubectl configured to connect to the cluster
- Access to the ACK console

Avoid bypassing standard GPU resource requests

For GPU nodes managed by an ACK cluster, request GPU resources only through the standard Kubernetes extended resource mechanism (`nvidia.com/gpu` in the `resources.limits` field). The following actions bypass this mechanism and introduce security risks:

- Running GPU applications directly on nodes
- Using `docker`, `podman`, or `nerdctl` to create containers or request GPU resources (for example, `docker run --gpus all` or `docker run -e NVIDIA_VISIBLE_DEVICES=all`)
- Adding `NVIDIA_VISIBLE_DEVICES=all` or `NVIDIA_VISIBLE_DEVICES=<GPU ID>` to the `env` section of a pod's YAML file
- Using the `NVIDIA_VISIBLE_DEVICES` environment variable to directly request GPU resources for a pod
- Defaulting `NVIDIA_VISIBLE_DEVICES` to `all` in a container image when the variable is not set in the pod's YAML file
- Setting `privileged: true` in the pod's `securityContext` and running a GPU program

Why it matters: GPU resources obtained through these non-standard methods are not recorded in the scheduler's resource tracking. When the actual GPU allocation on a node differs from what the scheduler tracks, the scheduler can assign additional GPU workloads to the same node, and the resulting contention for the same GPU card can cause service failures. These methods may also trigger known errors reported by the NVIDIA community.
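Two of the patterns listed above are visible in a pod manifest, so they can be caught before deployment. The following is a minimal sketch, not an ACK feature: `find_gpu_bypass_risks` is a hypothetical helper that takes a pod manifest already loaded as a Python dictionary and flags an explicit `NVIDIA_VISIBLE_DEVICES` entry or `privileged: true` on a container. It cannot detect defaults baked into a container image or programs started directly on a node.

```python
from typing import Any


def find_gpu_bypass_risks(pod: dict[str, Any]) -> list[str]:
    """Return findings for manifest patterns that bypass nvidia.com/gpu requests."""
    findings = []
    spec = pod.get("spec") or {}
    containers = (spec.get("containers") or []) + (spec.get("initContainers") or [])
    for container in containers:
        name = container.get("name", "<unnamed>")
        # An explicit NVIDIA_VISIBLE_DEVICES entry exposes GPUs without scheduler accounting.
        for env in container.get("env") or []:
            if env.get("name") == "NVIDIA_VISIBLE_DEVICES":
                findings.append(f"{name}: sets NVIDIA_VISIBLE_DEVICES in env")
        # A privileged container can reach GPU devices without requesting them.
        security = container.get("securityContext") or {}
        if security.get("privileged") is True:
            findings.append(f"{name}: runs with privileged: true")
    return findings


# Hypothetical manifest used only to exercise the check.
example_pod = {
    "spec": {
        "containers": [
            {
                "name": "trainer",
                "env": [{"name": "NVIDIA_VISIBLE_DEVICES", "value": "all"}],
                "securityContext": {"privileged": True},
            }
        ]
    }
}

for finding in find_gpu_bypass_risks(example_pod):
    print(finding)
```

A privileged container is not always a GPU workload, so treat that finding as a prompt for review and not as proof of misuse.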

Verify GPU availability

Before deploying a workload, confirm that your GPU node exposes GPU capacity to the Kubernetes scheduler.

- List nodes in the cluster:

  ```
  kubectl get nodes
  ```

- Describe a GPU node to check its capacity:

  ```
  kubectl describe node <gpu-node-name>
  ```

  In the Capacity section, `nvidia.com/gpu` must show a non-zero value:

  ```
  Capacity:
    nvidia.com/gpu: 1
  ```

  If `nvidia.com/gpu` is missing or shows `0`, the GPU device plugin may not be running correctly on that node. Possible causes include the NVIDIA device plugin DaemonSet not being deployed, driver issues, or node configuration problems. Resolve the underlying issue before proceeding.
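In a cluster with many nodes, the capacity check above can be run against all nodes at once. The sketch below assumes you have saved or piped the output of `kubectl get nodes -o json`. The helper name `gpu_capacity_by_node` and the sample node names are illustrative and not part of ACK or kubectl.

```python
import json


def gpu_capacity_by_node(node_list: dict) -> dict[str, int]:
    """Map each node name to its nvidia.com/gpu capacity (0 if the resource is absent)."""
    result = {}
    for node in node_list.get("items", []):
        name = node["metadata"]["name"]
        capacity = node.get("status", {}).get("capacity", {})
        # Extended resource quantities are whole numbers serialized as strings, e.g. "1".
        result[name] = int(capacity.get("nvidia.com/gpu", "0"))
    return result


# Hypothetical, heavily trimmed sample of `kubectl get nodes -o json` output.
sample = json.loads("""
{"items": [
  {"metadata": {"name": "gpu-node-1"},
   "status": {"capacity": {"cpu": "8", "nvidia.com/gpu": "1"}}},
  {"metadata": {"name": "cpu-node-1"},
   "status": {"capacity": {"cpu": "4"}}}
]}
""")

for name, gpus in gpu_capacity_by_node(sample).items():
    if gpus:
        print(f"{name}: {gpus} GPU(s)")
    else:
        print(f"{name}: no GPU capacity reported")
```

To run it against a live cluster, replace the sample with `json.load(sys.stdin)` and pipe the kubectl output into the script.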

Deploy a GPU application

-

Log on to the ACK console. In the left navigation pane, click Clusters.

-

On the Clusters page, click the name of the target cluster. In the left navigation pane, choose Workloads > Deployments.

-

On the Deployments page, click Create from YAML and paste the following manifest:

apiVersion: v1 kind: Pod metadata: name: tensorflow-mnist namespace: default spec: containers: - image: registry.cn-beijing.aliyuncs.com/acs/tensorflow-mnist-sample:v1.5 name: tensorflow-mnist command: - python - tensorflow-sample-code/tfjob/docker/mnist/main.py - --max_steps=100000 - --data_dir=tensorflow-sample-code/data resources: limits: nvidia.com/gpu: 1 # Request one GPU card for this container. workingDir: /root restartPolicy: AlwaysGPU resources are only declared in

limits, notrequests. This is a Kubernetes constraint specific to GPU and other extended resources: you cannot setrequestswithout a matchinglimitsfor GPU. The scheduler uses thelimitsvalue as the effective request. -

In the left navigation pane, choose Workloads > Pods. Find the pod you created and click its name.

-

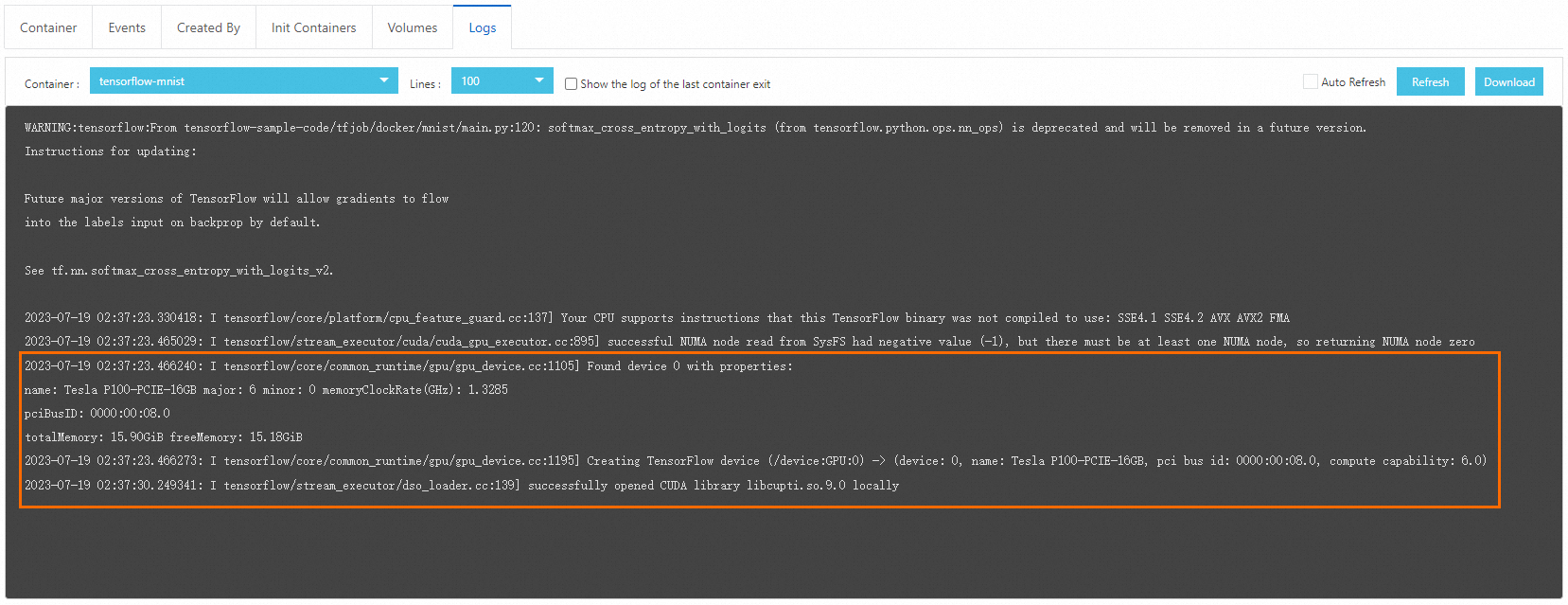

Click the Logs tab. It may take a few minutes for the image to pull and the pod to start. When the pod is running, the log output confirms that the job is using the GPU correctly.
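The `limits` and `requests` rule for GPUs described in the manifest step can be expressed as a small function. This is an illustrative sketch of the rule only, not the validation code that Kubernetes itself runs. `effective_gpu_request` is a hypothetical name, and the input is the `resources` block of one container loaded as a dictionary.

```python
GPU_RESOURCE = "nvidia.com/gpu"


def effective_gpu_request(resources: dict) -> int:
    """Return the GPU count the scheduler accounts for, or raise ValueError."""
    limits = resources.get("limits") or {}
    requests = resources.get("requests") or {}
    limit = limits.get(GPU_RESOURCE)
    request = requests.get(GPU_RESOURCE)
    if limit is None and request is None:
        return 0  # The container does not ask for a GPU.
    if limit is None:
        raise ValueError("GPU requests need a matching limits entry")
    if request is not None and int(request) != int(limit):
        raise ValueError("GPU requests and limits must be equal")
    return int(limit)  # With no explicit request, the limit serves as the request.


# The resources block from the manifest above: limits only.
print(effective_gpu_request({"limits": {GPU_RESOURCE: 1}}))  # prints 1
```

Passing a block that sets only `requests`, or one where the two values differ, raises `ValueError`, which mirrors the manifests that would be rejected.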

What's next

- To schedule pods to specific GPU node types in a heterogeneous cluster, use node labels and node selectors. Label GPU nodes with the accelerator type (for example, `kubectl label nodes <node-name> accelerator=<gpu-model>`), then add a `nodeSelector` to your pod spec.
- To explore advanced GPU scheduling options in ACK, such as GPU sharing and isolation, see the ACK GPU scheduling documentation.
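As a sketch of the node selector approach mentioned in the first item, the following adds a `nodeSelector` entry to a pod manifest held as a dictionary and prints the result as JSON, which `kubectl apply -f` also accepts. The helper `with_node_selector` is hypothetical, the manifest is trimmed (it omits the image and command), and `example-gpu-model` is a placeholder for whatever label value you assigned to your nodes.

```python
import copy
import json


def with_node_selector(pod: dict, label_key: str, label_value: str) -> dict:
    """Return a copy of the pod manifest with one nodeSelector entry added."""
    updated = copy.deepcopy(pod)  # Leave the caller's manifest untouched.
    selector = updated.setdefault("spec", {}).setdefault("nodeSelector", {})
    selector[label_key] = label_value
    return updated


# Trimmed version of the tensorflow-mnist pod; image and command are omitted here.
pod = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "tensorflow-mnist", "namespace": "default"},
    "spec": {
        "containers": [
            {
                "name": "tensorflow-mnist",
                "resources": {"limits": {"nvidia.com/gpu": 1}},
            }
        ]
    },
}

# "example-gpu-model" is a placeholder for the value used with `kubectl label`.
pinned = with_node_selector(pod, "accelerator", "example-gpu-model")
print(json.dumps(pinned, indent=2))
```

The pod still requests its GPU through `nvidia.com/gpu` in `limits`. The selector only narrows which labeled nodes are eligible.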