Resource profiling analyzes historical CPU and memory usage to recommend right-sized container resource requests. Instead of adjusting requests based on intuition and stress tests, you get data-driven recommendations that reduce resource waste while maintaining workload stability.

This feature is in public preview and ready to use. This topic shows how to enable and use resource profiling from the ACK console and the command line.

How it works

When resource profiling is enabled for a workload, ack-koordinator continuously collects container resource usage samples. After at least 24 hours of data, it generates a profiled value for each container using:

- CPU: P95 percentile of usage samples + safety margin
- Memory: P99 percentile of usage samples + safety margin

The algorithm uses a half-life sliding window over the last 14 days, so older samples carry less weight. It also factors in out-of-memory (OOM) events to improve memory accuracy.
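The weighting idea can be illustrated with a small sketch. It assumes exponential decay with a hypothetical 24-hour half-life (the actual half-life is not documented here) and a plain weighted percentile over raw samples; ack-koordinator's real implementation may differ, and the safety margin is left out because its form is not specified. The function names are illustrative only.

```python
def decay_weight(age_hours: float, half_life_hours: float = 24.0) -> float:
    """Weight of a sample that is `age_hours` old; it halves every half-life.

    The 24-hour default is an assumption made for this illustration.
    """
    return 0.5 ** (age_hours / half_life_hours)


def weighted_percentile(samples, percentile, half_life_hours=24.0,
                        window_hours=14 * 24):
    """Return the smallest usage whose cumulative decayed weight reaches
    `percentile` (0..1) of the total weight.

    samples: iterable of (age_hours, usage) pairs.
    """
    weighted = sorted(
        (usage, decay_weight(age, half_life_hours))
        for age, usage in samples
        if age <= window_hours  # ignore samples outside the 14-day window
    )
    if not weighted:
        raise ValueError("no samples inside the window")
    threshold = percentile * sum(weight for _, weight in weighted)
    cumulative = 0.0
    for usage, weight in weighted:
        cumulative += weight
        if cumulative >= threshold:
            return usage
    return weighted[-1][0]


# Ten recent samples at 1.0 core and one 10-day-old spike at 5.0 cores.
samples = [(0.0, 1.0)] * 10 + [(240.0, 5.0)]
print(weighted_percentile(samples, 0.95))  # the old spike barely counts
```

With decay, the old spike carries about one thousandth of the weight of a fresh sample, so the P95 stays at the recent usage level; without decay, the same spike would set the P95.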

The profiled value is then compared to the current resource request to generate one of three suggestions:

| Suggestion | Condition |
|---|---|
| Upgrade | Profiled value > current request. The container has been overusing resources, which poses a stability risk. |
| Downgrade | Target = Profiled value × (1 + Buffer). Degree = 1 − (Request / Target). If the absolute value of Degree is greater than 0.1, a downgrade is suggested. |
| Keep | All other cases. No adjustment needed. |

The drift percentage shown in the console is calculated as: |Profiled value − Requested value| / Requested value.
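The rules above can be written out as a short sketch. It takes the table literally and checks the conditions in the order listed; the function name and the `threshold` parameter are illustrative, and the console may apply additional rules that are not described here.

```python
def classify(request: float, profiled: float, buffer: float,
             threshold: float = 0.1):
    """Return (suggestion, drift) for one container resource.

    request  -- current resource request (for example, CPU cores)
    profiled -- profiled value in the same unit
    buffer   -- configured buffer as a fraction, for example 0.3 for 30%
    """
    if request <= 0 or profiled <= 0:
        raise ValueError("request and profiled value must be positive")
    drift = abs(profiled - request) / request
    if profiled > request:
        return "Upgrade", drift
    target = profiled * (1 + buffer)
    degree = 1 - request / target
    if abs(degree) > threshold:
        return "Downgrade", drift
    return "Keep", drift


# Request of 8 cores, profiled value of 4.742 cores, 30% buffer.
suggestion, drift = classify(8, 4.742, 0.3)
print(suggestion, f"{drift:.1%}")
```

For this input the target is about 6.16 cores, the degree is about −0.30, and the drift is about 40.7%, so the sketch reports a downgrade.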

The profiled value is a statistical summary of current resource demand, not a ready-to-apply specification. Before applying it, consider your workload's characteristics:

- For workloads that must handle sudden traffic spikes or maintain an active-active architecture, add buffer beyond the profiled value.
- For resource-sensitive workloads that cannot run stably under high host load, increase the specifications beyond the profiled value. Use the CPU Redundancy Rate or CPU Consumption Buffer setting to incorporate this headroom automatically into the suggested target.

Prerequisites

Before you begin, ensure that you have:

- An ACK Pro cluster
- ack-koordinator v0.7.1 or later installed (formerly ack-slo-manager). For installation and upgrade instructions, see ack-koordinator.
- metrics-server v0.3.8 or later installed

If a node uses containerd as its container runtime and was added to the cluster before 14:00 on January 19, 2022, re-add the node or upgrade the cluster to the latest version before using this feature. For details, see Add existing nodes and Manually upgrade a cluster.

Supported workload types

Resource profiling is designed for online service applications: Deployment, StatefulSet, and DaemonSet. Treat the results with caution for the following workload patterns:

- Offline batch jobs: The profiling algorithm sizes for peak availability. Batch workloads prioritize throughput and can tolerate contention, so the profiled values may appear conservative. Review carefully before applying.
- Active-passive workloads: Idle replica pods produce near-zero usage samples that skew profiled values downward. Review carefully before applying.

Billing

ack-koordinator is free to install and use. Charges may apply in two scenarios:

- Worker node resources: ack-koordinator runs as a self-managed component that consumes worker node resources. Configure the resource requests for each module during installation to control this consumption.
- Prometheus metrics: By default, ack-koordinator exposes resource profiling metrics in Prometheus format. If you select Enable Prometheus Monitoring for ACK-Koordinator and use Managed Service for Prometheus, these metrics are reported as basic metrics. Changing the default storage duration or other settings may incur additional charges. For details, see Billing of Managed Service for Prometheus.

Use resource profiling in the console

Step 1: Enable resource profiling

- Log on to the ACK console. In the left navigation pane, click Clusters.
- On the Clusters page, click the name of the target cluster. In the left-side pane, choose Cost Suite > Cost Optimization.
- On the Cost Optimization page, click the Resource Profiling tab and follow the on-screen instructions to enable the feature.
  - Component installation: Follow the prompts to install or upgrade ack-koordinator. First-time users must install the component.

    Note: If the installed version of ack-koordinator is earlier than 0.7.0, migrate and upgrade it first. For details, see Migrate ack-koordinator from the marketplace to the component center.
  - Initial profiling configuration: After installation or upgrade, select Default Settings to configure the initial profiling scope. This is the recommended starting point. Adjust the settings later by clicking Profiling Configuration in the console.
- Click Enable Resource Profiling to go to the Resource Profiling tab.

Step 2: Configure profiling policies

Profiling configuration controls which workloads are profiled and how the recommendations are calculated. Two modes are available.

- On the Resource Profiling tab, click Profiling Configuration.
- Select a configuration mode, adjust parameters as needed, then click OK.

Global Configuration (recommended)

Enables resource profiling for all workloads in the cluster, excluding the specified namespaces. This is the default mode and the recommended starting point for most clusters.

| Parameter | Description | Value range |
|---|---|---|
| Excluded Namespaces | Namespaces excluded from profiling. The final profiling scope is the intersection of non-excluded namespaces and the selected workload types. | Existing namespaces in the cluster. Defaults to kube-system and arms-prom. Multiple namespaces can be selected. |
| Workload Type | The workload types to include in profiling. The final scope is the intersection of the selected types and non-excluded namespaces. | Deployment, StatefulSet, DaemonSet. Multiple types can be selected. |
| CPU Redundancy Rate | The safety buffer added when generating CPU recommendations. A higher value produces more conservative recommendations. See Choosing a buffer value. | Non-negative number. Common values: 70%, 50%, 30%. |
| Memory Redundancy Rate | The safety buffer added when generating memory recommendations. A higher value produces more conservative recommendations. See Choosing a buffer value. | Non-negative number. Common values: 70%, 50%, 30%. |

Automated O&M

Enables resource profiling only for workloads in specific namespaces. Use this mode for large clusters (for example, clusters with more than 1,000 nodes) or when you want to profile only a subset of workloads.

| Parameter | Description | Value range |
|---|---|---|
| Enabled Namespaces | Namespaces included in profiling. The final scope is the intersection of these namespaces and the selected workload types. | Existing namespaces in the cluster. Multiple namespaces can be selected. |
| Enabled Workload Types | The workload types to include in profiling. The final scope is the intersection of the selected types and enabled namespaces. | Deployment, StatefulSet, DaemonSet. Multiple types can be selected. |
| CPU Consumption Buffer | The safety buffer added when generating CPU recommendations. A higher value produces more conservative recommendations. See Choosing a buffer value. | Non-negative number. Common values: 70%, 50%, 30%. |
| Memory Consumption Buffer | The safety buffer added when generating memory recommendations. A higher value produces more conservative recommendations. See Choosing a buffer value. | Non-negative number. Common values: 70%, 50%, 30%. |
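The scope rules of the two modes reduce to a set intersection. The sketch below is only an illustration of that logic: the mode labels `"exclude"` and `"include"` and the function name are hypothetical, not console or API identifiers.

```python
SUPPORTED_KINDS = {"Deployment", "StatefulSet", "DaemonSet"}


def in_scope(namespace: str, kind: str, *, mode: str,
             namespaces: set, kinds: set) -> bool:
    """Decide whether a workload falls inside the profiling scope.

    mode="exclude": `namespaces` lists excluded namespaces (cluster-wide mode).
    mode="include": `namespaces` lists enabled namespaces (namespace-scoped mode).
    """
    if kind not in SUPPORTED_KINDS or kind not in kinds:
        return False
    if mode == "exclude":
        return namespace not in namespaces
    if mode == "include":
        return namespace in namespaces
    raise ValueError(f"unknown mode: {mode}")


# Cluster-wide profiling with the default excluded namespaces.
defaults = {"kube-system", "arms-prom"}
print(in_scope("default", "Deployment", mode="exclude",
               namespaces=defaults, kinds={"Deployment", "StatefulSet"}))
```

A workload is profiled only when both conditions hold: its type is selected and its namespace passes the include or exclude check.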

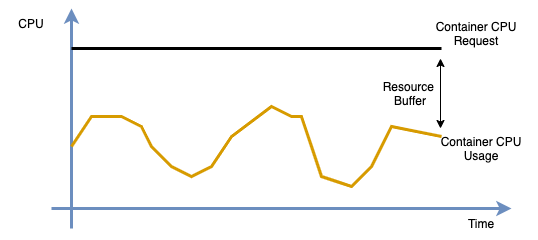

Choosing a buffer value

The buffer accounts for the gap between measured peak usage and the resource capacity that applications actually need. Physical resource constraints (such as hyper-threading) and traffic burst headroom mean that applications should not be sized to run at 100% utilization. A downgrade suggestion is generated only when the current request deviates from the buffered target by more than 10%, as described in the How it works section.

| Buffer value | Suitable for |
|---|---|
| 70% | Latency-sensitive or traffic-variable services that need large headroom |
| 50% | General-purpose services balancing cost savings and stability |
| 30% | Stable, predictable workloads where you want to maximize resource savings |

For the full target calculation, see the How it works section.
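To see how the buffer choice moves the target, the following sketch applies Target = Profiled value × (1 + Buffer) to a profiled value of 4.742 cores, the figure used in this topic's sample workload. The function name is illustrative.

```python
def suggested_target(profiled: float, buffer: float) -> float:
    """Target = profiled value x (1 + buffer); buffer is a fraction (0.3 = 30%)."""
    if buffer < 0:
        raise ValueError("buffer must be non-negative")
    return profiled * (1 + buffer)


for buffer in (0.7, 0.5, 0.3):
    target = suggested_target(4.742, buffer)
    print(f"buffer {buffer:.0%}: target {target:.3f} cores")
# buffer 70%: target 8.061 cores
# buffer 50%: target 7.113 cores
# buffer 30%: target 6.165 cores
```

For a container that currently requests 8 cores, a 70% buffer puts the target slightly above the existing request, while a 30% buffer leaves room for a reduction of almost 2 cores.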

Step 3: View the profiling overview

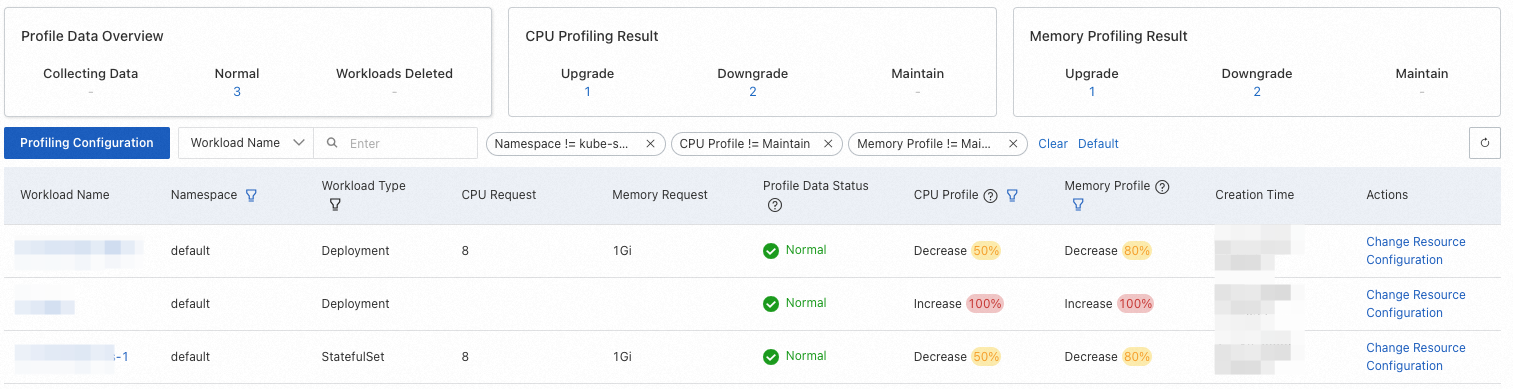

After configuring the profiling policy, the Resource Profiling tab lists all profiled workloads.

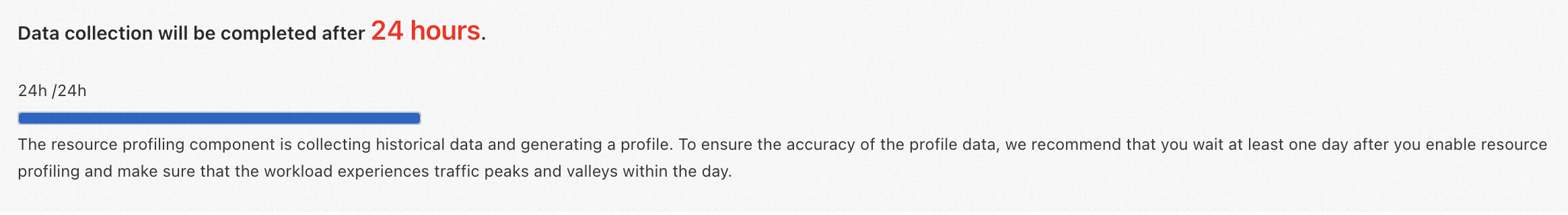

To ensure accuracy, wait at least 24 hours after enabling profiling before acting on the results.

The following table describes the columns in the profiling overview.

A hyphen (-) in the table below indicates that the field is not applicable.

| Column | Description | Values | Filterable |
|---|---|---|---|
| Workload name | The name of the workload. | - | Yes. Search by exact name. |
| Namespace | The namespace of the workload. | - | Yes. The kube-system namespace is excluded by default. |
| Workload type | The type of the workload. | Deployment, DaemonSet, StatefulSet | Yes. Default filter: All. |
| CPU request | The current CPU resource request of the workload's pods. | - | No. |
| Memory request | The current memory resource request of the workload's pods. | - | No. |
| Profile data status | The data collection status for the workload's profile. | Collecting: Insufficient data. Wait at least 24 hours and make sure the workload has run through representative traffic patterns. Normal: Profiling data is ready. Workload deleted: The workload has been deleted. The profile is automatically removed after a period of time. | No. |
| CPU profile, Memory profile | The adjustment suggestion based on the profiled value, current request, and configured buffer. For workloads with multiple containers, the suggestion shown reflects the container with the largest drift. | Upgrade, Downgrade, Keep. The percentage indicates the drift. | Yes. Default filter: Upgrade and Downgrade. |
| Creation time | The time when the profile was created. | - | No. |
| Change resource configuration | After reviewing the suggestion, click Change Resource Configuration to apply the recommended values. | - | No. |

Step 4: View profile details

On the Resource Profiling tab, click the name of a workload to open its details page.

The details page shows basic workload information, resource usage curves for each container, and the Change Resource Configuration section at the bottom.

The resource curves show the following metrics (using CPU as an example):

| Curve | Description |
|---|---|
| cpu limit | The CPU resource limit of the container. |
| cpu request | The CPU resource request of the container. |
| cpu recommend | The profiled CPU value for the container. |
| cpu usage (average) | Average CPU usage across all container replicas. |
| cpu usage (max) | Maximum CPU usage across all container replicas. |

Step 5: Apply recommended resource specifications

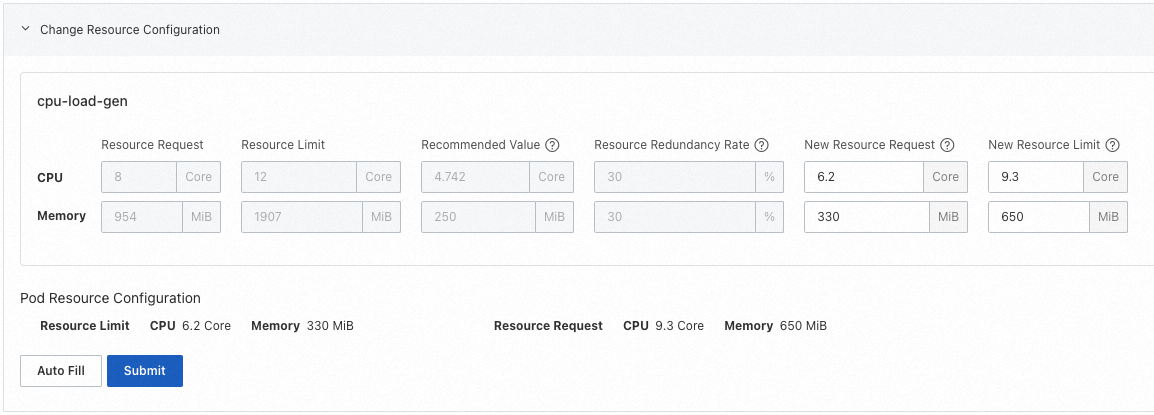

After reviewing the profiled values, update the container resource specifications in the Change Resource Configuration section at the bottom of the details page.

The following columns are shown for each container:

| Column | Description |
|---|---|
| Resource Request | The current resource request of the container. |
| Resource Limit | The current resource limit of the container. |
| Recommended Value | The profiled value generated by resource profiling. Use this as a reference for the new resource request. |
| Resource Redundancy Rate | The safety buffer configured in the profiling policy. To calculate the target, multiply the recommended value by (1 + buffer rate). For example: 4.742 × 1.3 ≈ 6.2. |
| New Resource Request | The resource request to apply to the container. |
| New Resource Limit | The resource limit to apply to the container. If the workload uses CPU topology-aware scheduling, the CPU limit must be an integer. |

After setting the new values, click Submit. ACK performs a rolling update on the workload and recreates its pods with the new resource specifications.
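The console is the documented way to apply new values. For teams that script changes instead, the same numbers can be assembled into a standard Kubernetes strategic merge patch. The sketch below is an assumption-laden illustration: the helper names are hypothetical, only CPU is handled, and whole-core rounding is applied only when the caller states that topology-aware scheduling is in use.

```python
import json
import math


def cpu_quantity(cores: float) -> str:
    """Format cores as a Kubernetes CPU quantity, rounding up to a millicore."""
    millicores = math.ceil(round(cores * 1000, 6))  # round() absorbs float noise
    if millicores % 1000 == 0:
        return str(millicores // 1000)
    return f"{millicores}m"


def resources_patch(container: str, recommended_cpu: float,
                    redundancy_rate: float, cpu_limit: float,
                    topology_aware: bool = False) -> dict:
    """Build a strategic merge patch that sets CPU request and limit."""
    request = recommended_cpu * (1 + redundancy_rate)
    if topology_aware:
        cpu_limit = math.ceil(cpu_limit)  # the CPU limit must be an integer
    if cpu_limit < request:
        raise ValueError("limit must not be lower than the request")
    resources = {
        "requests": {"cpu": cpu_quantity(request)},
        "limits": {"cpu": cpu_quantity(cpu_limit)},
    }
    return {"spec": {"template": {"spec": {
        "containers": [{"name": container, "resources": resources}]}}}}


patch = resources_patch("cpu-load-gen", 4.742, 0.3, 11.5, topology_aware=True)
print(json.dumps(patch))
```

The printed JSON could be passed to `kubectl patch deployment cpu-load-gen --type strategic -p '<json>'`. As with Submit in the console, changing the pod template triggers a rolling update.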

Use resource profiling from the command line

The command-line path uses two CustomResourceDefinitions (CRDs): RecommendationProfile to define profiling scope, and Recommendation to store profiling results.

Step 1: Enable resource profiling

Create a RecommendationProfile object to define which workloads and namespaces to profile.

- Create a file named recommendation-profile.yaml with the following content:

      apiVersion: autoscaling.alibabacloud.com/v1alpha1
      kind: RecommendationProfile
      metadata:
        # RecommendationProfile is cluster-scoped. No namespace is required.
        name: profile-demo
      spec:
        # Workload types to enable resource profiling for.
        controllerKind:
        - Deployment
        # Namespaces to enable resource profiling for.
        enabledNamespaces:
        - default

  | Field | Type | Description |
  |---|---|---|
  | metadata.name | String | The name of the RecommendationProfile object. Because RecommendationProfile is cluster-scoped, no namespace is required. |
  | spec.controllerKind | String | The workload types to profile. Supported values: Deployment, StatefulSet, DaemonSet. |
  | spec.enabledNamespaces | String | The namespaces to profile. |

- Apply the profile configuration:

      kubectl apply -f recommendation-profile.yaml

- Deploy a sample workload to generate profiling data. Create a file named cpu-load-gen.yaml:

      apiVersion: apps/v1
      kind: Deployment
      metadata:
        name: cpu-load-gen
        labels:
          app: cpu-load-gen
      spec:
        replicas: 2
        selector:
          matchLabels:
            app: cpu-load-gen-selector
        template:
          metadata:
            labels:
              app: cpu-load-gen-selector
          spec:
            containers:
            - name: cpu-load-gen
              image: registry.cn-zhangjiakou.aliyuncs.com/acs/slo-test-cpu-load-gen:v0.1
              command: ["cpu_load_gen.sh"]
              imagePullPolicy: Always
              resources:
                requests:
                  cpu: 8 # CPU request: 8 cores
                  memory: "1Gi"
                limits:
                  cpu: 12
                  memory: "2Gi"

- Deploy the workload:

      kubectl apply -f cpu-load-gen.yaml

- After the workload has run for at least 24 hours, retrieve the profiling results:

      kubectl get recommendations -l \
        "alpha.alibabacloud.com/recommendation-workload-apiVersion=apps-v1, \
        alpha.alibabacloud.com/recommendation-workload-kind=Deployment, \
        alpha.alibabacloud.com/recommendation-workload-name=cpu-load-gen" -o yaml

  The minimum profiled CPU value per pod is 0.025 cores, and the minimum memory value is 250 MB.

  ack-koordinator creates a Recommendation object for each profiled workload in the same namespace as the workload. The following is a sample output for the cpu-load-gen Deployment:

      apiVersion: autoscaling.alibabacloud.com/v1alpha1
      kind: Recommendation
      metadata:
        labels:
          alpha.alibabacloud.com/recommendation-workload-apiVersion: apps-v1
          alpha.alibabacloud.com/recommendation-workload-kind: Deployment
          alpha.alibabacloud.com/recommendation-workload-name: cpu-load-gen
        name: f20ac0b3-dc7f-4f47-b3d9-bd91f906****
        namespace: recommender-demo
      spec:
        workloadRef:
          apiVersion: apps/v1
          kind: Deployment
          name: cpu-load-gen
      status:
        recommendResources:
          containerRecommendations:
          - containerName: cpu-load-gen
            target:
              cpu: 4742m # Profiled CPU: ~4.742 cores
              memory: 262144k # Profiled memory
            originalTarget: # Intermediate algorithm result. Do not use directly.
            # ...

  The workload labels on the Recommendation object follow this convention:

  | Label | Description | Example |
  |---|---|---|
  | alpha.alibabacloud.com/recommendation-workload-apiVersion | The API version of the workload. The forward slash (/) is replaced with a hyphen (-) due to Kubernetes label constraints. | apps-v1 (was apps/v1) |
  | alpha.alibabacloud.com/recommendation-workload-kind | The workload type. | Deployment |
  | alpha.alibabacloud.com/recommendation-workload-name | The workload name. Maximum 63 characters due to Kubernetes label constraints. | cpu-load-gen |

  Per-container profiling results are stored in status.recommendResources.containerRecommendations:

  | Field | Description | Format | Example |
  |---|---|---|---|
  | containerName | The container name. | string | cpu-load-gen |
  | target | The profiled resource values for CPU and memory. | map[ResourceName]resource.Quantity | cpu: 4742m, memory: 262144k |
  | originalTarget | An intermediate result of the profiling algorithm. Not for direct use. | - | - |

  Comparing the cpu-load-gen workload's original request to the profiled result:

  | Resource | Original specification | Profiled specification |
  |---|---|---|
  | CPU | 8 cores | 4.742 cores |

  The CPU request is over-provisioned. Reducing it to the profiled value saves cluster resources.
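When post-processing this output in a script, the label selector and the quantity strings need a little handling. The sketch below is a minimal illustration: the helper names are hypothetical, and the quantity parser covers only a subset of Kubernetes suffixes (enough for the values shown in this topic), not the full resource.Quantity grammar.

```python
from decimal import Decimal

LABEL_PREFIX = "alpha.alibabacloud.com/recommendation-workload-"

# Subset of Kubernetes quantity suffixes; an illustration, not a full parser.
SUFFIXES = {
    "Ki": 1024, "Mi": 1024 ** 2, "Gi": 1024 ** 3,
    "m": Decimal("0.001"), "k": 1000, "M": 10 ** 6, "G": 10 ** 9,
}


def workload_label_selector(api_version: str, kind: str, name: str) -> str:
    """Build the selector for a workload; '/' in apiVersion becomes '-'."""
    return ",".join([
        f"{LABEL_PREFIX}apiVersion={api_version.replace('/', '-')}",
        f"{LABEL_PREFIX}kind={kind}",
        f"{LABEL_PREFIX}name={name}",
    ])


def parse_quantity(text: str) -> Decimal:
    """Convert a quantity such as '4742m' or '262144k' to a base-unit Decimal."""
    for suffix in sorted(SUFFIXES, key=len, reverse=True):
        if text.endswith(suffix):
            return Decimal(text[: -len(suffix)]) * SUFFIXES[suffix]
    return Decimal(text)


request = parse_quantity("8")        # cores requested by cpu-load-gen
profiled = parse_quantity("4742m")   # cores from status.recommendResources
print(workload_label_selector("apps/v1", "Deployment", "cpu-load-gen"))
print(f"over-provisioned by {request - profiled} cores")
```

Parsed this way, 262144k is 262,144,000 bytes, which is exactly 250 × 1024 × 1024. That suggests the documented 250 MB memory minimum is expressed in binary units, though this topic does not state so explicitly.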

Step 2: (Optional) View results in Prometheus

ack-koordinator exposes resource profiling data through a Prometheus-compatible HTTP interface, which integrates with ACK Prometheus monitoring.

Using ACK Managed Service for Prometheus

If this is your first time using the Resource Profile dashboard, upgrade it to the latest version. For upgrade steps, see Related operations.

To view profiling metrics in the ACK console:

- Log on to the ACK console. In the left navigation pane, click Clusters.
- On the Clusters page, click the name of the target cluster. In the left-side pane, choose Operations > Prometheus Monitoring.
- On the Prometheus Monitoring page, choose Cost Analysis/Resource Optimization > Resource Profile. The dashboard shows container requests, actual usage, and profiled values. For configuration details, see Connect to and configure Managed Service for Prometheus.

Using a self-managed Prometheus instance

Use the following metric queries to build your own dashboard:

    # Profiled CPU resource specification for a container
    koord_manager_recommender_recommendation_workload_target{exported_namespace="$namespace", workload_name="$workload", container_name="$container", resource="cpu"}

    # Profiled memory resource specification for a container
    koord_manager_recommender_recommendation_workload_target{exported_namespace="$namespace", workload_name="$workload", container_name="$container", resource="memory"}

The metric was renamed to koord_manager_recommender_recommendation_workload_target in ack-koordinator v1.5.0-ack1.14. The previous metric name slo_manager_recommender_recommendation_workload_target remains compatible. After upgrading to v1.5.0-ack1.14 or later, switch to the new metric name.

After installation, ack-koordinator automatically creates Service and ServiceMonitor objects. If you use Managed Service for Prometheus, metrics are automatically collected and displayed on the Grafana dashboard. For self-managed Prometheus, configure metric collection according to the official Prometheus documentation, then use the metric queries above to build a Grafana dashboard for your environment.
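If dashboards are generated by a script that must serve clusters on different component versions, the metric name can be chosen from the installed version. This is a hypothetical helper, assuming version strings always follow the vX.Y.Z-ackA.B pattern seen in this topic and that comparing the numeric parts in order is a valid ordering.

```python
import re

NEW_METRIC = "koord_manager_recommender_recommendation_workload_target"
OLD_METRIC = "slo_manager_recommender_recommendation_workload_target"
RENAMED_IN = (1, 5, 0, 1, 14)  # v1.5.0-ack1.14, per the note above


def parse_version(version: str) -> tuple:
    """Split 'v1.5.0-ack1.14' into (1, 5, 0, 1, 14); assumed format."""
    match = re.fullmatch(r"v?(\d+)\.(\d+)\.(\d+)-ack(\d+)\.(\d+)", version)
    if match is None:
        raise ValueError(f"unexpected version format: {version}")
    return tuple(int(part) for part in match.groups())


def target_query(version: str, namespace: str, workload: str,
                 container: str, resource: str) -> str:
    """Build the PromQL selector for the profiled value of one container."""
    metric = NEW_METRIC if parse_version(version) >= RENAMED_IN else OLD_METRIC
    return (
        f'{metric}{{exported_namespace="{namespace}", '
        f'workload_name="{workload}", container_name="{container}", '
        f'resource="{resource}"}}'
    )


print(target_query("v1.5.0-ack1.14", "default", "cpu-load-gen",
                   "cpu-load-gen", "cpu"))
```

Because the old name remains compatible after the upgrade, falling back to it on older versions and switching on newer ones keeps one code path working across a mixed fleet.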

FAQ

How does the profiling algorithm work?

The algorithm collects resource usage samples continuously and computes aggregate statistics (peak, weighted average, and percentile values) across the last 14 days. Older samples carry less weight through a half-life sliding window model.

The final profiled values are:

- CPU: P95 percentile + safety margin
- Memory: P99 percentile + safety margin

The algorithm also incorporates OOM events to avoid under-provisioning memory.

Which workload types does resource profiling support?

Resource profiling is designed for online service applications (Deployment, StatefulSet, DaemonSet).

For offline batch jobs, the profiling results may appear conservative: batch workloads prioritize overall throughput and can tolerate resource contention, whereas the profiling algorithm sizes for peak availability. For workloads running in active-passive mode, idle replica pods produce near-zero usage samples that skew the profiled values downward. For these scenarios, review the profiled values carefully before applying them.

Can I use the profiled values directly as resource requests and limits?

The profiled values represent a statistical summary of current resource demand, not a ready-to-apply specification. Before applying them, factor in your workload's characteristics:

- For workloads that must handle sudden traffic spikes or maintain an active-active architecture, add extra buffer beyond the profiled value.
- For resource-sensitive workloads that cannot run stably under high host load, increase the specifications beyond the profiled value.

Use the Resource redundancy rate (buffer) in the profiling policy to incorporate this headroom automatically into the suggested target.

How do I query profiling metrics with a self-managed Prometheus instance?

Profiling metrics are exposed through the ack-koord-manager module as a Prometheus-formatted HTTP endpoint.

- Get the pod IP address of the active ack-koord-manager replica:

      kubectl get pod -A -o wide | grep koord-manager

  Expected output:

      kube-system ack-koord-manager-b86bd47d9-92f6m 1/1 Running 0 16h 10.10.0.xxx cn-hangzhou.10.10.0.xxx <none> <none>
      kube-system ack-koord-manager-b86bd47d9-vg5z7 1/1 Running 0 16h 10.10.0.xxx cn-hangzhou.10.10.0.xxx <none> <none>

  ack-koord-manager runs in active-passive mode with two replicas. Only the active replica serves metric data.

- Query the metrics endpoint. The default port is 9326; verify this against your ack-koord-manager deployment configuration. Run this command from a machine that can reach the cluster's container network.

      # For ack-koordinator v1.5.0-ack1.12 or later
      curl -s http://10.10.0.xxx:9326/all-metrics | grep slo_manager_recommender_recommendation_workload_target
      # For earlier versions (before v1.5.0-ack1.12)
      curl -s http://10.10.0.xxx:9326/metrics | grep slo_manager_recommender_recommendation_workload_target

  Expected output:

      # HELP slo_manager_recommender_recommendation_workload_target Recommendation of workload resource request.
      # TYPE slo_manager_recommender_recommendation_workload_target gauge
      slo_manager_recommender_recommendation_workload_target{container_name="xxx",namespace="xxx",recommendation_name="d2169dbf-fb36-4bf4-99d1-673577fb85c1",resource="cpu",workload_api_version="apps/v1",workload_kind="Deployment",workload_name="xxx"} 0.025
      slo_manager_recommender_recommendation_workload_target{container_name="xxx",namespace="xxx",recommendation_name="d2169dbf-fb36-4bf4-99d1-673577fb85c1",resource="memory",workload_api_version="apps/v1",workload_kind="Deployment",workload_name="xxx"} 2.62144e+08
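The endpoint output is plain Prometheus text format, so a script can extract the profiled values without a Prometheus server. The sketch below is a simplified illustration: it handles only lines shaped like the sample output (no escaped quotes inside label values, no timestamps), and the function name is hypothetical.

```python
import re

SAMPLE_LINE = re.compile(r'^([a-zA-Z_:][a-zA-Z0-9_:]*)\{(.*)\}\s+(\S+)$')
LABEL_PAIR = re.compile(r'(\w+)="([^"]*)"')
METRIC_SUFFIX = "recommender_recommendation_workload_target"


def parse_targets(text: str) -> dict:
    """Map (namespace, workload, container, resource) to the profiled value."""
    results = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and HELP/TYPE comments
        match = SAMPLE_LINE.match(line)
        if match is None or not match.group(1).endswith(METRIC_SUFFIX):
            continue  # not a profiling target sample
        labels = dict(LABEL_PAIR.findall(match.group(2)))
        key = (labels.get("namespace"), labels.get("workload_name"),
               labels.get("container_name"), labels.get("resource"))
        results[key] = float(match.group(3))
    return results


output = '''# TYPE slo_manager_recommender_recommendation_workload_target gauge
slo_manager_recommender_recommendation_workload_target{container_name="xxx",namespace="xxx",resource="cpu",workload_name="xxx"} 0.025
slo_manager_recommender_recommendation_workload_target{container_name="xxx",namespace="xxx",resource="memory",workload_name="xxx"} 2.62144e+08
'''
for key, value in parse_targets(output).items():
    print(key, value)
```

Matching on the metric name suffix lets the same parser accept both the slo_manager_ and koord_manager_ prefixes. CPU values are in cores and memory values are in bytes.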

How do I clear profiling results and rules?

Profiling results are stored in Recommendation objects, and profiling rules are stored in RecommendationProfile objects. Run the following commands to delete all of them:

    # Delete all profiling results
    kubectl delete recommendation -A --all

    # Delete all profiling rules
    kubectl delete recommendationprofile -A --all

How do I grant a RAM user permissions to use resource profiling?

ACK uses two authorization layers: RAM authorization at the Alibaba Cloud account level and Role-Based Access Control (RBAC) authorization at the cluster level.

- RAM authorization: Use your Alibaba Cloud account to log on to the RAM console and grant the AliyunCSFullAccess policy to the RAM user. For details, see Grant permissions.
- RBAC authorization: Grant the RAM user the developer role or higher for the target cluster. For details, see Use RBAC to authorize operations on cluster resources.

For more fine-grained access control, create a custom ClusterRole instead of assigning the predefined developer role. The custom ClusterRole must include the following rules:

    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRole
    metadata:
      name: recommendation-clusterrole
    rules:
    - apiGroups:
      - "autoscaling.alibabacloud.com"
      resources:
      - "*"
      verbs:
      - "*"

For instructions on creating custom ClusterRole configurations, see Use custom RBAC roles to restrict resource operations in a cluster.