When a network connection fails in your ACK cluster, locating the faulty hop typically requires deep knowledge of container networking, network plug-ins, and kernel-level routing. Network diagnostics removes that barrier: you specify the source address, destination address, port, and protocol, and ACK automatically traces the packet path, checks each hop for configuration errors, and highlights the problem with a suggested fix.

When you run a diagnosis, ACK deploys a data collection program on each node to gather system-level information, such as the system version, system load, and information about Docker and the kubelet. The program does not collect business information or sensitive data.

Supported scenarios

Network diagnostics supports the following scenarios. Identify your situation, then follow the corresponding parameter guidance in Parameters by scenario.

Pod-to-pod or pod-to-node connectivity: diagnose direct network paths between pods or between pods and nodes.

Pod or node to Service: verify that a pod or node can reach a Kubernetes Service, and check the Service's network configuration.

DNS resolution: check whether a pod can reach the kube-dns Service in the kube-system namespace.

Pod or node to the Internet: diagnose outbound connectivity from pods or nodes to a public IP address.

Internet to LoadBalancer Service: diagnose inbound connectivity to a LoadBalancer Service exposed through a Classic Load Balancer (CLB) instance.

How it works

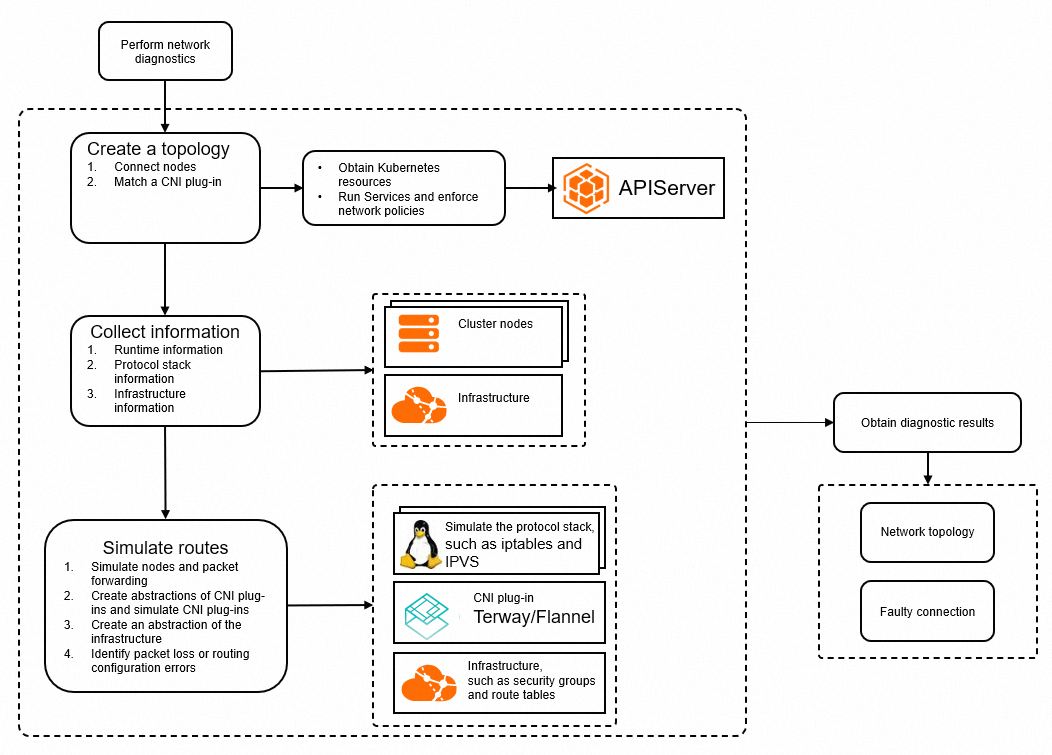

Network diagnostics traces the packet path between your source and destination, checking each hop for configuration errors. The diagnosis runs in four stages:

Build a topology: the feature maps the network path using information from the cluster, including pods, nodes, Services, and network policies.

Collect runtime state: it runs commands on Elastic Compute Service (ECS) instances or deploys a collector pod to gather network stack details (network devices, sysctl settings, iptables, IPVS) from the relevant nodes and containers, together with cloud-layer information (route tables, security groups, NAT gateways).

Simulate the route: it simulates the expected packet flow hop by hop and compares it against the collected configuration.

Surface anomalies: abnormal hops are highlighted in a topology view, together with a description of the issue and a suggested fix.
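To make the compare step more concrete, here is a toy Python model of checking simulated expectations against collected state, hop by hop. It is a minimal sketch under stated assumptions, not KubeSkoop's actual code: the `Hop` and `Anomaly` types, the `diagnose` function, and the configuration keys in the example are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Hop:
    """One hop on the traced path (hypothetical model, not a KubeSkoop type)."""
    name: str
    expected: dict[str, object]  # settings the simulated packet flow requires
    actual: dict[str, object]    # settings collected from the node or cloud layer


@dataclass
class Anomaly:
    hop: str
    key: str
    expected: object
    actual: object


def diagnose(path: list[Hop]) -> list[Anomaly]:
    """Walk the path in order and report every setting that differs from expectation."""
    anomalies: list[Anomaly] = []
    for hop in path:
        for key, want in hop.expected.items():
            got = hop.actual.get(key)  # None if the setting was not collected
            if got != want:
                anomalies.append(Anomaly(hop.name, key, want, got))
    return anomalies


if __name__ == "__main__":
    # Hypothetical path: only the security group hop deviates from expectation.
    path = [
        Hop("source pod eth0", {"ip_forward": 1}, {"ip_forward": 1}),
        Hop("source node security group",
            {"allow tcp/80 from 10.0.1.0/24": True},
            {"allow tcp/80 from 10.0.1.0/24": False}),
        Hop("destination pod", {"listening tcp/80": True}, {"listening tcp/80": True}),
    ]
    for a in diagnose(path):
        print(f"{a.hop}: {a.key} expected {a.expected!r}, found {a.actual!r}")
```

Running the example prints a single line for the security group hop, which mirrors how only abnormal hops are highlighted in the result view.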

Network diagnostics is built on KubeSkoop, an open source Kubernetes networking diagnostic tool that supports multiple network plug-ins and Infrastructure as a Service (IaaS) providers, and uses extended Berkeley Packet Filter (eBPF) to monitor kernel-level packet paths. For in-depth monitoring and analysis beyond diagnostics, see Use ACK Net Exporter to troubleshoot network issues.

Limits

Pods to be diagnosed must be in the Running state.

For the Internet-to-LoadBalancer scenario, only Layer 4 CLB instances are supported, and the LoadBalancer Service must have no more than 10 backend pods.

ACK Serverless clusters and virtual nodes do not support network diagnostics.
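If you want to check the first two limits before starting a diagnosis, the following Python sketch reads standard Kubernetes objects: a pod's `status.phase` and the `subsets` of the Endpoints object that shares the Service's name. It is a hypothetical helper, not an ACK tool. Counting both ready and not-ready addresses toward the 10-pod limit is an assumption about how the limit is applied, and the Layer 4 CLB requirement is not checked here.

```python
import json
import subprocess

MAX_LB_BACKEND_PODS = 10  # limit stated for the Internet-to-LoadBalancer scenario


def pod_is_running(pod: dict) -> bool:
    """True if a pod object (from `kubectl get pod NAME -o json`) is in the Running phase."""
    return pod.get("status", {}).get("phase") == "Running"


def backend_pod_count(endpoints: dict) -> int:
    """Count distinct backend pods in an Endpoints object.

    Assumption: both ready and not-ready addresses count toward the limit.
    """
    pods = set()
    for subset in endpoints.get("subsets") or []:
        addrs = (subset.get("addresses") or []) + (subset.get("notReadyAddresses") or [])
        for addr in addrs:
            ref = addr.get("targetRef") or {}
            if ref.get("kind") == "Pod":
                pods.add((ref.get("namespace"), ref.get("name")))
    return len(pods)


def kubectl_json(*args: str) -> dict:
    """Run kubectl with `-o json` and parse the result (needs kubectl and cluster access)."""
    out = subprocess.run(
        ["kubectl", *args, "-o", "json"],
        check=True, capture_output=True, text=True,
    ).stdout
    return json.loads(out)
```

For example, `pod_is_running(kubectl_json("get", "pod", "my-pod", "-n", "default"))` or `backend_pod_count(kubectl_json("get", "endpoints", "my-lb-svc", "-n", "default"))`, where `my-pod` and `my-lb-svc` are placeholder names.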

Run a diagnosis

Before you begin: You must have an ACK managed cluster.

Log on to the ACK console. In the left-side navigation pane, click Clusters.

On the Clusters page, click the name of the cluster you want to diagnose. In the left-side navigation pane, choose Inspections and Diagnostics > Diagnostics.

On the Diagnosis page, click Network diagnosis. On the Network diagnosis page, click Diagnosis.

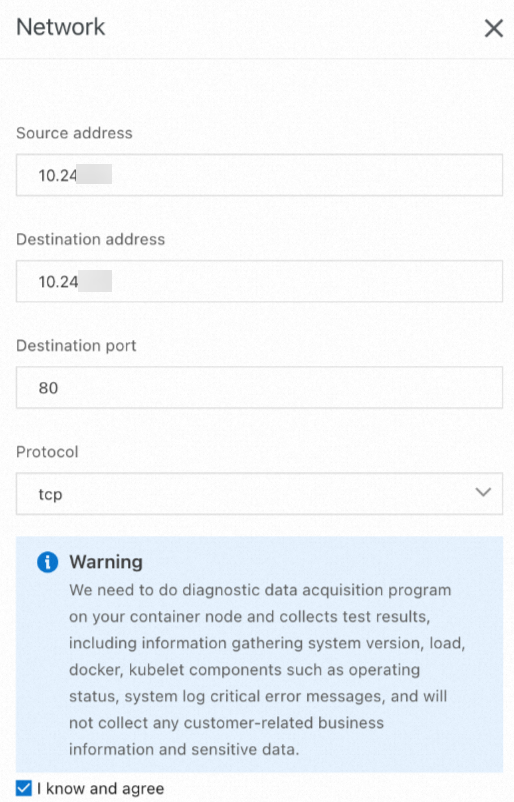

In the Network panel, fill in Source address, Destination address, Destination port, and Protocol. For parameter values, see Parameters by scenario. Read the warning, select I know and agree, and then click Create diagnosis.

On the Diagnosis result page, review the results. The Packet paths section shows all diagnosed hops. Abnormal hops are highlighted. For help interpreting results, see Common diagnostic results and solutions.
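Before you submit the form, you may want to sanity-check the four values. The following Python sketch is a hypothetical helper, not part of ACK: the `DiagnosisParams` type and `validate` function are illustrative names, and the set of accepted protocols (TCP and UDP) is an assumption, so confirm it against the options in your console. Both addresses are checked as IP literals because domain names must be resolved to IP addresses first.

```python
from __future__ import annotations

import ipaddress
from dataclasses import dataclass

# Assumption: the console offers these protocol values; verify in your console.
SUPPORTED_PROTOCOLS = {"tcp", "udp"}


@dataclass(frozen=True)
class DiagnosisParams:
    """Hypothetical container for the four values entered in the Network panel."""
    source: str
    destination: str
    port: int
    protocol: str


def validate(params: DiagnosisParams) -> list[str]:
    """Return a list of problems; an empty list means the values look well formed."""
    problems: list[str] = []
    for field_name in ("source", "destination"):
        value = getattr(params, field_name)
        try:
            ipaddress.ip_address(value)  # raises ValueError for non-IP strings
        except ValueError:
            problems.append(f"{field_name} {value!r} is not an IP address")
    if not 1 <= params.port <= 65535:
        problems.append(f"port {params.port} is outside 1-65535")
    if params.protocol.lower() not in SUPPORTED_PROTOCOLS:
        problems.append(f"protocol {params.protocol!r} is not one of {sorted(SUPPORTED_PROTOCOLS)}")
    return problems
```

This only checks the form of the values; whether the addresses belong to pods, nodes, or Services in your cluster is still up to you.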

Parameters by scenario

Pod-to-pod or pod-to-node connectivity

| Parameter | Value |
|---|---|
| Source address | IP address of a pod or node |
| Destination address | IP address of another pod or node |
| Destination port | Port to diagnose |
| Protocol | Protocol to diagnose |

Pod or node to Service

Specify the cluster IP of a Service to check whether a pod or node can reach it and verify the Service's network configuration.

| Parameter | Value |
|---|---|
| Source address | IP address of a pod or node |
| Destination address | Cluster IP of the Service |
| Destination port | Port to diagnose |
| Protocol | Protocol to diagnose |

DNS resolution

When the destination is a domain name, you also need to verify that the pod can reach the kube-dns Service. Run the following command to get the cluster IP of kube-dns:

```shell
kubectl get svc -n kube-system kube-dns
```

Expected output:

```
NAME       TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)                  AGE
kube-dns   ClusterIP   172.16.XX.XX   <none>        53/UDP,53/TCP,9153/TCP   6d
```

Use the cluster IP shown in the CLUSTER-IP column as the destination address.

| Parameter | Value |
|---|---|
| Source address | IP address of a pod or node |
| Destination address | Cluster IP of kube-dns (for example, 172.16.XX.XX) |
| Destination port | 53 |
| Protocol | udp |
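If you script your checks, the same values can be read from the JSON form of the Service (`kubectl get svc -n kube-system kube-dns -o json`), where `spec.clusterIP` and `spec.ports` are standard Service fields. The Python sketch below is a hypothetical helper; the function name and the returned dictionary layout are illustrative only.

```python
from __future__ import annotations


def dns_diagnosis_params(source_ip: str, kube_dns_service: dict) -> dict[str, object]:
    """Build DNS-resolution parameters from the parsed kube-dns Service object."""
    spec = kube_dns_service["spec"]
    # A Service port without an explicit protocol defaults to TCP in Kubernetes.
    udp_ports = [p["port"] for p in spec.get("ports", []) if p.get("protocol", "TCP") == "UDP"]
    if not udp_ports:
        raise ValueError("kube-dns Service exposes no UDP port")
    return {
        "source": source_ip,
        "destination": spec["clusterIP"],
        "port": udp_ports[0],
        "protocol": "udp",
    }
```

Selecting the UDP port keeps the result aligned with the table above (port 53, protocol udp).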

Pod or node to the Internet

If the destination is a domain name, resolve it to a public IP address first.

| Parameter | Value |
|---|---|
| Source address | IP address of a pod or node |
| Destination address | Public IP address |
| Destination port | Port to diagnose |
| Protocol | Protocol to diagnose |
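To resolve a domain name to public IP addresses, you can use the standard library's resolver and discard non-public results. This Python sketch is an assumption-laden helper, not an ACK feature: it restricts results to IPv4 and treats "public" as Python's `ipaddress` notion of `is_global`. Resolution can differ between your workstation and the source pod or node, so run it from the source if that matters.

```python
from __future__ import annotations

import ipaddress
import socket


def is_public(ip: str) -> bool:
    """True if ip is globally routable (not private, loopback, link-local, and so on)."""
    return ipaddress.ip_address(ip).is_global


def public_ips(hostname: str, port: int = 443) -> list[str]:
    """Resolve hostname to IPv4 addresses and keep only public ones, without duplicates."""
    result: list[str] = []
    infos = socket.getaddrinfo(hostname, port, family=socket.AF_INET, proto=socket.IPPROTO_TCP)
    for _family, _type, _proto, _canon, sockaddr in infos:
        ip = sockaddr[0]  # IPv4 sockaddr is (address, port)
        if is_public(ip) and ip not in result:
            result.append(ip)
    return result
```

Pick one of the returned addresses as the destination address in the table above.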

Internet to LoadBalancer Service

If external traffic cannot reach a LoadBalancer Service, specify the public IP address of a client that cannot reach the Service as the source address, and the external IP address of the LoadBalancer Service as the destination address.

| Parameter | Value |
|---|---|
| Source address | Public IP address |
| Destination address | External IP address of the LoadBalancer Service |
| Destination port | Port to diagnose |
| Protocol | Protocol to diagnose |
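The external IP address appears in the Service's standard `status.loadBalancer.ingress` field (`kubectl get svc NAME -n NAMESPACE -o json`). The following Python sketch is a hypothetical helper that assembles the parameters from that object; the function name and result layout are illustrative, and the client public IP must still come from you.

```python
from __future__ import annotations


def lb_diagnosis_params(client_public_ip: str, service: dict, port: int) -> dict[str, object]:
    """Build Internet-to-LoadBalancer parameters from a parsed Service object."""
    spec = service.get("spec", {})
    if spec.get("type") != "LoadBalancer":
        raise ValueError("Service is not of type LoadBalancer")
    ingress = service.get("status", {}).get("loadBalancer", {}).get("ingress") or []
    ips = [entry["ip"] for entry in ingress if entry.get("ip")]
    if not ips:
        raise ValueError("Service has no external IP address yet")
    matches = [p for p in spec.get("ports", []) if p.get("port") == port]
    if not matches:
        raise ValueError(f"Service does not expose port {port}")
    return {
        "source": client_public_ip,
        "destination": ips[0],
        "port": port,
        # Service ports default to TCP when no protocol is set.
        "protocol": matches[0].get("protocol", "TCP").lower(),
    }
```

Failing early on a missing external IP or port mirrors the `cannot find listener port` case below, but on the Kubernetes side rather than the CLB side.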

Common diagnostic results and solutions

| Diagnostic result | Description | Solution |
|---|---|---|
| pod container ... is not ready | Containers in the pod are not ready. | Check the pod's health status and fix the pod. |
| network policy ... deny the packet from ... | A network policy is blocking packets. | Modify the network policy to allow the traffic. |
| no process listening on ... | No process is listening on the specified port using the specified protocol. | Check that the listener process is running. Verify the port and protocol in the diagnostic parameters. |
| no route to host .../invalid route ... for packet ... | No route exists, or the route does not point to the expected destination. | Check whether the network plug-in is working correctly. |
| ... do not have same security group | Two ECS instances are in different security groups; packets may be dropped. | Configure the ECS instances to use the same security group. |
| security group ... not allow packet ... | A security group on an ECS instance is blocking packets. | Modify the security group rule to allow packets from the source IP address. |
| no route entry for destination ip ... / no next hop for destination ip ... | The route table has no route to the destination. | If the destination is a public IP address, check the Internet NAT gateway configuration. |
| error route next hop for destination ip ... / expect next hop for destination ip ... | The route in the route table does not point to the expected destination. | If the destination is a public IP address, check the Internet NAT gateway configuration. |
| no snat entry on nat gateway ... | No SNAT entry was found on the Internet NAT gateway. | Check the SNAT configuration of the Internet NAT gateway. |
| backend ... health status for port ..., not "normal" | One or more backend pods failed health checks on the CLB instance. | Verify that the backend pods are correctly associated with the CLB instance and that the application in those pods is healthy. |
| cannot find listener port ... for slb ... | The specified port has no listener on the CLB instance. | Check the LoadBalancer Service configuration and verify the port and protocol in the diagnostic parameters. |
| status of loadbalancer for ... port ... not "running" | The CLB listener is not in the Running state. | Check whether the listening port is normal. |
| service ... has no valid endpoint | The Service has no endpoint. | Check the label selector of the Service, verify that an endpoint exists, and confirm the endpoint is healthy. For issues with accessing a LoadBalancer Service from within the cluster, see The CLB instance cannot be accessed from within the cluster. |
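If you collect diagnostic results in scripts or tickets, a small lookup can map a result message to the solution in the table above. This Python sketch is hypothetical: the patterns match only the fixed words of each message shown in the table, the solutions are shortened paraphrases, and the sample messages used to try it are invented, so real messages may be worded differently and fail to match.

```python
from __future__ import annotations

import re

# (pattern, suggested fix) pairs transcribed from the results table.
_RAW_RULES = [
    (r"pod container .* is not ready", "Check the pod's health status and fix the pod."),
    (r"network policy .* deny the packet from", "Modify the network policy to allow the traffic."),
    (r"no process listening on", "Make sure the listener process is running; verify port and protocol."),
    (r"no route to host|invalid route .* for packet", "Check whether the network plug-in is working correctly."),
    (r"do not have same security group", "Configure the ECS instances to use the same security group."),
    (r"security group .* not allow packet", "Allow the source IP address in the security group rule."),
    (r"no route entry for destination ip|no next hop for destination ip"
     r"|error route next hop for destination ip|expect next hop for destination ip",
     "For a public destination, check the Internet NAT gateway configuration."),
    (r"no snat entry on nat gateway", "Check the SNAT configuration of the Internet NAT gateway."),
    (r"backend .* health status for port", "Check the CLB backend association and the health of the backend pods."),
    (r"cannot find listener port .* for slb", "Check the LoadBalancer Service ports; verify port and protocol."),
    (r'status of loadbalancer for .* port .* not "running"', "Check whether the CLB listener is running."),
    (r"service .* has no valid endpoint", "Check the Service selector and that healthy endpoints exist."),
]
RULES = [(re.compile(pattern, re.IGNORECASE), fix) for pattern, fix in _RAW_RULES]


def suggest_fix(message: str) -> str | None:
    """Return the fix for the first rule whose pattern occurs in message, else None."""
    for pattern, fix in RULES:
        if pattern.search(message):
            return fix
    return None
```

Returning `None` for unknown messages keeps unmatched results visible instead of guessing a fix.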

What's next

Use ACK Net Exporter to troubleshoot network issues — monitor and analyze kernel-level packet paths with eBPF for deeper network visibility.