Container Service for Kubernetes (ACK) lets you enable distributed tracing on the NGINX Ingress controller and send the trace data to Managed Service for OpenTelemetry. Managed Service for OpenTelemetry stores the data and aggregates it in real time to produce trace details and a real-time topology, which you can use to troubleshoot and diagnose issues.

Version compatibility

The tracing protocol supported by the NGINX Ingress controller depends on its version.

| NGINX Ingress controller version | OpenTelemetry | OpenTracing |
|---|---|---|
| ≥ v1.10.2-aliyun.1 | Supported | Not supported |
| v1.9.3-aliyun.1 | Supported | Supported |
| v1.8.2-aliyun.1 | Supported | Supported |
| < v1.8.2-aliyun.1 | Not supported | Supported |

Follow the procedure that matches your installed version.
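The table can be read as two version thresholds. The following Python sketch encodes them as a quick check. The function names `parse_version` and `supported_protocols` are hypothetical, and treating versions that the table does not list by range (OpenTelemetry from v1.8.2-aliyun.1, OpenTracing below v1.10.2-aliyun.1) is an assumption based on the thresholds stated in this topic.

```python
import re


def parse_version(version: str) -> tuple:
    """Turn a string such as 'v1.9.3-aliyun.1' into (1, 9, 3, 1) for comparison."""
    match = re.fullmatch(r"v?(\d+)\.(\d+)\.(\d+)-aliyun\.(\d+)", version.strip())
    if match is None:
        raise ValueError(f"unrecognized version string: {version!r}")
    return tuple(int(part) for part in match.groups())


# Thresholds taken from the compatibility table.
OTEL_MIN = parse_version("v1.8.2-aliyun.1")          # first version with OpenTelemetry
OPENTRACING_END = parse_version("v1.10.2-aliyun.1")  # first version without OpenTracing


def supported_protocols(version: str) -> set:
    """Return the tracing protocols assumed to be available for a controller version."""
    parsed = parse_version(version)
    protocols = set()
    if parsed >= OTEL_MIN:
        protocols.add("OpenTelemetry")
    if parsed < OPENTRACING_END:
        protocols.add("OpenTracing")
    return protocols
```

For example, `supported_protocols("v1.9.3-aliyun.1")` returns both protocols, so either procedure below applies to that version.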

Prerequisites

Before you begin, ensure that you have:

- Activated Managed Service for OpenTelemetry and granted the required permissions. For more information, see Managed Service for OpenTelemetry activation and authorization.
- Installed the NGINX Ingress controller. For more information, see Manage the NGINX Ingress controller.

Procedure

Enable tracing with OpenTelemetry

Use this procedure for NGINX Ingress controller versions ≥ v1.8.2-aliyun.1.

Step 1: Get the endpoint from Managed Service for OpenTelemetry

The steps differ depending on which version of the Managed Service for OpenTelemetry console you are using.

New version of the Managed Service for OpenTelemetry console

- Log on to the Managed Service for OpenTelemetry console. In the left-side navigation pane, click Integration Center.
- In the Open Source Frameworks section, click the OpenTelemetry card.
- In the OpenTelemetry panel, select the region from which you want to import trace data.
- Record the endpoint used to import data over gRPC.

  In this example, the NGINX Ingress controller and the Managed Service for OpenTelemetry agent are deployed in the same region, so a virtual private cloud (VPC) endpoint is used. If they are in different regions, use a public endpoint.

Previous version of the Managed Service for OpenTelemetry console

- Log on to the Managed Service for OpenTelemetry console.
- In the left-side navigation pane, click Cluster Configurations. On the right side of the page that appears, click the Access point information tab.
- In the upper part of the page, select the region from which you want to import trace data.
- In the Cluster Information section, select Show Token. Then, click OpenTelemetry in the Client section and record the endpoint used to import data over gRPC.

  In this example, the NGINX Ingress controller and the Managed Service for OpenTelemetry agent are deployed in the same region, so a VPC endpoint is used. If they are in different regions, use a public endpoint.
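Step 2 asks for the recorded gRPC endpoint as a separate domain name and port. A minimal Python sketch of that split follows. The function name `split_grpc_endpoint` and the sample endpoint are hypothetical placeholders, and the `http://host:port` shape of the endpoint is an assumption; use the value shown in your console.

```python
from urllib.parse import urlsplit


def split_grpc_endpoint(endpoint: str) -> dict:
    """Split an endpoint such as 'http://host:8090' into ConfigMap-ready values."""
    # urlsplit only recognizes the host part when a scheme separator is present.
    if "://" not in endpoint:
        endpoint = "http://" + endpoint
    parts = urlsplit(endpoint)
    if parts.hostname is None or parts.port is None:
        raise ValueError(f"endpoint must contain a host and a port: {endpoint!r}")
    # ConfigMap values are strings, so the port is returned as a string too.
    return {
        "otlp-collector-host": parts.hostname,
        "otlp-collector-port": str(parts.port),
    }


# Placeholder endpoint for illustration only.
print(split_grpc_endpoint("http://tracing-analysis-dc-hz-internal.aliyuncs.com:8090"))
```

The two returned values correspond to the `otlp-collector-host` and `otlp-collector-port` entries configured in Step 2.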

Step 2: Enable OpenTelemetry on the NGINX Ingress controller

Part A: Add the authentication environment variable

- Log on to the ACK console. In the left-side navigation pane, click Clusters.
- On the Clusters page, click the name of your cluster. In the left-side navigation pane, choose Workloads > Deployments.
- In the Namespace drop-down list, select kube-system. Enter nginx-ingress-controller in the search box and click the search icon. Click Edit in the Actions column of the nginx-ingress-controller deployment.
- At the top of the Edit page, select the nginx-ingress-controller container. On the Environments tab, click Add and configure the following environment variable. Set the value to the authentication token from Step 1, for example `authentication=bfXXXXXXXe@7bXXXXXXX1_bXXXXXe@XXXXXXX1`. After adding the variable, click Update on the right side of the Edit page. In the dialog that appears, click Confirm.

  | Type | Variable key | Value/ValueFrom |
  |---|---|---|
  | Custom | `OTEL_EXPORTER_OTLP_HEADERS` | `authentication=<Authentication token>` |
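The console steps above add one environment variable to one container of the deployment. As a rough illustration of what that change looks like in the Deployment spec, the following Python sketch builds an equivalent patch document. The function name `build_env_patch` is hypothetical, and applying the change outside the console (for example with `kubectl patch`) is an assumption that this topic does not describe.

```python
import json


def build_env_patch(token: str) -> dict:
    """Build a Deployment patch that sets OTEL_EXPORTER_OTLP_HEADERS on the controller."""
    return {
        "spec": {
            "template": {
                "spec": {
                    "containers": [
                        {
                            # Container name as shown on the Edit page.
                            "name": "nginx-ingress-controller",
                            "env": [
                                {
                                    "name": "OTEL_EXPORTER_OTLP_HEADERS",
                                    "value": f"authentication={token}",
                                }
                            ],
                        }
                    ]
                }
            }
        }
    }


# The token is a placeholder; use the token recorded in Step 1.
print(json.dumps(build_env_patch("<Authentication token>"), indent=2))
```

Either way, the result is the same: the container receives the `authentication=<Authentication token>` header value that the exporter sends with each request.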

Part B: Configure the nginx-configuration ConfigMap

- In the left-side navigation pane, choose Configurations > ConfigMaps.
- In the Namespace drop-down list, select kube-system. Enter nginx-configuration in the Name search box and click the search icon. Click Edit in the Actions column.
- In the Edit panel, click Add to add the following configuration entries, then click OK.

  | Name | Description | Valid value | Example |
  |---|---|---|---|
  | `enable-opentelemetry` | Whether to enable OpenTelemetry tracing. | true/false | `true` |
  | `main-snippet` | Exposes the `OTEL_EXPORTER_OTLP_HEADERS` environment variable to the NGINX configuration. | `env OTEL_EXPORTER_OTLP_HEADERS;` | `env OTEL_EXPORTER_OTLP_HEADERS;` |
  | `otel-service-name` | Service name displayed in traces. | Custom value | `nginx-ingress` |
  | `otlp-collector-host` | Domain name for gRPC data export. Remove `http://` and the port number from the VPC endpoint obtained in Step 1. | Domain name only | `tracing-analysis-XX-XX-XXXXX.aliyuncs.com` |
  | `otlp-collector-port` | Port for gRPC data export. | Port number | `8090` |
  | `opentelemetry-trust-incoming-span` | Whether to trust the call traces of other services or systems. `true`: trust upstream spans. `false`: do not trust the call traces of other services or systems. | true/false | `true` |
  | `opentelemetry-operation-name` | Span name format. | `HTTP $request_method $service_name $uri` | `HTTP $request_method $service_name $uri` |
  | `otel-sampler` | Sampling strategy. For available options, see opentelemetry. | `TraceIdRatioBased` | `TraceIdRatioBased` |
  | `otel-sampler-ratio` | Fraction of requests to sample. `0`: no data collected. `1`: all requests sampled. Accurate to two decimal places. For more information, see opentelemetry. | 0–1 | `0.1` |
  | `otel-sampler-parent-based` | Whether to inherit the sampling decision from the upstream span. `false` (default): apply `otel-sampler` and `otel-sampler-ratio`. `true`: inherit the upstream sampling flag and ignore `otel-sampler` and `otel-sampler-ratio`. For more information, see opentelemetry. | true/false | `false` |
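The interaction between `otel-sampler-ratio` and `otel-sampler-parent-based` can be pictured with a small Python sketch. This is only an illustration of ratio-based and parent-based sampling in general, not the controller's actual code. The function name `should_sample`, the use of the low 64 bits of the trace ID, and the fallback to the ratio when no upstream flag exists are all assumptions.

```python
def should_sample(trace_id_hex, ratio, parent_based=False, parent_sampled=None):
    """Decide whether to record a trace.

    trace_id_hex   -- 32-character hexadecimal trace ID
    ratio          -- value of otel-sampler-ratio, between 0 and 1
    parent_based   -- value of otel-sampler-parent-based
    parent_sampled -- sampling flag received from upstream, or None if absent
    """
    # Parent-based mode: inherit the upstream decision when one was received.
    if parent_based and parent_sampled is not None:
        return parent_sampled
    # Assumption: with no upstream flag, fall back to the ratio-based decision.
    if ratio <= 0:
        return False
    if ratio >= 1:
        return True
    # Ratio-based mode: compare the low 64 bits of the trace ID to a threshold,
    # so the same trace ID always yields the same decision.
    threshold = int(ratio * (1 << 64))
    return int(trace_id_hex[-16:], 16) < threshold
```

With `ratio=0.1`, roughly one in ten uniformly distributed trace IDs falls below the threshold. Because the decision depends only on the trace ID, every component that applies the same rule keeps or drops the same traces.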

Step 3: Verify trace data in Managed Service for OpenTelemetry

-

Log on to the Managed Service for OpenTelemetry.

-

In the left-side navigation pane, click Applications.

-

At the top of the Applications page, select the region you configured in Step 1. Click nginx-ingress.

-

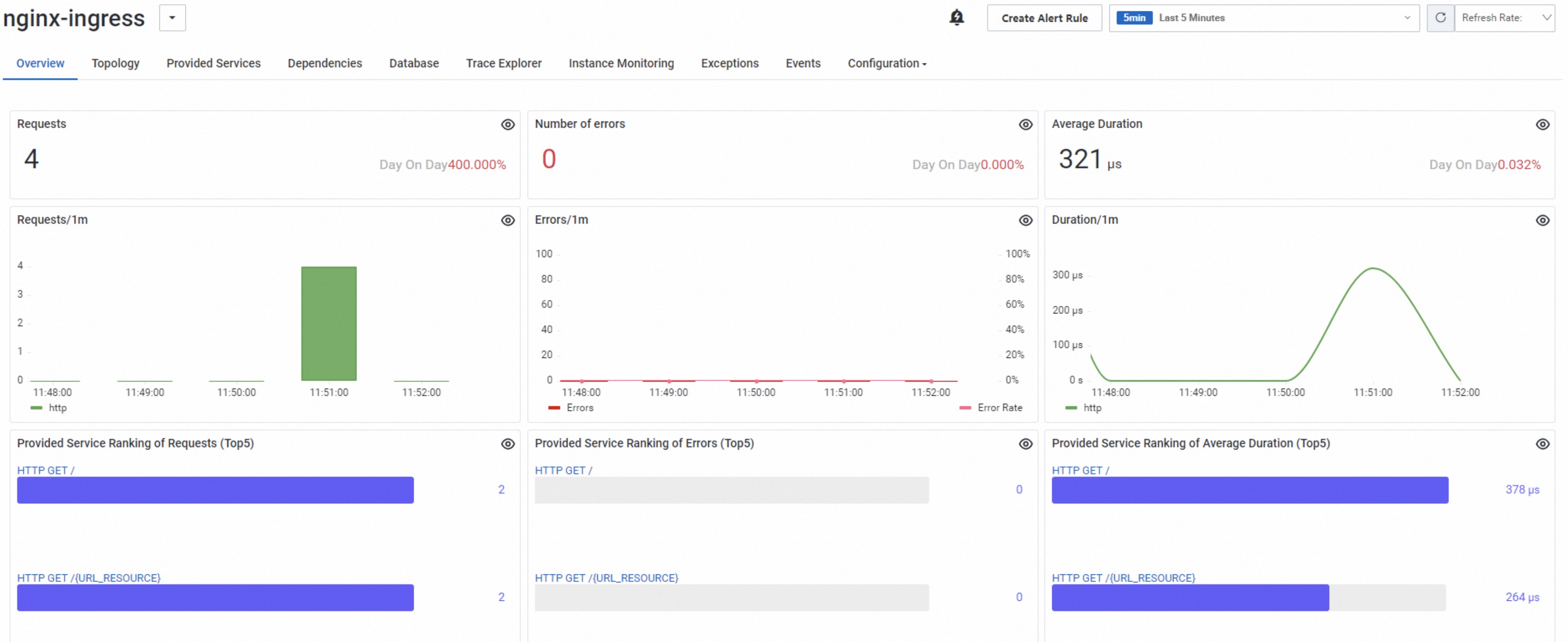

On the application details page, review the trace data:

-

On the Application Overview tab, view the request count and error count.

-

On the Trace Analysis tab, view the trace list and average duration.

-

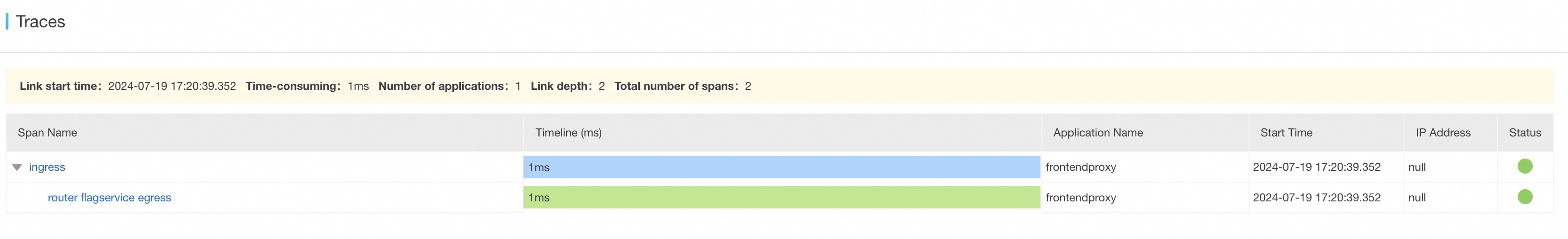

On the Trace Analysis tab, click a trace ID to view span details.

-

Enable tracing with OpenTracing

Step 1: Get the endpoint from Managed Service for OpenTelemetry

New version of the Managed Service for OpenTelemetry console

-

Log on to the Managed Service for OpenTelemetry console. In the left-side navigation pane, click Integration Center.

-

In the Open Source Frameworks section, click the Zipkin card.

This step uses a Zipkin client to collect trace data.

-

In the Zipkin panel, select the region from which you want to import trace data.

-

Record the endpoint.

The NGINX Ingress controller and the Managed Service for OpenTelemetry agent in this example are deployed in the same region. Use a VPC endpoint. If they are in different regions, use a public endpoint.

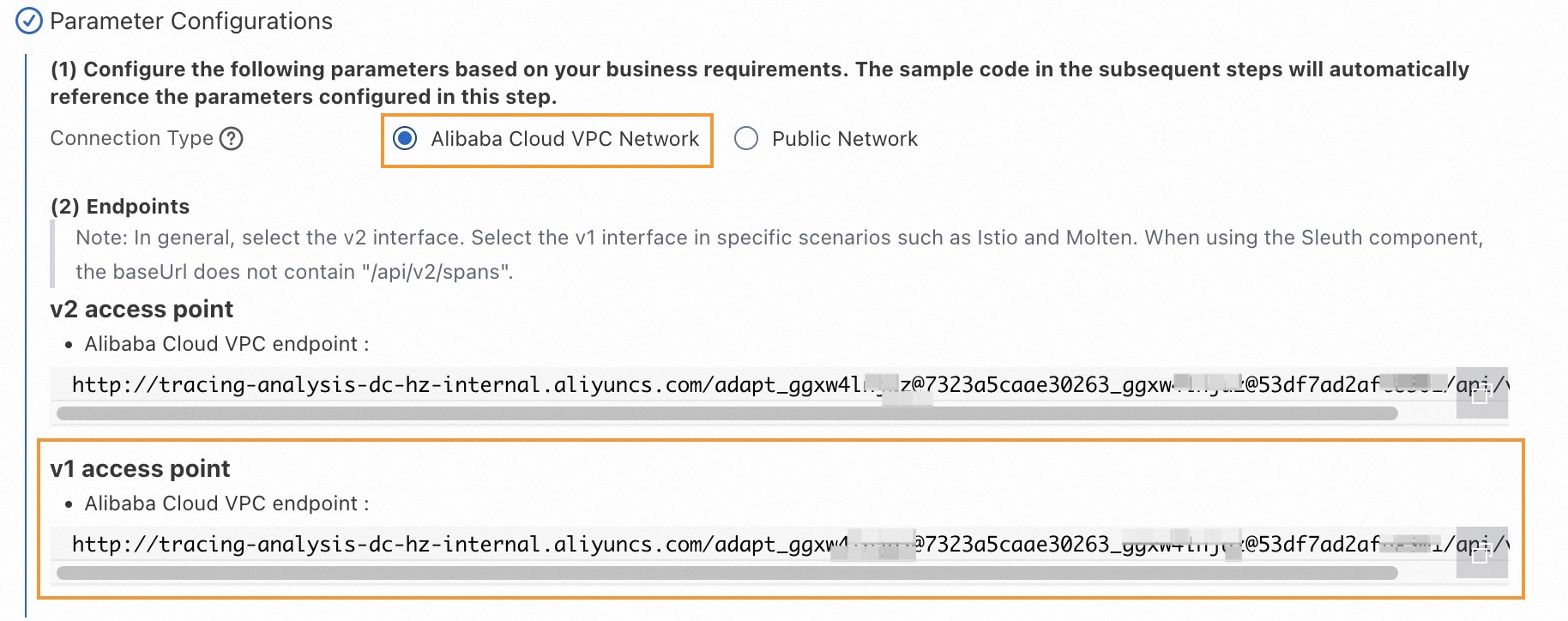

Previous version of the Managed Service for OpenTelemetry console

-

Log on to the Managed Service for OpenTelemetry console.

-

In the left-side navigation pane, click Cluster Configurations. On the page that appears, click the Access point information tab.

-

At the top of the page, select the region from which you want to import trace data.

-

In the Cluster Information section, select Show Token. Click Zipkin in the Client section and record the endpoint.

The NGINX Ingress controller and the Managed Service for OpenTelemetry agent in this example are deployed in the same region. Use a VPC endpoint. If they are in different regions, use a public endpoint.

Use this procedure for NGINX Ingress controller versions that support OpenTracing (< v1.10.2-aliyun.1). OpenTracing uses a Zipkin client to collect data.

Step 2: Enable OpenTracing on the NGINX Ingress controller

- Log on to the ACK console. In the left-side navigation pane, click Clusters.
- On the Clusters page, click the name of your cluster. In the left-side navigation pane, choose Configurations > ConfigMaps.
- In the Namespace drop-down list, select kube-system. Enter nginx-configuration in the Name search box and click the search icon. Click Edit in the Actions column.
- In the Edit panel, click Add to add the following configuration entries, then click OK.

  | Name | Description | Valid value | Example |
  |---|---|---|---|
  | `enable-opentracing` | Whether to enable OpenTracing-based tracing. | true/false | `true` |
  | `zipkin-service-name` | Service name displayed in traces. | Custom value | `nginx-ingress` |
  | `zipkin-collector-host` | Domain name for data import. Remove `http://` from the endpoint obtained in Step 1 and append `?`. Example: `http://tracing-analysis-dc-hz-internal.aliyuncs.com/adapt_****_****/api/v1/spans` becomes `tracing-analysis-dc-hz-internal.aliyuncs.com/adapt_****_****/api/v1/spans?`. | Modified endpoint | `tracing-analysis-dc-hz-internal.aliyuncs.com/adapt_****_****/api/v1/spans?` |
  | `opentracing-trust-incoming-span` | Whether to trust trace context propagated by upstream services. | true/false | `true` |
  | `zipkin-sample-rate` | Fraction of requests to sample. `0`: no data collected. `1`: all requests sampled. Accurate to two decimal places. | 0–1 | `0.1` |
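The `zipkin-collector-host` value is the recorded endpoint with two small changes: the `http://` prefix is removed and a `?` is appended. A minimal Python sketch of that transformation follows. The function name `zipkin_collector_host` is hypothetical, and the masked endpoint is the placeholder used in the table above.

```python
def zipkin_collector_host(endpoint: str) -> str:
    """Convert the Zipkin endpoint from Step 1 into the zipkin-collector-host value."""
    endpoint = endpoint.strip()
    prefix = "http://"
    # Remove the scheme, as described in the table.
    if endpoint.startswith(prefix):
        endpoint = endpoint[len(prefix):]
    # Append the trailing '?' exactly once.
    if not endpoint.endswith("?"):
        endpoint += "?"
    return endpoint


print(zipkin_collector_host(
    "http://tracing-analysis-dc-hz-internal.aliyuncs.com/adapt_****_****/api/v1/spans"
))
```

The function is idempotent, so passing an already converted value returns it unchanged.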

Step 3: Verify trace data in Managed Service for OpenTelemetry

-

Log on to the Managed Service for OpenTelemetry console.

-

In the left-side navigation pane, click Applications.

-

At the top of the Applications page, select the region you configured in Step 1. Click nginx.

-

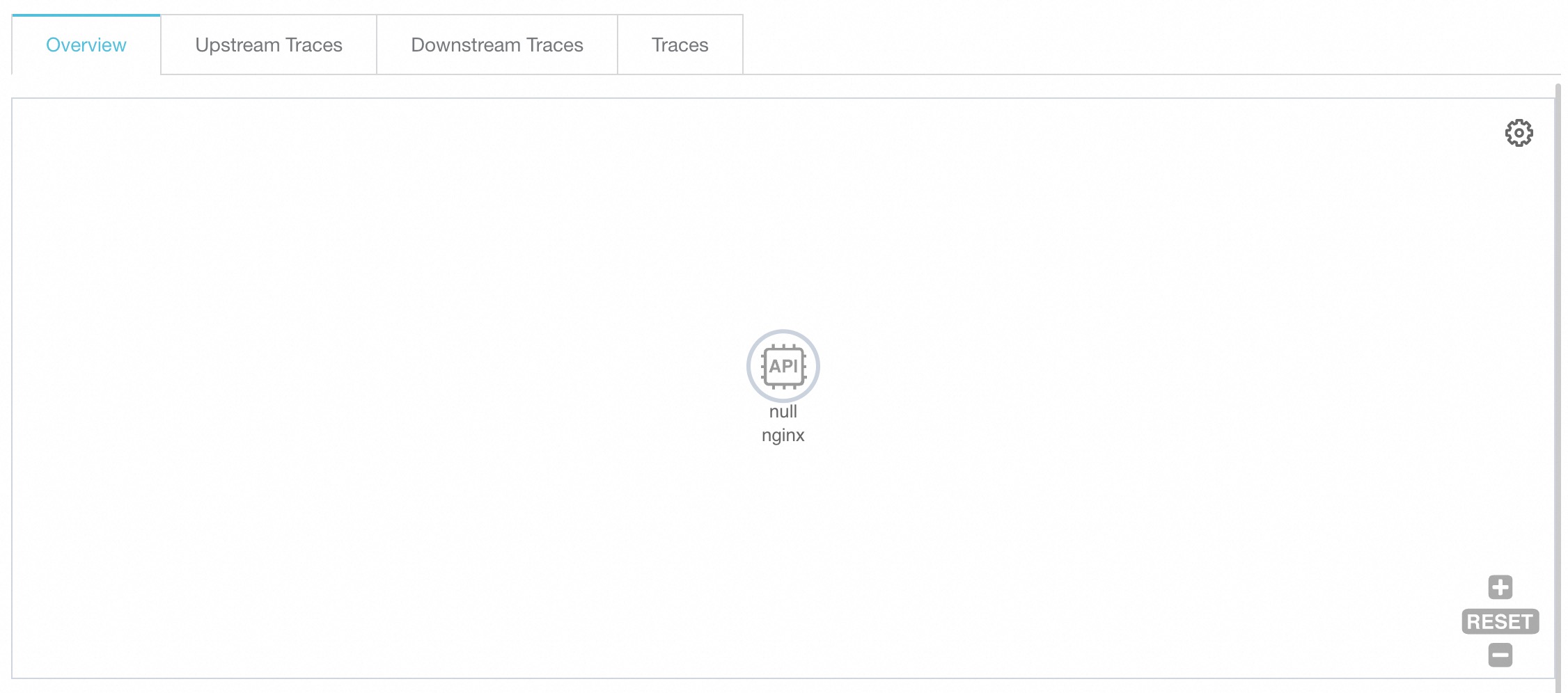

In the left-side navigation pane of the details page, click Interface Calls. Review the trace data on the right side of the page:

-

On the Overview tab, view the trace topology.

-

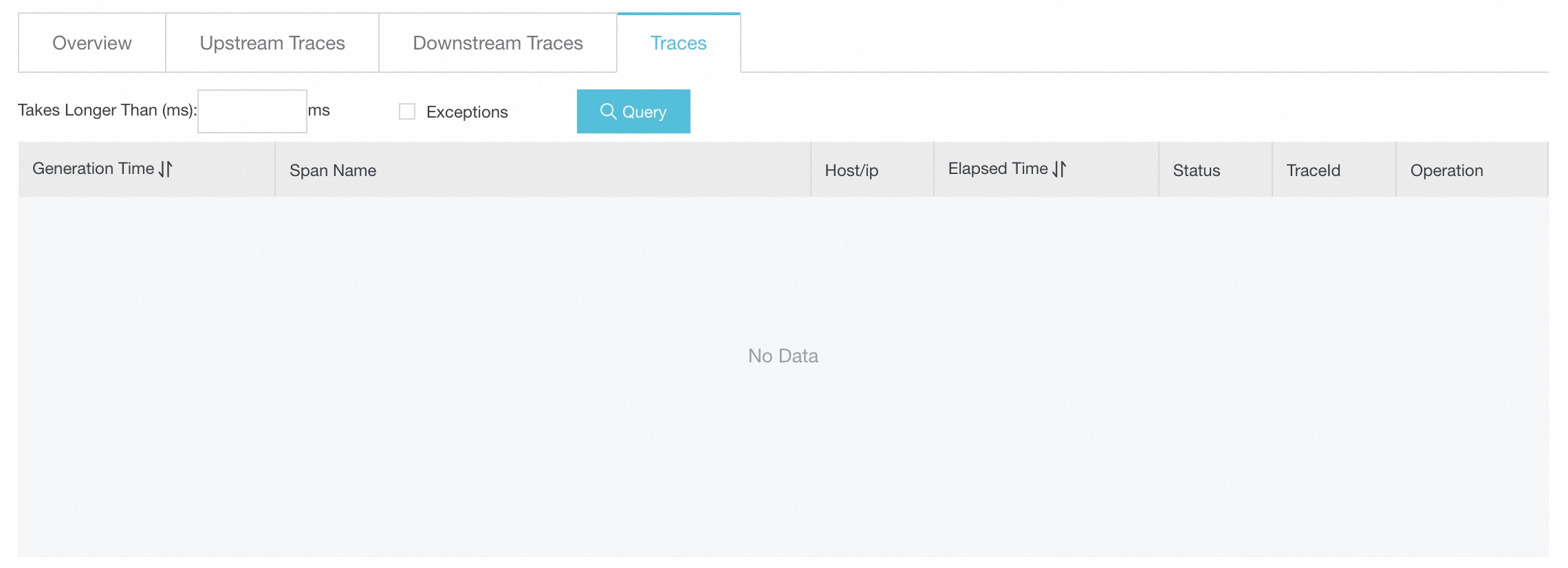

On the Traces tab, view the top 100 most time-consuming traces. For more information, see Interface calls.

-

On the Traces tab, click a trace ID to view span details.

-

(Optional) Change the trace propagation protocol

When OpenTelemetry is enabled, the NGINX Ingress controller passes trace context to downstream services in the W3C Trace Context format. If your frontend or backend applications use a different protocol, such as Jaeger or Zipkin, change the propagation protocol so that spans from the frontend application, NGINX Ingress, and backend application are correctly joined into a single trace.

- Add the `OTEL_PROPAGATORS` environment variable to the nginx-ingress-controller deployment. Follow the same steps as Part A of Step 2 in the OpenTelemetry procedure, then save the changes and redeploy.

  | Variable key | Value | Description |
  |---|---|---|
  | `OTEL_PROPAGATORS` | `tracecontext,baggage,b3,jaeger` | Protocols used to propagate trace context. For more information, see Specify the format to pass trace data. |

- Update the `main-snippet` entry in the nginx-configuration ConfigMap. Follow the same steps as Part B of Step 2 and set `main-snippet` to the following value.

  | Name | Value | Description |
  |---|---|---|
  | `main-snippet` | `env OTEL_EXPORTER_OTLP_HEADERS; env OTEL_PROPAGATORS;` | Loads both the `OTEL_EXPORTER_OTLP_HEADERS` and `OTEL_PROPAGATORS` environment variables into the NGINX configuration. |
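The propagation protocol matters because each protocol carries the same trace ID and span ID in a different HTTP header. The following Python sketch shows the header that each of three propagators would emit for one span. It illustrates the wire formats only and is not the controller's code. The function name `propagation_headers` is hypothetical, the IDs are sample values, and the `baggage` propagator is left out because it carries key-value pairs rather than trace identifiers.

```python
def propagation_headers(trace_id: str, span_id: str, sampled: bool) -> dict:
    """Return the header each propagator uses for the same trace context."""
    return {
        # W3C Trace Context (tracecontext): version-traceid-parentid-flags
        "traceparent": f"00-{trace_id}-{span_id}-{'01' if sampled else '00'}",
        # Zipkin B3 single-header format (b3): traceid-spanid-samplingstate
        "b3": f"{trace_id}-{span_id}-{'1' if sampled else '0'}",
        # Jaeger (jaeger): traceid:spanid:parentspanid:flags, parent field set to 0
        "uber-trace-id": f"{trace_id}:{span_id}:0:{'1' if sampled else '0'}",
    }


headers = propagation_headers(
    "4bf92f3577b34da6a3ce929d0e0e4736", "00f067aa0ba902b7", sampled=True
)
for name, value in headers.items():
    print(f"{name}: {value}")
```

A backend that reads only `uber-trace-id` cannot continue a trace that arrives with only `traceparent`, which is why the propagator list must include every protocol that the applications on either side of the ingress use.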

What's next

- For more information about Managed Service for OpenTelemetry, see What is Managed Service for OpenTelemetry?
- For more information about ACK, see What is Container Service for Kubernetes?
- To import trace data using other clients such as Zipkin, Jaeger, or SkyWalking, configure the corresponding parameters in the nginx-configuration ConfigMap. For more information, see Preparations.