Common questions and answers for deploying and managing workloads in ACK clusters.

How do I deploy a containerized application in an ACK cluster?

Deploying a containerized application involves four phases:

- Write your application code in any language.
- Build a container image using a Dockerfile, a text file of instructions that packages your code and all its dependencies into a portable delivery artifact. A container image is more complete than traditional packages such as JAR, WAR, or RPM files because it bundles the application with every file the container runtime needs. Use that image to start a container, which is the running application. For step-by-step instructions, see Build, package, and run images using a Dockerfile.
- Push the image to a registry. Container Registry (ACR) stores, manages, distributes, and serves images. ACR offers two editions:
  - Personal Edition: for individual developers
  - Enterprise Edition: for enterprise customers

  For more information, see What is Container Registry ACR.
- Deploy workloads in ACK to run your containerized application. ACK manages the full lifecycle of containerized workloads, such as scheduling pods to specific nodes and scaling them as load changes. For more information, see Workloads.

Why do image pulls take too long or fail?

Check the following based on where you're pulling from:

Pulling over the public network

- Verify that your cluster has public network access. See Enable public network access for a cluster.
- Verify that your image registry also allows public access. For an ACR example, see Configure public network access control for ACR.
- Check whether the public IP bandwidth is set too low.

Pulling from ACR

- Verify that the image pull secret is correct. See How do I use imagePullSecrets?.
- If you use the passwordless pull component, verify that it is configured correctly. See Pull images within the same account and Pull images across accounts.

How do I troubleshoot application issues in ACK?

Application failures typically originate from pods, controllers (Deployment, StatefulSet, DaemonSet), or Services. Start by identifying which layer has the problem, then follow the steps below.

Check pods

See Troubleshoot pod anomalies for a complete guide to diagnosing pod-level issues.

Check Deployments

Pod anomalies often surface when controllers like Deployments, DaemonSets, StatefulSets, or Jobs are created. After checking for pod-level issues, look at Deployment events and logs:

- Log on to the ACK console. In the left navigation pane, click Clusters.
- On the Clusters page, find the cluster and click its name. In the left navigation pane, choose Workloads > Deployments.
- On the Deployments page, click the Deployment name, then click Events or Logs to identify the issue.

The process for DaemonSets, StatefulSets, and Jobs is the same.

Check Services

A Service load-balances traffic across a group of pods. Follow these steps to identify Service-related issues.

Step 1: Verify that endpoints exist

Connect to the cluster with kubectl, then run:

```shell
kubectl get endpoints <service_name>
```

The number of addresses in the ENDPOINTS column must match the expected replica count. For example, a Deployment with three replicas should show three addresses.
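If you want to script this check, the Python sketch below counts the addresses in the JSON form of the same object (`kubectl get endpoints <service_name> -o json`). The helper names are hypothetical and this is not an ACK tool. It assumes the standard Endpoints layout: ready pod IPs under `subsets[].addresses` and pods that fail their readiness checks under `subsets[].notReadyAddresses`. Only ready addresses normally receive Service traffic, so the sketch compares those against the expected replica count. The sample data is illustrative.

```python
import json


def count_endpoint_addresses(endpoints_json: str) -> tuple[int, int]:
    """Return (ready, not_ready) counts of unique pod IPs in an Endpoints object."""
    obj = json.loads(endpoints_json)
    ready: set[str] = set()
    not_ready: set[str] = set()
    # A Service whose selector matches no pods has no "subsets" key at all.
    for subset in obj.get("subsets") or []:
        ready.update(a["ip"] for a in (subset.get("addresses") or []))
        not_ready.update(a["ip"] for a in (subset.get("notReadyAddresses") or []))
    return len(ready), len(not_ready)


def endpoints_match_replicas(endpoints_json: str, expected_replicas: int) -> bool:
    """True when the number of ready addresses equals the expected replica count."""
    ready, _ = count_endpoint_addresses(endpoints_json)
    return ready == expected_replicas


if __name__ == "__main__":
    sample = json.dumps({
        "kind": "Endpoints",
        "subsets": [{
            "addresses": [{"ip": "172.16.0.10"}, {"ip": "172.16.0.11"}],
            "notReadyAddresses": [{"ip": "172.16.0.12"}],
            "ports": [{"port": 80, "protocol": "TCP"}],
        }],
    })
    print(count_endpoint_addresses(sample))     # (2, 1)
    print(endpoints_match_replicas(sample, 3))  # False: one of three replicas is not ready
```

A mismatch where the missing replicas show up as not-ready addresses points at failing readiness probes rather than at the selector.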

Missing Service endpoints

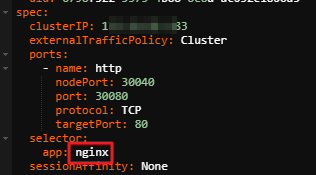

If the Service has no endpoint addresses, the Service selector may not match any pods. To check:

- Find the selector in the Service YAML.
- Query pods using that selector:

  ```shell
  kubectl get pods -l app=<app> -n <namespace>
  ```

  `<app>` is the value of the pod's `app` label. `<namespace>` is the namespace where the Service resides. Omit `-n <namespace>` if the Service is in the default namespace.
- If matching pods exist but the Service still has no endpoints, the Service port may not match the container port. Test connectivity:

  ```shell
  curl <ip>:<port>
  ```

  `<ip>` and `<port>` are the `clusterIP` and `port` values from the Service YAML in the first step. The exact test method depends on your environment. Confirm that the container port specified in the Service is the same port the application actually listens on.
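The selector and port checks above can be expressed as two small functions. This Python sketch works on plain dicts, for example the `spec.selector` and `spec.ports` fields of a Service and the container `ports` list of a pod, read from `kubectl get ... -o json` output. The helper names are hypothetical. Kubernetes does not require a numeric `targetPort` to be declared in the container's `ports` list, so a `False` from `target_port_declared` is a hint to check further rather than proof of a fault; a named `targetPort`, however, must match a declared port name.

```python
def selector_matches(selector: dict[str, str], pod_labels: dict[str, str]) -> bool:
    """True if every key/value pair of a Service selector appears in the pod's labels."""
    # A Service with an empty selector does not get its endpoints managed automatically.
    return bool(selector) and all(pod_labels.get(k) == v for k, v in selector.items())


def target_port_declared(service_port: dict, container_ports: list[dict]) -> bool:
    """True if a Service port's targetPort refers to a port the container declares."""
    # When targetPort is omitted, Kubernetes uses the same value as port.
    target = service_port.get("targetPort", service_port["port"])
    if isinstance(target, str):
        # A named targetPort must match the name of a container port.
        return any(p.get("name") == target for p in container_ports)
    return any(p.get("containerPort") == target for p in container_ports)


if __name__ == "__main__":
    svc_selector = {"app": "nginx"}
    pods = {
        "nginx-7f9c-abcde": {"app": "nginx", "pod-template-hash": "7f9c"},
        "busybox-1": {"app": "busybox"},
    }
    matched = [name for name, labels in pods.items() if selector_matches(svc_selector, labels)]
    print(matched)  # ['nginx-7f9c-abcde']
    print(target_port_declared({"port": 80, "targetPort": 8080}, [{"containerPort": 80}]))  # False
```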

Network forwarding issues

If the client can reach the Service and endpoints are correct, but connections drop immediately, traffic may not be reaching the pods. Check the following:

- Is the pod healthy? See Troubleshoot pod anomalies.
- Is the pod IP reachable? Get pod IPs with `kubectl get pods -o wide`, then log on to any node and run `ping <pod-ip>` to verify network connectivity.
- Is the application listening on the correct port? On any node, run `curl <pod-ip>:<port>` to confirm that the container port in the pod is working as expected.
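The reachability and port checks above can also be scripted. The Python sketch below performs a plain TCP connect to `<pod-ip>:<port>`, which helps when ICMP is blocked (so `ping` fails even though the pod is reachable) or when `curl` is not installed on the node. It only confirms that something accepts TCP connections on that port; unlike `curl`, it does not check the HTTP response. The function name is hypothetical.

```python
import socket


def port_is_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        # create_connection resolves the address and completes the TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers connection refused, timeouts (a subclass of OSError), and unreachable hosts.
        return False


if __name__ == "__main__":
    import sys

    if len(sys.argv) == 3:
        pod_ip, pod_port = sys.argv[1], int(sys.argv[2])
        print(f"{pod_ip}:{pod_port} reachable: {port_is_open(pod_ip, pod_port)}")
    else:
        print("usage: port_check.py <pod-ip> <port>")
```

Run it on a node as `python3 port_check.py <pod-ip> <port>`; a refused connection usually means nothing listens on that port, while a timeout suggests a network path or security group problem.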

How do I manually update Helm?

Helm v2's Tiller server-side component has known security vulnerabilities that allow attackers to install unauthorized applications in clusters. Upgrade to Helm v3. See Upgrading from Helm v2 to Helm v3.

How do I pull images from a Container Registry Enterprise Edition instance in the Chinese mainland to an ACK cluster outside the Chinese mainland?

Purchase the following Container Registry Enterprise Edition instances:

- A Standard or Premium instance in the region inside the Chinese mainland
- A Basic instance in the region outside the Chinese mainland

Then use a sync instance to replicate images from the Chinese mainland region to the region outside the Chinese mainland. See Same-account sync instance. After synchronization, retrieve the registry address from the Enterprise Edition instance in the region outside the Chinese mainland and use it when creating applications in ACK.

ACR Personal Edition has slower synchronization speeds. For self-managed repositories, you must purchase Global Acceleration (GA) to speed up image pulls, which increases cost. Container Registry Enterprise Edition is the recommended option. See Billing information.

How do I perform rolling updates without service interruptions?

When replacing pods during a rolling update, a delay of a few seconds occurs before pod changes sync to the Classic Load Balancer (CLB) instance. This can cause temporary 5XX errors. Configure graceful shutdown to achieve zero-downtime rolling updates. See How to implement zero-downtime rolling updates for Kubernetes.
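Graceful shutdown is commonly implemented with a preStop hook that delays container termination until the load balancer has stopped sending new requests to the old pod. The Python sketch below patches a Deployment manifest (as a dict, for example loaded from `kubectl get deploy <name> -o json`) to add such a hook. It is one possible approach under stated assumptions, not the procedure from the linked article: the 10-second sleep and 20-second shutdown budget are placeholder values, the helper name is hypothetical, and the container image is assumed to contain a `sleep` binary. `terminationGracePeriodSeconds` is set to cover both values because the preStop hook runs inside the grace period.

```python
import copy


def add_graceful_shutdown(deployment: dict, prestop_sleep_seconds: int = 10,
                          app_shutdown_seconds: int = 20) -> dict:
    """Return a copy of a Deployment manifest with a preStop sleep on every container."""
    if prestop_sleep_seconds < 0 or app_shutdown_seconds < 0:
        raise ValueError("durations must be non-negative")
    result = copy.deepcopy(deployment)
    pod_spec = result["spec"]["template"]["spec"]
    for container in pod_spec["containers"]:
        # Keep serving while the load balancer removes this pod from its backends.
        # Assumes the image ships a `sleep` binary (distroless images may not).
        container.setdefault("lifecycle", {})["preStop"] = {
            "exec": {"command": ["sleep", str(prestop_sleep_seconds)]}
        }
    # The preStop hook runs inside the grace period, so the period must cover
    # the sleep plus the time the application needs to finish in-flight work.
    pod_spec["terminationGracePeriodSeconds"] = prestop_sleep_seconds + app_shutdown_seconds
    return result


if __name__ == "__main__":
    import json

    deployment = {"spec": {"template": {"spec": {"containers": [{"name": "nginx", "image": "nginx"}]}}}}
    print(json.dumps(add_graceful_shutdown(deployment), indent=2))
```

Tune the sleep to be longer than the observed delay before pod changes reach the CLB instance.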

How do I get a container image for a workload?

For prerequisites and guidance on pulling a container image, see Pull image.

How do I restart a container?

Kubernetes does not support restarting individual containers directly. Instead, delete the pod. If the pod is managed by a controller such as a Deployment or DaemonSet, the controller automatically creates a replacement; a standalone pod is not recreated.

- List pods to find the one to restart:

  ```shell
  kubectl get pods
  ```

- Delete the pod:

  ```shell
  kubectl delete pod <pod-name>
  ```

- Verify that the new pod is running:

  ```shell
  kubectl get pods
  ```

  Confirm that the pod status is Running.

In production, manage containers through higher-level objects like ReplicaSets and Deployments rather than directly manipulating pods. This preserves cluster state consistency.

How do I change the namespace of a Deployment?

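Kubernetes does not allow you to change the namespace of an existing object, so you move a Deployment by recreating it in the new namespace. Its dependent resources, such as persistent volume claims (PVCs), ConfigMaps, and Secrets, must also be recreated manually in the new namespace, because pods can only reference these resources within their own namespace.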

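- Export the Deployment configuration:

  ```shell
  kubectl get deploy <deployment-name> -n <old-namespace> -o yaml > deployment.yaml
  ```

- Edit `deployment.yaml`: replace the `namespace` value, and delete server-generated fields such as `uid`, `resourceVersion`, and `creationTimestamp`, as well as the `status` section. The API server rejects a create request for an object that already carries a `resourceVersion`.

  ```yaml
  apiVersion: apps/v1
  kind: Deployment
  metadata:
    labels:
      app: nginx
    name: nginx-deployment
    namespace: new-namespace  # Specify the new namespace.
  ...
  ```

- Apply the updated configuration:

  ```shell
  kubectl apply -f deployment.yaml
  ```

- Verify that the Deployment is running in the new namespace:

  ```shell
  kubectl get deploy -n new-namespace
  ```

- After you confirm that the new Deployment works, delete the original one with `kubectl delete deploy <deployment-name> -n <old-namespace>`.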
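If you prefer to script the edit step, the Python sketch below performs it on the JSON form of the export (`kubectl get deploy <deployment-name> -n <old-namespace> -o json > deployment.json`; `kubectl apply -f` accepts JSON as well as YAML). The helper name and the list of stripped fields are assumptions of this sketch. It also drops the `kubectl.kubernetes.io/last-applied-configuration` annotation, which describes the object as last applied in the old namespace; `kubectl apply` writes a fresh one.

```python
import copy

# Metadata fields the API server populates; a leftover resourceVersion makes the create fail.
SERVER_SET_METADATA = ("uid", "resourceVersion", "creationTimestamp", "generation",
                       "managedFields", "selfLink")


def move_to_namespace(manifest: dict, new_namespace: str) -> dict:
    """Return a copy of an exported manifest retargeted at new_namespace."""
    result = copy.deepcopy(manifest)
    metadata = result.setdefault("metadata", {})
    for field in SERVER_SET_METADATA:
        metadata.pop(field, None)
    annotations = metadata.get("annotations") or {}
    annotations.pop("kubectl.kubernetes.io/last-applied-configuration", None)
    if annotations:
        metadata["annotations"] = annotations
    else:
        metadata.pop("annotations", None)
    metadata["namespace"] = new_namespace
    # Status is owned by the controller and is ignored on create.
    result.pop("status", None)
    return result


if __name__ == "__main__":
    import json
    import sys

    if len(sys.argv) == 3:
        with open(sys.argv[1]) as f:
            print(json.dumps(move_to_namespace(json.load(f), sys.argv[2]), indent=2))
    else:
        print("usage: move_ns.py <deployment.json> <new-namespace>")
```

Redirect the output to a new file and pass that file to `kubectl apply -f`.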

How do I expose pod information to running containers?

ACK follows native Kubernetes community specifications. Two methods are supported:

- Environment variables: pass pod information to a container through environment variables. See Expose pod information to containers through environment variables.
- Files: mount pod information as files inside the container by using a Downward API volume. See Expose pod information to containers through files.
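As an illustration of the consuming side, the Python sketch below reads pod information from both sources inside the container. It assumes a pod spec that maps `metadata.name` and `metadata.namespace` to the environment variables `MY_POD_NAME` and `MY_POD_NAMESPACE`, and a Downward API volume that exposes `metadata.labels` at `/etc/podinfo/labels`; these variable names and the path are hypothetical choices for this example. The kubelet writes the labels file as one `key="value"` pair per line.

```python
import json
import os


def pod_identity() -> dict[str, str]:
    """Read the pod name and namespace injected as environment variables via fieldRef."""
    return {
        "name": os.environ.get("MY_POD_NAME", "unknown"),
        "namespace": os.environ.get("MY_POD_NAMESPACE", "unknown"),
    }


def parse_downward_labels(text: str) -> dict[str, str]:
    """Parse a Downward API labels or annotations file (one key="value" pair per line)."""
    result = {}
    for line in text.splitlines():
        if not line.strip():
            continue
        key, _, quoted = line.partition("=")
        # Values are double-quoted; json.loads also undoes common escapes such as \".
        result[key] = json.loads(quoted)
    return result


if __name__ == "__main__":
    print(pod_identity())
    path = "/etc/podinfo/labels"
    if os.path.exists(path):
        with open(path) as f:
            print(parse_downward_labels(f.read()))
```

Files from a Downward API volume are updated when labels or annotations change, whereas environment variables are fixed when the container starts.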

How do I use imagePullSecrets?

- The passwordless pull component cannot be used together with a manually specified `imagePullSecrets` field.
- The Secret must be in the same namespace as the workload.

ACR Personal Edition instances created on or after September 9, 2024 do not support aliyun-acr-credential-helper. Store your credentials in a Kubernetes Secret and reference it via imagePullSecrets.

Step 1: Create the Secret

```shell
kubectl create secret docker-registry image-secret \
  --docker-server=<ACR-registry> \
  --docker-username=<username> \
  --docker-password=<password> \
  --docker-email=<email@example.com>
```

| Parameter | Description |
|---|---|
| `--docker-server` | ACR instance endpoint. Must match the network type (internal or public) of the registry address. |
| `--docker-username` | Username of the ACR access credential. |
| `--docker-password` | Password of the ACR access credential. |
| `--docker-email` | Optional. |
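The Secret that this command creates has type `kubernetes.io/dockerconfigjson` and stores the credentials as a JSON document under the `.dockerconfigjson` key. The Python sketch below builds an equivalent document so you can see what the Secret contains, or compare it with one decoded from the cluster (for example, `kubectl get secret image-secret --output="jsonpath={.data.\.dockerconfigjson}" | base64 -d`). The helper names are hypothetical; this is an illustration, not an ACK or kubectl API.

```python
import base64
import json
from typing import Optional


def docker_config_json(server: str, username: str, password: str,
                       email: Optional[str] = None) -> str:
    """Build the .dockerconfigjson document stored in a docker-registry Secret."""
    entry = {
        "username": username,
        "password": password,
        # "auth" holds base64("username:password").
        "auth": base64.b64encode(f"{username}:{password}".encode()).decode(),
    }
    if email:
        entry["email"] = email
    return json.dumps({"auths": {server: entry}})


def secret_data_value(config_json: str) -> str:
    """Secret data is base64-encoded; this is the value under data[".dockerconfigjson"]."""
    return base64.b64encode(config_json.encode()).decode()


if __name__ == "__main__":
    # Placeholder values only; never hard-code real credentials.
    print(docker_config_json("<ACR-registry>", "<username>", "<password>"))
```

The `auths` key is the registry host, which is why `--docker-server` must match the registry address that the workload's image uses.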

Step 2: Reference the Secret

Choose one of the following methods:


Option 1: Add to a ServiceAccount

All workloads that use the ServiceAccount can pull images without specifying the Secret individually.

```yaml
apiVersion: v1
kind: ServiceAccount
metadata:
  name: default
  namespace: default
imagePullSecrets:
  - name: image-secret  # The ACR Secret created in Step 1
```

Any Deployment using this ServiceAccount can then pull images:

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-test
  namespace: default
  labels:
    app: nginx
spec:
  replicas: 2
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      serviceAccountName: default  # If you use the default ServiceAccount of the namespace, you can omit this field.
      containers:
        - name: nginx
          image: <acrID>.cr.aliyuncs.com/<repo>/nginx:latest  # Replace with the ACR instance address.
```

Option 2: Specify directly in the workload

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-test
  namespace: default
  labels:
    app: nginx
spec:
  replicas: 2
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      imagePullSecrets:
        - name: image-secret  # Use the Secret created in the previous step.
      containers:
        - name: nginx
          image: <acrID>.cr.aliyuncs.com/<repo>/nginx:latest  # Replace with the Registry Address of the ACR repository.
```

Verify

After applying the configuration, confirm the pod is running:

```shell
kubectl get pods
```

Why do pulls still fail after configuring the passwordless component?

The passwordless pull component may be misconfigured. Check for these two common causes:

- The instance information in the component does not match the ACR instance.
- The image address used to pull does not match the domain name configured in the component.

Follow Pull images within the same account to verify the configuration.

If the pull still fails after the configuration is correct, a manually specified imagePullSecrets field in the workload YAML may be conflicting with the passwordless component. Remove the imagePullSecrets field, then delete and recreate the pod.
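To check the second cause quickly, you can compare the registry host in each image address with the domains configured in the component. The Python sketch below does this. It assumes you have copied the configured domain names into a list by hand (it does not read the component's configuration), applies Docker's rule for deciding whether the first path segment of an image reference is a registry host, and uses exact, case-insensitive matching, which may differ from how the component itself matches domains. The helper names are hypothetical.

```python
def registry_host(image: str) -> str:
    """Return the registry host of an image reference, defaulting to docker.io."""
    first, sep, _ = image.partition("/")
    # Docker's rule: the first segment is a registry only if it contains "." or ":" or is "localhost".
    if sep and ("." in first or ":" in first or first == "localhost"):
        return first
    return "docker.io"


def host_covered(image: str, configured_domains: list[str]) -> bool:
    """True if the image's registry host exactly matches one of the configured domains."""
    host = registry_host(image).lower()
    return any(host == domain.strip().lower() for domain in configured_domains)


if __name__ == "__main__":
    domains = ["<instance>-registry.cn-hangzhou.cr.aliyuncs.com"]  # placeholder
    image = "<instance>-registry.cn-hangzhou.cr.aliyuncs.com/<repo>/nginx:latest"
    print(registry_host(image), host_covered(image, domains))
```

A typical mismatch is a workload that references a different endpoint of the same instance than the one listed in the component.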

Why do containers fail to start on new nodes after enabling node pool image acceleration?

After enabling Container Image Acceleration for a node pool, you may see the following error:

```
failed to create containerd container: failed to attach and mount for snapshot 46: failed to enable target for /sys/kernel/config/target/core/user_99999/dev_46, failed:failed to open remote file as tar file xxxx
```

This error occurs when the aliyun-acr-acceleration-suite component is not installed, or when pull credentials are not configured for private images. Container Image Acceleration requires both. See Configure container image pull credentials.

If the issue persists after installing the component, disable container image acceleration first to restore your services, then reconfigure it.