When standard OS images don't meet your requirements — for example, when you need to preinstall software, pre-cache container images, or install a GPU driver before nodes join the cluster — build a custom OS image using Alicloud Image Builder. Node pools configured with a custom image provision faster and are ready for workloads immediately after joining the cluster.

Alicloud Image Builder runs as a Kubernetes Job inside your ACK cluster. It launches a temporary Elastic Compute Service (ECS) instance, runs your provisioning scripts, captures the result as a custom image, and releases the temporary instance automatically.

Prerequisites

Before you begin, make sure you have:

- An ACK cluster. See Create an ACK managed cluster.
- kubectl connected to the cluster. See Get a cluster kubeconfig and connect to the cluster using kubectl.
- An AccessKey pair with the required ECS and VPC permissions. See the Grant permissions to the AccessKey pair step in this topic.

How it works

The image build process runs entirely inside your ACK cluster:

- A ConfigMap stores the Packer build configuration (parameters, builder settings, and provisioner scripts).
- A Job runs the image-builder container, which invokes Packer with the configuration from the ConfigMap.
- Packer creates a temporary ECS instance from your source_image, runs the provisioner scripts (install software, set environment variables, pre-cache images), then snapshots the instance into a new custom image.
- The temporary ECS instance is released automatically after the image is created.
- The custom image is available in ECS and can be selected when you create a node pool.

Build a custom OS image

This section walks through building a custom image using a Job. The example uses a ConfigMap named build-config and a Job named image-builder.

Step 1: Create the build configuration

Create a ConfigMap that defines the Packer build configuration.

- Create a file named build-config.yaml that defines the ConfigMap.

Important: Before assigning a custom image to a node pool, make sure the image's build settings match the node pool configuration exactly, including cluster version, region, container runtime, and instance type (GPU or CPU). A mismatch prevents nodes from joining the cluster. Validate the image by first using it in a regular node pool with matching settings, then confirming your applications run correctly on those nodes.

The following tables describe the key parameters.

Packer configuration sections

| Section | Example | Description |
| --- | --- | --- |
| variables | "access_key": "{{env `ALICLOUD_ACCESS_KEY`}}" | Defines build-time variables. Store sensitive values like access_key and secret_key as environment variables to avoid hardcoding credentials in the configuration file. |
| builders | "type": "alicloud-ecs" | Defines the image builder. With alicloud-ecs, Packer creates a temporary ECS instance to run the build, then releases the instance after the image is captured. |
| provisioners | "type": "shell" | Defines the operations to run on the temporary instance before the image is captured, for example installing software with yum install redis.x86_64 -y. See Provisioner configuration for all supported types. |

Image build parameters

| Parameter | Type | Required | Default | Description |
| --- | --- | --- | --- | --- |
| access_key | string | Yes | None | The AccessKey ID used to create the custom image. See Obtain an AccessKey pair. |
| secret_key | string | Yes | None | The AccessKey secret used to create the custom image. |
| region | string | Yes | None | The region where the custom image is created. Example: cn-beijing. |
| image_name | string | Yes | None | The name of the custom image. Must be globally unique. Example: ack-custom_image. |
| source_image | string | Yes | None | The ID of the Alibaba Cloud public image used as the base. The custom image inherits the same OS. See OS images supported by ACK. |
| instance_type | string | Yes | None | The ECS instance type used to run the provisioning scripts. For GPU images, specify a GPU-accelerated instance type. Example: ecs.c6.xlarge. |
| disk_size | int | No | 40 | System disk size in GB. Example: 120. |
| vpc_id | string | No | Auto-created | If blank, a virtual private cloud (VPC) is created for the build and deleted afterward. |
| vswitch_id | string | No | Auto-created | If blank, a vSwitch is created for the build and deleted afterward. |
| security_group_id | string | No | Auto-created | If blank, a security group is created for the build and deleted afterward. |
| RUNTIME | string | Yes | None | Container runtime. Valid values: containerd, docker. |
| RUNTIME_VERSION | string | Yes | 1.6.20 (containerd), 19.03.15 (docker) | Version of the container runtime. |
| SKIP_SECURITY_FIX | bool | Yes | None | Set to true to skip OS security updates during the build. |
| KUBE_VERSION | string | Yes | None | Kubernetes version of the target cluster. Example: 1.30.1-aliyun.1. |
| OS_ARCH | string | Yes | None | CPU architecture. Valid values: amd64, arm64. |
| PRESET_GPU | bool | No | None | Set to true to preinstall a GPU driver to accelerate startup. |
| NVIDIA_DRIVER_VERSION | string | No | 460.91.03 | GPU driver version to preinstall. Takes effect only when PRESET_GPU=true. |
| MOUNT_RUNTIME_DATADISK | bool | No | None | Set to true to enable dynamic data disk attachment during ECS instance runtime when using custom images with pre-cached application dependencies. |

- Deploy the ConfigMap to the cluster:

kubectl apply -f build-config.yaml
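The content of build-config.yaml is not reproduced in this topic. As a rough sketch only, the following script writes a ConfigMap whose Packer template reuses the variables, builder fields, and provisioner pattern from the data disk example later in this topic. The data key ack.json and the choice of provisioner commands are assumptions made for illustration; adjust them to whatever your build Job and workloads actually need.

```shell
#!/usr/bin/env bash
set -euo pipefail

# Write a ConfigMap manifest that embeds a Packer template.
# ASSUMPTION: the data key "ack.json" is hypothetical; use the file name that
# your build Job actually passes to Packer.
# The quoted heredoc delimiter keeps the Packer backticks literal.
cat > build-config.yaml <<'EOF'
apiVersion: v1
kind: ConfigMap
metadata:
  name: build-config
data:
  ack.json: |-
    {
      "variables": {
        "image_name": "ack-custom_image",
        "source_image": "aliyun_3_x64_20G_alibase_20240528.vhd",
        "instance_type": "ecs.c6.xlarge",
        "region": "{{env `ALICLOUD_REGION`}}",
        "access_key": "{{env `ALICLOUD_ACCESS_KEY`}}",
        "secret_key": "{{env `ALICLOUD_SECRET_KEY`}}"
      },
      "builders": [
        {
          "type": "alicloud-ecs",
          "access_key": "{{user `access_key`}}",
          "secret_key": "{{user `secret_key`}}",
          "region": "{{user `region`}}",
          "image_name": "{{user `image_name`}}",
          "source_image": "{{user `source_image`}}",
          "instance_type": "{{user `instance_type`}}",
          "ssh_username": "root",
          "skip_image_validation": "true",
          "io_optimized": "true"
        }
      ],
      "provisioners": [
        {
          "type": "file",
          "source": "scripts/ack-optimized-os-linux3-all.sh",
          "destination": "/root/"
        },
        {
          "type": "shell",
          "inline": [
            "export RUNTIME=containerd",
            "export SKIP_SECURITY_FIX=true",
            "export KUBE_VERSION=1.30.1-aliyun.1",
            "export OS_ARCH=amd64",
            "bash /root/ack-optimized-os-linux3-all.sh",
            "yum install redis.x86_64 -y"
          ]
        }
      ]
    }
EOF

echo "Wrote build-config.yaml"
```

Credentials and region are read from environment variables here, so nothing sensitive is stored in the ConfigMap itself.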

Step 2: Set up credentials and create the build Job

Alicloud Image Builder needs ECS and VPC permissions to create and manage the temporary resources used during the build.

Grant permissions to the AccessKey pair

Attach the following policy to the RAM user or role associated with your AccessKey pair:

Store credentials as a Kubernetes Secret

- Generate Base64-encoded strings for your AccessKey pair:

echo -n "yourAccessKeyID" | base64
echo -n "yourAccessKeySecret" | base64

- Create a Secret named my-secret with the encoded values:

apiVersion: v1
kind: Secret
metadata:
  name: my-secret
  namespace: default
type: Opaque
data:
  ALICLOUD_ACCESS_KEY: TFRI****************  # Base64-encoded AccessKey ID
  ALICLOUD_SECRET_KEY: a0zY****************  # Base64-encoded AccessKey secret

The following table maps each Secret field to the corresponding build configuration parameter:

| Secret field | Corresponds to | Source |
| --- | --- | --- |
| ALICLOUD_ACCESS_KEY | access_key in build-config.yaml | Output from the first echo command |
| ALICLOUD_SECRET_KEY | secret_key in build-config.yaml | Output from the second echo command |
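The two steps can also be scripted. The following is a minimal sketch, assuming placeholder credentials and a hypothetical output file named my-secret.yaml. It uses printf instead of echo so that no trailing newline is ever encoded, and strips any line wrapping that the base64 tool may add to long values.

```shell
#!/usr/bin/env bash
set -euo pipefail

# Placeholder credentials; replace with your real AccessKey pair.
ACCESS_KEY_ID="yourAccessKeyID"
ACCESS_KEY_SECRET="yourAccessKeySecret"

# Encode without a trailing newline and remove any wrapping newlines.
ENCODED_ID=$(printf '%s' "$ACCESS_KEY_ID" | base64 | tr -d '\n')
ENCODED_SECRET=$(printf '%s' "$ACCESS_KEY_SECRET" | base64 | tr -d '\n')

# ASSUMPTION: my-secret.yaml is a hypothetical file name for the manifest.
cat > my-secret.yaml <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: my-secret
  namespace: default
type: Opaque
data:
  ALICLOUD_ACCESS_KEY: ${ENCODED_ID}
  ALICLOUD_SECRET_KEY: ${ENCODED_SECRET}
EOF

# Round-trip check: decoding must return the original AccessKey ID.
[ "$(printf '%s' "$ENCODED_ID" | base64 -d)" = "$ACCESS_KEY_ID" ]
echo "Wrote my-secret.yaml"
```

After reviewing the file, apply it with kubectl apply -f my-secret.yaml.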

Create and run the build Job

Create a file named build.yaml. The Job launches a temporary ECS instance of the specified instance_type using source_image, runs the provisioner scripts, captures the result as a new custom image in the specified region, then releases the temporary instance.
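The content of build.yaml is not reproduced in this topic. The sketch below shows one plausible shape for such a Job, built only from standard Kubernetes fields: it injects the Secret values as environment variables and mounts the build-config ConfigMap as files. The container image reference, the packer build arguments, the /config mount path, the ack.json file name, and the region value are all assumptions and must be replaced with the values for your environment.

```shell
#!/usr/bin/env bash
set -euo pipefail

# ASSUMPTIONS (not confirmed by this topic): the image reference, the
# "packer build" arguments, the /config mount path, and the ack.json key
# are placeholders for illustration.
cat > build.yaml <<'EOF'
apiVersion: batch/v1
kind: Job
metadata:
  name: image-builder
  namespace: default
spec:
  backoffLimit: 0            # do not retry a failed build automatically
  template:
    spec:
      restartPolicy: Never
      containers:
        - name: image-builder
          image: <image-builder-image>   # placeholder, replace before applying
          command: ["packer"]
          args: ["build", "/config/ack.json"]
          env:
            - name: ALICLOUD_ACCESS_KEY
              valueFrom:
                secretKeyRef:
                  name: my-secret
                  key: ALICLOUD_ACCESS_KEY
            - name: ALICLOUD_SECRET_KEY
              valueFrom:
                secretKeyRef:
                  name: my-secret
                  key: ALICLOUD_SECRET_KEY
            - name: ALICLOUD_REGION
              value: cn-beijing          # example region
          volumeMounts:
            - name: config
              mountPath: /config
      volumes:
        - name: config
          configMap:
            name: build-config
EOF

echo "Wrote build.yaml"
```

Setting backoffLimit to 0 is a design choice in this sketch: a failed build is surfaced immediately instead of launching further temporary ECS instances on retry.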

Deploy the Job to start the build:

kubectl apply -f build.yaml

Step 3: (Optional) View the build log

The build log records each phase of the process: parameter validation, temporary resource creation, provisioner execution, image capture, and cleanup. To view the log:

- Log on to the ACK console. In the left-side navigation pane, click Clusters.
- On the Clusters page, click the name of your cluster. In the left navigation pane, choose Workloads > Jobs.
- Find the Job and click Details in the Actions column. Click the Logs tab.
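If you prefer the command line, the same log can usually be read with kubectl. This is a minimal sketch, assuming the Job is named image-builder and runs in the default namespace; the two function names are hypothetical helpers, and the 60-minute timeout is an arbitrary choice.

```shell
#!/usr/bin/env bash

# Stream the build log from the pod created by the Job.
follow_build_log() {
  kubectl -n default logs -f job/image-builder
}

# Block until the Job reports the Complete condition or the timeout passes.
# ASSUMPTION: 60 minutes is an arbitrary upper bound for an image build.
wait_for_build() {
  kubectl -n default wait --for=condition=complete job/image-builder --timeout=60m
}
```

Source the file and call follow_build_log in one terminal while wait_for_build runs in another, for example in a CI step that should fail when the build does not finish in time.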

Provisioner configuration

A provisioner runs on the temporary ECS instance during the build to install software, patch the kernel, create users, download application code, or build a custom Alibaba Cloud Linux 3 image. Provisioners execute in the order they are listed.

Run shell scripts

"provisioners": [{
  "type": "shell",
  "script": "script.sh"
}]

Run Ansible playbooks

"provisioners": [
  {
    "type": "ansible",
    "playbook_file": "./playbook.yml"
  }
]

Install the CPFS client

Installing the Cloud Parallel File System (CPFS) client requires multiple packages, some of which involve real-time compilation. Baking the client into a custom image avoids this cost on every new node.

Build a GPU-accelerated node image

Do not assign an image with a preinstalled GPU driver to CPU-only nodes.

Set PRESET_GPU=true and specify a GPU-accelerated instance type for both the build and the target node pool.
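As an illustration, the shell provisioner for a GPU build might export the GPU parameters before running the node initialization script. This sketch reuses the script name and parameter values that appear elsewhere in this topic, and the driver version shown is the documented default. The output file gpu-provisioner.json is a hypothetical scratch file; in practice the fragment belongs inside the Packer template in build-config.yaml, with instance_type set to a GPU-accelerated type.

```shell
#!/usr/bin/env bash
set -euo pipefail

# Write the provisioners fragment for a GPU image build.
# ASSUMPTION: gpu-provisioner.json is a scratch file used for illustration.
cat > gpu-provisioner.json <<'EOF'
{
  "provisioners": [
    {
      "type": "file",
      "source": "scripts/ack-optimized-os-linux3-all.sh",
      "destination": "/root/"
    },
    {
      "type": "shell",
      "inline": [
        "export RUNTIME=containerd",
        "export SKIP_SECURITY_FIX=true",
        "export KUBE_VERSION=1.30.1-aliyun.1",
        "export OS_ARCH=amd64",
        "export PRESET_GPU=true",
        "export NVIDIA_DRIVER_VERSION=460.91.03",
        "bash /root/ack-optimized-os-linux3-all.sh"
      ]
    }
  ]
}
EOF

echo "Wrote gpu-provisioner.json"
```

The exports and the script call share one inline list so that the variables are still set when the initialization script runs.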

Pre-cache application images in the OS image

Baking application images into the OS image eliminates the per-node pull time when nodes join the cluster. The example below pulls the pause image into the custom image.

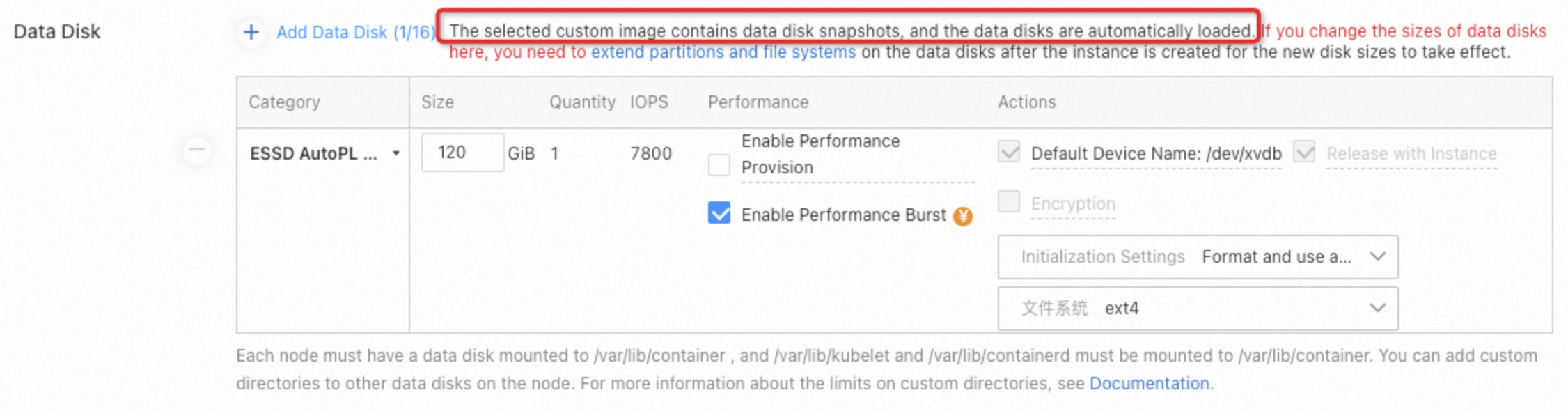

Preserve pre-cached images when a data disk is attached

When an ECS instance with a mounted data disk is added to a node pool, the data disk is initialized, which removes any pre-cached application images. To keep the pre-cached images, add a data disk to the custom image during the build so that a data disk snapshot is generated. When a new node is created from the custom image, the data disk is restored from that snapshot, preserving the application images.

{
  "variables": {
    "image_name": "ack-custom_image",
    "source_image": "aliyun_3_x64_20G_alibase_20240528.vhd",
    "instance_type": "ecs.c6.xlarge",
    "access_key": "{{env `ALICLOUD_ACCESS_KEY`}}",
    "region": "{{env `ALICLOUD_REGION`}}",
    "secret_key": "{{env `ALICLOUD_SECRET_KEY`}}"
  },
  "builders": [
    {
      "type": "alicloud-ecs",
      "system_disk_mapping": {
        "disk_size": 120,
        "disk_category": "cloud_essd"
      },
      "image_disk_mappings": {
        "disk_size": 40,
        "disk_category": "cloud_auto"
      },
      "access_key": "{{user `access_key`}}",
      "secret_key": "{{user `secret_key`}}",
      "region": "{{user `region`}}",
      "image_name": "{{user `image_name`}}",
      "source_image": "{{user `source_image`}}",
      "instance_type": "{{user `instance_type`}}",
      "ssh_username": "root",
      "skip_image_validation": "true",
      "io_optimized": "true"
    }
  ],
  "provisioners": [
    {
      "type": "file",
      "source": "scripts/ack-optimized-os-linux3-all.sh",
      "destination": "/root/"
    },
    {
      "type": "shell",
      "inline": [
        "export RUNTIME=containerd",
        "export SKIP_SECURITY_FIX=true",
        "export KUBE_VERSION=1.30.1-aliyun.1",
        "export OS_ARCH=amd64",
        "export MOUNT_RUNTIME_DATADISK=true",
        "bash /root/ack-optimized-os-linux3-all.sh",
        "ctr -n k8s.io i pull registry-cn-hangzhou-vpc.ack.aliyuncs.com/acs/pause:3.9",
        "mv /var/lib/containerd /var/lib/container/containerd"
      ]
    }
  ]
}

The final mv command moves the container runtime data to the data disk. It is described here rather than in an inline comment because JSON does not support comments.

When configuring the node pool, select a custom image that includes the data disk snapshot. The system automatically associates the corresponding data disk snapshot.

Pull images from a private registry

When the container runtime is containerd:

ctr -n k8s.io i pull --user=username:password nginx

When the container runtime is Docker:

docker login <image_address> -u user -p password
docker pull nginx

Pull from a private registry after the custom image is built

Use this approach when you need Docker credentials baked into the image.

- On a Linux server with Docker installed, log in to generate a credential file:

docker login --username=zhongwei.***@aliyun-test.com --password xxxxxxxxxx registry.cn-beijing.aliyuncs.com

After login succeeds, Docker creates a credential file at /root/.docker/config.json.

- Create a ConfigMap from the credential file:

apiVersion: v1
kind: ConfigMap
metadata:
  name: docker-config
data:
  config.json: |-
    {
      "auths": {
        "registry.cn-beijing.aliyuncs.com": {
          "auth": "xxxxxxxxxxxxxx"
        }
      },
      "HttpHeaders": {
        "User-Agent": "Docker-Client/19.03.15 (linux)"
      }
    }

- Modify build.yaml to mount the ConfigMap into the build pod.

- Add the Docker credential file to build-config.yaml and update the provisioner to copy it and pull the private image.

- Deploy the updated ConfigMap and run the Job.

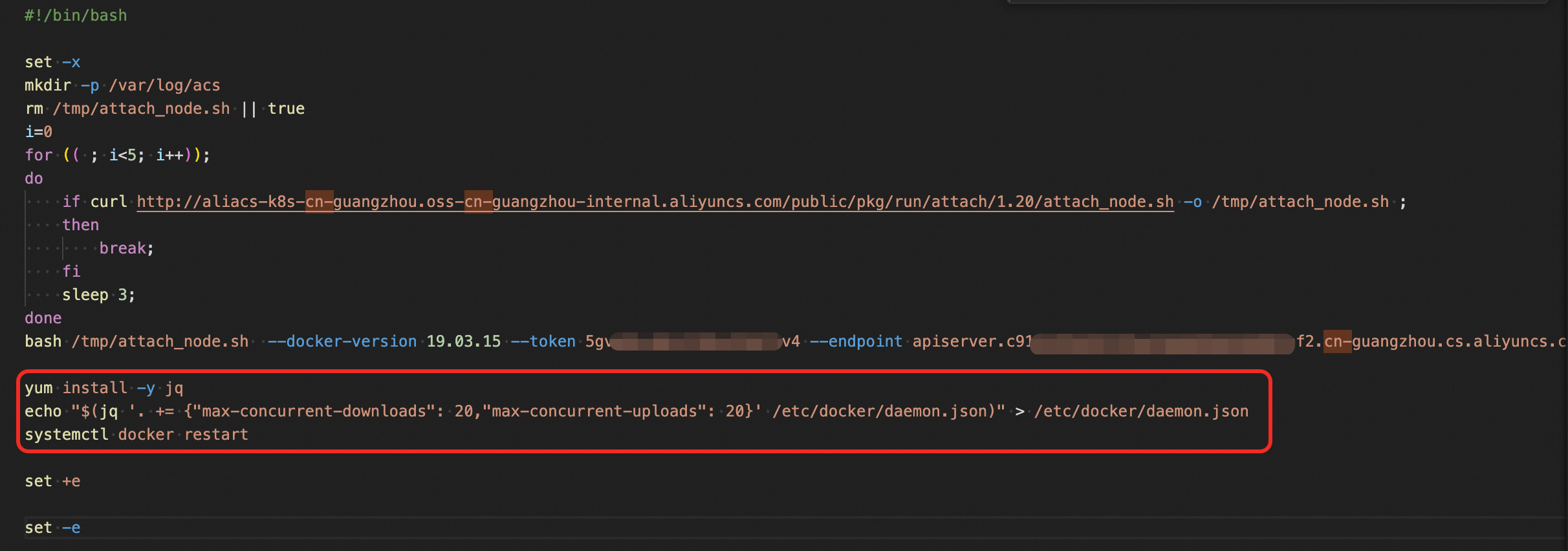
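When no credential helper is configured, the auth value in a Docker credential file is the Base64 encoding of username:password. The following sketch derives it by hand and writes a minimal config.json, which can help when checking what the ConfigMap should contain. The user name and password are made up, and the variable names are hypothetical.

```shell
#!/usr/bin/env bash
set -euo pipefail

REGISTRY="registry.cn-beijing.aliyuncs.com"
REGISTRY_USER="example-user"          # hypothetical credential
REGISTRY_PASSWORD="example-password"  # hypothetical credential

# Docker stores "username:password" as a single Base64 string.
AUTH=$(printf '%s:%s' "$REGISTRY_USER" "$REGISTRY_PASSWORD" | base64 | tr -d '\n')

# Write a minimal credential file in the current directory.
cat > config.json <<EOF
{
  "auths": {
    "${REGISTRY}": {
      "auth": "${AUTH}"
    }
  }
}
EOF

echo "Wrote config.json for ${REGISTRY}"
```

Treat the resulting file as a secret: anyone who can read the auth value can decode the password.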

Set the maximum concurrent image downloads and uploads

To increase the number of concurrent image layer downloads and uploads on Docker nodes, modify the Docker daemon configuration through the instance user data of the Auto Scaling group.

- Log on to the ACK console. In the left-side navigation pane, click Clusters.
- On the Clusters page, click the name of your cluster. In the left navigation pane, choose Nodes > Node Pools.
- Click the name of the target node pool, then click the Overview tab. In the Node Pool Information section, click the link next to Auto Scaling Group.
- On the page that appears, click the Instance Configuration Sources tab. Find the scaling configuration and click Edit > OK.
- On the Modify Scaling Configuration page, click Advanced Settings and copy the content from the Instance User Data field.
- Modify the user data:
  - Base64-decode the content you copied.
  - Append the following script to the end of the decoded content:

    # Install jq for JSON processing
    yum install -y jq
    # Increase concurrent image layer downloads and uploads
    echo "$(jq '. += {"max-concurrent-downloads": 20,"max-concurrent-uploads": 20}' /etc/docker/daemon.json)" > /etc/docker/daemon.json
    # Restart Docker to apply the changes
    service docker restart

- Re-encode and save the user data:
  - Base64-encode the entire modified script, including the appended lines.
  - Replace the original content in the Instance User Data field with the encoded string.
  - Click Modify to save.
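The decode, append, and re-encode steps can be done locally before pasting the result back into the console. The following is a minimal sketch, assuming the copied string is saved in a file named user-data.b64. All file names are hypothetical, and a stand-in value is generated here only so that the sketch runs on its own.

```shell
#!/usr/bin/env bash
set -euo pipefail

# ASSUMPTION: user-data.b64 holds the string copied from Instance User Data.
# A stand-in value is created here; in practice, paste the real string.
printf '%s\n' '#!/bin/bash' 'echo "existing init logic"' | base64 | tr -d '\n' > user-data.b64

# 1. Decode the copied content.
base64 -d < user-data.b64 > user-data.sh

# 2. Append the Docker daemon tuning script from this topic.
cat >> user-data.sh <<'EOF'
# Install jq for JSON processing
yum install -y jq
# Increase concurrent image layer downloads and uploads
echo "$(jq '. += {"max-concurrent-downloads": 20,"max-concurrent-uploads": 20}' /etc/docker/daemon.json)" > /etc/docker/daemon.json
# Restart Docker to apply the changes
service docker restart
EOF

# 3. Re-encode the whole script as a single line for the console field.
base64 < user-data.sh | tr -d '\n' > user-data.new.b64

echo "Encoded user data written to user-data.new.b64"
```

Keeping the decoded script in a file makes it easy to review the change before replacing the value in the Instance User Data field.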

What's next

- Use the custom image to create a node pool. See Create and manage node pools.
- Configure auto scaling for nodes using custom images. See Enable node auto scaling.