Spark jobs running in a Container Service for Kubernetes (ACK) cluster generate logs distributed across many pods, making centralized log management difficult. By integrating with Simple Log Service (SLS), you can:

- Collect structured logs from all Spark driver and executor pods automatically
- Query and analyze Spark job logs across a specified time range
- Filter logs by application name, version, role, and submission ID

This topic describes how to configure the full log collection pipeline: building a Spark container image with structured logging support, deploying a Logtail configuration to collect pod logs, and querying the results in Simple Log Service.

Prerequisites

Before you begin, ensure that you have:

-

The ack-spark-operator component installed. For more information, see Step 1: Install the ack-spark-operator component.

-

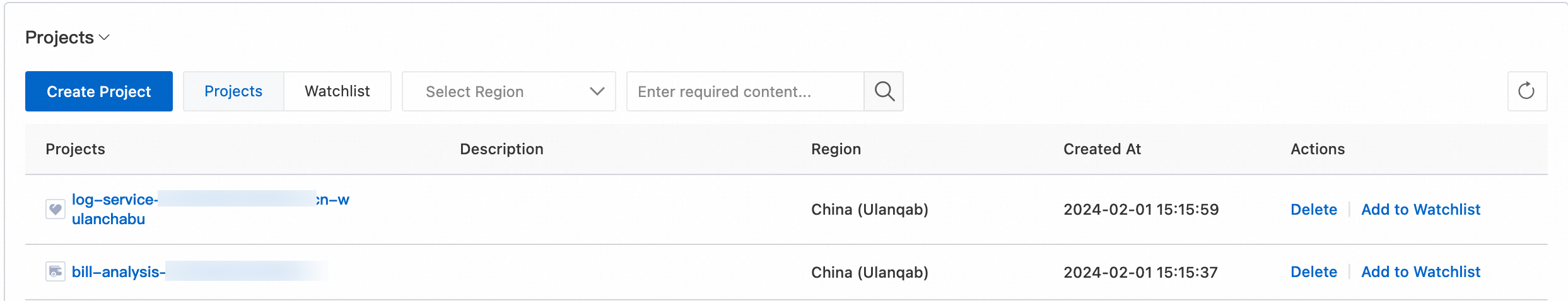

A Simple Log Service project created. For more information, see Manage a project.

-

Logtail components installed in your ACK cluster. For more information, see Install Logtail components in an ACK cluster.

-

A container image registry to push your custom Spark image.

How it works

The pipeline works as follows:

- A custom Spark container image adds the Log4j2 JSON template layout library, enabling structured JSONL output.
- A ConfigMap configures Log4j2 to write logs in JSONL format using the Elastic Common Schema (ECS) template, both to stdout and to a file at /opt/spark/logs/spark.log.
- A Logtail configuration (AliyunLogConfig) tells Simple Log Service to collect logs from containers that match the Spark Operator pod label and container name pattern, parse the JSONL fields, and extract timestamps.
- After a Spark job runs, its logs are available in the specified Logstore for querying and analysis.
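The container selection step can be sketched in a few lines of Python. This is only an illustration of the matching rule, not how Logtail implements it: the label key and the container name pattern are the ones used in the Logtail configuration in Step 3, while the function name `is_collected` and the sample pods are hypothetical.

```python
import re

# Values taken from the AliyunLogConfig in Step 3.
OPERATOR_LABEL = "sparkoperator.k8s.io/launched-by-spark-operator"
CONTAINER_NAME_PATTERN = re.compile(r"^spark-kubernetes-(driver|executor)$")


def is_collected(pod_labels: dict, container_name: str) -> bool:
    """Return True if a container passes both filters in this sketch."""
    has_label = pod_labels.get(OPERATOR_LABEL) == "true"
    name_matches = CONTAINER_NAME_PATTERN.match(container_name) is not None
    return has_label and name_matches


# Hypothetical pods, for illustration only.
driver_labels = {OPERATOR_LABEL: "true", "spark-role": "driver"}
other_labels = {"app": "nginx"}

print(is_collected(driver_labels, "spark-kubernetes-driver"))   # True
print(is_collected(driver_labels, "istio-proxy"))               # False
print(is_collected(other_labels, "spark-kubernetes-executor"))  # False
```

Both conditions must hold, so a sidecar container in a Spark pod, or a container with a matching name in an unrelated pod, is skipped in this sketch.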

Step 1: Build a Spark container image

Create the following Dockerfile. This example uses Spark 3.5.3 and adds the log4j-layout-template-json dependency to the Spark classpath, which enables JSONL output through the JsonTemplateLayout layout.

    # Replace <SPARK_IMAGE> with your Spark base image.
    ARG SPARK_IMAGE=<SPARK_IMAGE>

    FROM ${SPARK_IMAGE}

    # Add dependency for log4j-layout-template-json
    ADD --chown=spark:spark --chmod=644 https://repo1.maven.org/maven2/org/apache/logging/log4j/log4j-layout-template-json/2.24.1/log4j-layout-template-json-2.24.1.jar ${SPARK_HOME}/jars

Build and push the image to your registry:

    docker build -t <your-registry>/<your-image-name>:<tag> .
    docker push <your-registry>/<your-image-name>:<tag>

Replace the placeholders with your actual registry and image details.

Step 2: Configure Log4j2 logs

Create a file named spark-log-conf.yaml with the following content. This ConfigMap sets the log level to INFO and configures both the console and file appenders to output logs in JSONL format using the ECS template. For more information, see Collect Log4j logs.

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: spark-log-conf
      namespace: default
    data:
      log4j2.properties: |
        # Set everything to be logged to the console and file
        rootLogger.level = info
        rootLogger.appenderRefs = console, file
        rootLogger.appenderRef.console.ref = STDOUT
        rootLogger.appenderRef.file.ref = FileAppender

        appender.console.name = STDOUT
        appender.console.type = Console
        appender.console.layout.type = JsonTemplateLayout
        appender.console.layout.eventTemplateUri = classpath:EcsLayout.json

        appender.file.name = FileAppender
        appender.file.type = File
        appender.file.fileName = /opt/spark/logs/spark.log
        appender.file.layout.type = JsonTemplateLayout
        appender.file.layout.eventTemplateUri = classpath:EcsLayout.json

Apply the ConfigMap:

    kubectl apply -f spark-log-conf.yaml

Expected output:

    configmap/spark-log-conf created

Step 3: Create a Logtail configuration

Create a file named aliyun-log-config.yaml with the following content. Replace <SLS_PROJECT> with your Simple Log Service project name and <SLS_LOGSTORE> with your Logstore name. If the Logstore does not exist, Simple Log Service creates it automatically.

The configuration filters for pods launched by the Spark Operator using the label sparkoperator.k8s.io/launched-by-spark-operator: "true", which the Spark Operator sets automatically on all driver and executor pods. Logs are collected from /opt/spark/logs, parsed as JSON, and the @timestamp field is extracted as the log time. For more information about AliyunLogConfig fields, see Use AliyunLogConfig to manage a Logtail configuration.

    apiVersion: log.alibabacloud.com/v1alpha1
    kind: AliyunLogConfig
    metadata:
      name: spark
      namespace: default
    spec:
      # (Optional) The name of the project. Default value: k8s-log-<Your_Cluster_ID>.
      project: <SLS_PROJECT>
      # The name of the Logstore. If the Logstore that you specify does not exist, Simple Log Service automatically creates a Logstore.
      logstore: <SLS_LOGSTORE>
      # The Logtail configuration.
      logtailConfig:
        # The name of the Logtail configuration.
        configName: spark
        # The type of the data source. The value file specifies text logs.
        inputType: file
        # The configurations of the log input.
        inputDetail:
          # The directory in which the log file is located.
          logPath: /opt/spark/logs
          # The name of a log file. Wildcard characters are supported.
          filePattern: '*.log'
          # The encoding of the log file.
          fileEncoding: utf8
          # The log type.
          logType: json_log
          localStorage: true
          key:
          - content
          logBeginRegex: .*
          logTimezone: ''
          discardNonUtf8: false
          discardUnmatch: true
          preserve: true
          preserveDepth: 0
          regex: (.*)
          outputType: LogService
          topicFormat: none
          adjustTimezone: false
          enableRawLog: false
          # Collect text logs from containers.
          dockerFile: true
          # Advanced configurations.
          advanced:
            # Preview the container metadata.
            collect_containers_flag: true
            # Logtail configurations in Kubernetes.
            k8s:
              # Filter pods based on the tag.
              IncludeK8sLabel:
                sparkoperator.k8s.io/launched-by-spark-operator: "true"
              # Filter containers based on the container name.
              K8sContainerRegex: "^spark-kubernetes-(driver|executor)$"
              # Additional log tag configurations.
              ExternalK8sLabelTag:
                spark-app-name: spark-app-name
                spark-version: spark-version
                spark-role: spark-role
                spark-app-selector: spark-app-selector
                sparkoperator.k8s.io/submission-id: sparkoperator.k8s.io/submission-id
          # The log processing plug-in.
          plugin:
            processors:
            # Log isolation.
            - type: processor_split_log_string
              detail:
                SplitKey: content
                SplitSep: ''
            # Parse the JSON field.
            - type: processor_json
              detail:
                ExpandArray: false
                ExpandConnector: ''
                ExpandDepth: 0
                IgnoreFirstConnector: false
                SourceKey: content
                KeepSource: false
                KeepSourceIfParseError: true
                NoKeyError: false
                UseSourceKeyAsPrefix: false
            # Extract the log timestamp.
            - type: processor_strptime
              detail:
                SourceKey: '@timestamp'
                Format: '%Y-%m-%dT%H:%M:%S.%fZ'
                KeepSource: false
                AdjustUTCOffset: true
                UTCOffset: 0
                AlarmIfFail: false

Apply the configuration:

    kubectl apply -f aliyun-log-config.yaml

To verify that the Logstore and Logtail configuration were created:

- Log on to the Simple Log Service console.
- In the Projects section, click your project.
- Choose Log Storage > Logstores. Click the > icon next to the target Logstore. Choose Data Import > Logtail Configurations.
- Click the Logtail configuration to view its details.
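To see how the Format value of processor_strptime lines up with the ECS timestamps, the same pattern can be tried locally with Python's `datetime.strptime`. This is only a sanity check of the pattern: Python's parser is not Logtail's, and the sketch assumes the trailing Z denotes UTC, which is consistent with `UTCOffset: 0` in the configuration. The sample value is taken from the driver log shown in Step 4, and the helper name `parse_ecs_timestamp` is hypothetical.

```python
from datetime import datetime, timezone

# Same pattern as the Format field of processor_strptime.
TIMESTAMP_FORMAT = "%Y-%m-%dT%H:%M:%S.%fZ"


def parse_ecs_timestamp(value: str) -> datetime:
    """Parse an ECS @timestamp string and mark the result as UTC."""
    naive = datetime.strptime(value, TIMESTAMP_FORMAT)
    return naive.replace(tzinfo=timezone.utc)


parsed = parse_ecs_timestamp("2024-11-20T11:45:48.487Z")
print(parsed.isoformat())  # 2024-11-20T11:45:48.487000+00:00
```

If a timestamp in your logs does not parse with this pattern (for example, because it lacks fractional seconds), that is a hint to adjust the Format value accordingly.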

Step 4: Submit a sample Spark job

Create a file named spark-pi.yaml with the following content. Replace <SPARK_IMAGE> with the image that you built in Step 1. The sparkConfigMap field references the ConfigMap created in Step 2, which injects the Log4j2 configuration into the Spark pods.

    apiVersion: sparkoperator.k8s.io/v1beta2
    kind: SparkApplication
    metadata:
      name: spark-pi
      namespace: default
    spec:
      type: Scala
      mode: cluster
      image: <SPARK_IMAGE>
      mainClass: org.apache.spark.examples.SparkPi
      mainApplicationFile: local:///opt/spark/examples/jars/spark-examples_2.12-3.5.3.jar
      arguments:
      - "5000"
      sparkVersion: 3.5.3
      sparkConfigMap: spark-log-conf
      driver:
        cores: 1
        memory: 512m
        serviceAccount: spark-operator-spark
      executor:
        instances: 1
        cores: 1
        memory: 4g

Submit the job:

    kubectl apply -f spark-pi.yaml

After the job completes, check the last 10 lines of the driver pod log to confirm JSONL output:

    kubectl logs --tail=10 spark-pi-driver

Expected output:

    {"@timestamp":"2024-11-20T11:45:48.487Z","ecs.version":"1.2.0","log.level":"WARN","message":"Kubernetes client has been closed.","process.thread.name":"-937428334-pool-19-thread-1","log.logger":"org.apache.spark.scheduler.cluster.k8s.ExecutorPodsWatchSnapshotSource"}
    {"@timestamp":"2024-11-20T11:45:48.585Z","ecs.version":"1.2.0","log.level":"INFO","message":"MapOutputTrackerMasterEndpoint stopped!","process.thread.name":"dispatcher-event-loop-7","log.logger":"org.apache.spark.MapOutputTrackerMasterEndpoint"}
    {"@timestamp":"2024-11-20T11:45:48.592Z","ecs.version":"1.2.0","log.level":"INFO","message":"MemoryStore cleared","process.thread.name":"main","log.logger":"org.apache.spark.storage.memory.MemoryStore"}
    {"@timestamp":"2024-11-20T11:45:48.592Z","ecs.version":"1.2.0","log.level":"INFO","message":"BlockManager stopped","process.thread.name":"main","log.logger":"org.apache.spark.storage.BlockManager"}
    {"@timestamp":"2024-11-20T11:45:48.596Z","ecs.version":"1.2.0","log.level":"INFO","message":"BlockManagerMaster stopped","process.thread.name":"main","log.logger":"org.apache.spark.storage.BlockManagerMaster"}
    {"@timestamp":"2024-11-20T11:45:48.598Z","ecs.version":"1.2.0","log.level":"INFO","message":"OutputCommitCoordinator stopped!","process.thread.name":"dispatcher-event-loop-1","log.logger":"org.apache.spark.scheduler.OutputCommitCoordinator$OutputCommitCoordinatorEndpoint"}
    {"@timestamp":"2024-11-20T11:45:48.602Z","ecs.version":"1.2.0","log.level":"INFO","message":"Successfully stopped SparkContext","process.thread.name":"main","log.logger":"org.apache.spark.SparkContext"}
    {"@timestamp":"2024-11-20T11:45:48.604Z","ecs.version":"1.2.0","log.level":"INFO","message":"Shutdown hook called","process.thread.name":"shutdown-hook-0","log.logger":"org.apache.spark.util.ShutdownHookManager"}
    {"@timestamp":"2024-11-20T11:45:48.604Z","ecs.version":"1.2.0","log.level":"INFO","message":"Deleting directory /var/data/spark-f783cf2e-44db-452c-83c9-738f9c894ef9/spark-2caa5814-bd32-431c-a9f9-a32208b34fbb","process.thread.name":"shutdown-hook-0","log.logger":"org.apache.spark.util.ShutdownHookManager"}
    {"@timestamp":"2024-11-20T11:45:48.606Z","ecs.version":"1.2.0","log.level":"INFO","message":"Deleting directory /tmp/spark-dacdfd95-f166-4b23-9312-af9052730417","process.thread.name":"shutdown-hook-0","log.logger":"org.apache.spark.util.ShutdownHookManager"}

Each JSONL log entry contains the following fields:

| Field | Description |
|---|---|
| @timestamp | The time when the log is generated |
| ecs.version | The ECS (Elastic Common Schema) version |
| log.level | The log level, such as INFO or WARN |
| message | The log message |
| process.thread.name | The name of the thread that generates the log |
| log.logger | The name of the logger that records the log |
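Because each line is a standalone JSON object with flat, dotted keys, the driver output can be inspected with a few lines of Python. The following is a minimal sketch that counts entries per log level; the two sample lines are copied from the expected output above, and the function name `count_levels` is hypothetical.

```python
import json
from collections import Counter

# Two entries copied from the expected driver output.
SAMPLE = '''\
{"@timestamp":"2024-11-20T11:45:48.487Z","ecs.version":"1.2.0","log.level":"WARN","message":"Kubernetes client has been closed.","process.thread.name":"-937428334-pool-19-thread-1","log.logger":"org.apache.spark.scheduler.cluster.k8s.ExecutorPodsWatchSnapshotSource"}
{"@timestamp":"2024-11-20T11:45:48.592Z","ecs.version":"1.2.0","log.level":"INFO","message":"MemoryStore cleared","process.thread.name":"main","log.logger":"org.apache.spark.storage.memory.MemoryStore"}
'''


def count_levels(jsonl_text: str) -> Counter:
    """Count entries per log.level, skipping blank lines."""
    levels = Counter()
    for line in jsonl_text.splitlines():
        if line.strip():
            entry = json.loads(line)
            # Keys such as "log.level" are flat strings, not nested objects.
            levels[entry["log.level"]] += 1
    return levels


print(dict(count_levels(SAMPLE)))  # {'WARN': 1, 'INFO': 1}
```

To run it against a real job, you could save the output of `kubectl logs spark-pi-driver` to a file and pass the file contents to the function. Lines that are not valid JSON raise `json.JSONDecodeError` in this sketch.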

Step 5: Verify log collection

After the Spark job completes, confirm that logs have reached your Logstore before using them for analysis.

Log on to the Simple Log Service console and open your project. In the Logstore, set a time range that covers the job execution window and run a query. Because the Logtail configuration parses each JSON line, the results should contain the ECS fields described above.

If no logs appear, check:

- The Logtail configuration is active and the Logstore name matches <SLS_LOGSTORE> in your AliyunLogConfig.
- The Spark pods have the label sparkoperator.k8s.io/launched-by-spark-operator: "true" (set automatically by the Spark Operator).
- The log file exists at /opt/spark/logs/spark.log inside the driver or executor pod.

Step 6: Query and analyze Spark logs

With logs flowing into Simple Log Service, use the query and analysis features to filter and aggregate Spark job logs by time range, log level, application name, or Spark role (driver vs. executor).
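As a rough local illustration of what filtering by time range and log level means for these entries, the sketch below applies both conditions to a few JSONL lines. It is not Simple Log Service query syntax and does not call any SLS API. The entries are shortened versions of the sample output in Step 4, and the function name `filter_entries` and the chosen time window are hypothetical.

```python
import json
from datetime import datetime

TIMESTAMP_FORMAT = "%Y-%m-%dT%H:%M:%S.%fZ"


def filter_entries(lines, start, end, level=None):
    """Yield entries with start <= @timestamp < end and, optionally, a matching log.level."""
    for line in lines:
        entry = json.loads(line)
        ts = datetime.strptime(entry["@timestamp"], TIMESTAMP_FORMAT)
        if start <= ts < end and (level is None or entry["log.level"] == level):
            yield entry


# Shortened entries based on the sample output in Step 4.
lines = [
    '{"@timestamp":"2024-11-20T11:45:48.487Z","log.level":"WARN","message":"Kubernetes client has been closed."}',
    '{"@timestamp":"2024-11-20T11:45:48.602Z","log.level":"INFO","message":"Successfully stopped SparkContext"}',
    '{"@timestamp":"2024-11-20T11:45:48.604Z","log.level":"INFO","message":"Shutdown hook called"}',
]

window_start = datetime(2024, 11, 20, 11, 45, 48, 600000)
window_end = datetime(2024, 11, 20, 11, 45, 49)
for entry in filter_entries(lines, window_start, window_end, level="INFO"):
    print(entry["message"])
# Successfully stopped SparkContext
# Shutdown hook called
```

In Simple Log Service itself, the time range is set in the console and the remaining conditions are expressed in the query statement. The label-derived tags configured under ExternalK8sLabelTag are what make filtering by application name or Spark role possible there.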

(Optional) Step 7: Clean up

After testing, remove the resources to avoid unnecessary costs.

Delete the Spark job:

    kubectl delete -f spark-pi.yaml

Delete the Logtail configuration:

    kubectl delete -f aliyun-log-config.yaml

Delete the Log4j2 ConfigMap:

    kubectl delete -f spark-log-conf.yaml