Diagnose and resolve storage-related failures in ACK clusters when a pod cannot start or a persistent volume claim (PVC) fails to reach Bound status. This guide covers disk, NAS, and OSS volume types.

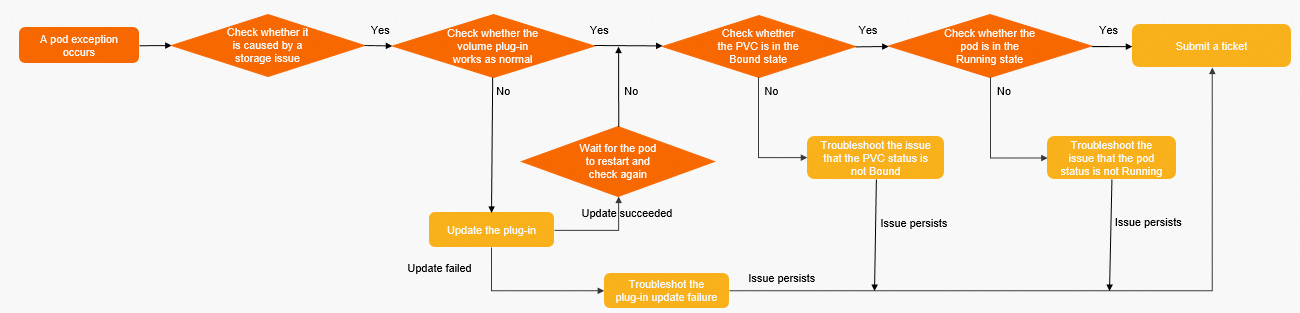

Diagnostic procedure

Follow these steps in order before diving into storage-type-specific sections.

Step 1: Confirm the failure is storage-related.

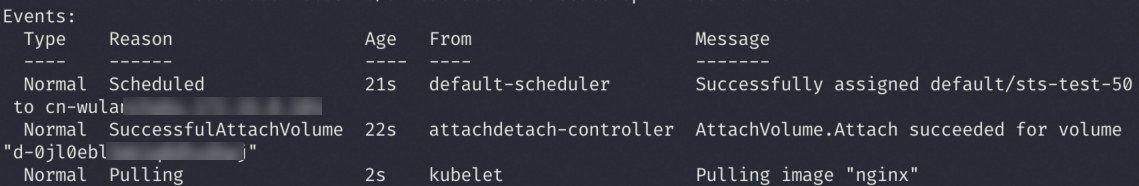

Run kubectl describe pods <pod-name> and inspect the Events section.

If the pod shows the state in the following figure, the volume mounted successfully. A CrashLoopBackOff status or a non-zero container exit code at this stage indicates an application error, not a storage error. Submit a ticket for application-level assistance.
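As a rough aid for this step, the events can be filtered for reasons that commonly point to a volume problem. This is a minimal sketch: the helper name storage_events and the pattern list are assumptions made for illustration, and the list is not exhaustive.

```shell
# Reads `kubectl describe pods` output on stdin and prints only the lines
# that match event reasons or messages often seen with volume failures.
# The pattern list is an assumption for illustration, not an ACK-defined set.
storage_events() {
  grep -E 'FailedMount|FailedAttachVolume|MountVolume\.|AttachVolume\.|unbound immediate PersistentVolumeClaims' || true
}

# Usage (the pod name is a placeholder):
#   kubectl describe pods <pod-name> | storage_events
```

If the filter prints nothing, the failure is less likely to be storage-related, although the full Events section should still be read before drawing that conclusion.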

Step 2: Verify the CSI plug-in is running.

kubectl get pod -n kube-system | grep csi

Expected output:

NAME READY STATUS RESTARTS AGE

csi-plugin-*** 4/4 Running 0 23d

csi-provisioner-*** 7/7 Running 0 14d

If either pod is not in Running status, run kubectl describe pods <pod-name> -n kube-system to see the container exit reason and pod events.
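On clusters with many nodes, scanning the list by eye is error-prone. The following sketch prints only the csi pods that need attention. It assumes the default column layout of kubectl get pod (NAME, READY, STATUS, ...); the helper name csi_unhealthy is hypothetical.

```shell
# Reads `kubectl get pod -n kube-system` output on stdin and prints every
# csi-* pod that is not Running or whose READY count is incomplete.
csi_unhealthy() {
  awk '$1 ~ /^csi-/ {
    split($2, ready, "/")
    if ($3 != "Running" || ready[1] != ready[2]) print $1, $2, $3
  }'
}

# Usage:
#   kubectl get pod -n kube-system | csi_unhealthy
```

An empty result means that every csi pod is Running with all containers ready.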

Step 3: Verify the CSI plug-in is up to date.

kubectl get ds csi-plugin -n kube-system -oyaml | grep image

Expected output:

image: registry.cn-****.aliyuncs.com/acs/csi-plugin:v*****-aliyun

Compare the image tag against the csi-plugin version history and the csi-provisioner version history.

If the version is outdated, upgrade the CSI plug-in. For upgrade failures, see Troubleshoot component update failures.
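Because grep image also matches lines such as imagePullPolicy, it can help to reduce the output to component and tag pairs before comparing versions. A minimal sketch, assuming image references of the form <registry>/<path>/<component>:<tag>; the helper name image_tags is hypothetical.

```shell
# Reads YAML lines on stdin and prints "<component> <tag>" for each
# "image: <reference>" line; other keys (imagePullPolicy, ...) are ignored.
image_tags() {
  awk 'NF >= 2 && $(NF-1) == "image:" {
    n = split($NF, path, "/")      # last path segment is "<component>:<tag>"
    split(path[n], parts, ":")
    print parts[1], parts[2]
  }'
}

# Usage:
#   kubectl get ds csi-plugin -n kube-system -oyaml | image_tags
```

The printed tag is the value to look up in the version history.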

Step 4: Troubleshoot pod pending issues.

- Disk volume: see Pod not running (disk)
- NAS volume: see Pod not running (NAS)
- OSS volume: see Pod not running (OSS)

Step 5: Troubleshoot PVC not Bound issues.

- Disk volume: see PVC not Bound (disk)
- NAS volume: see PVC not Bound (NAS)
- OSS volume: see PVC not Bound (OSS)

Step 6: If the issue persists, submit a ticket.

Troubleshoot component update failures

csi-provisioner

csi-provisioner is a Deployment with two replicas by default. The replicas are mutually exclusive: each one must be scheduled on a different node.

- If the upgrade fails, check whether the cluster has fewer than two schedulable nodes. Two replicas require at least two schedulable nodes.
- csi-provisioner 1.14 and earlier used a StatefulSet instead of a Deployment. If a StatefulSet named csi-provisioner still exists in your cluster, delete it and reinstall the component:

  kubectl delete sts csi-provisioner

  Then log in to the Container Service console and reinstall csi-provisioner from Components. For details, see Components.
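The node-count check for csi-provisioner can be sketched as follows. The helper name schedulable_nodes is hypothetical, and the sketch treats a node as schedulable when its STATUS column is exactly Ready, which excludes cordoned nodes (Ready,SchedulingDisabled). Taints are not considered, so the real number of usable nodes may be lower.

```shell
# Reads `kubectl get nodes --no-headers` output on stdin and prints the
# number of nodes whose STATUS is exactly "Ready" (not cordoned, not NotReady).
schedulable_nodes() {
  awk '$2 == "Ready" { n++ } END { print n + 0 }'
}

# Usage:
#   kubectl get nodes --no-headers | schedulable_nodes
```

A result below 2 is consistent with the first cause in the list above.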

csi-plugin

csi-plugin is a DaemonSet deployed on every node.

- Check whether any nodes are in NotReady status. Nodes in NotReady status block the DaemonSet upgrade.
- If the upgrade fails but all plug-ins are working normally, the component center may have detected a timeout and automatically rolled back. Submit a ticket for assistance.
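To find the nodes that may be blocking the csi-plugin rollout, the node list can be filtered on its STATUS column. A minimal sketch; the helper name not_ready_nodes is an assumption for illustration.

```shell
# Reads `kubectl get nodes --no-headers` output on stdin and prints the names
# of nodes whose STATUS starts with "NotReady" (cordoned or not).
not_ready_nodes() {
  awk '$2 ~ /^NotReady/ { print $1 }'
}

# Usage:
#   kubectl get nodes --no-headers | not_ready_nodes
```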

Disk troubleshooting

The node and the disk must be in the same region and zone. Cross-region and cross-zone usage is not supported. Different ECS instance types support different disk types. For details, see Instance family.

Pod not running (disk)

Symptom

The PVC status is Bound but the pod is not Running.

Possible causes and resolution

PVC not Bound (disk)

Symptom

The PVC is not Bound and the pod is not Running.

Fault locating

Run kubectl describe pvc <pvc-name> -n <namespace> and inspect the events to determine whether this is a static or dynamic provisioning issue.
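One way to make the static-versus-dynamic decision is to look at the event reasons. The sketch below is a heuristic only: it assumes that ProvisioningFailed and ExternalProvisioning events come from a dynamic provisioning attempt and that FailedBinding without them points to a static PV/PVC mismatch. The helper name pvc_failure_kind is hypothetical.

```shell
# Reads `kubectl describe pvc` output on stdin and prints a hint:
# "dynamic", "static", or "unknown". Heuristic, based on event reasons only.
pvc_failure_kind() {
  events=$(cat)
  case $events in
    *ProvisioningFailed*|*ExternalProvisioning*) echo "dynamic" ;;
    *FailedBinding*)                             echo "static" ;;
    *)                                           echo "unknown" ;;
  esac
}

# Usage (names are placeholders):
#   kubectl describe pvc <pvc-name> -n <namespace> | pvc_failure_kind
```

When the result is unknown, check whether the PVC sets storageClassName or volumeName in its spec to decide which subsection applies.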

Static provisioning

The PVC and PV cannot be associated because their selectors do not match. Common mismatches:

- The PVC selector differs from the PV selector.
- They reference different StorageClass names.
- The PV status is Released.

Check the YAML configuration of both the PVC and PV. See Use static disk volumes.

A PV in Released status means its previous PVC was deleted. The underlying disk is still held by the old claim reference and cannot be reused. Create a new PV to bind the disk to the new PVC.
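Released PVs can be listed before new ones are created. This sketch assumes a two-column input (name, then phase) produced with the custom-columns output format of kubectl; the helper name released_pvs is hypothetical.

```shell
# Reads "<name> <phase> ..." lines on stdin and prints the names of PVs
# whose phase is "Released".
released_pvs() {
  awk '$2 == "Released" { print $1 }'
}

# Usage:
#   kubectl get pv --no-headers \
#     -o custom-columns=NAME:.metadata.name,STATUS:.status.phase,CLAIM:.spec.claimRef.name \
#     | released_pvs
```

The CLAIM column in the usage line shows which deleted PVC each PV still references.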

Dynamic provisioning

csi-provisioner failed to create the disk. Inspect the PVC events for the specific error:

| Error type | Reference |
|---|---|
| Disk creation errors | Disk volume FAQ |
| Disk expansion errors | Disk volume FAQ |
| ECS API errors during disk creation | ECS Error Center |

If no relevant event appears, submit a ticket.

NAS troubleshooting

- The node and the NAS mount target must be in the same virtual private cloud (VPC). If they are in different VPCs, use Cloud Enterprise Network (CEN) to connect them.
- Cross-zone mounting is supported: the node and NAS file system can be in different zones within the same VPC.
- The mount directory for Extreme NAS and CPFS 2.0 must start with /share.
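The /share rule in the last item is easy to check before a PV is created. A minimal sketch, assuming that a valid directory is either /share itself or a path below it; the helper name starts_with_share is hypothetical.

```shell
# Returns 0 when the given mount directory is "/share" or a path under it,
# and 1 otherwise. Intended for Extreme NAS and CPFS 2.0 mount paths.
starts_with_share() {
  case $1 in
    /share|/share/*) return 0 ;;
    *)               return 1 ;;
  esac
}

# Usage (the path is a placeholder):
#   starts_with_share /share/data && echo "path accepted"
```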

Pod not running (NAS)

Symptom

The PVC status is Bound but the pod is not Running.

Possible causes and resolution

| Symptom in kubectl describe pods <pod-name> events | Cause | Fault locating | Resolution |
|---|---|---|---|
| Pod stays in Init state for a long time; no explicit error message | securityContext.fsGroup is set and the volume contains many files, causing slow chmod | Check whether securityContext.fsGroup is set in the pod spec | Remove fsGroup from the pod spec, delete the pod, and let it restart to remount |
| MountVolume.SetUp failed for volume "..." with a network timeout | Port 2049 (NFS) is blocked in the security group | Check security group rules for the node | Add an inbound rule for TCP port 2049. See Add security group rules. |
| Mount target unreachable; connection timeout in events | Node and NAS in different VPCs | Verify whether the node VPC and the NAS mount target VPC match | Move the NAS mount target to the same VPC as the node, or use CEN to connect the two VPCs |
| Other events | Other errors | Check pod events with kubectl describe pods <pod-name> | See NAS volume FAQ. If no relevant event appears, submit a ticket. |
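The fsGroup check in the first row can be done without reading the whole pod spec. The sketch below interprets the jsonpath output of kubectl; the helper name fsgroup_hint and its messages are assumptions for illustration.

```shell
# Reads the value of .spec.securityContext.fsGroup on stdin.
# An empty input means that fsGroup is not set on the pod.
fsgroup_hint() {
  value=$(cat)
  if [ -n "$value" ]; then
    echo "fsGroup=$value is set; recursive permission changes may slow the mount"
  else
    echo "fsGroup is not set"
  fi
}

# Usage (the pod name is a placeholder):
#   kubectl get pod <pod-name> -o jsonpath='{.spec.securityContext.fsGroup}' | fsgroup_hint
#
# For the port 2049 row, a reachability probe can be run on the node if nc
# is installed there (the mount target domain is a placeholder):
#   nc -z -w 3 <mount-target-domain> 2049
```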

PVC not Bound (NAS)

Symptom

The PVC is not Bound and the pod is not Running.

Fault locating

Run kubectl describe pvc <pvc-name> -n <namespace> and inspect the events to determine whether this is a static or dynamic provisioning issue.

Static provisioning

The PVC and PV selectors do not match. Common mismatches:

- Selector configuration differs between PVC and PV.
- They reference different StorageClass names.
- The PV status is Released.

Check the YAML configuration of both the PVC and PV. See Use static NAS volumes.

A PV in Released status cannot be reused. Create a new PV that points to the NAS file system.

Dynamic provisioning

csi-provisioner failed to provision the NAS volume. Inspect the PVC events for the specific error. See NAS volume FAQ. If no relevant event appears, submit a ticket.

OSS troubleshooting

- Mounting an OSS bucket requires AccessKey credentials in the PV, which can be provided through a Kubernetes Secret.
- For cross-region access, use the public endpoint of the OSS bucket. For same-region access, use the internal endpoint.
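Whether a PV uses the internal or the public endpoint can usually be read from the host name. The sketch below assumes the common OSS naming convention in which internal endpoints contain -internal before .aliyuncs.com; the helper name oss_endpoint_kind is hypothetical, and custom domains are reported as unknown.

```shell
# Prints "internal", "public", or "unknown" for an OSS endpoint host or URL,
# based only on the "-internal.aliyuncs.com" naming convention.
oss_endpoint_kind() {
  case $1 in
    *-internal.aliyuncs.com*) echo "internal" ;;
    *.aliyuncs.com*)          echo "public" ;;
    *)                        echo "unknown" ;;
  esac
}

# Usage (the endpoint is a placeholder taken from the PV definition):
#   oss_endpoint_kind "<bucket-endpoint>"
```

An internal result on a node that is in a different region from the bucket matches the cross-region case described above.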

Pod not running (OSS)

Symptom

The PVC status is Bound but the pod is not Running.

Possible causes and resolution

| Symptom in kubectl describe pods <pod-name> events | Cause | Fault locating | Resolution |
|---|---|---|---|
| Pod stays in Init state for a long time; slow chmod on bucket contents | securityContext.fsGroup is set and the bucket contains many files | Check whether securityContext.fsGroup is set in the pod spec | Remove fsGroup from the pod spec, delete the pod, and let it restart to remount |
| MountVolume.SetUp failed for volume "..." with a connection error | Cross-region access using the internal (private) endpoint | Check whether the bucket endpoint in the PV is a private address while the node is in a different region | Switch the endpoint to the public address of the OSS bucket |
| Other events | Other errors | Check pod events with kubectl describe pods <pod-name> | See OSS volume FAQ. If no relevant event appears, submit a ticket. |

PVC not Bound (OSS)

Symptom

The PVC is not Bound and the pod is not Running.

Fault locating

Run kubectl describe pvc <pvc-name> -n <namespace> and inspect the events to determine whether this is a static or dynamic provisioning issue.

Static provisioning

The PVC and PV selectors do not match. Common mismatches:

- Selector configuration differs between PVC and PV.
- They reference different StorageClass names.
- The PV status is Released.

Check the YAML configuration of both the PVC and PV. See Use static OSS volumes.

A PV in Released status cannot be reused. Extract the bucket address from the old PV and create a new PV.

Dynamic provisioning

csi-provisioner failed to provision the OSS volume. Inspect the PVC events for the specific error. See OSS volume FAQ. If no relevant event appears, submit a ticket.