Use Data Transmission Service (DTS) to synchronize data from an ApsaraDB RDS for MySQL database to an Alibaba Cloud Elasticsearch cluster in real time. For more information about DTS, see DTS. DTS supports synchronization of data changes generated by insert, delete, and update operations, making this approach suited for use cases that require low-latency search or analytics on production MySQL data.

This tutorial walks through the full setup: preparing the source database and destination cluster, creating the synchronization task, and verifying both full and incremental sync results.

How it works

DTS runs in two phases:

-

Full data sync — DTS reads existing rows from the source MySQL tables and writes them to Elasticsearch as documents.

-

Incremental sync — After full sync completes, DTS switches to change data capture and continuously applies INSERT, UPDATE, and DELETE operations to Elasticsearch.

During initial full sync, DTS reads and writes resources on both the source and destination, which can increase load. Run the sync during off-peak hours when CPU utilization on both ends is below 30%. If you start during peak hours, full sync may fail — restart the task if that happens. Incremental sync during peak hours may introduce latency.

DTS does not synchronize DDL operations. If you alter a table schema during sync, see Limitations for the required recovery steps.

Prerequisites

Before you begin, make sure you have:

-

An ApsaraDB RDS for MySQL instance (MySQL 8.0 used in this tutorial)

-

An Alibaba Cloud Elasticsearch cluster (V7.10 used in this tutorial), with the Auto Indexing feature enabled

-

Access to the DTS console

Limitations

| Limitation | Details |

|---|---|

| DDL operations are not synchronized | If you run a DDL operation on a source table during sync, you must: remove the table from the sync task, delete the corresponding index from the Elasticsearch cluster, and re-add the table to the task. See Remove an object from a data synchronization task and Add an object to a data synchronization task. |

| Adding columns requires manual steps | To add a column, first update the index mapping in Elasticsearch to include the new field. Then run the ALTER TABLE on the source, pause the sync task, and restart it. |

| Data type differences | ApsaraDB RDS for MySQL and Elasticsearch use different type systems. During initial schema sync, DTS maps source fields to destination fields based on supported Elasticsearch types. See Data type mappings for schema synchronization for the full mapping table. |

| Tables without primary keys | Tables without primary keys can cause DTS to consume more read/write resources. This may increase the load on the source and destination to the point where the source or destination becomes unavailable. |

Step 1: Prepare the source database and destination cluster

Set up the source database

-

Create an ApsaraDB RDS instance running MySQL 8.0.

-

Create an account and a database named

test_mysql. -

In the

test_mysqldatabase, create a table and insert the sample data:-- Create the table CREATE TABLE `es_test` ( `id` bigint(32) NOT NULL, `name` varchar(32) NULL, `age` bigint(32) NULL, `hobby` varchar(32) NULL, PRIMARY KEY (`id`) ) ENGINE=InnoDB DEFAULT CHARACTER SET=utf8; -- Insert sample rows INSERT INTO `es_test` (`id`,`name`,`age`,`hobby`) VALUES (1,'user1',22,'music'); INSERT INTO `es_test` (`id`,`name`,`age`,`hobby`) VALUES (2,'user2',23,'sport'); INSERT INTO `es_test` (`id`,`name`,`age`,`hobby`) VALUES (3,'user3',43,'game'); INSERT INTO `es_test` (`id`,`name`,`age`,`hobby`) VALUES (4,'user4',24,'run'); INSERT INTO `es_test` (`id`,`name`,`age`,`hobby`) VALUES (5,'user5',42,'basketball');

Set up the destination Elasticsearch cluster

-

Create an Alibaba Cloud Elasticsearch cluster running V7.10.

-

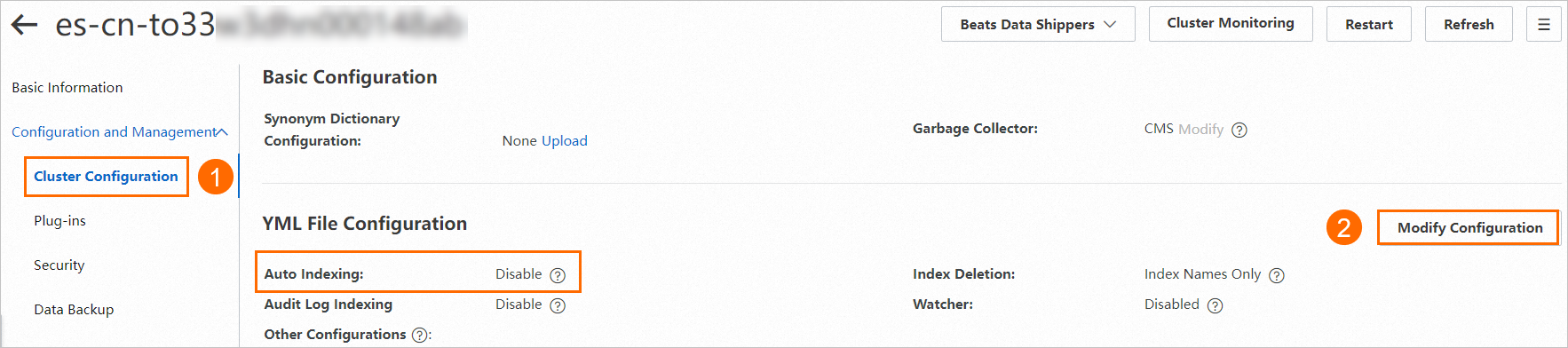

Enable the Auto Indexing feature. Open the cluster's YML file configuration and turn on Auto Indexing.

Step 2: Create the synchronization task

-

Go to the Data Synchronization page in the DTS console.

-

Click Create Task.

-

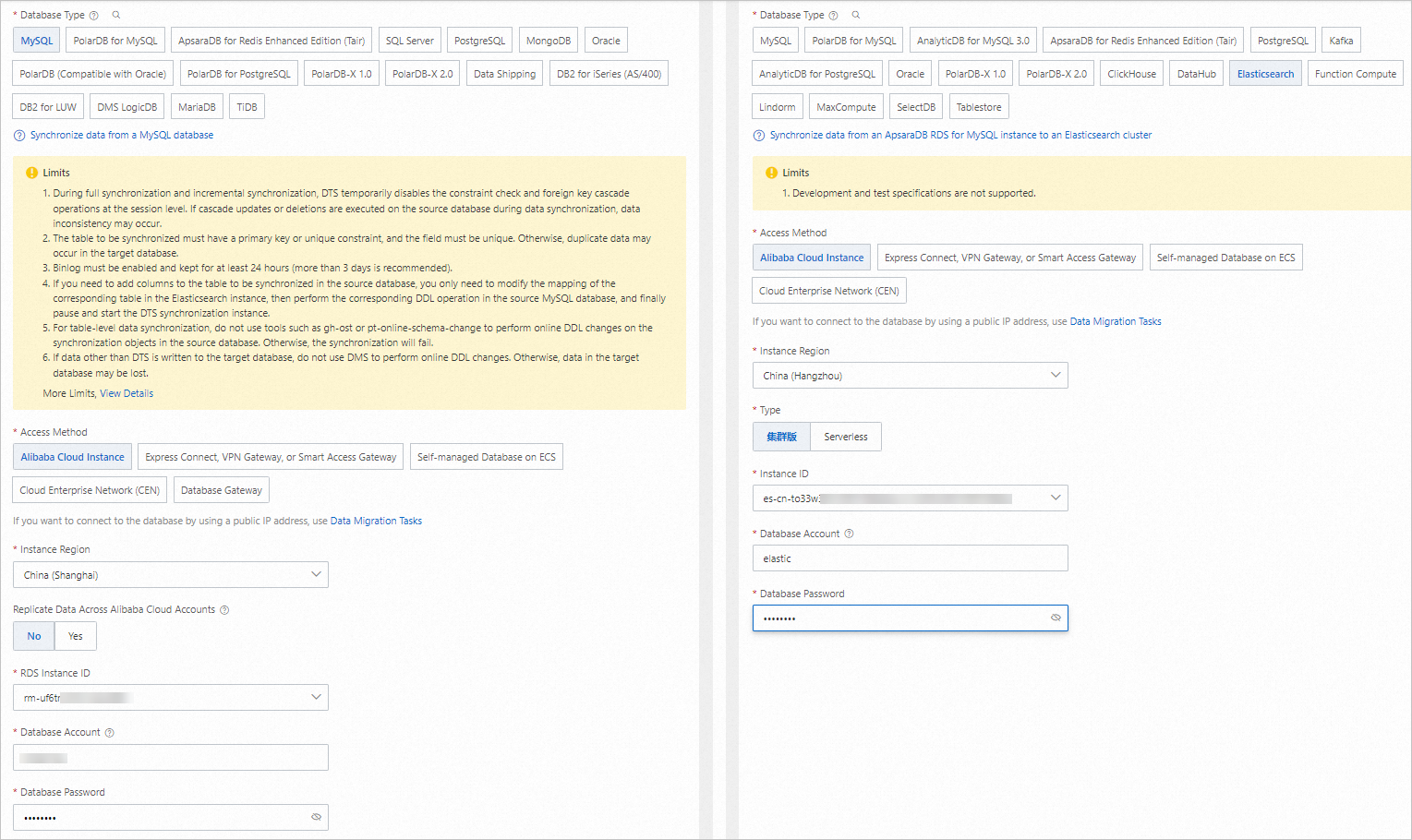

Configure the task as prompted:

For details on each parameter, see Synchronize data from an ApsaraDB RDS for MySQL instance to an Elasticsearch cluster.

-

Configure the source (ApsaraDB RDS for MySQL) and destination (Elasticsearch cluster). Click Test Connectivity and Proceed.

-

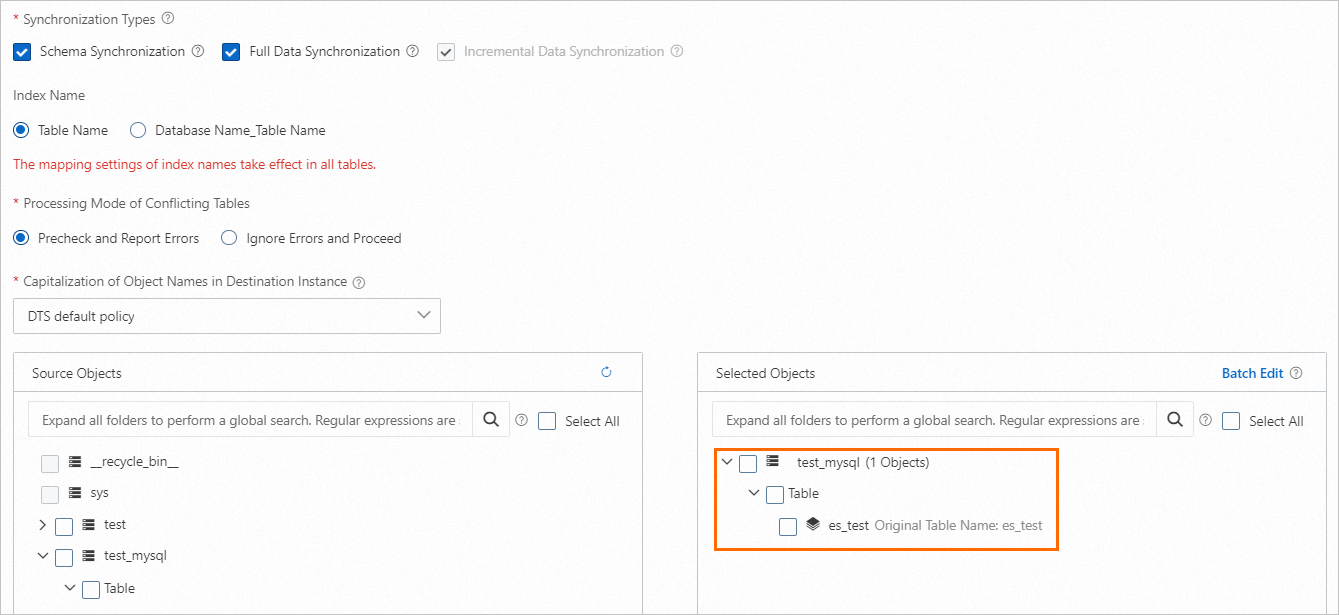

Select the objects to synchronize.

-

Configure advanced settings. The defaults are used in this tutorial.

-

In the Data Verification substep, select Apply _routing Policy to No Tables.

This setting is required when the destination cluster runs Elasticsearch V7.X.

-

-

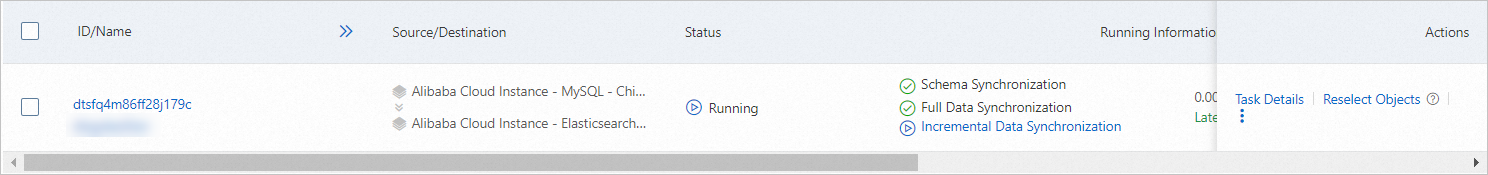

Save the task, run the pre-check, and purchase a DTS instance to start the sync. After the DTS instance is purchased, the task starts automatically. Monitor progress on the Data Synchronization page. After full sync completes, the initial 5 rows are available in Elasticsearch.

Step 3: (Optional) Verify the synchronization results

Log on to the Kibana console for your Elasticsearch cluster. In the upper-left corner, choose  > Management > Dev Tools, then click the Console tab.

> Management > Dev Tools, then click the Console tab.

Verify full data sync

Run the following query:

GET /es_test/_searchThe response confirms all 5 rows were synced:

{

"took" : 10,

"timed_out" : false,

"_shards" : {

"total" : 5,

"successful" : 5,

"skipped" : 0,

"failed" : 0

},

"hits" : {

"total" : {

"value" : 5,

"relation" : "eq"

},

"max_score" : 1.0,

"hits" : [

{

"_index" : "es_test",

"_type" : "es_test",

"_id" : "3",

"_score" : 1.0,

"_source" : {

"id" : 3,

"name" : "user3",

"age" : 43,

"hobby" : "game"

}

},

{

"_index" : "es_test",

"_type" : "es_test",

"_id" : "5",

"_score" : 1.0,

"_source" : {

"id" : 5,

"name" : "user5",

"age" : 42,

"hobby" : "basketball"

}

},

{

"_index" : "es_test",

"_type" : "es_test",

"_id" : "4",

"_score" : 1.0,

"_source" : {

"id" : 4,

"name" : "user4",

"age" : 24,

"hobby" : "run"

}

},

{

"_index" : "es_test",

"_type" : "es_test",

"_id" : "2",

"_score" : 1.0,

"_source" : {

"id" : 2,

"name" : "user2",

"age" : 23,

"hobby" : "sport"

}

},

{

"_index" : "es_test",

"_type" : "es_test",

"_id" : "1",

"_score" : 1.0,

"_source" : {

"id" : 1,

"name" : "user1",

"age" : 22,

"hobby" : "music"

}

}

]

}

}Verify incremental data sync

-

Insert a new row into the source table:

INSERT INTO `test_mysql`.`es_test` (`id`,`name`,`age`,`hobby`) VALUES (6,'user6',30,'dance'); -

After the row is replicated, run

GET /es_test/_searchagain. The response now shows 6 hits, including the new record:{ "took" : 541, "timed_out" : false, "_shards" : { "total" : 5, "successful" : 5, "skipped" : 0, "failed" : 0 }, "hits" : { "total" : { "value" : 6, "relation" : "eq" }, "max_score" : 1.0, "hits" : [ { "_index" : "es_test", "_type" : "es_test", "_id" : "3", "_score" : 1.0, "_source" : { "id" : 3, "name" : "user3", "age" : 43, "hobby" : "game" } }, { "_index" : "es_test", "_type" : "es_test", "_id" : "5", "_score" : 1.0, "_source" : { "id" : 5, "name" : "user5", "age" : 42, "hobby" : "basketball" } }, { "_index" : "es_test", "_type" : "es_test", "_id" : "4", "_score" : 1.0, "_source" : { "id" : 4, "name" : "user4", "age" : 24, "hobby" : "run" } }, { "_index" : "es_test", "_type" : "es_test", "_id" : "2", "_score" : 1.0, "_source" : { "id" : 2, "name" : "user2", "age" : 23, "hobby" : "sport" } }, { "_index" : "es_test", "_type" : "es_test", "_id" : "6", "_score" : 1.0, "_source" : { "name" : "user6", "id" : 6, "age" : 30, "hobby" : "dance" } }, { "_index" : "es_test", "_type" : "es_test", "_id" : "1", "_score" : 1.0, "_source" : { "id" : 1, "name" : "user1", "age" : 22, "hobby" : "music" } } ] } }

What's next

-

Overview of data synchronization scenarios — supported source and destination combinations