Audio and video transcoding consumes significant compute resources in media pipelines. CloudFlow and Function Compute together provide an elastic, highly available processing system that scales automatically, removes infrastructure management overhead, and reduces deployment effort from weeks to days.

Use cases

Dedicated cloud-based transcoding services handle most scenarios. A custom serverless pipeline is a better fit when you need:

Elastic video processing -- An existing FFmpeg-based service runs on virtual machines or containers and needs millisecond-level auto scaling without infrastructure management.

Batch processing at scale -- Hundreds of 1080p videos (each over 4 GB) are generated every Friday and must be processed within a few hours.

Custom processing logic -- Beyond transcoding, the pipeline needs to log transcoding details to a database, prefetch popular videos to Alibaba Cloud CDN (CDN) points of presence (PoPs), add watermarks, generate GIF thumbnails, or adjust transcoding parameters -- without disrupting existing services.

Direct access to source files -- Video files in File Storage NAS (NAS) or on Elastic Compute Service (ECS) disks are processed in place, without migrating them to Object Storage Service (OSS).

Custom audio processing -- Convert audio formats, set custom sample rates, or apply noise reduction.

Cost-effective lightweight processing -- Simple operations like generating a GIF from the first few frames of a video or querying the duration of a media file.

How it works

Conventional self-managed architecture

A traditional approach uses ECS instances, OSS, and CDN to store, process, and deliver media files. You provision servers, install FFmpeg, build a processing pipeline, and manage scaling, monitoring, and deployments yourself.

Serverless architecture with CloudFlow

CloudFlow orchestrates Function Compute functions into a video processing workflow that handles the full pipeline -- from upload trigger to final output.

When a user uploads an .mp4 video to an OSS bucket, an OSS event trigger starts a CloudFlow execution. The workflow runs three stages:

Segment -- Splits the video into smaller chunks at specified time intervals.

Transcode -- Processes each segment in parallel into one or more target formats (for example, MP4 or FLV).

Merge -- Reassembles the transcoded segments into complete output files.
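The three stages can be sketched as FFmpeg command lines. This is a minimal illustration, not the template's actual function code: the helper names and file names are hypothetical, and it assumes FFmpeg's segment muxer and concat demuxer are used for splitting and merging. The helpers only build argument lists, so they can be inspected without FFmpeg installed.

```python
from typing import List


def segment_cmd(src: str, segment_seconds: int, out_pattern: str, index_file: str) -> List[str]:
    """Split src into chunks of roughly segment_seconds without re-encoding."""
    return [
        "ffmpeg", "-i", src,
        "-c", "copy",                  # stream copy: cuts land on keyframes
        "-f", "segment",               # FFmpeg segment muxer
        "-segment_time", str(segment_seconds),
        "-segment_list", index_file,   # index file that lists the segments
        "-reset_timestamps", "1",
        out_pattern,                   # for example "seg_%03d.mp4"
    ]


def transcode_cmd(segment: str, dst: str) -> List[str]:
    """Transcode one segment; FFmpeg picks default codecs from the dst extension."""
    return ["ffmpeg", "-y", "-i", segment, dst]


def concat_list(segments: List[str]) -> str:
    """Build the text file that the concat demuxer reads (one 'file' line per segment).

    Assumes paths contain no single quotes; no escaping is done here.
    """
    return "".join(f"file '{s}'\n" for s in segments)


def merge_cmd(list_file: str, dst: str) -> List[str]:
    """Join transcoded segments listed in list_file without re-encoding."""
    return ["ffmpeg", "-f", "concat", "-safe", "0", "-i", list_file, "-c", "copy", dst]
```

Each list can be passed to `subprocess.run(cmd, check=True)` in an environment where FFmpeg is available.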

This architecture provides three capabilities:

Parallel multi-format transcoding -- A single source video is transcoded to multiple formats simultaneously. Custom processing steps such as watermarking or database updates can run at any stage.

Automatic scaling -- When multiple files arrive at once, Function Compute scales out to process them in parallel.

Large video optimization -- Oversized videos are segmented, transcoded in parallel, and merged. Shorter segment intervals speed up transcoding for larger files.

Video segmentation splits the video stream into multiple segments at specified time intervals and records segment information in an index file.
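To see why shorter segment intervals increase parallelism, the following sketch estimates how many transcode tasks a single video fans out into. The function names are hypothetical, and the count is an estimate: when segments are cut on keyframes, the actual number can differ slightly.

```python
import math
from itertools import product
from typing import List, Sequence, Tuple


def segment_count(duration_seconds: float, segment_seconds: int) -> int:
    """Estimate the number of segments for a video of the given duration."""
    if segment_seconds <= 0:
        raise ValueError("segment_seconds must be positive")
    return math.ceil(duration_seconds / segment_seconds)


def transcode_tasks(n_segments: int, dst_formats: Sequence[str]) -> List[Tuple[int, str]]:
    """One task per (segment index, target format) pair; all can run in parallel."""
    return list(product(range(n_segments), dst_formats))


# A 600-second video with 15-second segments and two target formats:
n = segment_count(600, 15)                  # 40 segments
tasks = transcode_tasks(n, ["mp4", "flv"])  # 80 independent transcode tasks
```

With 60-second segments the same video yields only 10 segments, so fewer functions run concurrently and each one processes more data.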

Serverless vs. self-managed comparison

Engineering efficiency

| Dimension | Serverless solution | Self-managed architecture |
|---|---|---|
| Infrastructure | None. | Provision and manage servers, load balancers, and storage. |
| Development focus | Business logic only. Serverless Devs handles orchestration and deployment. | Business logic plus runtime environment setup: software installation, service configuration, and security updates. |
| Parallel and distributed processing | CloudFlow orchestrates parallel processing across videos and distributed processing within a single large video. Stability and monitoring are built in. | Requires custom development for parallelism and a separate monitoring system. |
| Learning curve | Programming language skills and FFmpeg knowledge. | Additional expertise in Kubernetes, ECS, networking, and infrastructure management. |
| Time to production | ~3 person-days: 2 for development and debugging, 1 for stress testing. | 30+ person-days: hardware procurement, environment configuration, system development, testing, monitoring, alerting, and canary release. |

Scaling and operations

| Dimension | Serverless solution | Self-managed architecture |
|---|---|---|
| Auto scaling | Function Compute scales in milliseconds to absorb traffic surges, with no O&M overhead. | Requires Server Load Balancer (SLB). Scaling is slower than Function Compute. |
| Monitoring and alerting | Fine-grained metrics for flow executions (CloudFlow) and function executions (Function Compute). Latency and logs are queryable per invocation. | Limited to infrastructure-level metrics (auto scaling groups or containers). |

Prerequisites

Before you begin, make sure that you have:

Function Compute activated

An OSS bucket in the same region as the workflow

CloudFlow activated

File Storage NAS activated

Virtual Private Cloud (VPC) activated

Serverless Devs and its dependencies installed

Serverless Devs credentials configured

Deploy the video processing workflow

CloudFlow orchestrates multiple functions into a video processing pipeline. Serverless Devs handles the deployment.

Open the multimedia-process-flow template.

In the Development & Experience section, click Deploy to go to the Function Compute application center.

On the Create Application page, configure the following parameters, then click Create and Deploy Default Environment.

Note: If the Failed to obtain the role message appears, ask the Alibaba Cloud account owner to attach the AliRAMReadOnlyAccess and AliyunFCFullAccess policies to your RAM user. For more information, see Grant permissions to a RAM user by using an Alibaba Cloud account.

Basic Configurations

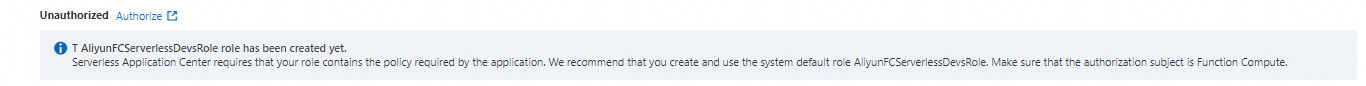

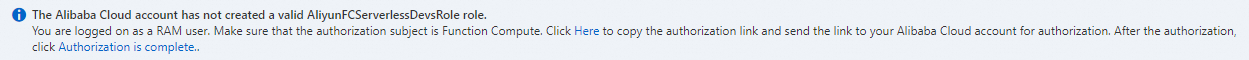

| Parameter | Default | Description |
|---|---|---|
| Deployment Type | -- | Select Directly Deploy. |
| Role Name | -- | If this is your first time creating an application with an Alibaba Cloud account, click Authorize Now to go to the Role Templates page, create the AliyunFCServerlessDevsRole service-linked role, and click Confirm Authorization Policy. If you are using a RAM user, copy the authorization link shown on the page and send it to the Alibaba Cloud account owner for authorization. After authorization completes, click Authorized. |

Advanced Settings

| Parameter | Default | Description |
|---|---|---|
| Region | -- | The region where the application is deployed. |
| Workflow RAM Role ARN | -- | The service role used to run the workflow. Create a service role in advance and attach the AliyunFCInvocationAccess policy. |
| Role Service Function Compute | AliyunFCDefaultRole | The service role that allows Function Compute to access other cloud services. |
| Object Storage Bucket Name | -- | Name of a bucket in the same region as the workflow and functions. |
| Prefix | src | The directory prefix for source videos. |
| The directory in which the transcoded video is saved | dst | The output directory for transcoded videos. |
| OSS trigger RAM role ARN | AliyunOSSEventNotificationRole | The role used for OSS event notifications. If this is your first deployment, click Create Role to create and authorize this role. |

Wait 1--2 minutes for the deployment to complete. The system creates five functions and the multimedia-process-flow-3oih workflow. View the results in the Function Compute console and the CloudFlow console.

| Function | Purpose |
|---|---|
| oss-trigger-workflow | Triggers workflow execution when a new video is uploaded to the specified OSS directory. |
| split | Segments the video in the working directory based on the configured segment length. |
| transcode | Transcodes each segment into the specified output format. |
| merge | Concatenates transcoded segments into a complete output file. |
| after-process | Cleans up the working directory. |
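As a rough illustration of what a trigger function such as oss-trigger-workflow has to do, the sketch below turns an OSS event payload into workflow inputs. It is not the template's code. It assumes the event is a JSON document with an `events` array whose entries carry `oss.bucket.name` and `oss.object.key`, and it reuses the input field names from the example execution input in this topic. The function name and default values are hypothetical, and the call that actually starts the CloudFlow execution is omitted.

```python
import json
from typing import Any, Dict, List, Sequence


def build_workflow_inputs(
    event: bytes,
    output_prefix: str = "dst",           # assumed default output directory
    segment_time_seconds: int = 15,       # assumed segment length
    dst_formats: Sequence[str] = ("mp4", "flv"),
) -> List[Dict[str, Any]]:
    """Return one workflow input per uploaded object in the OSS event."""
    payload = json.loads(event)
    inputs = []
    for record in payload.get("events", []):
        oss = record["oss"]
        inputs.append({
            "oss_bucket_name": oss["bucket"]["name"],
            "video_key": oss["object"]["key"],
            "output_prefix": output_prefix,
            "segment_time_seconds": segment_time_seconds,
            "dst_formats": list(dst_formats),
        })
    return inputs
```

Each returned dictionary can be serialized with `json.dumps` and passed as the input of one workflow execution.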

Verify deployment

Log on to the CloudFlow console. Select the target region in the top navigation bar.

On the workflows page, click the multimedia-process-flow-3oih workflow.

On the Execution Records tab, click Started Execution.

In the Execute Workflow panel, set Execution Name and Input of Execution, then click OK. Example input:

    {
      "oss_bucket_name": "buckettestfnf",
      "video_key": "source/test.mov",
      "output_prefix": "outputs",
      "segment_time_seconds": 15,
      "dst_formats": [
        "mp4",
        "flv"
      ]
    }

Check the execution result. On the Execution Details page, open the Basic Information tab. Execution Succeeded under Execution Status confirms a successful run.

Verify the output files. In the OSS console, navigate to the target bucket and the /outputs directory. Confirm that the transcoded video files are present.

FAQ

How do I add custom processing steps like database logging or CDN prefetching?

Add steps at any point in the workflow -- preprocessing before transcoding starts, or post-processing after it finishes. For details on the base deployment, see Deploy the video processing workflow.
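A post-processing step that logs transcoding details might look like the sketch below. SQLite from the Python standard library stands in for whatever database you actually use, and the table name, column names, and function name are hypothetical.

```python
import sqlite3


def record_transcode(conn: sqlite3.Connection, video_key: str,
                     dst_format: str, elapsed_seconds: float) -> None:
    """Append one row describing a finished transcode job."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS transcode_log ("
        "video_key TEXT, dst_format TEXT, elapsed_seconds REAL)"
    )
    # Parameterized query: values are never interpolated into the SQL string.
    conn.execute(
        "INSERT INTO transcode_log VALUES (?, ?, ?)",
        (video_key, dst_format, elapsed_seconds),
    )
    conn.commit()
```

A function wrapping this logic can be added as an extra workflow step after the merge stage, so the transcoding functions themselves stay unchanged.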

How do I add features without disrupting existing services?

CloudFlow invokes each function independently, so updating one function does not affect the others. For safe rollouts, use function versions and aliases to run canary releases. See Manage versions.
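The idea behind a canary release is weighted routing: an alias sends a small share of invocations to the new version and the rest to the stable one. The sketch below models that idea only. It is not how Function Compute implements aliases, and the function and parameter names are hypothetical.

```python
import random
from typing import Callable


def pick_version(stable: str, canary: str, canary_weight: float,
                 rng: Callable[[], float] = random.random) -> str:
    """Return the version that should serve one invocation.

    canary_weight is the fraction of traffic (0.0 to 1.0) sent to the canary.
    """
    if not 0.0 <= canary_weight <= 1.0:
        raise ValueError("canary_weight must be between 0 and 1")
    return canary if rng() < canary_weight else stable
```

Starting with a small weight such as 0.05 and raising it as monitoring stays healthy limits how many executions a faulty update can affect.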

Do I need the full workflow for simple tasks like generating a GIF or querying media duration?

No. A single Function Compute function running FFmpeg commands is enough for lightweight operations. See the fc-oss-ffmpeg sample project.
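The following sketch shows the kind of FFmpeg and ffprobe invocations such a function might run. It is independent of the fc-oss-ffmpeg project: the helper names and default values are hypothetical, and the helpers only build argument lists and parse output.

```python
from typing import List


def gif_cmd(src: str, dst: str, seconds: int = 3, fps: int = 10, width: int = 320) -> List[str]:
    """Build an FFmpeg command that turns the first few seconds of src into a GIF."""
    return [
        "ffmpeg", "-y",
        "-t", str(seconds),                    # read only the first N seconds of the input
        "-i", src,
        "-vf", f"fps={fps},scale={width}:-1",  # lower frame rate, keep aspect ratio
        dst,
    ]


def duration_cmd(src: str) -> List[str]:
    """Build an ffprobe command that prints the container duration in seconds."""
    return [
        "ffprobe", "-v", "error",
        "-show_entries", "format=duration",
        "-of", "default=noprint_wrappers=1:nokey=1",
        src,
    ]


def parse_duration(stdout: str) -> float:
    """Convert ffprobe's plain-text output (for example '12.345000') to a float."""
    return float(stdout.strip())
```

Inside a function handler, each command list can be run with `subprocess.run(cmd, capture_output=True, text=True, check=True)`, and the `stdout` of the ffprobe call can be passed to `parse_duration`.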

Can I process files stored in NAS or on ECS disks without migrating to OSS?

Yes. Mount a NAS file system to Function Compute and read files directly from the mount point. See Configure a NAS file system.
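Once the file system is mounted, the function sees it as an ordinary directory. The sketch below lists video files under a mount point. The path passed in (for example `/mnt/nas`) and the set of file extensions are assumptions; use the mount path configured for your function.

```python
from pathlib import Path
from typing import List

# Extensions treated as video files in this sketch (an assumption, extend as needed).
VIDEO_SUFFIXES = {".mp4", ".mov", ".flv"}


def find_videos(mount_point: str) -> List[str]:
    """Return sorted paths of video files found anywhere under mount_point."""
    root = Path(mount_point)
    return sorted(
        str(p) for p in root.rglob("*")
        if p.is_file() and p.suffix.lower() in VIDEO_SUFFIXES
    )
```

The returned paths can be handed straight to FFmpeg as input files, so no copy to OSS is needed before processing.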