This topic describes how to migrate data from a Teradata database to an AnalyticDB for PostgreSQL instance by using Data Transmission Service (DTS).

Prerequisites

- The new DTS console is used. You can configure a data migration task for this scenario only in the new DTS console.

- The version of the source Teradata database is 17 or earlier.

- The destination AnalyticDB for PostgreSQL instance is created. For more information, see Create an instance.

- The available storage space of the destination AnalyticDB for PostgreSQL instance is larger than the total size of the data in the Teradata database.
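The storage prerequisite above can be checked before you create the task. The following is a minimal sketch, assuming you have already collected per-table sizes from the Teradata database and the free space of the destination instance by your own means. The function names and the figures are hypothetical and are not part of DTS.

```python
def total_source_bytes(table_sizes: dict[str, int]) -> int:
    """Sum the sizes (in bytes) of all tables that you plan to migrate."""
    return sum(table_sizes.values())


def has_enough_space(table_sizes: dict[str, int], destination_free_bytes: int) -> bool:
    """Return True only if the free space is strictly larger than the source data size."""
    return destination_free_bytes > total_source_bytes(table_sizes)


GIB = 1024 ** 3

# Hypothetical figures for illustration only.
sizes = {"sales.orders": 300 * GIB, "sales.customers": 40 * GIB}
print(has_enough_space(sizes, destination_free_bytes=500 * GIB))
```

The comparison is strict because the prerequisite requires the available space to be larger than, not equal to, the total size of the source data.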

Limits

- During schema migration, DTS migrates foreign keys from the source database to the destination database.

- During full data migration and incremental data migration, DTS temporarily disables the constraint check and cascade operations on foreign keys at the session level. If you perform cascade update or delete operations on the source database during data migration, data inconsistency may occur.

| Category | Description |
|---|---|
| Limits on the source database | |
| Other limits | |

Billing

| Migration type | Instance configuration fee | Internet traffic fee |
|---|---|---|
| Schema migration and full data migration | Free of charge. | Charged only when data is migrated from Alibaba Cloud over the Internet. For more information, see Billing overview. |

Migration types

- Schema migration

Data Transmission Service (DTS) migrates the schemas of objects from the source database to the destination database.

Warning Teradata and AnalyticDB for PostgreSQL are heterogeneous databases, and their data types do not map one to one. We recommend that you evaluate the impact of data type conversion on your business because data type conversion may cause data inconsistency or task failures. For more information, see Data type mappings between heterogeneous databases.

- Full data migration

DTS migrates the existing data of objects from the source database to the destination database.
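One way to act on the data type warning under Schema migration is to list the columns whose source types deserve a manual look before the task runs. The sketch below assumes you have exported column names and Teradata type names yourself. The mapping is a small illustrative subset chosen for this example and is not the mapping that DTS applies, so consult Data type mappings between heterogeneous databases for the authoritative list.

```python
# Illustrative only: NOT the mapping that DTS applies. These are source types
# that this example assumes have a like-named PostgreSQL counterpart.
ILLUSTRATIVE_TYPE_MAP = {
    "SMALLINT": "smallint",
    "INTEGER": "integer",
    "BIGINT": "bigint",
    "DATE": "date",
    "VARCHAR": "varchar",
}


def columns_needing_review(columns: dict[str, str]) -> list[str]:
    """Return the sorted names of columns whose type is absent from the illustrative map."""
    return sorted(
        name
        for name, type_name in columns.items()
        if type_name.upper() not in ILLUSTRATIVE_TYPE_MAP
    )


# Hypothetical table definition for illustration only.
table_columns = {"id": "INTEGER", "flag": "BYTEINT", "validity": "PERIOD(DATE)"}
print(columns_needing_review(table_columns))
```

A column that appears in the result is not necessarily unsupported. It only means the conversion is worth checking against the official mapping.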

Permissions required for database accounts

| Database | Schema migration | Full data migration |
|---|---|---|
| Teradata database | Read permissions on the objects to be migrated | |
| AnalyticDB for PostgreSQL instance | Read and write permissions on the destination database | |

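For information about how to create a database account and grant permissions, see the following topics:

- Self-managed Teradata database: Adding Permissions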

- AnalyticDB for PostgreSQL instance: Create a database account and Manage users and permissions

Procedure

- Go to the Data Migration Tasks page.

- Log on to the Data Management (DMS) console.

- In the top navigation bar, click DTS.

- In the left-side navigation pane, choose .

Note

- Operations may vary based on the mode and layout of the DMS console. For more information, see Simple mode and Configure the DMS console based on your business requirements.

- You can also go to the Data Migration Tasks page of the new DTS console.

- From the drop-down list next to Data Migration Tasks, select the region in which the data migration instance resides. Note If you use the new DTS console, you must select the region in which the data migration instance resides in the upper-left corner.

- Click Create Task. On the page that appears, configure the source and destination databases.

Warning After you select the source and destination instances, we recommend that you read the limits displayed at the top of the page. Doing so helps ensure that the task runs as expected and helps prevent data inconsistency.

| Section | Parameter | Description |
|---|---|---|
| N/A | Task Name | The name of the task. DTS automatically generates a task name. We recommend that you specify an informative name to identify the task. You do not need to specify a unique task name. |
| Source Database | Database Type | The type of the source database. Select Teradata. |
| Source Database | Access Method | The access method of the source database. Select Public IP Address. Note If your source database is a self-managed database, you must deploy the network environment for the database. For more information, see Preparation overview. |
| Source Database | Instance Region | The region in which the Teradata database resides. |
| Source Database | Hostname or IP address | The endpoint that is used to access the Teradata database. In this example, the public IP address is used. |
| Source Database | Port Number | The service port number of the Teradata database. Default value: 1025. |
| Source Database | Database Account | The account of the Teradata database. For more information about the permissions that are required for the account, see the Permissions required for database accounts section of this topic. |
| Source Database | Database Password | The password of the database account. |
| Destination Database | Database Type | The type of the destination database. Select AnalyticDB for PostgreSQL. |
| Destination Database | Access Method | The access method of the destination database. Select Alibaba Cloud Instance. |
| Destination Database | Instance Region | The region in which the destination AnalyticDB for PostgreSQL instance resides. |
| Destination Database | Instance ID | The ID of the destination AnalyticDB for PostgreSQL instance. |
| Destination Database | Database Name | The name of the destination database in the destination AnalyticDB for PostgreSQL instance. |
| Destination Database | Database Account | The database account of the destination AnalyticDB for PostgreSQL instance. For more information about the permissions that are required for the account, see the Permissions required for database accounts section of this topic. |
| Destination Database | Database Password | The password of the database account. |

- If a whitelist is configured for your self-managed database, you must add the CIDR blocks of DTS servers to the whitelist. Then, click Test Connectivity and Proceed. Warning If the CIDR blocks of DTS servers are automatically or manually added to the whitelist of a database or an instance, or to the security group rules of an ECS instance, security risks may arise. Therefore, before you use DTS to migrate data, you must understand and acknowledge the potential risks and take preventive measures, including but not limited to the following measures: enhance the security of your username and password, limit the ports that are exposed, authenticate API calls, regularly check the whitelist or ECS security group rules and forbid unauthorized CIDR blocks, or connect the database to DTS by using Express Connect, VPN Gateway, or Smart Access Gateway.
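Before you click Test Connectivity and Proceed, it can help to confirm that every required CIDR block is covered by an entry in the whitelist. The following is a minimal sketch that uses Python's standard `ipaddress` module. The CIDR blocks shown are reserved documentation ranges used as placeholders, not the actual CIDR blocks of DTS servers, and the function name is hypothetical.

```python
import ipaddress


def missing_blocks(required_cidrs: list[str], whitelist_cidrs: list[str]) -> list[str]:
    """Return the required CIDR blocks that no whitelist entry fully covers."""
    whitelist = [ipaddress.ip_network(cidr) for cidr in whitelist_cidrs]
    missing = []
    for cidr in required_cidrs:
        block = ipaddress.ip_network(cidr)
        # subnet_of() raises TypeError across IP versions, so compare versions first.
        covered = any(
            block.version == entry.version and block.subnet_of(entry)
            for entry in whitelist
        )
        if not covered:
            missing.append(cidr)
    return missing


# Placeholder ranges for illustration only; use the blocks published for your region.
required = ["192.0.2.0/25", "198.51.100.0/24"]
whitelist = ["192.0.2.0/24"]
print(missing_blocks(required, whitelist))
```

Checking for full coverage rather than exact string matches also accepts a broader whitelist entry that contains a required block. Keeping entries as narrow as possible is consistent with the security guidance in the warning above.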

- Configure objects to migrate and advanced settings.

- Basic Settings

Migration Type: Select both Schema Migration and Full Data Migration.

Note In this scenario, DTS does not support incremental data migration. To ensure data consistency, we recommend that you do not write data to the source instance during data migration.

Processing Mode of Conflicting Tables:

- Precheck and Report Errors: checks whether the destination database contains tables that have the same names as tables in the source database. If the source and destination databases do not contain identical table names, the precheck is passed. Otherwise, an error is returned during the precheck and the data migration task cannot be started.

  Note You can use the object name mapping feature to rename the tables that are migrated to the destination database. You can use this feature if the source and destination databases contain tables that have identical names and the tables in the destination database cannot be deleted or renamed. For more information, see Map object names.

- Ignore Errors and Proceed: skips the precheck for identical table names in the source and destination databases.

  Warning If you select Ignore Errors and Proceed, data inconsistency may occur, and your business may be exposed to potential risks.

  - If the source and destination databases have the same schema, DTS does not migrate data records that have the same primary keys as data records in the destination database.

  - If the source and destination databases have different schemas, only some columns are migrated or the data migration task fails. Proceed with caution.

Source Objects: Select one or more objects from the Source Objects section and click the icon to add the objects to the Selected Objects section.

Note You can select columns, tables, or schemas as the objects to be migrated. If you select tables or columns as the objects to be migrated, DTS does not migrate other objects, such as views, triggers, or stored procedures, to the destination database.

Selected Objects:

- To rename an object that you want to migrate to the destination instance, right-click the object in the Selected Objects section. For more information, see Map the name of a single object.

- To rename multiple objects at a time, click Batch Edit in the upper-right corner of the Selected Objects section. For more information, see Map multiple object names at a time.

Note

- If you use the object name mapping feature to rename an object, other objects that are dependent on the object may fail to be migrated.

- To specify WHERE conditions to filter data, right-click an object in the Selected Objects section. In the dialog box that appears, specify the conditions. For more information, see Use SQL conditions to filter data.
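The Precheck and Report Errors mode fails the task when a table to be migrated has the same name as a table that already exists in the destination database. The sketch below reproduces that idea locally so that conflicts can be spotted before the precheck runs. It assumes exact, case-sensitive name comparison and models object name mapping as a plain dictionary. Both are simplifications made for this example and do not describe how DTS implements the check.

```python
from typing import Optional


def conflicting_tables(
    source_tables: list[str],
    destination_tables: list[str],
    name_mapping: Optional[dict[str, str]] = None,
) -> list[str]:
    """Return the destination-side names that already exist in the destination database."""
    mapping = name_mapping or {}
    existing = set(destination_tables)
    # Apply the (hypothetical) rename first, then test against existing tables.
    targets = (mapping.get(table, table) for table in source_tables)
    return sorted(target for target in targets if target in existing)


# Hypothetical table names for illustration only.
source = ["orders", "customers"]
destination = ["orders", "audit_log"]

print(conflicting_tables(source, destination))
print(conflicting_tables(source, destination, {"orders": "orders_td"}))
```

The second call shows how renaming a source table through object name mapping removes the conflict without touching the existing destination table.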

- Advanced Settings

Set Alerts: Specifies whether to set alerts for the data migration task. If the task fails or the migration latency exceeds the threshold, the alert contacts receive notifications. Valid values:

- No: does not set alerts.

- Yes: sets alerts. If you select Yes, you must also set the alert threshold and alert contacts. For more information, see Configure monitoring and alerting when you create a DTS task.

Retry Time for Failed Connections: The retry time range for failed connections. If the source or destination database fails to be connected after the data migration task is started, DTS immediately retries a connection within the time range. Valid values: 10 to 1440. Unit: minutes. Default value: 720. We recommend that you set the parameter to a value greater than 30. If DTS reconnects to the source and destination databases within the specified time range, DTS resumes the data migration task. Otherwise, the data migration task fails.

Note

- If you set different retry time ranges for multiple data migration tasks that share the same source or destination database, the value that is set later takes precedence.

- If DTS retries a connection, you are charged for the operation of the DTS instance. We recommend that you specify the retry time based on your business needs and release the DTS instance at your earliest opportunity after the source and destination instances are released.

Capitalization of Object Names in Destination Instance: The capitalization of database names, table names, and column names in the destination instance. By default, DTS default policy is selected. You can select other options to ensure that the capitalization of object names is consistent with the default capitalization of object names in the source or destination database. For more information, see Specify the capitalization of object names in the destination instance.
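The constraints on Retry Time for Failed Connections (10 to 1440 minutes, default 720, recommended to be greater than 30) can be captured in a small validation helper, for example when task settings are reviewed in a script before they are entered in the console. This is a sketch only. The function name and the returned labels are hypothetical and do not correspond to any DTS API.

```python
MIN_RETRY_MINUTES = 10
MAX_RETRY_MINUTES = 1440
DEFAULT_RETRY_MINUTES = 720
RECOMMENDED_FLOOR_MINUTES = 30  # the documented recommendation is a value greater than this


def check_retry_minutes(value: int = DEFAULT_RETRY_MINUTES) -> str:
    """Validate a retry time in minutes and report whether it meets the recommendation."""
    if not MIN_RETRY_MINUTES <= value <= MAX_RETRY_MINUTES:
        raise ValueError(
            f"retry time must be between {MIN_RETRY_MINUTES} and "
            f"{MAX_RETRY_MINUTES} minutes, got {value}"
        )
    if value <= RECOMMENDED_FLOOR_MINUTES:
        return "valid-but-below-recommendation"
    return "ok"


print(check_retry_minutes())    # uses the default of 720 minutes
print(check_retry_minutes(20))  # allowed, but not greater than 30
```

Separating the hard range from the recommendation keeps a short retry window usable while still flagging it for review.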


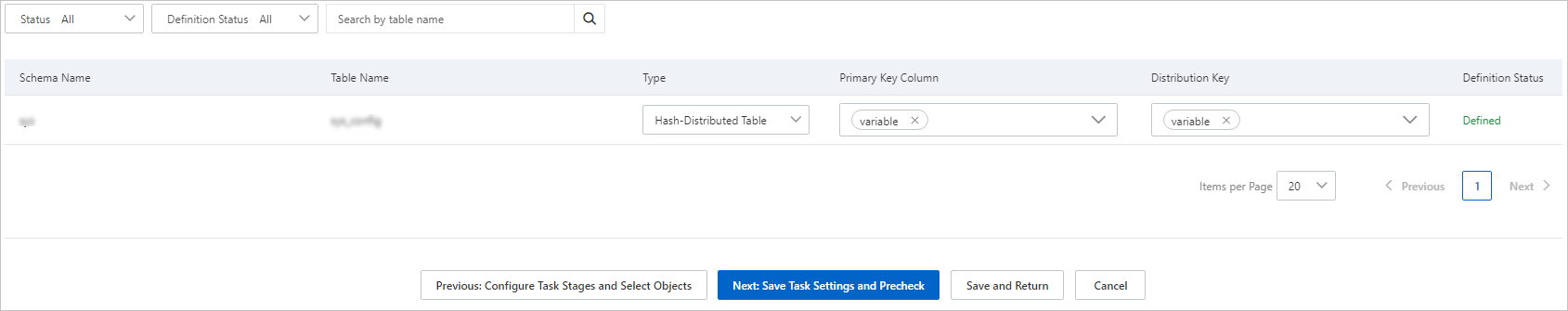

- Specify the primary key columns and distribution key columns of the tables that you want to migrate to the AnalyticDB for PostgreSQL instance.

- In the lower part of the page, click Next: Save Task Settings and Precheck. Note

- Before you can start the data migration task, DTS performs a precheck. You can start the data migration task only after the task passes the precheck.

- If the task fails to pass the precheck, click View Details next to each failed item. After you troubleshoot the issues based on the causes, run a precheck again.

- If an alert is triggered for an item during the precheck:

- If an alert item cannot be ignored, click View Details next to the failed item and troubleshoot the issues. Then, run a precheck again.

- If an alert item can be ignored, click Confirm Alert Details. In the View Details dialog box, click Ignore. In the message that appears, click OK. Then, click Precheck Again to run a precheck again. If you ignore the alert item, data inconsistency may occur, and your business may be exposed to potential risks.

- Wait until the Success Rate value becomes 100%. Then, click Next: Purchase Instance.

- On the Purchase Instance page, specify the Instance Class parameter for the data migration instance. The following table describes the parameter.

| Section | Parameter | Description |
|---|---|---|
| Parameters | Instance Class | DTS provides several instance classes that vary in the migration speed. You can select an instance class based on your business scenario. For more information, see Specifications of data migration instances. |

- Read the Data Transmission Service (Pay-as-you-go) Service Terms and select the check box to agree to them.

- Click Buy and Start to start the data migration task. You can view the progress of the task in the task list.