The data analysis landscape has shifted from standalone relational databases to cloud-native distributed warehouses over four decades. This document traces that evolution and explains the four technology trends—cloud-native architecture, storage-compute separation, HTAP integration, and database/big-data convergence—that define modern data infrastructure, and why they matter for AnalyticDB for PostgreSQL.

Technology development trends

From standalone databases to distributed warehouses

Commercial relational databases emerged in the 1980s. Oracle, SQL Server, and Db2 processed structured data in real time using a standalone architecture. Open source relational databases such as MySQL and PostgreSQL followed in the 1990s, lowering the barrier for adoption.

Standalone architectures hit a fundamental limit: they cannot scale horizontally. As business data volumes grew, enterprises needed distributed systems. Teradata and Oracle Exadata addressed this with a scale-out architecture—but as all-in-one offerings with specific hardware requirements, they were expensive and accessible mainly to large enterprises in finance, transportation, and energy.

Google and the rise of Internet-scale workloads shifted the equation again. Hadoop and the x86-based big data stack brought distributed processing to a broader market. Open source massively parallel processing (MPP) databases such as Greenplum emerged as lower-cost alternatives, making large-scale data analysis accessible to small and medium-sized enterprises (SMEs). SQL interfaces were layered on top of MapReduce, making SQL a standard part of the big data analytics stack.

Cloud service providers, including Amazon Web Services (AWS), Microsoft Azure, Alibaba Cloud, and Google, then introduced a new generation of systems: cloud-native distributed data warehouses. These warehouses build on both database and big data technologies. They provide standard SQL interfaces and atomicity, consistency, isolation, and durability (ACID) guarantees, and they use either a Shared Everything or a Shared Nothing architecture to pool resources and scale horizontally. Resource isolation and data sharing are common requirements for cloud-native data warehouses.
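As a rough illustration of how a Shared Nothing design scales horizontally, the sketch below hash-distributes rows across segments, lets each segment aggregate only its own rows, and merges the partial results on a coordinator. The schema, the segment count, and all function names are hypothetical; this is a toy model, not how any particular warehouse implements query execution.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Row = Tuple[int, str, float]  # (order_id, region, amount); hypothetical schema

NUM_SEGMENTS = 3  # hypothetical number of shared-nothing nodes


def distribute(rows: List[Row]) -> List[List[Row]]:
    """Hash-distribute rows by order_id so each segment owns a disjoint slice."""
    segments: List[List[Row]] = [[] for _ in range(NUM_SEGMENTS)]
    for row in rows:
        segments[row[0] % NUM_SEGMENTS].append(row)
    return segments


def local_sum_by_region(segment: List[Row]) -> Dict[str, float]:
    """Partial aggregate computed by one segment over its own rows only."""
    partial: Dict[str, float] = defaultdict(float)
    for _, region, amount in segment:
        partial[region] += amount
    return dict(partial)


def gather(partials: List[Dict[str, float]]) -> Dict[str, float]:
    """Coordinator step: merge the per-segment partial sums."""
    total: Dict[str, float] = defaultdict(float)
    for partial in partials:
        for region, amount in partial.items():
            total[region] += amount
    return dict(total)


rows = [(1, "east", 10.0), (2, "west", 4.0), (3, "east", 6.0), (4, "west", 1.0)]
result = gather([local_sum_by_region(s) for s in distribute(rows)])
print(result)
```

Because no segment reads another segment's rows, adding segments spreads both the data and the aggregation work; only the small partial results travel to the coordinator.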

Current trends

Four trends define the direction of data analysis technology:

Cloud-native distributed architecture

Standalone storage cannot keep pace with the rapid growth of data in both online transaction processing (OLTP) and online analytical processing (OLAP) scenarios. According to Gartner's report "The Future of the DBMS Market Is Cloud," cloud-native architectures have become a necessity for cloud databases. Distributed databases are now the foundational technology for enterprise data infrastructure.

Storage and computing separation

The core of cloud computing is efficient resource pooling. Separating storage from compute lets each layer scale independently, enabling resource isolation and data sharing without over-provisioning either layer. Storage-compute separation is now the dominant architectural pattern for cloud data systems.
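The over-provisioning point can be made concrete with a small capacity model. The sketch below compares a coupled cluster, where every node adds a fixed amount of both compute and storage, with a separated design where each layer is sized on its own. The node shape, the units, and the workload figures are all hypothetical and chosen only for illustration.

```python
import math
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Demand:
    compute_units: int  # hypothetical unit of query-processing capacity
    storage_tb: int     # terabytes of data to hold


# Hypothetical node shape for a coupled cluster (compute and storage together).
NODE_COMPUTE_UNITS = 8
NODE_STORAGE_TB = 4


def coupled_provision(demand: Demand) -> Tuple[int, int]:
    """Return (compute_units, storage_tb) when both layers scale together."""
    nodes = max(
        math.ceil(demand.compute_units / NODE_COMPUTE_UNITS),
        math.ceil(demand.storage_tb / NODE_STORAGE_TB),
    )
    return nodes * NODE_COMPUTE_UNITS, nodes * NODE_STORAGE_TB


def separated_provision(demand: Demand) -> Tuple[int, int]:
    """Return (compute_units, storage_tb) when each layer scales on its own."""
    compute_nodes = math.ceil(demand.compute_units / NODE_COMPUTE_UNITS)
    return compute_nodes * NODE_COMPUTE_UNITS, demand.storage_tb


storage_heavy = Demand(compute_units=16, storage_tb=100)
print(coupled_provision(storage_heavy))    # compute is sized by the storage need
print(separated_provision(storage_heavy))  # compute tracks compute demand only
```

In this storage-heavy example the coupled cluster has to add nodes until storage fits, which drags compute capacity far above what the workload asks for. The separated design sizes compute from compute demand alone.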

Transaction processing and analysis integration

Traditional pipelines periodically extract data from OLTP systems and load it into separate OLAP systems for analysis. This synchronization layer adds deployment complexity, data redundancy, a delay between a write and its visibility to analytics, and cost. A hybrid transaction/analytical processing (HTAP) system serves both workloads from a single system, which removes the need for that synchronization layer.
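The staleness that a separate synchronization step introduces can be shown with a minimal sketch. It uses two in-memory SQLite databases as stand-ins for an OLTP store and an OLAP copy, plus a hypothetical batch job that syncs them. SQLite is not an HTAP engine; the table, the function names, and the sync strategy are assumptions made for illustration only.

```python
import sqlite3

# SQLite is only a stand-in here; it is not an HTAP engine.
oltp = sqlite3.connect(":memory:")
olap = sqlite3.connect(":memory:")
for db in (oltp, olap):
    db.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, amount REAL)")


def place_order(order_id: int, amount: float) -> None:
    """Transactional write against the OLTP store."""
    with oltp:  # commits on success, rolls back on error
        oltp.execute("INSERT INTO orders VALUES (?, ?)", (order_id, amount))


def sync_to_olap() -> None:
    """Hypothetical batch job that copies all rows into the OLAP store."""
    rows = oltp.execute("SELECT id, amount FROM orders").fetchall()
    with olap:
        olap.execute("DELETE FROM orders")
        olap.executemany("INSERT INTO orders VALUES (?, ?)", rows)


def total_revenue(db: sqlite3.Connection) -> float:
    """Analytical query: aggregate over all orders."""
    return db.execute("SELECT COALESCE(SUM(amount), 0) FROM orders").fetchone()[0]


place_order(1, 10.0)
sync_to_olap()
place_order(2, 5.0)  # committed after the last sync

stale_total = total_revenue(olap)  # the separate copy has not seen order 2
fresh_total = total_revenue(oltp)  # the single store sees every committed order
print(stale_total, fresh_total)
```

Until the next sync runs, the analytical copy reports a total that omits the latest order. Running the analytical query against the same store that accepted the write avoids that gap, which is the property an HTAP system aims to provide at scale.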

Integration of databases and big data technologies

Early big data systems traded consistency for distributed scalability. Over time, MPP techniques and SQL interfaces were applied on top of MapReduce, and distributed databases began incorporating big data storage formats. Both approaches converged on the same goal: large-scale data analysis with standard interfaces and reliable semantics.

Market trends

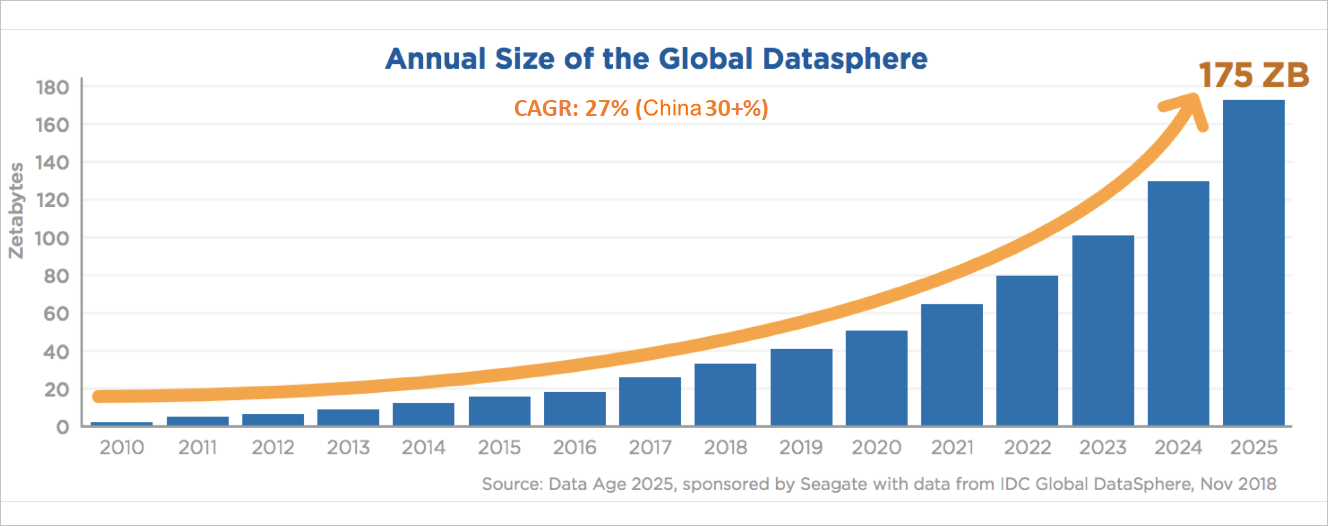

Data growth is driving sustained demand for analytics infrastructure. Between 2010 and 2025, the compound annual growth rate (CAGR) of data volume is expected to reach 27% worldwide and 30% in China. By 2025, according to Gartner:

Live data will account for 30% of all data

Unstructured live data will account for 80% of all live data

Data stored in the cloud will account for 45% of all data

Databases stored in the cloud will account for 75% of all databases
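To get a feel for what the growth rates quoted above imply, the following worked calculation assumes that data volume compounds at a constant annual rate $r$ over the $n = 15$ years from 2010 to 2025. The constant-rate assumption and the symbols $V_{2010}$ and $V_{2025}$ are introduced here for illustration and are not part of the cited forecast.

$$
V_{2025} = V_{2010}\,(1 + r)^{n}, \qquad n = 15
$$

$$
\frac{V_{2025}}{V_{2010}} = 1.27^{15} \approx 36 \ \text{(worldwide)}, \qquad \frac{V_{2025}}{V_{2010}} = 1.30^{15} \approx 51 \ \text{(China)}
$$

Under that assumption, a three-point difference in CAGR compounds into a noticeably larger multiple over 15 years.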

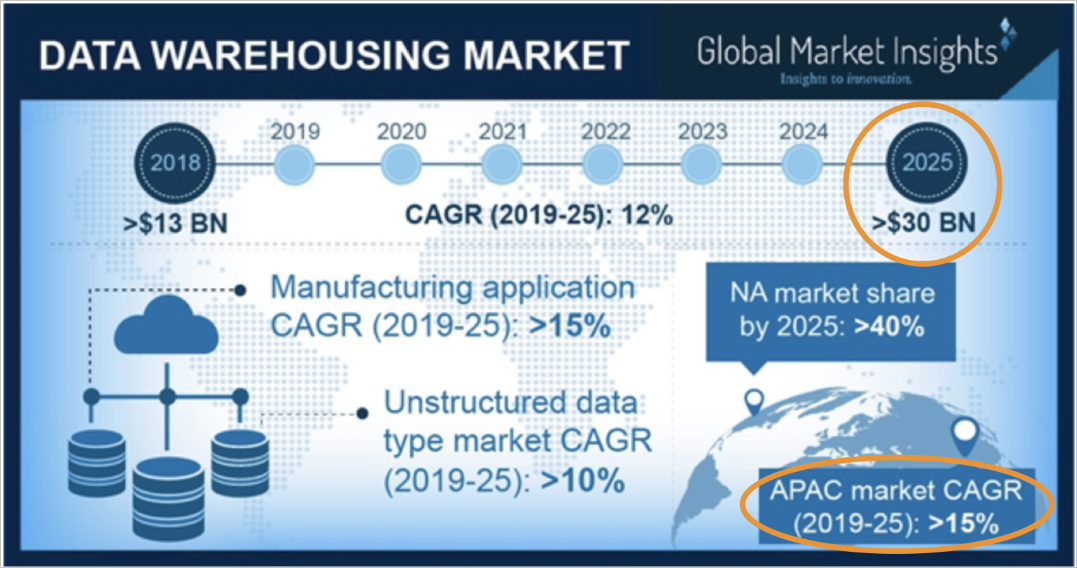

According to Global Market Insights, the data warehousing market is projected to grow at more than 12% CAGR worldwide and more than 15% in China between 2019 and 2025. Demand comes from finance, Internet, manufacturing, government, and new retail industries.

Alibaba Cloud database services

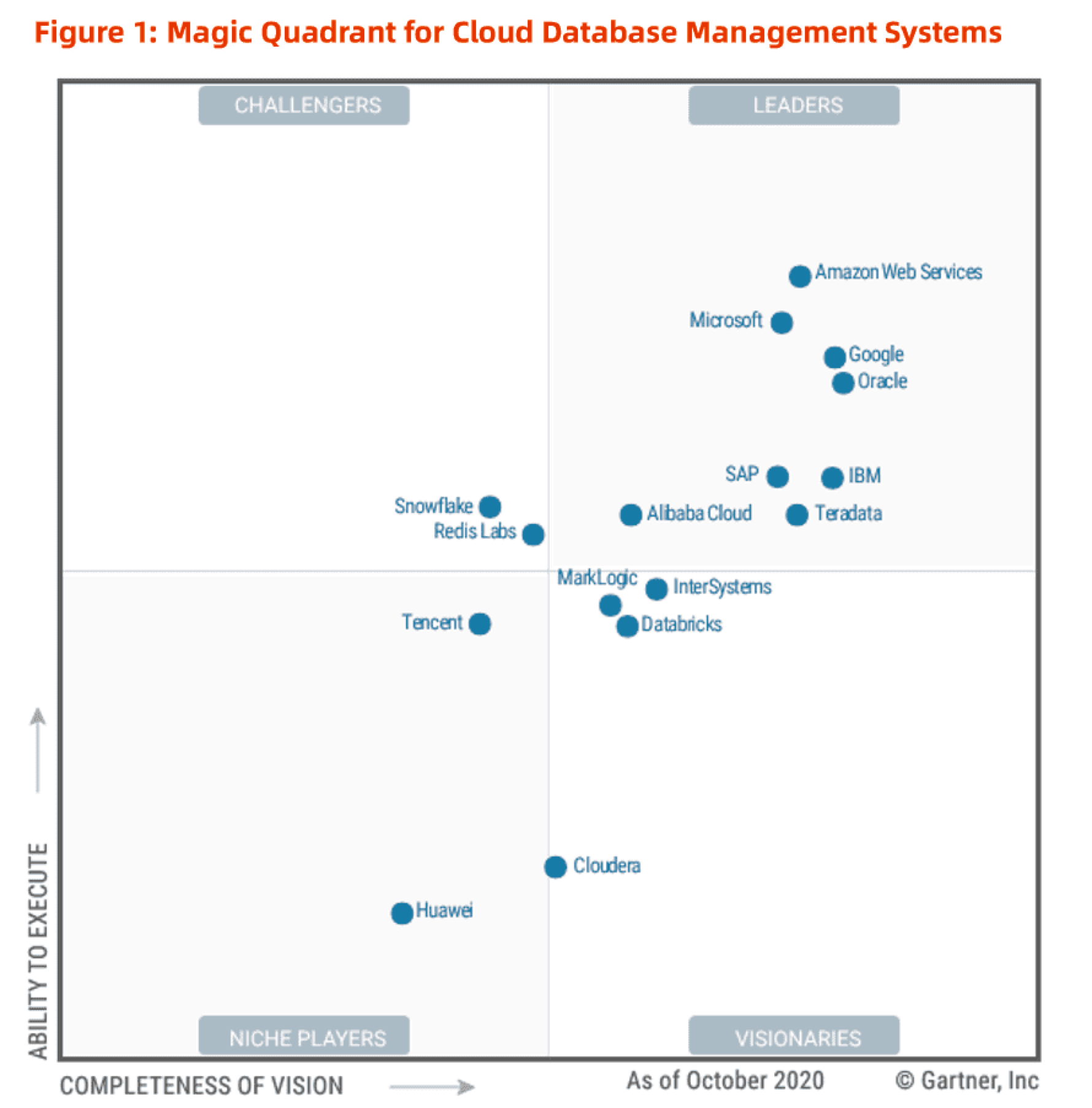

Alibaba Cloud has invested in database and data analysis technologies since its inception, serving businesses inside and outside Alibaba Cloud across industries and scenarios worldwide. As of 2020, Alibaba Cloud has been named a Leader in the Gartner Magic Quadrant for Cloud Database Management Systems for three consecutive years.

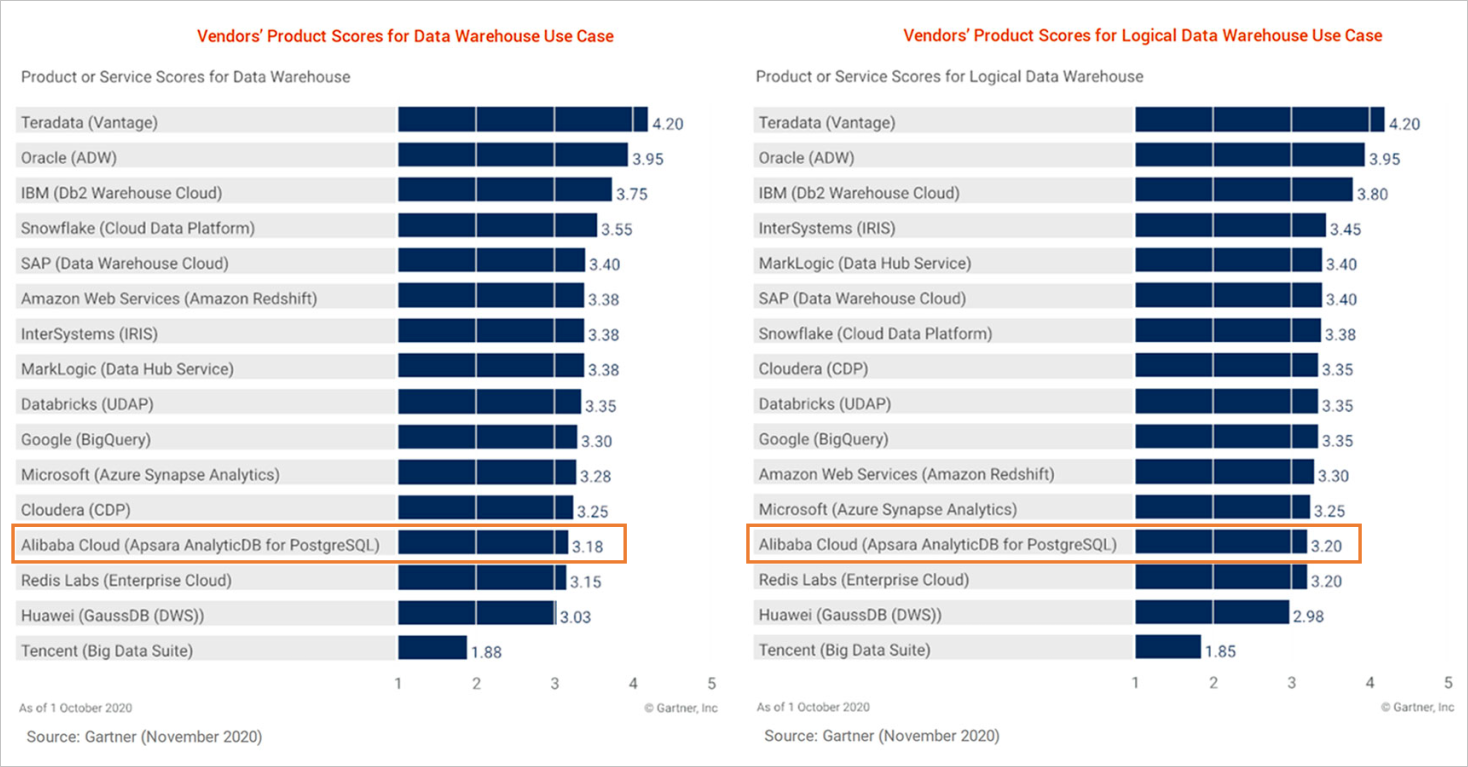

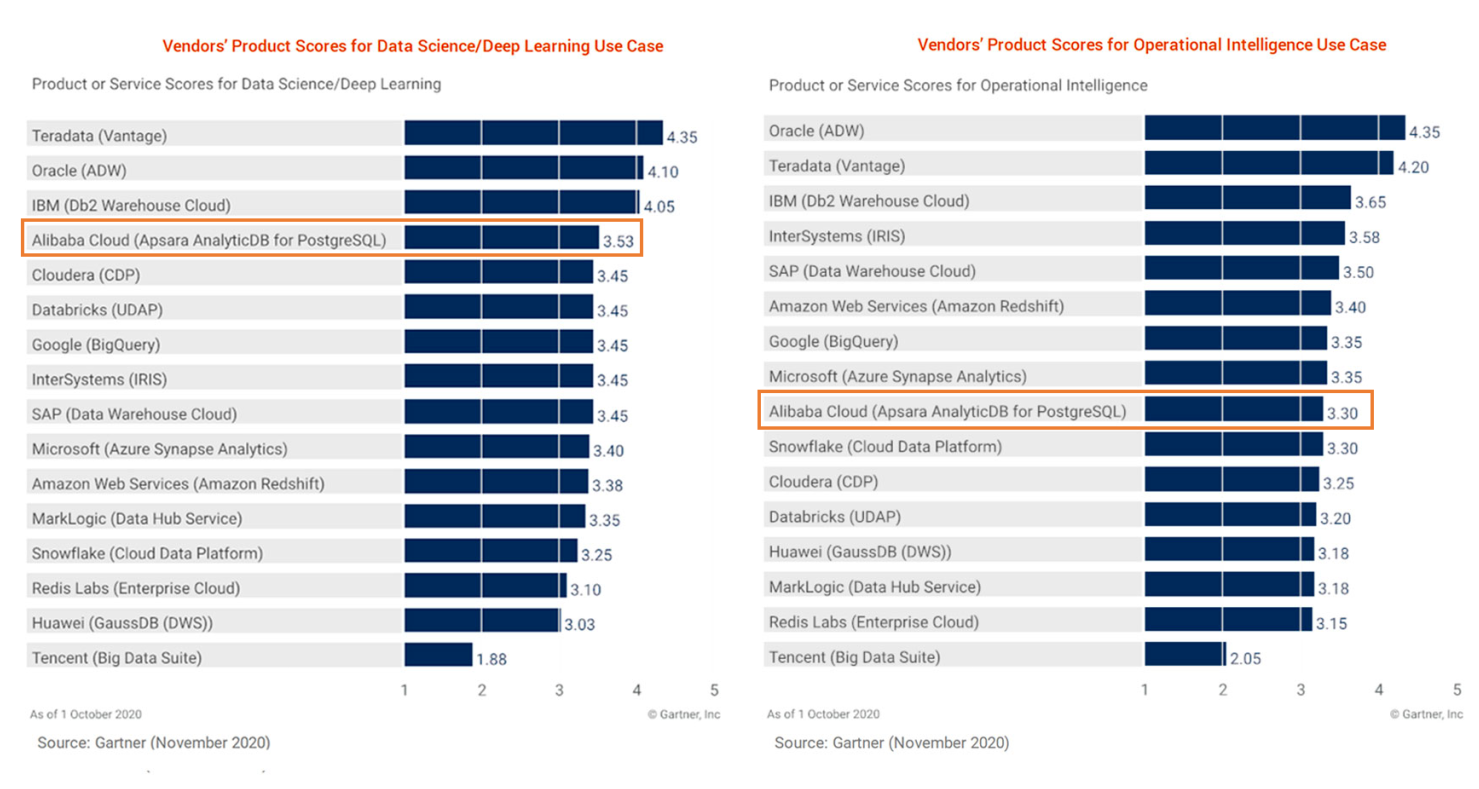

AnalyticDB for PostgreSQL provides core features for data analysis. Its score ranking in the 2020 Gartner Critical Capabilities for Cloud Database Management Systems for Analytical Use Cases report is shown below.