By Jeremy Pedersen

Welcome! In this blog, we'll be setting up a new DataWorks project. We will import some data from a MySQL database, perform some basic processing steps using MaxCompute SQL, then export our final results back out to the MySQL database. Let's get started!

DataWorks is Alibaba Cloud's "Data IDE". It's a friendly web-based console that serves as an interface to Alibaba Cloud's various data processing "engines", including MaxCompute, AnalyticDB for MySQL, and E-MapReduce.

As a data analyst or data developer working on Alibaba Cloud, you will spend the majority of your time in DataWorks. DataWorks is used for:

...and a lot of other things, besides. You can find more details in the DataWorks documentation.

MaxCompute is Alibaba Cloud's large-scale data storage and batch processing engine. MaxCompute uses a distributed storage model similar to Hadoop's HDFS, and a SQL query language similar to Hadoop's Hive.

MaxCompute can store enormous tables (up to Petabytes in size) and can run large-scale data processing tasks in parallel across thousands of compute nodes.

The Hadoop ecosystem of tools is great, but Alibaba Group has a few requirements that were difficult to meet with Hadoop alone, including:

MaxCompute was built to fulfill these needs, and DataWorks was later added to provide a friendlier (non-commandline) interface for users.

Before you go any further, you should create a MySQL database by following along with this blog post. This SQL database will contain the users, products, and orders tables we will be importing into our DataWorks Workspace.

Done?

Great! Let's continue.

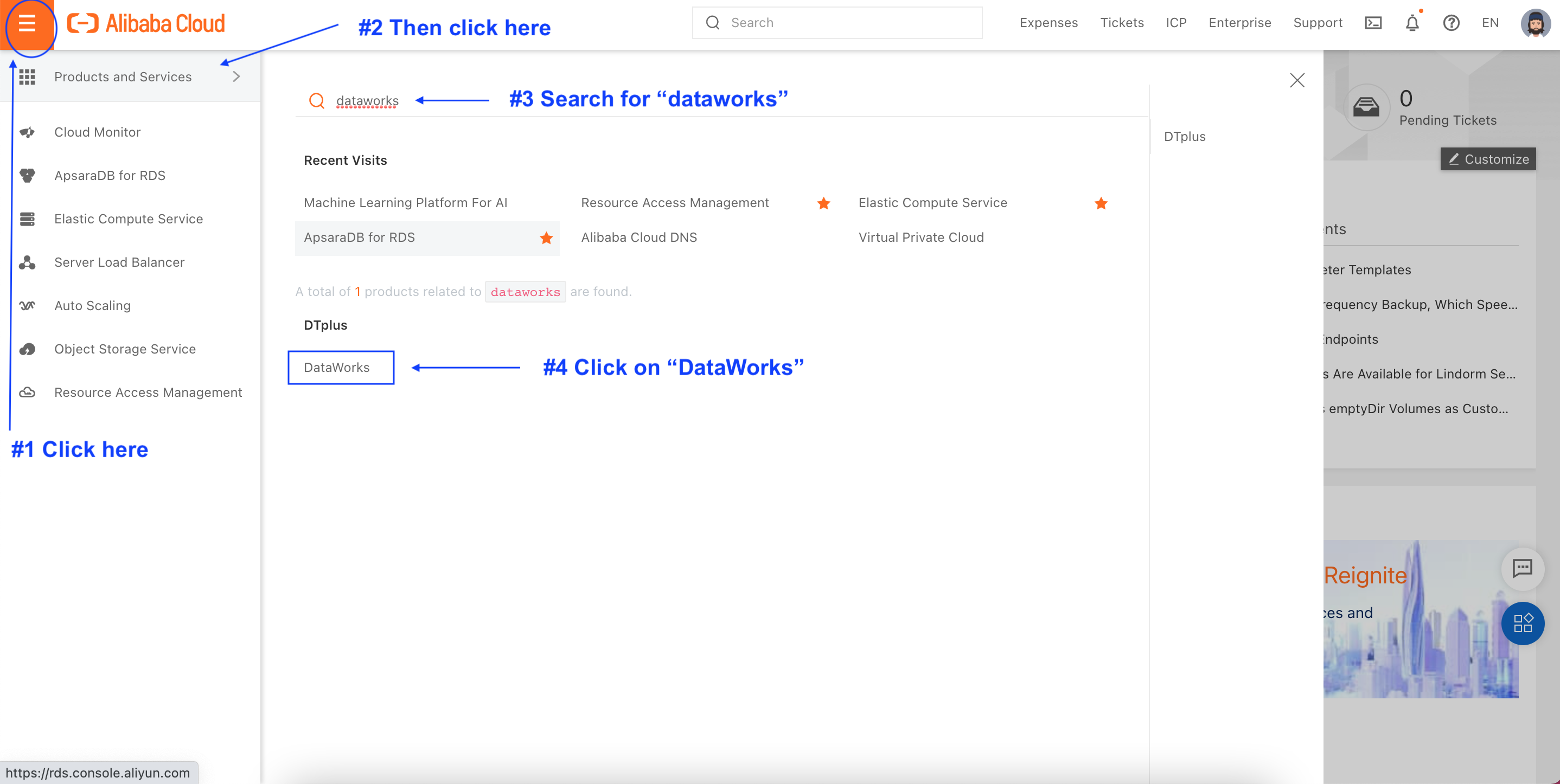

The first thing we need to do is create a DataWorks workspace. First, log in to your Alibaba Cloud account. Then, from the Console homepage, search for "dataworks":

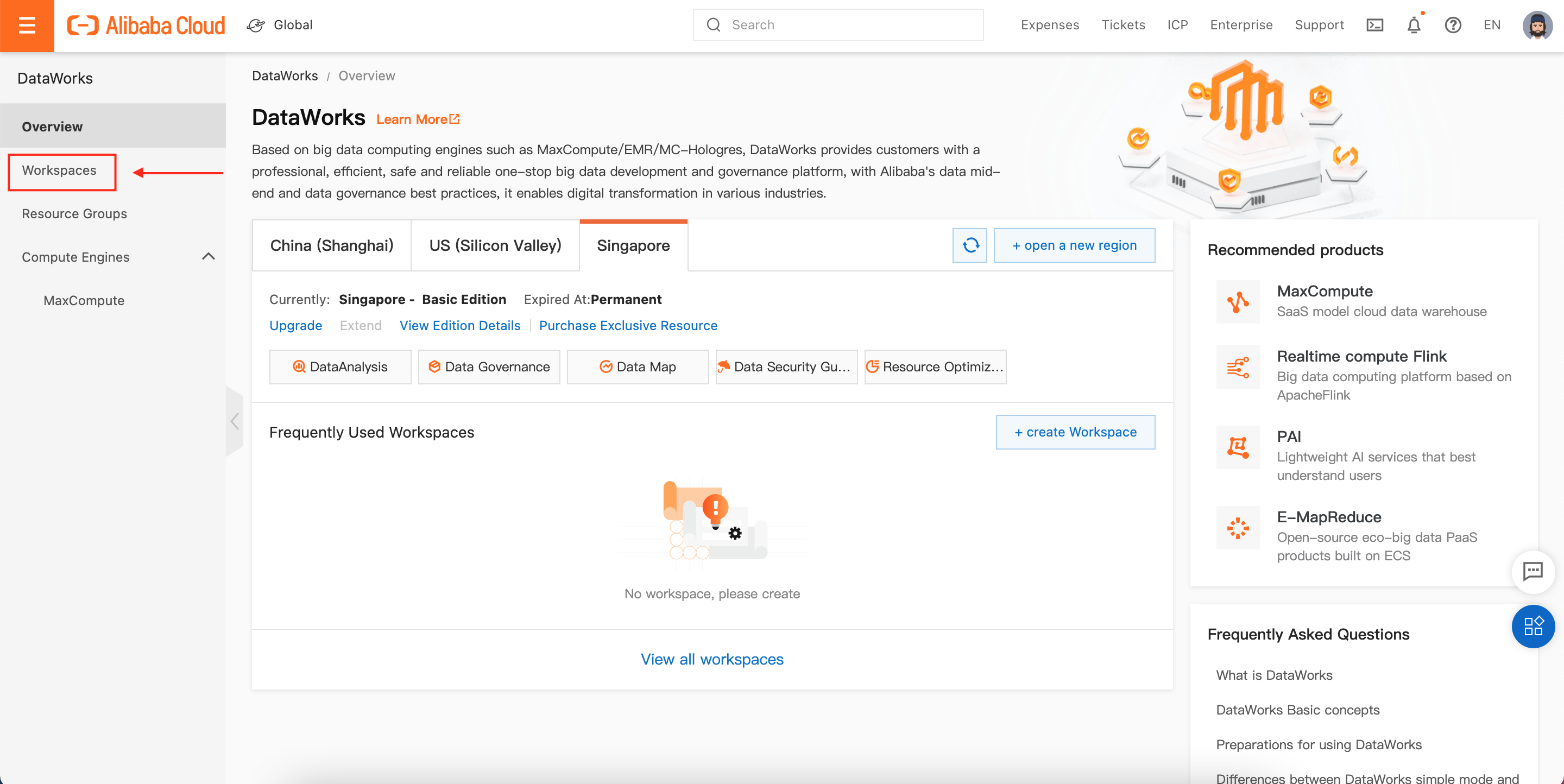

Click on "Workspaces":

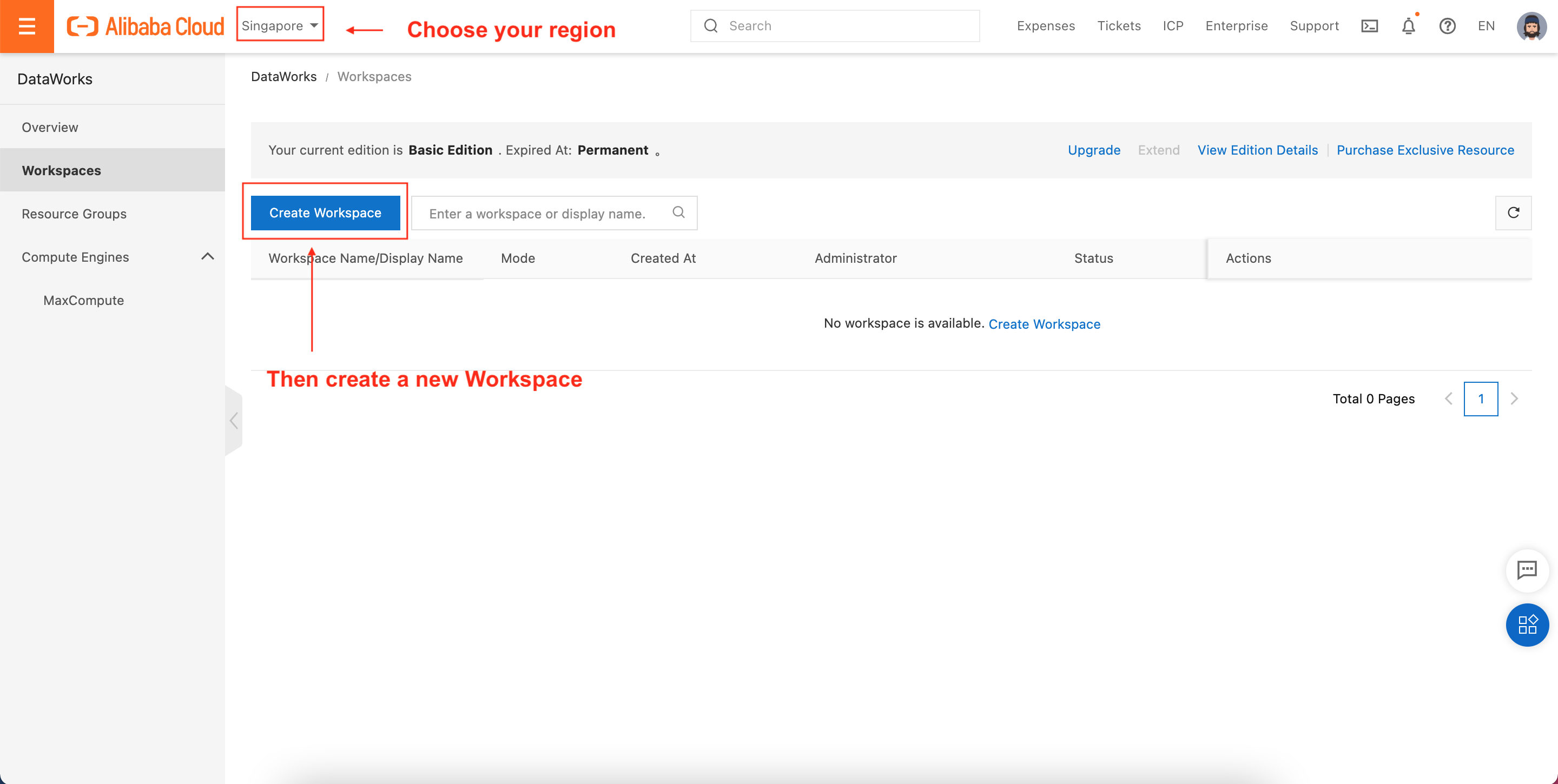

This will take you to the "Workspaces" homepage. Select the Alibaba Cloud Region where you created your MySQL database, then click on "Create Workspace":

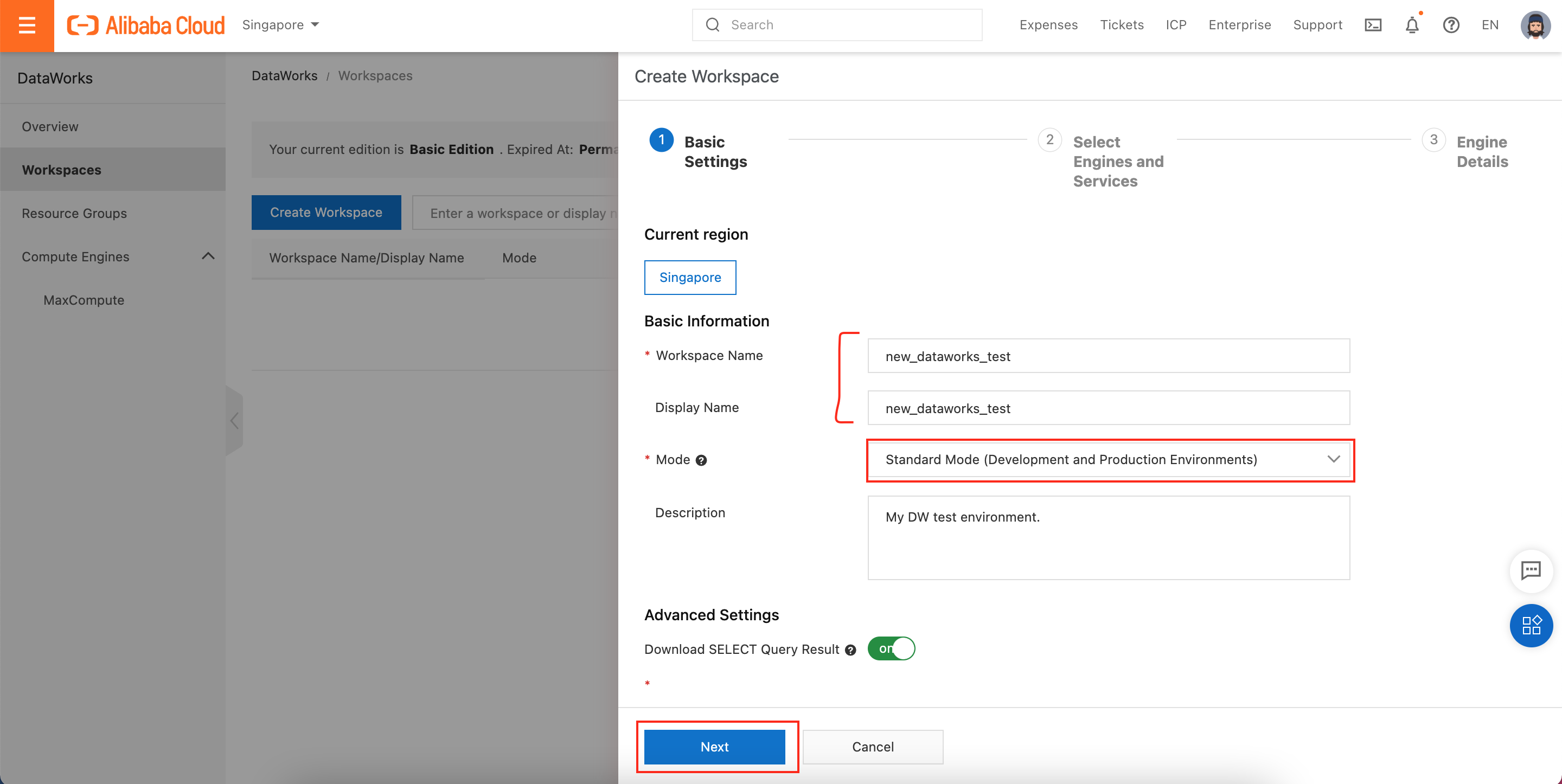

Give the new Workspace a Name and a Display Name. Choose Standard Mode as the Mode for this Workspace, and then click Next:

At this point you probably have a few questions (you can skip this and just click Next if you're in a hurry!):

What's the point of having separate Name and Display Name fields?

A lot of Alibaba Cloud's users are not native speakers of English. Workspace names use a restricted (ASCII) character set, which means the name of your project cannot be written in - say - French or Chinese. That's inconvenient for users that are not native English speakers, so the Display Name allows users to attach a friendly name to the project in their native language. This Display Name is what will be displayed in the Alibaba Cloud console, though on the backend and in configuration files, Name will be used instead.

What is meant by Mode?

I could write a whole separate blog post about this, but I'll try to summarize. In Basic mode, the DataWorks Workspace contains a single MaxCompute project. That means all the tables you create, all the SQL jobs, all the Python code you write, etc... is all part of a single shared "space". This is fine for testing and development, but it isn't great if you want to enforce separation between "Production" and "Development" environments.

In Standard mode, the DataWorks Workspace contains two separate MaxCompute projects, one for development and one for production.

These two environments can contain different users, different tables, different data sources, different SQL code, etc... In this way, you can isolate development and testing from production. When using Standard mode, DataWorks also gives you a formal deployment process to follow.

This means that a developer who has written a workflow in the "Development" MaxCompute workspace must submit it for review by someone else before it can be pushed (deployed) into the "Production" MaxCompute workspace. This helps ensure both security and stability. As a result, we always recommend using Standard mode for any real, production work you do in DataWorks.

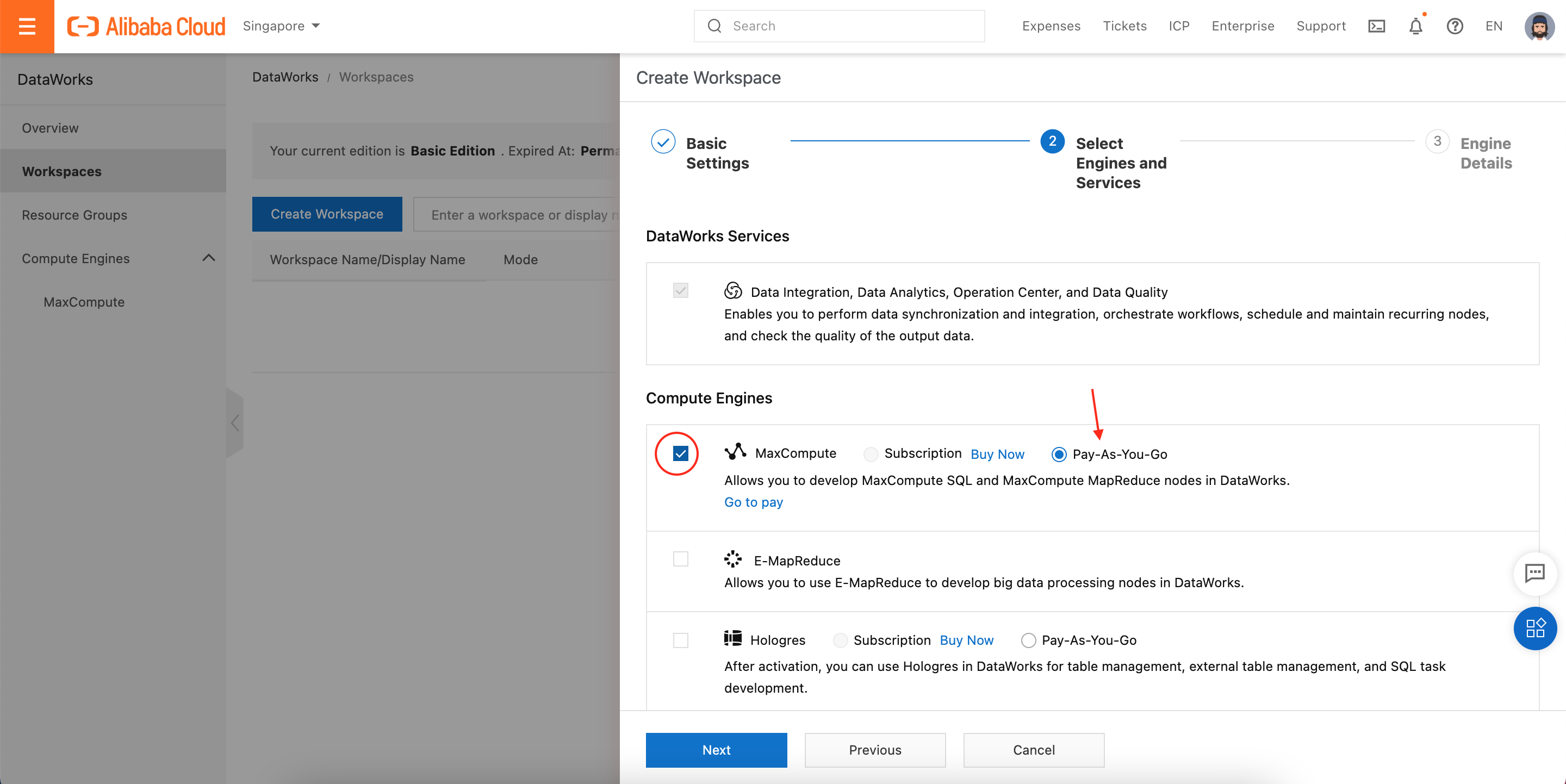

Ok, moving on! Next we need to choose the "engine" we will use with DataWorks:

Although this blog focuses exlusively on DataWorks + MaxCompute, DataWorks can work with other data processing "engines" including E-MapReduce (Hadoop) and Hologres. We will choose MaxCompute.

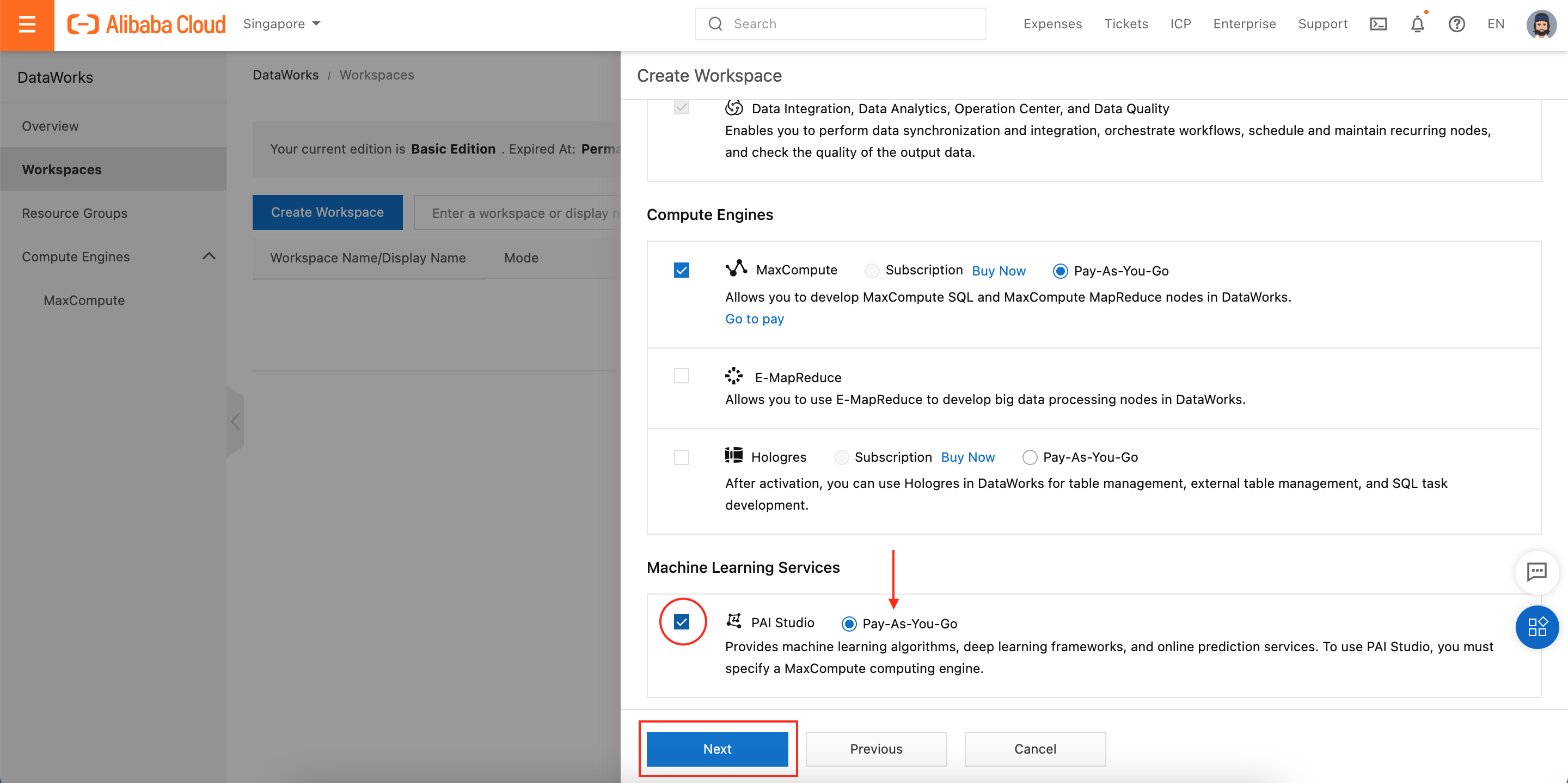

Scrolling down, you'll see we can also attach our DataWorks workspace to PAI, the Machine Learning Platform for AI. This won't cost us anything and may come in handy later if we want to run Machine Learning tasks on our processed data, so let's tick the box to enable PAI, then click Next:

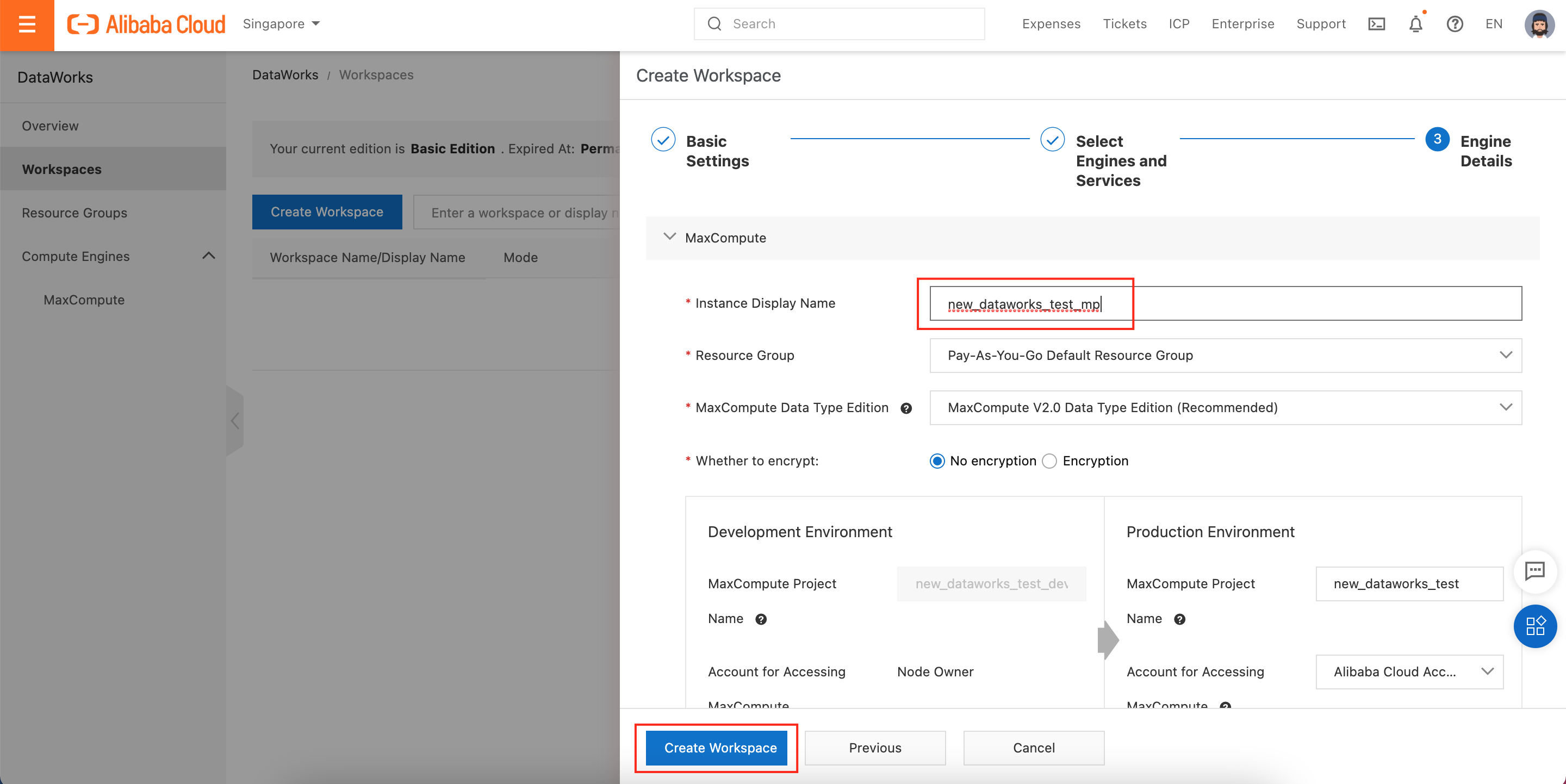

We next need to give our MaxCompute project its own name. Although we're using MaxCompute exclusively via DataWorks at this point, MaxCompute is technically a separate Alibaba Cloud product, so we need to give our MaxCompute project a name and choose a MaxCompute engine type (we will choose "MaxCompute V2.0" but there are other options such as "Hive-compatible"). Finally, we click Create Workspace:

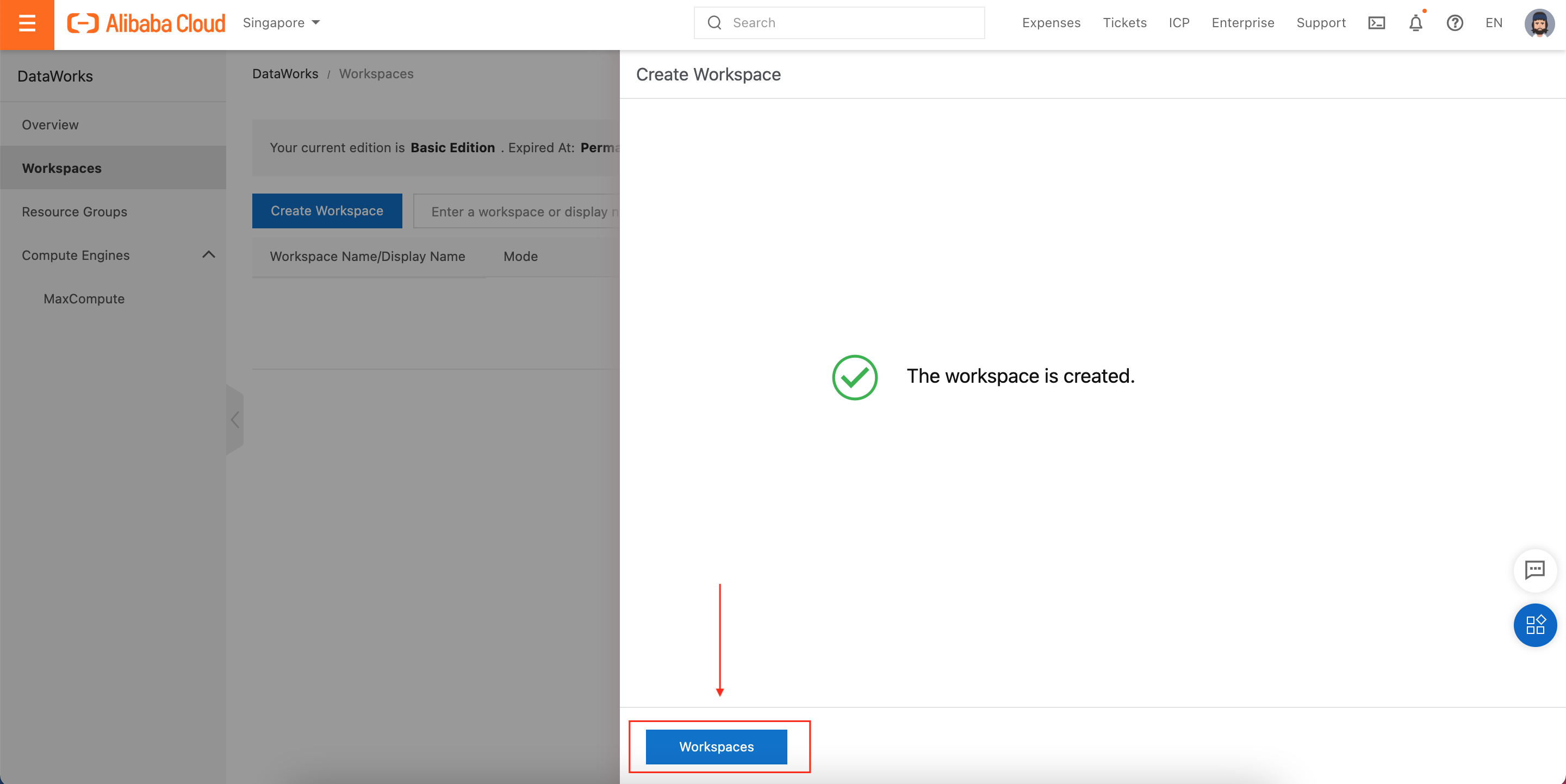

After a few seconds, we should see a page like this one. Click on Workspaces to return to the workspace list:

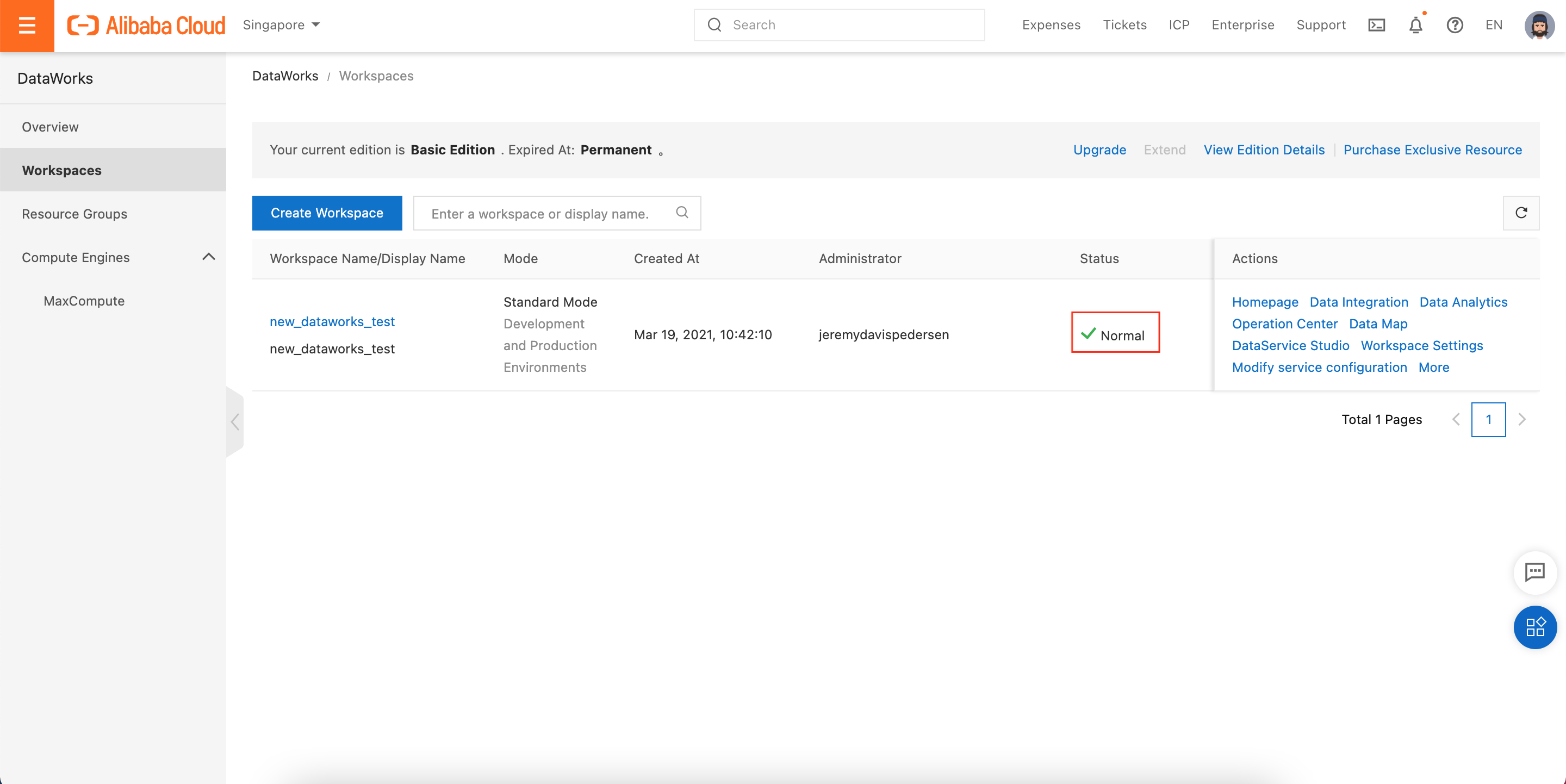

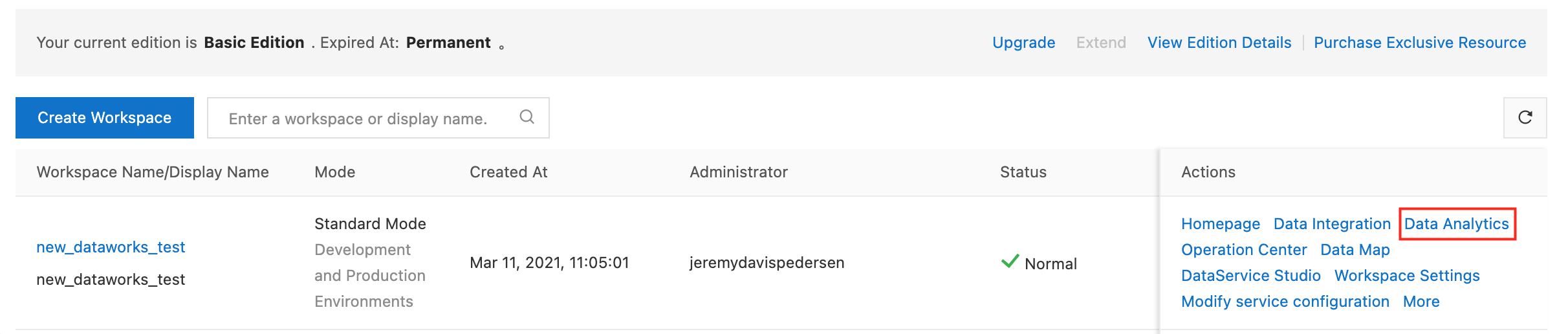

Back on the Workspaces page, we should see our new Workspace. Its Status should be Normal:

Now we can start setting up our data source (MySQL in this case) and import some data!

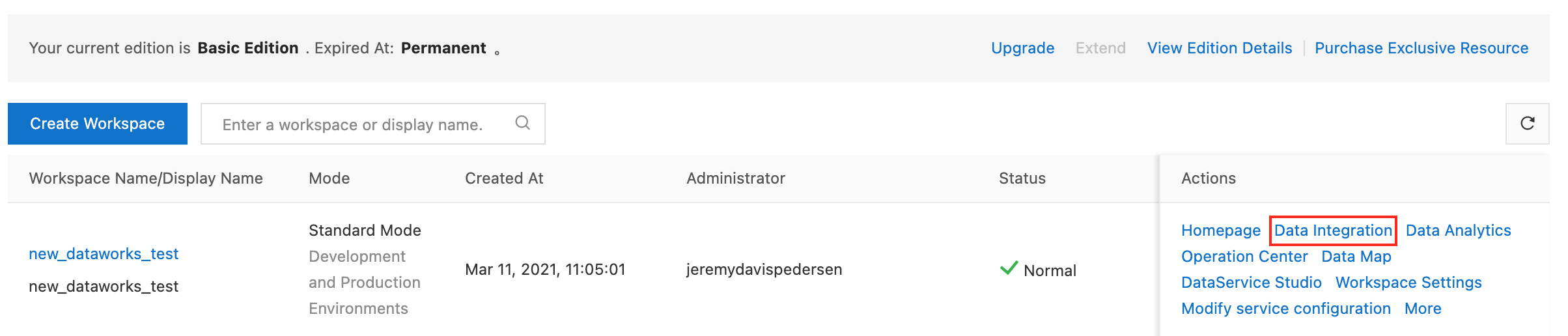

First, we need to set up some data sources. Click on Data Integration to open the Data Integration console:

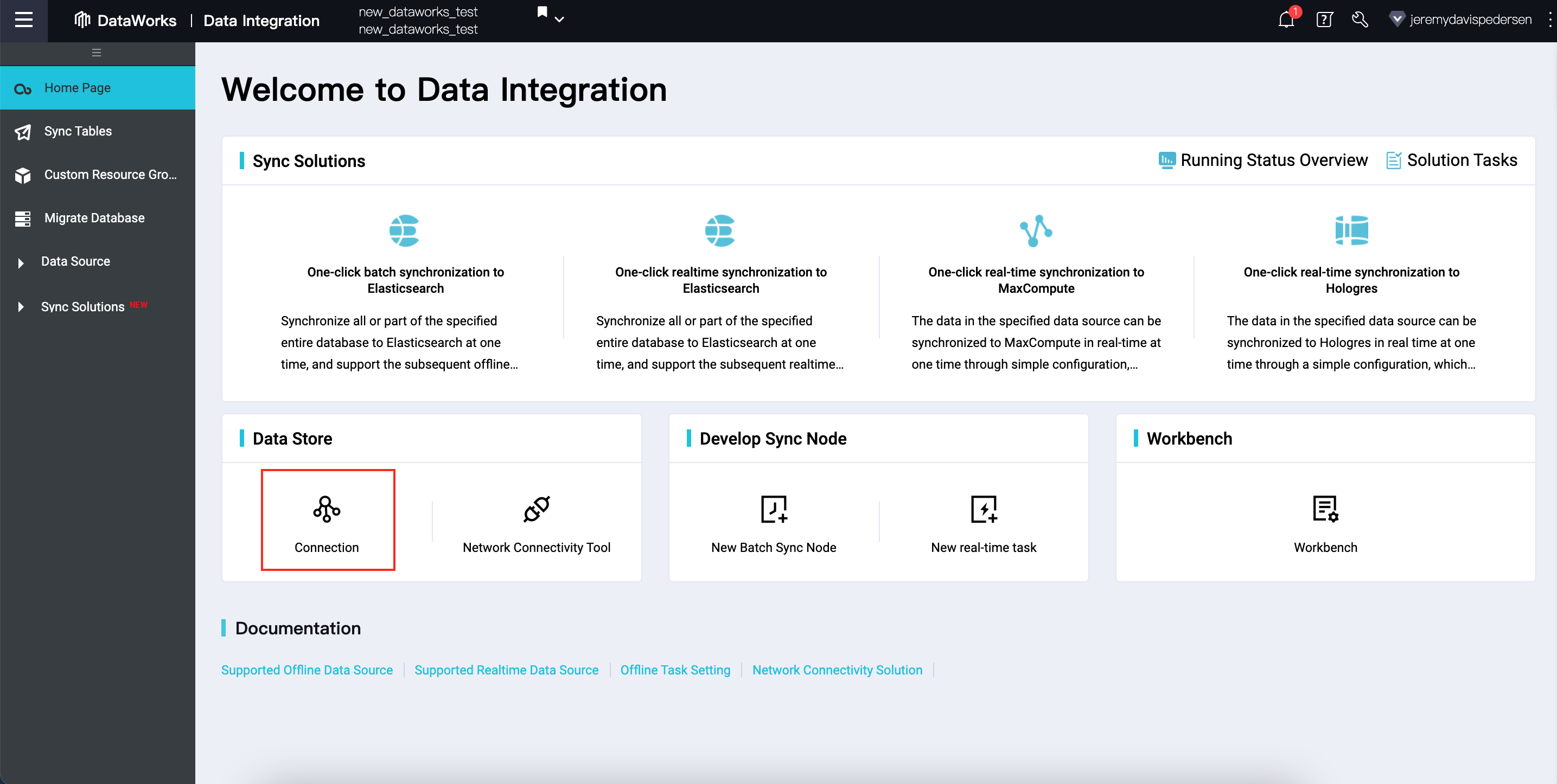

Next, click on Connection to open the data sources page:

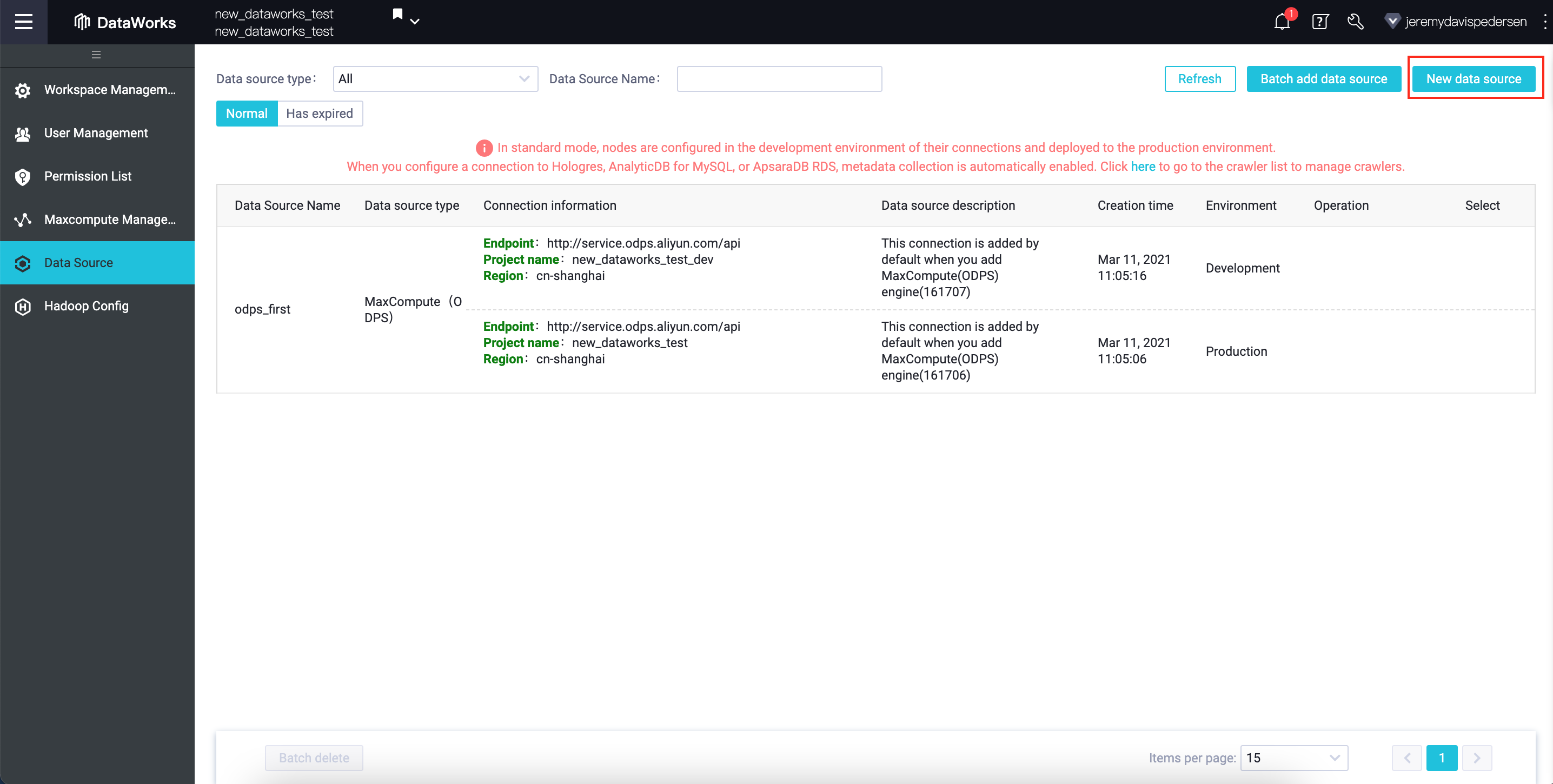

From here, we can add a new data source:

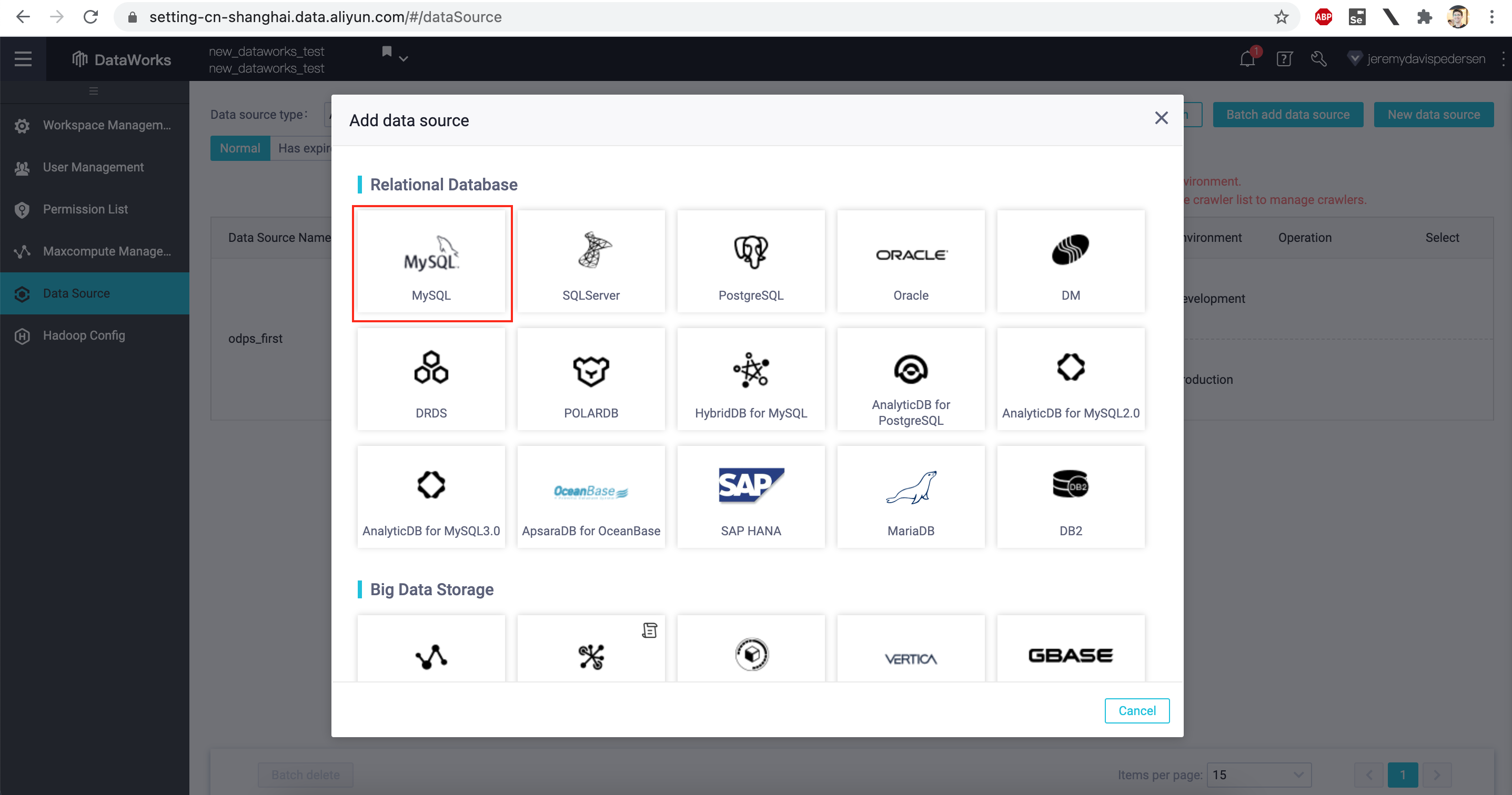

In our case, we'll be adding a MySQL data source, so we click on MySQL at the top of the list:

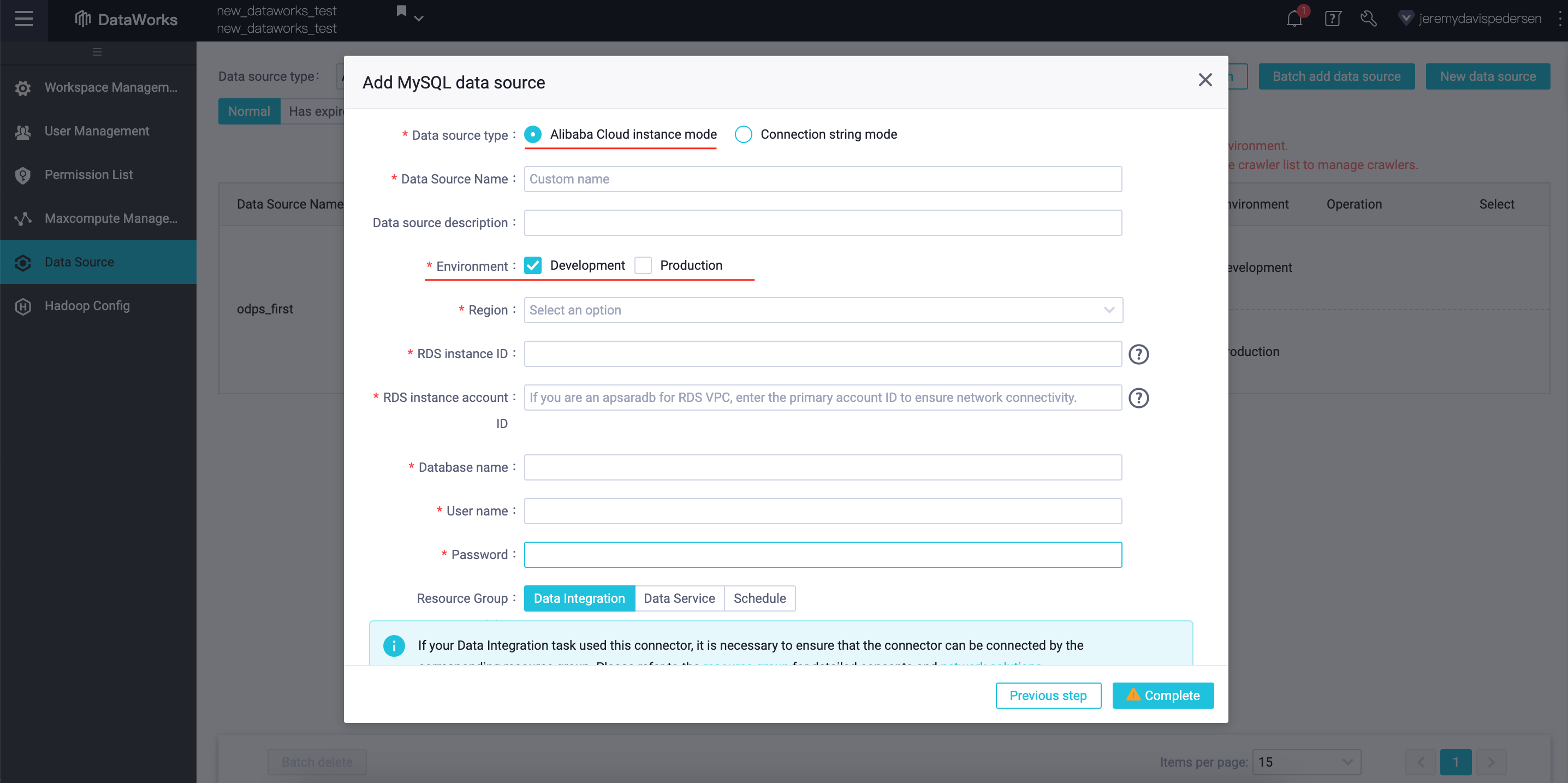

Our data source is an RDS instance in the same region as our DataWorks Workspace, so we choose Alibaba Cloud Instance Mode:

Note that you can publish your new data source into either the Development environment or the Production environment (or both, if you choose). We will publish our RDS data source into both environments.

For some projects, it makes sense to keep development and production entirely separate, down to having different data sources. This protects your production databases from errors and mistakes made in Development, and also limits the access your developers have to potentially sensitive Production data.

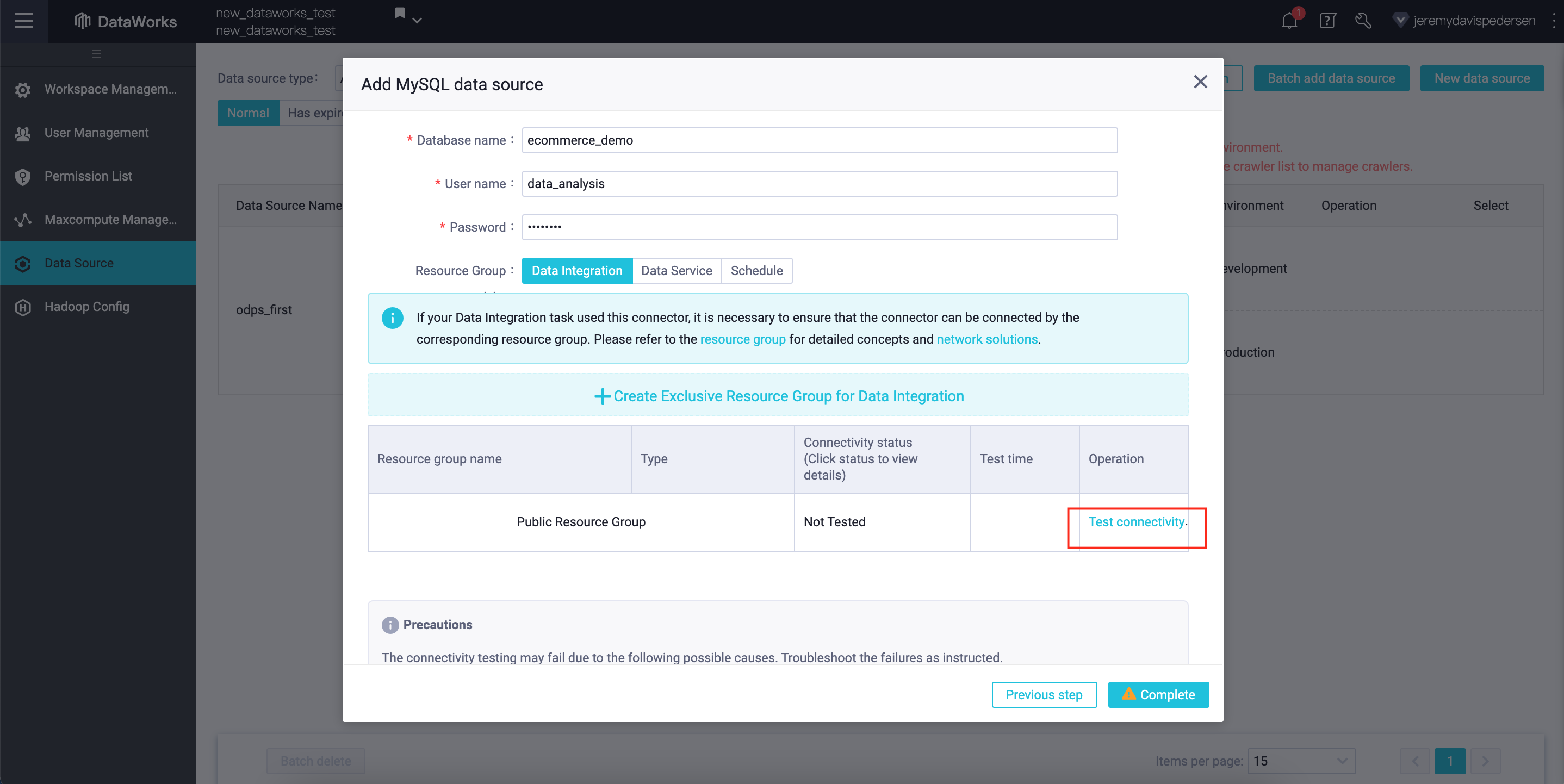

After filling in the required information (RDS instance ID, Alibaba Cloud Account ID, connection string, etc...), you can test the connection between DataWorks and the database, by clicking on Test Connectivity:

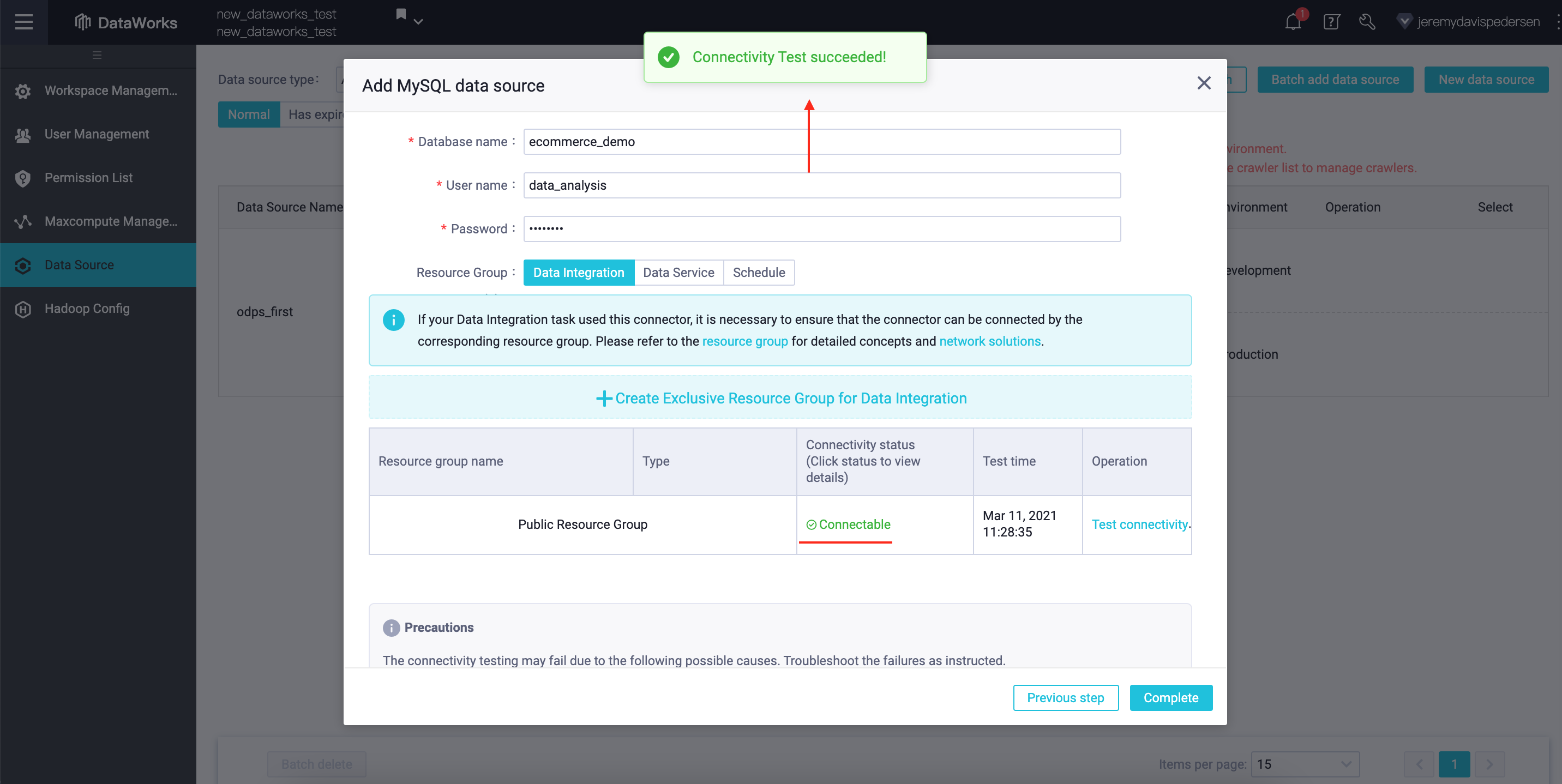

If everything works, you'll see a message like this one:

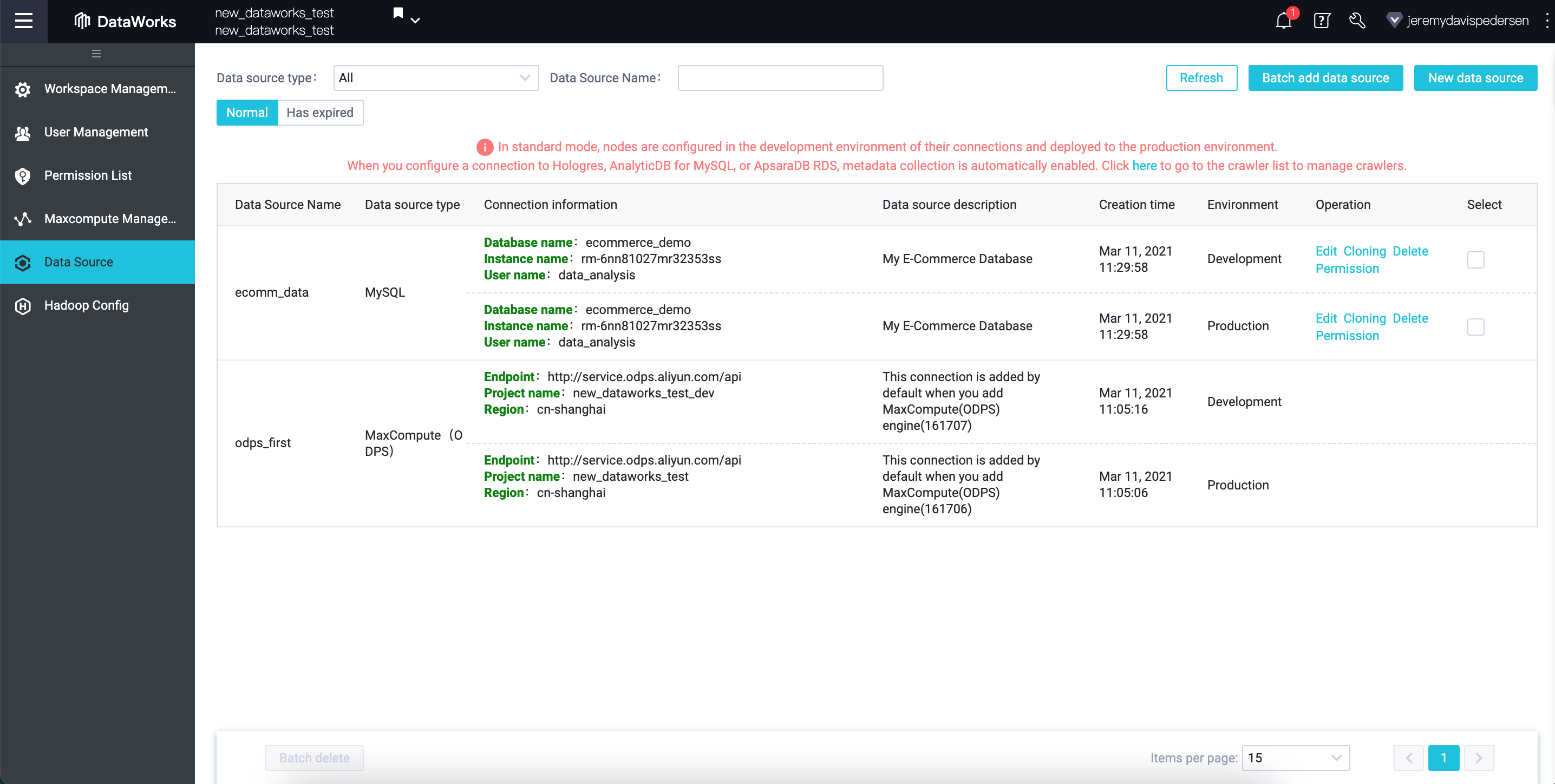

After hitting Complete, you should see your new connection appear at the top of the list, as shown here:

Once the data source has been added, we can switch back to the DataWorks Workspace view, and click on Data Analytics:

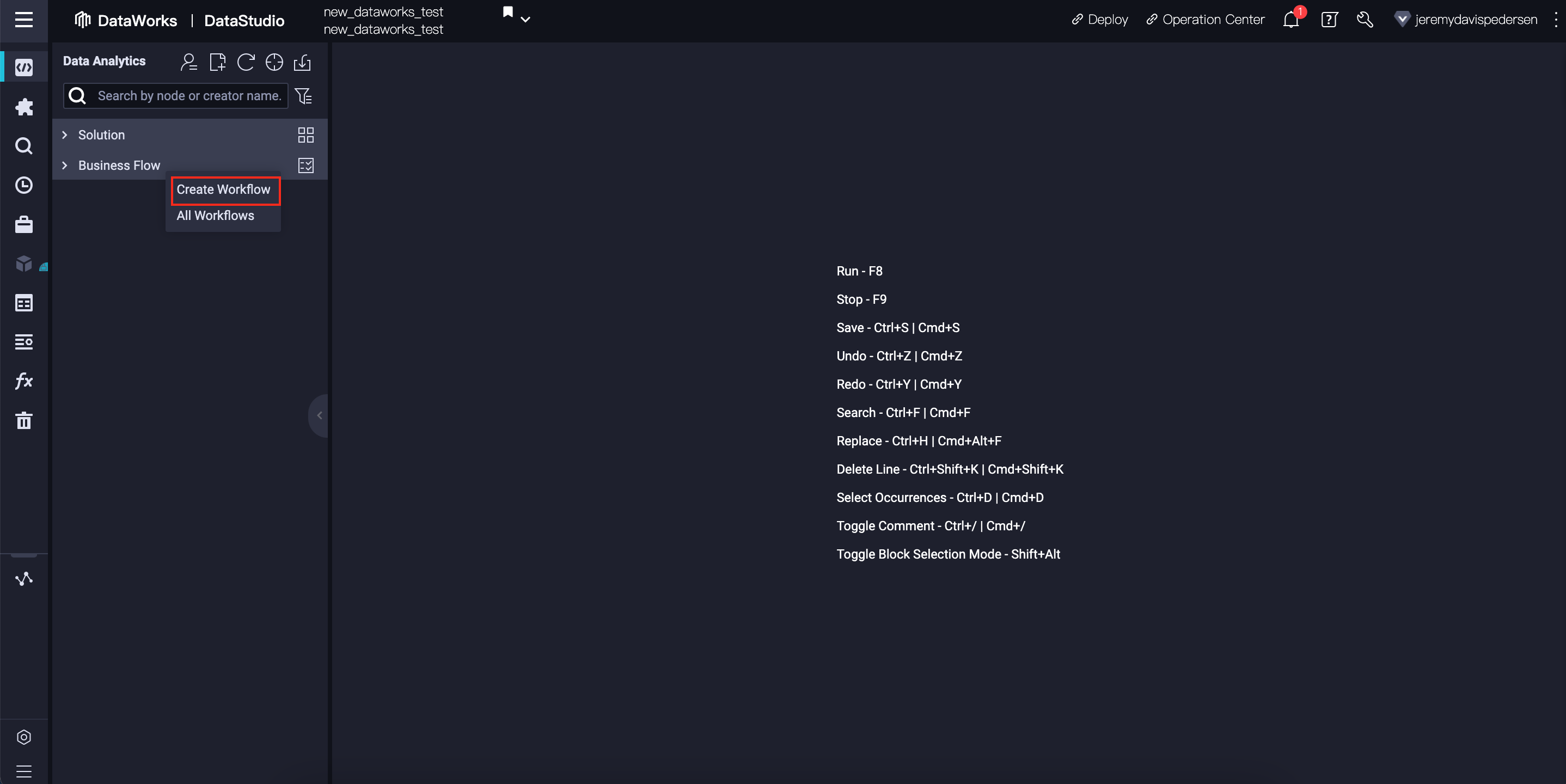

From here, we can right click on Business Flow and choose Create Workflow:

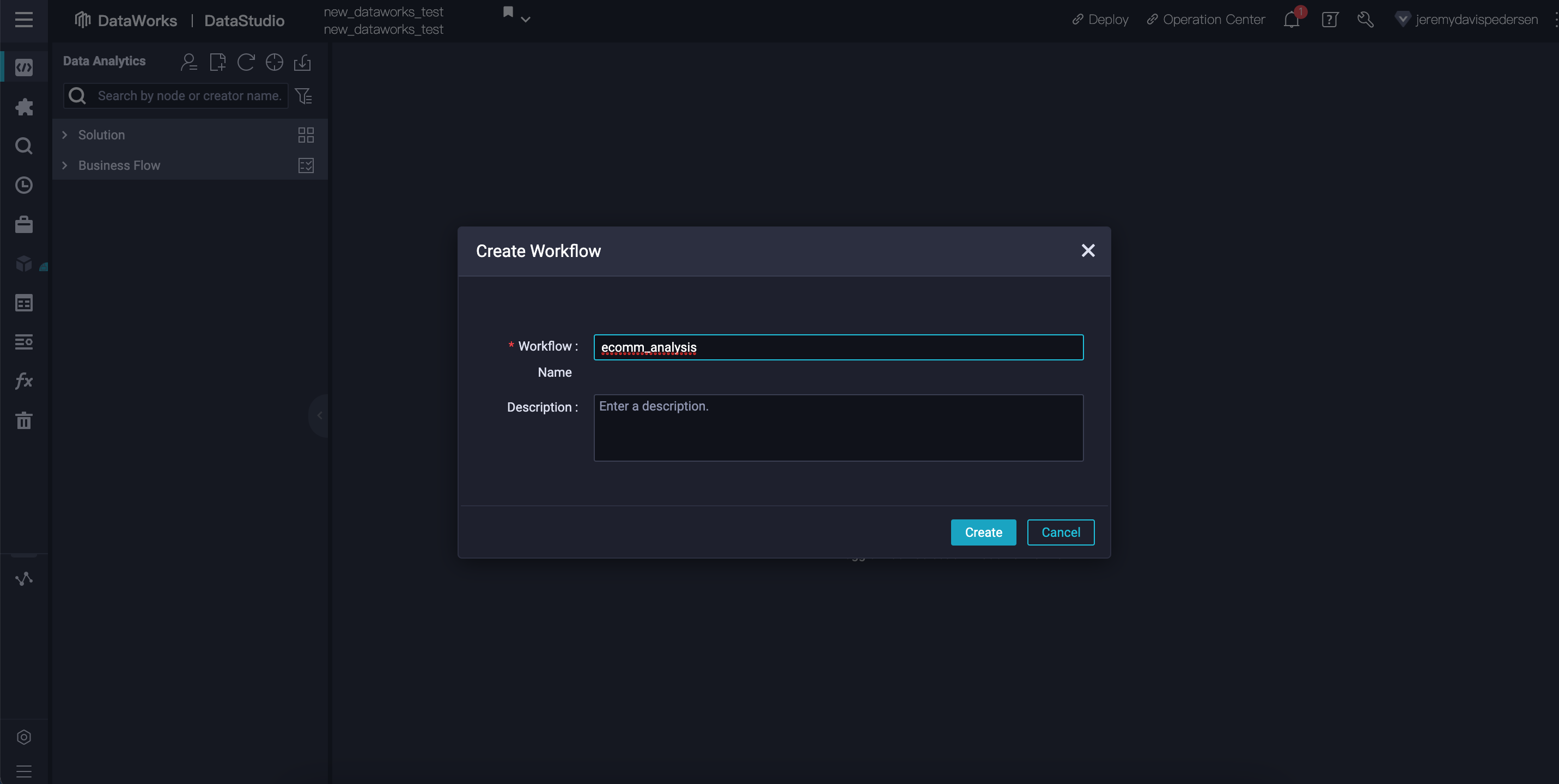

We can call the workflow anything we like. Here I choose ecomm_analysis:

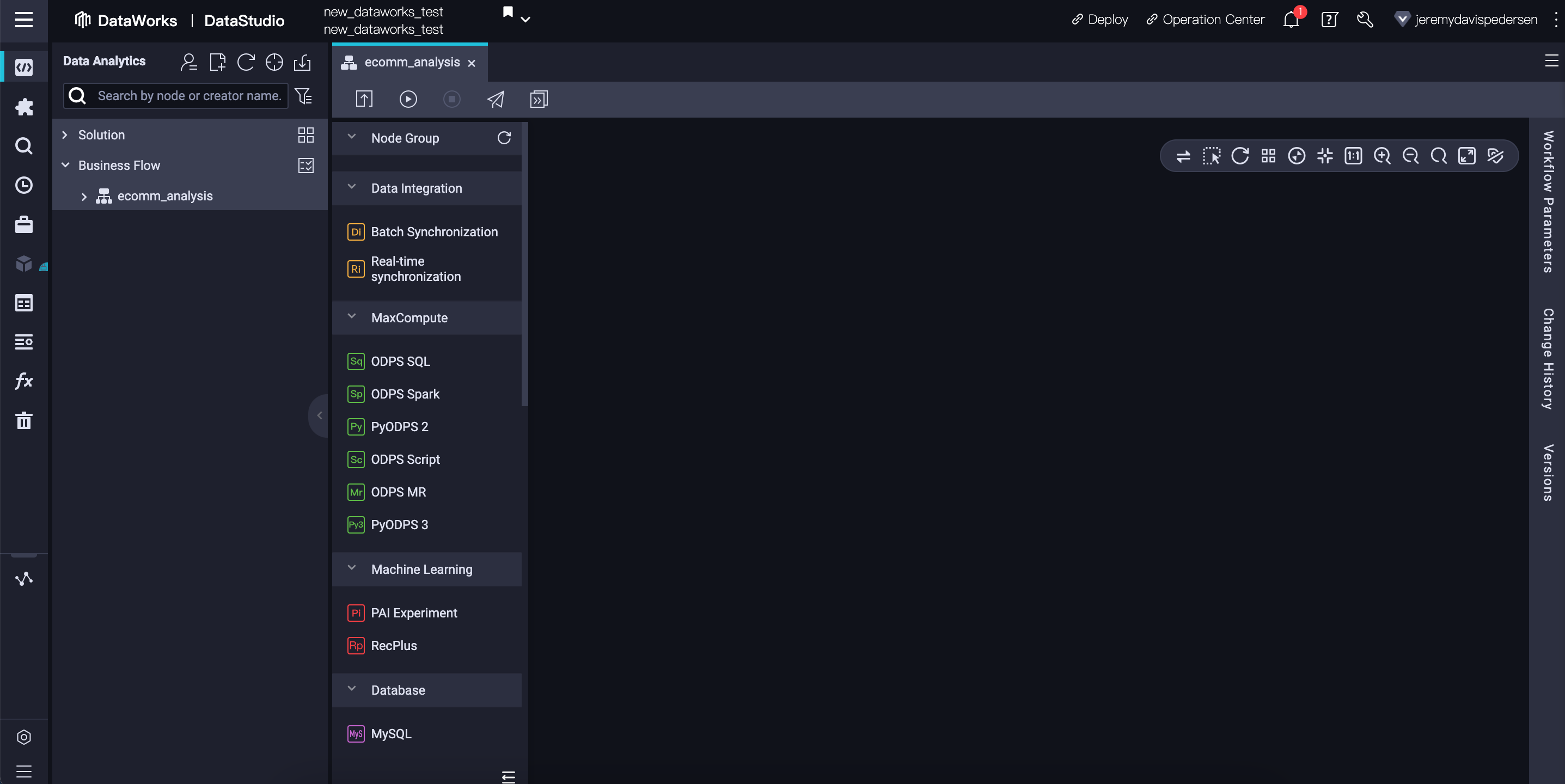

This will create a new, empty workflow, like this one:

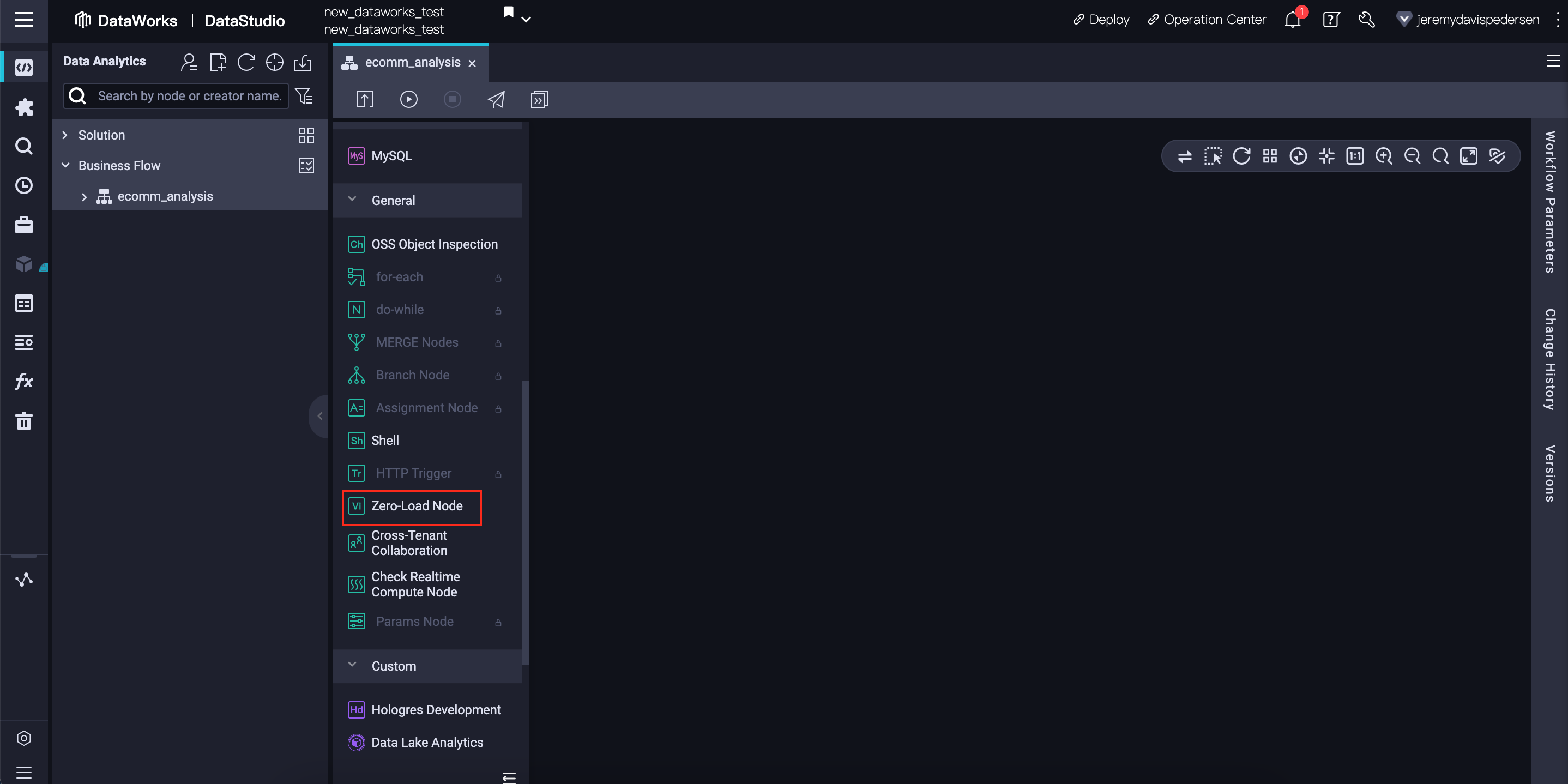

Workflows in DataWorks are DAGs (Directed Acyclic Graphs), and they must have a starting point, or starting node. For this purpose, we can use a dummy task that does no work, called a Zero-Load Node:

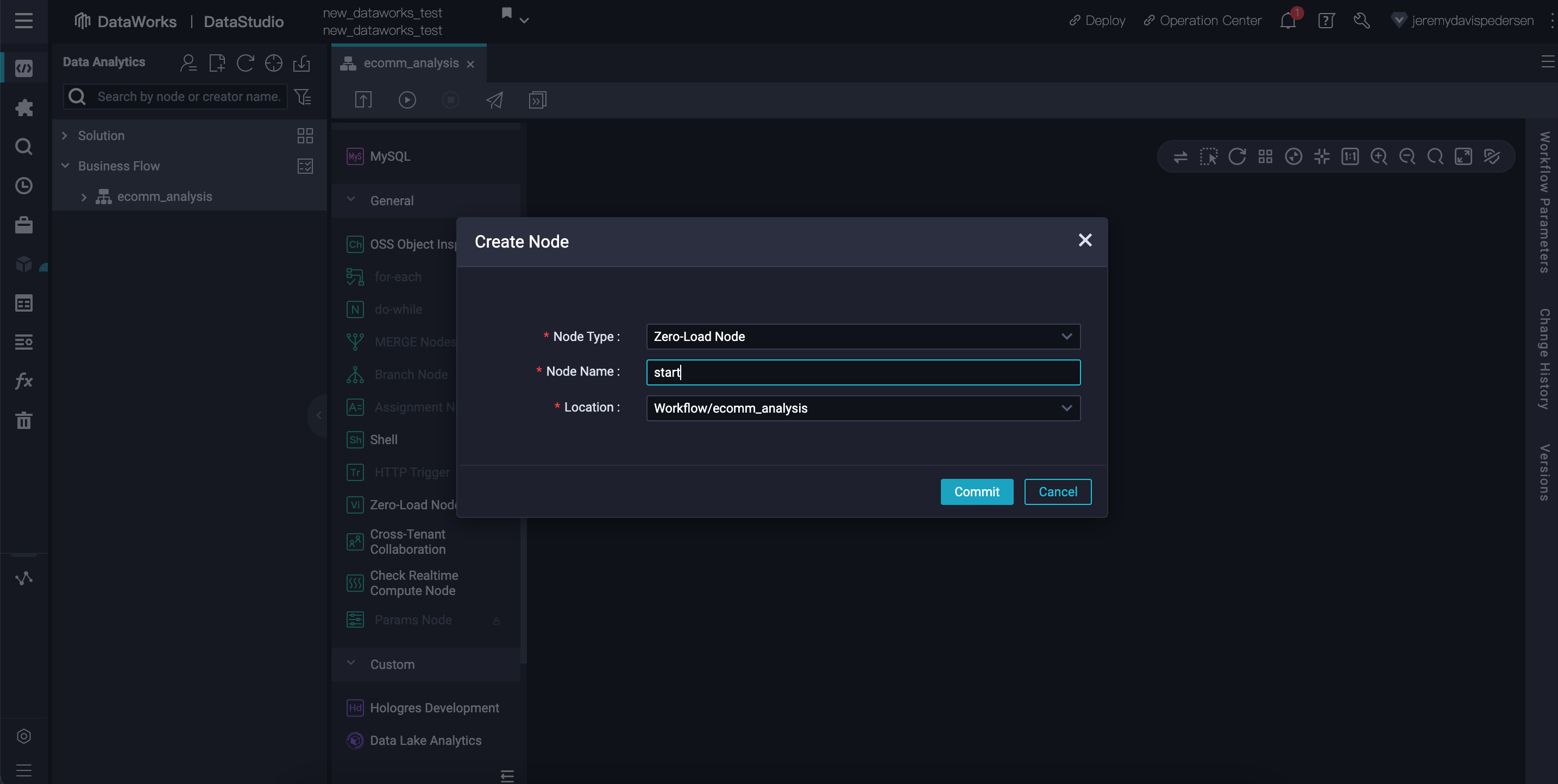

We drag and drop this node from the sidebar onto the canvas, then give it a meaningful name, like start:

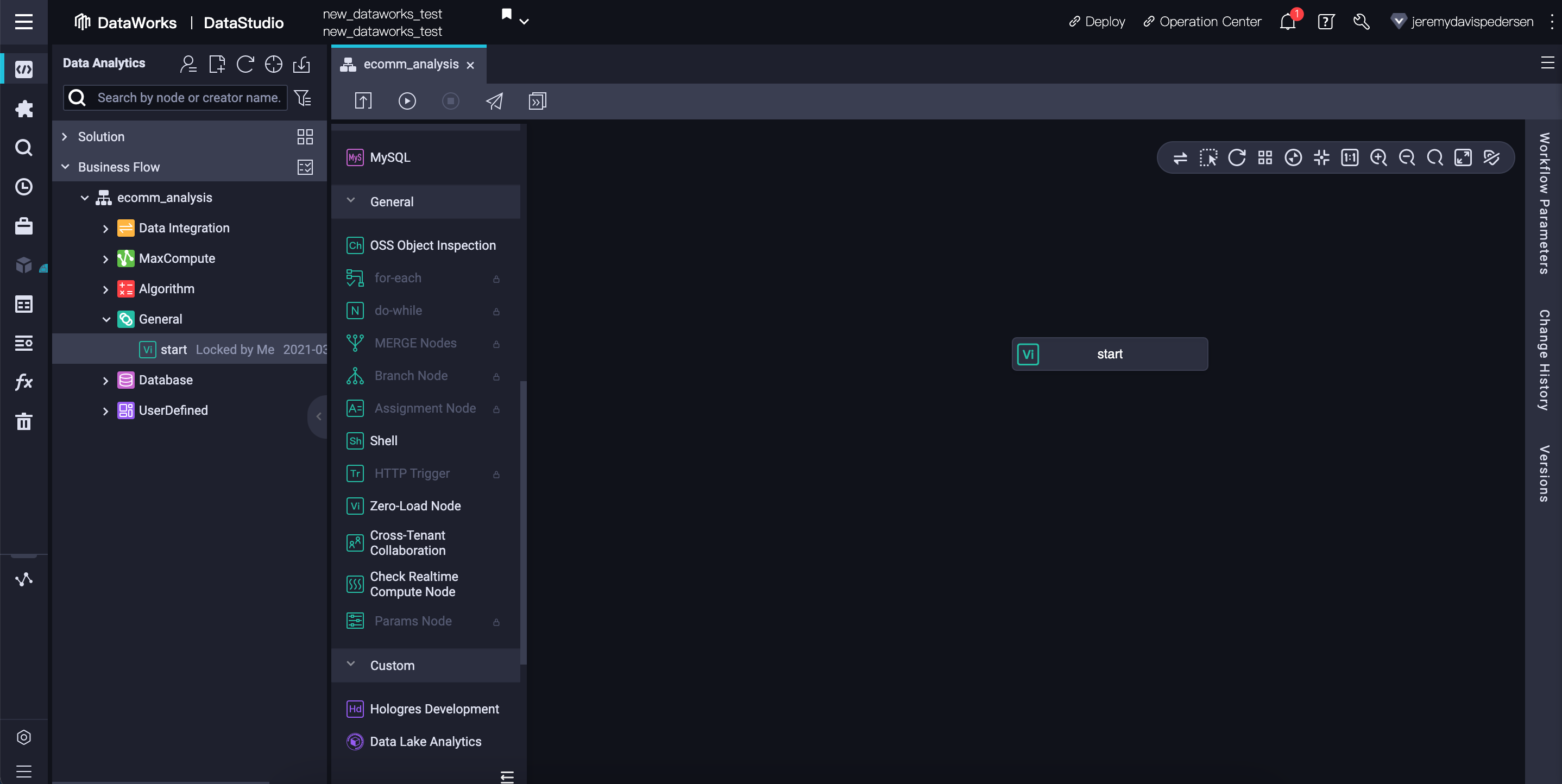

Our workflow now looks like this: our graph has a single node, with no children or parents:

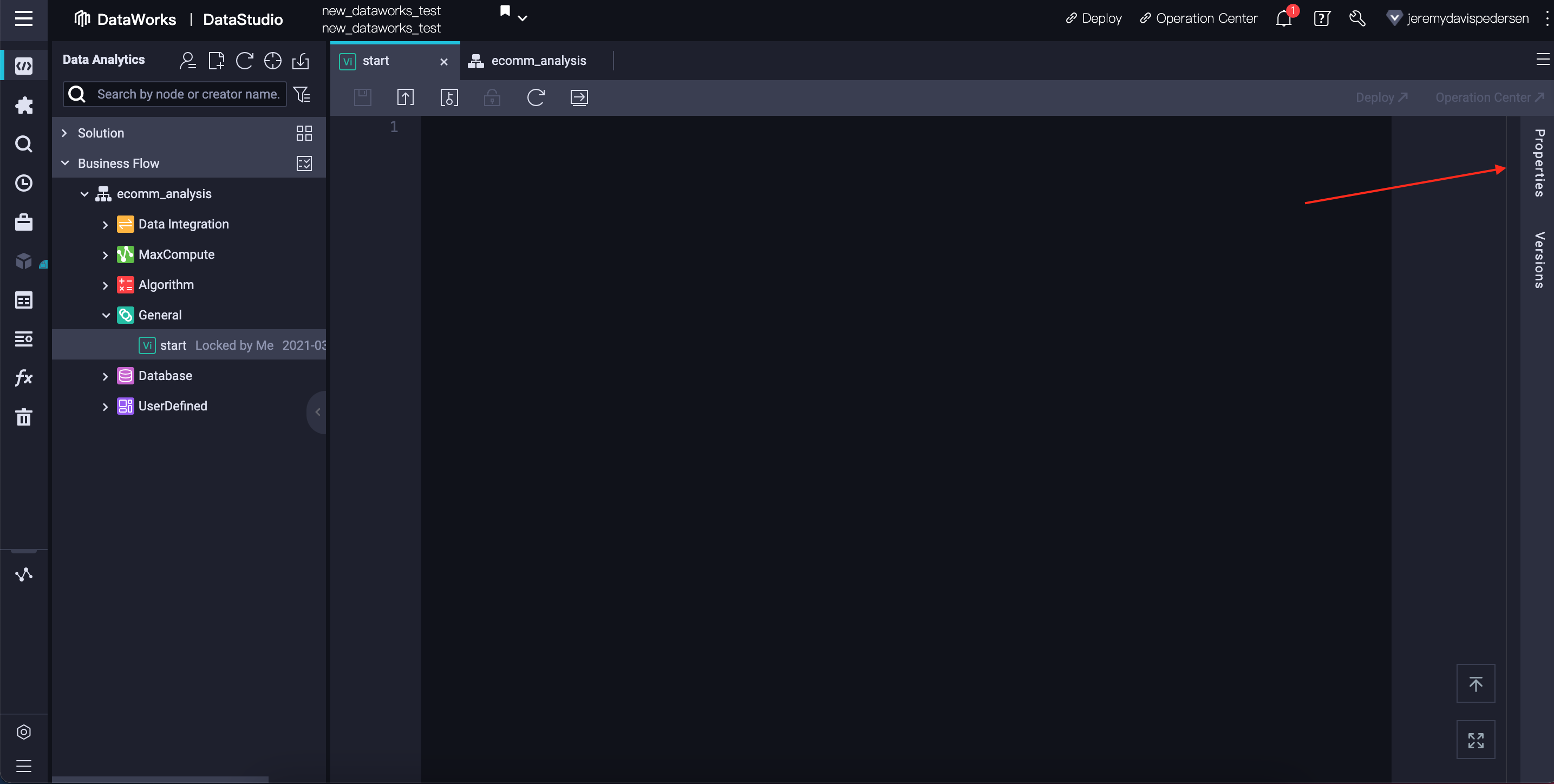

DataWorks actually requires all nodes - even the first node in a workflow - to have a parent node. We can set the start node's parent node to be the root node in our DataWorks Workspace. Every Workspace has a root node which can serve as the parent for the nodes in any workflows we create. First, we need to double click on the start node, then open its properties:

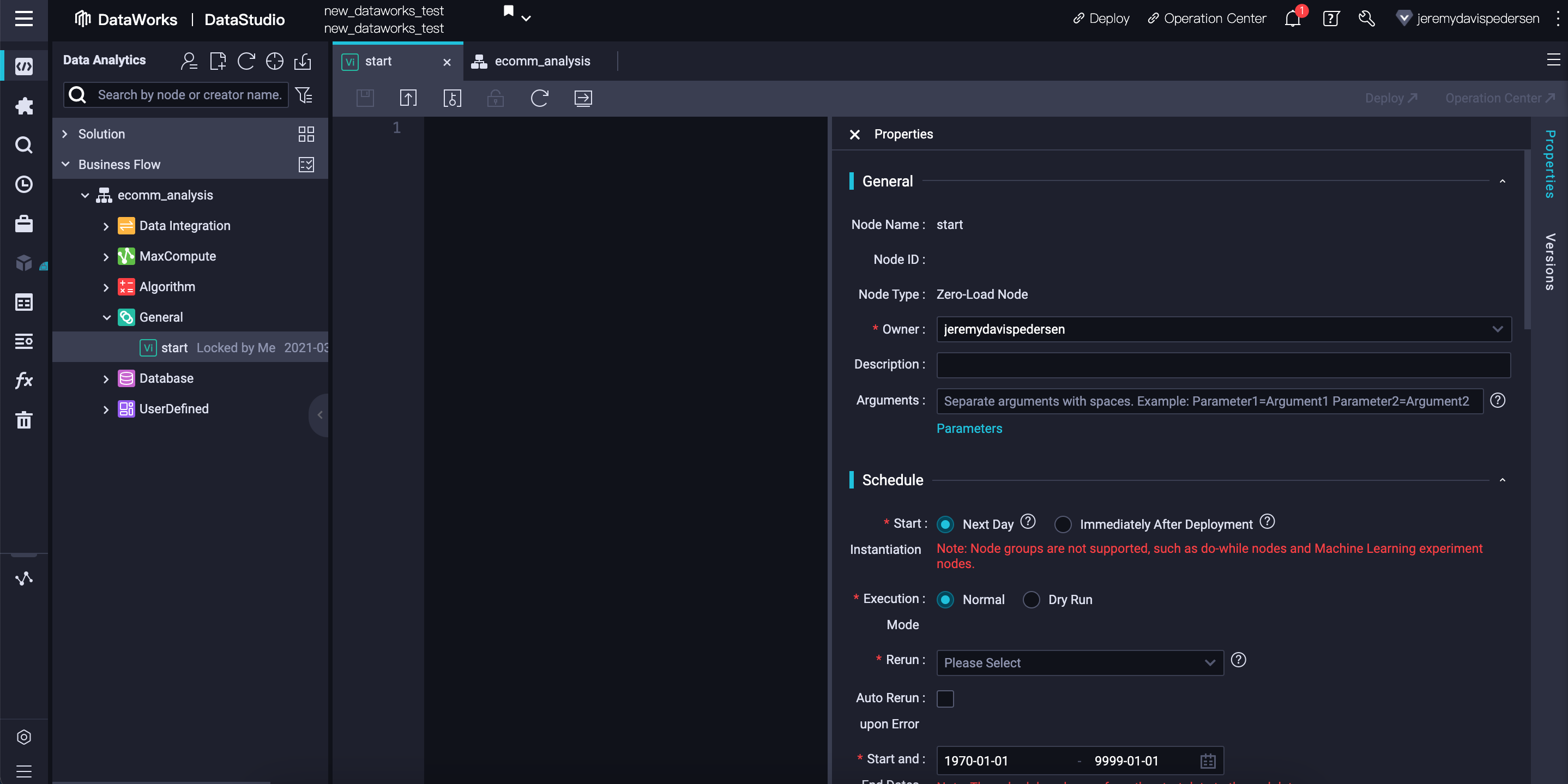

Clicking on Properties should open a side-pane like this one:

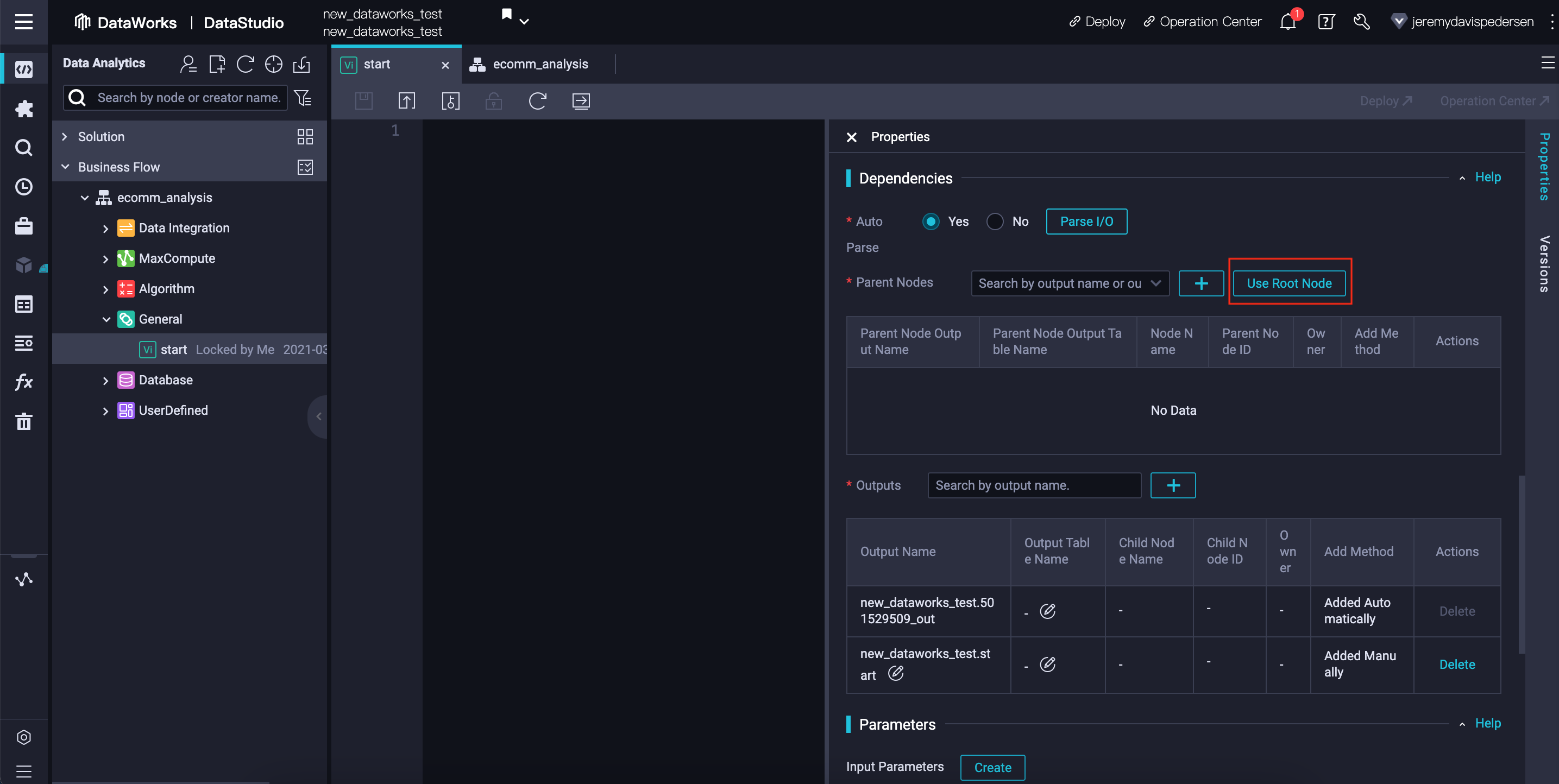

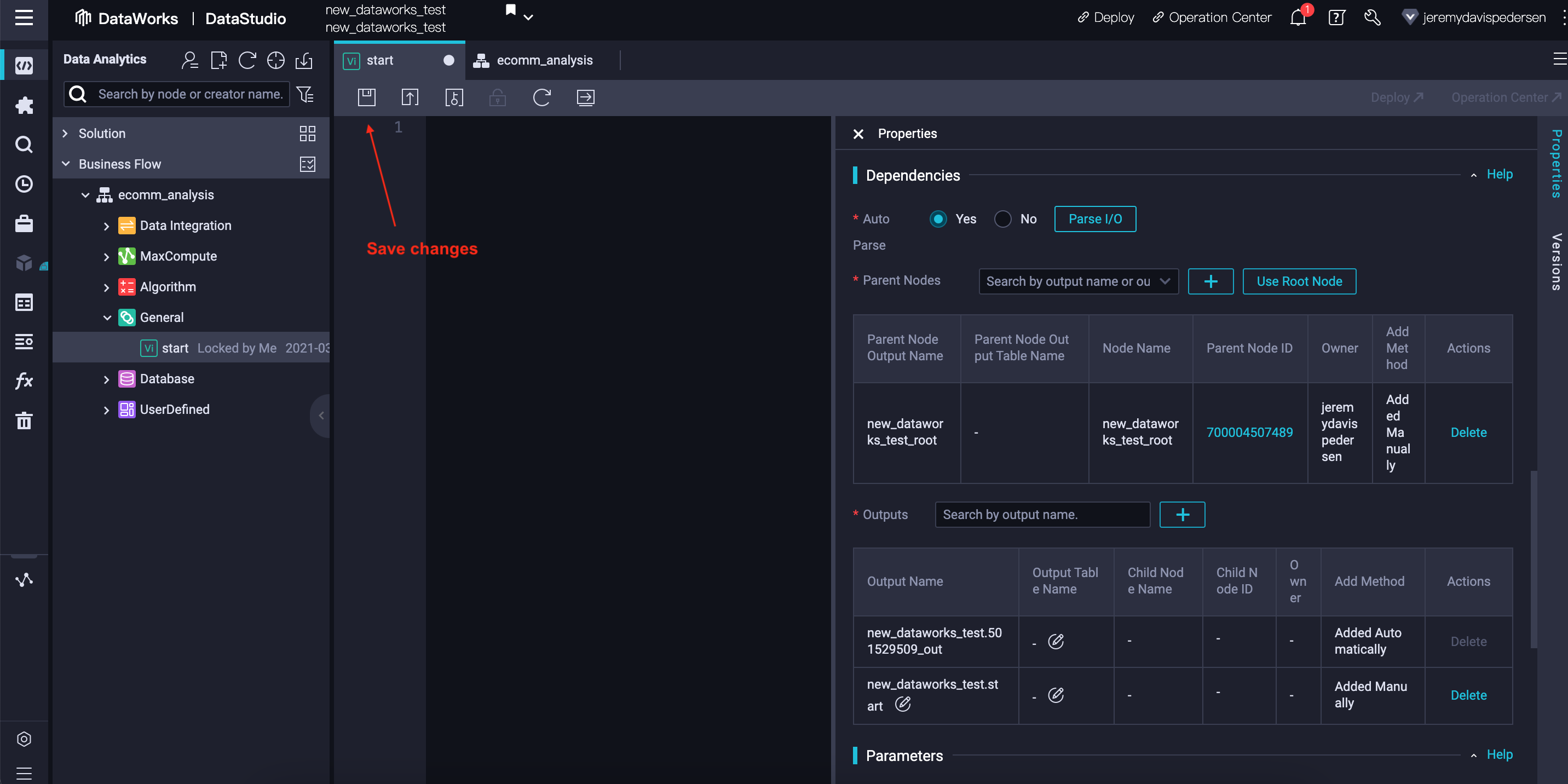

You'll notice we can set a schedule for the start node via the Properties view. This allows us to run the workflow on a regular schedule, a very common scenario for "batch processing" workflows that import new data daily or weekly, for instance. We'll scroll down past that, to the Dependencies section, then click on Use Root Node to set the parent node for our start node:

We can now save our changes and close the start node tab, returning to the ecomm_analysis tab:

Before we can import our data from MySQL into MaxCompute and start working with it, we need to create tables in MaxCompute to hold the imported data. We can do this from the DataWorks web console.

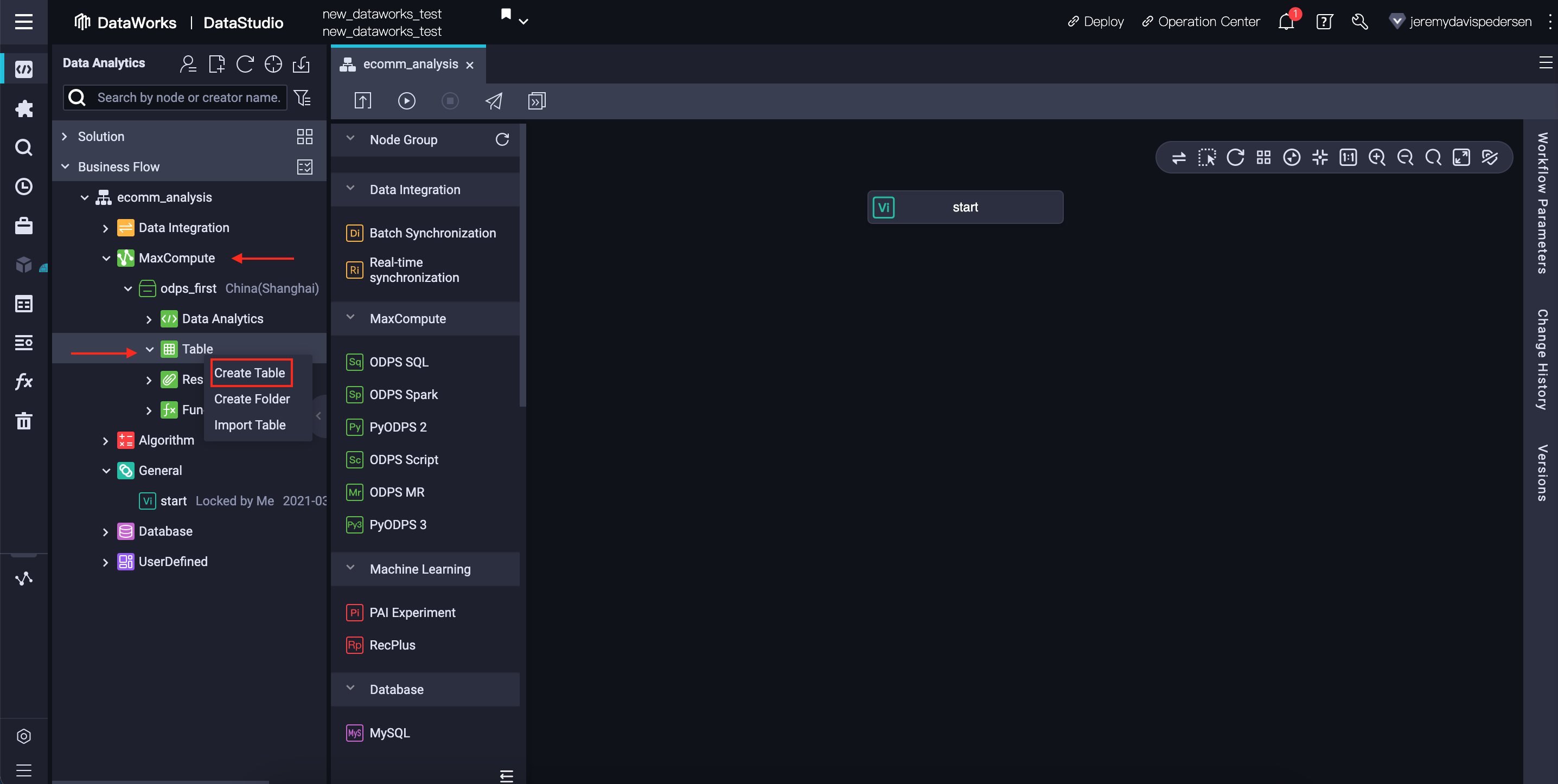

We can do that by navigating to Tables under MaxCompute, then right clicking and choosing Create Table, as shown here:

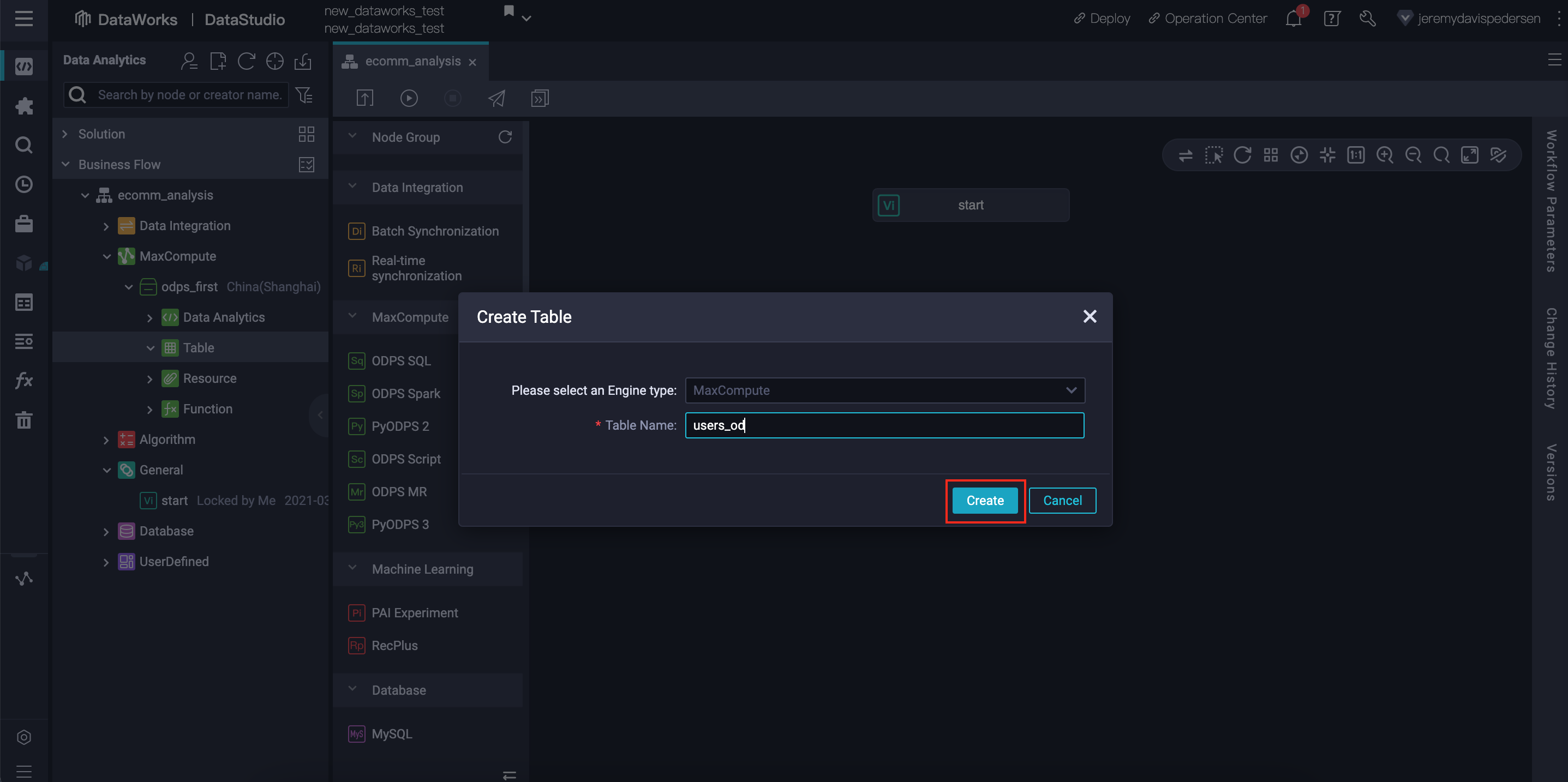

We then give the new table a name, such as users_od. I add the od postfix to indicate that the table contains original data (as opposed to derived data):

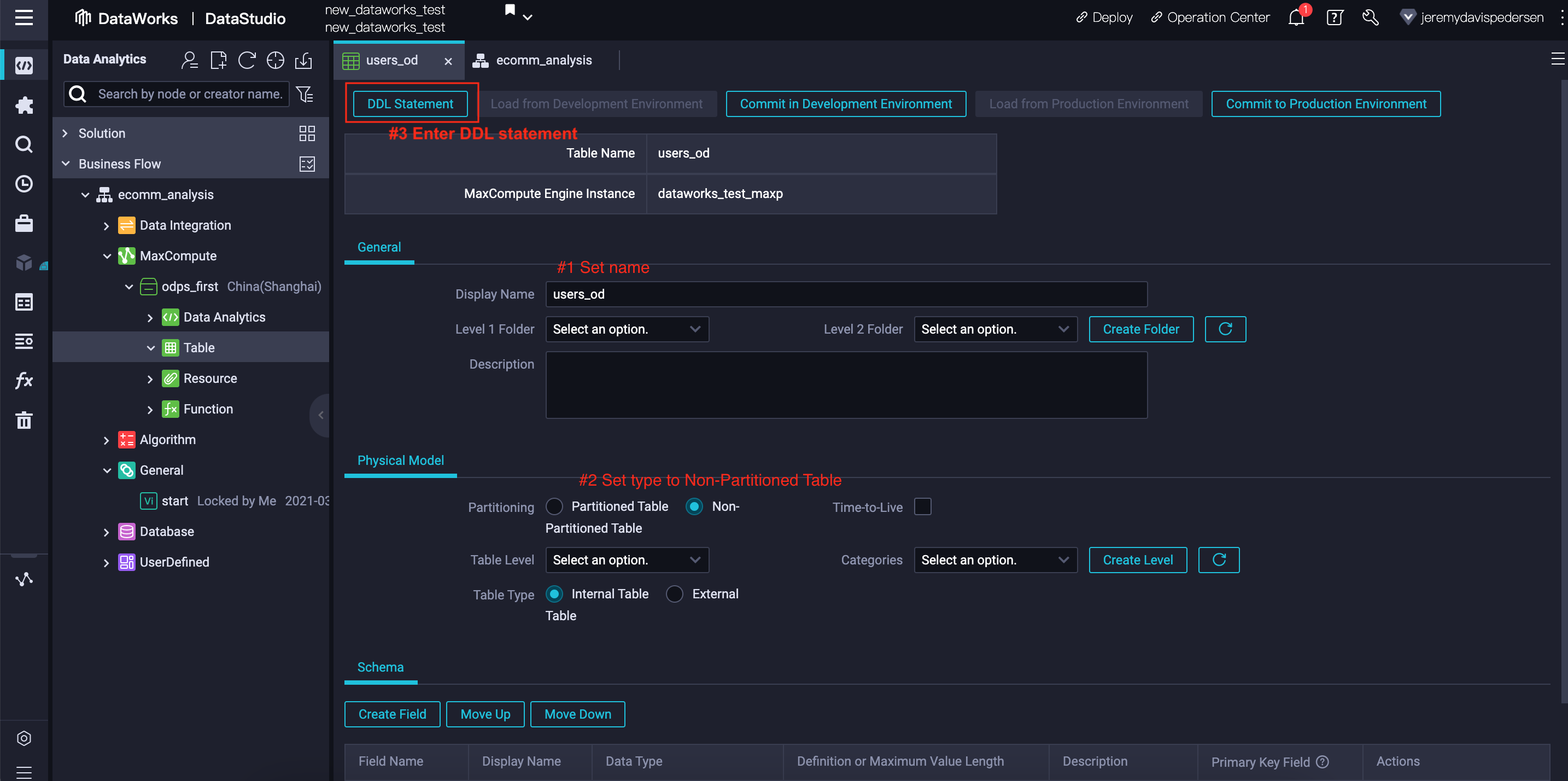

We give the table a name, choose its type (we'll use Non-Partitioned Table), and then enter a DDL statement (scroll down for the users_od DDL statement) which will set the schema for the table.

The DDL statement code for the users_od table is:

CREATE TABLE IF NOT EXISTS users_od

(

user_id BIGINT,

name STRING,

age BIGINT,

sex STRING,

country STRING,

country_code STRING

) ;We need to repeat the table creation process 4 more times, as we need to create a total of 5 new tables:

users_od table (already created above)products_od tableorders_od tableorder_data table, combining the data from #1, #2 and #3 abovebig_spenders table to hold a list of our top spending customersSimply repeat the steps above, changing the table name and DDL code each time. The DDL code is below:

CREATE TABLE IF NOT EXISTS products_od

(

product_id BIGINT,

product_name STRING,

price DOUBLE

) ;CREATE TABLE IF NOT EXISTS orders_od

(

order_id BIGINT,

year STRING,

month STRING,

product_code BIGINT,

quantity BIGINT,

user_id BIGINT

) ;CREATE TABLE IF NOT EXISTS order_data

(

order_id BIGINT,

product_id BIGINT,

user_id BIGINT,

product_name STRING,

price DOUBLE,

quantity BIGINT,

total DOUBLE,

name STRING,

age BIGINT,

sex STRING,

country STRING

) ;CREATE TABLE IF NOT EXISTS big_spenders

(

user_id BIGINT,

spend DOUBLE

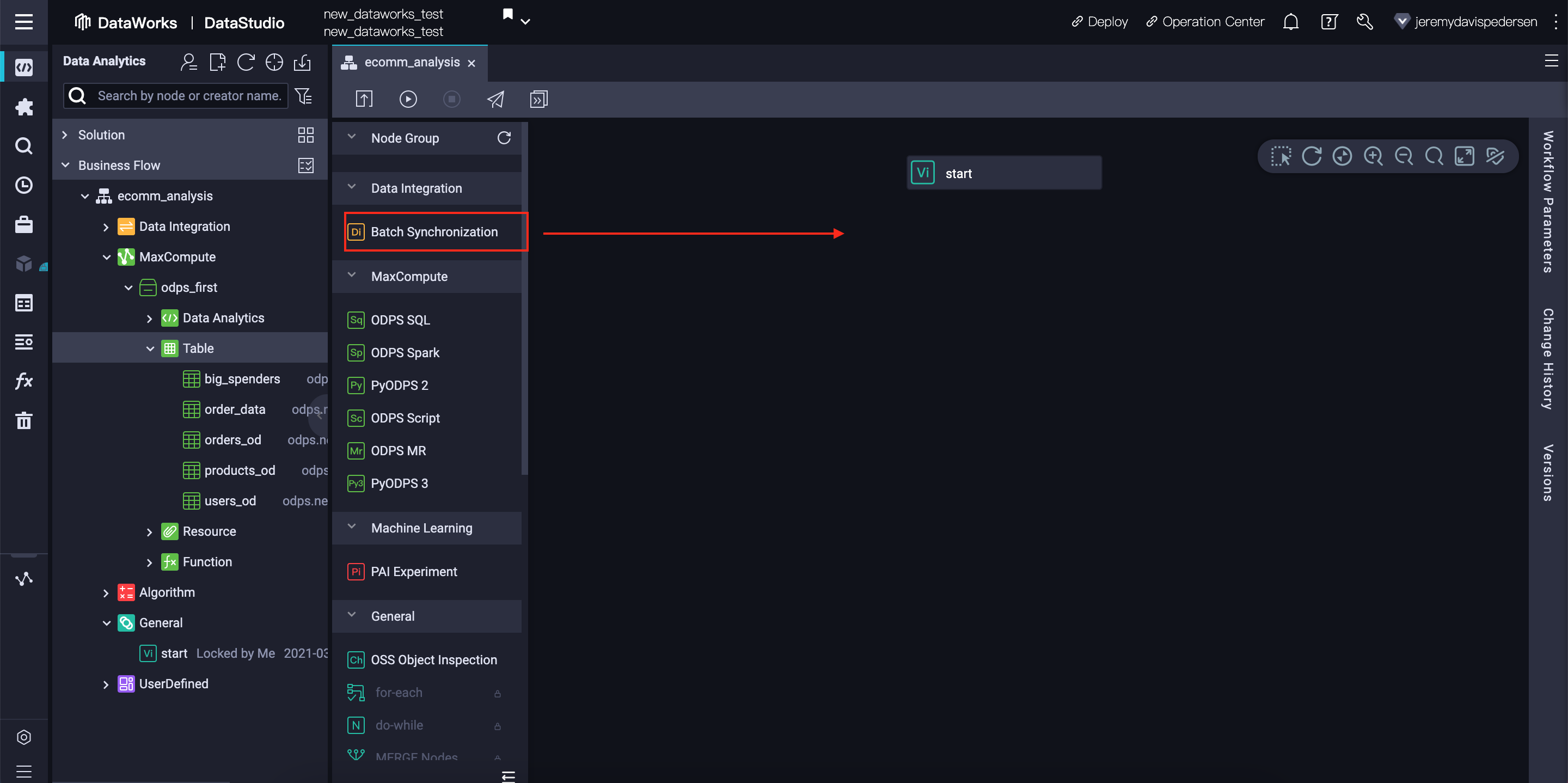

) ;Now that we have set up our MaxCompute tables, we can begin importing our data. We need to create three new Batch Synchronization nodes underneath the start node in our workflow. Each node will synchronize one MySQL database table into its corresponding MaxCompute table. Here we show the steps for users_od. Simply repeat these steps for products_od and orders_od.

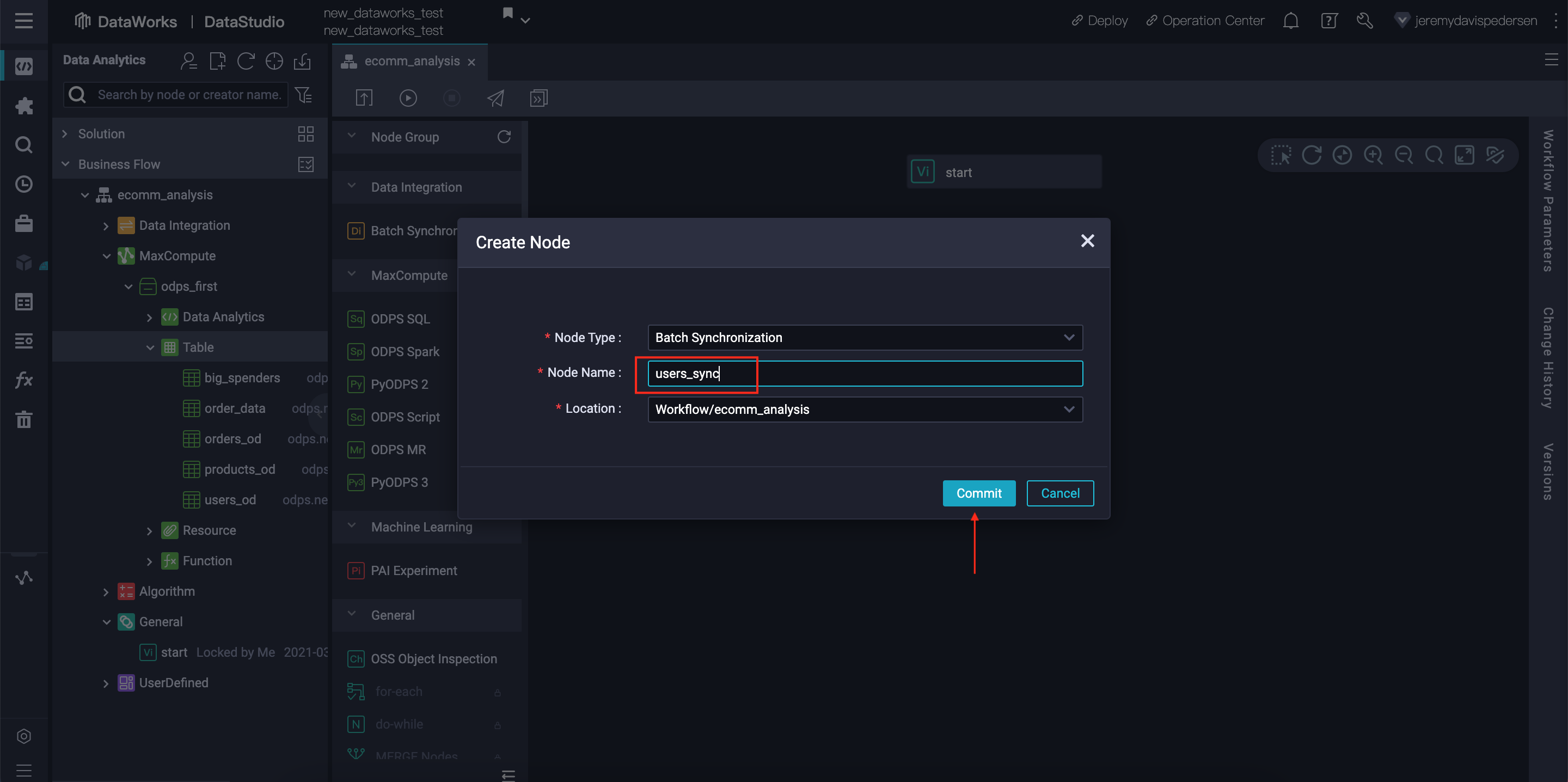

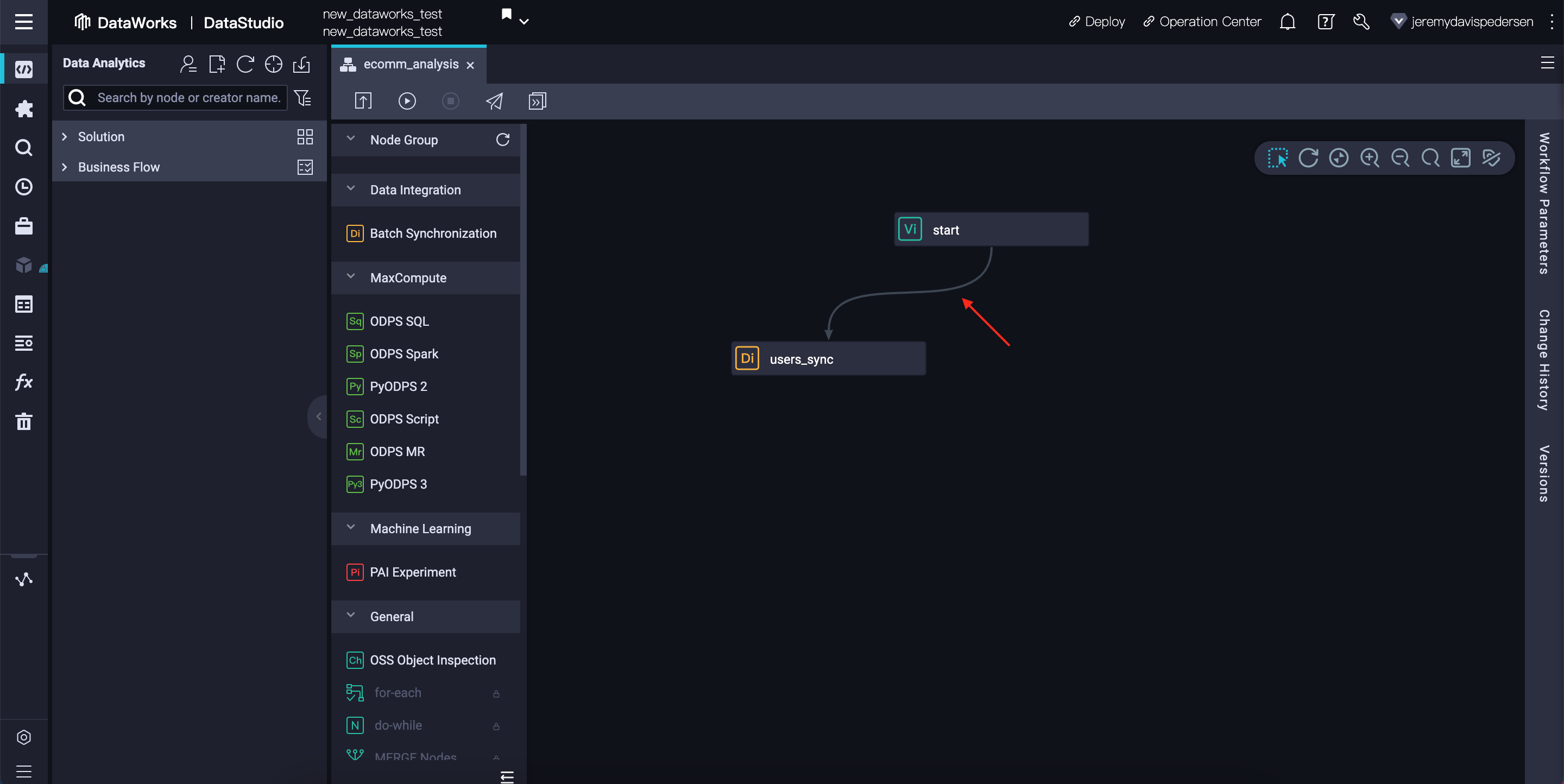

First, drag a Batch Synchronization node into the workflow, give it a meaningful name (such as users_sync), then drag a connecting line between start and this new node:

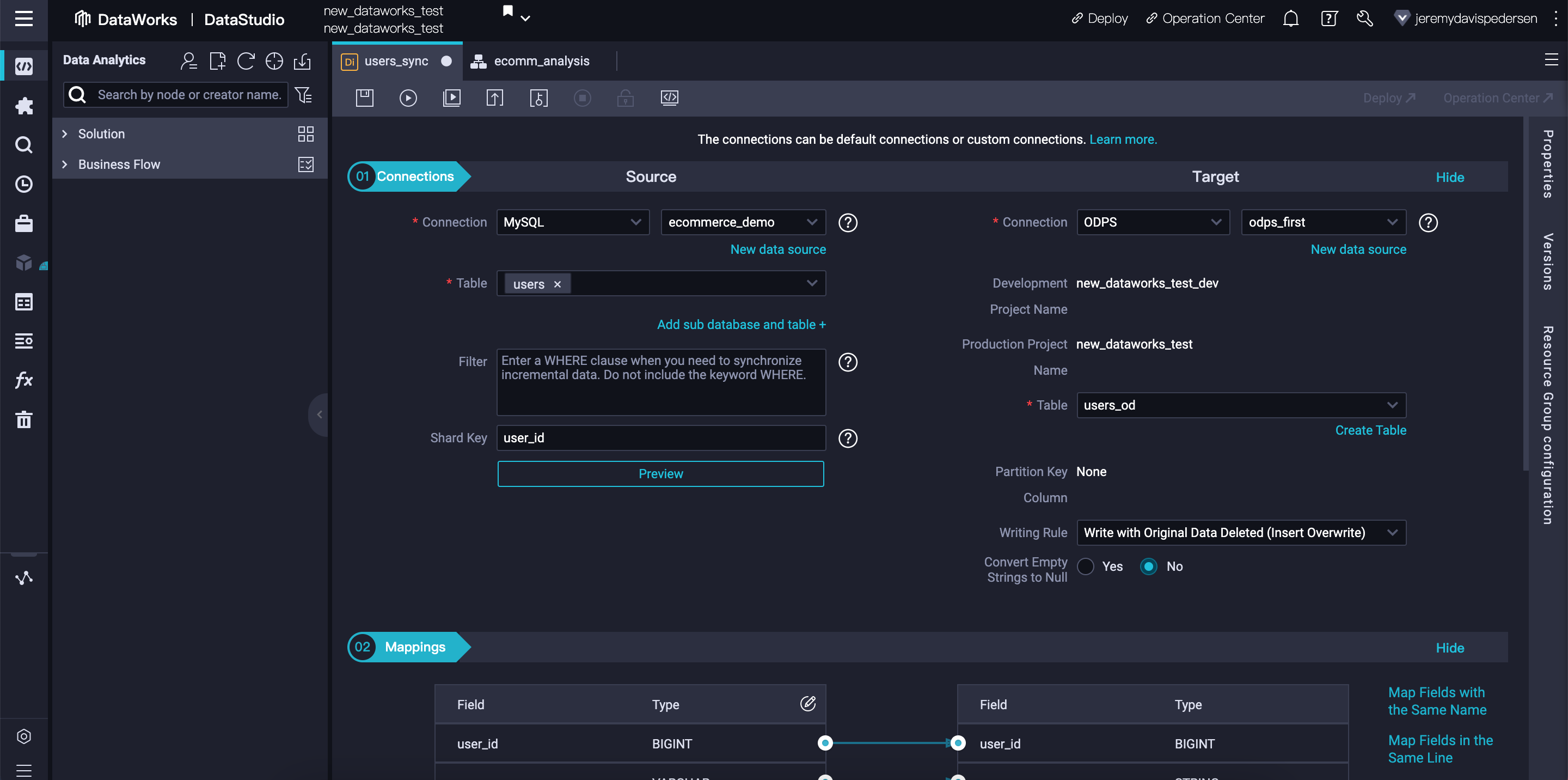

Next, double click on the new users_sync node to open its settings. Make sure the data source and target are set as follows:

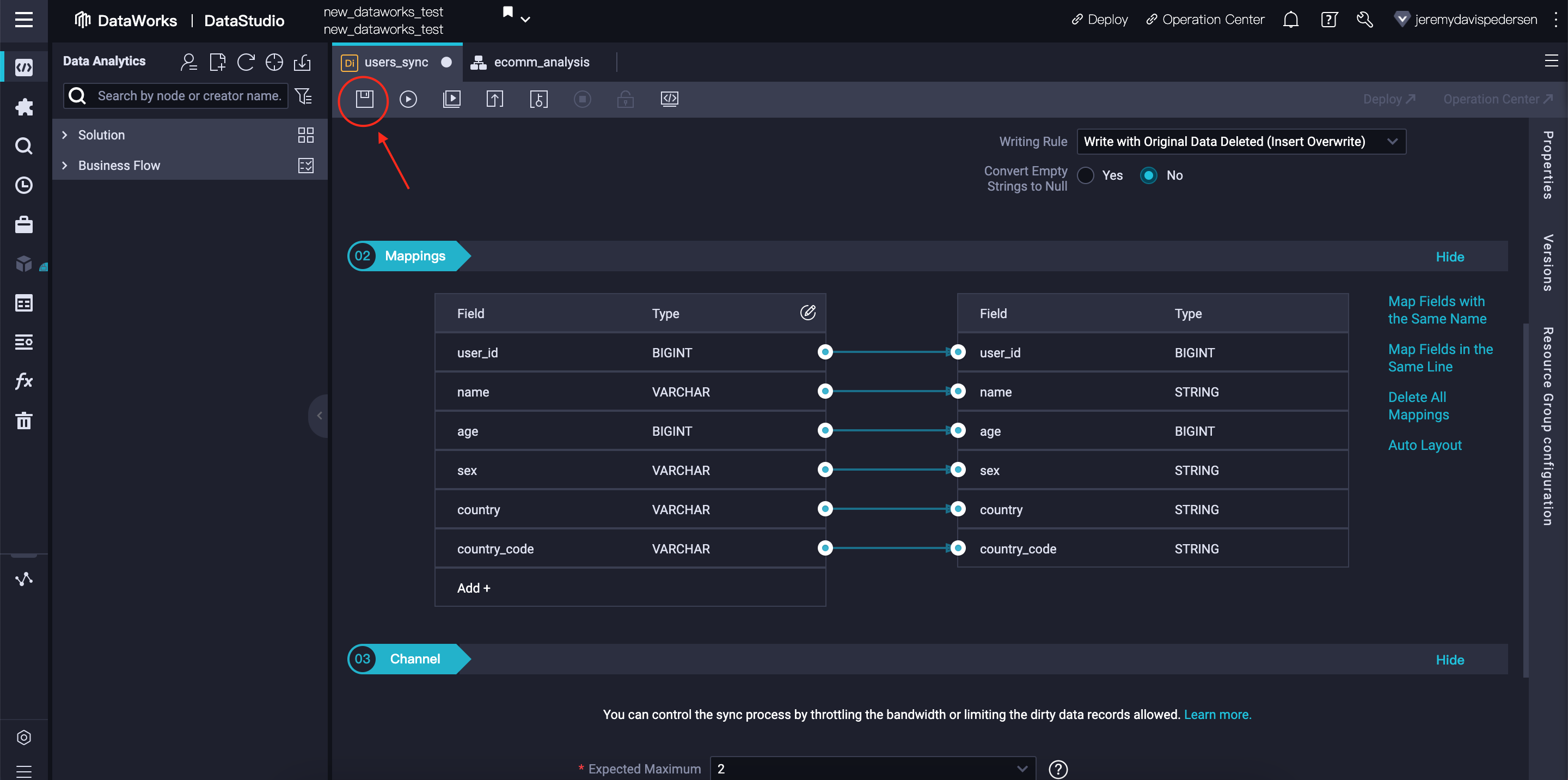

Scroll down to "mappings" and ensure that the mapping between the columns in the source & destination tables look correct:

If everything looks OK, click on the Save icon.

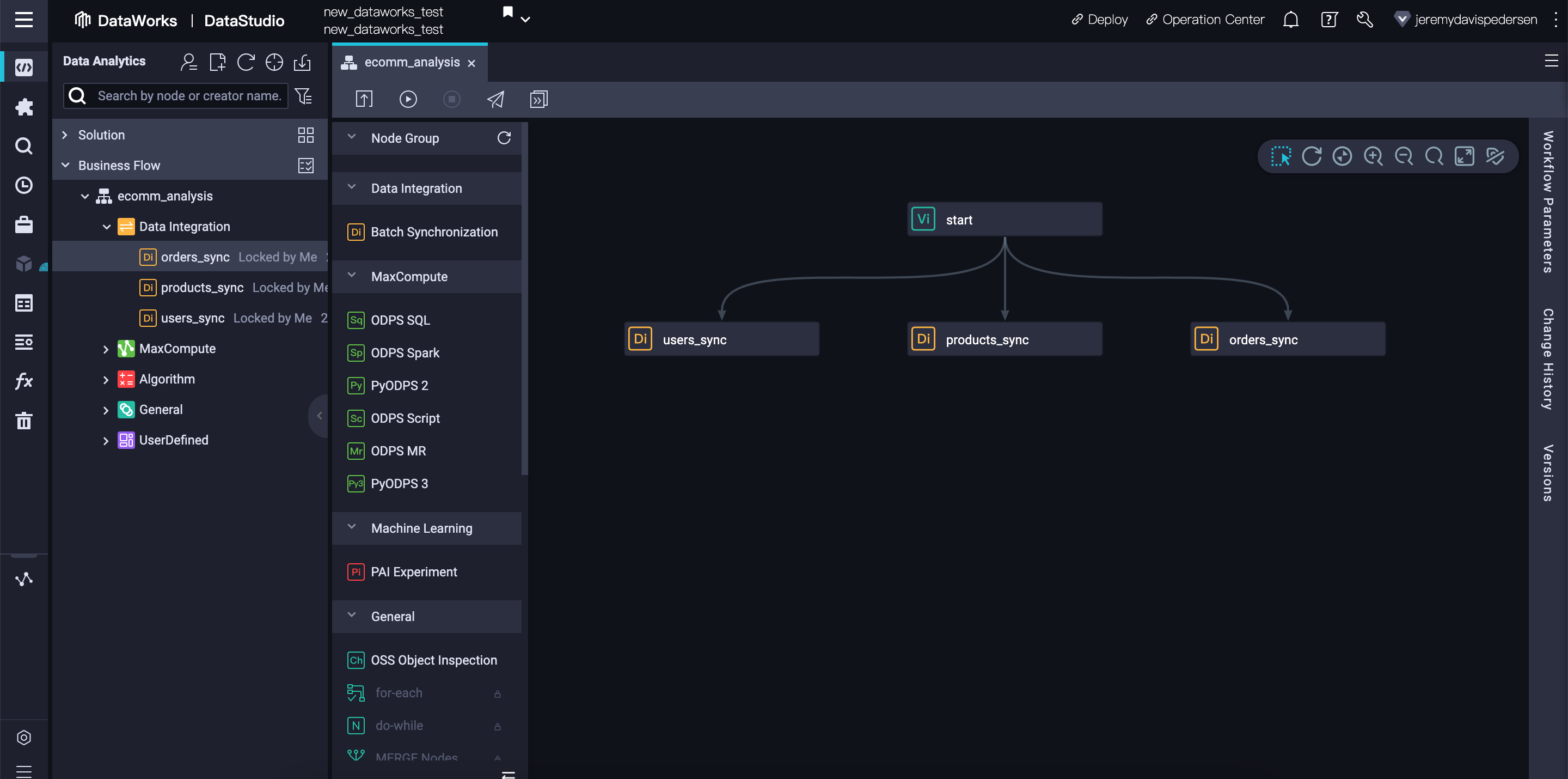

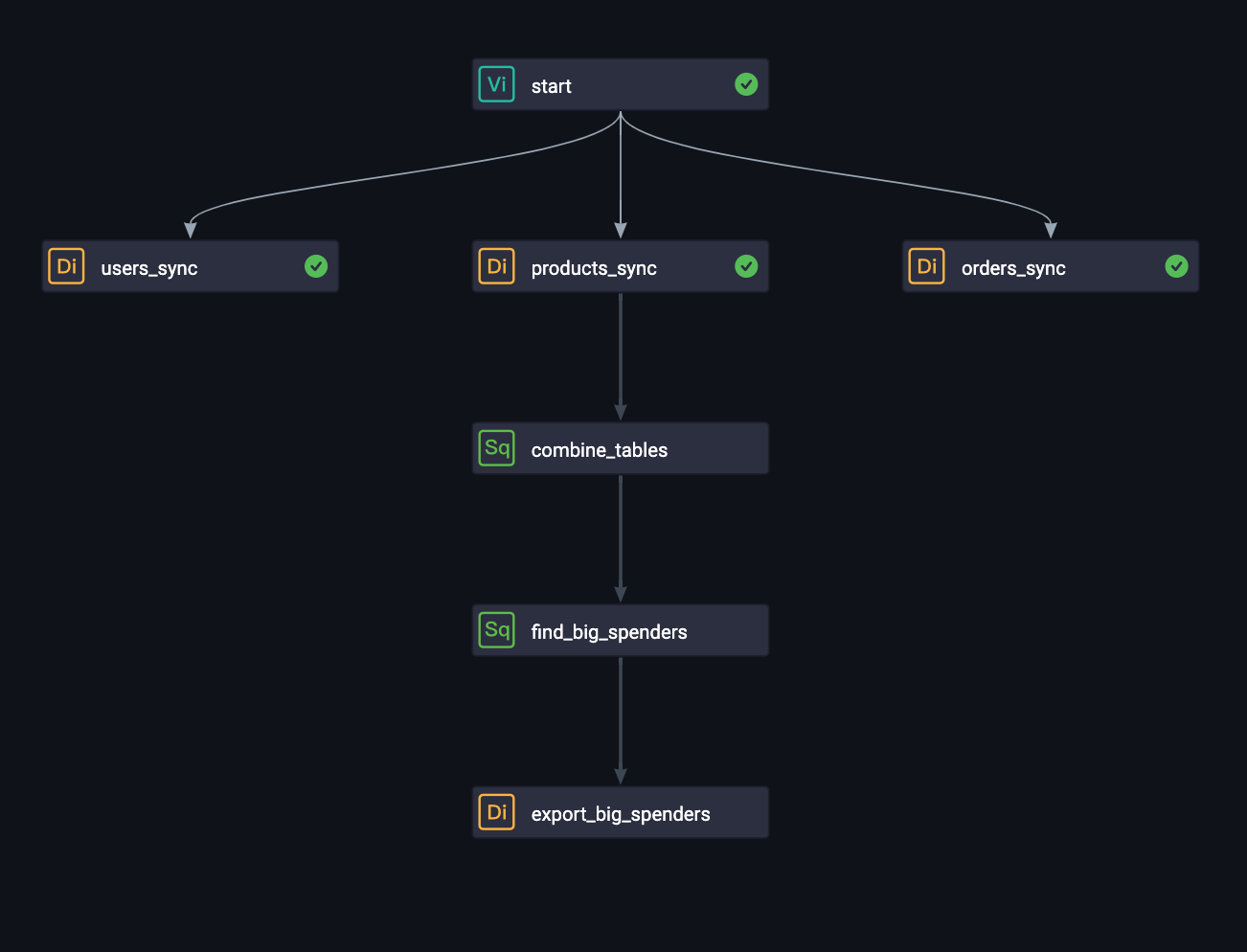

Now, repeat this process for the products and orders tables. You should end up with a workflow that looks like this:

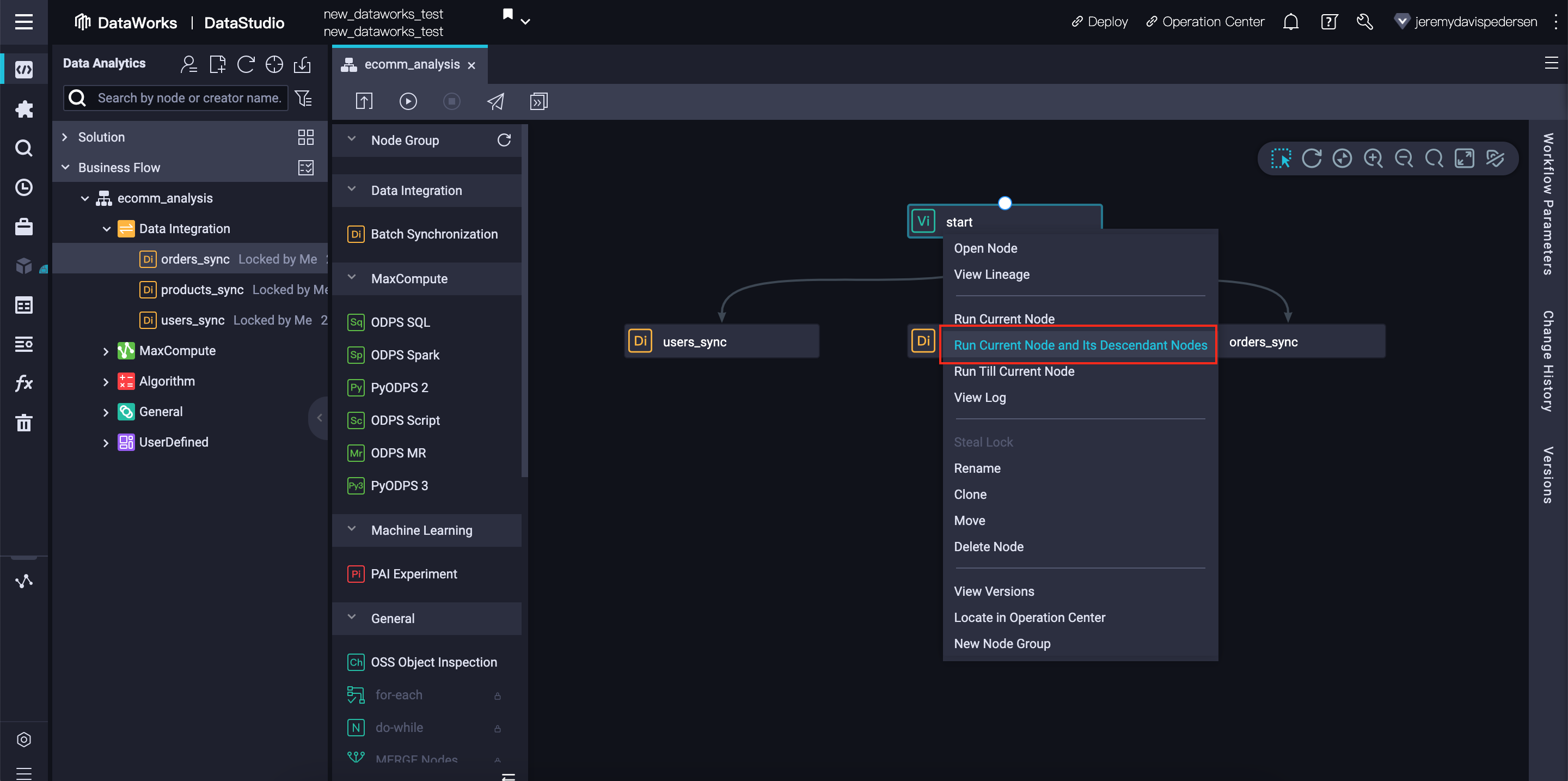

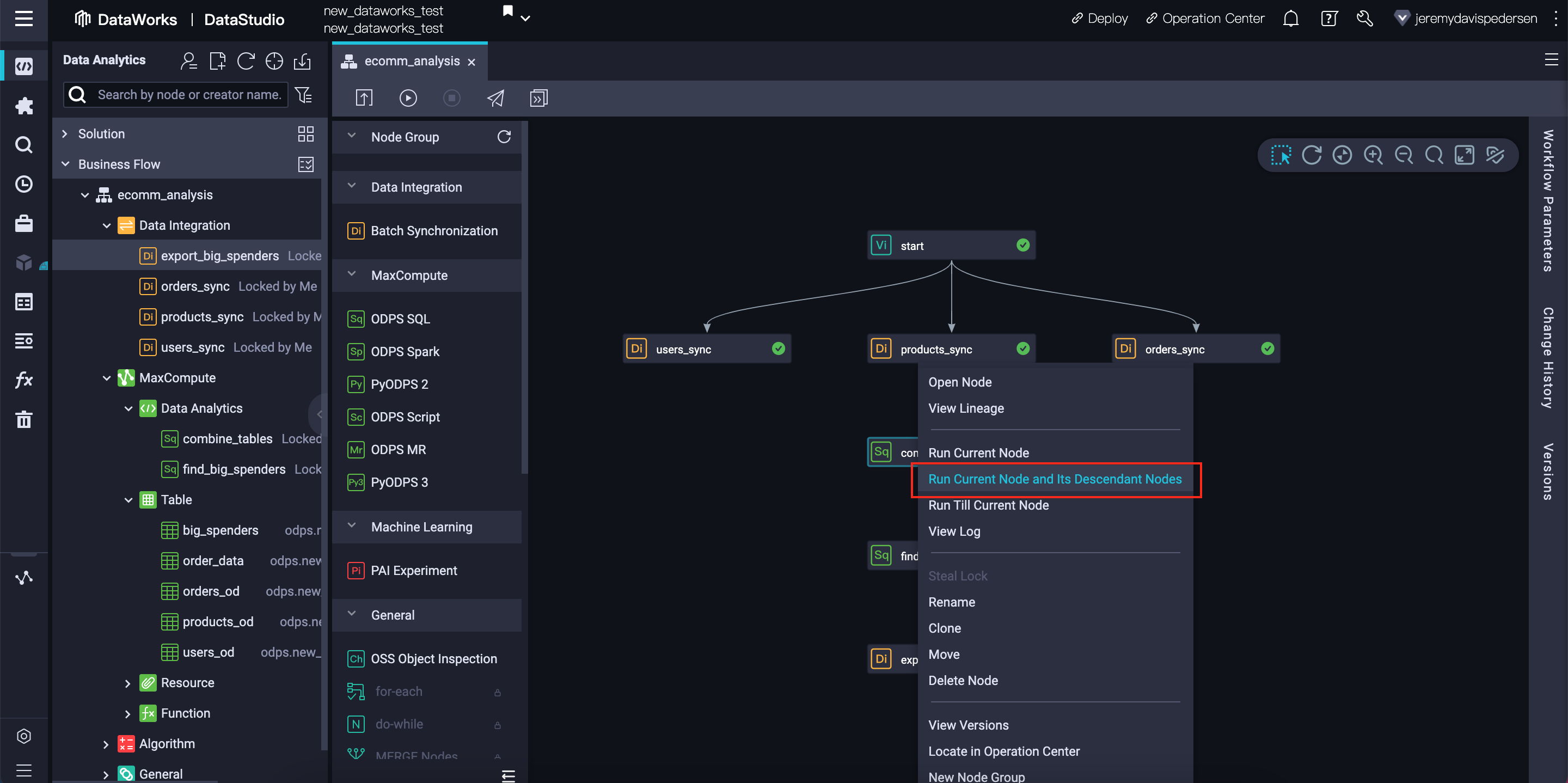

We can now run our workflow to ensure that the data import tasks are configure correctly. Right click on the start node and choose Run Current Node and Its Descendant Nodes:

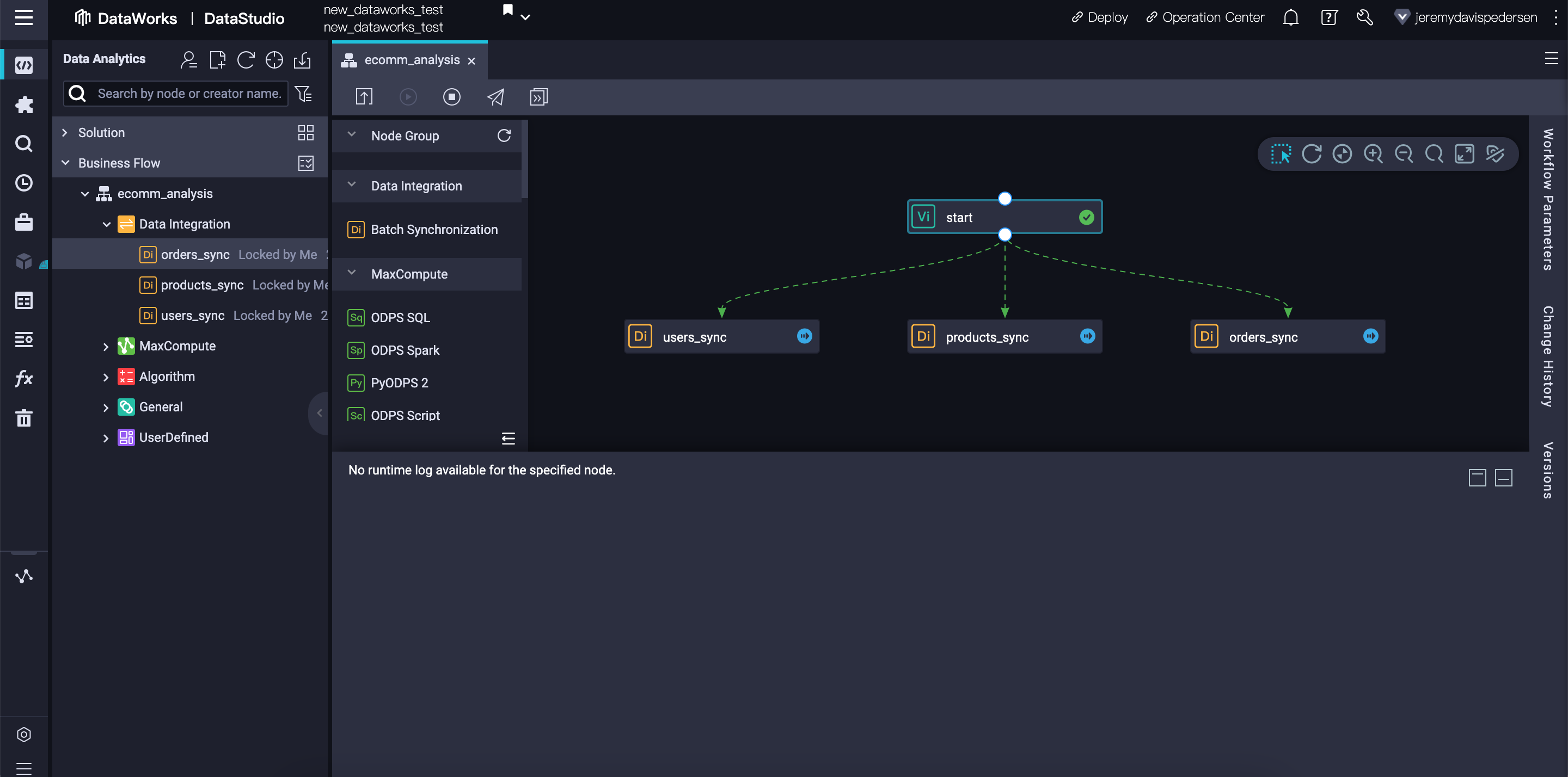

Once running, the DataWorks interface will open a log pane at the bottom of the Window, and will add status information to each node in the workflow. A green check mark indicates that a node has run successfully, a blue arrow indicates that a node is in progress, and a red "x" indicates that a node has failed:

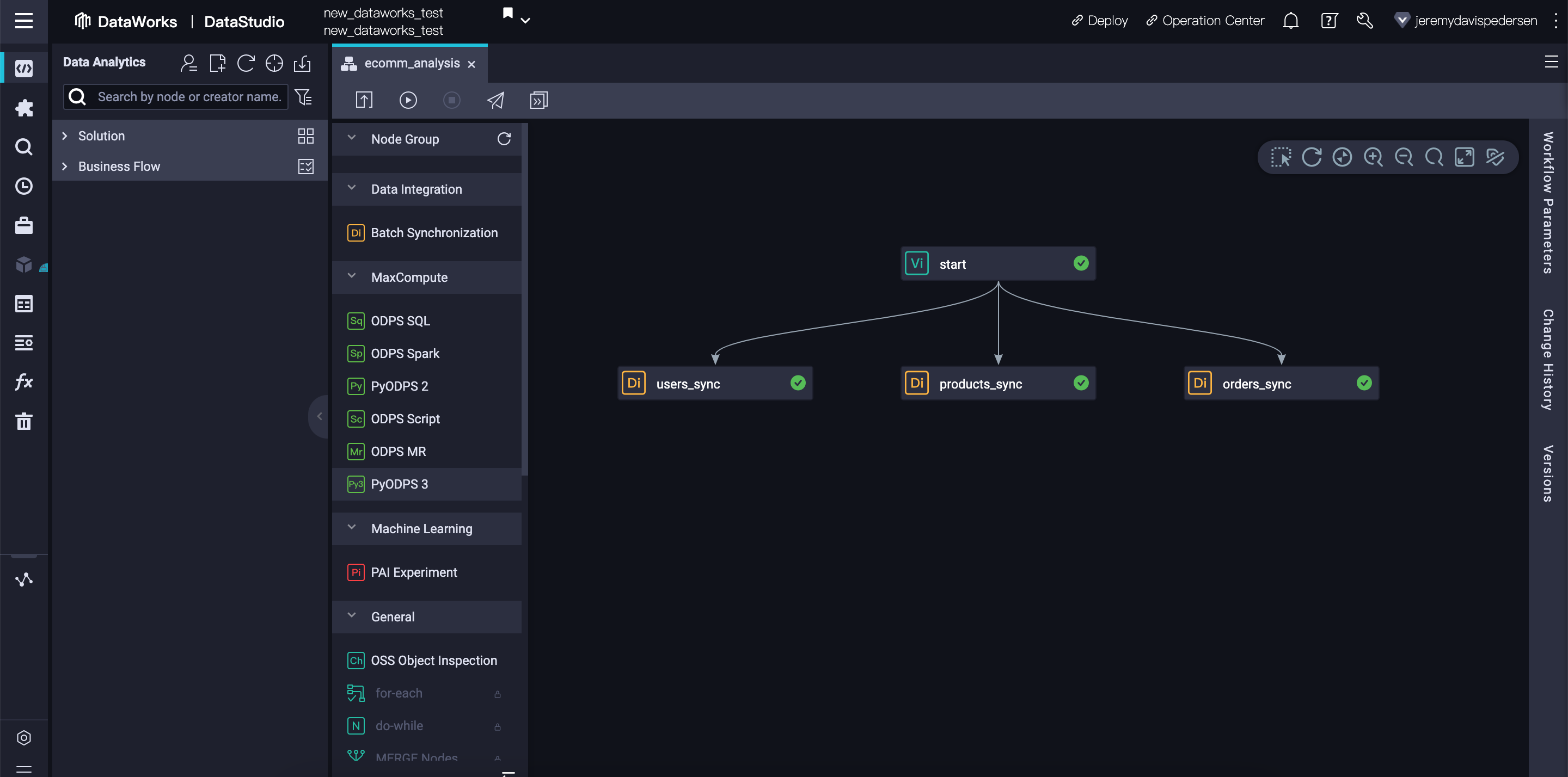

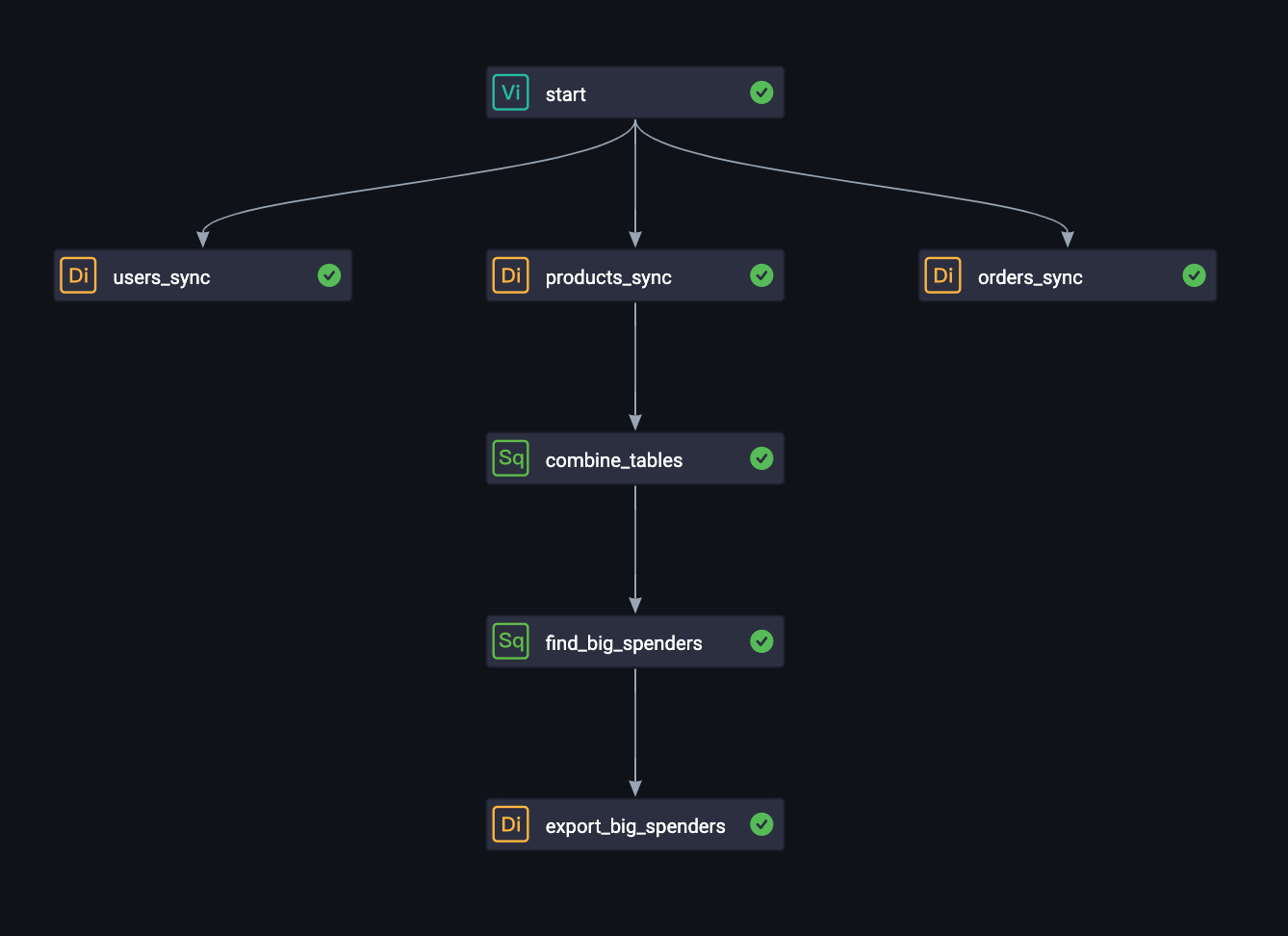

If there are no errors, the workflow should look like this once all nodes have run successfully:

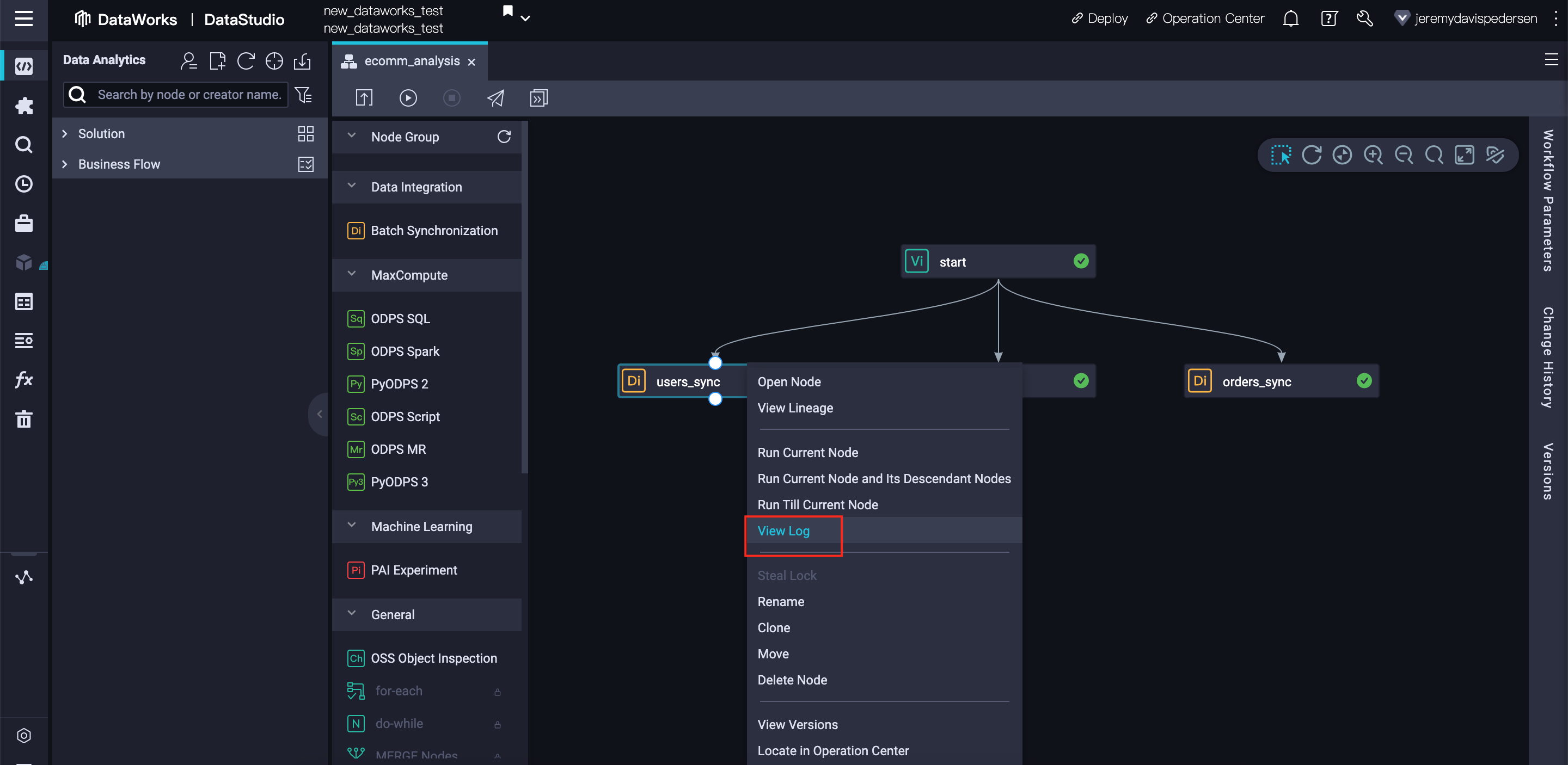

If you're curious, you can right click on any node and choose View Log to see a full task log from the node's last run:

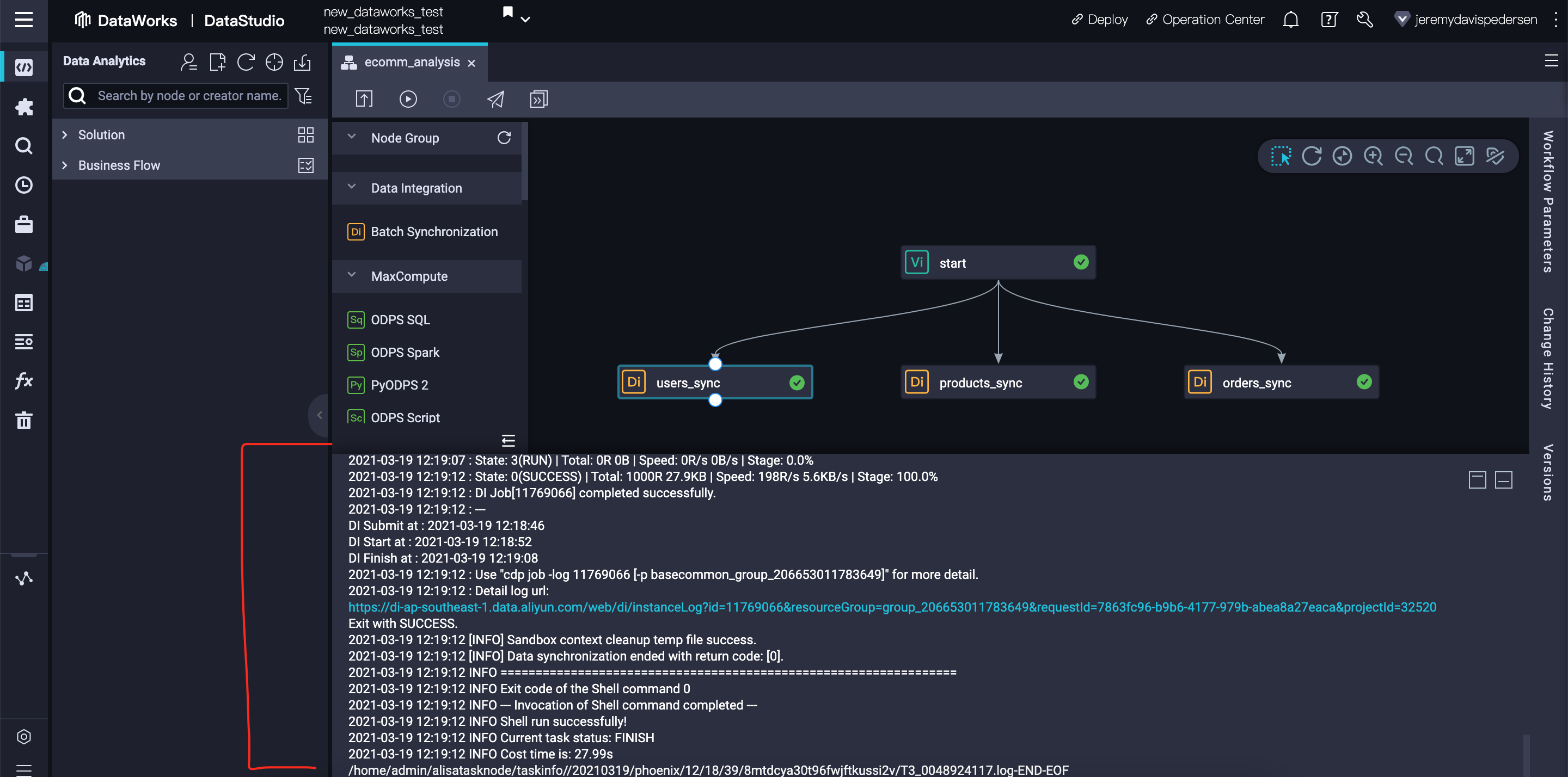

A typical log for a batch synchronization node looks like this:

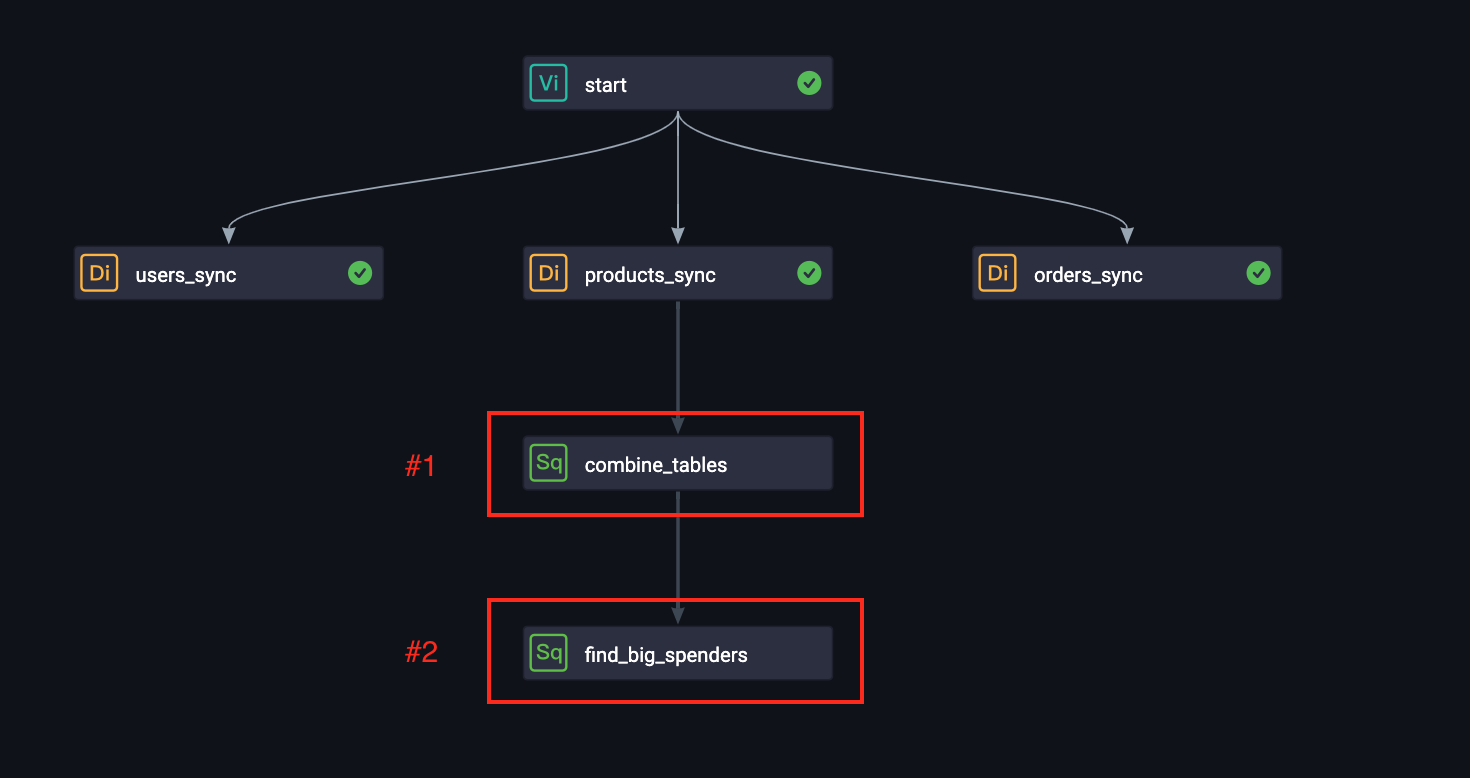

Now that all our data has been imported into MaxCompute, we can start analyzing it. Drag and drop 2 new ODPS SQL nodes onto the workflow, and connect them like so:

Double click on each of these new SQL nodes, and copy-paste the SQL code from the sections #1, and #2 below.

INSERT OVERWRITE TABLE order_data

SELECT order_id, product_id, a.user_id, product_name, price, quantity, ROUND(price*quantity,2) AS total, name, age, sex, country

FROM orders_od a JOIN products_od b ON a.product_code = b.product_id

JOIN users_od c ON a.user_id = c.user_id;INSERT OVERWRITE TABLE big_spenders

SELECT user_id, ROUND(SUM(total),2) AS spend

FROM order_data GROUP BY user_id, name ORDER BY spend LIMIT 10;big_spenders Data To MySQLAs a final step, we can take our list of big_spenders and export it back out to MySQL. It's a common practice to export final results from MaxCompute to a traditional relational database system, for fast querying and analysis.

In order to do this, we need to log into our MySQL Database using DMS, and create a new table using the following SQL statement. Remember, this should be done in DMS, NOT DataWorks!

CREATE TABLE `big_spenders` (

`user_id` bigint NULL,

`spend` double NULL

) ENGINE=InnoDB

DEFAULT CHARACTER SET=utf8;If you're not sure how to do this, take a look at this blog post, which explains in detail how to use DMS.

Next, drag a Batch Synchronization node into the workflow, and drag and arrow from find big spenders to this new node.

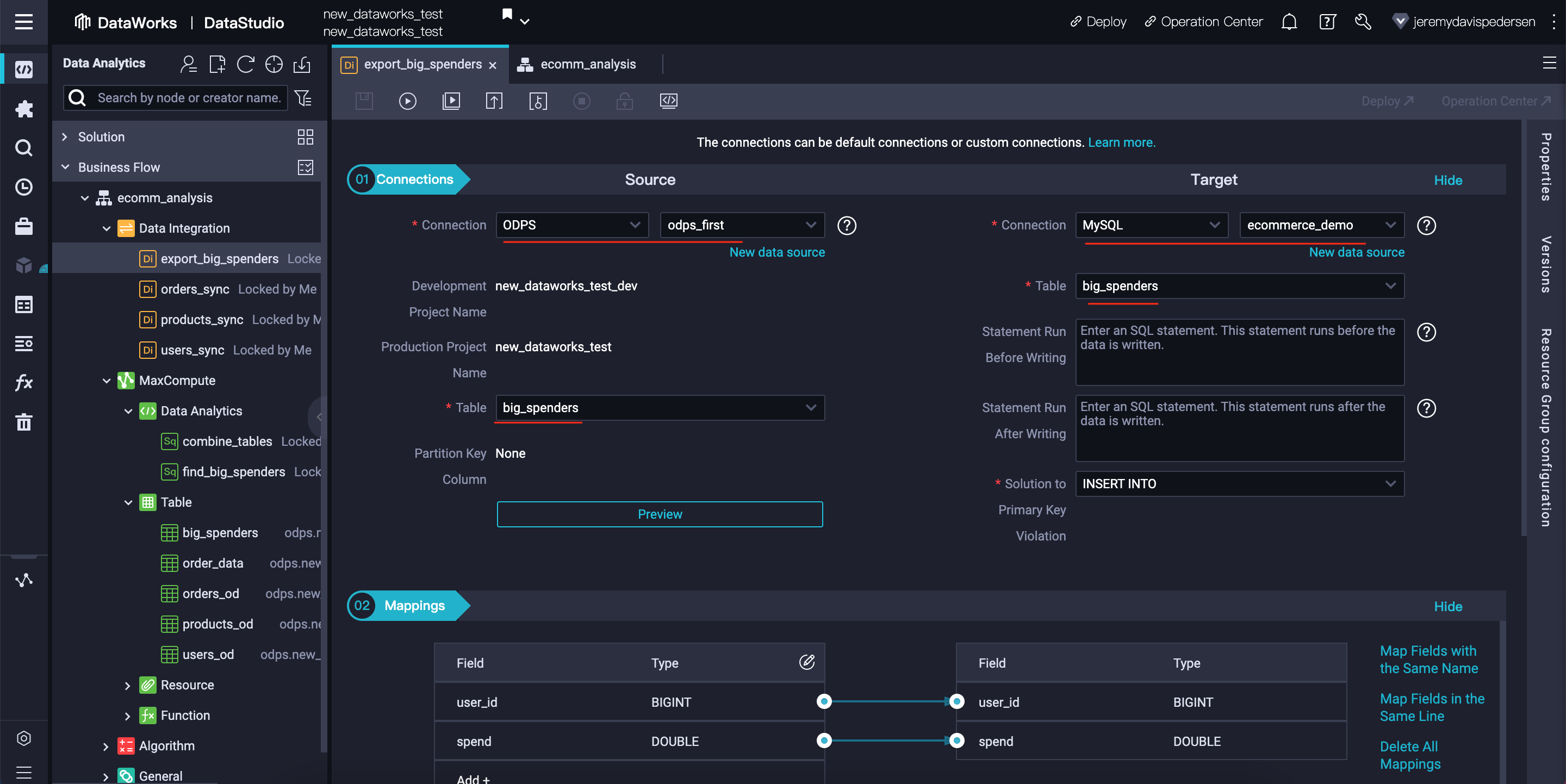

Open the new Batch Synchronization node, and make sure its settings match the ones shown here:

Note that this time, the target table is a table in MySQL, not in MaxCompute. Batch Synchronization nodes can be used to move data both into and out of MaxCompute tables, so this is perfectly fine.

The final workflow should look like this:

To run it, right click on the combine_tables node, and choose Run Current Node and Its Descendant Nodes:

If there are no issues, you should see a green check mark next to every node in the workflow.:

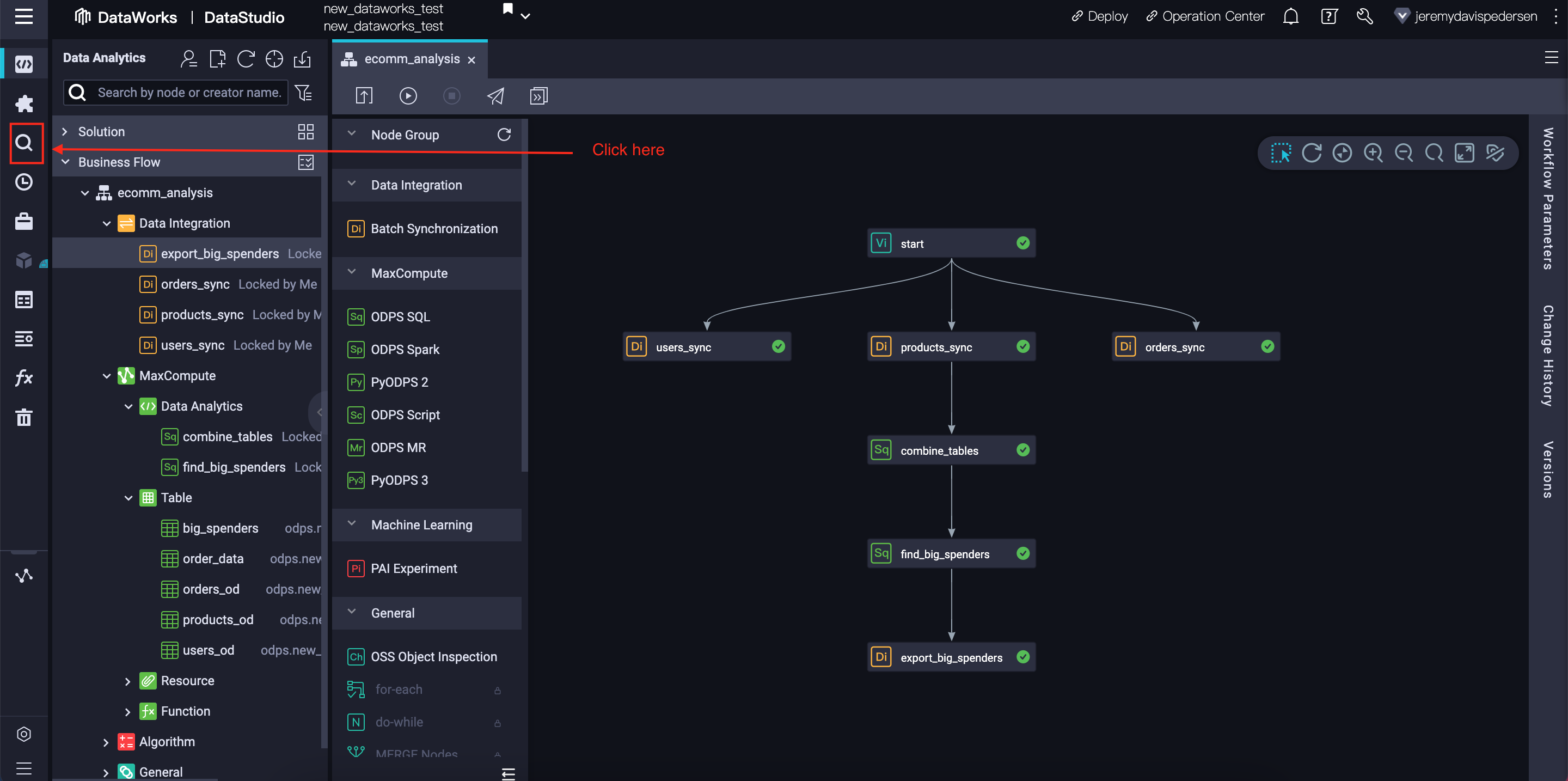

We can check that the Big Spenders table was successfully generated by taking a look at its contents using DataWorks' Ad-Hoc Query feature. This feature allows us to run MaxCompute SQL commands manually, without making them part of a workflow.

We can switch to the Ad-Hoc query interface by clicking on the magnifying glass icon on the left side of the DataWorks interface:

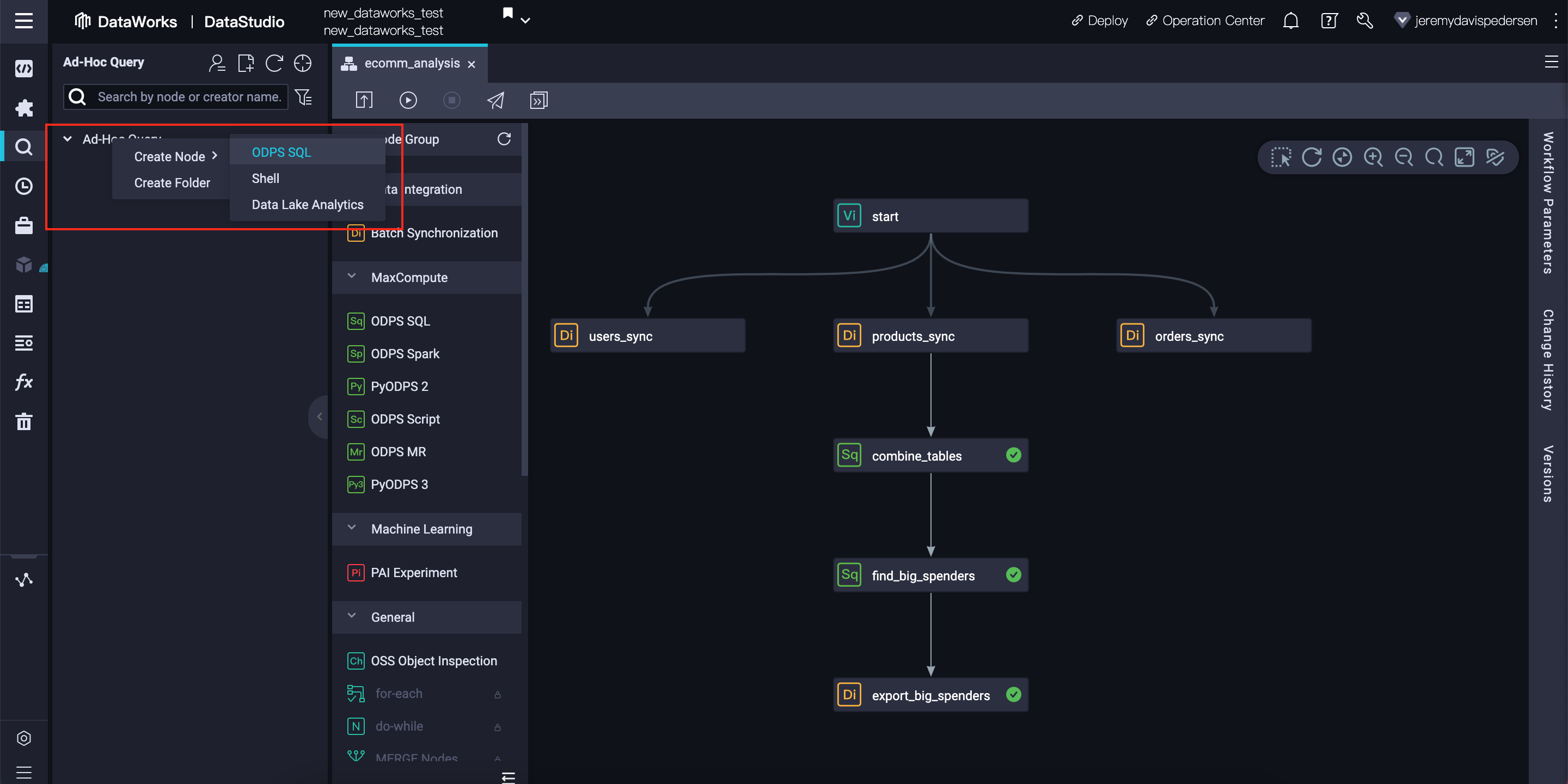

This will open up a panel on the left side of the interface. Right click on Ad-Hoc Query, choose Create Node, then click on ODPS SQL.

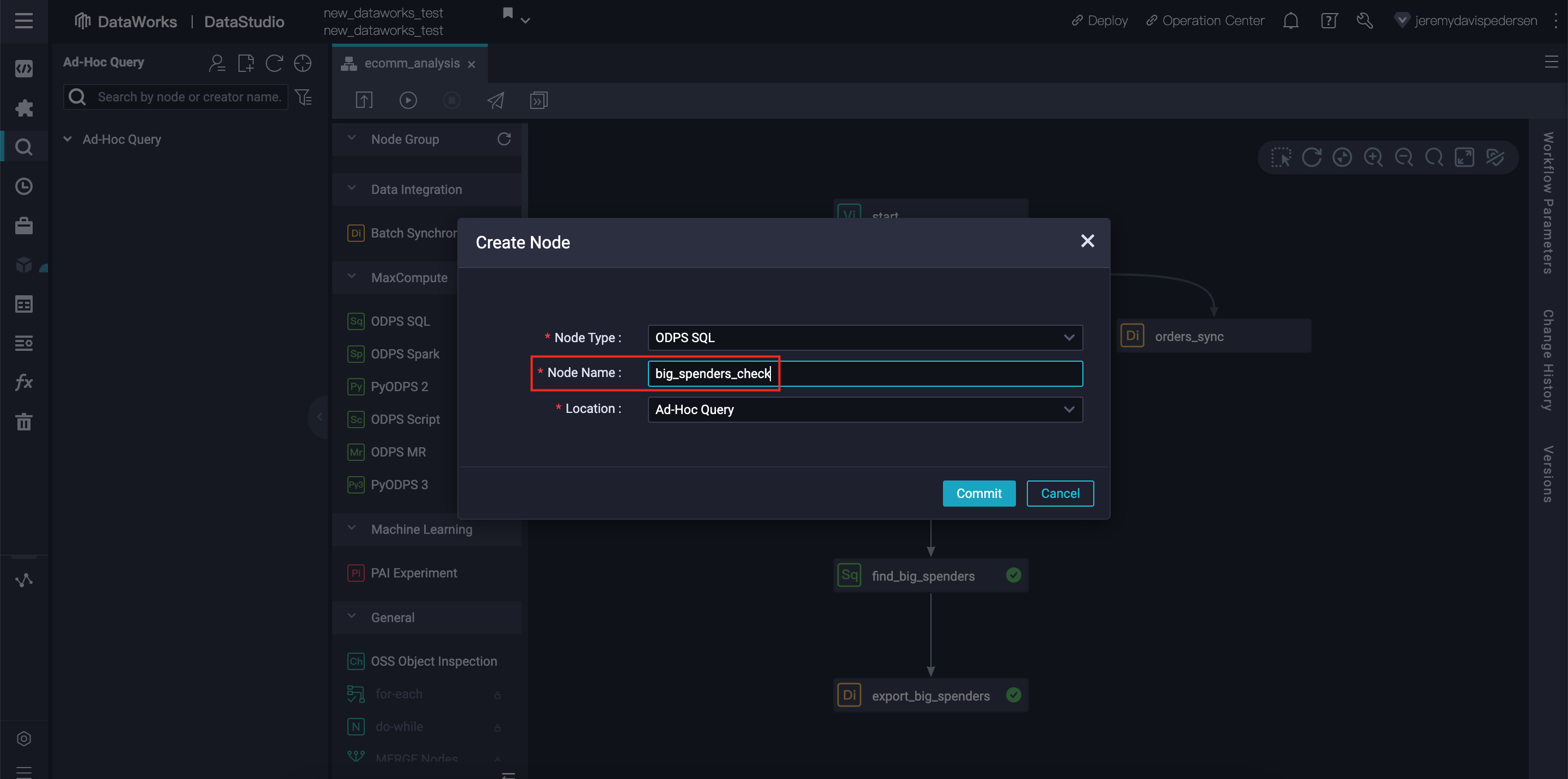

You will then need to give your new node a name. You can choose any name you like or stick with the default username_date format:

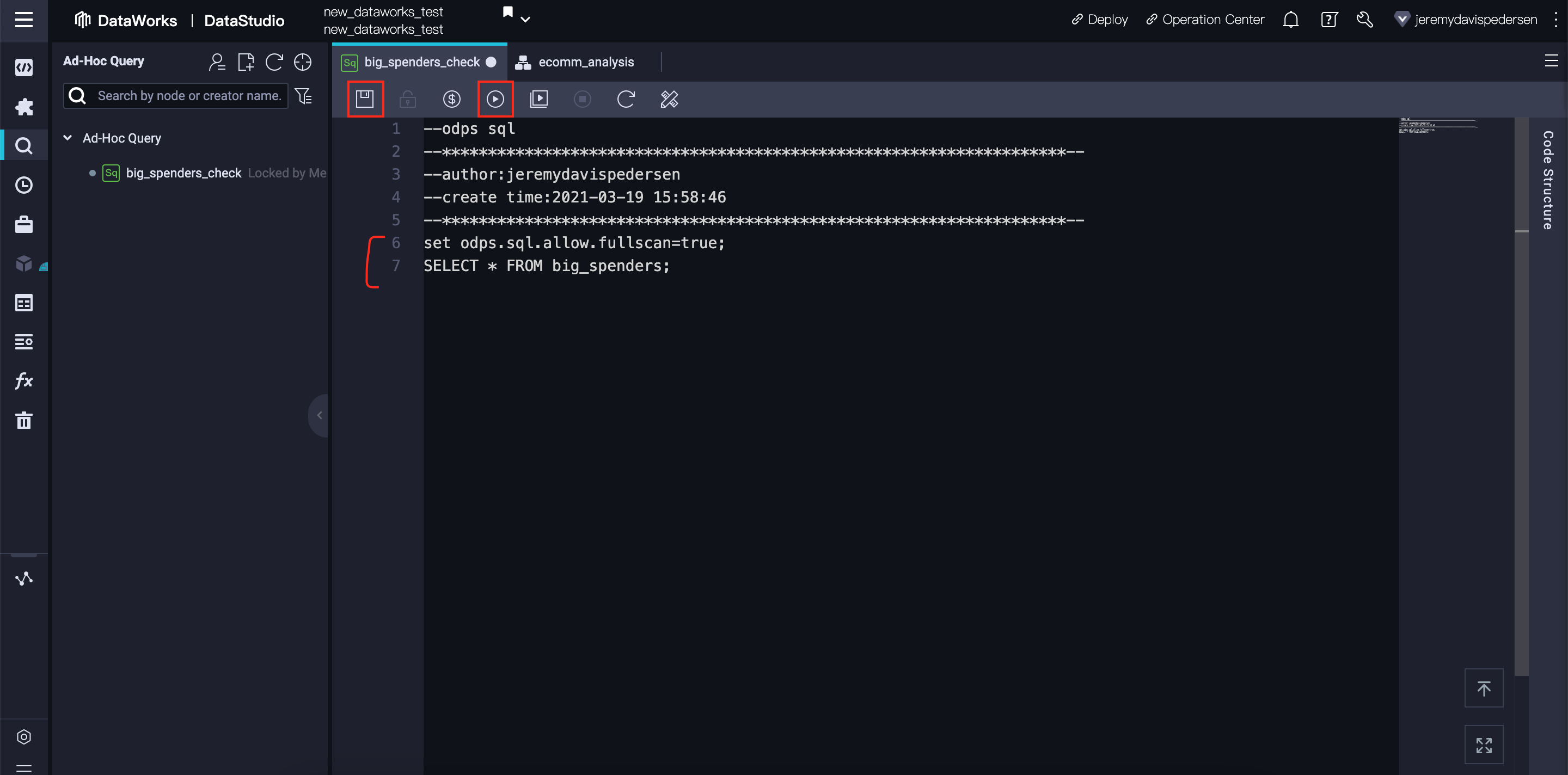

After clicking Commit, a new tab will open. Insert the following SQL code there:

set odps.sql.allow.fullscan=true;

SELECT * FROM big_spenders;Note that the first line, set odps.sql.allow.fullscan=true;, lets DataWorks know that we are OK performing a full table scan. By default, MaxCompute does not allow you to run a full table scan because in production scenarios, MaxCompute tables can be tens of terabytes in size, and a full query could be very expensive. Our big_spenders table is quite small, so a full table scan should be safe for us.

Hit the Save button, then hit Run. Note that for larger queries, you may want to get an estimate of how much the query is going to cost to run. You can do that by clicking on the $ icon that appears between the Save and Run buttons.

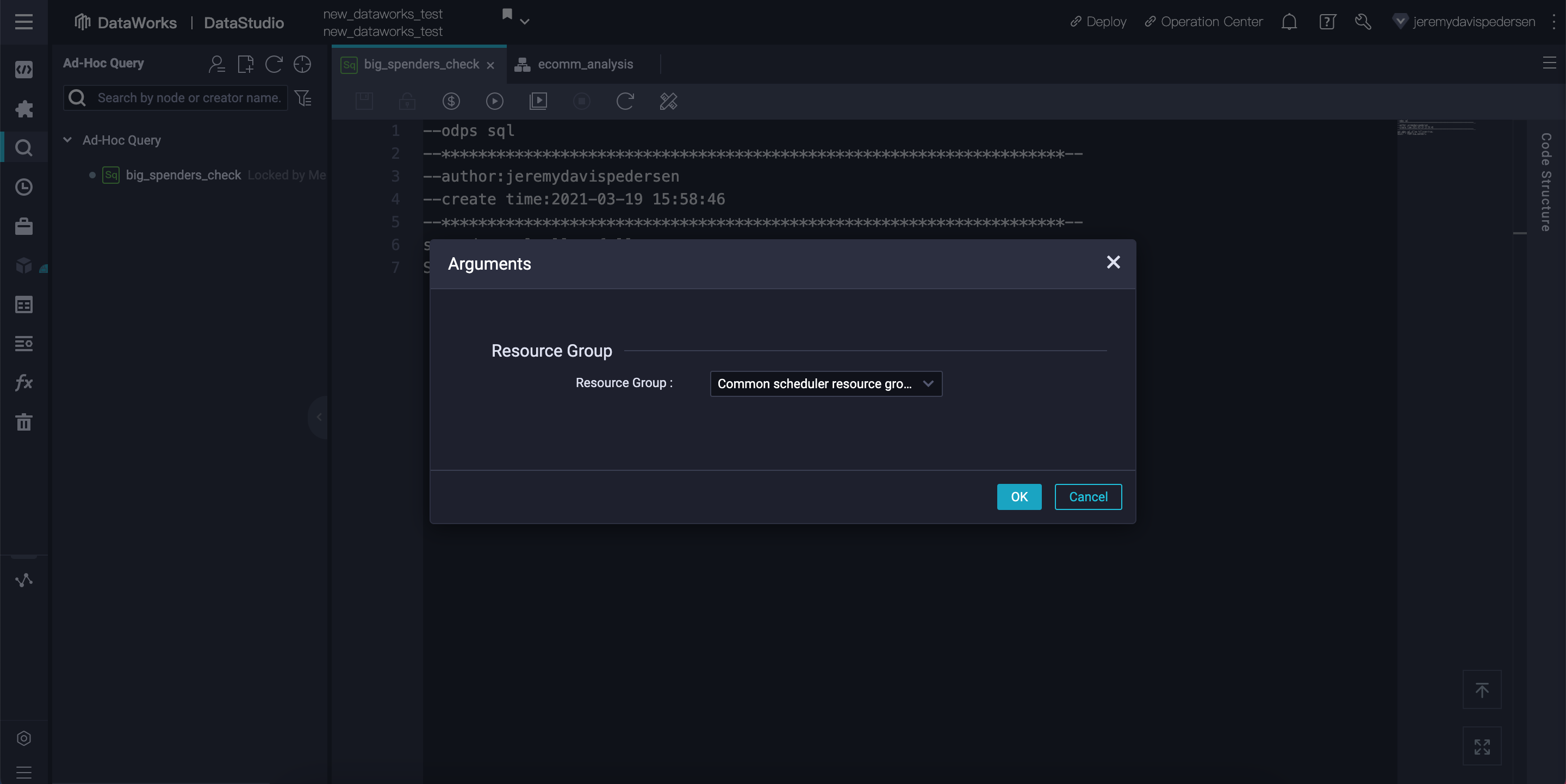

DataWorks will then ask you which Resource Group you want to use to run the query. Customers who are using Subscription mode may have Dedicated Resource Groups they can use. We are using Pay-As-You-Go MaxCompute, so we will simply use the default Common scheduler resource group:

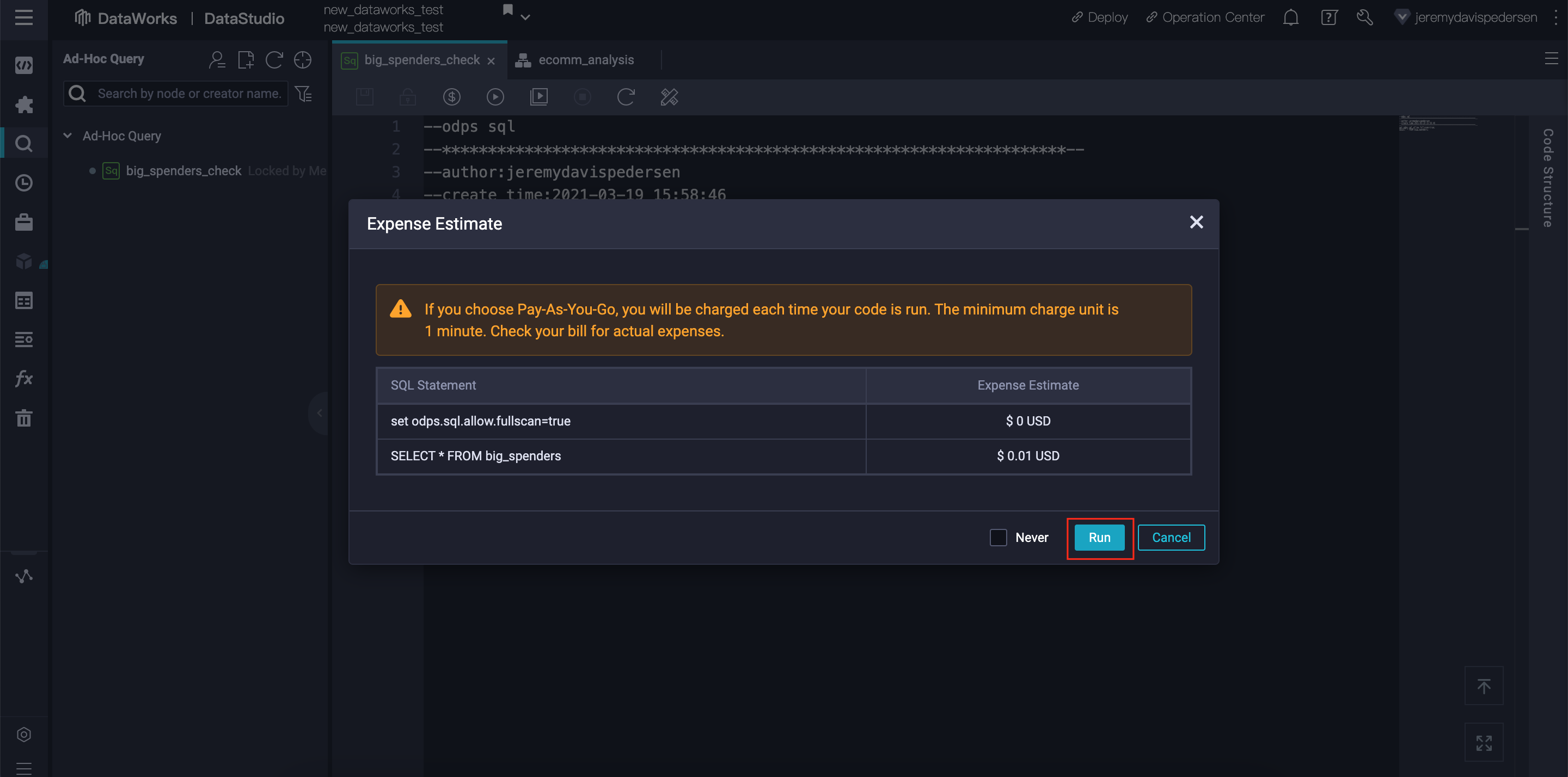

After we hit OK, DataWorks will give us a cost estimate to run our query, and will warn us if there are any MaxCompute SQL syntax errors. Our big_spenders table is small (only 10 rows) so it will cost almost nothing to run this query. In this case, DataWorks estimates a cost of USD 0.01 to run the query:

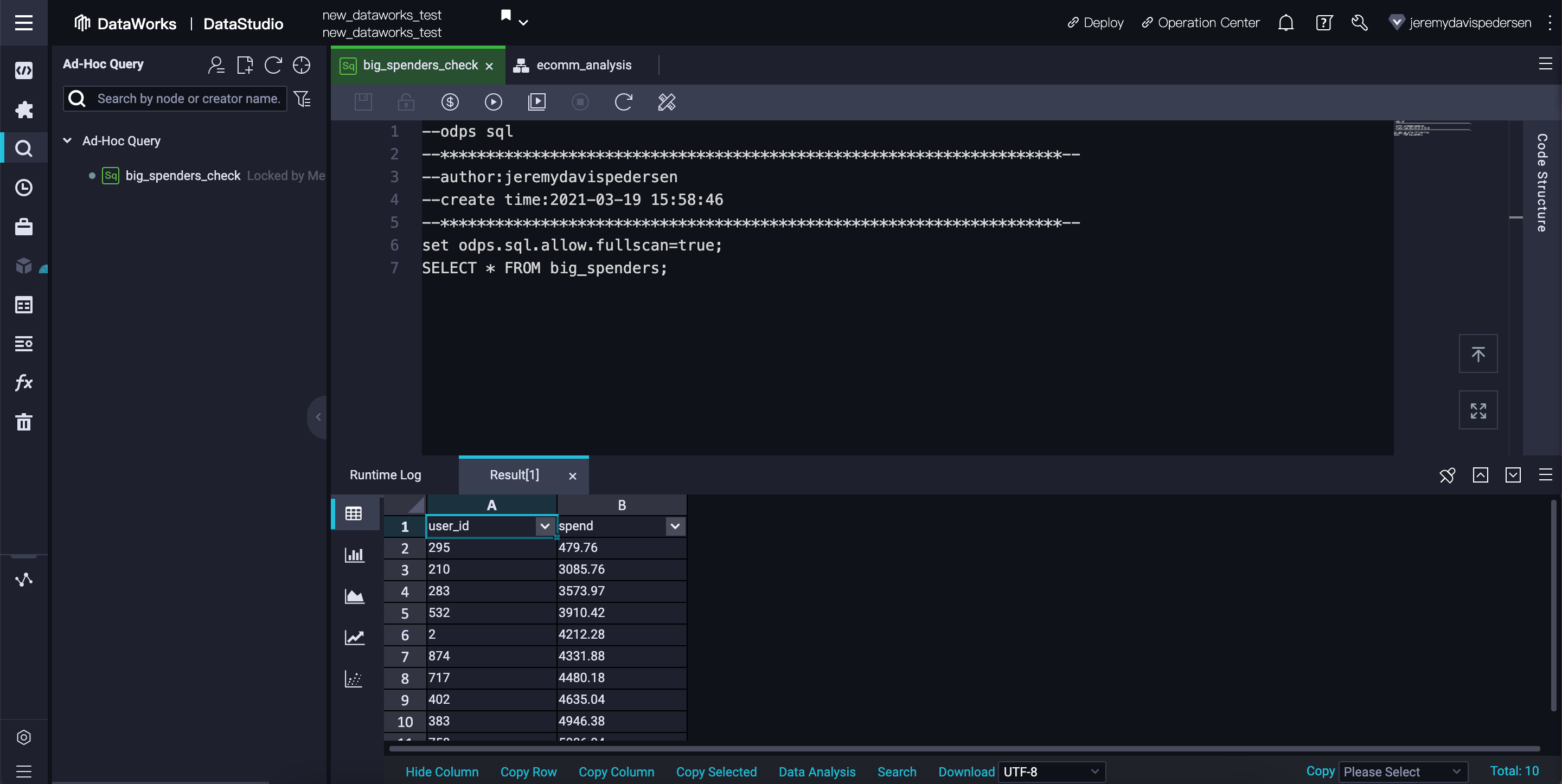

Hit Run, then wait for the MaxCompute SQL task to complete. If there are no errors, a Results tab should appear, showing the results of the SQL code we just ran:

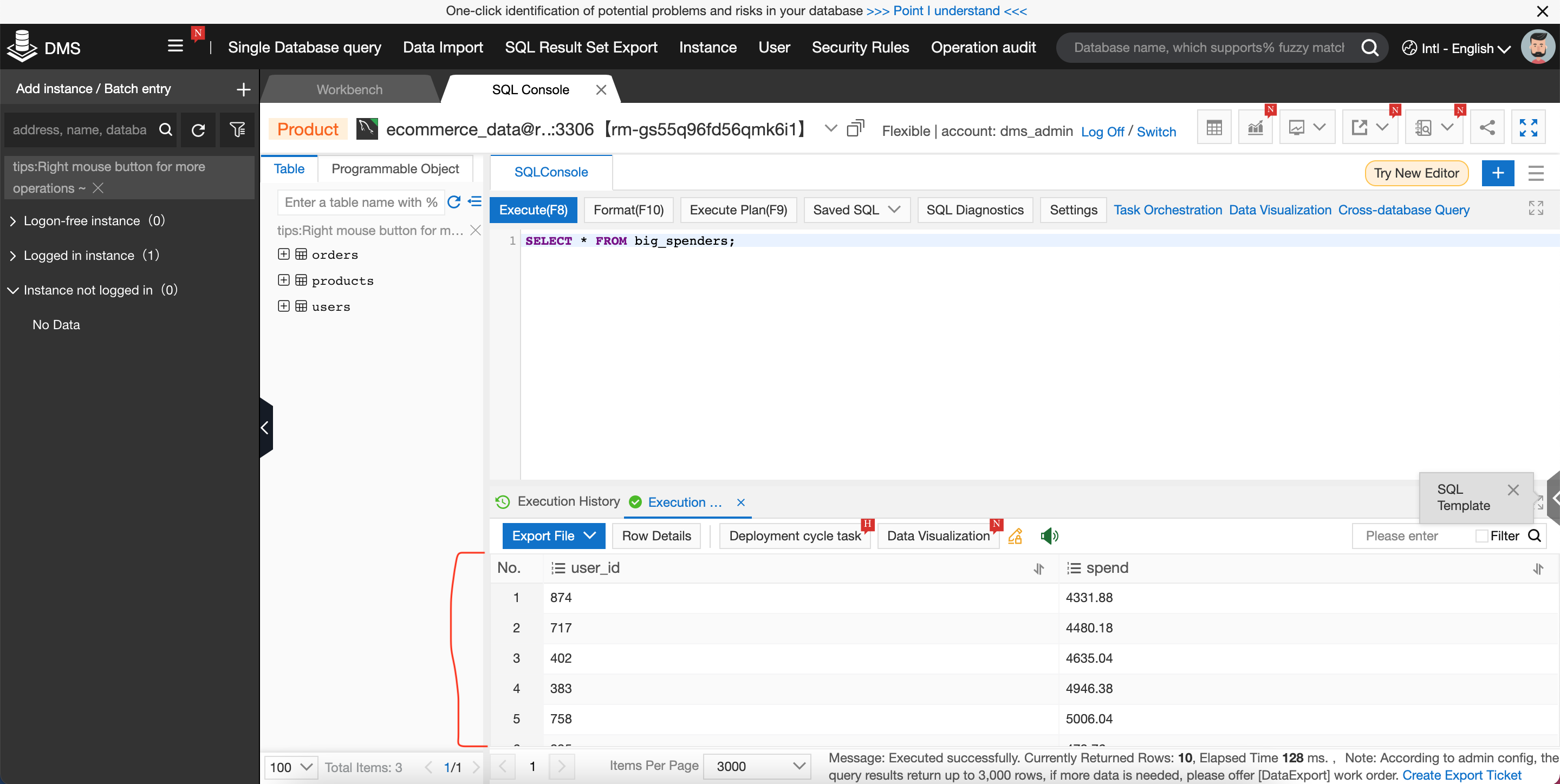

That's it! If you'd also like to confirm that the data was successfully exported to our MySQL database, you can switch over to the DMS console and run this SQL query:

SELECT * FROM big_spenders;You should see something like this:

Wondering what to try next? Here are a few suggestions:

For more helpful content, browse your way over to the Alibaba Cloud YouTube Channel or take a look at our blog on Medium

JDP - January 6, 2022

Alibaba Clouder - September 3, 2019

Alibaba Cloud MaxCompute - February 19, 2019

Alibaba Cloud MaxCompute - February 15, 2019

Kalpesh Parmar - May 6, 2026

JDP - November 11, 2021

The explanation is very detailed, God willing, I can understand this material

MaxCompute

MaxCompute

Conduct large-scale data warehousing with MaxCompute

Learn More Big Data Consulting for Data Technology Solution

Big Data Consulting for Data Technology Solution

Alibaba Cloud provides big data consulting services to help enterprises leverage advanced data technology.

Learn More Big Data Consulting Services for Retail Solution

Big Data Consulting Services for Retail Solution

Alibaba Cloud experts provide retailers with a lightweight and customized big data consulting service to help you assess your big data maturity and plan your big data journey.

Learn More Realtime Compute for Apache Flink

Realtime Compute for Apache Flink

Realtime Compute for Apache Flink offers a highly integrated platform for real-time data processing, which optimizes the computing of Apache Flink.

Learn MoreMore Posts by JDP

5428217680340790 June 10, 2021 at 7:03 am

What a great explanation! Thank you for sharing with us :)