By Chao Qian (Xixie)

Sysbench is a classic, all-in-one performance testing tool. It is most often used to stress-test databases, but it can also test CPU and disk I/O performance. Sysbench is not usually recommended for I/O testing, not because the tool is flawed, but because its test method can cause confusion: it is hard to tell how the resulting figures relate to the performance promised by cloud vendors. Cloud vendors generally recommend FIO for performance testing, because FIO results map directly onto the performance they promise.

Currently, the major Sysbench versions are 0.4.12 and 1.0. Development of Sysbench was suspended for a long time starting in 2006, and the early version 0.4.12 remained in use until 2017, which is why so many articles on the Internet cover version 0.4. The developer then released version 0.5, which fixed several bugs, and in 2017 introduced the brand new 1.0 series. The performance tests described here use the latest version.

How is Sysbench used for performance testing? Run sysbench fileio help to view the parameters, as shown in the following output:

    # /usr/local/sysbench_1/bin/sysbench fileio help
    sysbench 1.0.9 (using bundled LuaJIT 2.1.0-beta2)

    fileio options:
      --file-num=N                  number of files to create [128]
      --file-block-size=N           block size to use in all IO operations [16384]
      --file-total-size=SIZE        total size of files to create [2G]
      --file-test-mode=STRING       test mode {seqwr, seqrewr, seqrd, rndrd, rndwr, rndrw}
      --file-io-mode=STRING         file operations mode {sync,async,mmap} [sync]
      --file-async-backlog=N        number of asynchronous operatons to queue per thread [128]
      --file-extra-flags=STRING     additional flags to use on opening files {sync,dsync,direct} []
      --file-fsync-freq=N           do fsync() after this number of requests (0 - don't use fsync()) [100]
      --file-fsync-all[=on|off]     do fsync() after each write operation [off]
      --file-fsync-end[=on|off]     do fsync() at the end of test [on]
      --file-fsync-mode=STRING      which method to use for synchronization {fsync, fdatasync} [fsync]
      --file-merged-requests=N      merge at most this number of IO requests if possible (0 - don't merge) [0]
      --file-rw-ratio=N             reads/writes ratio for combined test [1.5]

Every Sysbench performance test consists of three steps, prepare, run, and cleanup, which respectively prepare the data, run the test, and delete the data. See the following example for details:

A customer uses a 2C4G VM and mounts a 120 GB SSD cloud disk for performance testing. The test command is as follows:

    cd /mnt/vdb    # Run the test from the disk to be tested. Otherwise, the system disk may be tested instead.
    sysbench fileio --file-total-size=15G --file-test-mode=rndrw --time=300 --max-requests=0 prepare
    sysbench fileio --file-total-size=15G --file-test-mode=rndrw --time=300 --max-requests=0 run
    sysbench fileio --file-total-size=15G --file-test-mode=rndrw --time=300 --max-requests=0 cleanup

The result is as follows:

File operations:

reads/s: 2183.76

writes/s: 1455.84

fsyncs/s: 4658.67

Throughput:

read, MiB/s: 34.12

written, MiB/s: 22.75

General statistics:

total time: 300.0030s

total number of events: 2489528

Latency (ms):

min: 0.00

avg: 0.12

max: 204.04

95th percentile: 0.35

sum: 298857.30

Threads fairness:

events (avg/stddev): 2489528.0000/0.00

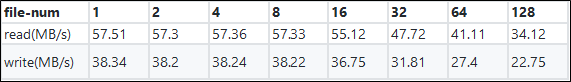

execution time (avg/stddev): 298.8573/0.00The random read/write performance seems to be poor. The IOPS value is (34.12 + 22.75) * 1024/16.384 = 3554.375, which is quite different from the declared IOPS value of 5400. Using only two cores to traverse 128 files may result in low efficiency. Therefore, file-num is tailored to perform a series of tests. The test results are as follows:
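The IOPS arithmetic above can be cross-checked with a small helper. This is only a sketch: the function names `iops_from_throughput` and `iops_from_ops` are made up for illustration, and the helper treats the block size as 16384 bytes (16 KiB). It therefore divides by 16 rather than 16.384 and returns a slightly higher figure than the 3554.375 quoted in the text.

```shell
# Hypothetical helpers (not part of Sysbench) for turning report figures into IOPS.

# iops_from_throughput READ_MIB_S WRITTEN_MIB_S BLOCK_SIZE_BYTES
# Converts MiB/s to bytes/s and divides by the block size in bytes.
iops_from_throughput() {
    awk -v r="$1" -v w="$2" -v b="$3" \
        'BEGIN { printf "%.2f\n", (r + w) * 1024 * 1024 / b }'
}

# iops_from_ops READS_PER_S WRITES_PER_S
# Adds the per-second read and write counts from "File operations".
iops_from_ops() {
    awk -v r="$1" -v w="$2" 'BEGIN { printf "%.2f\n", r + w }'
}

iops_from_throughput 34.12 22.75 16384   # prints 3639.68
iops_from_ops 2183.76 1455.84            # prints 3639.60
```

Both routes land at roughly 3640 IOPS for the default run, still well below the declared 5400, so the conclusion in the text does not change.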

Obviously, the default test method will cause performance degradation, and the performance is maximized when the number of files is set to 1.
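A series of tests like this could be scripted roughly as follows. This is a sketch under assumptions: the list of file-num values (128, 64, 16, 4, 1) is hypothetical, since the values actually tested appear only in the figure, and the `DRY_RUN` switch and function names are illustrative. Every step repeats the same `--file-num` value so that prepare, run, and cleanup operate on the same set of files.

```shell
# Sketch: repeat the fileio test for several --file-num values.
# Run it from the disk under test (for example, after cd /mnt/vdb).
# DRY_RUN=1 (the default here) only prints the commands; set DRY_RUN=0 to execute them.
DRY_RUN="${DRY_RUN:-1}"
COMMON="--file-total-size=15G --file-test-mode=rndrw --time=300 --max-requests=0"

run_step() {
    # $1 = file-num value, $2 = prepare | run | cleanup
    cmd="sysbench fileio $COMMON --file-num=$1 $2"
    if [ "$DRY_RUN" = "1" ]; then
        echo "$cmd"
    else
        $cmd    # intentionally unquoted so the string splits into arguments
    fi
}

sweep_file_num() {
    for n in "$@"; do
        run_step "$n" prepare
        run_step "$n" run
        run_step "$n" cleanup
    done
}

# Hypothetical list of values; adjust to the series you want to compare.
sweep_file_num 128 64 16 4 1
```

Printing the commands first makes it easy to confirm the options before committing to a run that takes 300 seconds per value.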

The only difference between file-num = 128 and file-num = 1 is the number of test files. The total file size remains 15 GB and the files are still read and written randomly, so in theory the performance should be stable. However, reading and writing data across many files causes more interrupts and more context switching overhead. The vmstat command is used for verification.
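One way to collect comparable numbers is to let vmstat sample once per second while the run step is in progress and then average the in and cs columns. The helper below is a sketch: `vmstat_avg_in_cs` is a made-up name, and it assumes the usual procps vmstat layout, in which the header row starts with `r` and names the columns. It skips the first sample because vmstat's first report contains averages since boot rather than current activity.

```shell
# Hypothetical helper: average the "in" (interrupts/s) and "cs" (context switches/s)
# columns of vmstat output read from standard input.
vmstat_avg_in_cs() {
    awk '
        # Header row: find the columns by name instead of hard-coding positions.
        $1 == "r" {
            for (i = 1; i <= NF; i++) {
                if ($i == "in") ci = i
                if ($i == "cs") cc = i
            }
            next
        }
        # Data rows start with a number; ignore everything before the first header.
        ci && $1 ~ /^[0-9]+$/ {
            if (++row == 1) next          # first report = averages since boot
            in_sum += $ci; cs_sum += $cc; n++
        }
        END {
            if (n) printf "in=%.0f cs=%.0f samples=%d\n", in_sum / n, cs_sum / n, n
        }
    '
}

# Usage while the sysbench run step is active in another terminal:
#   vmstat 1 60 | vmstat_avg_in_cs
```

Running it once with file-num = 128 and once with file-num = 1 gives two single-line summaries that can be compared directly.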

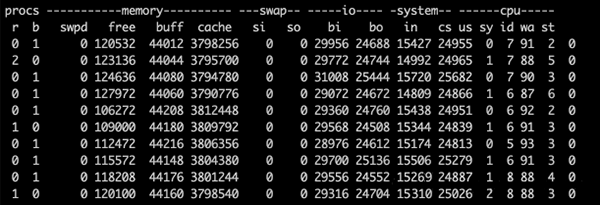

The vmstat command output for file-num = 128 is as follows:

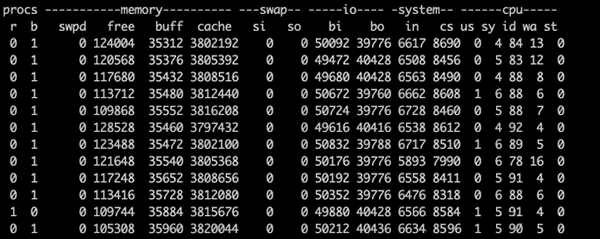

The vmstat command output for file-num = 1 is as follows:

The preceding figures show that the context switch (cs) values are only about 8500 when file-num is 1, compared with about 24800 when file-num is 128. In addition, the in values (the number of interrupts) are much smaller when file-num is 1. Throughput increases significantly because the interrupt and context switching overhead is reduced.

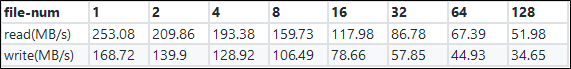

In another test, a disk with the same size is mounted to an 8C VM and eight threads are used for testing. The following data is obtained:

The performance is also maximized when file-num is set to 1, for the same reason.

When a single process is used and file-num is set to 1, the IOPS value is (57.51 + 38.34) * 1024/16.384 = 5990.625, which seems to exceed the declared IOPS limit. How can this IOPS value be generated using FIO?

    fio -direct=1 -iodepth=128 -rw=randrw -ioengine=libaio -bs=4k -size=1G -numjobs=1 -runtime=1000 -group_reporting -filename=iotest -name=randrw_test

By reading the source code, we can find the following differences:

The previous section argues that test data inconsistency may result from the interference of the operating system and differences in the I/O read/write methods. According to the source code, the developer of Sysbench advocates libaio, and employs a lot of macro definitions in the code. See the following example:

    /* Extracted code for asynchronous data writing */
    #ifdef HAVE_LIBAIO
      else if (file_io_mode == FILE_IO_MODE_ASYNC)
      {
        /* Use asynchronous write */
        io_prep_pwrite(&iocb, fd, buf, count, offset);

        if (file_submit_or_wait(&iocb, FILE_OP_TYPE_WRITE, count, thread_id))
          return 0;

        return count;
      }
    #endif

This macro is enabled by default.

After this macro is enabled, if you run sysbench fileio help, the message --file-async-backlog=N number of asynchronous operations to queue per thread [128] appears, indicating that the HAVE_LIBAIO macro has taken effect.
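The check described above can be scripted. The following sketch relies only on the article's observation that the `--file-async-backlog` option shows up in the help text when libaio support is compiled in. The function name `help_mentions_async` is hypothetical.

```shell
# Hypothetical helper: reads help text on standard input and succeeds (exit status 0)
# if it lists the --file-async-backlog option.
help_mentions_async() {
    grep -q -- '--file-async-backlog'
}

# Usage, assuming sysbench is on the PATH:
#   if sysbench fileio help | help_mentions_async; then
#       echo "async (libaio) options are available"
#   else
#       echo "async (libaio) options are not listed"
#   fi
```

Doing this check up front avoids discovering only after a long prepare step that the async I/O mode is not available in a particular build.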

Since Sysbench uses libaio by default, the entire test method must be adjusted as follows:

# --file-extra-flags=direct indicates that the file reading and writing mode is changed to direct.

# --file-io-mode=async is used to ensure that libaio takes effect.

# --file-fsync-freq=0 indicates that the fsync operation is not required.

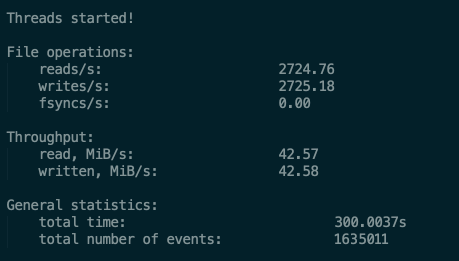

sysbench fileio --file-total-size=15G --file-test-mode=rndrw --time=300 --max-requests=0 --file-io-mode=async --file-extra-flags=direct --file-num=1 --file-rw-ratio=1 --file-fsync-freq=0 runThe test result is as follows:

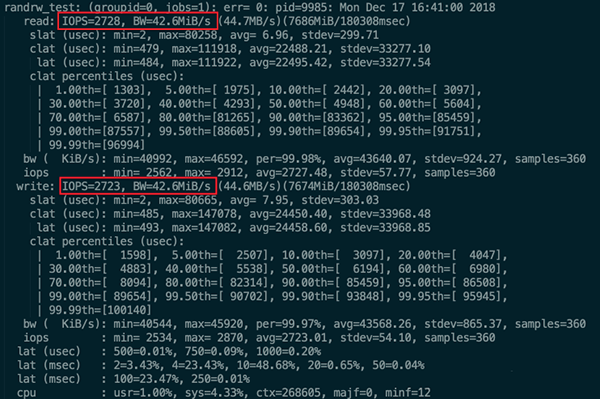

The FIO command is also adjusted to change bs to 16k. The other values remain unchanged. The upper limit 5400 is reached as well. The test result is as follows:
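For reference, the adjusted FIO invocation can be derived from the earlier command by changing only the block size. The wrapper below is a sketch: the function name `fio_randrw_cmd` is made up, and every option other than `-bs` is copied from the FIO command shown earlier in this article. It prints the command line so that it can be inspected before being run.

```shell
# Hypothetical helper: print the FIO command line for a given block size (default 16k,
# matching Sysbench's default --file-block-size of 16384 bytes).
fio_randrw_cmd() {
    bs="${1:-16k}"
    printf '%s\n' "fio -direct=1 -iodepth=128 -rw=randrw -ioengine=libaio -bs=${bs} -size=1G -numjobs=1 -runtime=1000 -group_reporting -filename=iotest -name=randrw_test"
}

fio_randrw_cmd 16k               # show the command first
# eval "$(fio_randrw_cmd 16k)"   # uncomment to run it from the disk under test
```

Using the same 16 KiB block size on both sides makes the two tools issue requests of the same size, so their IOPS figures can be compared like for like.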

We can see that the Sysbench test result is the same as the FIO test result.

However, I would still recommend FIO for I/O performance testing.


5312195358092749 July 21, 2020 at 7:05 pm

Hi, I am confused. Shouldn't you use File operations number to run the calculation for IOPS? Looks like you are using throughput (34.12 22.75) * 1024/16.384? also what is the 16.384 here?