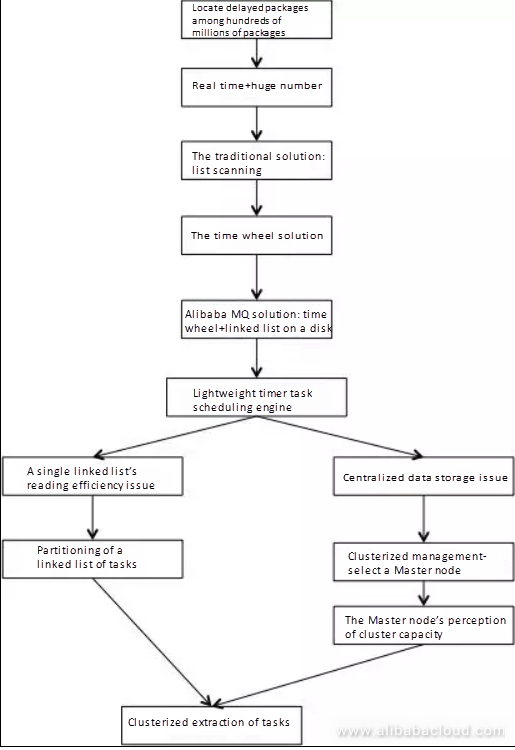

Today, with China's quick development in the field of logistics, the average number of packages delivered per day exceeds 100 million. How do we go about ensuring that these 100 million packages get delivered to their corresponding receivers within a reasonable period? What is an acceptable manner in which we can handle those packages which are failed to be delivered within a reasonable period before feedback received from the recipients becomes a vital issue demanding a quick solution?

An issue has to be discovered first before it can be solved! To reveal the problems in an effective and timely way requires real-time monitoring on every phase involved in the transportation of those 100 million packages; to enable that, a real-time and regular scheduling system is needed. In this article, we will discuss the design of a lightweight timer task scheduling engine with Alibaba Cloud Message Queue.

The common practice for the scenario mentioned above is writing the timer tasks into a database, querying the tasks at a scheduled time with a thread when said tasks are near expiration and then executing logics related to the tasks. The advantage of this solution is ease of implementation; this is especially true for scenarios featuring single machine or small-scale businesses. But its disadvantage is also obvious: more complexity gets introduced for scenarios featuring distributed and large-scale business.

First, you need to design a set of sound sharding logics and cluster task loading logic. Even once these logics are enabled, too much pressure on a single node in a cluster can be a cause for some scenarios due to timer tasks scheduled at a centralized time. Additionally, a reasonably estimated capacity is required, or subsequent storage resizing will be complicated.

We found that the critical point of this scenario is the coupling of the time of the timer task and the storage of the task. If the time and the storage can get decoupled, the storage of the task will become stateless, which will result in a much wider range for storage selection and much lower storage constraint!

The following part of this article will describe how to couple the time of the timer task and the task itself with the idea of timing wheel to enable a significant improvement in design and performance.

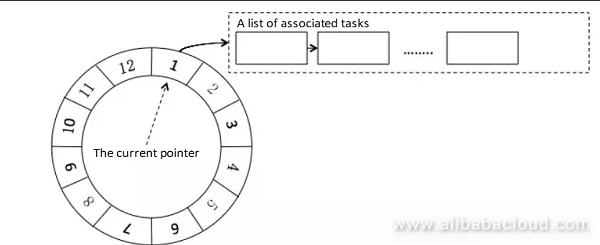

The timing wheel solution introduces the concept of a real-life clock into the design of software, the main idea of which is to define a clock cycle (e.g., 12 hours) and the step length (e.g., a step per second). Every time the pointer goes one step, the task mounted on the corresponding degree of timescale will be gotten and executed. The whole structure is as shown in figure 1.

Figure 1. Timing Wheel Algorithm

We can see from the above figure, a component similar to a clock does the calculation of time, and a task gets associated with a specific timer task on the time scale through a pointer or a reference. In this way, the time and the storage of a timer task can get decoupled. The implementation of a clock component is not difficult, the key point of the timing wheel solution is the way in which to store the task data.

We've found that the modification to the Alibaba Message Queue (MQ) provides a great solution to this problem. The old solution checks if a message is expired based on regular polling, which is limited for discrete time. For this reason, the former solution can only support a few latency levels of scheduled messages. The Alibaba MQ team has modified the old solution to a new one which is based on timing wheel+linked list to solve this problem. This new solution can enable discrete scheduled messages; it can also satisfy the complexity in the management of individual lists of tasks for individual scale degrees required for the traditional timing wheel.

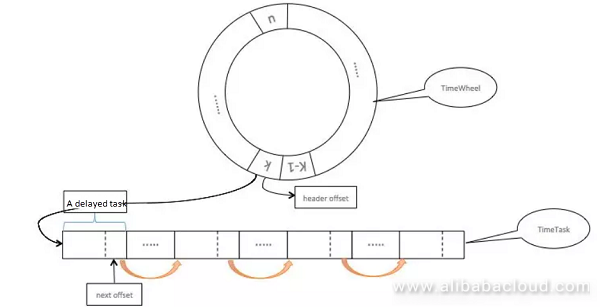

Figure 2. Alibaba MQ Scheduled Message Solution

Figure 2 provides a simple and clear description about the solution's key points; the idea is to write a list of tasks into the disk, where the list of tasks are linked together one by one in the way of a linked list. For this, each task should have a disk offset pointing to the next task, and the whole linked list of tasks can be obtained by simply getting the header of the linked list. Thus, for the solution, there is no need for an individual degree on the timing wheel to store the whole list of tasks, it can only store the header of the list so that the pressure of storage can be released.

This solution will be acceptable for those systems where the middleware gets targeted in this way, but for systems targeting ordinary apps, the requirement for deployment seems too high, because you need a fixed disk. Under the current trend of containerization and dynamic capacity management, an ordinary app will have to depend on a fixed disk; this introduces extra complexity to the operation, maintenance and deployment of a system. As a result, this article mainly focuses on the lightweight timer task scheduling engine which gets built upon that basis.

The timer task scheduling engine introduces the core idea of timing wheel solution while eliminating the necessity of system's dependency on the disk. As the data is not to be stored directly on a disk, it will have to depend on specialized storage services for storage. For this, the store at the lower level gets replaced with a structure available for applying the storage service:

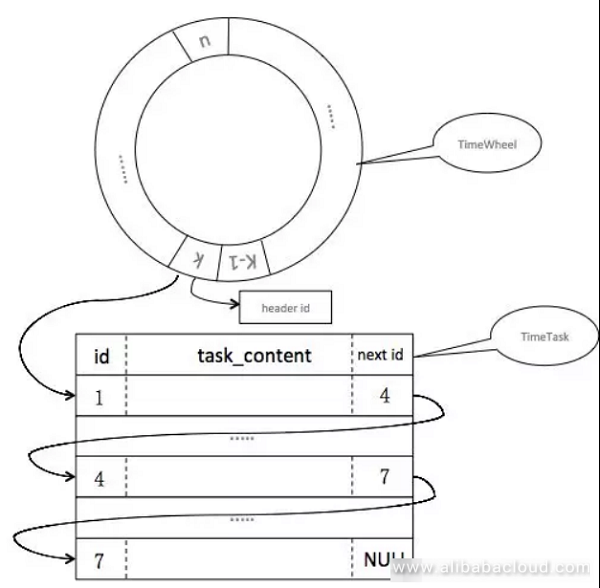

Figure 3. The Timing wheel+Linked List Solution After Adjustment

The task is designed into a structured list, and the offset on the list gets replaced with a task ID (figure 2 shows the offset of a file), through which the whole list of tasks are linked together one by one, and only the ID of the linked list header gets associated to the timing wheel.

Here the change to the MQ solution is done by merely replacing the offset of a disk with an ID; this decouples the dependency on the disk. Although the solution seems applicable until now, two new problems have arisen:

Problem 1: As a single linked list cannot get extracted concurrently, it will impact the extraction efficiency, which can lead to severe latency in timer task processing when there are a large number of timer tasks at a specific time.

Problem 2: As the tasks do not get stored in the native disk (meaning the timer tasks for the entire cluster gets stored in a centralized way, and each node in the cluster has its timing wheel), in what way will each node restore after being restarted? How to automate the management of cluster resizing and reduction?

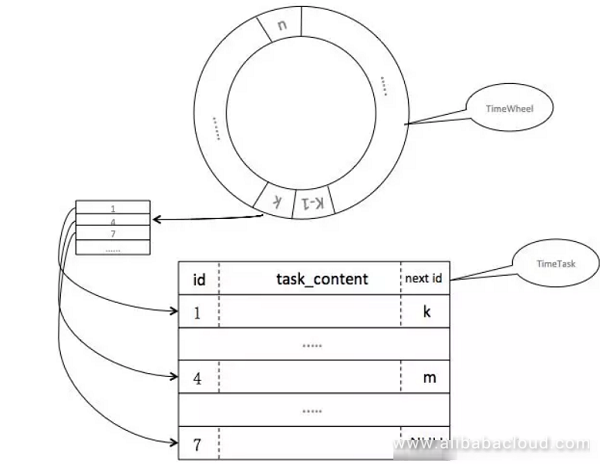

The advantage of a linked list is that, instead of storing the entire list of tasks, you store a simple ID, which reduces the consumption of storage but also introduces a new issue. It is well-known that a linked list is writing-friendly, but the reading efficiency is not very high; as a result, when a long linked list of tasks is to be mounted at a specific time, the form of a linked list will not allow the improvement in reading efficiency through concurrency.

How to improve the efficiency of extraction from task queue at a specific time? Here we use partitioning to split a single linked list for a specific time into multiple linked lists; in this way, the extraction of tasks for a specific time can get performed concurrently based on the size of linked list collection; this can accelerate the extraction of all tasks for that time. So the solution is adjusted as shown in Figure 4:

Figure 4. Partitioning-Based Design

After the issues about timer task storage and task extraction efficiency of the single linked list are solved, it seems that the entire solution is ready for use; however, there is still another problem which is considerable, that is, how to restore a node after its cluster gets deployed and it gets restarted?

For example, we need to know the degree the timing wheel is at before the restart, and the data of the linked list of tasks on the timing wheel scale during restart, which is from now on collectively referred to as the timing wheel metadata. If a machine gets restarted after a task is written into a disk, it can get restored by loading the timing wheel metadata of the current node from an ephemeral disk. If this is implemented not by using a disk, problems will arise such as: after a machine gets restarted, how can it get the timing wheel metadata before the restart?

By solving the previous issue about timer task storage, we can know that the storage of metadata can also get enabled with specialized storage services. However, as nodes in a cluster are stateless, a node will not know how to read its metadata from a storage service (i.e., the query conditions), so here is the second problem: how can a cluster node get its metadata?

It might be the case that the first solution you come up with is to associate the metadata with a node IP or a mac address, but under the current condition of dynamic capacity scheduling, it will be costly for a machine to own a fixed IP, as you are not sure on which device your app will run. Therefore, we will have to depend on the logic environment for the partitioning of the timing wheel of each node as the physical environment is unavailable for this. Then, by assigning to each node a unique logic ID within a cluster, each machine can get corresponding timing wheel data through the ID it is assigned. This introduces the third problem: who will assign this ID? The Master node will.

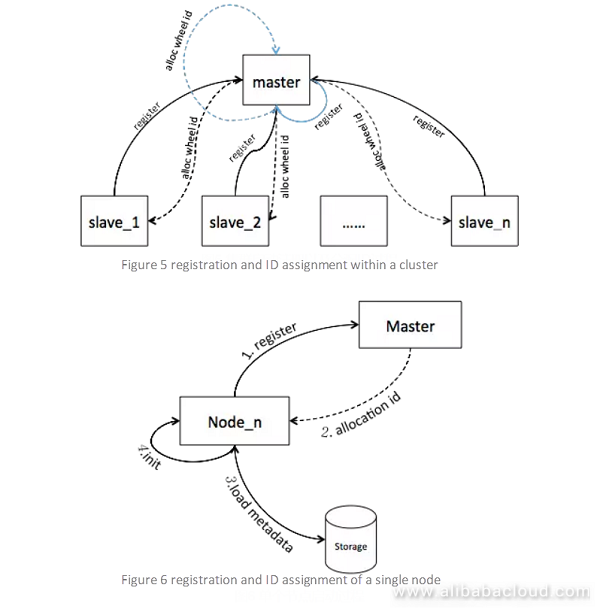

A Master node will get selected from the cluster, and all other nodes will register themselves into the Master node. After this, the Master node will assign an ID to each of these nodes, who can then get its timing wheel metadata from the storage service by its assigned ID and initialize its timing wheel. Figure 5 and Figure 6 illustrate for that process:

Figure 5 shows the process of node registration and obtaining the ID, note that, the Master node itself is also registered and assigned an ID; this is because—for an ordinary app—there is no substantial difference between a Master node or a non-Master node; a Master node will also participate in calculation throughout a given piece of business, but it also takes on the management of the cluster as an extra job.

Once we have introduced the Master node and knew that all nodes would register themselves into the Master node (including the Master node itself), we can then build a mechanism for clustered management based on the concept of a master node. For an ordinary app, the resizing and reduction of a cluster are quite normal operations; under the conditions of dynamic scheduling these operations are even unaware of the app owner; therefore, will the cluster itself be able to know when it has been resized and reduced? The answer is yes, as there is a Master node, which can perceive the statuses of other nodes within the cluster. For example, the Master node perceives a change in cluster's capacity and adjusts cluster's load.

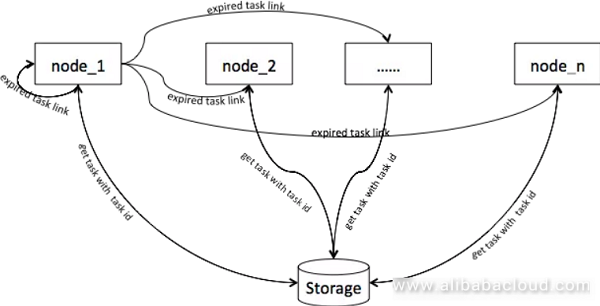

We have discussed previously the solution that splits a single linked list associated with a specific time on the timing wheel into multiple linked lists to improve the concurrent extraction of tasks, and we have also formulated a solution for clustered management. If a node only relies on itself for task extraction, even if the extraction gets done concurrently, the node can experience too high pressure when there are a vast number of tasks to be done for the current node at a specific time.

To consume the cluster's computing capacity in a more reasonable way, the solution can be further optimized based on the above mentioned clustered management i.e., distribute the extracted linked list of tasks to other nodes within the same cluster through soft loading. As the data of tasks gets stored in a centralized way, any of other nodes can extract all tasks of that linked list by simply getting the ID of the header of that linked list; in this way, the pressure on a single node also gets distributed within the whole cluster. Figure 7 shows the interactions during this process:

Figure 7. Extend the Extraction of Tasks to the Entire Cluster

We have discussed the solution in a progressed way and detailed the issues arising at each phase; some points may need further elaboration. The solution discussed in this article can be summarized with the flowchart below.

Figure 8. Flowchart of Alibaba Cloud Lightweight Timer Task Scheduling Engine

Read similar articles and learn more about Alibaba Cloud's products and solutions at https://www.alibabacloud.com/blog

Open Source E-Commerce Platforms on Alibaba Cloud's Simple Application Server: OpenCart

2,593 posts | 794 followers

FollowAlibaba Cloud Native Community - May 25, 2026

ApsaraDB - August 13, 2024

Apache Flink Community China - September 27, 2020

Alibaba Cloud Native Community - October 17, 2024

Alibaba Cloud Native Community - July 26, 2022

Apache Flink Community China - September 27, 2020

2,593 posts | 794 followers

Follow ECS(Elastic Compute Service)

ECS(Elastic Compute Service)

Elastic and secure virtual cloud servers to cater all your cloud hosting needs.

Learn MoreLearn More

Learn More

More Posts by Alibaba Clouder