By Jiyang Zhang (Cuanye)

PolarDB-X, also known as PolarDB for Xscale, is a high-performance cloud-native distributed database service developed by Alibaba Cloud. The Lizard transaction system plays a vital role as the core technology module in the storage engine of PolarDB for Xscale. This article provides a detailed explanation of the Lizard transaction system in two parts. The first part focuses on the Lizard SCN standalone transaction system, while the second part discusses the Lizard GCN distributed transaction system.

PolarDB-X distributed storage engine relies on a distributed transaction system to achieve the Atomicity, Consistency, Isolation, and Durability (ACID) properties of global distributed transactions and ecosystem-wide consistency. However, the InnoDB transaction system in the MySQL community version has several disadvantages. For more details, please refer to About Database Kernel | Transaction System of PolarDB-X Storage Engine - Part1. In this article, we will focus on the introduction of the evolved distributed transaction system based on the SCN standalone transaction system.

The ability to fully support transaction ACID is a fundamental feature of enterprise-level distributed databases.

Currently, the mainstream distributed transaction models include:

Percolator is a distributed transaction processing model proposed by Google in 2010. Its design goal is to achieve efficient transaction processing in large-scale distributed systems. Percolator is an optimistic transaction model where conflicts between writes are detected only during transaction commitment. Visibility and conflict detection rely on the start timestamp and commit timestamp of the transaction. The Percolator model, along with subsequent models like Omid, is known for its easy-to-understand and engineer-friendly implementation. It is widely used in mainstream distributed databases as an efficient and direct distributed transaction processing model. Additionally, the transaction process data in the Percolator model is stored in memory, limiting the transaction size based on available memory resources.

The Calvin model was proposed by Brown in 2012 to provide high-performance, highly available, and consistent distributed transaction processing. The core idea of the Calvin model is to use a global scheduler to determine the execution order of sub-transactions in each scheduling node in advance, avoiding the overhead of lock resources, cache resources, and other resources in concurrent transactions at the root. To achieve this, the Calvin model introduces a data structure called a "transaction flow graph" that describes the execution order and dependencies of transactions. The transaction flow graph is a directed acyclic graph where nodes represent sub-transactions and edges represent dependencies between sub-transactions. Through the transaction flow graph, the Calvin model achieves consistency and atomicity of transactions in a distributed environment.

While the Calvin model offers many advantages, it also has certain disadvantages and challenges. In the Calvin model, each transaction's execution is independent and executed in parallel in a distributed environment. This parallel execution can lead to data consistency issues, such as reading outdated or inconsistent data. Although the Calvin model provides mechanisms like version control and conflict detection to address these problems, the risk of data consistency cannot be completely eliminated. Additionally, the Calvin model requires a global scheduler to coordinate and manage the execution of all transactions. The global scheduler involves various factors, such as transaction dependencies, concurrency control, and load balancing, which increase the complexity of scheduling. Furthermore, the global scheduler can potentially become a bottleneck in the system, limiting the scalability and extensibility of the entire system.

The XA model is a standard interface specification for managing distributed transactions. It defines the protocols and operations needed to carry out transactions in a distributed environment. The XA model gets its name from X/Open (now The Open Group), an organization that developed the XA interface standard to enable collaboration between different transaction managers and resource managers, ensuring data consistency.

In the XA model, the transaction manager is responsible for coordinating and managing transaction execution, while the resource manager is responsible for managing and operating specific resources. By defining a set of standard interfaces and operations, the XA model enables collaboration between transaction managers and resource managers.

At the core of the XA model is the two-phase commit (2PC) protocol. In the 2PC protocol, the transaction manager and the resource manager communicate through a series of messages to ensure that all participating resource managers perform the corresponding operations within a single transaction, either committing or rolling back all operations. The transaction manager acts as the coordinator, initiating and managing the execution of the 2PC protocol.

To implement the XA model, both the transaction manager and the resource manager must adhere to the XA interface standard. The transaction manager needs to implement operations such as starting, committing, and rolling back transactions, as well as coordinating the execution of the 2PC protocol. The resource manager needs to implement operations related to participating in the 2PC protocol, such as prepare, commit, and rollback.

As an enterprise-level distributed database, PolarDB-X distributes data to different storage nodes, making cross-node modification and access a common practice. This brings challenges in ensuring the ACID properties of the database and achieving transparent distribution.

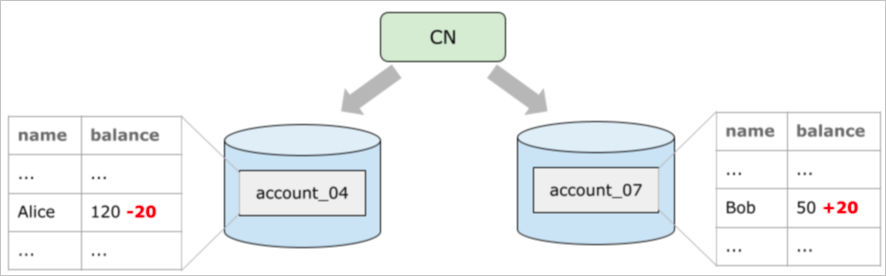

The following figure shows a typical transcation model. Standalone transaction systems are unable to ensure the atomicity of transactions and the consistency of cross-node queries.

In the design of a business model, the corresponding business access usually involves multiple dimensions. Traditional local indexes cannot be used for multi-dimensional routing. Therefore, global secondary indexes are introduced to cope with multi-dimensional business access requests to achieve efficient multi-dimensional routing access without affecting the business. How to maintain global secondary indexes across nodes also requires a guarantee from distributed transactions.

To ensure that PolarDB-X maintains ACID properties and strong consistency as a database, its distributed transaction model utilizes the two-phase commit (2PC) protocol. According to the strict definition of the XA specification:

The CN node is responsible for transaction management and acts as the coordinator. It ensures the persistence of distributed transaction states and facilitates the advancement and flow of distributed transactions.

The DN node serves as a transaction participant and is responsible for accepting changes in user data and transaction status.

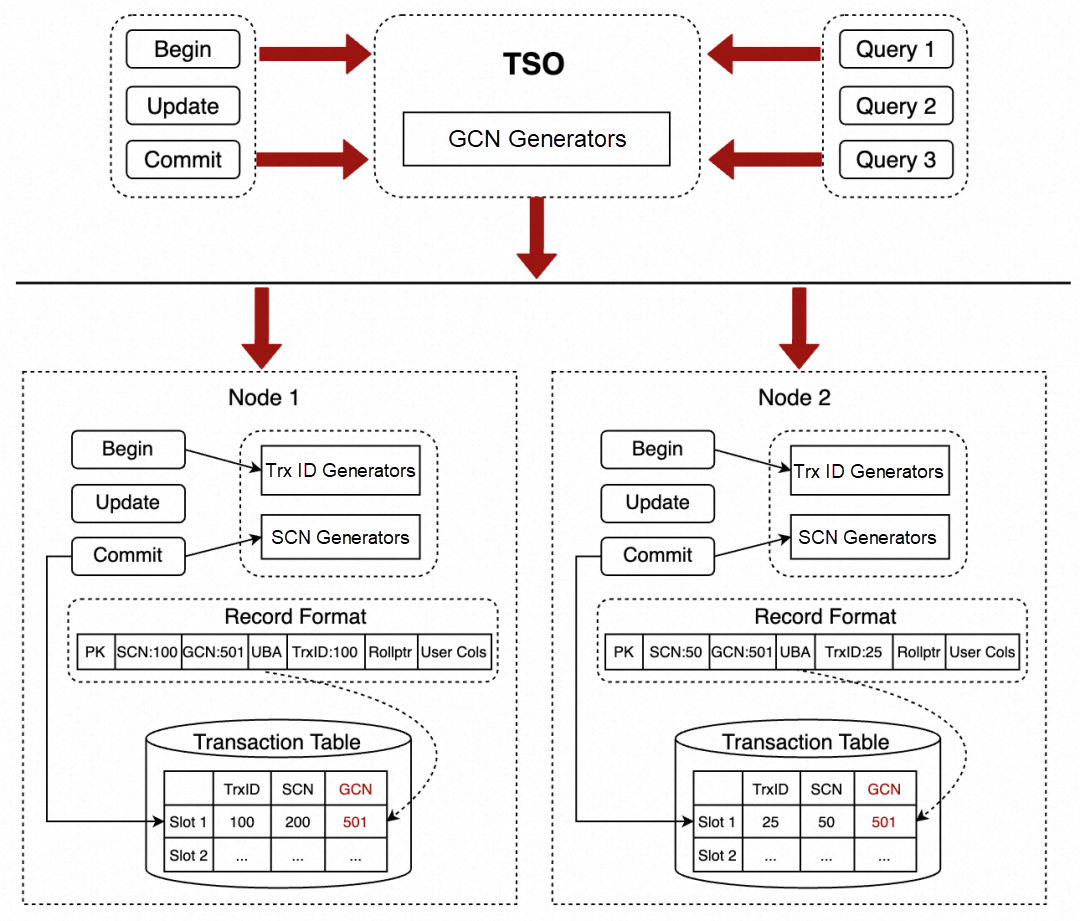

To implement the strict 2PC protocol, the PolarDB distributed storage engine implements the GCN (Global Commit Number) distributed transaction system based on the SCN standalone transaction system. The following figure shows the architecture:

The transaction slot of the Lizard transaction system extends a field on the original basis to store a GCN, which is derived from the TSO initiated number. At this point, cross-node transactions will use GCN instead of SCN to globally sequence distributed transactions.

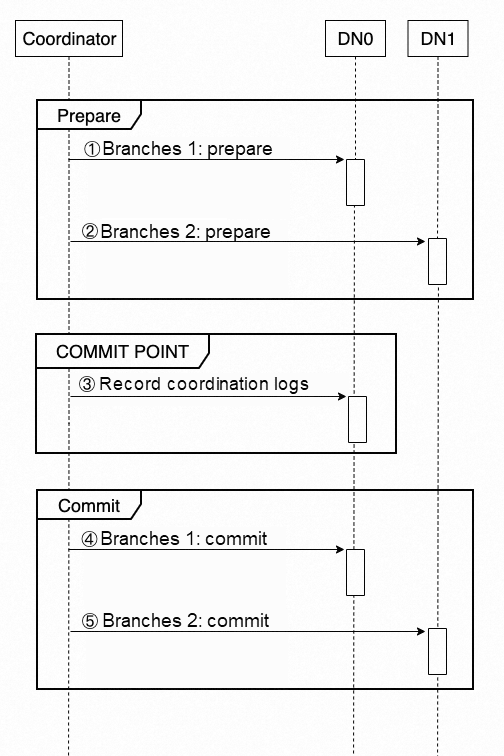

Cross-node distributed transactions are implemented in strict accordance with the standards of the 2PC protocol. According to the interface defined by the XA Spec, the CN node uses the following syntax to operate the DN node.

XA {START|BEGIN} xid

XA END xid

XA PREPARE xid

XA COMMIT xid [ONE PHASE] $GCN

XA ROLLBACK xid $GCN

XA RECOVERAt the time of XA COMMIT or ROLLBACK, the CN node obtains a TSO from GMS as the external commit number, which is GCN, and transmits it to all DN participants for persistence.

Through the 2PC protocol, PolarDB-X strictly guarantees the atomicity of cross-node transactions of users.

During cross-node access, the CN node obtains a TSO as the MVCC view for this query and sends it to the DN storage node through an AS OF query. The syntax is as follows:

SELECT ... FROM tablename

AS OF GCN expr;In the GCN transaction system, because distributed transactions are ordered by GCN, the visibility comparison of queries is also converted from standalone SCN comparison to GCN comparison to ensure strong global consistency. Due to external ordering and the two-phase commit process, during visibility comparison, PolarDB-X follows a waiting policy in the Prepare state when decisions cannot be made. The size of the GCN is compared after the waiting time.

In distributed databases, the 2PC protocol is a classic protocol for distributed transaction processing. The protocol consists of two phases: the prepare phase and the commit phase. During the prepare phase, each node sends back the data status to the coordinator node, which then decides whether to commit based on the status of each node. In the commit phase, the coordinator node notifies each node to either commit or rollback the transaction.

While the 2PC protocol ensures data consistency, it poses significant performance challenges. A common issue is the high number of interactions between nodes during the 2PC process, involving request initiation and response waiting. The interactions among multiple nodes are far more extensive than those in a standalone database, resulting in significant latency and limiting the performance and scalability of transaction commits in distributed databases. This is particularly impactful for OLTP businesses, where most transactions are short and fast, making commit performance crucial for the entire distributed database.

Furthermore, the 2PC protocol needs to ensure fault tolerance since nodes and links may experience abnormalities. Therefore, the coordinator typically records coordination logs to ensure the consistency of the final transaction state across nodes.

As seen, the coordinator and coordination logs are key elements in the entire 2PC protocol process. Core issues in distributed database design include reducing interactions between nodes in the 2PC protocol, ensuring the persistence of coordination logs, managing coordination log clean-up, and ensuring the high availability of coordination logs.

In the Lizard transaction system, these problems are properly handled. The core idea is that the coordination logs are sunk into the storage engine. When a distributed transaction occurs, one of the participants is selected as the main branch. The main branch is responsible for persistently storing coordination logs and providing backchecking capability of the coordination logs. When a transaction is started, the main branch creates a transaction slot. When the transaction is committed or rolled back, the related transaction state is persisted to the transaction slot. When other nodes recover from a fault, they must first find the main branch and drive the transactions of this branch to commit or roll back based on the transaction status of the main branch.

In addition, transaction slots are reserved by the Lizard transaction system for some time until transaction state information is no longer needed. Then, the transaction slots are automatically cleaned up and recycled by the database's cleanup system. In other words, commit and rollback are not the final state of the transaction. When the transaction slot information that represents the existence of the transaction is cleaned, the transaction finally enters the Forget state.

At the same time, multiple replicas of the coordination logs are implemented through the X-Paxos protocol, which completely ensures the reliability of the coordination logs.

During fault recovery, the coordination logs play a crucial role. The Lizard transaction system provides coordination log backchecking capabilities to assist in fault recovery.

| Status | Explain | Action |

| ATTACHED | A session is processing this XA transaction. | Wait for a retry. |

| DETACHED | No session is processing this XA transaction. | Attempt to attach this XA transaction for commit or rollback. |

| COMMIT | Committed. | Commit other branches. |

| ROLLBACK | Rolled back. | Roll back other branches. |

| FORGET | The transaction information is cleaned up. | Transaction completed. |

It is worth noting that XID is the unique identifier of the transaction in the XA distributed transaction model and is an externally specified transaction identification number. Therefore, it is different from the trx_id of InnoDB and the GTID of MySQL. It is difficult for native MySQL to backcheck transaction status information by using XID because a large number of I/O operations may be triggered, affecting online services. The Lizard transaction system does not bring this concern. The reasons are as follows:

1. Optimistic search: In the prepare phase, the hint information of the transaction slot address is returned to the CN node. The hint information is used to optimistically search for the transaction slot. In most cases, the relevant transaction information is found at the cost of one I/O at most.

2. Pessimistic search: The Lizard transaction system binds the mapping relationship between XIDs and transaction slot addresses. Even in the worst case, you only need to search for a group of transaction slots instead of performing a full search for transaction slots. Moreover, to prevent HA from falling back to full search, this mapping relationship will be broadcast to all replicas by the X-Paxos protocol.

The sinking of coordination logs to the storage engine shows significant improvements over traditional 2PC commits:

Distributed transactions require more costs than standalone transactions:

Currently, a distributed transaction needs a complete 2PC commit, and the synchronization overhead is 3 RT +2 BINLOG. By contrast, the synchronization overhead of a standalone transaction is only 1 RT +1 BINLOG.

If a data modification only involves a single shard, a one-phase commit can be used during the commit process to save the overhead caused by the 2PC commit. Such a transaction, referred to as a single-shard (write) transaction, is supported in PolarDB-X.

Similarly, if a data query only involves a single shard, it can be directly executed on the DN node without interacting with TSO. These transactions are also known as single-shard (read) transactions.

A single-shard transaction may appear similar to a standalone local transaction, but their internal logic is completely different. The crucial difference lies in the fact that the visibility of a single-shard transaction depends on the GCN, while the visibility of a standalone local transaction is determined solely by the SCN.

The commit number of a single-shard transaction cannot be obtained from TSO. Thus, determining the commit number of a single-shard transaction becomes a crucial issue. Intuitively, a single-shard transaction occurs after the committed distributed transaction on the current node and after the initiated distributed query. Therefore, its commit number must be greater than the commit numbers of all committed distributed transactions on the current node and greater than the snapshot number of the distributed query.

To address this, Lizard maintains a narrow GCN (narrow global commit number) for each node. The narrow GCN is raised in the following scenarios:

In addition, there is also a commit order between single-shard (write) transactions, which is determined only by the local commit number SCN.

So far, the commit number of a single-shard (write) transaction is determined as (narrow_GCN, SCN), which reflects the commit order of a single-shard transaction.

The narrow_GCN must be monotonically increasing and never decrease as the commit number. It is persisted to the Lizard system tablespace. However, if the narrow_GCN needs to be persisted each time a transaction is committed, severe performance hotspots may occur, which limits the overall throughput of the database.

Therefore, the Lizard transaction system optimizes the persistence performance of the narrow_GCN. The core idea is that the modification of narrow_GCN only records the redo logs without modifying the actual data pages. In other words, the actual modification of data pages is delayed, and all the modifications are merged into one modification, greatly improving the performance of narrow_GCN persistence.

When single-shard transactions and distributed transactions coexist, visibility judgment will face great challenges, because there will be four situations at the same time: distributed read and distributed write, distributed read and single-shard write, single-shard read and distributed write, and single-shard read and single-shard write. The following is a typical case:

A single-shard read may read an inconsistent state:

Obviously, this violates the atomicity and consistency of transactions. Similar inconsistency issues occur in other scenarios.

The key to visibility judgment is sequencing. The Lizard transaction system uses a combination of global commit numbers and local commit numbers to accurately sequence all transactions. More specifically, the visibility judgment first determines the order according to the GCN, and when the GCN cannot determine the order, the order is further determined according to the SCN.

The modification of the secondary index of MySQL does not generate UNDO, which means the secondary index does not have multiple versions. The MySQL native transaction system mainly relies on the max_trx_id field on the data page to determine the visibility of the secondary index. This field indicates the largest transaction ID number among all transactions modifying the secondary index data page.

When a MySQL query generates a view, it also obtains the smallest active transaction ID number in the database at that time. When reading a secondary index:

To perform table retrieval, you need to go back to the primary key to search for B+Tree until the corresponding primary key record is found. Obviously, this table retrieval will bring huge query overhead, especially a large number of random I/Os. A well-designed transaction system should minimize the number of returns to the table.

The secondary index visibility judgment of MySQL is a solution. However, in distributed query scenarios, this solution is no longer feasible because all distributed queries use Flashback Query. In this query, what is needed is not the smallest active transaction ID number in the current (latest) system, but the smallest active transaction ID number (historical) at that specific time.

A reliable solution is to perform table retrieval for all secondary index queries. However, as mentioned earlier, the cost of table retrieval is high.

The Lizard transaction system introduces the TCS (Transaction Commit Snapshot) solution to completely address the issue of table retrieval for distributed read queries involving secondary indexes. The core idea is to internally generate transaction system commit snapshot information at intervals. This snapshot information stores the current transaction system state, such as GCN, SCN, and min_active_trx_id. All commit snapshot information of the transaction system is retained within a specific period as configured by the system.

When a distributed query builds a view, it uses the Snapshot_GCN of the view to search within the TCS. It finds the nearest min_active_trx_id as the approximate min_active_trx_id value of the database during the Snapshot_GCN and compares this value with the max_trx_id on the secondary index data page to determine the visibility of the secondary index.

According to test results, the Lizard transaction system improves secondary index visibility by over 400% compared to the full table retrieval solution.

The distributed transactions in PolarDB-X rely on the XA model. However, MySQL XA transactions are prone to inconsistency between the primary and secondary replicas under the multi-replica policy. Even after multiple rounds of improvement and modification, XA transactions in MySQL 8.0.32 can still cause inconsistency between the primary and secondary replicas. This problem arises because the binary log, as a participant in the XA transaction, does not actually support rollback capability due to its text append format. In the distributed model of PolarDB-X, this problem is further amplified. This is because the DN node of PolarDB-X relies on the majority protocol of X-Paxos, and binary logs are used as carriers of the protocol, carrying more complex state flow logic.

To address this, the Lizard transaction system proposes a GTID-based full transaction log backfill scheme for MySQL XA transaction integrity. This scheme completely solves the long-standing XA transaction integrity problem in MySQL. The core idea is that the binary log not only serves as the internal coordination log but also saves the full transaction operation log. During fault recovery, the storage engine provides the GTID executed collection, and the binary logs use this collection to compensate for lost transactions in the storage engine.

How to Build a Heat Map Tile App with Alibaba Cloud ECS & PostgreSQL/Ganos: A Step-by-Step Guide

ApsaraDB - January 17, 2024

ApsaraDB - June 19, 2024

ApsaraDB - June 5, 2024

ApsaraDB - July 1, 2024

ApsaraDB - November 12, 2024

ApsaraDB - April 10, 2024

PolarDB for Xscale

PolarDB for Xscale

Alibaba Cloud PolarDB for Xscale (PolarDB-X) is a cloud-native high-performance distributed database service independently developed by Alibaba Cloud.

Learn More PolarDB for PostgreSQL

PolarDB for PostgreSQL

Alibaba Cloud PolarDB for PostgreSQL is an in-house relational database service 100% compatible with PostgreSQL and highly compatible with the Oracle syntax.

Learn More PolarDB for MySQL

PolarDB for MySQL

Alibaba Cloud PolarDB for MySQL is a cloud-native relational database service 100% compatible with MySQL.

Learn More AnalyticDB for PostgreSQL

AnalyticDB for PostgreSQL

An online MPP warehousing service based on the Greenplum Database open source program

Learn MoreMore Posts by ApsaraDB