By Yang Che (Biran)

In the era of AI and big data, computing power plays a crucial role in driving continuous innovation. However, self-built computing clusters have limitations in terms of resource capacity and elasticity. This can lead to high idle costs during off-peak hours and resource constraints during peak hours. To address these challenges, many enterprises are turning to the public cloud as a cost-effective supplement to their computing power.

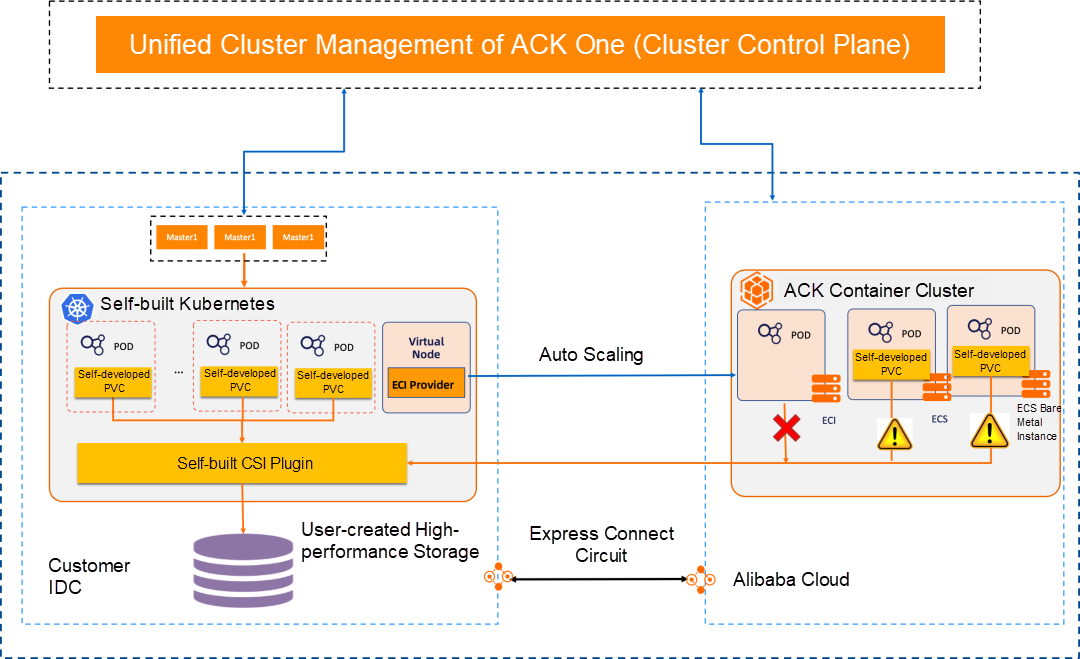

To meet data sovereignty requirements, some enterprises need to keep their data in a private cloud while still benefiting from the elasticity, reliability, and cost advantages of the public cloud. This has driven the adoption of hybrid cloud solutions that integrate Alibaba Cloud services with on-premises data centers. By accessing data over leased lines and managing it with ACK One, this hybrid cloud approach not only saves costs but also provides higher performance and stronger security. However, cross-cloud data access commonly raises the following issues:

• Complex adaptation between general-purpose cloud computing and offline heterogeneous storage: Adapting general-purpose elastic computing in the public cloud to access offline heterogeneous storage requires development and integration work, which lengthens delivery timeframes and increases maintenance and troubleshooting costs.

• Reduced engineering efficiency due to poor data access performance: Slow cross-cloud data access negatively impacts the efficiency of data analysis and AI training.

• Redundant data access overhead: Repeatedly reading hot data results in unnecessary traffic costs.

• Transmission, replication, and synchronization of data sources: Offline and public cloud data must be replicated and kept in sync to ensure data consistency.

In the following sections, I will describe the common hybrid cloud data access scenarios supported by ACK Fluid [1]. These scenarios focus on Kubernetes workloads reading data and aim to provide simple, non-intrusive, high-performance, low-cost, and automated access.

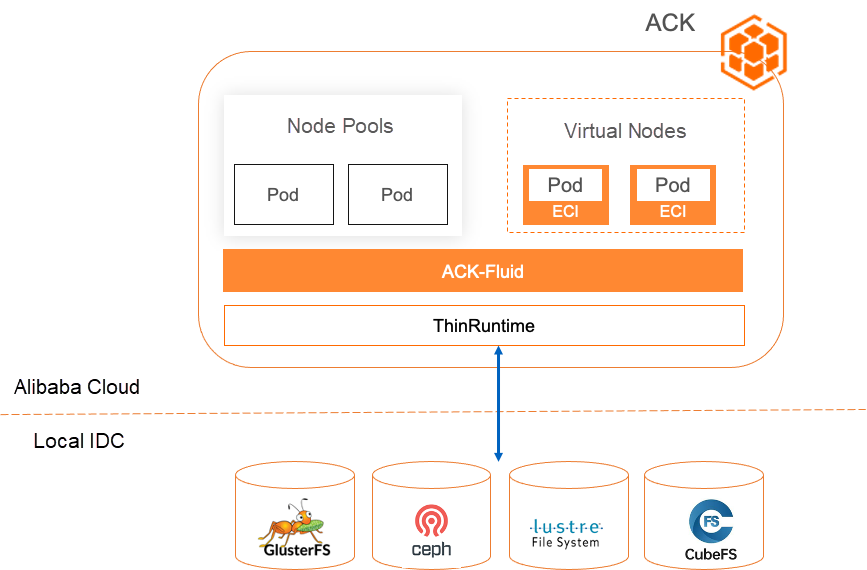

Many enterprises store their data offline using various types of storage, including open-source options such as Ceph, Lustre, JuiceFS, and CubeFS, as well as self-built storage. However, using public cloud computing resources with this data poses several challenges:

• Lengthy security and cost assessment: Moving data to cloud storage requires extensive evaluation by security and storage teams, resulting in delays in the cloud migration process.

• Incompatible data access: Elastic Container Instances (ECI) on the public cloud support only a limited set of distributed storage types (such as NAS, OSS, and CPFS) and lack support for third-party storage.

• Time-consuming and complex cloud platform integration: Developing and maintaining cloud-native compatible CSI plugins requires expert knowledge and dedicated adaptation projects. It also involves version upgrades and supports only limited scenarios. For instance, self-built CSI plugins cannot be adapted to ECI.

• Lack of trusted and transparent data access methods: Ensuring data security, transparency, and reliability during data transmission and access is challenging in the black-box environment of serverless containers.

• No business modifications: Business users should not have to deal with infrastructure-level differences or modify existing applications.

ACK Fluid offers the ThinRuntime extension mechanism [2], which lets you connect FUSE-based third-party storage clients to Kubernetes in containerized mode. It supports standard, edge, and serverless Kubernetes clusters, as well as other cluster types on Alibaba Cloud.
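As a rough illustration of what connecting a FUSE client through ThinRuntime involves, the Python sketch below builds the three objects used in the open-source Fluid ThinRuntime samples: a `ThinRuntimeProfile` describing the containerized FUSE client, a `Dataset`, and a `ThinRuntime` that binds to the Dataset by sharing its name and namespace. The storage name `myfs`, the image `registry.example.com/myfs-fuse`, the script `/mount-myfs.sh`, and the mount point `myfs://10.0.0.1/export` are all hypothetical placeholders; verify field names against the Fluid version you run.

```python
import json

API_VERSION = "data.fluid.io/v1alpha1"


def thin_runtime_profile(name, fs_type, image, tag, command):
    """ThinRuntimeProfile: how Fluid should run the containerized FUSE client."""
    return {
        "apiVersion": API_VERSION,
        "kind": "ThinRuntimeProfile",
        "metadata": {"name": name},
        "spec": {
            "fileSystemType": fs_type,
            "fuse": {
                "image": image,
                "imageTag": tag,
                "imagePullPolicy": "IfNotPresent",
                "command": command,
            },
        },
    }


def dataset(name, namespace, mount_point):
    """Dataset: the data source the FUSE client will mount."""
    return {
        "apiVersion": API_VERSION,
        "kind": "Dataset",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {"mounts": [{"mountPoint": mount_point, "name": name}]},
    }


def thin_runtime(name, namespace, profile_name):
    """ThinRuntime: binds to the Dataset with the same name and namespace."""
    return {
        "apiVersion": API_VERSION,
        "kind": "ThinRuntime",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {"profileName": profile_name},
    }


def build_manifests():
    # Hypothetical values: replace the image, mount script, and mount point
    # with those of your own storage client.
    profile = thin_runtime_profile(
        name="myfs",
        fs_type="myfs",
        image="registry.example.com/myfs-fuse",
        tag="v0.1",
        command=["sh", "-c", "/mount-myfs.sh"],
    )
    ds = dataset("myfs-demo", "default", "myfs://10.0.0.1/export")
    rt = thin_runtime("myfs-demo", "default", profile_name="myfs")
    # A v1 List lets `kubectl apply -f` create all objects from one JSON file.
    return {"apiVersion": "v1", "kind": "List", "items": [profile, ds, rt]}


if __name__ == "__main__":
    print(json.dumps(build_manifests(), indent=2))
```

Redirecting the output to a file and running `kubectl apply -f` on it would create the objects; generating JSON keeps the sketch free of non-standard dependencies.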

1. Simple development

With the ThinRuntime solution, you only need knowledge of Docker. The development work generally takes about 2 to 3 hours, significantly reducing the cost of connecting third-party storage.

2. Safe and controllable

ACK Fluid supports custom data access in containerized mode, ensuring that the entire data access process is non-intrusive to the cloud platform without exposing implementation details.

3. Easy to use

Business users only need to add specific labels to persistent volume claims (PVCs) and do not need to perceive infrastructure-level differences. This reduces the storage adaptation time to one-tenth of the original plan.

4. Open source standards

ACK Fluid provides comprehensive support for ThinRuntime based on the open-source Fluid standard. Any client that meets the open-source requirements can be adapted to ACK Fluid, and the entire development and testing process can be completed in a minikube environment.

5. Observable and controllable

Third-party storage clients only need to be containerized. They then run as Fluid-managed pods, integrate seamlessly with Kubernetes, and gain observability and control over their computing resources.

Summary: ACK Fluid provides scalability, security, controllability, low adaptation cost, and independence from the cloud platform's implementation when cloud computing accesses on-premises data.
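On the workload side, a business pod only mounts an ordinary PVC. The Python sketch below builds such a Pod manifest. It assumes, as in open-source Fluid, that the PVC created for a bound Dataset has the same name as the Dataset; the pod name `myfs-reader`, the `busybox` image, and the dataset name `myfs-demo` are hypothetical. Serverless clusters such as ECI may additionally require specific labels, which this sketch does not include.

```python
import json


def consumer_pod(pod_name, namespace, dataset_name, mount_path="/data"):
    """Pod that reads the Dataset through the PVC Fluid creates for it."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": pod_name, "namespace": namespace},
        "spec": {
            "containers": [{
                "name": "app",
                "image": "busybox",
                # List the mounted data, then keep the pod alive for inspection.
                "command": ["sh", "-c", f"ls {mount_path} && sleep 3600"],
                "volumeMounts": [{"name": "data", "mountPath": mount_path}],
            }],
            # Assumption: the PVC name equals the Dataset name.
            "volumes": [{
                "name": "data",
                "persistentVolumeClaim": {"claimName": dataset_name},
            }],
        },
    }


if __name__ == "__main__":
    print(json.dumps(consumer_pod("myfs-reader", "default", "myfs-demo"), indent=2))
```

Nothing in the pod refers to the storage client itself, which is the point of the "Easy to use" item: the infrastructure difference stays behind the PVC.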

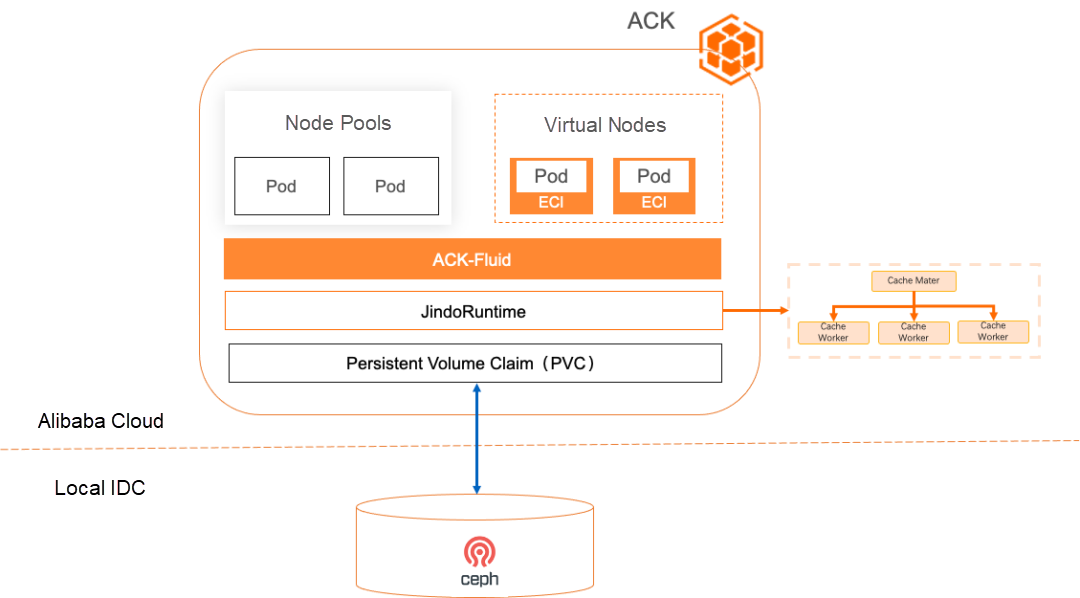

In Scenario 1, even though cloud computing can access enterprises' offline storage through the standardized Kubernetes persistent volume (PV) protocol, unavoidable performance and cost challenges and requirements remain:

• Limited bandwidth and high latency: Cloud computing that accesses on-premises storage suffers from high latency and limited bandwidth, which prolongs high-performance computing jobs and lowers the utilization of computing resources.

• High network costs from redundant reads: During hyperparameter tuning and automatic parameter tuning of deep learning tasks, the same data is accessed repeatedly. However, the native Kubernetes scheduler is unaware of the data cache status, so applications are scheduled poorly and caches cannot be reused. Repeatedly pulling the same data increases internet and leased line fees.

• Offline distributed storage becomes a bottleneck for concurrent access and faces performance and stability challenges: When large-scale computing power concurrently accesses offline storage and the I/O pressure of deep learning training grows, offline distributed storage can easily become a performance bottleneck. This slows computing tasks and may even cause the entire computing cluster to fail.

• Sensitivity to network instability: An unstable network between the public cloud and the data center can cause data synchronization errors and make applications unavailable.

• Data security requirements: Metadata and data must be protected and should not be persistently stored on disks.
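To make the redundant-read cost concrete, here is a toy calculation in Python. It assumes every tuning trial reads the full dataset and that, once a cloud-side cache is warm, all later reads are served from it; eviction, partial reads, and metadata traffic are ignored. The function name and the 500 GB / 20 trials figures are hypothetical, not measurements.

```python
def leased_line_traffic_gb(dataset_gb: float, trials: int, cached: bool) -> float:
    """Toy model of GB pulled over the leased line by `trials` jobs reading the same data.

    Without a cache, every trial re-reads the whole dataset from on-premises storage.
    With a warm cache in the cloud, only the first pass crosses the leased line.
    """
    if trials <= 0:
        return 0.0
    return float(dataset_gb) if cached else float(dataset_gb) * trials


if __name__ == "__main__":
    # Hypothetical example: 20 tuning trials over a 500 GB dataset.
    print(leased_line_traffic_gb(500, 20, cached=False))  # 10000.0
    print(leased_line_traffic_gb(500, 20, cached=True))   # 500.0
```

Under these idealized assumptions, leased line traffic grows linearly with the number of trials without a cache and stays flat with one.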

ACK Fluid provides a general acceleration capability for PVs based on JindoRuntime [3]. It supports any third-party storage exposed through a PVC and accelerates data access with a distributed cache in a simple, fast, and secure manner. It provides the following benefits:
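A minimal sketch of the objects involved, assuming the open-source Fluid conventions: a `Dataset` whose mount point uses the `pvc://` scheme to reference an existing PVC (assumed to be in the same namespace), and a `JindoRuntime` with the same name whose cache tier is memory (`MEM` under `/dev/shm`), in line with the no-disk-persistence requirement above. The names `onprem-data` and `onprem-pvc`, the replica count, and the quota are hypothetical; check the fields against the ACK Fluid documentation referenced in [3].

```python
import json

API_VERSION = "data.fluid.io/v1alpha1"


def pvc_dataset(name, namespace, pvc_name):
    """Dataset that points at an existing PVC through the pvc:// scheme."""
    return {
        "apiVersion": API_VERSION,
        "kind": "Dataset",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {"mounts": [{"mountPoint": f"pvc://{pvc_name}", "name": name}]},
    }


def jindo_runtime(name, namespace, replicas, mem_quota):
    """JindoRuntime whose cache tier lives in memory (/dev/shm), not on disk."""
    return {
        "apiVersion": API_VERSION,
        "kind": "JindoRuntime",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "replicas": replicas,  # number of cache workers
            "tieredstore": {
                "levels": [{
                    "mediumtype": "MEM",
                    "path": "/dev/shm",
                    "quota": mem_quota,  # cache capacity per worker
                    "high": "0.99",
                    "low": "0.95",
                }]
            },
        },
    }


if __name__ == "__main__":
    items = [
        pvc_dataset("onprem-data", "default", "onprem-pvc"),
        jindo_runtime("onprem-data", "default", replicas=2, mem_quota="10Gi"),
    ]
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": items}, indent=2))
```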

1. Simple to use

As long as the third-party storage is exposed as a PVC through the CSI protocol, it can be accelerated immediately without additional development.

2. High performance and efficiency

Data preheating, elastic bandwidth, and cache affinity-aware scheduling enable cloud computing clusters to access on-premises data without compromising performance.

3. Reduce costs and save traffic

The distributed cache keeps hot data in the cloud, reducing reads from on-premises storage and network traffic. In addition, throughput can be scaled automatically based on business volumes.

4. Automation

Data-centered automated O&M improves efficiency: Automatic cache preheating, automatic scaling, and cleanup contribute to efficient management.

5. Safer

Distributed memory cache improves security: No data needs to be stored on disks. This is suitable for users with sensitive data and provides superior performance and security assurance.
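Cache preheating, mentioned under the performance and automation items above, is expressed in open-source Fluid as a `DataLoad` object. The Python sketch below builds one; it assumes the `policy` (`Once` or `Cron`) and `schedule` fields available in recent open-source Fluid releases, and the names `onprem-warmup`, `onprem-data`, and `/train` are hypothetical.

```python
import json

API_VERSION = "data.fluid.io/v1alpha1"


def dataload(name, namespace, dataset_name, path="/", schedule=None):
    """DataLoad that warms `path` of a Dataset into the distributed cache.

    Without a schedule it runs once; with a cron string it repeats on that schedule.
    """
    spec = {
        "dataset": {"name": dataset_name, "namespace": namespace},
        "loadMetadata": True,
        "target": [{"path": path, "replicas": 1}],
        "policy": "Cron" if schedule else "Once",
    }
    if schedule:
        spec["schedule"] = schedule
    return {
        "apiVersion": API_VERSION,
        "kind": "DataLoad",
        "metadata": {"name": name, "namespace": namespace},
        "spec": spec,
    }


if __name__ == "__main__":
    # Hypothetical: re-warm /train every day at 01:00, before business hours.
    warmup = dataload("onprem-warmup", "default", "onprem-data",
                      path="/train", schedule="0 1 * * *")
    print(json.dumps(warmup, indent=2))
```

Scheduling the preheat before working hours moves the leased line traffic to a quiet period, so jobs started later read from a warm cache.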

Summary: ACK Fluid provides benefits including out-of-the-box simplicity, high performance, low cost, automation, and no data persistence for cloud computing to access third-party storage PVCs.

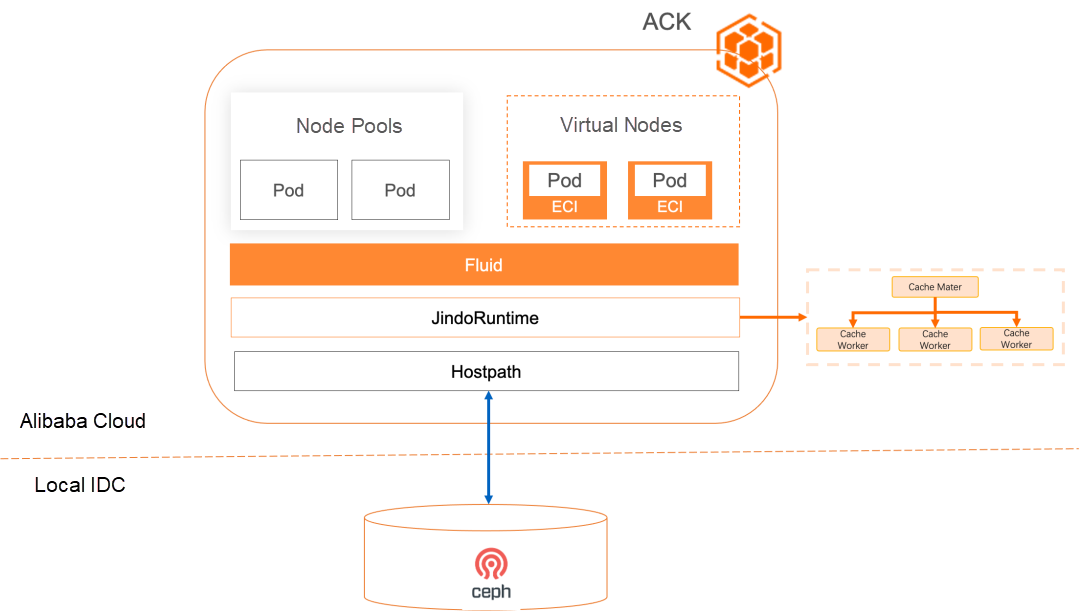

For historical and technical reasons, many enterprises' on-premises storage does not support the CSI protocol and can only be mounted as host directories. This makes it hard to connect with the standardized Kubernetes platform and brings the same performance and cost issues described in Scenario 2:

• Lack of standards and difficulty in migrating to the cloud: Kubernetes cannot recognize or schedule host directory mounts, making them difficult for containerized workloads to use and manage.

• Lack of data isolation: Since the entire directory is mounted on the host and accessed by all workloads, data becomes globally visible.

• Same requirements as Scenario 2: The cost, performance, and availability requirements for data access in Scenario 3 are the same as in Scenario 2 and are not repeated here.

ACK Fluid provides a general acceleration capability for host directories based on JindoRuntime and directly supports host directory mounting. This accelerates data access with a distributed cache in a native, simple, quick, and secure manner.
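A hedged sketch of the host directory case, assuming the open-source Fluid `local://` mount scheme, which points a Dataset at a directory on the node, plus an optional Dataset `nodeAffinity` that restricts cache placement to nodes where the directory actually exists. The directory `/mnt/onprem-data`, the label key `onprem-storage`, and the dataset name are hypothetical; a JindoRuntime with the same name would still be needed to provide the cache.

```python
import json

API_VERSION = "data.fluid.io/v1alpha1"


def hostpath_dataset(name, namespace, host_dir, node_label_key=None):
    """Dataset backed by a host directory through the local:// scheme."""
    if not host_dir.startswith("/"):
        raise ValueError("host_dir must be an absolute path")
    spec = {"mounts": [{"mountPoint": f"local://{host_dir}", "name": name}]}
    if node_label_key:
        # Only nodes labeled <node_label_key>=true have the directory mounted,
        # so place the Dataset's cache on those nodes only.
        spec["nodeAffinity"] = {
            "required": {
                "nodeSelectorTerms": [{
                    "matchExpressions": [{
                        "key": node_label_key,
                        "operator": "In",
                        "values": ["true"],
                    }]
                }]
            }
        }
    return {
        "apiVersion": API_VERSION,
        "kind": "Dataset",
        "metadata": {"name": name, "namespace": namespace},
        "spec": spec,
    }


if __name__ == "__main__":
    ds = hostpath_dataset("onprem-data", "default", "/mnt/onprem-data",
                          node_label_key="onprem-storage")
    print(json.dumps(ds, indent=2))
```

The hostPath mount disappears from the workload definition; pods consume the resulting PVC just as in the previous scenarios.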

1. Standardization

Migrate the traditional architecture to a cloud-native architecture: Replace host directory mounts with CSI-based PVs that Kubernetes can manage. This makes it easier to integrate with public cloud platforms through standardized protocols.

2. Low cost of migration

Migrating the traditional architecture is cost-effective: No additional development is required. During deployment, you only need to convert hostPath mounts into PVs.

3. Easier data isolation

After connecting to Fluid, you can use the subdataset mode to control which directories of the offline storage each user can see, without additional development.

4. High performance and efficiency

Features such as data preheating, elastic bandwidth, and cache affinity-aware scheduling allow cloud computing clusters to access on-premises data without any loss in performance.

5. Reduce costs and save traffic

The distributed cache keeps hot data in the cloud, reducing reads from on-premises storage and network traffic. In addition, throughput can be scaled automatically according to business volumes.

6. Automation

Data-centered automated operations and maintenance improve efficiency: Automatic cache preheating, automatic scaling, and cleanup contribute to efficient management.

7. Safer

Distributed memory cache enhances security: No data needs to be stored on disks. This is particularly suitable for users with sensitive data, providing superior performance and security assurance.
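The subdataset mode from item 3 can be sketched as a Dataset that references a subdirectory of another Dataset. This assumes the open-source Fluid `dataset://<namespace>/<name>/<subpath>` mount scheme; the team namespace `team-a`, the shared dataset `shared/onprem-data`, and the subpath are hypothetical. Each team then sees only its own directory through its own PVC.

```python
import json

API_VERSION = "data.fluid.io/v1alpha1"


def sub_dataset(name, namespace, parent_namespace, parent_name, subpath=""):
    """Dataset exposing only `subpath` of an existing parent Dataset."""
    sub = subpath.strip("/")
    mount = f"dataset://{parent_namespace}/{parent_name}"
    if sub:
        mount += f"/{sub}"
    return {
        "apiVersion": API_VERSION,
        "kind": "Dataset",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {"mounts": [{"mountPoint": mount, "name": name}]},
    }


if __name__ == "__main__":
    # Hypothetical: team A only sees /team-a of the shared on-premises dataset.
    print(json.dumps(sub_dataset("team-a-data", "team-a", "shared",
                                 "onprem-data", "/team-a/"), indent=2))
```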

Summary: ACK Fluid provides out-of-the-box functionality, high performance, low cost, automation, and no data persistence when accessing third-party storage mounted as host directories.

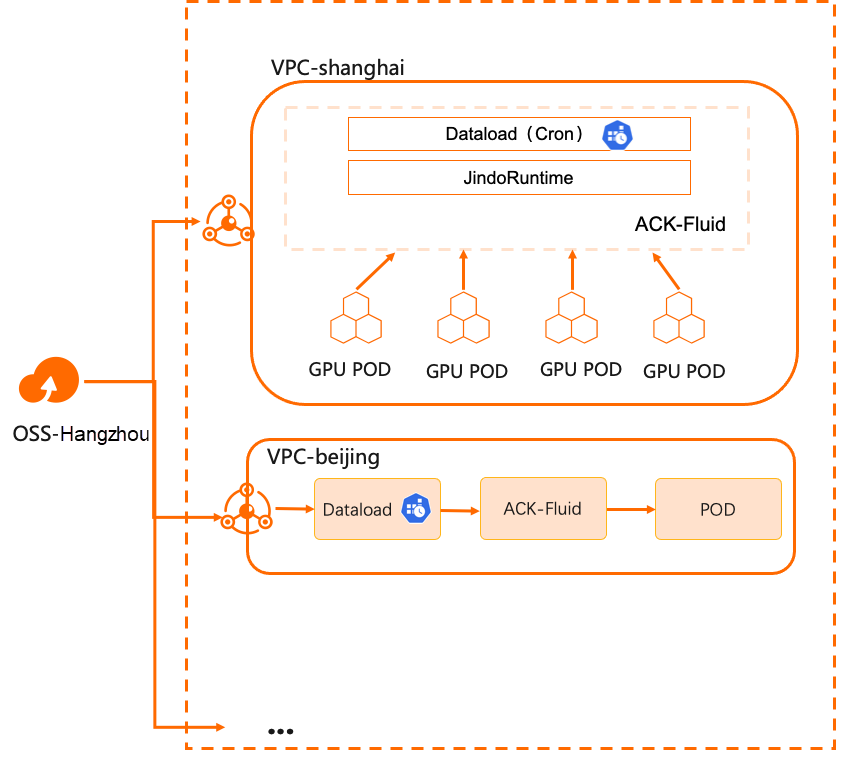

Many enterprises establish multiple compute clusters in different regions for reasons such as performance, security, stability, and resource isolation. These compute clusters require remote access to a centralized data storage center. For example, as large language models become more mature, supporting multi-region inference services based on them has become a capability that enterprises need. However, there are still several challenges in this scenario:

• Manually synchronizing data across multiple compute clusters is time-consuming.

• Managing large language models is complex. Different base models and data are selected for different business requirements, so the resulting models differ.

• Model data is frequently updated and iterated based on different business inputs.

• Pulling large language model files takes a long time, which slows the startup of inference services. Model parameters usually take up several hundred GB, so loading them into GPU memory takes significant time.

• Model updates must be synchronized across all regions, and running replication jobs against an already overloaded storage cluster can severely degrade the performance of existing workloads.
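A back-of-the-envelope calculation shows why model pulls dominate startup time. The sketch below computes idealized transfer time as size in gigabits divided by line rate, ignoring protocol overhead and storage-side limits. The 300 GB model size, the 10 Gbit/s leased line, and the four cache workers are hypothetical figures, and treating cache bandwidth as additive across workers is itself an idealization.

```python
def transfer_seconds(size_gb: float, bandwidth_gbit_s: float) -> float:
    """Idealized transfer time: gigabytes converted to gigabits, divided by line rate."""
    if bandwidth_gbit_s <= 0:
        raise ValueError("bandwidth must be positive")
    return size_gb * 8 / bandwidth_gbit_s


if __name__ == "__main__":
    # Hypothetical: a 300 GB model pulled over a single 10 Gbit/s leased line...
    print(transfer_seconds(300, 10))      # 240.0
    # ...versus read from 4 in-region cache workers at 10 Gbit/s each.
    print(transfer_seconds(300, 4 * 10))  # 60.0
```

Every region that pulls the model over its own link pays the slower figure again, which is why preheating a cache close to each compute cluster matters.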

In addition to accelerating common storage clients, ACK Fluid provides scheduled and event-triggered data migration and preheating to simplify data distribution.

1. Cost savings

Cross-region traffic costs drop sharply and computing time is significantly shortened, at the price of a slight increase in computing cluster costs. Elasticity can reduce these costs further.

2. Accelerate applications

Because computing clusters read data from a cache in the same data center or zone, latency is reduced and cache throughput can scale linearly with concurrency.

3. Simplify data synchronization

Custom policies control data synchronization operations, which reduces contention for data access, and automation reduces O&M complexity.

This article introduces the categories of hybrid cloud scenarios supported by ACK Fluid and JindoRuntime. Subsequent articles will cover the specific practices and usage details of each scenario.
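One simple policy for reducing contention is to stagger synchronization windows so that regional clusters do not all pull from the central storage at once. The Python helper below is a hypothetical illustration, not an ACK Fluid API: it assigns each cluster a daily cron slot a fixed number of minutes apart. Each resulting string could, for example, serve as the `schedule` of a cron-based preheating job in that cluster; the cluster names are placeholders.

```python
def staggered_schedules(clusters, start_hour=1, gap_minutes=20):
    """Assign each cluster a daily cron slot, `gap_minutes` apart, starting at start_hour:00."""
    if gap_minutes <= 0:
        raise ValueError("gap_minutes must be positive")
    schedules = {}
    for i, cluster in enumerate(clusters):
        total = start_hour * 60 + i * gap_minutes
        hour, minute = divmod(total, 60)
        # Cron fields: minute hour day-of-month month day-of-week
        schedules[cluster] = f"{minute} {hour % 24} * * *"
    return schedules


if __name__ == "__main__":
    for name, cron in staggered_schedules(["beijing", "shanghai", "hangzhou", "shenzhen"]).items():
        print(name, cron)
```

A fixed gap is the simplest choice; a real policy might instead size each window from the observed duration of the previous sync.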

[1] ACK Fluid

https://www.alibabacloud.com/help/en/ack/cloud-native-ai-suite/user-guide/overview-of-fluid

[2] ThinRuntime extension mechanism

https://github.com/fluid-cloudnative/fluid/blob/master/docs/zh/samples/thinruntime.md

[3] General acceleration capability of PV volumes

https://www.alibabacloud.com/help/en/ack/cloud-native-ai-suite/user-guide/accelerate-pv-storage-volume-data-access
