By Chen Xingyu (Yumu)

Kubernetes uses etcd to store its core metadata. After years of development, and especially with the rapid growth of cloud-native technology over the past two years, Kubernetes has been widely recognized and adopted at scale. As Alibaba Cloud's container platform ASI and public cloud ACK clusters have grown rapidly, so have the etcd clusters that provide their underlying storage. The number of etcd clusters has increased from a dozen to thousands, distributed worldwide, serving upper-layer Kubernetes clusters and other products with tens of thousands of users.

Alibaba Cloud's native etcd service has changed tremendously over the years. This article describes the problems the etcd service faced under large-scale business growth and how we solved them, in the hope that readers can take away some experience in using, managing, and controlling etcd.

This article consists of three parts:

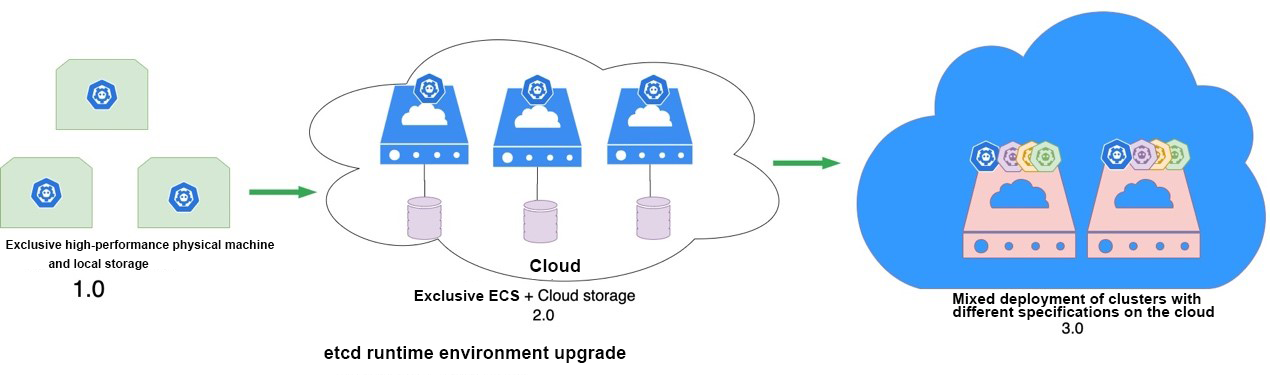

In recent years, the number of etcd clusters has grown rapidly, and the way they run has evolved from mode 1.0 to 2.0 to 3.0, as shown in the following figure:

In the beginning, there were few etcd clusters to manage, and etcd containers were run directly on the host using Docker. See mode 1.0 in the figure.

Running etcd on physical machines, as in mode 1.0, is simple, but it is inefficient to operate. As Alibaba Group migrated all its businesses to the cloud, etcd was also moved to ECS instances on the cloud, with its storage replaced by SSD or ESSD cloud disks.

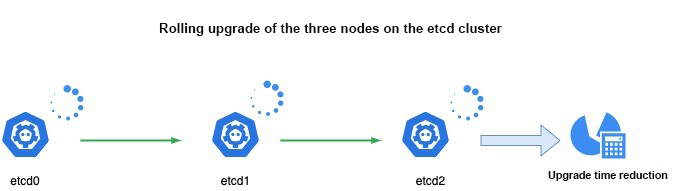

Migrating fully to the cloud has obvious advantages. With the elasticity of ECS and the cloud disks provided by Alibaba Cloud's underlying IaaS, etcd clusters can scale vertically and horizontally quickly, and failover is much easier than in mode 1.0. Take a cluster configuration upgrade as an example: the overall upgrade time has dropped from 30 minutes to 10 minutes. The upgraded clusters handle both peak and everyday load, and have made it through Alibaba's Double 11 business peaks as well as the Spring Festival promotions run by multiple public cloud customers.

As the number of etcd clusters increases, so does the cost of the ECS instances and cloud disks required to run them. As a result, etcd has become one of the more expensive parts of the container service, which is a problem that needs to be solved.

In mode 2.0, where each cluster uses separate ECS instances and cloud disks, resource utilization turned out to be low and many resources were wasted. Therefore, in mode 3.0, etcd clusters are deployed in a hybrid manner to reduce operating costs. However, hybrid deployment brings the risk of competition for computing resources and of cluster instability. The hosts of etcd clusters are switched frequently, which causes the upper-layer software that depends on etcd to malfunction. For example, host switching of the Kubernetes controller affects user services.

To address these problems, etcd clusters are first divided into types based on their quality of service (QoS) and SLO requirements, similar to the three QoS classes in Kubernetes: BestEffort, Burstable, and Guaranteed. Clusters of different types are then placed into different resource pools for operation and management. Because hybrid deployment is highly scattered and random, and the large number of cluster users spreads out etcd hotspot requests, stability problems such as host switching are greatly reduced. With stability ensured, resource utilization is improved and costs are significantly reduced.
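The classification step can be sketched roughly as follows. This is only an illustration: the `EtcdCluster` type, the `assign_pools` function, and the idea of one pool per QoS class are assumptions made for the example, not the actual scheduling logic.

```python
from collections import defaultdict
from dataclasses import dataclass

# QoS class names borrowed from the Kubernetes QoS classes.
QOS_CLASSES = ("Guaranteed", "Burstable", "BestEffort")


@dataclass(frozen=True)
class EtcdCluster:
    name: str  # hypothetical cluster identifier
    qos: str   # one of QOS_CLASSES


def assign_pools(clusters):
    """Group cluster names into one resource pool per QoS class."""
    pools = defaultdict(list)
    for cluster in clusters:
        if cluster.qos not in QOS_CLASSES:
            raise ValueError(f"unknown QoS class: {cluster.qos}")
        pools[cluster.qos].append(cluster.name)
    return dict(pools)
```

Keeping each class in its own pool means a noisy BestEffort cluster can only compete with other BestEffort clusters, never with a Guaranteed one.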

In the early days, etcd clusters were managed in a simple way. Shell scripts could cover essentially the entire lifecycle of an etcd cluster, including creation, deletion, and migration. As the number of clusters surged, this small-scale approach became less and less workable. New problems emerged: slow etcd cluster production, difficulty adapting to changes in the underlying IaaS, and inefficient management of running clusters.

To address this inefficiency, we adopted the cloud-native ecosystem and used Kubernetes as the foundation for running etcd. Over years of development based on the open-source etcd-operator, we adapted it to the underlying IaaS of Alibaba Cloud and fixed many bugs in the open-source code. The management and control of etcd were standardized to cover the entire etcd lifecycle. We released a new management backend, alpha, which unifies the control of Alibaba Group's internal etcd clusters and the etcd clusters of public cloud ACK, greatly improving our efficiency in managing and maintaining etcd clusters. At present, we can manage nearly 10,000 clusters with half of the manpower. The following figure shows the control interface.

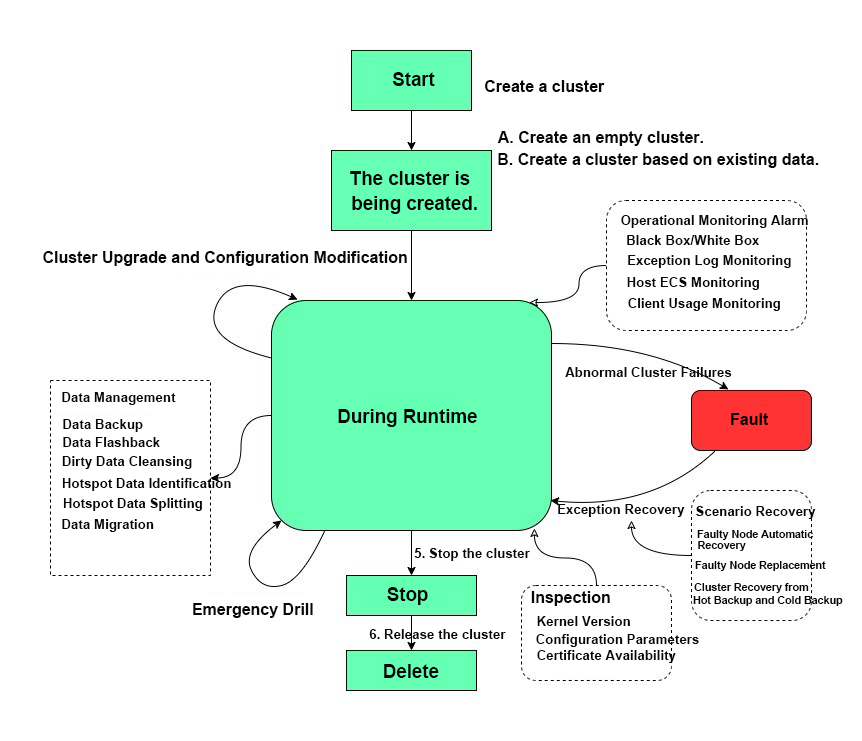

Next, let's look at the specific functions of alpha in detail. The following picture shows the typical lifecycle of an etcd cluster: creation, running, failure, rerunning, termination, and destruction.

What aspects does alpha cover in the picture?

Specifically, it is divided into the following two parts:

A distinguishing feature of alpha is etcd data management, which includes data migration, backup management and recovery, dirty data cleaning, and hotspot data identification. Open-source and other products offer little in this area. The specific functions are as follows.

There are two methods as follows:

An etcd key prefix can be specified to delete junk key-value pairs, reducing the storage load on the etcd server.

We have developed the ability to aggregate and analyze hot keys by etcd key prefix, and to analyze the database storage usage of different key prefixes. With this, we have helped clients troubleshoot etcd hot keys and resolved etcd abuse many times, which is essential for large-scale etcd clusters.
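To make prefix deletion concrete, here is a minimal sketch of how a key prefix maps to a key range. In etcd, a prefix is the range from the prefix itself up to (but not including) the prefix with its last byte incremented. The in-memory `store` dictionary stands in for an etcd server, and the function names and sample keys are illustrative only; this is not how alpha implements the feature.

```python
def prefix_range_end(prefix: bytes) -> bytes:
    """Return the exclusive end key of the range covering every key with this prefix."""
    end = bytearray(prefix)
    # Walk back over trailing 0xff bytes, which cannot be incremented.
    for i in range(len(end) - 1, -1, -1):
        if end[i] < 0xFF:
            end[i] += 1
            return bytes(end[: i + 1])
    # Empty prefix or all 0xff: b"\x00" means "up to the end of the keyspace".
    return b"\x00"


def delete_prefix(store: dict, prefix: bytes) -> int:
    """Delete every key in [prefix, range_end) from an in-memory stand-in store."""
    end = prefix_range_end(prefix)
    doomed = [k for k in store if k >= prefix and (end == b"\x00" or k < end)]
    for k in doomed:
        del store[k]
    return len(doomed)
```

For example, deleting the prefix `b"/registry/events/"` removes `b"/registry/events/e1"` but leaves `b"/registry/eventsx"` untouched, because the latter sorts at or after the computed range end `b"/registry/events0"`.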
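The aggregation idea can be sketched as follows. The input format (a list of `(key, request_count, value_size_bytes)` tuples), the function names, and the fixed prefix depth are all assumptions for illustration; the source does not describe how the samples are collected.

```python
from collections import Counter


def key_prefix(key: str, depth: int) -> str:
    """Return the first `depth` path segments of a '/'-separated key."""
    parts = key.strip("/").split("/")
    return "/" + "/".join(parts[:depth])


def aggregate_by_prefix(samples, depth=2):
    """Sum request counts and stored bytes per key prefix.

    `samples` is an iterable of (key, request_count, value_size_bytes)
    tuples, a hypothetical input format for this sketch.
    """
    requests, size = Counter(), Counter()
    for key, count, nbytes in samples:
        prefix = key_prefix(key, depth)
        requests[prefix] += count
        size[prefix] += nbytes
    return requests, size
```

Calling `requests.most_common(n)` on the result lists the hottest prefixes, while `size` shows which prefixes take up the most storage.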

When a cluster stores too much data, the data of different clients can be split horizontally and stored in different etcd clusters. Alibaba has internally applied this feature to ASI clusters to support tens of thousands of nodes.
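A horizontal split needs some rule that decides which etcd cluster holds a given piece of data. The sketch below assumes each client's data lives under its own key prefix and routes by longest matching prefix; the cluster names, the `routes` table, and the `pick_cluster` function are hypothetical and only illustrate the idea.

```python
def pick_cluster(key: str, routes: dict, default: str) -> str:
    """Return the etcd cluster for a key using longest-prefix match.

    `routes` maps a key prefix to a cluster name; keys that match no
    prefix go to the `default` cluster.
    """
    best = ""
    for prefix in routes:
        if key.startswith(prefix) and len(prefix) > len(best):
            best = prefix
    return routes[best] if best else default
```

With `routes = {"/registry/events/": "etcd-events"}`, event keys go to the dedicated cluster while everything else stays on the default one, so a burst of writes under one prefix no longer competes with the rest of the data.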

In summary, we use Kubernetes as the operating foundation for etcd clusters and have built alpha, a new etcd management system, on top of open-source operators. With one system covering the full lifecycle of all etcd clusters, etcd management efficiency has improved significantly.

etcd is important in the cloud-native community. After several years of evolution, many etcd bugs have been resolved, and its kernel performance and storage capacity have improved. However, problems remain when the open-source software is used in production. Alibaba needs larger-scale data storage and higher performance. In addition, etcd has weak control over QoS when multiple tenants share a cluster, which does not suit our scenarios.

Open-source etcd versions 3.2 and 3.3 were used in the early days. To meet our requirements in certain scenarios, we added stability and security enhancements, which formed the etcd version that Alibaba uses now. Several important differences are as follows:

etcd stores historical values of user data, but it cannot keep all of them for long, or storage space would run out. Therefore, etcd periodically clears historical values through the compact mechanism. In an ultra-large cluster with an ultra-large amount of data, each cleanup has a significant impact on runtime performance, similar to a full garbage collection (GC). Our compact technology adjusts the compaction timing based on business request volume, so that compaction avoids business peaks and causes less disturbance.

A raft learner is a special role in the raft protocol. It does not participate in leader election but can obtain the latest data in the cluster from the leader. Therefore, learners can be added as read-only nodes to scale the cluster horizontally, which improves the cluster's ability to process read requests.

In addition, a raft learner can serve as a hot backup node in a cluster. Currently, hot backup nodes are widely used for dual-site high availability, ensuring high availability of the cluster.

On the public cloud, many users consume etcd through shared etcd clusters. In such multi-tenant scenarios, tenants must get fair use of etcd storage resources, and stability must be maintained as well. For stability, it is critical that misuse by one tenant does not bring down the entire cluster and affect other tenants. To this end, we have developed a QoS traffic limiting feature, which limits read and write traffic at runtime as well as the space used by stored data.
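The timing decision can be illustrated with a small sketch. The policy shown here (compact when current traffic falls below a fraction of the recent average, but never postpone beyond a maximum delay) and all parameter names and defaults are assumptions for illustration; the source does not describe the actual rule.

```python
def should_compact(current_qps, recent_qps, seconds_since_last,
                   quiet_ratio=0.5, max_delay_s=3600):
    """Decide whether to run compaction now.

    Compact when traffic is at or below `quiet_ratio` of the recent average,
    or when compaction has already been postponed for `max_delay_s` seconds,
    so that history cannot grow without bound.
    """
    if seconds_since_last >= max_delay_s:
        return True
    if not recent_qps:
        # No traffic samples yet: nothing to avoid, so compact.
        return True
    average = sum(recent_qps) / len(recent_qps)
    return current_qps <= quiet_ratio * average
```

The upper bound on the delay matters: without it, a cluster under sustained load would never compact and would eventually run out of storage space.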
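The two kinds of limit can be sketched as follows. A token bucket is used here as one common way to cap request rates; the source does not say which algorithm the QoS feature uses, and the class, function, and parameter names are hypothetical.

```python
class TokenBucket:
    """Per-tenant token bucket; `now` is passed in so the logic is deterministic."""

    def __init__(self, rate_per_s: float, burst: float, now: float = 0.0):
        self.rate = rate_per_s
        self.burst = burst
        self.tokens = burst
        self.last = now

    def allow(self, now: float, cost: float = 1.0) -> bool:
        # Refill according to elapsed time, capped at the burst size.
        elapsed = max(0.0, now - self.last)
        self.tokens = min(self.burst, self.tokens + elapsed * self.rate)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


def within_quota(used_bytes: int, incoming_bytes: int, quota_bytes: int) -> bool:
    """Static storage check: reject a write that would exceed the tenant quota."""
    return used_bytes + incoming_bytes <= quota_bytes
```

Giving each tenant its own bucket and quota means a tenant that floods the cluster only exhausts its own allowance, and requests from other tenants continue to be served.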

Alibaba Cloud has used etcd as the core data storage system for its container service for nearly four years. In this process, we have accumulated a wealth of etcd management experience and best practices. This article shares the practices that helped us reduce costs, improve efficiency, and optimize the kernel.

In recent years, with the rapid development of cloud-native technology, etcd has grown at an unprecedented pace. etcd officially graduated from CNCF last year. Alibaba Cloud has contributed important features and bug fixes to the etcd community and actively participates in it, both learning from the community and giving back to it. We expect etcd clusters to keep growing rapidly in the future. We will continue our efforts to reduce costs, increase efficiency, and ensure stability and reliability.
