By Zhu Muhua (Yukun), Liu Luxin (Xique), Cao Yuanpeng (Yuanshang), Xu Mengtao (Honglang), Ziyin, and Li Qiang (Jiuyue).

In this blog, we introduce the system that houses a complete set of e-commerce concepts, which Alibaba Group has built up while constructing its own knowledge graph. In particular, we look at Alibaba's knowledge graph solution, named E-Commerce ConceptNet, from the perspectives of user need analysis and overall system construction, and we describe how Alibaba's e-commerce platform architecture and audit procedures have been refined. These refinements, in addition to being powered by machine learning and AI, were also made possible by the tremendous efforts of the responsible engineers, as well as the product, operation, and outsourcing teams at Alibaba.

From its launch, Alibaba's E-Commerce ConceptNet has been designed specifically for Alibaba's major e-commerce applications. With it, Alibaba Group had crafted a very large and nearly all-encompassing smart cognitive system for e-commerce data by mid-2017, after continuous exploration that ran from system construction through to implementation.

As Alibaba continues to expand the boundaries of its various business units, while blending and even redefining the meaning of Internet technologies, the demand for the intelligent use and interconnection of data points has grown at a striking rate. Data, or rather connecting the dots, is increasingly important for several different applications in e-commerce, including cross-domain search discovery, shopping guides, and platform user interaction. And, above all else, intelligently using data is also a fundamental piece to improving the overall experience of users on an e-commerce platform.

Therefore, the knowledge graph is an excellent solution, as it provides an intelligent means of satisfying all of these scenarios. But, before we get into how Alibaba's knowledge graph solution works, let's look at some of the problems that led to its creation.

Today, e-commerce feeds off a relatively complex web of data points, with a diverse set of use cases for this data. The complicated shopping scenarios that Alibaba Group is involved in include new retail, e-commerce platforms with multiple language versions, like AliExpress, and a wide range of online and offline settings. Data used in these scenarios often goes well beyond the traditional text ranges, like queries and titles, but this new breed of data carries with it several challenges.

The first challenge is that this data is at a massive scale and is unstructured. Furthermore, this data is distributed among several different data sources, which also happen to be materialized in a huge body of unstructured text. And, although the current category system that is implemented involves a lot of long-term work from the perspective of item management, it still only takes care of the very tip of a humongous data iceberg. So, it's clear that the current means of doing things is far from sufficient to cognize actual e-commerce user needs.

And then there's the challenge of data noise. Currently, most data in Alibaba Group involves such things as queries, titles, comments, and tips. It may at first glance appear simple, but such massive amounts of data cannot be analyzed in the same way as traditional text. The syntactic structure of this data is very different from that of ordinary text, and it contains loads of noise, or dirty data, which makes retrieving the valuable chunks a painful process. Part of this relates to how merchants find customers and ultimately how search engine optimization (SEO) works on an e-commerce platform. However, regardless of the causes, noise makes it difficult to pinpoint user needs from the massive body of platform data, not to mention to express this data in a structured manner.

And then there's also the problem of multi-mode and multi-source data. As Alibaba Group expands its business, today's search recommendations contain a lot more than text information, including videos and item images. So, integrating data from various sources and associating multi-mode data can be quite difficult.

There's also the fact that data may be scattered or disconnected. Given the construction of the current item system and rapid business development, each department usually needs to maintain its own category-property value (CPV) system. This is a critical part of the item management and search processes at the later stage. However, the CPV system is used in scenarios that correspond to different industries. For example, the category of "Bags and Accessories" on Xianyu, Alibaba's second-hand buy-sell platform connected to Taobao, needs to be further segmented because the category is searched at a very high frequency. By contrast, the less frequently searched "Shoes, Bags, and Accessories" category is merely a sub-category of the Second-Hand Items category. As a result, each department has to expend extra time and effort in maintaining query and search requests in its own CPV system. Specifically, each department has to repeatedly build its own category system and search engine, associate items, and make category predictions. Therefore, it is a pressing matter to establish a generic application-oriented concept system that provides query services based on business demands.

Then, there's the absence of deep data cognition. Deep data cognition does not focus on items but on the associations among different user needs. Currently, one way to cognize whether a female user is preparing for pregnancy is to see whether she has searched for "folic acid" or not. However, this kind of deep data cognition application remains absent across Alibaba's e-commerce systems.

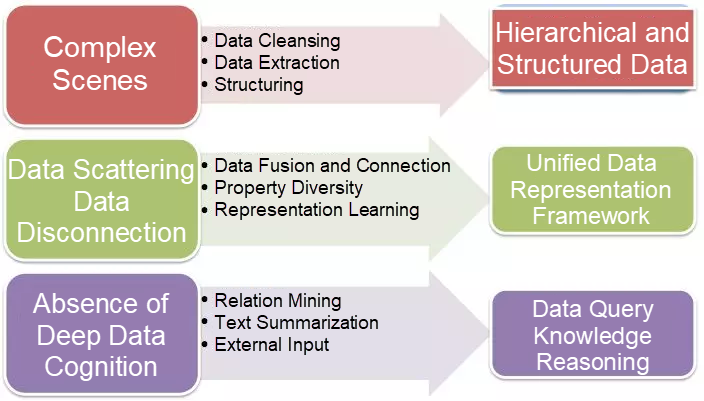

The problems given above show the need for building a globally unified knowledge representation and query framework, one that can work with all of the above challenges. For this framework to work, the following key items must be completed:

Part of this is data structuring in complex scenes. In complex scenes, data cleansing is the first thing we need to do. Specifically, we need to remove dirty data through frequency-based filtering, rules, and statistical analysis. Then, we need to capture highly available data by using methods such as phrase mining and data extraction, structure the data, and create a data hierarchy.
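As a rough illustration of the cleansing step described above, the following minimal sketch combines a frequency threshold with a single noise rule. The function name `clean_queries`, the `min_count` threshold, and the punctuation rule are assumptions made for this example, not the filters actually used in E-commerce ConceptNet.

```python
import re
from collections import Counter

# Hypothetical rule: a token made only of punctuation or symbols is noise.
NOISE_PATTERN = re.compile(r"^[\W_]+$")


def clean_queries(queries, min_count=2):
    """Keep tokens that pass the noise rule and occur at least min_count times."""
    tokenized = [q.lower().split() for q in queries]
    # Frequency-based filtering: count every token over the whole corpus.
    counts = Counter(tok for toks in tokenized for tok in toks)
    cleaned = []
    for toks in tokenized:
        kept = [
            t for t in toks
            if counts[t] >= min_count and not NOISE_PATTERN.match(t)
        ]
        if kept:  # drop queries that contain nothing but noise
            cleaned.append(" ".join(kept))
    return cleaned


queries = [
    "windproof scarf !!!",
    "windproof scarf women",
    "scarf women hot-sale-2017",
    "!!! windproof jacket",
]
cleaned = clean_queries(queries)
```

Here the rare tokens "hot-sale-2017" and "jacket" are removed by the frequency threshold, while "!!!" is frequent enough to survive it and is removed by the rule instead, which is why the two filters are applied together.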

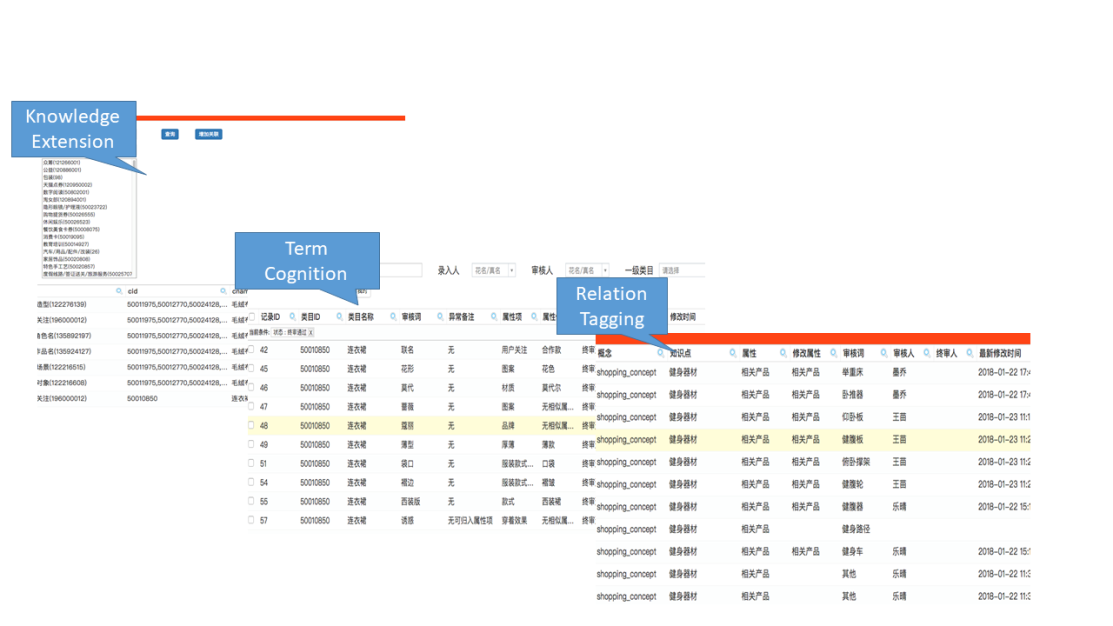

A unified representation framework for scattered data: To manage scattered data, we first need to define a global schema representation and storage method. Then, we need to merge the conceptual data based on the schema, and may additionally mine and discover properties through data mining and associate data through relationship extraction or knowledge representation.

And, then, we come back to deep data cognition. Deep cognition involves two aspects, data and data association. By analyzing data on e-commerce user behaviors and items, we can cognize users' intentions regarding specific items. Based on the input and summary of external data, we can explore the relationship between a more global view and user needs outside the item system.

To address the preceding problems, we have created the knowledge graph named E-commerce ConceptNet. It aims to establish a knowledge system for the e-commerce field. Moreover, we intend to link users, items, and scenes in the e-commerce setting to empower the demand side of E-commerce ConceptNet and the industries covered by Taobao by cognizing user needs in an in-depth manner.

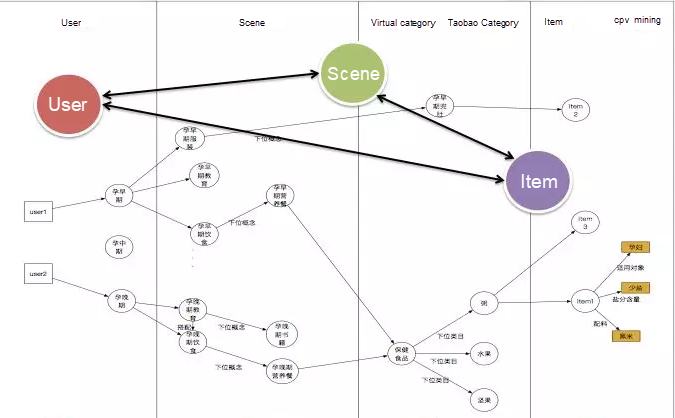

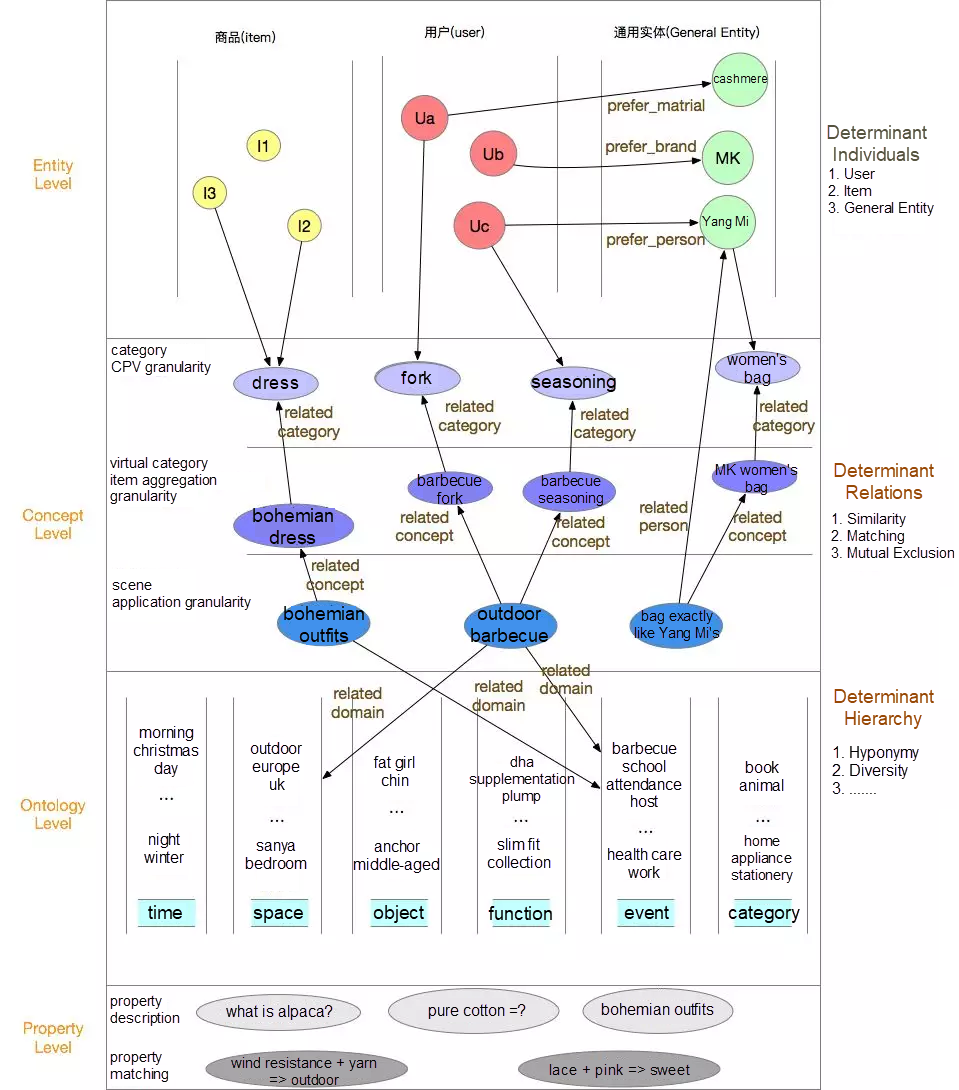

E-commerce ConceptNet is divided into four key modules. User-scene-item association can be achieved through building different types of concepts that include users, scenes, virtual categories, and items in a heterogeneous graph.

The user graph includes data related to specific user groups such as the elderly and children as well as data relating to individual user preferences for category properties in addition to general user profile information, such as age, gender, and purchasing power.

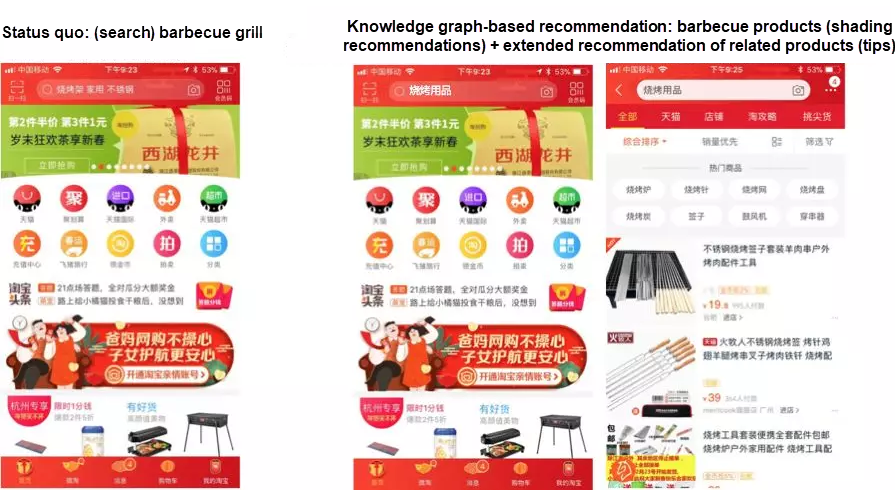

A scene can be viewed as the conceptualization of user needs. The main work of the scene graph is to generalize user needs collected from existing queries and titles into a generic scene concept, and then to create concepts such as "outdoor barbecue" and "holiday outfits." Based on continuously refined user needs, we can abstract "cross-category" and "category" fields, which together represent a certain type of user needs, into a scene concept.

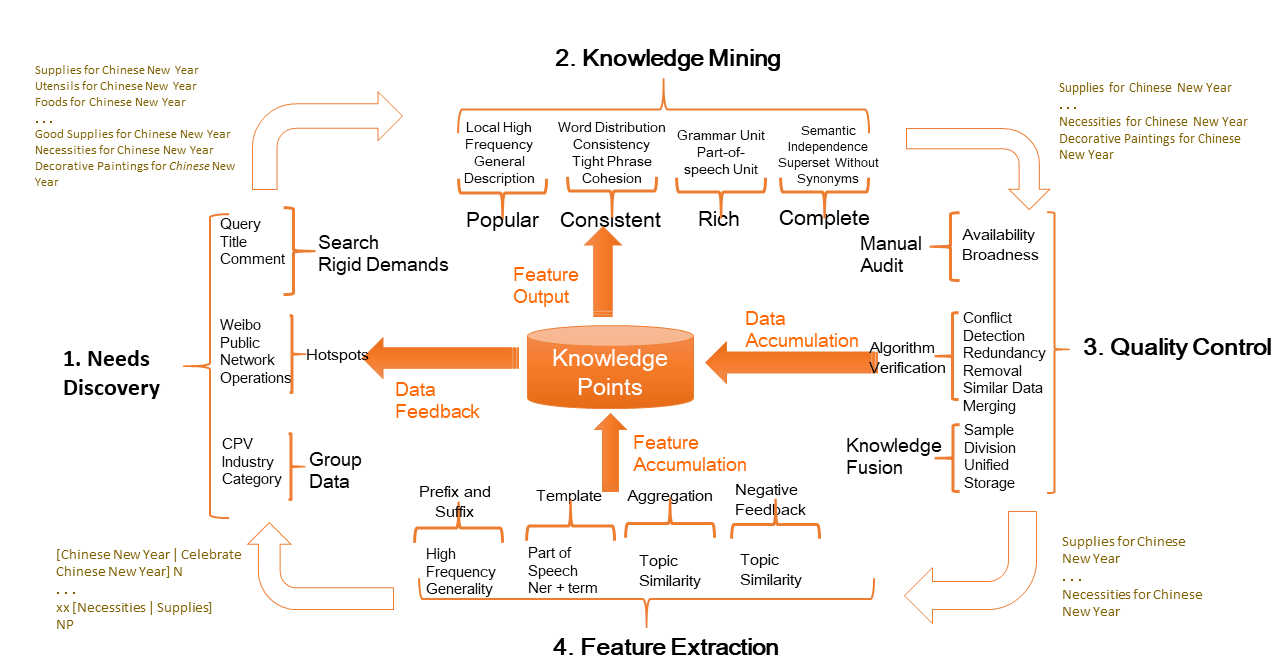

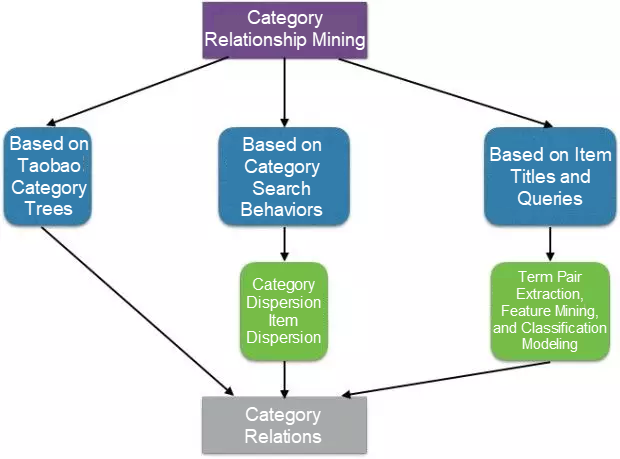

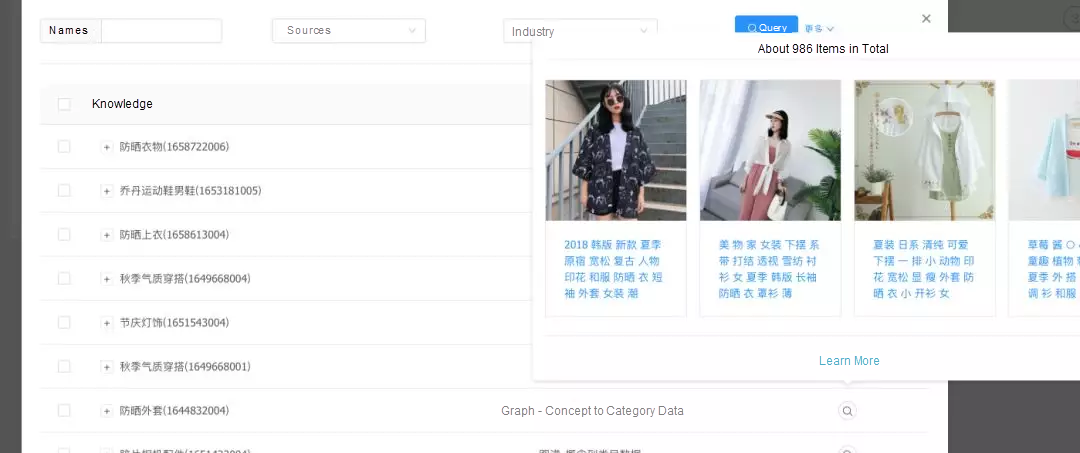

By mining concepts, we can develop the nodes on the graph. On top of concept mining, we have established the relationship between a concept and a category, and between concepts. This is equivalent to creating a directed edge on the graph and calculating the strength of the edge. The specific process is shown in the following figure:

Thus far, we have mined more than 100,000 scene concepts, with one million categories associated with them.
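To make the idea of a directed, weighted edge between a concept and a category concrete, here is a minimal sketch. It assumes the strength is simply the share of a concept's observations that fall into a category. The source does not say how edge strength is actually calculated, so the formula, the function name `edge_strengths`, and the sample observations are all illustrative.

```python
from collections import defaultdict


def edge_strengths(observations):
    """Return {(concept, category): strength}.

    Strength is assumed here to be the fraction of a concept's observations
    (for example, clicks) that land in the given category.
    """
    pair_counts = defaultdict(int)
    concept_counts = defaultdict(int)
    for concept, category in observations:
        pair_counts[(concept, category)] += 1
        concept_counts[concept] += 1
    return {
        (concept, category): count / concept_counts[concept]
        for (concept, category), count in pair_counts.items()
    }


# Hypothetical (concept, category) observations.
observations = [
    ("outdoor barbecue", "grill"),
    ("outdoor barbecue", "grill"),
    ("outdoor barbecue", "charcoal"),
    ("outdoor barbecue", "skewer"),
    ("holiday outfits", "dress"),
]
strengths = edge_strengths(observations)
```

Each key is a directed edge from a concept to a category, and the strengths of all edges leaving one concept sum to 1 under this assumption.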

We had to refine categories because the current category system often is either too coarse or too fine. Category refinement involves mainly two aspects:

The first aspect is category aggregation. To understand this concept, consider this example. "Dress" is a category, but it can be found under different categories, such as "women's wear" and "children's wear," according to industry-specific administration. As a result, "dress" exists in two tier-1 categories. In this case, a common-sense-based system is required to maintain a single, shared notion of "dress."

Then, there's category splitting. Category refinement is helpful for when the existing category system is insufficient to cover the needs of a user group. For example, in the "traveling in Tibet" scene, we need more details for the "scarf" category. Therefore, a virtual category called "windproof scarf" is needed (as it's pretty windy in Tibet). This process also involves entity and concept extraction, as well as relation classification. As of now, we have focused on establishing hyponymic relations for categories and sub-categories.

And, as such, we have integrated the CPV category tree and category association.

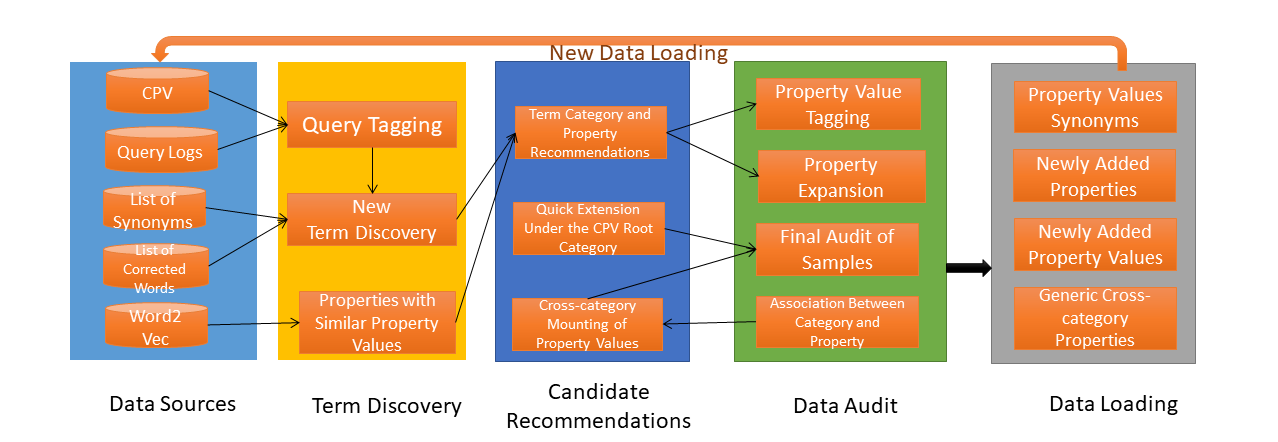

Now, another concept is phrase mining. For the item graph, we need to do more for the cognition of item properties. The premise of a sound CPV system is the cognition of phrases. For this, we have established a closed loop of CPV mining under the Bootstrap framework to effectively accumulate CPV data in the long term and expand queries and item cognition. This is also one of the data sources for item tagging.

We have audited the top 70 categories ranked by page view (PV) and added more than 120,000 CPV pairs. The percentage of queries with terms fully recognized after segmentation has increased from 30% to 60%. Currently, 70% is the maximum rate of coverage for non-long-tail keywords as medium-grained segmentation is used in mining. We will continuously expand the coverage of mining after the phrase mining process is added. Currently, the data is generated every day as basic data for category prediction and intelligent interaction.
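The coverage figure quoted above can be read as the share of queries whose segmented terms are all recognized. The sketch below shows one way to compute such a rate; the function name `full_coverage_rate`, the vocabulary, and the sample queries are assumptions for illustration and do not reflect the production metric definition.

```python
def full_coverage_rate(segmented_queries, known_terms):
    """Share of queries in which every segmented term is a known term."""
    if not segmented_queries:
        return 0.0
    covered = sum(
        1
        for terms in segmented_queries
        if terms and all(t in known_terms for t in terms)
    )
    return covered / len(segmented_queries)


# Hypothetical vocabulary of recognized CPV terms and segmented queries.
known_terms = {"red", "dress", "windproof", "scarf"}
segmented = [
    ["red", "dress"],
    ["windproof", "scarf"],
    ["red", "xyz"],
    ["cotton", "scarf"],
]
before = full_coverage_rate(segmented, known_terms)
# Mining a new term ("cotton") raises the coverage.
after = full_coverage_rate(segmented, known_terms | {"cotton"})
```

A query counts only when all of its terms are recognized, so a single unknown term such as "xyz" keeps the whole query out of the covered set.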

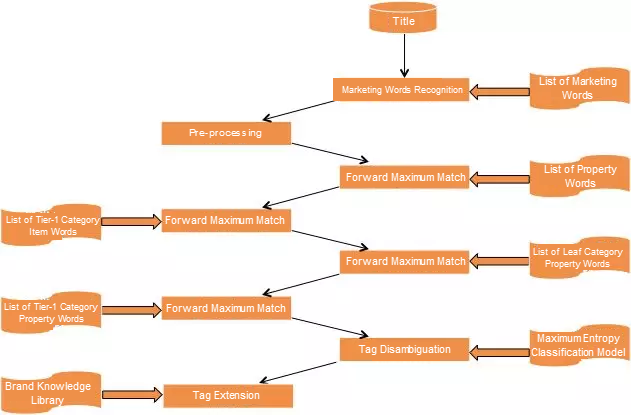

Then, there's item tagging. Item tagging is a key technology for associating concepts with items. The data generated in the preceding three phases is finally associated with items by tagging. After tagging is complete, a complete closed loop of semantic cognition from queries to items takes shape.

At present, we have launched the first edition of item tagging.
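As a simplified picture of what associating concepts with items can look like, the sketch below tags an item with a concept when the item title contains one of the concept's phrases. This substring matching is only an assumption for illustration: the source does not describe the tagging model, and the names `tag_items` and `concept_phrases` are hypothetical.

```python
def tag_items(items, concept_phrases):
    """Return {item_id: sorted list of concepts whose phrases appear in the title}."""
    tags = {}
    for item_id, title in items.items():
        title_lower = title.lower()
        tags[item_id] = sorted(
            concept
            for concept, phrases in concept_phrases.items()
            if any(phrase in title_lower for phrase in phrases)
        )
    return tags


# Hypothetical items and concept-to-phrase mappings.
items = {
    "i1": "Portable Charcoal Grill for Camping",
    "i2": "Windproof Scarf for Women",
    "i3": "USB Cable",
}
concept_phrases = {
    "outdoor barbecue": ["grill", "charcoal", "skewer"],
    "traveling in Tibet": ["windproof scarf", "oxygen"],
}
tags = tag_items(items, concept_phrases)
```

Once every item carries its concept tags, a query that resolves to a concept can be followed through to the tagged items, which is the closed loop described above.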

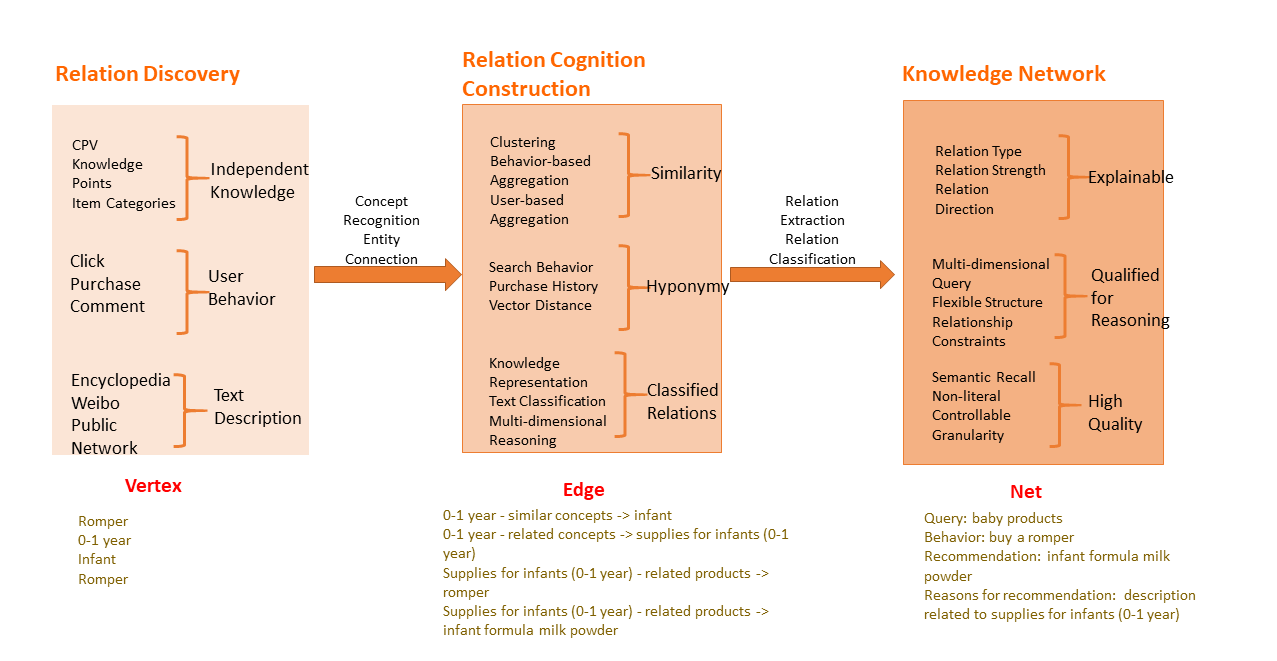

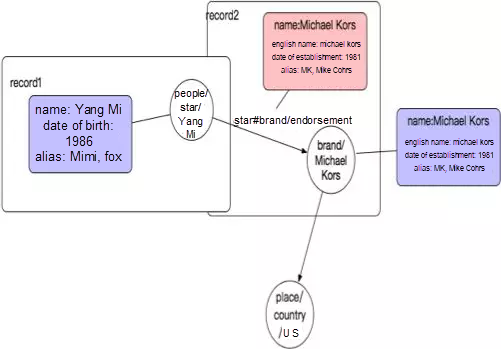

During knowledge construction, we discovered that a globally unified schema representation system is required. Therefore, we studied the construction of the WordNet and ConceptNet systems, and developed a conceptual representation system, which now makes up E-commerce ConceptNet. Our ConceptNet aims to understand e-commerce user needs from the semantic perspective, conceptualize them, and then map them to semantic ontologies. By using relations at the lexical level, E-commerce ConceptNet formalizes the relations between semantic ontologies, represents concept hierarchies through ontological hierarchies, and abstracts classes of entities and relations between the entities through the relations between concepts.

At the data level, to describe an entity, we first need to define it as an instance-of-class, of which a class can usually be represented by a concept. Different concepts have different properties. A set of properties of a type of concepts is called a conceptual schema, and the concepts of the same schema usually belong to different domains. A domain has its own semantic ontologies.
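The data-level description above can be pictured with a few small data classes. This is a minimal sketch under assumptions: the class names, the field names, and the `undefined_properties` check are invented for this example and are not the actual E-commerce ConceptNet schema.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ConceptSchema:
    """A named set of properties shared by one type of concept."""
    name: str
    properties: frozenset


@dataclass(frozen=True)
class Concept:
    """A concept that belongs to a domain and follows a schema."""
    name: str
    domain: str
    schema: ConceptSchema


@dataclass
class Entity:
    """An entity described as an instance of a class (concept)."""
    name: str
    instance_of: Concept
    values: dict = field(default_factory=dict)

    def undefined_properties(self):
        """Property names on the entity that its concept's schema lacks."""
        return sorted(set(self.values) - self.instance_of.schema.properties)


# Hypothetical schema, concept, and entity.
category_schema = ConceptSchema("category", frozenset({"material", "season"}))
scarf = Concept("scarf", "category", category_schema)
wool_scarf = Entity("wool scarf", scarf, {"material": "wool", "colour": "red"})
extra = wool_scarf.undefined_properties()
```

Keeping the schema separate from the concept lets several concepts share one property set, and the check makes it easy to spot properties that still need to be added to a schema.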

Through such ontological hierarchies as "UK"-is-part-of-"UK", we can formalize concept hierarchies and representations. To build E-Commerce ConceptNet coarse-to-fine, we have defined a set of representation methods for the e-commerce concept system. By constantly refining ontologies and concepts as well as their relations, we managed to associate users, items, and even external entities.

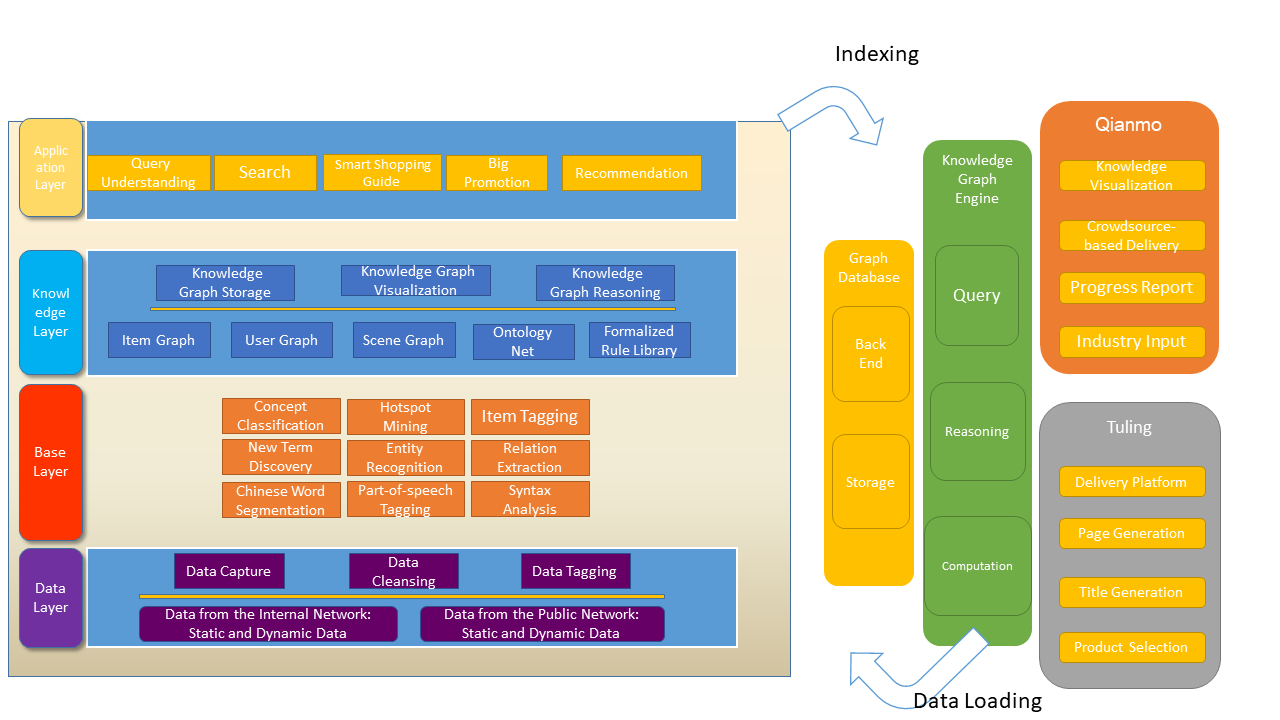

In general, we use a data service mid-end to support the knowledge engine, and then use Tuling to produce and apply knowledge.

Currently, most of our knowledge is provided in the form of cards. Tuling provides a complete set of service tools exposed through cloud topics. Then, there's also selecting concepts. You can select all your cloud topics and deliver them by channel.

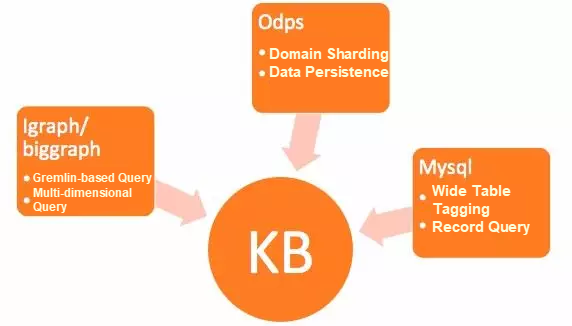

With regard to storage media, we use MySQL for flexible tagging, a graph database for full queries, and MaxCompute for persistent data version management.

Data is split into vertex tables and edge tables before being imported into iGraph and BigGraph. You can query data online through Gremlin.
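The split into vertex and edge tables can be sketched in a few lines. The layout below (integer vertex IDs and `(source, relation, target)` edge rows) is an assumption made for illustration. It is not the import format required by iGraph or BigGraph, and the function name `split_tables` is hypothetical.

```python
def split_tables(triples):
    """Split (source, relation, target) triples into a vertex table and an edge table."""
    vertex_ids = {}
    edge_table = []
    for src, relation, dst in triples:
        for name in (src, dst):
            # Assign the next integer ID the first time a vertex is seen.
            vertex_ids.setdefault(name, len(vertex_ids))
        edge_table.append((vertex_ids[src], relation, vertex_ids[dst]))
    vertex_table = [(vid, name) for name, vid in vertex_ids.items()]
    return vertex_table, edge_table


# Hypothetical triples.
triples = [
    ("outdoor barbecue", "needs", "grill"),
    ("outdoor barbecue", "needs", "charcoal"),
    ("grill", "is_a", "kitchenware"),
]
vertex_table, edge_table = split_tables(triples)
```

Edges refer to vertices only by ID, so the two tables can be loaded separately and joined again by the graph store.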

A graph engine module is encapsulated into the upper layer of the graph database to provide different triggering scenes and the multi-path and multi-hop recall function for items. Currently, the expansion of recall based on the user, item list, and query is available. When this is used with search and discovery, the query API is available for queries and tests.
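Multi-hop recall of items can be approximated with a bounded breadth-first search. The sketch below uses an in-memory adjacency dictionary and a hop limit. The graph contents, the `item:` prefix convention, and the function name `multi_hop_recall` are assumptions for this example, and the real graph engine is not described in enough detail in the source to reproduce.

```python
from collections import deque


def multi_hop_recall(graph, start, max_hops, is_item):
    """Breadth-first search from start, returning (item, hops) pairs within max_hops."""
    seen = {start}
    queue = deque([(start, 0)])
    recalled = []
    while queue:
        node, hops = queue.popleft()
        if hops == max_hops:
            continue  # hop budget used up on this path
        for neighbor in graph.get(node, []):
            if neighbor in seen:
                continue
            seen.add(neighbor)
            if is_item(neighbor):
                recalled.append((neighbor, hops + 1))
            else:
                queue.append((neighbor, hops + 1))
    return recalled


# Hypothetical heterogeneous graph: user -> scene -> category -> items.
graph = {
    "user:camper": ["scene:outdoor barbecue"],
    "scene:outdoor barbecue": ["category:grill", "item:1001"],
    "category:grill": ["item:1002", "item:1003"],
}


def is_item(node):
    return node.startswith("item:")


three_hops = multi_hop_recall(graph, "user:camper", 3, is_item)
two_hops = multi_hop_recall(graph, "user:camper", 2, is_item)
```

The same function covers the other triggers mentioned above by changing the start node, for example starting from a query node or from each item in a list.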

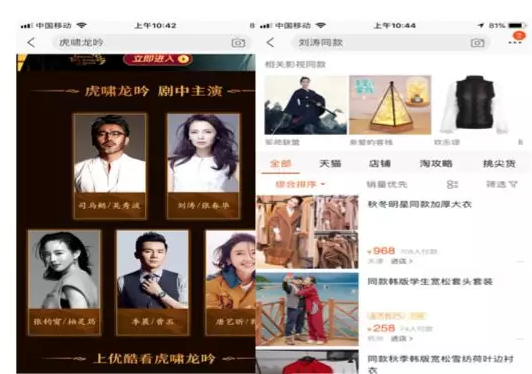

Nearly 10,000 scenes have been launched in cloud topics in the form of knowledge cards. Compared with items in the Guess Your Favorite channel on the Taobao homepage, both the click-through rate (CTR) and the diversity of the recommended items have greatly improved. Now, we are exploring data diversity.

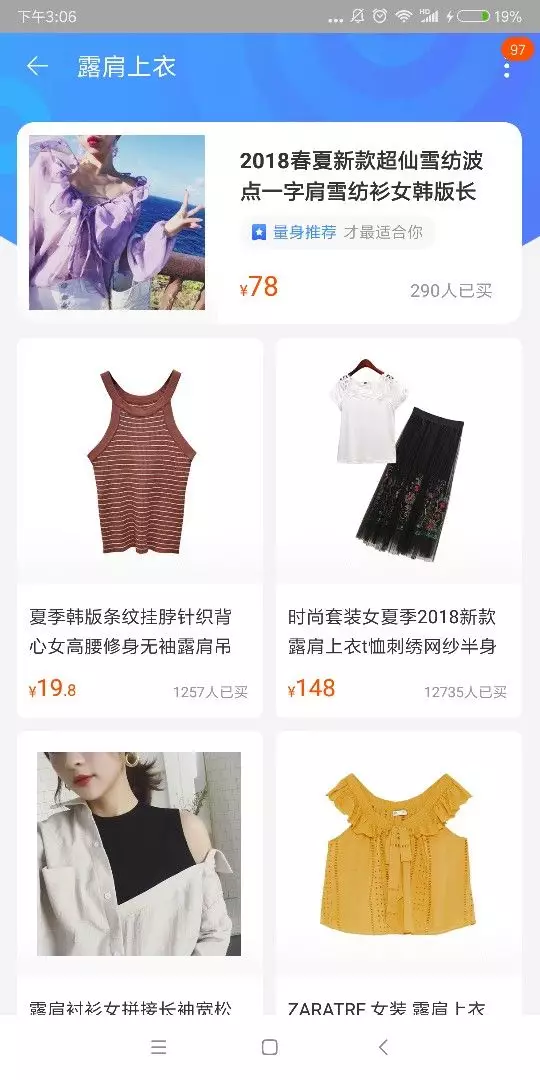

A Channel for Tank Tops

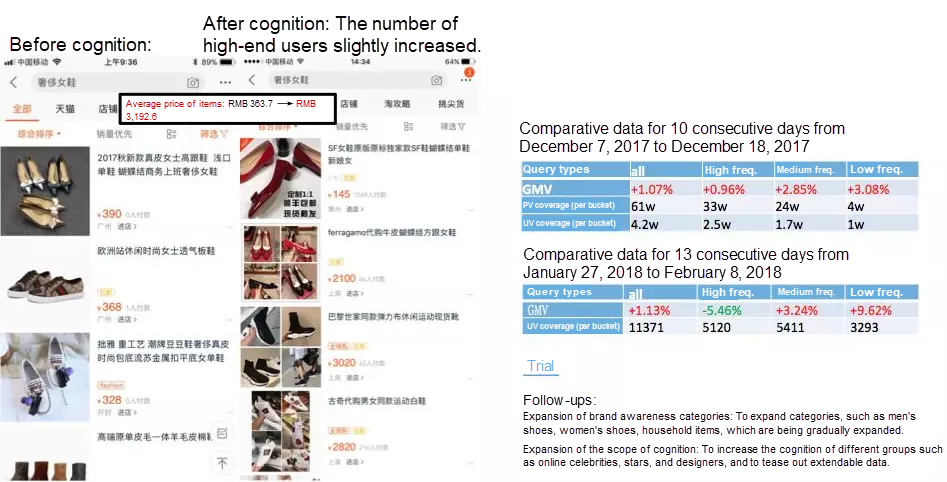

Main Page Example (Comparison)

A Search Example

At present, E-commerce ConceptNet has been developed for about a year. There is still a lot of work to be refined, of which the following will be our future areas of focus:

