By Yuan Xiaodong, Apache RocketMQ Committer, RocketMQ Streams Co-Founder, and Head of Alibaba Cloud Security Intelligent Computing Engine

RocketMQ Streams can be defined in four ways:

(1) Lib Package: It is lightweight. You only need to download the source code from Git and compile it into a JAR package to use it.

(2) SQL Engine: It is compatible with Flink SQL syntax as well as UDF, UDTF, and UDAF. You can write Flink syntax directly or migrate existing Flink tasks.

(3) Lightweight Edge Computing Engine: RocketMQ Streams is deeply integrated with RocketMQ. RocketMQ supports MQTT, so RocketMQ Streams supports cloud computing scenarios. In addition, RocketMQ supports the storage and transfer of messages, so it can meet the needs of most edge computing scenarios.

(4) SDK: Its components can be used independently or embedded in the business.

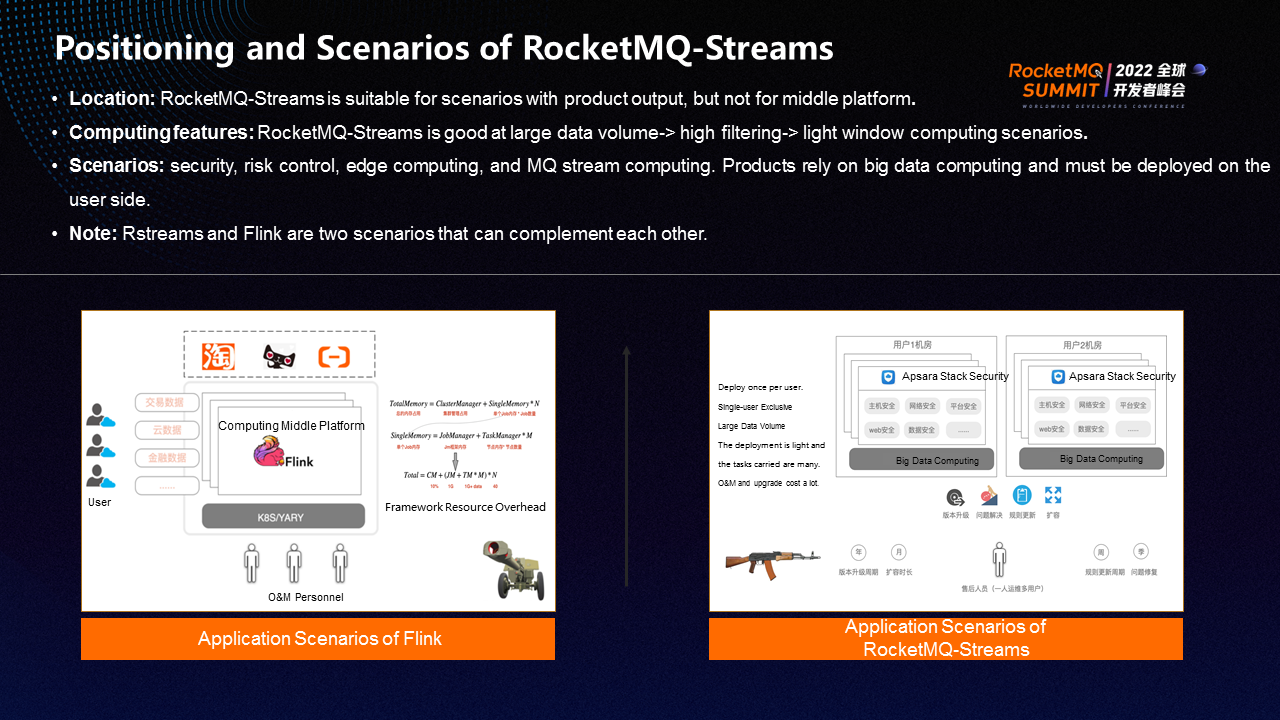

Existing big data frameworks (such as Flink, Spark, and Storm) are mature. However, we still developed an open framework, RocketMQ Streams, for the following reasons.

Flink is a heavyweight big data component. Cluster overhead and framework overhead account for a large proportion of its resource usage, and the O&M cost is high. Therefore, it is best suited to a middle platform that is deployed and run by specialized O&M personnel and serves many businesses.

However, in actual business, some scenarios cannot be served by the middle platform. For example, a product depends on big data capabilities and must be delivered to users and deployed in their IDCs. If the big data computing capability is deployed together with the product, three problems occur. First, deployment is troublesome because the deployment cost of Flink is high. Second, the maintenance cost is high. Third, resources are a problem: Flink tasks need preset resources, but log volumes differ from user to user, so the preset resources must be large, and Flink cannot meet the requirement.

RocketMQ Streams is positioned for scenarios in which computing is delivered together with a product rather than through a middle platform. For example, security risk control, edge computing, message queues, and stream computing are suitable for RocketMQ Streams. Therefore, the capabilities of RocketMQ Streams and Flink complement each other.

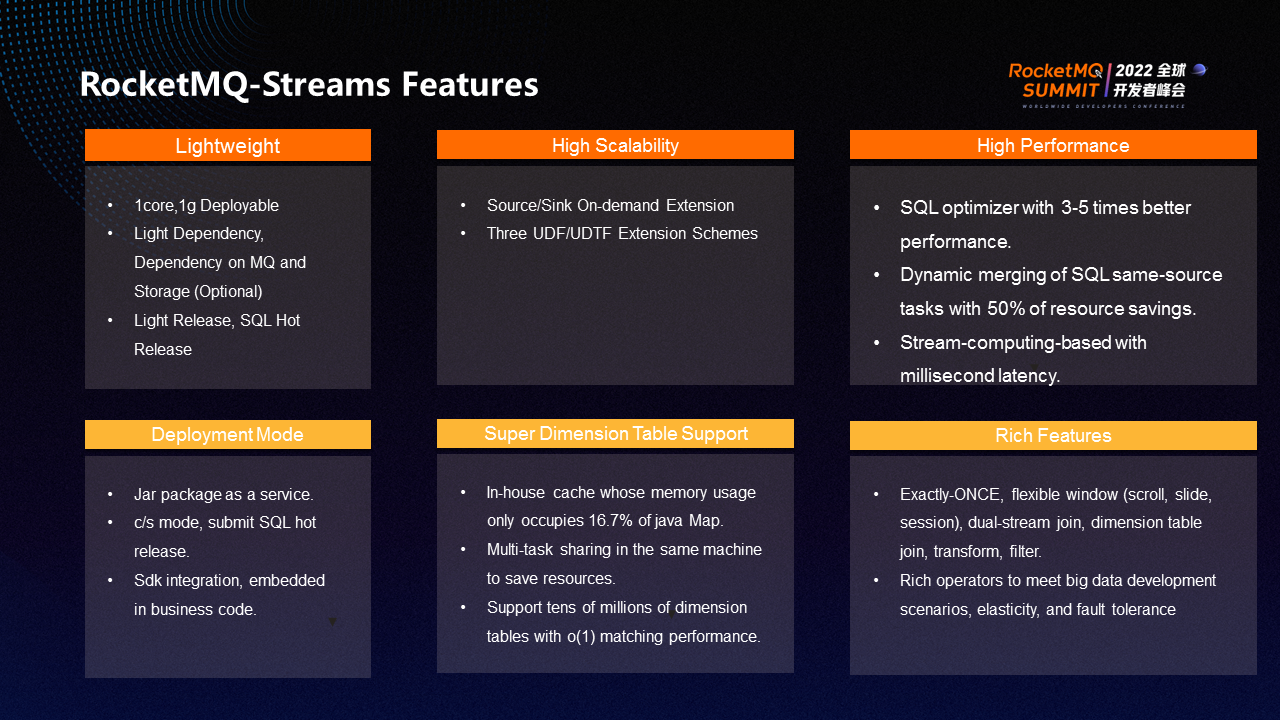

RocketMQ Streams has the following features:

(1) Lightweight: RocketMQ Streams can be deployed with 1 core and 1 GB of memory. It has no dependencies other than the message queue. It is easy to publish, and updates can be released through SQL hot updates.

(2) Highly Extensible: Source, Sink, and UDF can all be extended.

(3) High Performance: Many optimizations have been made for filtering, so performance improves by 3-5 times in scenarios with heavy filtering. RocketMQ Streams also makes tasks lightweight: resources can be saved by 50% in scenarios where SQL tasks that share the same source are merged. At the same time, it is based on stream computing and can achieve millisecond latency.

(4) Multiple Deployment Modes: The JAR package itself is the service. You can submit SQL in C/S mode to achieve hot release, or integrate it into your business through the SDK.

(5) Super-Large Dimension Tables: The cache developed for RocketMQ Streams uses only 16.7% of the memory of a Java Map. Multiple tasks on the same machine can share one dimension table to save resources. Dimension tables can hold tens of millions of rows, and you do not need to specify indexes. Indexes are automatically inferred from the join conditions to achieve O(1) matching similar to a map.

(6) Rich Features: It supports exactly-once semantics and flexible windows (such as tumbling windows, sliding windows, and distribution windows), as well as dual-stream join, dimension table join, conversion, filtering, and other features needed in big data development scenarios.
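As a rough illustration of the dimension-table point in item (5), the following minimal Python sketch builds a hash index on whichever column the join condition names, so that each stream record is matched in O(1) time on average. The function names, column names, and sample rows are hypothetical and are not the RocketMQ Streams API.

```python
from collections import defaultdict

def build_index(dim_rows, join_column):
    """Index dimension rows by the column named in the join condition."""
    index = defaultdict(list)
    for row in dim_rows:
        index[row[join_column]].append(row)
    return index

def join_stream(stream, index, stream_key):
    """Inner join: enrich each stream record with its matching dimension rows."""
    for record in stream:
        for dim in index.get(record[stream_key], []):
            yield {**dim, **record}

# Hypothetical dimension table and stream records.
dim_rows = [
    {"ip": "10.0.0.1", "owner": "team-a"},
    {"ip": "10.0.0.2", "owner": "team-b"},
]
stream = [
    {"src_ip": "10.0.0.2", "cmd": "ls"},
    {"src_ip": "10.0.0.9", "cmd": "ps"},
]

# The index column is taken from a join condition such as "stream.src_ip = dim.ip".
index = build_index(dim_rows, "ip")
joined = list(join_stream(stream, index, "src_ip"))
```

Only the record whose `src_ip` exists in the dimension table is emitted; the lookup cost does not depend on the size of the table.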

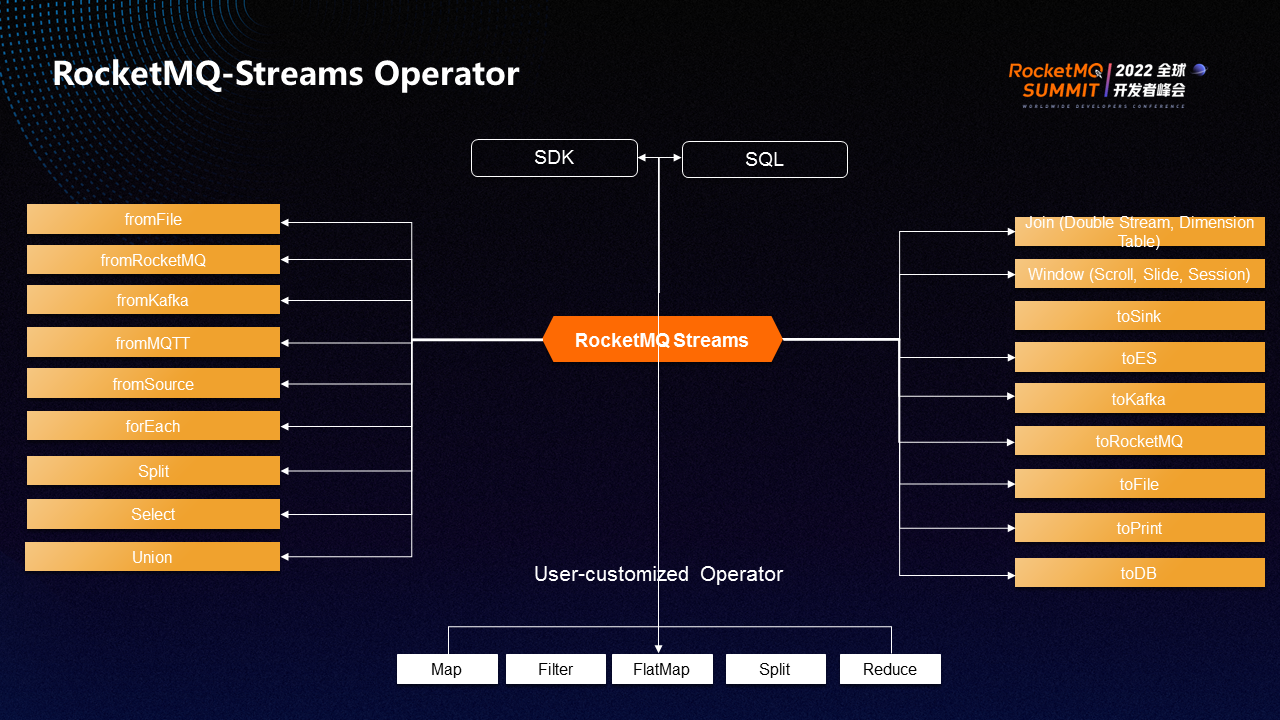

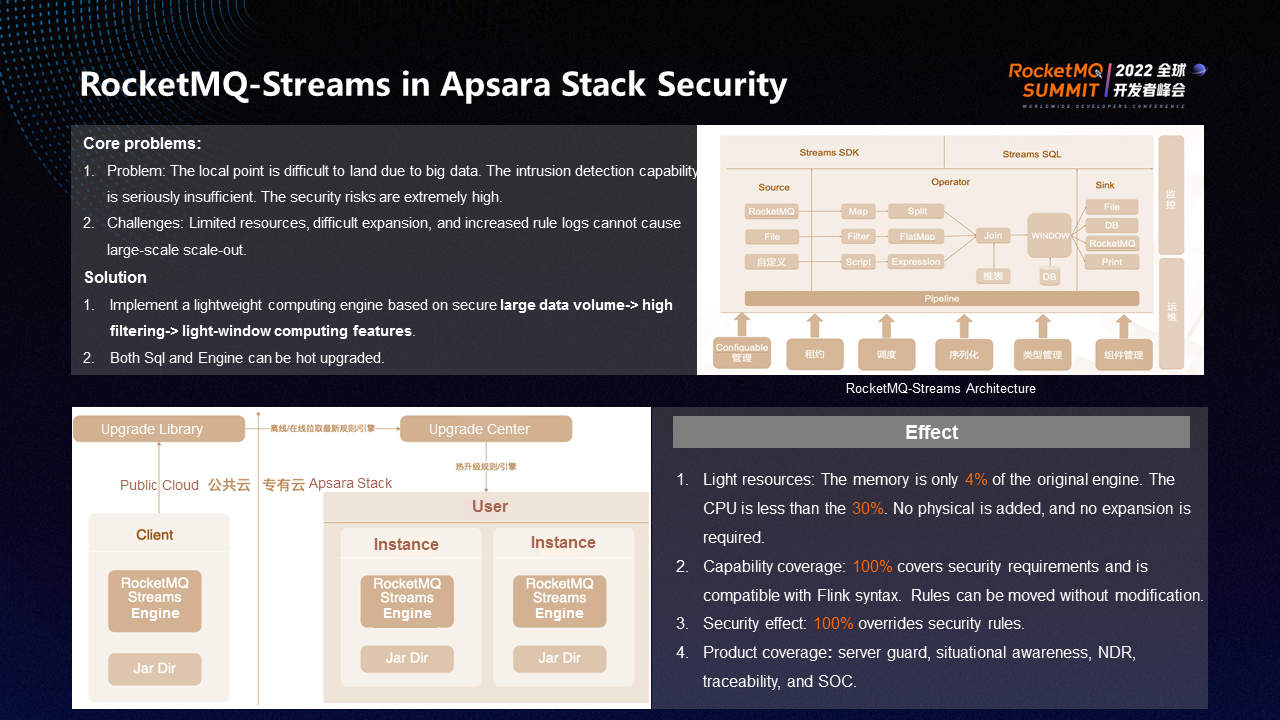

The preceding figure shows some conventional big data operators supported by RocketMQ Streams. They are similar to other big data frameworks and can be extended.

Whether it is Spark or Flink, a successful big data component often takes a large team several years to polish and complete. Implementing RocketMQ Streams faces the following challenges:

Different ideas from Flink are necessary to implement a lightweight and high-performance big data computing framework.

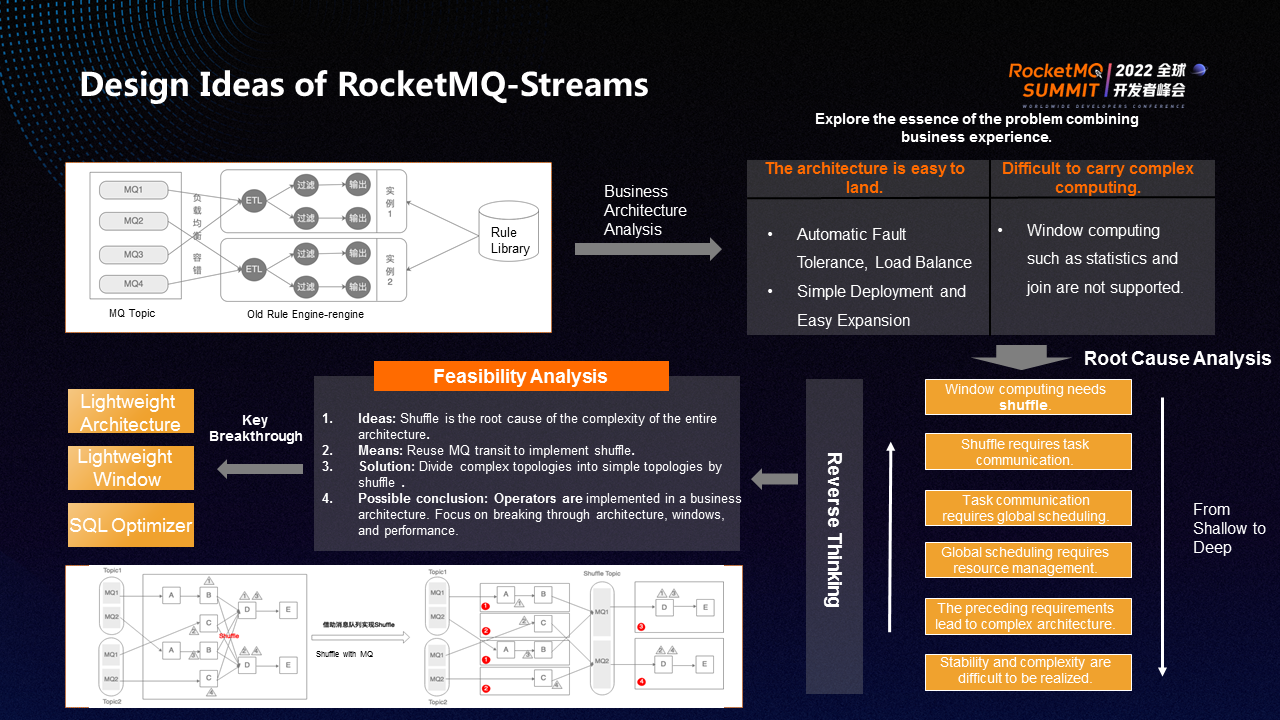

In terms of business architecture, a conventional service built on RocketMQ always has the same structure: input, stateless computing, and output. This architecture has two advantages. First, it is lightweight: load balancing and fault tolerance are handled by RocketMQ and do not need to be implemented elsewhere. Second, it is simple to deploy: if messages accumulate in RocketMQ, you can scale out the service directly to increase consumption.

However, this conventional architecture has trouble implementing complex computing (including statistics, join, and window computing). Such computing requires shuffle, and shuffle requires communication between operators. Communication between operators requires global scheduling and global task management, which in turn require resource management and allocation of task resources. These requirements lead to a complex architecture that is difficult to make stable in a short period.

Thinking in reverse, the root of the complexity is shuffle, and the solution is to implement shuffle by relaying data through the message queue. Each shuffle becomes a boundary that splits a complex topology into simple ones. A lightweight and high-performance big data computing framework can be implemented only by solving three difficulties: building the overall architecture, supplementing window computing, and improving performance.
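The idea of relaying shuffle through a message queue can be sketched as follows. This is a minimal in-memory illustration under stated assumptions, not the RocketMQ Streams implementation: `ShuffleTopic` is a hypothetical stand-in for a topic with several shards, and CRC32 is an arbitrary choice of stable hash.

```python
import zlib
from collections import defaultdict

def shard_for(key, shard_count):
    """Stable hash so that the same key always lands on the same shard."""
    return zlib.crc32(key.encode("utf-8")) % shard_count

class ShuffleTopic:
    """Hypothetical in-memory stand-in for a message-queue topic with shards."""
    def __init__(self, shard_count):
        self.shards = [[] for _ in range(shard_count)]

    def send(self, key, value):
        self.shards[shard_for(key, len(self.shards))].append((key, value))

# Upstream stateless sub-job: write each record to the shard chosen by its key.
topic = ShuffleTopic(4)
for user, amount in [("alice", 5), ("bob", 3), ("alice", 7), ("carol", 1), ("bob", 2)]:
    topic.send(user, amount)

# Downstream stateful sub-job: each consumer owns one shard and aggregates locally.
totals = {}
for shard in topic.shards:
    local = defaultdict(int)
    for key, value in shard:
        local[key] += value
    totals.update(local)
```

Because every record with the same key goes to the same shard, the downstream sub-job never needs to talk to other operators; the queue is the only link between the two sub-jobs.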

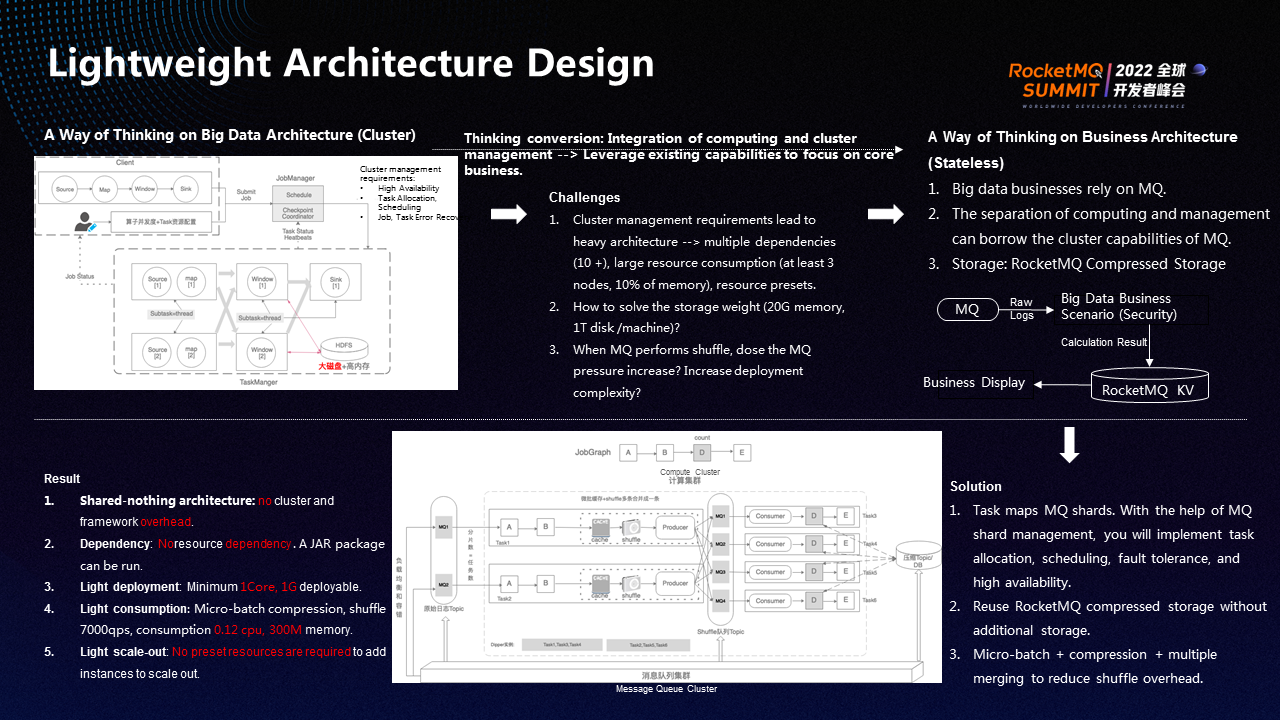

Big data architectures include Spark and Flink. Their general design idea is to integrate computing with cluster management. Cluster management must solve issues such as high availability, task allocation and scheduling, and job and task fault tolerance. Therefore, implementing a big data architecture faces huge challenges.

(1) Cluster management makes the architecture heavier, since high availability means that additional components must be introduced. Moreover, in terms of resource consumption, cluster mode requires at least three nodes, and the overhead of the cluster may take up 10% of memory. Once the management structure is clustered, task allocation and resources need to be preset.

(2) The state storage requirements of computing such as window computing are strict. Deploying big data components requires large memory and large disks, which undoubtedly increases the complexity of the architecture.

(3) The solution of implementing shuffle through message queue transit may increase the pressure on RocketMQ and the complexity of deployment.

These three points are the challenges to consider when building a lightweight architecture on the conventional big data design. A different way of thinking is to focus on the core business and reason from the business architecture.

Conventional big data services already have a message queue, whether or not it is RocketMQ. Most message queues manage shards with load balancing and fault tolerance. By separating computing from management, the computing side can borrow the cluster capabilities of the MQ. Storage can be implemented through RocketMQ's compressed storage.

The smallest scheduling unit of a message queue is the shard, and the MQ performs load balancing, fault tolerance, and scheduling on shards. As long as you map tasks to shards, MQ shard management gives you task management without an additional management layer. Likewise, reusing the compressed storage of RocketMQ removes the need for additional storage. However, using the MQ for shuffle increases the pressure on the MQ. As the number of messages in the MQ increases, both CPU and overall resource usage increase. Therefore, policies must be adopted to reduce resource consumption.

Window computing does not have strict real-time requirements. For example, a ten-minute window only needs to produce results every ten minutes. Therefore, a micro-batch method can be adopted. For example, every 1,000 records are processed as one batch. The 1,000 records are grouped by shuffle key, and the records in each group are merged into one message. The pressure on RocketMQ comes mainly from QPS: when each message is larger but the QPS is lower, the pressure on the CPU is small. Compression is then applied to reduce the data volume, which further reduces the shuffle overhead.
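The micro-batch policy can be sketched as follows: take a batch of 1,000 records, group them by the shard that their shuffle key maps to, merge each group into a single message, and compress it. This is only an illustration; the JSON encoding, zlib compression, and function names are assumptions rather than the formats used by RocketMQ Streams.

```python
import json
import zlib
from collections import defaultdict

def build_shuffle_messages(records, key_field, shard_count):
    """Group a micro-batch by target shard and emit one compressed message per shard."""
    by_shard = defaultdict(list)
    for record in records:
        shard = zlib.crc32(str(record[key_field]).encode("utf-8")) % shard_count
        by_shard[shard].append(record)
    return {
        shard: zlib.compress(json.dumps(group).encode("utf-8"))
        for shard, group in by_shard.items()
    }

def read_shuffle_message(payload):
    """Inverse of the merge step: decompress and split back into records."""
    return json.loads(zlib.decompress(payload).decode("utf-8"))

# A hypothetical batch of 1,000 records with a small number of distinct keys.
batch = [{"host": f"host-{i % 8}", "event": "login", "count": 1} for i in range(1000)]

messages = build_shuffle_messages(batch, "host", shard_count=4)
restored = [r for payload in messages.values() for r in read_shuffle_message(payload)]

raw_bytes = len(json.dumps(batch).encode("utf-8"))
sent_bytes = sum(len(p) for p in messages.values())
```

Instead of 1,000 sends, the batch produces at most one message per shard, and `sent_bytes` is smaller than `raw_bytes` because the merged payloads compress well.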

The final results are listed below:

(1) With the shared-nothing architecture, there is no cluster and framework overhead.

(2) Light Dependency: No additional dependencies are added. There is an MQ dependency, but an MQ is needed anyway, and the MQ already used by the business can be reused directly.

(3) Computing nodes need no other dependencies, so deployment is lightweight.

(4) Light Consumption: Shuffle relay uses micro-batching, compression, and merge policies. 7,000 QPS requires only 0.12 CPU and 300 MB of memory.

(5) Light Scale-Out: Scale-out is simple because the shared-nothing architecture is similar to a web server. When messages accumulate, add instances to scale out.

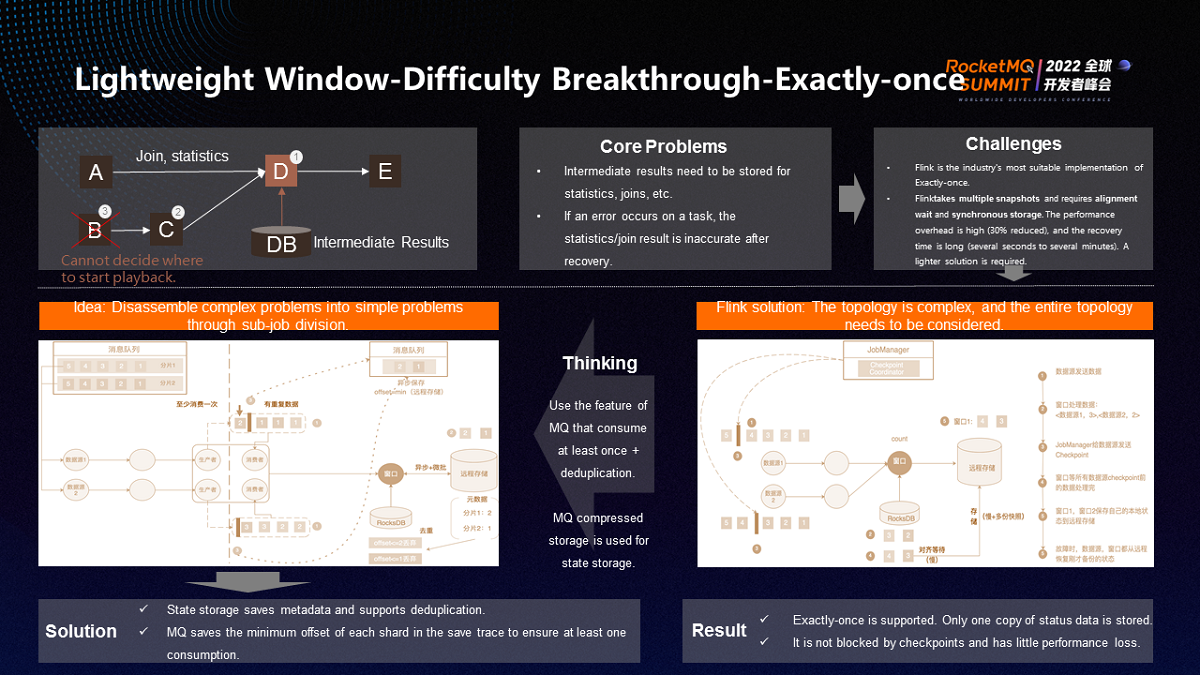

Exactly-once is difficult. A stream is unbounded, so it must be divided into windows before statistical computing can be performed. As shown in the figure, assume that operator D is a count. There are two ways to calculate how many pieces of data are received in ten minutes.

(1) Each piece of data is cached, and all data is counted every ten minutes to obtain the result. This method puts great pressure on storage and is inefficient.

(2) Only the intermediate result is stored for each piece of data. For example, the intermediate result after the first piece of data is 1, after the second is 2, and after the third is 3. However, this also has a problem. If a node or a task fails, the intermediate result becomes uncertain. If 3 is down, 2 and 1 may continue to complete the calculation, or fail to calculate. Therefore, it is unknown which message to play back when B is pulled up. In this situation, exactly-once cannot be guaranteed.
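The two ways of counting can be contrasted with a small Python sketch. The class names are hypothetical, and the replay at the end only simulates what happens when a message is played back without knowing that it was already counted.

```python
class BufferedWindowCount:
    """Way 1: cache every record and count when the window fires."""
    def __init__(self):
        self.buffer = []

    def add(self, record):
        self.buffer.append(record)

    def fire(self):
        result = len(self.buffer)
        self.buffer.clear()
        return result

class IncrementalWindowCount:
    """Way 2: keep only the running intermediate result."""
    def __init__(self):
        self.count = 0

    def add(self, record):
        self.count += 1

    def fire(self):
        result, self.count = self.count, 0
        return result

events = ["e1", "e2", "e3"]

buffered = BufferedWindowCount()
incremental = IncrementalWindowCount()
for event in events:
    buffered.add(event)
    incremental.add(event)

# Simulated recovery: "e3" is replayed although it was already counted.
with_replay = IncrementalWindowCount()
for event in events + ["e3"]:
    with_replay.add(event)

buffered_result = buffered.fire()
incremental_result = incremental.fire()
replay_result = with_replay.fire()
```

Both methods agree on the normal path, but the second stores one integer instead of every record. The replayed message inflates the count to 4, which is the duplicate problem that exactly-once has to solve.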

Flink offers the most suitable solution for exactly-once computing in the industry. The idea is simple. The state of the entire cluster is snapshotted at a certain time and again at intervals. If a problem occurs, the state of all operators is restored from the snapshot and computing resumes.

In the overall process, the JobManager regularly sends checkpoints to the data sources. The checkpoints then flow with the data through the operators. When an operator receives a checkpoint, it needs to back up its state. However, if an operator such as a window operator has two inputs and receives a checkpoint from only one of them, it cannot back up the state yet. It must wait until the checkpoint from the other input has arrived. This design is called alignment waiting. The waiting time depends on the difference in flow rate between the two inputs. After both checkpoints arrive, the state is stored synchronously, and the local state is saved to remote storage.

This procedure is costly. Enabling checkpoints reduces task performance by about 30%. The more complex the task, the greater the overhead. In addition, the recovery time must be taken into account. When operators and tasks are restarted after a failure, the complete state must be read from remote storage. Computing can start only after all operators are restored. Restoration may take several seconds to several minutes.

Although Flink's solution is suitable, it involves many heavy operations. This is because it considers the entire topology, which makes the design complicated.

The simplified idea is to split a complex topology into many simple sub-jobs through shuffle. The logic of each sub-job is simple, including three points:

Some sub-job operators are stateful, while others are stateless. The stateless ones only need to guarantee at-least-once consumption.

The possible consequence is that duplicate data exists in the output Sink. If the Sink is the final result, it is up to the Sink to decide whether to deduplicate. If the Sink is a shuffle queue, exactly-once is completed in the subsequent stateful operators.

The data consumed by stateful operators may contain duplicates, which only need to be deduplicated. For deduplication, metadata must be stored together with the intermediate computing results during state storage. The metadata records which shards contributed to the current intermediate result and the maximum offset already computed for each shard. When a message arrives whose offset is smaller than the computed offset for its shard, it is discarded, so exactly-once is achieved through deduplication. Remote storage requires only one copy. There is no need to regularly store a complete snapshot of the data.
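The deduplication logic can be sketched as follows. This is a minimal illustration with hypothetical names, assuming that offsets within a shard arrive in increasing order; it treats an offset equal to the stored maximum as already processed as well.

```python
class DedupCountState:
    """Intermediate result plus the metadata needed to drop replayed messages."""
    def __init__(self):
        self.count = 0
        self.max_offset = {}  # shard id -> highest offset already applied

    def apply(self, shard, offset):
        """Apply one message; return False if it is a duplicate."""
        if offset <= self.max_offset.get(shard, -1):
            return False
        self.count += 1
        self.max_offset[shard] = offset
        return True

    def snapshot(self):
        """Result and metadata are stored together as one copy."""
        return {"count": self.count, "max_offset": dict(self.max_offset)}

    @classmethod
    def restore(cls, snap):
        state = cls()
        state.count = snap["count"]
        state.max_offset = dict(snap["max_offset"])
        return state

state = DedupCountState()
for shard, offset in [("q0", 0), ("q0", 1), ("q1", 0)]:
    state.apply(shard, offset)
saved = state.snapshot()

# After a crash, the source replays from an earlier position (at-least-once).
recovered = DedupCountState.restore(saved)
replayed = [("q0", 0), ("q0", 1), ("q0", 2), ("q1", 0), ("q1", 1)]
accepted = [msg for msg in replayed if recovered.apply(*msg)]
```

Only the two messages that were not yet reflected in the stored result are accepted, so the count ends at 5 rather than 8.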

In addition, checkpoints do not block processing, because a checkpoint only collects the metadata that each operator has already stored remotely. Operators persist their state asynchronously and in micro-batches. When a checkpoint reaches an operator, the operator only needs to report what it has stored, without any blocking.

The offset stored for the source is derived from the shard metadata in all stateful operators. If the minimum offset across operators is stored for each shard, recovery after a crash is guaranteed to consume every message at least once. The duplicates can then be removed by deduplication to ensure exactly-once.
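Choosing the source offset can be sketched in a few lines. The function and operator names are hypothetical, and the sketch assumes that each stateful operator reports, per shard, the highest offset it has persisted.

```python
def source_commit_offsets(operator_offsets):
    """Return, for each shard, the minimum offset persisted by any stateful operator."""
    commit = {}
    for offsets in operator_offsets:
        for shard, offset in offsets.items():
            commit[shard] = min(offset, commit.get(shard, offset))
    return commit

# Offsets reported by two hypothetical stateful operators.
window_op = {"q0": 120, "q1": 80}
join_op = {"q0": 95, "q1": 110}

commit = source_commit_offsets([window_op, join_op])
```

Restarting from `commit` replays messages 96 to 120 of `q0` to the window operator a second time, which is why this step relies on the deduplication in the stateful operators.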

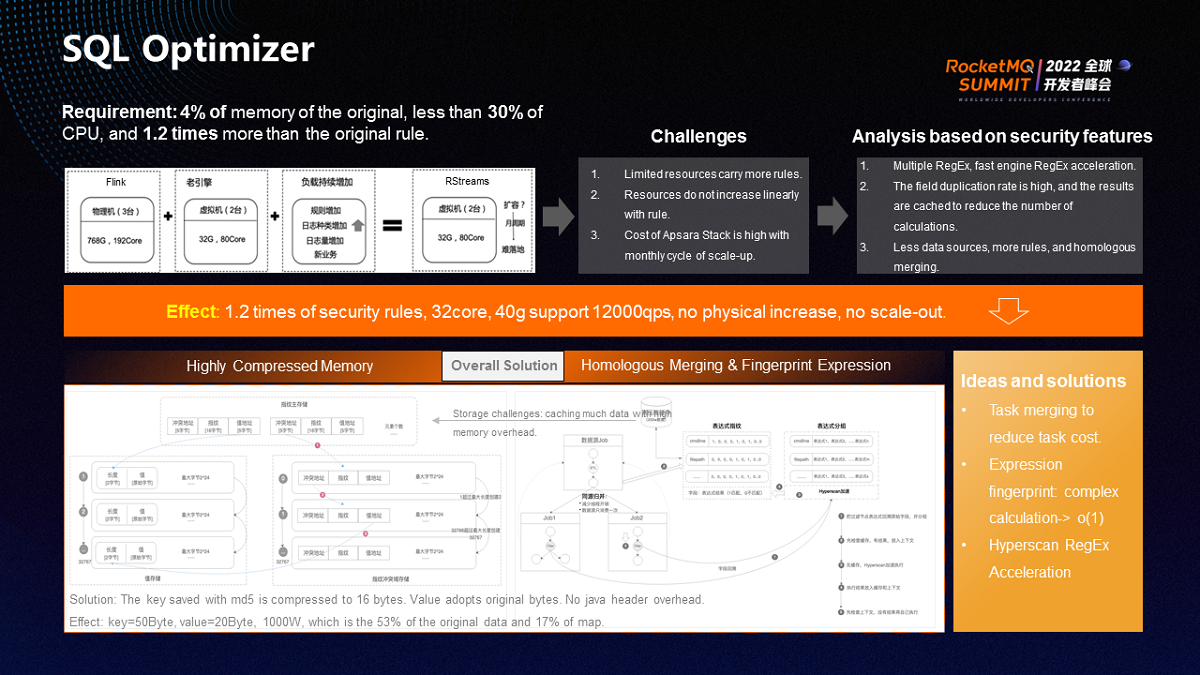

The SQL optimizer grew out of a requirement of Alibaba Cloud Security. The rules of the public cloud need to be moved to Apsara Stack, whose resources are limited: it must run 1.2 times the original rules with only 4% of the original memory and less than 30% of the original CPU. Another feature of Apsara Stack is that the scale-out cost is high. It may scale out monthly, but it is difficult to expand machines. In summary, there are two big challenges when migrating from the public cloud to Apsara Stack.

First, more rules must run on limited resources. Second, security scenarios constantly add rules to ensure security, yet resource usage must not grow as rules are added.

Therefore, the characteristics of security workloads must be considered when optimizing. Most big data computing shares these characteristics, so the solution is a general one.

An analysis of security workloads shows three characteristics:

(1) There are many regular-expression and filter expressions, which can be run on a faster engine.

(2) Some fields used in expressions have a high repetition rate, such as the command line. No matter how many parameters there are, O&M commands are similar.

(3) There are a few data sources, but there are many rules for each data source.

Based on the three characteristics, the overall solution is listed below:

(1) Merge tasks to reduce task overhead. Each task has its own thread pool and occupies a certain amount of memory. When tasks consume the same data source, the common parts can be extracted. For example, field normalization can be done once when the data source is consumed, and the corresponding rules can be encapsulated into one large task. With this logic, ten tasks put together need only 5 cores and 5 GB of memory, so resource consumption, thread count, and memory usage all drop. This solution is called homologous merge.

The cost of using fewer resources is that the merged tasks share one fault domain, so a problem in one task affects the others. Therefore, users can select the task type as needed: resource-sensitive or error-sensitive.

In addition, homologous merge does not make rules more complicated or development and testing more difficult. Each rule is still developed and tested independently, which is why it is called dynamic homologous merge. The merge happens automatically after a policy is issued. If the policy is revoked, the tasks return to independent operation. The policy can be provisioned dynamically.

(2) Expression Fingerprint: A rule contains many filter conditions. The solution is to collect the filter conditions of all tasks at compile time.

A filter expression is a triplet of variable, operation, and value. Take a regular expression as an example: the variable is the command line, the operation is a regular-expression match, and the value is the regular-expression string. Expressions are grouped by variable. For example, if one task has ten expressions on the command line and another has 20, putting them together yields 30 expressions, which form one expression group.

The purpose of expression grouping is caching. When a message arrives, the cache is checked first for each expression group in turn. If the field value exists in the cache, the cached result is applied directly. Otherwise, all expressions in the group are computed to generate the result. The result is a bit set. For example, 100 expressions produce 100 bits, each indicating whether the corresponding expression is triggered.

The command line and its bit set are put in the cache. When the same command line arrives again, none of the expressions need to be computed, and the results are obtained directly. Each rule then checks whether the result of its expression already exists in the context. If so, it uses the result directly. Otherwise, it performs the computation. With this process, if the repetition rate of the command line is high, such as 80%, only 20% of command lines are computed, and the rest need only O(1) time to obtain results.

This policy reduces the computing resources required in scenarios where the field repetition rate is high, because it converts complex regular-expression matching into O(1) lookups, and resources do not increase as rules increase.

In addition, a high field repetition rate is common in scenarios where regular expressions are used. Even if the repetition rate is low, the only added cost is one O(1) lookup, so the extra overhead is negligible.

(3) Hyperscan Regular-Expression Acceleration: Put the expressions of all tasks together and precompile them, especially regular expressions. For example, with Hyperscan, 1,000 expressions can be precompiled together. Executing the precompiled set together is about ten times faster than executing each expression individually. If the repetition rate of fields is not high, you can use Hyperscan to accelerate regular-expression execution.
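The expression fingerprint in item (2) can be sketched as follows. This is a minimal illustration with hypothetical names: all regular expressions over one variable form a group, the group is evaluated once per distinct field value, and the resulting bit set is cached. It uses Python's standard `re` module for matching, not Hyperscan, and an unbounded dictionary instead of a bounded compressed cache.

```python
import re

class ExpressionGroup:
    """All filter expressions over one variable, evaluated once per distinct value."""
    def __init__(self, variable, patterns):
        self.variable = variable
        self.patterns = [re.compile(p) for p in patterns]
        self.cache = {}        # field value -> bit set stored as an int
        self.evaluations = 0   # how many times the full group was computed

    def bitset(self, message):
        value = message[self.variable]
        bits = self.cache.get(value)
        if bits is None:
            self.evaluations += 1
            bits = 0
            for i, pattern in enumerate(self.patterns):
                if pattern.search(value):
                    bits |= 1 << i
            self.cache[value] = bits
        return bits

    def triggered(self, message, index):
        """Check one expression by reading its bit from the cached result."""
        return bool((self.bitset(message) >> index) & 1)

# Three hypothetical command-line rules collected from different tasks.
group = ExpressionGroup("cmdline", [r"\bcurl\b", r"\|\s*sh\b", r"\bnc\b"])

messages = [
    {"cmdline": "curl http://x | sh"},
    {"cmdline": "ls -la"},
    {"cmdline": "curl http://x | sh"},
    {"cmdline": "curl http://x | sh"},
    {"cmdline": "ls -la"},
]
results = [group.bitset(m) for m in messages]
```

Five messages arrive, but the regular expressions run only for the two distinct command lines; the other three lookups are dictionary hits.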

The amount of streaming data is large. This raises two questions: whether the cache can hold it, and what kind of cache should be used.

First, whether the cache can hold up is solved by setting a boundary. For example, set a 300 MB cache and discard some data if the limit is exceeded.

Second, a compressed cache can be used, which holds a large amount of data with few resources. Java maps occupy a lot of memory because of synchronization, alignment, and pointer overhead. Therefore, the cache is implemented on native bit arrays, which reduces resource overhead. A key may be long. For example, a command line may be dozens or hundreds of characters. However, storing the MD5 of the key compresses it to 16 bytes, and the collision rate of MD5 is low.

Thus, no matter how long the key is, it is stored as a 16-byte MD5 digest. The value is stored as raw bytes without any header overhead.

The test shows that with 50-byte keys, 20-byte values, and 10 million entries, compressed storage takes about half the size of the original data and 17% of the memory of a Java map. The compression effect is remarkable.
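A compressed cache of this kind can be sketched as a fixed-size table in one flat byte array, where each slot holds the 16-byte MD5 digest of the key followed by the raw value bytes. The class below is a hypothetical illustration, not the RocketMQ Streams cache: it assumes fixed-length values, uses linear probing, spends one extra flag byte per slot, and simply refuses new entries when the table is full.

```python
import hashlib

class CompactCache:
    """Fixed-capacity cache in one bytearray: [flag | md5(key) | value] per slot."""
    def __init__(self, capacity, value_size):
        self.capacity = capacity
        self.value_size = value_size
        self.slot_size = 1 + 16 + value_size
        self.table = bytearray(capacity * self.slot_size)
        self.size = 0

    def _probe(self, digest):
        """Return (position, used) of the matching or first empty slot, or (None, False)."""
        start = int.from_bytes(digest[:8], "big") % self.capacity
        for step in range(self.capacity):
            pos = ((start + step) % self.capacity) * self.slot_size
            used = self.table[pos] == 1
            if not used or self.table[pos + 1:pos + 17] == digest:
                return pos, used
        return None, False

    def put(self, key, value):
        if len(value) != self.value_size:
            raise ValueError("value must be exactly value_size bytes")
        digest = hashlib.md5(key).digest()
        pos, used = self._probe(digest)
        if pos is None:
            return False  # boundary reached: the entry is dropped
        self.table[pos] = 1
        self.table[pos + 1:pos + 17] = digest
        self.table[pos + 17:pos + self.slot_size] = value
        if not used:
            self.size += 1
        return True

    def get(self, key):
        pos, used = self._probe(hashlib.md5(key).digest())
        if pos is None or not used:
            return None
        return bytes(self.table[pos + 17:pos + self.slot_size])

cache = CompactCache(capacity=4, value_size=20)
key, value = b"k" * 50, b"v" * 20          # 50-byte key, 20-byte value
stored = cache.put(key, value)
found = cache.get(key)
missing = cache.get(b"not-there")

for i in range(3):                          # fill the remaining three slots
    cache.put(b"key-%d" % i, b"x" * 20)
overflow = cache.put(b"one-too-many", b"y" * 20)

raw_entry_bytes = len(key) + len(value)     # 70 bytes of original data
compact_entry_bytes = 16 + len(value)       # 36 bytes: digest plus value
```

With these sizes, the digest plus value takes 36 bytes against 70 bytes of original data, which is consistent with the "about half" figure above. Because only the digest is stored, two different keys with the same MD5 would be treated as one entry, which is the low collision risk mentioned earlier.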

The final effect: 32 cores and 40 GB of memory support 12,000 QPS with 1.2 times the security rules, which meets the requirements. No physical machines are added, and no scale-out is required.

The first application of RocketMQ Streams is the security of Apsara Stack.

Apsara Stack is different from public clouds. It is deployed entirely in the user's IDC and has limited resources. To add resources there, you need to apply to the user and have the user purchase them, whereas public clouds can add resources at any time. Therefore, Apsara Stack is less elastic than the public cloud.

Big data computing is a separate product that is delivered only when users purchase it. Users who buy security do not necessarily buy big data computing. If users had to buy big data computing for the sake of security, its cost might exceed that of security itself. This makes big data hard to deploy and leads to problems such as weak intrusion detection and high risk. Therefore, our final strategy is to deploy the RocketMQ Streams solution for users.

RocketMQ Streams requires 32 cores and 40 GB of memory to carry 12,000 QPS, which meets users' needs. It uses only 4% of the memory and less than 30% of the CPU of the original public cloud deployment. In terms of capability coverage, it covers all security rules and is compatible with Flink syntax, so rules need to be developed only once. Because all security rules are covered, the security effect is fully preserved. In terms of product coverage, multiple products are using RocketMQ Streams.

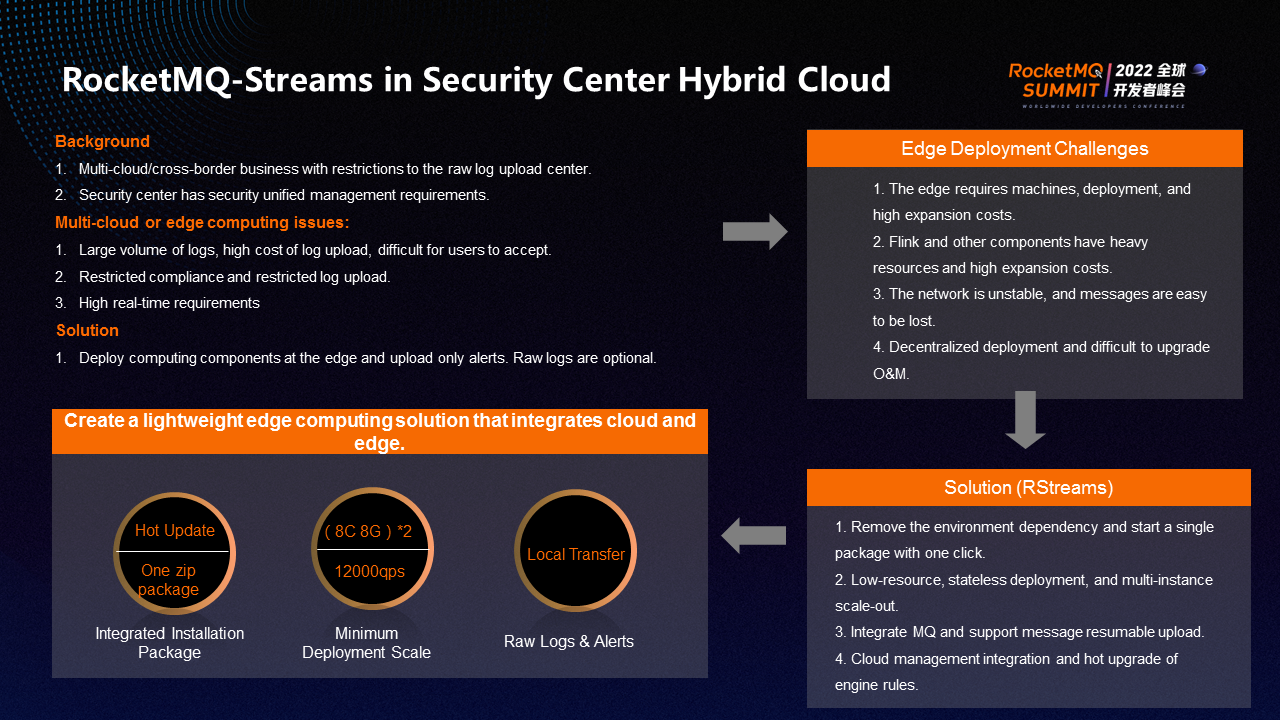

The second application scenario of RocketMQ Streams is Security Center for hybrid cloud. Gartner predicts that 80% of enterprises will adopt hybrid cloud and multi-cloud deployment modes in the future, but hybrid cloud and multi-cloud need unified security operation management.

There are a few main problems in multi-cloud or edge computing. Some ECS instances are purchased through Alibaba, and some are purchased in foreign countries. If you want to gather the ECS data in one place, the log volume is large, and the upload cost is high. Returning data from foreign ECS instances to China is subject to some restrictions. In addition, if bandwidth is insufficient, uploading logs may affect normal business.

The solution we provide is simple. We integrate RocketMQ Streams and RocketMQ to support MQ storage, ETL, and stream computing, and deploy them at the edge. For example, Alibaba serves as the unified control zone, and RocketMQ Streams is deployed at the edge as the edge computing engine. Alerts are computed at the edge, raw logs are discarded, and only alerts are returned. Raw logs can be transferred to users locally, and hot updates are supported if users need them. Only one ZIP package is needed, and installation is done with a one-click shell script.

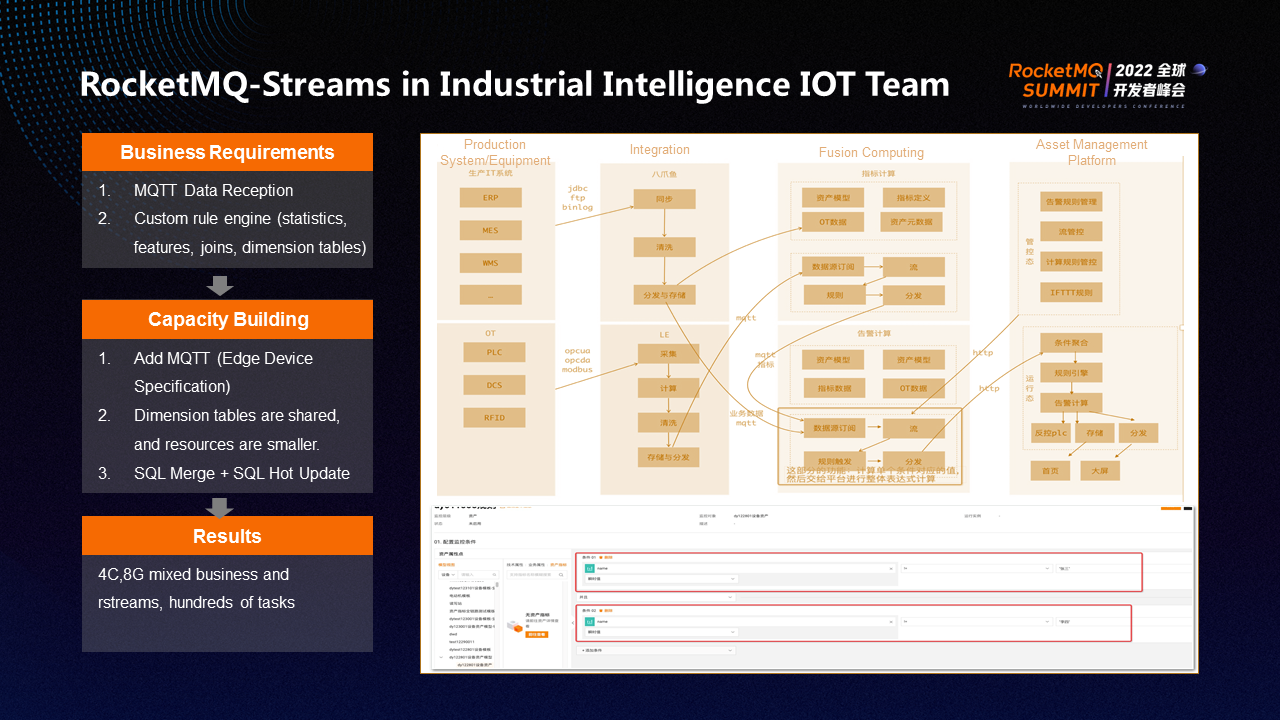

The third application scenario of RocketMQ Streams is IoT, a typical edge computing scenario. The challenge is that 4 cores and 8 GB of memory must support both other businesses and RocketMQ Streams tasks, and the pressure of hundreds of tasks is great. In addition, it needs standard IoT protocol input (such as MQTT) and a custom rule engine capable of statistical, feature, join, and dimension table computing.

For MQTT, RocketMQ Streams directly reuses RocketMQ. Dimension tables can hold tens of millions of rows and can be shared by multiple instances on the same machine. SQL supports merging and hot updates.

In the end, RocketMQ Streams completed both capability building and user adoption in the IoT scenario.

The future plan for RocketMQ Streams includes four directions:

(1) The engine has only completed the construction of core capabilities. In the future, we will work on improving more capabilities (such as resource scheduling, monitoring, console, and stability).

(2) Continue to polish the best practices of edge computing.

(3) Promote CEP, stream and batch integration, and machine learning capabilities.

(4) Enrich message access capabilities (such as adding access to files, syslog, and HTTP). Continue to enhance ETL and create a closed loop for messages. Support ES as a data source based on search results.

RocketMQ-Streams:

https://github.com/apache/rocketmq-streams

RocketMQ-Streams-SQL:

https://github.com/alibaba/rsqldb
