By Cheng Zhe, nicknamed Lanhao at Alibaba.

In this article, we look at experiments conducted at Alibaba to increase the number of online users on its second-hand buy-and-sell platform, Xianyu, whose name literally translates to "Idle Fish." Before getting into the experiments, let's discuss some of the dynamics of the Xianyu platform:

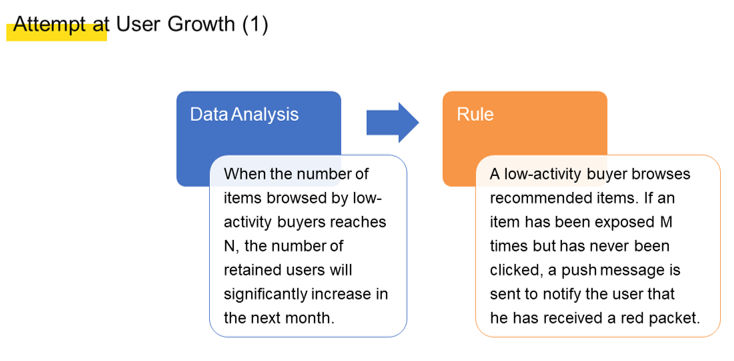

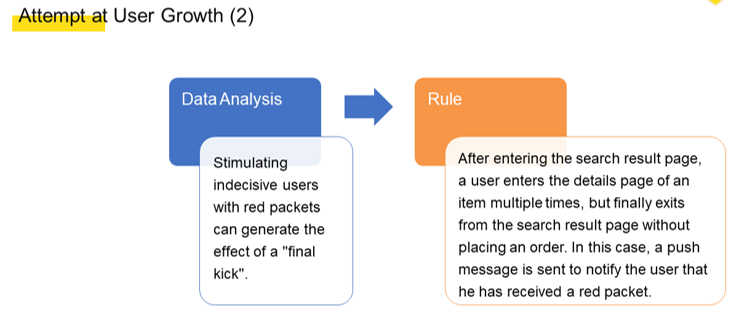

In early 2019, the Xianyu team at Alibaba conducted multiple experiments on user growth, including the following two experiments, shown in the figures below:

The team conducted the preceding two experiments with the aim of keeping users on Xianyu for longer. The more time users spend browsing Xianyu, the more likely they are to discover interesting content, including products and posts in our various curated item groupings, referred to in Chinese as "fish ponds." As a result, users may be drawn back to Xianyu later, and Xianyu can achieve greater user growth. Most of the experiments we conducted produced good business results. However, these experiments also revealed two problems:

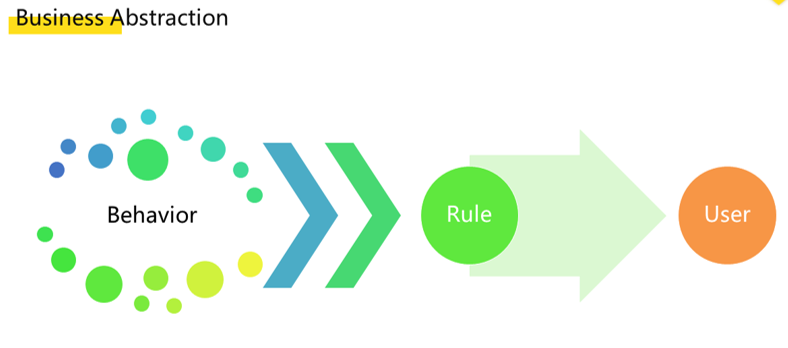

To solve these issues, our team turned to an engineering solution: a rule engine based on event streams. We first implemented a layer of business abstraction. Operations personnel analyzed and classified various user behaviors to derive common, specific rules, and then applied these rules to users in real time as interventions.

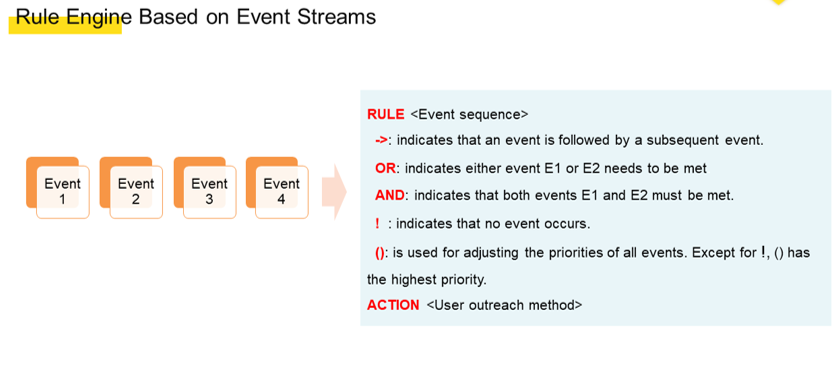

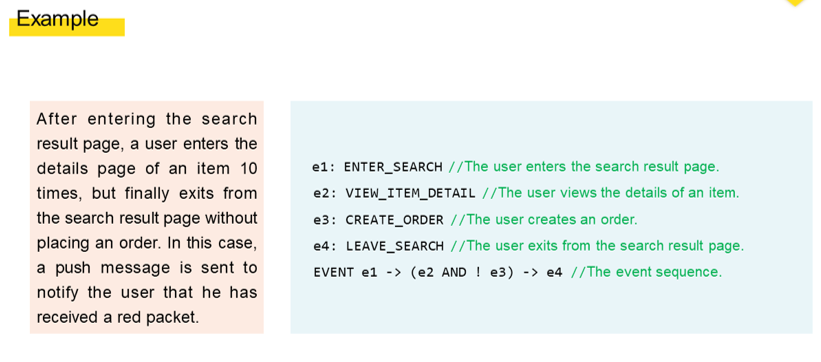

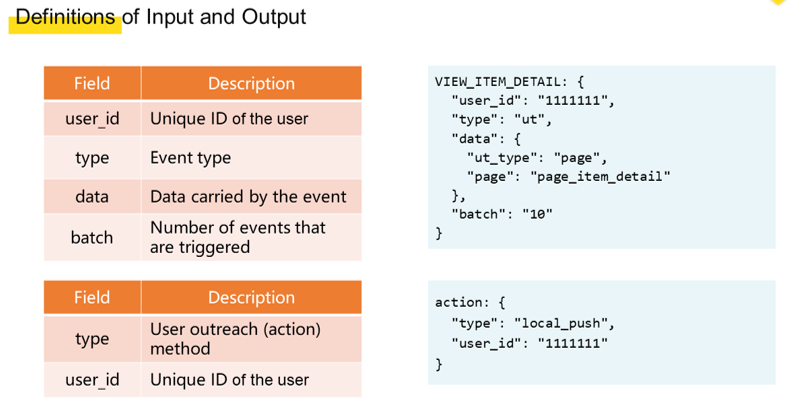

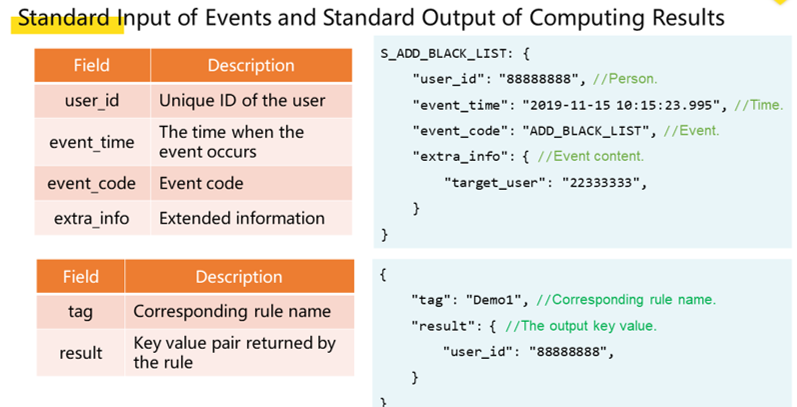

We designed the business abstraction layer to improve both R&D efficiency and operational efficiency. To this end, we developed our first solution, a rule engine based on event streams. We treat user behavior as a sequence of behavior events, that is, an event stream. A complete rule can then be defined by a simple event description in a domain-specific language (DSL), together with input and output definitions.
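To make the idea of "a rule over a sequential event stream" concrete, here is a minimal Python sketch. It is not the Xianyu rule engine or its DSL. The `Event` and `Rule` types, the event names, and the `match_rule` function are all hypothetical, and the sketch assumes a rule is an ordered list of event names (the event description) plus an action identifier (the output).

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Event:
    user_id: str
    name: str         # hypothetical event names, e.g. "view_item"
    timestamp: float  # seconds


@dataclass(frozen=True)
class Rule:
    rule_id: str
    pattern: List[str]  # event names that must occur in this order
    action: str         # output: identifier of the intervention to trigger


def match_rule(rule: Rule, events: List[Event]) -> Optional[str]:
    """Return the rule's action if the pattern occurs, in order, in the stream.

    Unrelated events between pattern steps are skipped.
    """
    if not rule.pattern:
        return None
    position = 0
    for event in sorted(events, key=lambda e: e.timestamp):
        if event.name == rule.pattern[position]:
            position += 1
            if position == len(rule.pattern):
                return rule.action
    return None


rule = Rule(
    rule_id="repeated_browse",
    pattern=["view_item", "view_item", "leave_item"],
    action="show_similar_items",
)
stream = [
    Event("u1", "view_item", 1.0),
    Event("u1", "search", 2.0),
    Event("u1", "view_item", 3.0),
    Event("u1", "leave_item", 4.0),
]
print(match_rule(rule, stream))  # show_similar_items
```

In this sketch the matcher only tracks how far along the pattern it is. It keeps nothing about the matched events themselves, which keeps it simple but limits what a rule can express.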

Let's look at the second experiment in user growth as an example. This example can be briefly expressed in DSL as shown in the following figure.

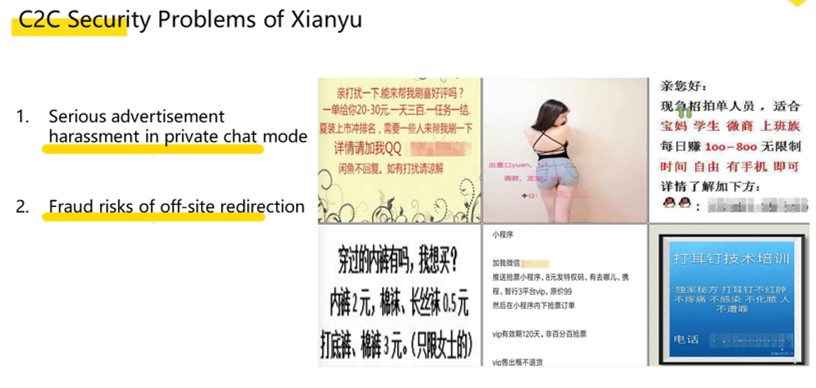

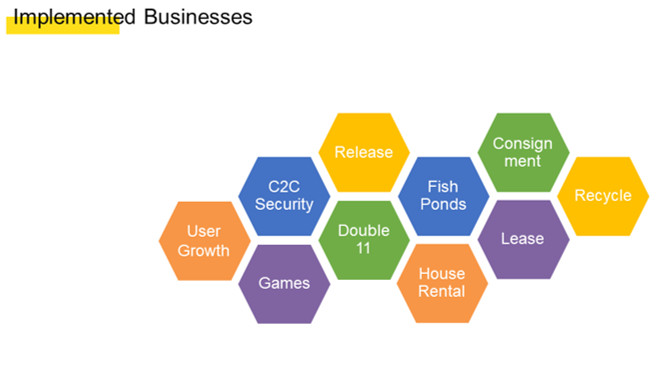

The rule engine handled user growth policies well, so we quickly promoted it to other business modules within Alibaba Group. We also planned to use it in the security service integrated into the Xianyu platform. The following describes the consumer-to-consumer (C2C) security service, which aims to prevent and stop violations of Xianyu's terms of use, including cutting down on inappropriate posts, as shown below.

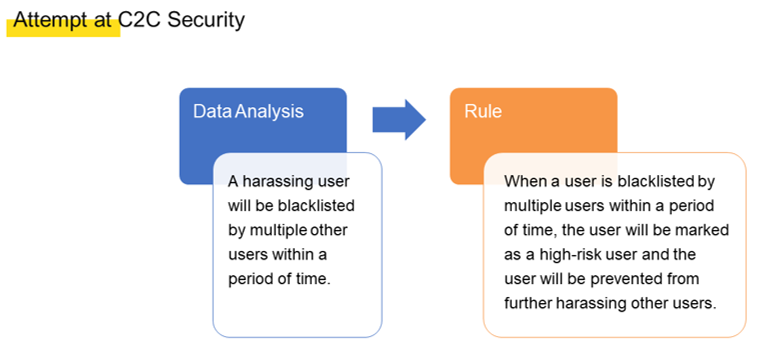

In the C2C security service, a rule is likewise an abstraction obtained from a series of behaviors.

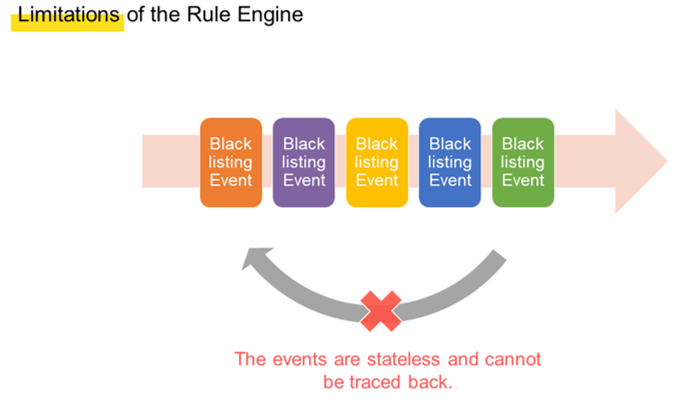

Despite our best efforts, these security rules could not be expressed in the rule engine. Consider the following rule: if a user is blacklisted twice within one minute, that user is marked with a high-risk tag. When the first blacklisting event occurs, the rule engine matches it. When the second blacklisting event occurs, the rule engine matches that too. From the rule engine's perspective, the rule is now met and subsequent operations can be performed. However, the two blacklistings must be performed by two different users, to prevent one user from maliciously blacklisting another from multiple devices.

However, this is difficult for the rule engine to discover, as the rule engine only knows that two blacklisting events are matched and the rule is met. This is because the rule engine can match only stateless events and cannot trace back the details of these events for further aggregate computing purposes.
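The limitation can be illustrated with a small Python sketch. This is not the original engine. `BlacklistEvent` and `stateless_match` are hypothetical names, and the one-minute window is left out so the example can focus on the missing state. The matcher only counts matching events, so two blacklistings by the same user look the same as two blacklistings by different users.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class BlacklistEvent:
    actor_id: str    # user who performed the blacklisting
    target_id: str   # user who was blacklisted
    timestamp: float


def stateless_match(events: List[BlacklistEvent], target_id: str,
                    required: int = 2) -> bool:
    """Count matching events only; details of earlier matches are not kept."""
    matches = 0
    for event in events:
        if event.target_id == target_id:
            matches += 1
    return matches >= required


# The same actor blacklists the same target twice, e.g. from two devices.
same_actor = [
    BlacklistEvent("a1", "t1", 0.0),
    BlacklistEvent("a1", "t1", 20.0),
]
print(stateless_match(same_actor, "t1"))  # True, although only one user acted
```

Closing this gap requires keeping the matched events, or at least their `actor_id` values, so that an aggregate such as a distinct count can be computed over them.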

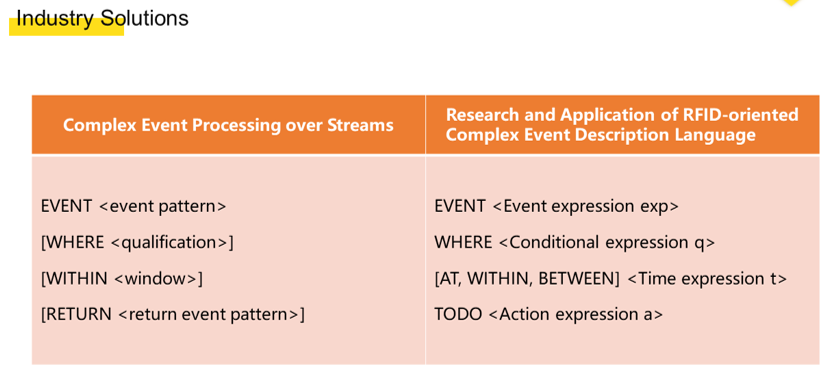

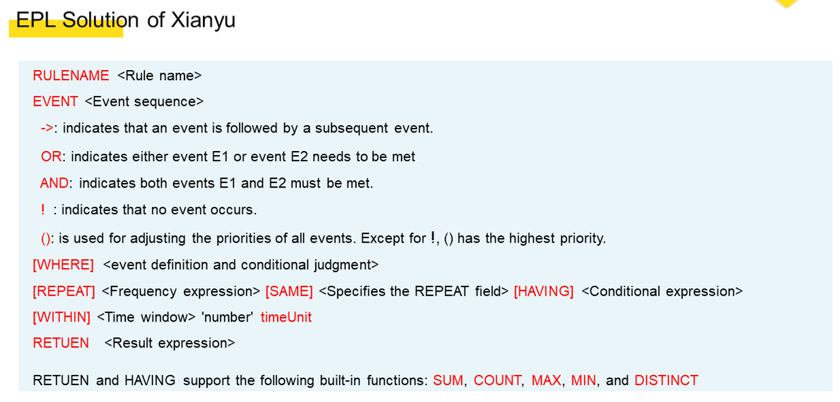

Given the limitations of the rule engine, we re-analyzed and reorganized our business scenarios. We then designed a new solution and defined a new DSL based on well-known general solutions in the industry. As you can see, our syntax is SQL-like, and we mainly took the following into consideration:

Compared with the previous rule engine, the new DSL solution has the following strengths:

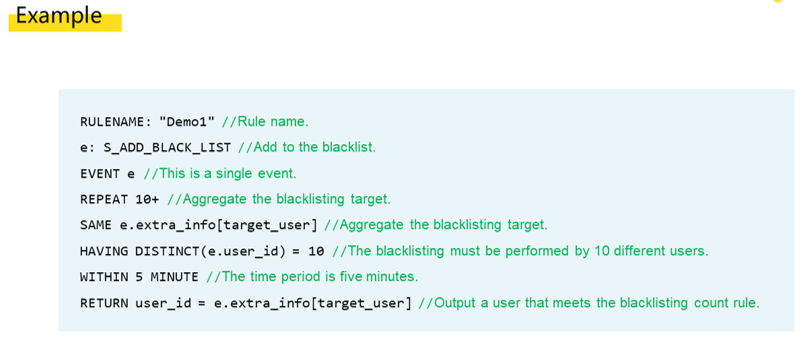

The WITHIN keyword defines a time window. When keywords such as DISTINCT follow HAVING, aggregate computing can be performed over the events in that time window. Our new solution can therefore describe the rule for the C2C business that the previous rule engine could not. The example below shows how our new solution resolves the problem we discussed in the previous section.

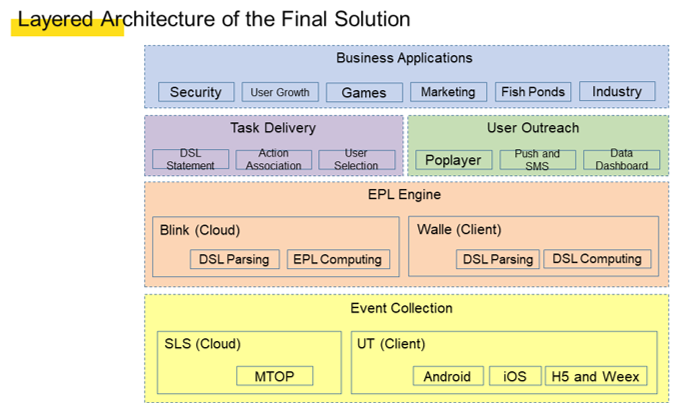
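The semantics of a time window combined with a distinct aggregate can also be sketched in plain Python. This is only an illustration, not the DSL, Blink, or Flink. `DistinctWindowRule` and its parameters are hypothetical, and the sketch assumes events arrive in timestamp order.

```python
from collections import deque
from dataclasses import dataclass
from typing import Deque, Dict


@dataclass(frozen=True)
class BlacklistEvent:
    actor_id: str    # user who performed the blacklisting
    target_id: str   # user who was blacklisted
    timestamp: float  # seconds


class DistinctWindowRule:
    """Fires when enough distinct actors blacklist one target within a window."""

    def __init__(self, window_seconds: float = 60.0,
                 min_distinct_actors: int = 2) -> None:
        self.window_seconds = window_seconds
        self.min_distinct_actors = min_distinct_actors
        self._windows: Dict[str, Deque[BlacklistEvent]] = {}

    def on_event(self, event: BlacklistEvent) -> bool:
        window = self._windows.setdefault(event.target_id, deque())
        window.append(event)
        # Evict events that fell out of the time window (the WITHIN part).
        while event.timestamp - window[0].timestamp > self.window_seconds:
            window.popleft()
        # Aggregate over what remains (the HAVING ... DISTINCT part).
        distinct_actors = {e.actor_id for e in window}
        return len(distinct_actors) >= self.min_distinct_actors


rule = DistinctWindowRule()
print(rule.on_event(BlacklistEvent("a1", "t1", 0.0)))   # False
print(rule.on_event(BlacklistEvent("a1", "t1", 10.0)))  # False: same actor
print(rule.on_event(BlacklistEvent("a2", "t1", 20.0)))  # True: two actors
```

The difference from a stateless matcher is that the events in the window are retained per target, so attributes such as `actor_id` remain available for aggregation.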

To facilitate engineering, we developed the following overall layered architecture for the DSL, which is based on the Event Programming Language (EPL). To rapidly achieve minimum closed-loop verification, we selected Blink as the cloud parsing and computing engine. Blink is an enhanced version of Apache Flink, optimized and upgraded by Alibaba.

The layered architecture comprises the following layers from the top down:

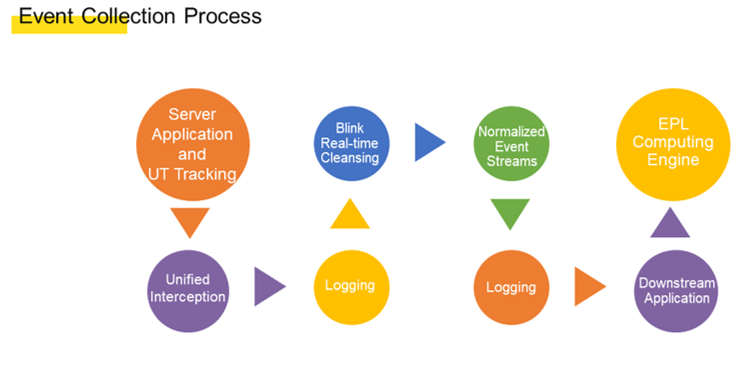

The event collection module intercepts all network requests and behavior-tracking data based on aspects and then records the data in a server log stream. In addition, the event collection module cleanses the event stream by using a fact task to obtain desired events based on the format defined earlier. After that, the event collection module outputs the cleansed logs to another log stream for reading by the EPL engine.
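The cleansing step can be pictured as a filter-and-normalize pass over the raw log stream. The Python sketch below is an assumption-heavy illustration, not the actual fact task. It assumes raw logs are JSON lines, and the field names (`user_id`, `event`, `ts`) and the `cleanse` function are hypothetical.

```python
import json
from typing import Dict, Iterable, Iterator, Set

REQUIRED_FIELDS = ("user_id", "event", "ts")  # hypothetical raw log schema


def cleanse(raw_lines: Iterable[str],
            wanted_events: Set[str]) -> Iterator[Dict[str, object]]:
    """Yield only the wanted events, normalized to one agreed format."""
    for line in raw_lines:
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            continue  # drop malformed log lines
        if not isinstance(record, dict):
            continue
        if any(name not in record for name in REQUIRED_FIELDS):
            continue  # drop records missing required fields
        if record["event"] not in wanted_events:
            continue  # drop events that no rule consumes
        try:
            timestamp = float(record["ts"])
        except (TypeError, ValueError):
            continue  # drop records with an unusable timestamp
        yield {
            "user_id": str(record["user_id"]),
            "event": record["event"],
            "timestamp": timestamp,
        }


raw = [
    '{"user_id": 1, "event": "view_item", "ts": 5}',
    'not json',
    '{"user_id": 2, "event": "heartbeat", "ts": 6}',
    '{"event": "view_item"}',
]
for event in cleanse(raw, {"view_item"}):
    print(event)
```

Only the first record survives here. The others are malformed, not of interest to any rule, or missing required fields.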

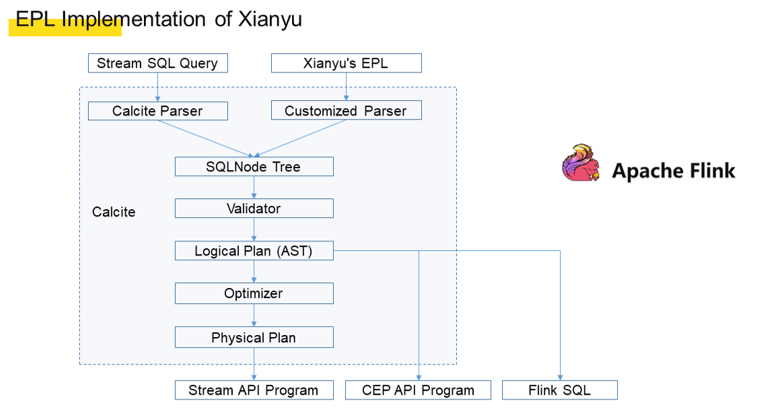

Because we adopted an SQL-like syntax, and because Apache Calcite is a common SQL parsing tool in the industry, we decided to use Calcite and customized a Calcite parser to perform the parsing. A single-event DSL statement is translated into Flink SQL. For a multi-event DSL statement, the Blink API is called directly after parsing.

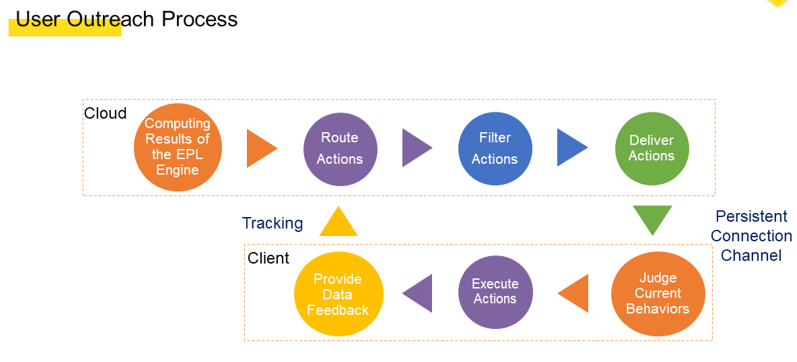

After generating computing results, the EPL engine outputs the results to the user outreach module. The user outreach module first selects an action route to determine which action to respond with. It then delivers the action to the client over a persistent connection. After receiving the action, the client checks whether the current user behavior permits the action to be displayed. If so, the client directly implements the action and exposes it to the user. The user may then perform some behavior in response. This behavior provides feedback about the action route and may affect it.
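The outreach loop described above (select an action, let the client decide whether to display it, feed the user's reaction back into the route) can be sketched as follows. All names here are hypothetical, including `ActionRoute`, `client_handle`, and the score-based selection. The source does not say how the real action route is chosen, and the persistent connection is omitted.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Set


@dataclass
class ActionRoute:
    # rule id -> candidate action -> score (assumed representation)
    scores: Dict[str, Dict[str, float]] = field(default_factory=dict)

    def select(self, rule_id: str) -> Optional[str]:
        """Pick the highest-scoring action for a triggered rule, if any."""
        candidates = self.scores.get(rule_id)
        if not candidates:
            return None
        return max(candidates, key=lambda action: candidates[action])

    def feedback(self, rule_id: str, action: str, accepted: bool,
                 step: float = 0.1) -> None:
        """Nudge an action's score up or down based on the user's reaction."""
        candidates = self.scores.setdefault(rule_id, {})
        delta = step if accepted else -step
        candidates[action] = candidates.get(action, 0.0) + delta


def client_handle(action: str, current_page: str,
                  blocked_pages: Set[str]) -> Optional[str]:
    """Client-side check: show the action only if the current page permits it."""
    if current_page in blocked_pages:
        return None
    return action


route = ActionRoute({"rule_1": {"popup": 0.5, "banner": 0.45}})
action = route.select("rule_1")
shown = client_handle(action, current_page="item_detail",
                      blocked_pages={"checkout"})
# Suppose the user dismisses the popup; the route is adjusted accordingly.
route.feedback("rule_1", "popup", accepted=False)
print(shown, route.select("rule_1"))
```

A real action route would presumably weigh much more than a single score. The point of the sketch is only the closed loop between the selected action and the user's reaction to it.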

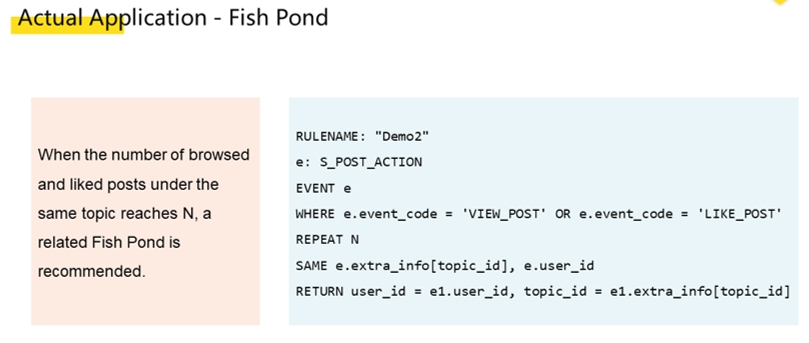

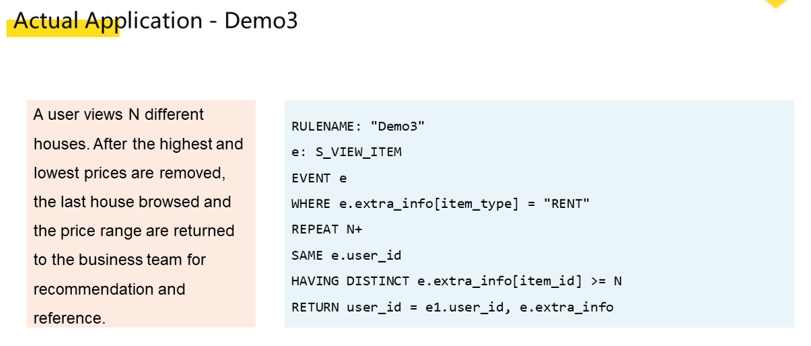

Since the new solution was launched, we have implemented it in an increasing number of business scenarios. Here are two examples.

From the above example of Fish Ponds, we can see that this solution somewhat resembles algorithmic recommendation. In the preceding rental example, the full rule is too complex to express in the DSL. Therefore, the rule is configured only to capture four browses of different rental houses. After the rule is triggered, the data is passed to the business team that developed the house rental application. This is also the boundary we found during the implementation.

This complete solution can significantly improve R&D efficiency. Previously, with code written case by case, the R&D process typically took four business days. In extreme situations, if the client version needed to be updated, the process could take two to three weeks. With the SQL-like DSL, the R&D process takes only 0.5 business days. In addition, this solution has the following advantages:

By implementing this solution in multiple businesses, we found its appropriate boundaries. This solution is applicable to businesses that:

The current solution has the following disadvantages:

Therefore, in the future, we will focus on exploring real-time computing capabilities on the client and the integration of algorithm capabilities.
