By Yili

The evolution of cloud computing can be understood through the stages enterprises go through as they migrate to the cloud.

The first stage is mainly about moving to the cloud. The "Lift and shift" strategy is used to move applications running on physical machines to virtual environments. This process is mainly cost-driven. Application development and maintenance in this stage are not very different from the original development and maintenance model.

The second stage is what we call cloud-ready. In this stage, enterprises start to focus on the total cost of ownership and hope to improve the innovation efficiency with cloud computing. From the maintenance aspect, virtual machine images or other standardized and automated methods are used to deploy applications. Specialized cloud services such as RDS and SLB are used to improve the maintenance efficiency and SLAs. In the meantime, microservice architectures are used as application architectures. Each service can be deployed and scaled independently, improving the system scalability and availability.

The third stage is today's cloud-native era. In the cloud-native era, innovation becomes the core competency of enterprises, and most enterprises begin to fully embrace cloud computing. Many applications are on the cloud from the very beginning of their development. Cloud computing reshapes software throughout the lifecycle, including architecture design, development, construction, and delivery.

First, application workloads can be seamlessly migrated across platforms such as private and public clouds, and computing and intelligence can be extended to edge environments, making cloud computing boundaryless. Second, as new computing paradigms such as containers, service mesh, and serverless computing continue to emerge, greater agility and elasticity support faster innovation, trial and error, and business growth while reducing cost. In addition, the DevOps concept has been widely accepted by developers, driving changes in IT organizations and architectures as well as cultural reform.

We also thank CNCF and other open-source communities for their collaborative efforts to push the cloud-native ecosystem forward.

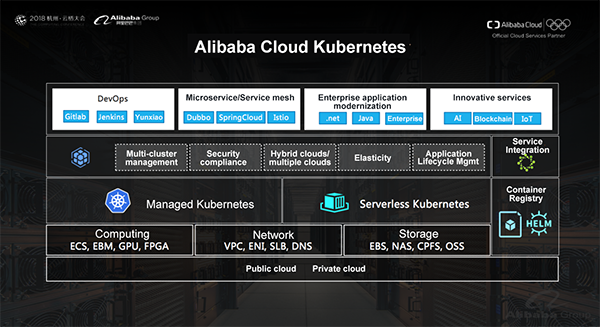

Alibaba Cloud Container Service for Kubernetes (ACK) supports both the private and public clouds of Alibaba Cloud and is optimized for and integrated with the basic Alibaba Cloud features. We also make Alibaba Group's practices in large-scale distributed systems available to all users in a cloud-native manner so that these practices can benefit everyone.

First, we use Kubernetes as an infrastructure abstraction layer so that containerized applications can fully use the underlying computing, storage, and network capabilities of Alibaba Cloud. For example, in deep learning and high-performance computing scenarios that require very high efficiency, we can use the heterogeneous computing power of bare-metal machines, GPUs, and FPGA instances. With elastic network interfaces and the Alibaba Cloud Terway network driver, containers can reach up to 9 Gbit/s of network bandwidth with almost no loss. RoCE (25 Gbit/s) network technology is also available. Parallel file systems like CPFS improve processing efficiency and provide up to 100 million IOPS and 1 Tbit/s throughput.

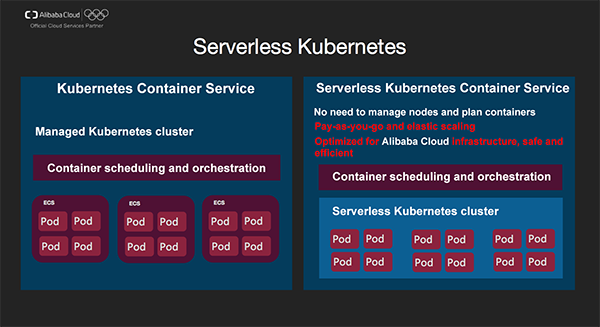

We provide two different service types to simplify cluster lifecycle management. With Managed Kubernetes, users select node types according to their workloads, and Alibaba Cloud performs cluster lifecycle operations such as creating, updating, scaling, monitoring, and alerting. Master nodes are hosted by Alibaba Cloud, which further simplifies maintenance and reduces cost. With Serverless Kubernetes, users don't need to care about underlying resources or cluster management, and applications can be deployed and scaled as needed.

Based on this, Container Service provides more features.

For managing containerized content, Container Service supports container image management and Helm charts. According to a CNCF survey, Helm (68%) is the most popular tool for packaging container applications. Alibaba Cloud also supports the Open Service Broker API, which integrates container applications with cloud services.

In addition to the familiar DevOps and microservice applications, more and more enterprise applications (such as .NET and JEE applications) use containerization to accelerate the modernization of IT architectures and improve business agility. Meanwhile, a large amount of innovative business at Alibaba Cloud and its customers is built on Alibaba Cloud Container Service. For example, Alibaba Cloud businesses such as the AI prediction service, the IoT application platform, and BaaS are based on ACK.

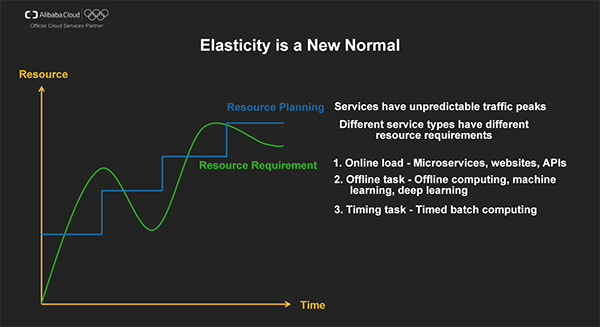

Cloud computing uses the scale effect to balance between peaks and valleys of business traffic for different tenants, significantly decreasing IT costs on a macroscopic scale. In the cloud era, elasticity has become a new normal and is also one of the cloud-native application features that customers pay the most attention to according to a CNCF survey.

The total transaction amount on the Tmall Double 11 Shopping Carnival in 2018 reached 213.5 billion RMB. As the technical supporter of this Double 11 event, Alibaba Cloud set a new record in "surge computing." During this Double 11 event, the accumulated number of ECS cores allocated by Alibaba Cloud exceeded 10 million, which is equal to the capabilities of 10 large data centers.

Of course, many business scenarios are unpredictable and cannot be planned in advance. For example, hot celebrity gossip may cause social websites to experience instantaneous overloads.

Zhou Hongyi joked at the World Internet Conference held at Wuzhen, "Cloud computing is truly awesome if it experiences no downtime in the case of massive amounts of network traffic from news and discussions about five young male celebrities' marriage announcements and four celebrities' affairs."

To achieve that goal, elasticity is required at two levels: infrastructure must provide sufficient resources in a short time, and application architectures must be able to carry more business load by fully using the scaled-out computing resources.

Different types of workloads in the application layer have different requirements regarding resource auto scaling.

In the cloud era, auto scaling resolves the conflict between sharp traffic peaks and resource capacity planning, and it eases the trade-off between resource cost and system availability.

All elastic architectures consist of the following basic parts.

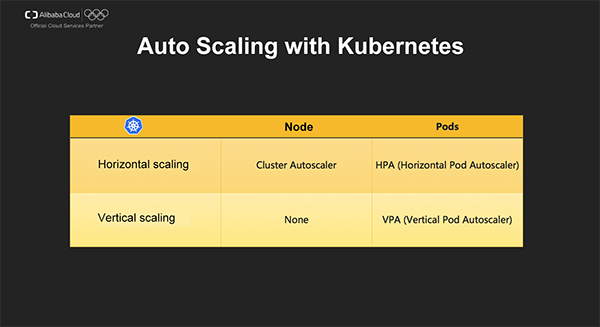

Kubernetes supports auto scaling from two dimensions:

Two types of scaling policies are available:

Kubernetes HPA supports the following three types of monitoring metrics:

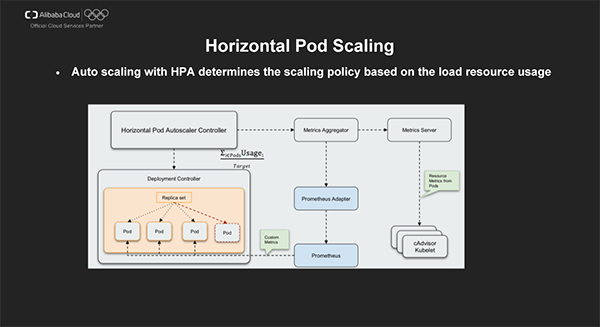

HPA Controller uses Metrics Aggregator to aggregate the performance metrics collected by Metrics Server and the Prometheus adapter and calculates how many replicas a deployment task needs to support target workloads.
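The replica calculation can be sketched in a few lines. This is a minimal illustration based on the formula in the upstream Kubernetes HPA documentation (desired = ceil(current replicas × current metric / target metric)) with the default tolerance of 0.1. The function name and parameters are our own, and the real controller also handles details such as unready pods and missing metrics that are omitted here.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float, tolerance: float = 0.1) -> int:
    """Replica count an HPA-style controller would request (simplified sketch)."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    ratio = current_metric / target_metric
    # Inside the tolerance band the replica count is left unchanged.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    return math.ceil(current_replicas * ratio)


if __name__ == "__main__":
    # 4 replicas averaging 90% CPU against a 60% target.
    print(desired_replicas(4, 90, 60))
```

For example, four replicas averaging 90% CPU against a 60% target give a ratio of 1.5, so the sketch asks for six replicas.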

To prevent scaling oscillations, k8s provides default cooldown cycle settings: the cooldown period is 3 minutes for scaling out and 5 minutes for scaling in.
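The cooldown rule can be illustrated with a small gate function. This is only a sketch of the idea using the 3-minute and 5-minute values mentioned above. The function and constant names are hypothetical, and the actual controller tracks its scaling history differently.

```python
SCALE_OUT_COOLDOWN_S = 3 * 60  # cooldown before another scale-out
SCALE_IN_COOLDOWN_S = 5 * 60   # cooldown before another scale-in


def may_scale(current: int, desired: int, now_s: float, last_scale_s: float) -> bool:
    """Return True if a change from `current` to `desired` replicas is allowed now."""
    if desired == current:
        return False  # nothing to do
    cooldown = SCALE_OUT_COOLDOWN_S if desired > current else SCALE_IN_COOLDOWN_S
    return now_s - last_scale_s >= cooldown
```

With these values, a scale-out requested 200 seconds after the previous scaling action is allowed, while a scale-in at the same moment is still held back.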

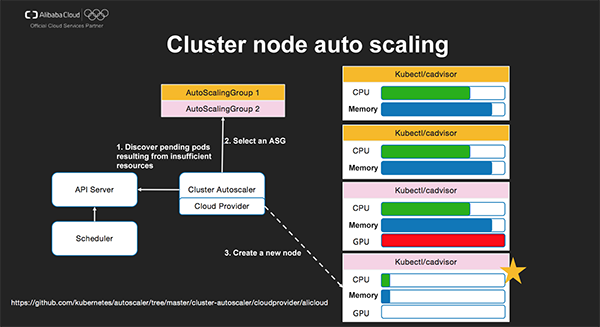

Cluster Autoscaler watches all pods. When a pod cannot be scheduled due to insufficient resources, Cluster Autoscaler simulates each configured Auto Scaling Group (ASG) as a virtual node, tries to schedule the pending pods onto it, and selects an ASG that fits to scale out nodes. When no scale-out is needed, it traverses the requested resource usage of each node, and a node whose requested resource usage is below the threshold is removed.

A cluster may have several ASGs, such as one ASG for CPU instances and another for GPU instances.
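The scale-in check can be sketched as follows. This is a simplified illustration, not the autoscaler's actual code: the `NodeUsage` type and the 0.5 threshold are assumptions for the example, and the real Cluster Autoscaler additionally verifies that the pods on a node can be moved elsewhere before removing it.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class NodeUsage:
    name: str
    cpu_allocatable_m: int    # allocatable CPU in millicores
    cpu_requested_m: int      # sum of pod CPU requests in millicores
    mem_allocatable_mib: int  # allocatable memory in MiB
    mem_requested_mib: int    # sum of pod memory requests in MiB

    def request_utilization(self) -> float:
        """Utilization based on requests, taking the larger of CPU and memory."""
        cpu = self.cpu_requested_m / self.cpu_allocatable_m
        mem = self.mem_requested_mib / self.mem_allocatable_mib
        return max(cpu, mem)


def scale_in_candidates(nodes: List[NodeUsage], threshold: float = 0.5) -> List[str]:
    """Names of nodes whose requested resource usage is below the threshold."""
    return [n.name for n in nodes if n.request_utilization() < threshold]
```

Note that the check uses requested resources rather than measured load, so a node full of idle pods with large requests is not considered for removal.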

In this example, a GPU ASG is selected to perform scaling when a GPU application cannot be scheduled.
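The ASG selection for an unschedulable pod can be sketched like this. All type names, group names, and sizes are hypothetical, and the tie-break (prefer the group that wastes the fewest GPUs, then the least CPU) is an assumption for illustration rather than the autoscaler's real expander logic.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class NodeTemplate:
    asg: str         # name of the Auto Scaling Group (hypothetical)
    cpu_m: int       # CPU per node in millicores
    memory_mib: int  # memory per node in MiB
    gpus: int        # GPUs per node


@dataclass(frozen=True)
class PodRequest:
    cpu_m: int
    memory_mib: int
    gpus: int = 0


def fits(pod: PodRequest, node: NodeTemplate) -> bool:
    """Simulate scheduling: would the pod fit on an empty node of this group?"""
    return (pod.cpu_m <= node.cpu_m
            and pod.memory_mib <= node.memory_mib
            and pod.gpus <= node.gpus)


def pick_asg(pod: PodRequest, templates: List[NodeTemplate]) -> Optional[str]:
    """Pick a group whose simulated node can host the pod, or None if none fits."""
    candidates = [t for t in templates if fits(pod, t)]
    if not candidates:
        return None
    # Assumed tie-break: waste as few GPUs as possible, then as little CPU.
    best = min(candidates, key=lambda t: (t.gpus - pod.gpus, t.cpu_m - pod.cpu_m))
    return best.asg
```

A pod requesting one GPU only fits the GPU group, while a plain CPU pod fits both and is steered to the CPU group so that GPU nodes are not wasted.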

Alibaba Cloud provides a series of enhancements in addition to the basic Cluster AutoScaler:

The open source code of relevant features is available:

https://github.com/kubernetes/autoscaler/tree/master/cluster-autoscaler/cloudprovider/alicloud

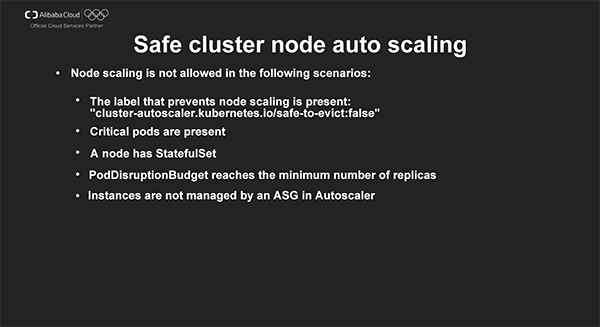

Compared with scaling out nodes, scaling in nodes is more complicated because we need to avoid affecting application stability when nodes are removed. For this purpose, k8s provides the preceding rules to ensure safe scaling.

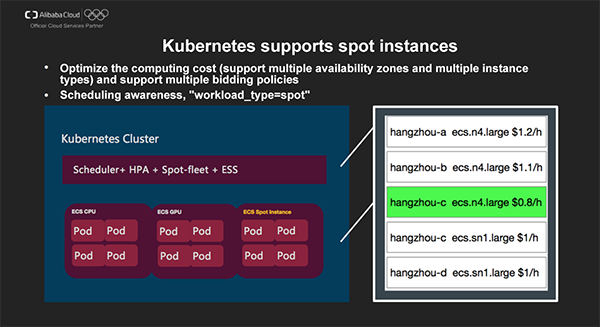

A spot instance (also called a preemptible instance) is an instance type in Alibaba Cloud ECS. When creating a spot instance, users set an upper price limit (the bid price) for the specified instance type. If the current market price is lower than the bid price, the spot instance is created and users are billed at the current market price. By default, a user can hold a spot instance without interruption for one hour. After that, when the market price exceeds the bid price or the supply and demand relationship changes, the instance is automatically released.
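The creation and release rules described above can be written down as two small predicates. This is only a sketch of the rules as stated in this article: the function names are hypothetical, prices are arbitrary units, and treating a market price equal to the bid as acceptable is an assumption.

```python
PROTECTED_SECONDS = 3600  # default one-hour period without interruption


def can_create(market_price: float, bid_price: float) -> bool:
    """A spot instance is created when the market price does not exceed the bid."""
    return market_price <= bid_price


def is_released(market_price: float, bid_price: float, held_seconds: float,
                stock_available: bool = True) -> bool:
    """After the protected period, release on price overrun or supply shortage."""
    if held_seconds < PROTECTED_SECONDS:
        return False  # still inside the protected hour
    return market_price > bid_price or not stock_available
```

Workloads placed on spot instances should therefore tolerate a node disappearing at any time after the first hour.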

Using Alibaba Cloud ECS spot instances properly can decrease the operating cost by 50%-90% (compared with pay-as-you-go instances). Spot instances are of great significance in price-sensitive scenarios such as batch computing and offline tasks. Alibaba Cloud also provides spot GPU cloud servers, which considerably reduce the cost of deep learning and scientific computing and benefit more developers.

Kubernetes clusters have a variety of bidding policies available. You can select multiple availability zones and multiple instance types for bidding. Based on the price of the current instance type, instances with the optimal price will be created, significantly improving the instance creation success rate and reducing the cost.
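Choosing the best offer across zones and instance types can be sketched as a simple minimum search. The zone names, instance type names, and prices below are made up for illustration, and the function is a hypothetical helper rather than an Alibaba Cloud API.

```python
from typing import Dict, Optional, Tuple


def cheapest_offer(market_prices: Dict[Tuple[str, str], float],
                   bid_limits: Dict[str, float]) -> Optional[Tuple[str, str, float]]:
    """Return (zone, instance_type, price) of the cheapest offer within the bid limits.

    market_prices maps (zone, instance_type) to the current market price.
    bid_limits maps instance_type to the user's upper price limit.
    """
    eligible = [
        (price, zone, itype)
        for (zone, itype), price in market_prices.items()
        if itype in bid_limits and price <= bid_limits[itype]
    ]
    if not eligible:
        return None  # no zone/type combination is currently affordable
    price, zone, itype = min(eligible)
    return zone, itype, price


if __name__ == "__main__":
    prices = {("zone-a", "gpu-small"): 0.9, ("zone-b", "gpu-small"): 0.7}
    print(cheapest_offer(prices, {"gpu-small": 0.8}))
```

Offering more zones and instance types enlarges the candidate set, which is why it raises the chance that at least one affordable offer exists.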

Spot nodes added by elastic scaling carry the "workload_type=spot" label. By using a node selector, application workloads that suit the characteristics of spot instances can be scheduled onto the newly added nodes.
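As a minimal sketch, the following builds a pod manifest that targets spot nodes through a node selector. The pod name and image are placeholders, and the example assumes "workload_type=spot" is exposed as a node label. The resulting JSON can be applied with standard Kubernetes tooling.

```python
import json


def spot_pod_manifest(name: str, image: str) -> dict:
    """Pod manifest that is only scheduled onto nodes labeled workload_type=spot."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            # Only nodes carrying this label are eligible for the pod.
            "nodeSelector": {"workload_type": "spot"},
            # Batch-style work can be retried if the spot node is released.
            "restartPolicy": "OnFailure",
            "containers": [{"name": name, "image": image}],
        },
    }


if __name__ == "__main__":
    # Placeholder name and image, for illustration only.
    print(json.dumps(spot_pod_manifest("batch-job", "example.com/batch:latest"), indent=2))
```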

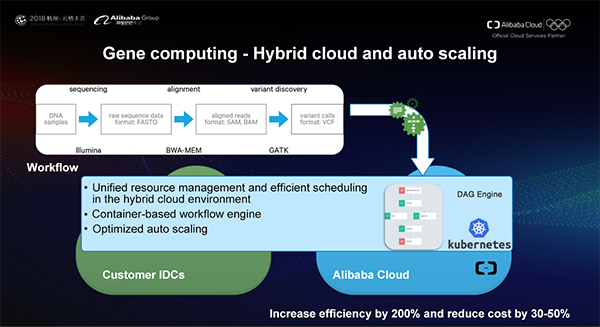

Gene sequencing is a basic technology for precision medicine and requires large amounts of computing power and thousands of different frameworks for computing. Recently, the Alibaba Cloud container team and Annoroad Gene Technology worked together to improve computing power by using container technologies and to let researchers customize their data processing procedures. The solution deploys and uniformly schedules thousands of nodes on and off the cloud, increasing genetic data processing efficiency by 200%-300%.

With Cluster Autoscaler, hundreds of nodes are scaled out within five minutes for each batch of gene sequencing tasks and are recycled after use. In addition, the speed of the next startup is optimized. Cluster Autoscaler can significantly reduce the resource cost and ensure fast scheduling.

HPA is very helpful for stateless services and handles business growth by adding replicas. However, some stateful applications (such as databases and message queues) cannot scale horizontally. For these applications, scaling generally means assigning more computing resources.

Kubernetes provides the "requests/limits" resource scheduling and limit policy. However, it is very challenging to configure application resources properly for specific business requirements. Too few resources cause stability issues, such as OOM and CPU preemption, while too many resources lower system utilization.
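To make the requests/limits policy concrete, here is a small sketch that checks a container `resources` stanza for the basic rule that a request must not exceed its limit. The helper names are our own, and the quantity parsers handle only a subset of Kubernetes quantity syntax (CPU in cores or millicores, memory in bytes, Ki, Mi, or Gi).

```python
from typing import List

_MEM_UNITS = {"Ki": 2 ** 10, "Mi": 2 ** 20, "Gi": 2 ** 30}


def parse_cpu(quantity: str) -> float:
    """Parse a CPU quantity such as '500m' or '2' into cores."""
    if quantity.endswith("m"):
        return int(quantity[:-1]) / 1000
    return float(quantity)


def parse_memory(quantity: str) -> int:
    """Parse a memory quantity such as '256Mi' into bytes."""
    for suffix, factor in _MEM_UNITS.items():
        if quantity.endswith(suffix):
            return int(quantity[:-2]) * factor
    return int(quantity)


def validate_resources(resources: dict) -> List[str]:
    """Return a list of problems found in a container `resources` stanza."""
    problems = []
    req = resources.get("requests", {})
    lim = resources.get("limits", {})
    if "cpu" in req and "cpu" in lim and parse_cpu(req["cpu"]) > parse_cpu(lim["cpu"]):
        problems.append("cpu request exceeds limit")
    if ("memory" in req and "memory" in lim
            and parse_memory(req["memory"]) > parse_memory(lim["memory"])):
        problems.append("memory request exceeds limit")
    return problems
```

A check like this only catches inconsistent settings. Choosing values that match real usage is the harder problem described above.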

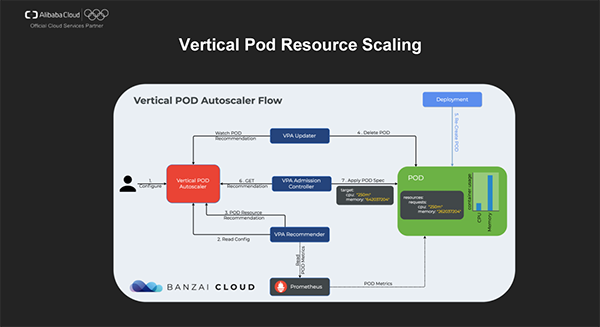

The mission of VPA is to vertically scale the resources of stateful services automatically, improving both the automation of system maintenance and resource utilization.

Let's see a typical VPA execution process.

Currently VPA is still in its infancy and needs to mature further. For example, its data monitoring model, its predictive computing model, and the implementation of the update action all have room for improvement. Meanwhile, to avoid the uncertainty of updates made by VPA Updater, the community suggests making Updater optional, so that VPA only provides resource allocation recommendations and users decide how to act on them.
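A recommendation-only setup can be sketched as a manifest in which the update mode is turned off. This assumes the VerticalPodAutoscaler custom resource from the Kubernetes autoscaler project is installed. The `apiVersion` depends on the installed VPA release, and the object and Deployment names are placeholders.

```python
def vpa_recommend_only(name: str, deployment: str) -> dict:
    """VPA object that computes recommendations but never updates pods itself."""
    return {
        # Assumption: adjust the API version to the installed VPA release.
        "apiVersion": "autoscaling.k8s.io/v1",
        "kind": "VerticalPodAutoscaler",
        "metadata": {"name": name},
        "spec": {
            "targetRef": {
                "apiVersion": "apps/v1",
                "kind": "Deployment",
                "name": deployment,
            },
            # "Off" keeps the Updater from evicting pods: recommendations only.
            "updatePolicy": {"updateMode": "Off"},
        },
    }
```

With this mode, the recommended values appear in the object's status, and applying them stays a manual decision.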

In May 2018, Alibaba Cloud released Serverless Kubernetes Container Service, which does not require node management and capacity planning and allows users to pay based on resources required for applications and implement auto scaling.

The bottom layer of Serverless Kubernetes is built on top of Alibaba Cloud's virtualization solutions optimized for containers and provides a lightweight, efficient and safe execution environment for container applications. Serverless Kubernetes also implements service discovery by fully utilizing Alibaba Cloud SLB and DNS-based Service Discovery. Serverless Kubernetes provides Ingress support by using the Layer 7 SLB routing and is compatible with most semantics of Kubernetes applications so that deployment can be performed without modifying semantics.

Currently the serverless HPA supports autoscaling/v1 and CPU scaling. We are planning the support for autoscaling/v2beta1 and the scaling of other pod metrics (memory and network) in early December.
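For reference, a CPU-based HPA in the autoscaling/v1 API looks roughly like the manifest built below. The helper function and the Deployment name passed to it are illustrative, while the field names follow the upstream autoscaling/v1 schema.

```python
def cpu_hpa_manifest(deployment: str, min_replicas: int, max_replicas: int,
                     target_cpu_percent: int) -> dict:
    """autoscaling/v1 HPA that scales a Deployment on average CPU utilization."""
    if not 1 <= min_replicas <= max_replicas:
        raise ValueError("expected 1 <= min_replicas <= max_replicas")
    return {
        "apiVersion": "autoscaling/v1",
        "kind": "HorizontalPodAutoscaler",
        "metadata": {"name": deployment},
        "spec": {
            "scaleTargetRef": {
                "apiVersion": "apps/v1",
                "kind": "Deployment",
                "name": deployment,
            },
            "minReplicas": min_replicas,
            "maxReplicas": max_replicas,
            # autoscaling/v1 supports only this CPU-based target.
            "targetCPUUtilizationPercentage": target_cpu_percent,
        },
    }
```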

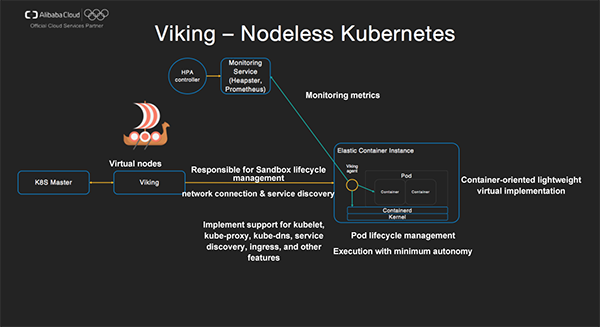

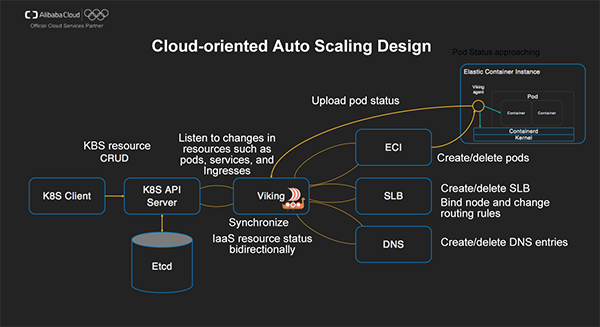

Now let's see how Serverless Kubernetes is implemented. Its core component is code-named Viking, a tribute to the ancient Vikings' warships, which were well known for their agility and speed.

Viking: Viking registers itself as a virtual node in Kubernetes clusters and implements the behaviors of components such as kubelet, kube-proxy, and kube-dns. It is responsible for tasks such as sandbox lifecycle management, network configuration, and service discovery. Viking interacts with the cluster API Server, listens to changes in resources in the current namespace (such as pods, services, and Ingresses), creates ECI instances for pods, creates SLB instances or registers DNS domains for services, and configures routing rules.

Alibaba Cloud Elastic Container Instance (ECI) is a container-oriented lightweight virtualization implementation. Its internal agent is responsible for features such as pod lifecycle management, the startup sequence of containers, health checks, process guarding, and reporting of monitoring information. The bottom layer of ECI can be based on ECS virtual machines or on X-Dragon plus lightweight virtualization technologies.

Serverless Kubernetes provides a cloud-oriented autoscaling design.

Serverless Kubernetes listens to changes in resources such as pods, services, and Ingresses and synchronizes cloud resource status bidirectionally.

For example, pods will be created/deleted when a Deployment is used to deploy an application.

When an Ingress is created, an SLB instance is automatically created and used, and the features of Alibaba Cloud SLB implement the routing rules. The SLB instance is also bound to the back-end ECI instances.
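The listen-and-synchronize behavior described above can be pictured as a dispatch from Kubernetes watch events to cloud actions. The sketch below is purely illustrative: the table and function are hypothetical and are not Viking's actual code. It only restates the mapping described in this article, using the standard Kubernetes watch event types.

```python
# Hypothetical mapping from (resource kind, watch event type) to a cloud action.
ACTIONS = {
    ("Pod", "ADDED"): "create ECI instance",
    ("Pod", "DELETED"): "release ECI instance",
    ("Service", "ADDED"): "create SLB instance or register DNS domain",
    ("Ingress", "ADDED"): "configure SLB routing rules",
}


def plan_action(kind: str, event_type: str) -> str:
    """Return the cloud-side action for a watch event, or 'ignore' if none applies."""
    return ACTIONS.get((kind, event_type), "ignore")


if __name__ == "__main__":
    # Scaling a Deployment up produces pod ADDED events.
    for event in [("Pod", "ADDED"), ("Ingress", "ADDED"), ("ConfigMap", "ADDED")]:
        print(event, "->", plan_action(*event))
```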

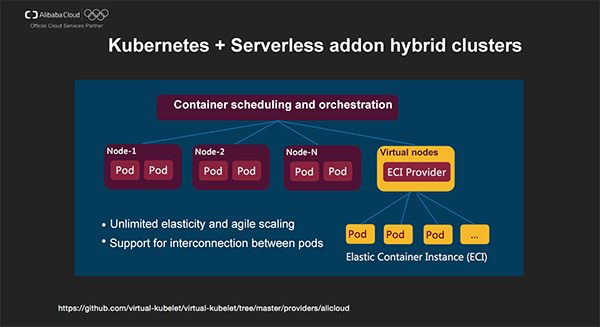

We can also manage ECI instances together with classic k8s clusters by using the Serverless add-on, which provides fine-grained elasticity and optimizes cost. ECI can also be used with Istio.

The Serverless add-on is built on the Virtual Kubelet framework released by Microsoft. The Virtual Kubelet projects that support ECI have been published as open source so that all developers can use these features.

We are continuously making enhancements. In the future, many features of Viking will be available in the Serverless add-on.
