By Shun Fujiyoshi, Alibaba Cloud MVP

We're getting close to a fully zero-touch creative pipeline, and this MV is the closest we've been. I built a five-member K‑POP group called SPECTRA. Every member is an AI agent. They don't just "sing"; they direct their own music videos. Shot selection, pacing, and transitions are all decided by the agents. The latest SPECTRA MV, "LOWKEY," is the first time our agents took a song and pushed it almost all the way to a finished music video with very little human intervention. No one cracked open an NLE to "just fix that one cut." Here's how it works today, and where we're taking it next.

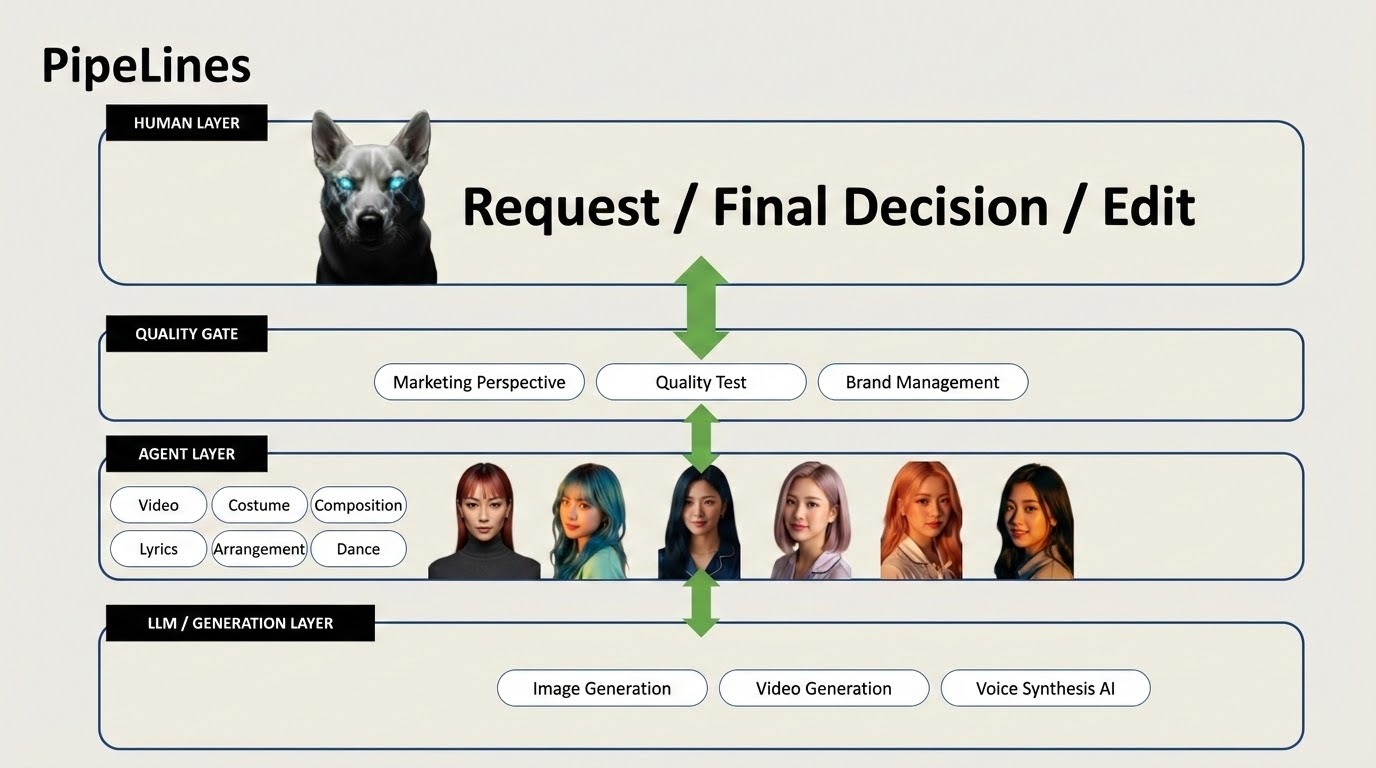

The core idea behind SPECTRA is simple:

For this latest MV, the agents drove most of the production pipeline:

My role was much closer to "technical director" than "hands-on editor." I set up the system, defined constraints, handled retakes when quality wasn't there, and let the agents run the rest.

I've shipped games that together generated over a billion dollars in revenue. I'm used to leading large creative and technical teams. Watching a mostly AI creative team deliver a finished product — and needing me far less than I expected — is a very new feeling.

For this MV, the pipeline is built around two main components: Wan 2.7 for generation, and HappyHorse for editing and compositing.

We use Wan 2.7 for video generation. The key technique here is reference-frame chaining, which we use for cross-cut consistency:
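As a rough illustration of the idea, here is a minimal sketch of reference-frame chaining under one common interpretation: each shot is generated with the final frame of the previous shot as its visual reference, so characters and lighting carry across cuts. Everything in it is an assumption for illustration. `Clip`, `chain_shots`, and `fake_generate` are hypothetical names, and the `generate` callable stands in for whatever actually calls the video model. It is not the Wan API and may not match how the SPECTRA pipeline implements the technique.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Clip:
    shot_id: str
    prompt: str
    reference_frame: Optional[str]  # frame the generator was conditioned on
    last_frame: str                 # final frame of the generated clip


# Hypothetical generator signature: (prompt, reference_frame) -> last frame id.
# A real pipeline would call the video model here; this is NOT a Wan API.
Generator = Callable[[str, Optional[str]], str]


def chain_shots(prompts: List[str], generate: Generator,
                first_reference: Optional[str] = None) -> List[Clip]:
    """Generate shots in order, feeding each clip's last frame to the next."""
    clips: List[Clip] = []
    reference = first_reference
    for index, prompt in enumerate(prompts):
        last_frame = generate(prompt, reference)
        clips.append(Clip(f"shot_{index:03d}", prompt, reference, last_frame))
        reference = last_frame  # the chain: next shot starts from this frame
    return clips


def fake_generate(prompt: str, reference: Optional[str]) -> str:
    """Stand-in generator that just returns a label for the clip's last frame."""
    return f"frame<{prompt}>"
```

Calling `chain_shots(["intro", "verse"], fake_generate, "character_sheet")` conditions the first shot on the supplied reference and the second on the last frame of the first. Recording `reference_frame` on every clip also makes it possible to check later which frame a given shot was actually built from.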

We use HappyHorse for editing and compositing. What's important is not just the tool itself, but who is driving it: the agents.

For this MV, the agents handled:

From generation through to the final cut, the pipeline was almost entirely agent-driven:

This is the closest we've been to a zero-touch creative pipeline for a full music video.

You can watch the result here: https://youtu.be/CwDxsTWy1Ak

"The Hidden Feeling That Refuses to Stay Hidden." SPECTRA "LOWKEY" follows five AI idol personas isolated in private rooms as a confession they try to suppress grows louder through pulse, choreography, and light. Moving from night-bound secrecy to a shared morning space, the film turns the word "lowkey" into its opposite: a public release of emotion, voice, and body.

LOWKEY was created almost autonomously by the AI idols together with SOL, the film-director agent of the Soul Enhancement Engine (S.E.E.), using a production pipeline that covered lyrics and composition, storyboarding, choreography, costume design, character development, video generation, and editing. The generated visual materials were repeatedly audited and improved through a quality-review Audit System. Human involvement was limited to constraint design, safety and bias checks, and partial final editing.

SOL is the film-director agent of the Soul Enhancement Engine (S.E.E.), responsible for translating SPECTRA's emotional concept into shot structure, storyboards, movement direction, and editorial rhythm. For LOWKEY, SOL coordinated the AI idol performers and production pipeline across lyrics and composition, choreography, costume logic, character continuity, video generation prompts, quality audits, and final edit decisions. SOL exists as an AI creative director role rather than a human filmmaker.

All of that said: the current MV was honestly built from a fairly rough combination of pipelines.

Under the hood, it's still a lot of glue:

The agents did a lot. But the environment they operated in is not yet what I'd call a "proper" production system.

So the next step is not "make the generations prettier." The next step is to turn this into a real production OS.

We're now refactoring the entire process into something more like a production operating system: a platform that can reliably take us from music to a finished MV.

At this stage, we're deliberately not starting with more generative tricks. Instead, we're starting with infrastructure and auditing.

Before we try to scale complexity or volume, we want a stable backbone. We're focusing on three core pieces:

- A stable source-of-truth manifest system
- Audit / validation CLI tools
- Consistency & failure checks

In other words, the initial scope is less about "creative magic" and more about: Can we trust this pipeline to do the same thing twice, and know when it's breaking?
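To make the "same thing twice, and know when it's breaking" question concrete, here is a small sketch of what a manifest check could look like. The manifest layout (a `shots` list with `id`, `start`, and `end` in seconds) and the function names are assumptions made up for this example, not the actual SPECTRA manifest format or CLI.

```python
import hashlib
import json
from typing import Dict, List


def manifest_digest(manifest: Dict) -> str:
    """Stable hash of a manifest: same content always gives the same digest."""
    canonical = json.dumps(manifest, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def audit_manifest(manifest: Dict) -> List[str]:
    """Return a list of human-readable problems; an empty list means it passed."""
    problems: List[str] = []
    seen_ids = set()
    cursor = 0.0  # where the previous shot ended on the song timeline (seconds)
    for shot in manifest.get("shots", []):
        shot_id = shot.get("id", "<missing id>")
        if shot_id in seen_ids:
            problems.append(f"{shot_id}: duplicate shot id")
        seen_ids.add(shot_id)
        start, end = shot.get("start"), shot.get("end")
        if start is None or end is None:
            problems.append(f"{shot_id}: missing start/end time")
            continue
        if end <= start:
            problems.append(f"{shot_id}: non-positive duration")
        if abs(start - cursor) > 1e-6:
            problems.append(f"{shot_id}: gap or overlap at {start:.2f}s")
        cursor = end
    return problems
```

The digest answers the repeatability half of the question: two runs that start from manifests with the same digest were given the same instructions. The audit answers the other half by turning silent breakage (a duplicated shot, a hole in the timeline) into an explicit list a CLI could print and exit non-zero on.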

Once the backbone is in place, we'll move toward more automated, end-to-end flows.

Specifically:

- Automated submission pipelines for Wan / HappyHorse
- Editing orchestration
- Regeneration loops
- End-to-end autonomous production flows
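A regeneration loop from the list above could take roughly the following shape: generate a candidate, score it with an audit step, and retry until it passes or the attempt budget runs out. This is a sketch under assumptions. The `generate` and `score` callables, the 0.8 threshold, and the three-attempt limit are placeholders chosen for the example, not values from the SPECTRA pipeline.

```python
from typing import Callable, List, Optional, Tuple


def regenerate_until_pass(
    generate: Callable[[int], str],   # attempt number -> candidate clip id
    score: Callable[[str], float],    # candidate -> quality score in [0, 1]
    threshold: float = 0.8,           # assumed pass mark for the audit
    max_attempts: int = 3,            # assumed retry budget
) -> Tuple[Optional[str], List[Tuple[str, float]]]:
    """Retry generation until the audit score passes or attempts run out."""
    history: List[Tuple[str, float]] = []
    for attempt in range(max_attempts):
        candidate = generate(attempt)
        quality = score(candidate)
        history.append((candidate, quality))
        if quality >= threshold:
            return candidate, history
    # Nothing passed: return None so the caller can escalate to a human retake.
    return None, history
```

Returning the full attempt history alongside the result keeps the loop auditable: a failed shot arrives at human review with every candidate and score already attached.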

There's a meta-layer to all of this that I find especially interesting: building this pipeline is itself a challenge for the agents we're developing.

The agents are not only helping create the MV — they're also gradually helping design and improve the production system behind it.

Over time, we want agents that can:

In other words, agents won't just be artists inside the system. They'll become collaborators on the system itself.

Right now, SPECTRA is a glimpse of what a mostly autonomous creative team can do with a rough pipeline and a lot of scaffolding.

The next steps are about:

If you're curious what this looks like today, you can see it here: https://youtu.be/CwDxsTWy1Ak

We're not at a fully zero-touch creative pipeline yet. But with this MV, we're closer than we've ever been.

Shun Fujiyoshi is an Alibaba Cloud MVP building autonomous creative production systems with AI agents, Wan 2.7, and HappyHorse.
