As an important part of Flink's support for unified stream and batch processing and cloud-native services, Flink Remote Shuffle was open sourced today.

Flink Remote Shuffle is a Shuffle implementation that uses external services to complete data exchange between tasks in batch scenarios. This article introduces the research and development background and the design and use of Flink Remote Shuffle.

Flink Remote Shuffle stems from increasing user demand for unified stream and batch processing and cloud-native services.

Real-time processing can improve the user experience and increase the competitiveness of services in the market. As a result, more business scenarios require both real-time and offline processing. If stream processing and batch processing are done in different frameworks, users pay extra costs in framework learning, code development, and online O&M. At the same time, real-time processing in many application scenarios is limited by late-arriving data (for example, users may fill in comments after a long time) or by business logic upgrades, so offline tasks are used to correct the data. If the same logic is written twice in two different frameworks, the calculation results may be inconsistent.

Flink introduced a data model of unified stream and batch processing to solve these problems. It uses a single set of APIs to process real-time data and offline data. To support this goal, Flink designs and implements a DataStream API [1], a Table / SQL API [2], and Connectors [3] that unify stream and batch processing. Flink also supports scheduling [5] of unified stream and batch processing at the execution layer [6], as well as a Batch execution mode optimized for batch processing. To support the Batch execution mode, Flink needs an efficient and stable Blocking Shuffle. The built-in Blocking Shuffle of Flink relies on the TaskManager where the upstream task ran to keep serving data to downstream tasks after the upstream task finishes. This prevents the TaskManager from being released immediately, which reduces resource utilization. It also ties the stability of the Shuffle service to the stability of task execution.

On the other hand, cloud-native can improve cluster resource utilization by supporting offline and online hybrid deployment. It provides unified O&M operation interfaces to reduce O&M costs and supports task auto scaling through dynamic resource orchestration. Therefore, more users are using Kubernetes to manage their cluster resources. Flink actively embraces cloud-native. In addition to providing native support for Kubernetes [7], Flink provides an Adaptive Scheduler [9], which dynamically scales jobs based on the amount of available resources, and promotes the separation of storage and computing [10] of State. The Shuffle process is the largest user of local disks, so some problems must be solved for the Batch mode to support cloud-native better. These problems include the separation of storage and computing for Blocking Shuffle, reduced use of local disks, and decoupling between computing and storage resources.

Therefore, if you want to support unified stream and batch processing and cloud-native better, independent Shuffle services are the only way to realize data transmission between tasks.

Flink Remote Shuffle is designed and implemented based on the ideas above. It supports multiple important features, including:

1) Storage and Computing Separation: Separated storage and computing enable computing resources and storage resources to scale independently. Computing resources can be released after computing is completed. Shuffle stability is no longer affected by computing stability.

2) Multiple Deployment Modes: Flink Remote Shuffle supports deployment in Kubernetes, Yarn, and Standalone environments.

3) Flink Remote Shuffle adopts a traffic control mechanism similar to the Credit-Based mechanism to realize zero-copy data transmission. Managed memory is used to the maximum extent to avoid Out of Memory (OOM) events and improve the system stability and performance.

4) Multiple optimizations are realized to provide excellent performance and stability, including load balancing, disk I/O optimization, data compression, connection reuse, and small packet merging.

5) Flink Remote Shuffle supports the correctness verification of Shuffle data and tolerates restarts of Shuffle processes and physical nodes.

6) Combined with the Adaptive Batch Job Scheduler of Flink (FLIP-187) [11], Flink Remote Shuffle supports dynamic execution optimization, such as dynamically determining operator parallelism.

Internal core tasks of Alibaba have used Flink-based unified stream and batch processing since Double 11 in 2020. It was the first large-scale application of unified stream and batch processing in production in the industry. With unified stream and batch processing, Alibaba solved the problem of inconsistent metric definitions between stream and batch processing in scenarios such as the Tmall marketing engine. The efficiency of data report creation improved 4 to 10 times. Day and night peak-load shifting was achieved through the hybrid deployment of stream tasks and batch tasks, saving half of the resource costs.

Flink Remote Shuffle is an important part of unified stream and batch processing. Since the service was launched, its largest cluster has reached a scale of more than 1,000. It has steadily supported multiple business units, such as the Tmall marketing engine and Tmall International, in previous promotional activities. The data volume reached the PB level, which proved the stability and performance of the system.

Flink Remote Shuffle is implemented based on the unified plug-in Shuffle interface of Flink. As a data processing platform that unifies stream and batch processing, Flink can adapt to a variety of Shuffle policies in different scenarios, such as network-based online Pipeline Shuffle, TaskManager-based Blocking Shuffle, and Remote Shuffle based on remote services.

Shuffle policies differ in terms of transmission methods and storage media but share common requirements in dataset lifecycle, metadata management, notification of downstream tasks, and data distribution policies. A plug-in Shuffle architecture [12] was introduced in Flink to provide unified support for different types of Shuffle and simplify the implementation of new Shuffle policies, including Flink Remote Shuffle.

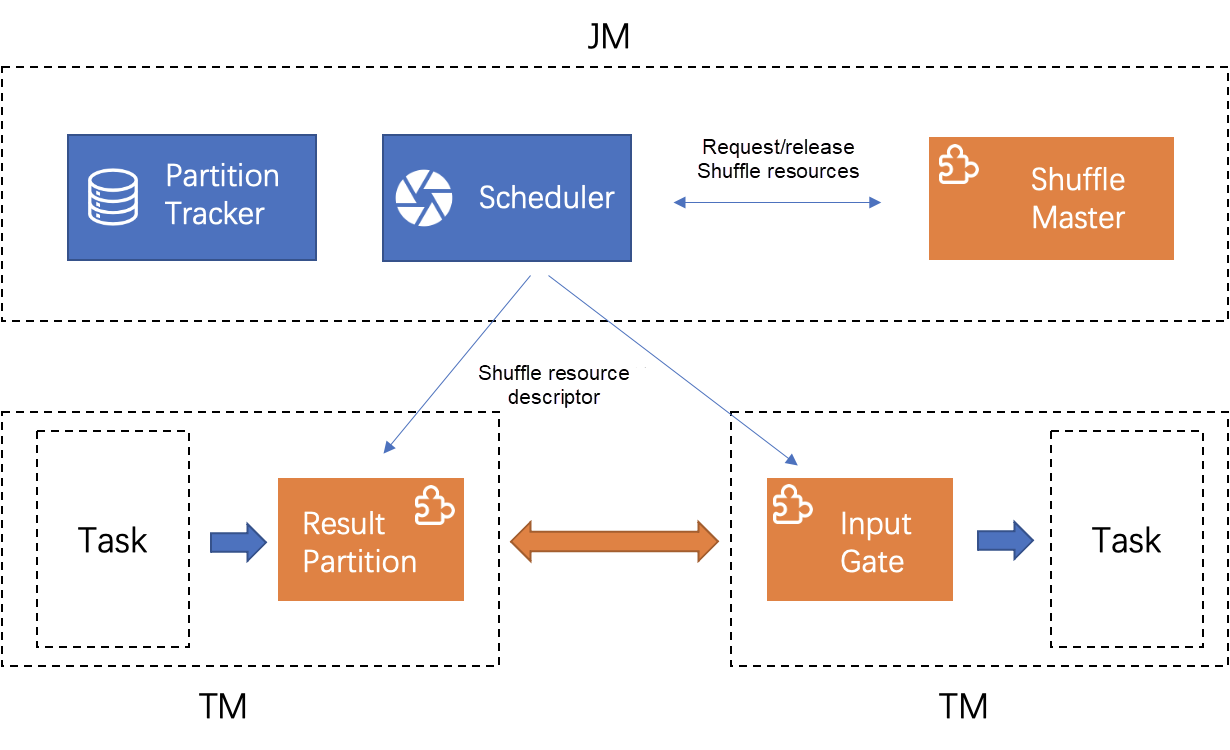

As shown in the following figure, a Shuffle plug-in contains two parts. ShuffleMaster is responsible for requesting and releasing resources on the JobMaster side. InputGate and ResultPartition are responsible for reading and writing data on the TaskManager side. The scheduler uses ShuffleMaster to request resources and hands them to PartitionTracker for management. When upstream and downstream tasks are started, the scheduler passes them descriptors of the Shuffle resources, which describe where data is written and read.

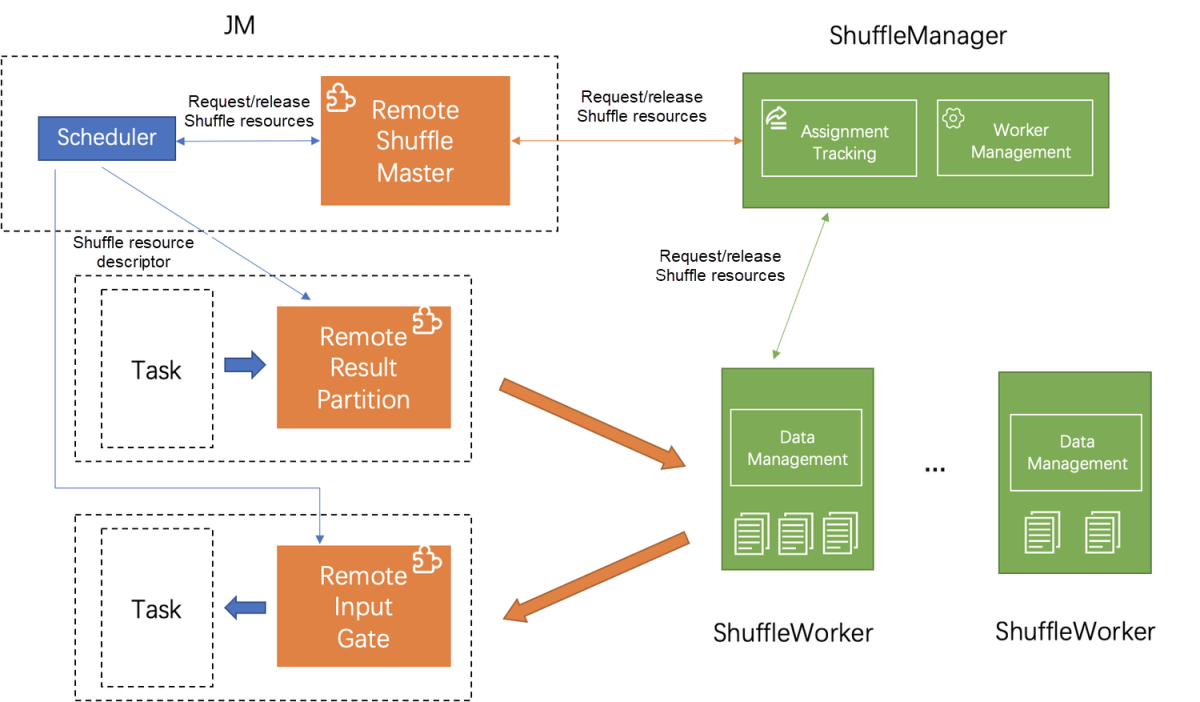

Based on the unified plug-in Shuffle interface of Flink, Flink Remote Shuffle provides the data shuffle service through a separate cluster. The cluster uses the classic master-slave structure. ShuffleManager serves as the master node of the entire cluster: it manages worker nodes and allocates and manages Shuffle datasets. ShuffleWorkers serve as the slave nodes of the cluster and are responsible for reading, writing, and cleaning up datasets.

When an upstream task is started, the scheduler of Flink requests resources from ShuffleManager through the RemoteShuffleMaster plug-in. ShuffleManager selects an appropriate ShuffleWorker to provide services based on the type of dataset and the load of each worker. After the scheduler gets the Shuffle resource descriptor, it passes the descriptor to the upstream task when starting it. The upstream task sends data to the corresponding ShuffleWorker for persistent storage according to the ShuffleWorker address recorded in the descriptor. Correspondingly, when the downstream task is started, it reads from the ShuffleWorker according to the address recorded in the descriptor. This is how data is transmitted.
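The allocation step described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the class names mirror the roles in the text, but the fields, the `allocate` method, the worker addresses, and the "fewest active partitions" load metric are hypothetical and are not the actual Flink Remote Shuffle API.

```python
from dataclasses import dataclass


@dataclass
class ShuffleWorker:
    address: str
    active_partitions: int = 0  # hypothetical stand-in for the worker's load


@dataclass(frozen=True)
class ShuffleDescriptor:
    dataset_id: str
    worker_address: str  # where the upstream writes and the downstream reads


class ShuffleManager:
    def __init__(self, workers):
        self.workers = list(workers)

    def allocate(self, dataset_id):
        # Pick the least-loaded worker (ties go to the first one in the list).
        worker = min(self.workers, key=lambda w: w.active_partitions)
        worker.active_partitions += 1
        return ShuffleDescriptor(dataset_id, worker.address)


manager = ShuffleManager([
    ShuffleWorker("worker-a:10086", active_partitions=2),
    ShuffleWorker("worker-b:10086", active_partitions=0),
])
first = manager.allocate("dataset-1")
second = manager.allocate("dataset-2")
third = manager.allocate("dataset-3")
```

In this sketch the first two datasets go to `worker-b` until its load catches up with `worker-a`. The descriptor is the only thing the tasks need: both the writer and the reader resolve the worker from it.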

Fault tolerance and self-healing capability are critical for a long-running service. Flink Remote Shuffle monitors ShuffleWorker and ShuffleMaster through heartbeats and other mechanisms. When exceptions such as heartbeat timeouts and I/O failures occur, it keeps the state of the entire cluster consistent by deleting datasets and synchronizing dataset state. Please see the documentation [13] on Flink Remote Shuffle for more information about how exceptions are handled.
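A heartbeat timeout check of this kind might look roughly like the following Python sketch. The `ClusterState` class, its methods, and the 30-second timeout are assumptions made for illustration; the real exception handling is described in the documentation [13].

```python
class ClusterState:
    """Tracks live workers and the datasets stored on each of them."""

    def __init__(self, heartbeat_timeout):
        self.heartbeat_timeout = heartbeat_timeout
        self.last_heartbeat = {}      # worker id -> time of last heartbeat
        self.datasets_by_worker = {}  # worker id -> set of dataset ids

    def heartbeat(self, worker_id, now):
        self.last_heartbeat[worker_id] = now
        self.datasets_by_worker.setdefault(worker_id, set())

    def add_dataset(self, worker_id, dataset_id):
        self.datasets_by_worker[worker_id].add(dataset_id)

    def expire_workers(self, now):
        """Drop workers whose heartbeat timed out; return the datasets lost with them."""
        lost = set()
        for worker_id, seen in list(self.last_heartbeat.items()):
            if now - seen > self.heartbeat_timeout:
                lost |= self.datasets_by_worker.pop(worker_id)
                del self.last_heartbeat[worker_id]
        return lost


state = ClusterState(heartbeat_timeout=30)
state.heartbeat("worker-a", now=0)
state.heartbeat("worker-b", now=0)
state.add_dataset("worker-a", "dataset-1")
state.add_dataset("worker-b", "dataset-2")
state.heartbeat("worker-b", now=25)  # worker-a stays silent
lost = state.expire_workers(now=40)  # only worker-a exceeded the timeout
```

Time is passed in explicitly so that the example is deterministic. The returned set of lost datasets is what a scheduler would need in order to decide which upstream results must be reproduced.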

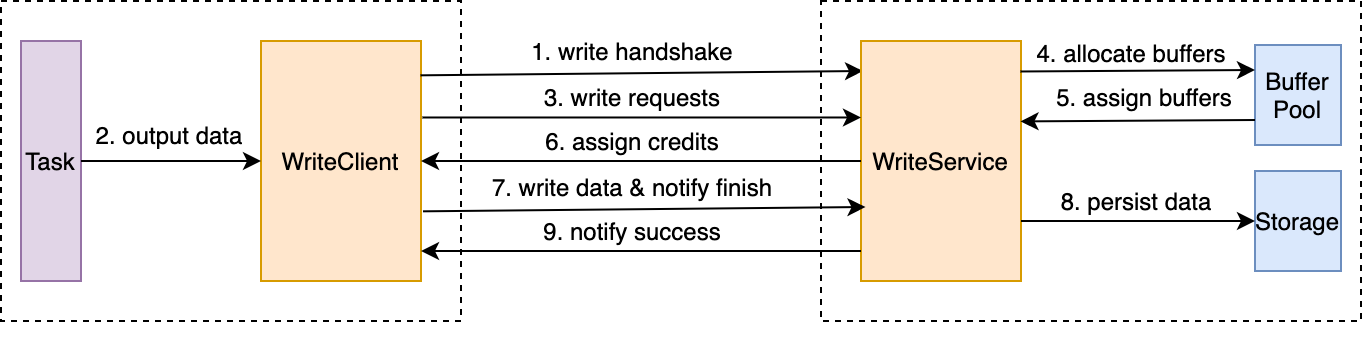

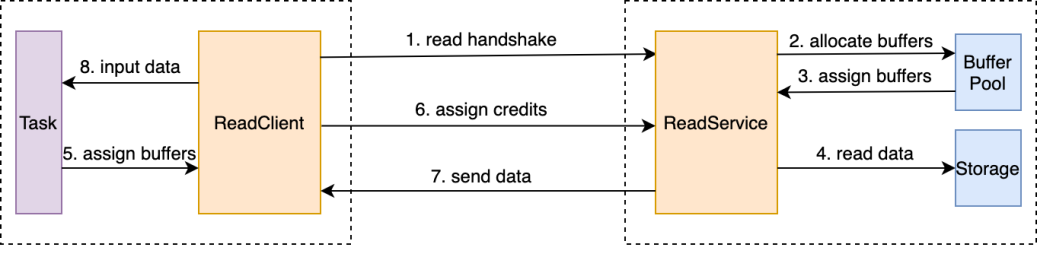

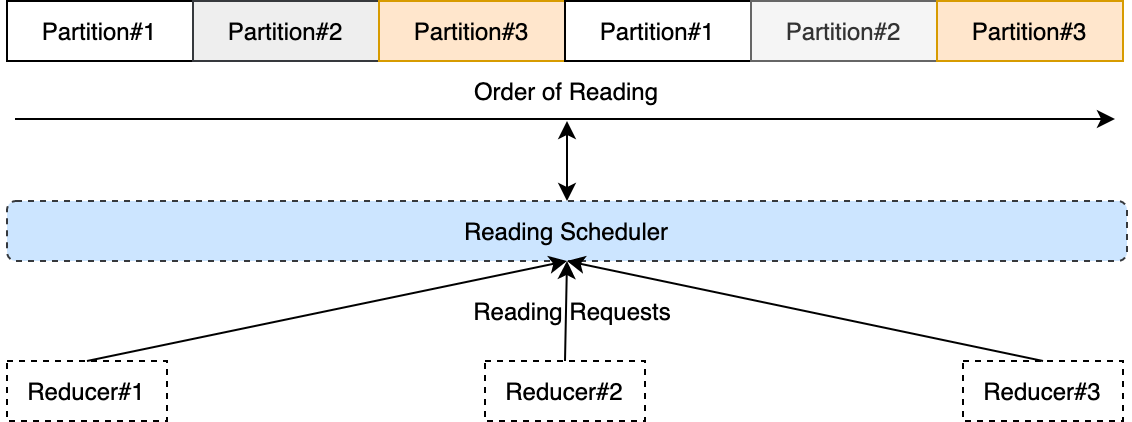

Remote Shuffle of data can be divided into two stages: read and write. In the data write phase, the output data of the upstream computing task is written to the remote ShuffleWorker. In the data read phase, the downstream computing task reads and processes the output data of the upstream computing tasks from the remote ShuffleWorker. The Data Shuffle protocol defines the data type, granularity, constraints, and procedure in this process. In summary, the following figure shows the process of data write and read:

Multiple optimization methods are used during the data read and write process. These methods include data compression, traffic control, data copy reduction, and managed memory use.

File I/O is a bottleneck for Shuffle writes, especially on hard disks, so optimizing it speeds up the whole Shuffle process.

Besides the data compression mentioned above, a widely used technical solution merges small files or small data blocks. This way, the sequential read and write of files are increased, and excessive random reads and writes are avoided. As a result, file I/O performance is optimized. Systems, such as Spark, have optimized the merging of small blocks of data into large blocks for direct Shuffle between non-remote computing nodes.

According to our research, the data merging solution of the remote Shuffle system was first proposed by Microsoft, LinkedIn, and Quantcast in a paper named Sailfish [15]. Later, Riffle [16] of Princeton and Facebook, Cosco [17] of Facebook, Magnet [18] of LinkedIn, and Spark Remote Shuffle [19] of Alibaba EMR implemented similar optimization methods. Shuffle data sent by different upstream computing tasks to the same downstream computing task is pushed to the same remote Shuffle service nodes for merging. The downstream computing task can pull the merged data from the remote Shuffle service nodes.

In addition, we proposed another optimization in the direct Shuffle implementation between Flink computing nodes: Sort-Spill + I/O scheduling. After the output data of a computing task fills up the memory buffer, the data is sorted and spilled to a file. Data is always appended to the same file to avoid creating multiple files. On the read side, read requests are scheduled so that data is read in file offset order. In the best case, the file is read completely sequentially. The following figure shows the basic storage structure and I/O scheduling process. Please see the Flink blog [20] or its Chinese website [21] for more details.
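The Sort-Spill + I/O scheduling idea can be illustrated with a small, self-contained Python sketch. It is a simplification under stated assumptions: an in-memory `io.BytesIO` stands in for the on-disk file, records are `(subpartition, payload)` pairs, and the class and method names are hypothetical rather than taken from Flink.

```python
import io


class SortSpillFile:
    def __init__(self):
        self.file = io.BytesIO()  # stand-in for the single on-disk data file
        self.index = []           # (subpartition, offset, length) per region

    def spill(self, buffered_records):
        # Sort the full buffer by subpartition (stable, so arrival order within
        # a subpartition is kept), then append everything to the same file.
        for subpartition, payload in sorted(buffered_records, key=lambda r: r[0]):
            offset = self.file.seek(0, io.SEEK_END)
            self.file.write(payload)
            self.index.append((subpartition, offset, len(payload)))

    def read_in_offset_order(self, requested_subpartitions):
        # Serve all pending read requests in file offset order, so the file
        # is scanned forward instead of being read at random positions.
        wanted = set(requested_subpartitions)
        regions = sorted(
            (offset, length, sub) for sub, offset, length in self.index if sub in wanted
        )
        result = []
        for offset, length, sub in regions:
            self.file.seek(offset)
            result.append((sub, self.file.read(length)))
        return result


spill_file = SortSpillFile()
spill_file.spill([(1, b"b1"), (0, b"a1"), (1, b"b2")])  # first full buffer
spill_file.spill([(0, b"a2"), (1, b"b3")])              # second full buffer
served = spill_file.read_in_offset_order([1, 0])
```

When every subpartition is requested, as here, the regions are visited in exactly the order they were written, which corresponds to the fully sequential best case mentioned above.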

Both solutions have advantages and disadvantages.

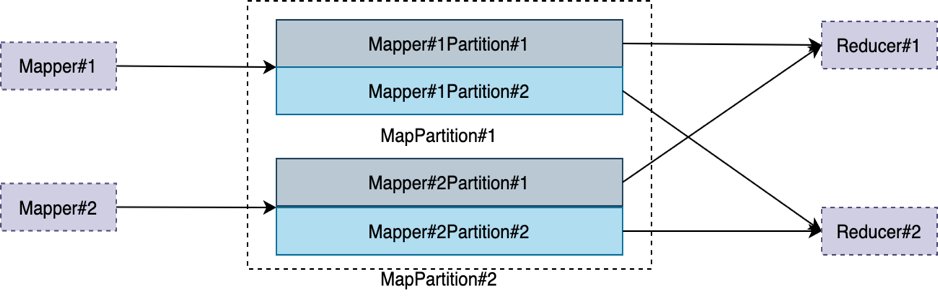

The abstraction of Flink Remote Shuffle does not reject any optimization strategy. Flink Remote Shuffle can be regarded as an intermediate data storage service that can perceive Map-Reduce semantics. The basic data storage unit is DataPartition, which has two types, MapPartition and ReducePartition. Data contained in MapPartition is generated by an upstream computing task and may be consumed by several downstream computing tasks. The following figure shows the generation and consumption of MapPartition.

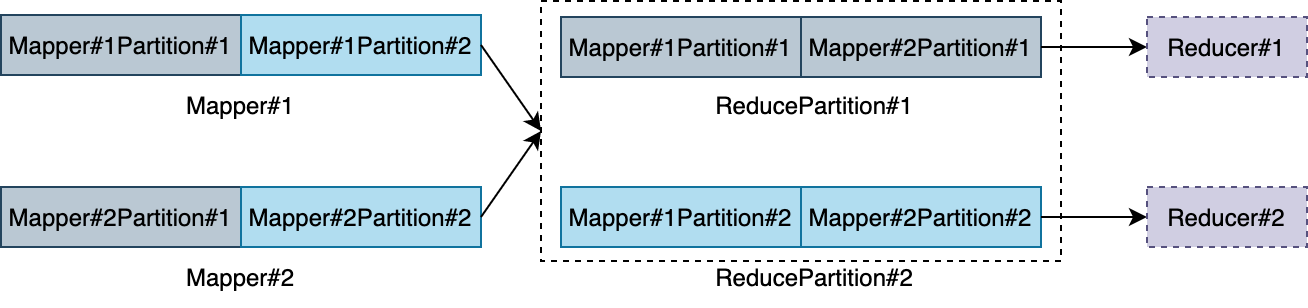

Data contained in ReducePartition is generated by merged outputs of multiple upstream computing tasks. It is consumed by a single downstream computing task. The following figure shows the generation and consumption of ReducePartition:
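The difference between the two partition types can be made concrete with a short Python sketch. The function names and the dictionary-based layout are hypothetical and only model the data flow, not the storage format of Flink Remote Shuffle.

```python
from collections import defaultdict


def as_map_partitions(outputs):
    # One partition per upstream task; it holds data for all downstream tasks.
    return {upstream: list(records) for upstream, records in outputs.items()}


def read_from_map_partitions(map_partitions, downstream):
    # A downstream task must visit every MapPartition and pick out its share.
    return [
        record
        for upstream in sorted(map_partitions)
        for target, record in map_partitions[upstream]
        if target == downstream
    ]


def as_reduce_partitions(outputs):
    # One partition per downstream task; outputs of all upstream tasks are merged.
    merged = defaultdict(list)
    for upstream in sorted(outputs):
        for target, record in outputs[upstream]:
            merged[target].append(record)
    return dict(merged)


# Each upstream task emits (downstream task index, record) pairs.
outputs = {
    "map-0": [(0, "a"), (1, "b")],
    "map-1": [(0, "c"), (1, "d")],
}
map_partitions = as_map_partitions(outputs)
reduce_partitions = as_reduce_partitions(outputs)
```

Both layouts deliver the same records to a given downstream task. What changes is where the merge happens: at read time across many MapPartitions, or at write time into a single ReducePartition.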

It is an important capability to support deployment in a variety of environments and meet differentiated deployment requirements. Flink Remote Shuffle supports three deployment modes: Kubernetes, YARN, and Standalone, which meets the deployment environment requirements of most users. In each deployment mode, scripts and templates are provided to users. Please see the documents about Kubernetes deployment mode [22], YARN deployment mode [23], and Standalone deployment mode [24] for more details. Among the three modes, Kubernetes mode and YARN mode implement high availability of the master node (ShuffleManager). This feature in Standalone mode will be supported in future versions.

In addition, the Metric system of Flink Remote Shuffle provides multiple important monitoring metrics for users to monitor the running state of the entire system. The metrics include the number of active nodes, the total number of jobs, the number of available buffers on each node, the number of data partitions, the number of network connections, network throughput, and JVM metrics. In the future, more monitoring metrics will be added to facilitate O&M. Users can access the Metric service of each process (ShuffleManager and ShuffleWorker) to query the metric data. Please see the user documentation [25] for more information. In the future, a Metric reporting capability will also be provided to allow users to report metrics to external systems, such as Prometheus.

The deployment and O&M of Flink Remote Shuffle are easy. In the future, the Development Team will continue to improve the deployment and O&M experience by simplifying information collection and problem positioning, improving automation and reducing O&M costs.

The remote Shuffle system is divided into two parts: the client end and the server end. The server runs as an independent cluster, and the client runs in the Flink cluster as an agent for Flink jobs to access the remote Shuffle service. In terms of deployment mode, users may access the same set of Shuffle services through different Flink clusters. Therefore, multi-version compatibility is a common user concern. The version of the Shuffle service is continuously upgraded with new features added and optimizations made. If incompatibility between the client and the server occurs, the simplest way is to upgrade the client end of different users together. However, this requires the cooperation of users and is not always feasible.

The best answer is to guarantee full compatibility between versions. Flink Remote Shuffle has made multiple efforts to achieve this:

We hope to achieve full compatibility between different versions and avoid unnecessary surprises through these efforts. If you want to use more new features and optimizations of the latest version, you need to upgrade the client.

In production practice, Flink Remote Shuffle is proved to have good stability and performance due to multiple performance and stability optimizations.

Several design choices and optimizations improve the stability of Flink Remote Shuffle. For example, the separation of storage and computing prevents the stability of Shuffle from being affected by the stability of computing. Credit-based traffic control sends data according to the processing capacity of consumers, which prevents consumers from being overwhelmed.
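The principle behind credit-based traffic control can be shown with a minimal Python sketch. It assumes that one credit corresponds to one free buffer on the consumer side; the `CreditBasedSender` class is hypothetical and does not reflect the actual network protocol.

```python
from collections import deque


class CreditBasedSender:
    def __init__(self):
        self.credits = 0        # buffers the consumer has announced as free
        self.pending = deque()  # data waiting for credit
        self.sent = []          # data already pushed to the consumer

    def enqueue(self, buffer):
        self.pending.append(buffer)
        self._drain()

    def add_credits(self, count):
        # Called when the consumer announces that it freed `count` buffers.
        self.credits += count
        self._drain()

    def _drain(self):
        # Send only as much as the consumer has room for.
        while self.credits > 0 and self.pending:
            self.sent.append(self.pending.popleft())
            self.credits -= 1


sender = CreditBasedSender()
for buffer in (b"buf-1", b"buf-2", b"buf-3"):
    sender.enqueue(buffer)   # no credit yet, so nothing is sent
sent_before_credit = list(sender.sent)
sender.add_credits(2)        # consumer has room for two buffers
sent_after_credit = list(sender.sent)
```

Because the producer never sends more than the announced credit, a slow consumer causes data to queue on the sending side instead of exhausting memory on the receiving side.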

Connection reuse, small packet merging, and the active health check of network connections help improve network stability. The maximum use of managed memory decreases the possibility of OOM. Data verification enables the system to tolerate restarts of processes and physical nodes.
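As an illustration of data verification, the sketch below prefixes each data block with a checksum and checks it on read. The choice of CRC32, the 4-byte header layout, and the function names are assumptions made for this example; the article does not specify which verification scheme Flink Remote Shuffle uses.

```python
import zlib


def write_block(payload: bytes) -> bytes:
    # Prefix the payload with its CRC32 as a 4-byte big-endian header.
    checksum = zlib.crc32(payload)
    return checksum.to_bytes(4, "big") + payload


def read_block(block: bytes) -> bytes:
    # Recompute the checksum and reject the block if it does not match.
    expected = int.from_bytes(block[:4], "big")
    payload = block[4:]
    if zlib.crc32(payload) != expected:
        raise ValueError("checksum mismatch: shuffle data is corrupted")
    return payload


block = write_block(b"shuffle data")
restored = read_block(block)
corrupted = block[:-1] + bytes([block[-1] ^ 0xFF])  # flip the bits of the last byte
```

A reader that detects a mismatch can report the partition as lost, so the data is reproduced instead of being consumed silently in a corrupted form.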

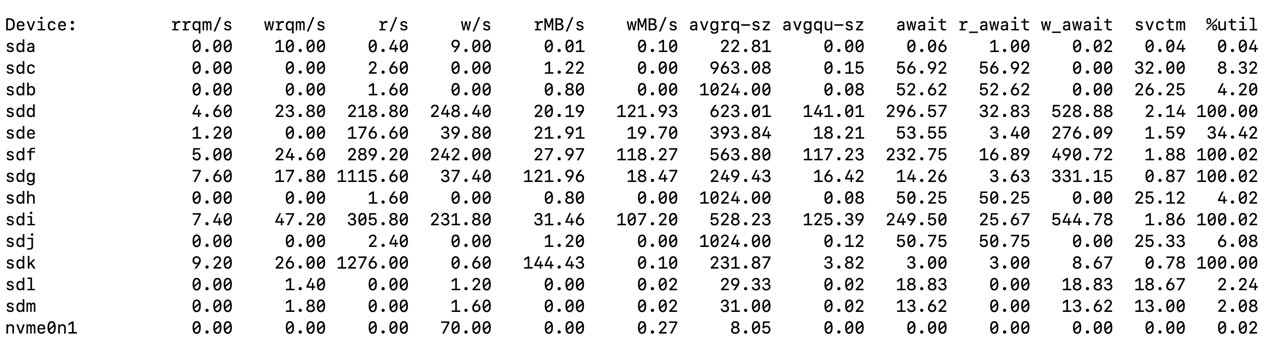

In terms of performance, data compression, load balancing, and file I/O optimization have improved the performance of data Shuffle. In scenarios with a small amount of data, the performance of Flink Remote Shuffle and direct Shuffle between computing nodes is similar because most of the Shuffle data is stored in the cache of the operating system. In scenarios with a large amount of data, the performance of Flink Remote Shuffle is better thanks to the centralized decision-making capabilities, including load balancing of the ShuffleManager node and I/O management of the entire physical machine by a single ShuffleWorker node in a unified manner. The following figure shows the disk I/O information of Flink Remote Shuffle when running a job (TPC-DS q78).

As shown in the figure above, disks of sdd, sde, sdf, sdg, sdi, and sdk are used with relatively high throughput. We will continue to optimize the performance.

The current version of Flink Remote Shuffle is used in Alibaba on a large scale. It has proved to be usable in production in terms of performance and stability. We will continue to iteratively improve and enhance Flink Remote Shuffle in the future. Multiple topics are on the agenda, such as performance and usability. If you are interested, you are welcome to join us in improving Flink Remote Shuffle. Together, we can promote the development of unified stream and batch processing and cloud-native services in Flink.

[1] https://cwiki.apache.org/confluence/display/FLINK/FLIP-134%3A+Batch+execution+for+the+DataStream+API

[4] https://cwiki.apache.org/confluence/display/FLINK/FLIP-143%3A+Unified+Sink+API

[5] https://cwiki.apache.org/confluence/display/FLINK/FLIP-119+Pipelined+Region+Scheduling

[9] https://cwiki.apache.org/confluence/display/FLINK/FLIP-160%3A+Adaptive+Scheduler

[10] https://cwiki.apache.org/confluence/display/FLINK/FLIP-158%3A+Generalized+incremental+checkpoints

[11] https://cwiki.apache.org/confluence/display/FLINK/FLIP-187%3A+Adaptive+Batch+Job+Scheduler

[12] https://cwiki.apache.org/confluence/display/FLINK/FLIP-31%3A+Pluggable+Shuffle+Service

[13] https://github.com/flink-extended/flink-remote-shuffle/blob/main/docs/user_guide.md#fault-tolerance

[14] https://flink.apache.org/2019/06/05/flink-network-stack.html

[15] Rao S, Ramakrishnan R, Silberstein A, et al. Sailfish: A framework for large scale data processing[C]//Proceedings of the Third ACM Symposium on Cloud Computing. 2012: 1-14.

[16] Zhang H, Cho B, Seyfe E, et al. Riffle: optimized shuffle service for large-scale data analytics[C]//Proceedings of the Thirteenth EuroSys Conference. 2018: 1-15.

[17] https://databricks.com/session/cosco-an-efficient-facebook-scale-shuffle-service

[18] Shen M, Zhou Y, Singh C. Magnet: push-based shuffle service for large-scale data processing[J]. Proceedings of the VLDB Endowment, 2020, 13(12): 3382-3395.

[20] https://flink.apache.org/2021/10/26/sort-shuffle-part2.html

[21] https://www.alibabacloud.com/blog/sort-based-blocking-shuffle-implementation-in-flink-part-1_598368

[22] https://github.com/flink-extended/flink-remote-shuffle/blob/master/docs/deploy_on_kubernetes.md

[23] https://github.com/flink-extended/flink-remote-shuffle/blob/master/docs/deploy_on_yarn.md

[24] https://github.com/flink-extended/flink-remote-shuffle/blob/master/docs/deploy_standalone_mode.md

[25] https://github.com/flink-extended/flink-remote-shuffle/blob/master/docs/user_guide.md
