Released by ELK Geek

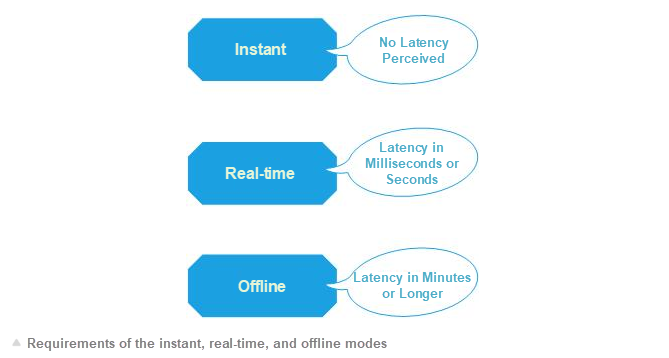

First of all, let us clarify the definitions of real-time, instant, and offline modes.

In instant mode, a data change can be queried immediately after it is made. A transaction isolation mechanism is used internally, so queries on the affected data are blocked until the update is completed. For example, in a single-instance relational database, you can query a data change immediately after it is made.

In real-time mode, data is synchronized between heterogeneous or homogeneous data sources within an acceptably short time, usually measured in seconds, milliseconds, or microseconds. You can define the time limit based on business requirements and implementation capabilities. If MySQL synchronization between primary and secondary databases cannot be completed in instant mode, it must at least be completed in real-time mode. For this reason, read/write splitting in business systems is suitable only for data that does not need to be instantly consistent.

Compared with real-time mode, the offline mode does not impose strong timeliness requirements. Instead, it emphasizes data throughput. Synchronization in offline mode usually takes minutes, hours, or days. For example, in big data business intelligence (BI) applications, the data synchronization time is generally required to be T+1.
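As a small illustration of the T+1 requirement, the sketch below computes the time window that a daily offline job would cover, assuming the job that runs on day T synchronizes everything created on day T-1. The function name `t_plus_1_window` is hypothetical and not part of any tool discussed in this article.

```python
from datetime import date, datetime, time, timedelta
from typing import Tuple


def t_plus_1_window(run_date: date) -> Tuple[datetime, datetime]:
    """Return the half-open window [start, end) covering the day before run_date."""
    end = datetime.combine(run_date, time.min)  # midnight at the start of the run date
    start = end - timedelta(days=1)             # midnight one day earlier
    return start, end


# A job that runs on 2021-01-07 synchronizes the data of 2021-01-06.
start, end = t_plus_1_window(date(2021, 1, 7))
print(start.isoformat(), end.isoformat())
```

Using a half-open window means that consecutive daily runs neither overlap nor leave gaps.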

The previous article, "Combining Elasticsearch with DBs: Real-time Data Synchronization", describes a technical solution for real-time data synchronization in a real-world project. The solution is based on the Change Data Capture (CDC) mechanism. It involves a long technology pipeline with many intermediate steps, which requires complex coding and a high investment and makes it hard to put into production quickly. In fact, in most business systems, offline synchronization is the capability needed most often. To this end, this article describes how to synchronize data from databases (DBs) to Elasticsearch in offline mode.

Offline synchronization from DBs to Elasticsearch is suitable for many business scenarios. Compared with real-time synchronization, offline synchronization better demonstrates the benefits of replacing DBs with Elasticsearch as a query engine.

The following sections describe several business scenarios that involve offline synchronization.

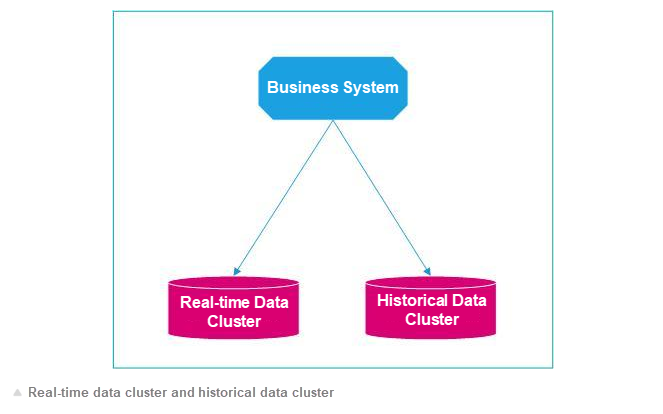

In the e-commerce or logistics industry, a large amount of order data is generated every day. Data of outstanding orders is queried in real time at a high frequency, which requires a lot of upstream and downstream data transfer and processing. By contrast, data of closed orders is queried at a low frequency, but the volume of historical orders grows quickly. If a data system treats real-time data and historical data equally in its architecture design, it will soon suffer serious performance problems. For example, updates and queries on real-time data slow down, user experience deteriorates, and even a single query can congest the system because it touches a large volume of historical data.

Conventionally, real-time data is separated from historical data. Real-time data is queried frequently, and one query involves a small volume of data, allowing a fast system response. By contrast, historical data is queried less frequently, and one query involves a large volume of data, resulting in a slow but acceptable response.

Due to the limitations of traditional relational databases, we can use Elasticsearch to handle such a scenario. An Elasticsearch real-time cluster is used for queries of real-time data, while an Elasticsearch historical cluster is used for queries of historical data. The CDC mechanism is used for the synchronization of real-time data. Historical data synchronization requires a dedicated offline data channel.
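The split between the two clusters can be sketched as a simple routing rule. This is a minimal sketch under stated assumptions: the cluster URLs, the status names, and the function `cluster_for_order` are all hypothetical and only illustrate the idea of sending queries for outstanding orders to the real-time cluster and queries for closed orders to the historical cluster.

```python
from typing import Dict

# Hypothetical endpoints; replace them with the addresses of your own clusters.
CLUSTERS: Dict[str, str] = {
    "realtime": "http://es-realtime.example.internal:9200",
    "history": "http://es-history.example.internal:9200",
}

# Assumed statuses of orders that are still outstanding.
OPEN_STATUSES = {"created", "paid", "shipped"}


def cluster_for_order(status: str) -> str:
    """Pick the cluster that should serve a query for orders in the given status."""
    if status in OPEN_STATUSES:
        return CLUSTERS["realtime"]
    return CLUSTERS["history"]


print(cluster_for_order("paid"))
print(cluster_for_order("closed"))
```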

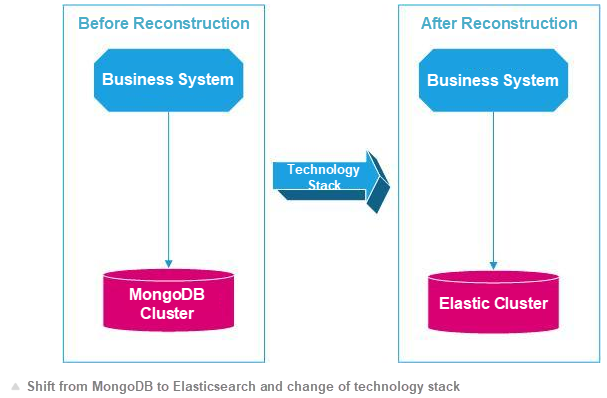

As your business changes and develops, the business system needs to be reconstructed accordingly. This involves both the business model and the technical architecture. When the existing database system can no longer meet business requirements, whether because of staffing constraints or technology evolution, you need a more suitable database system.

Even the renowned MongoDB is sometimes applied where it does not fit, to say nothing of the limitations of relational databases. For example, you can shift a variety of business systems based on MongoDB storage to Elasticsearch for faster speed and lower costs (for more information, see the article "Why You Should Migrate from MongoDB to Elasticsearch"). After such a system reconstruction, you need to synchronize all the MongoDB data to Elasticsearch. This is an offline synchronization scenario.
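One detail of such a migration can be sketched in a few lines: MongoDB stores the document key in an `_id` field, while Elasticsearch treats `_id` as metadata that belongs in the bulk action line rather than in the document body. The sketch below, assuming the documents have already been read into plain Python dictionaries, builds a request body in the newline-delimited format of the Elasticsearch bulk API. The function name `to_bulk_body` and the index name `orders` are hypothetical.

```python
import json
from typing import Any, Dict, Iterable


def to_bulk_body(docs: Iterable[Dict[str, Any]], index: str) -> str:
    """Convert MongoDB-style documents into an Elasticsearch bulk request body."""
    lines = []
    for doc in docs:
        source = dict(doc)               # copy so the caller's document is not modified
        doc_id = str(source.pop("_id"))  # move the key out of the document body
        lines.append(json.dumps({"index": {"_index": index, "_id": doc_id}}))
        # default=str is a simplification for values such as dates or ObjectId
        lines.append(json.dumps(source, default=str))
    # The bulk format requires every line, including the last, to end with a newline.
    return "".join(line + "\n" for line in lines)


print(to_bulk_body([{"_id": 1, "status": "closed"}], "orders"), end="")
```

A real migration would read the documents in batches and send each body to the cluster. This sketch covers only the conversion step.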

Compared with real-time synchronization, offline synchronization is technically much less complex and its scenarios are less demanding, so there are more technical solutions to choose from. Many excellent specialized tools make offline synchronization easy to configure. The following sections introduce several popular products that are my personal favorites. I will briefly analyze their main features and architectural principles.

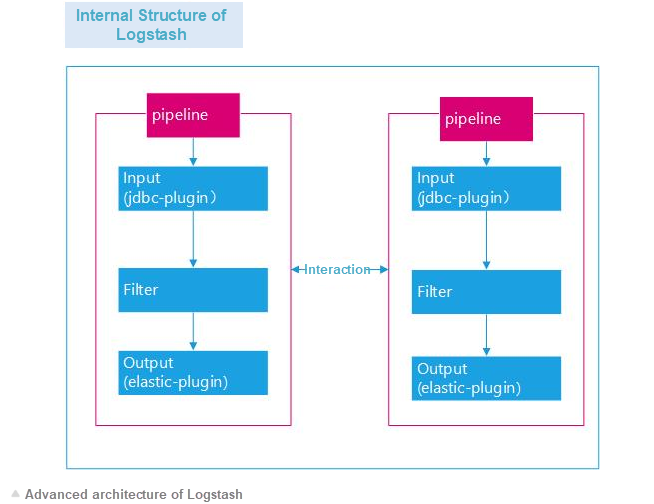

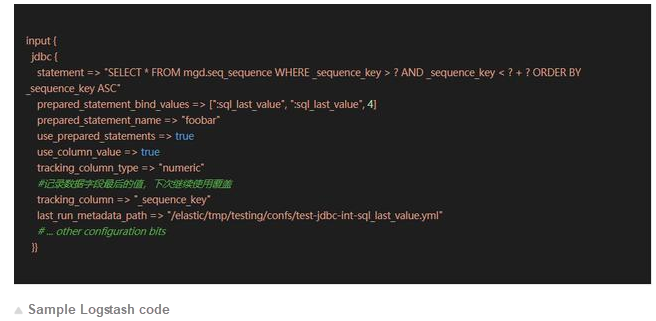

Logstash is an Elastic product and an essential part of the Elastic Stack.

Logstash is more popular in the O&M community than in the development community. This is largely due to the popularity of the Elastic Stack. In fact, Logstash is one of the simplest and most useful tools for data synchronization.

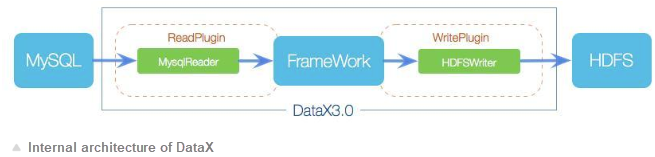

DataX is a data synchronization tool provided by Alibaba. It is used for offline data synchronization between databases.

DataX is not the best tool. However, by virtue of its simplicity, good performance, and large throughput, plus the reputation of Alibaba, DataX is highly supported in the development community and widely used in one-time offline data synchronization projects.

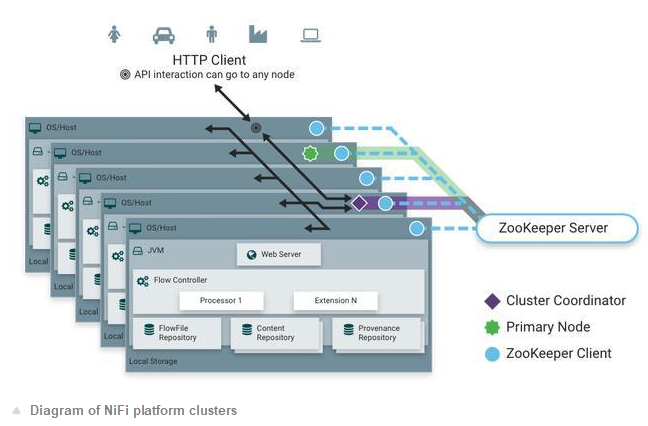

NiFi is a top-level Apache project. It is used for data synchronization.

NiFi has a long history. However, in China it is not as popular as Sqoop from the Hadoop ecosystem. In any case, NiFi has been integrated with the latest version of CDH (Cloudera's Distribution Including Apache Hadoop). I personally like NiFi very much because of its platform-based system architecture. Compared with NiFi, DataX and Logstash are merely small tools.

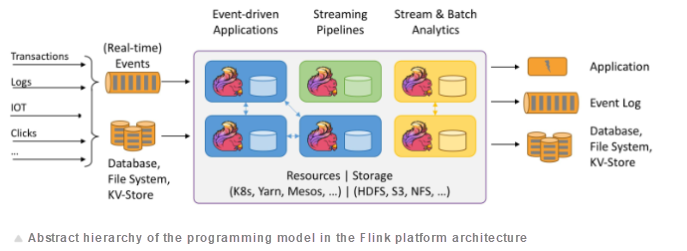

Currently, Flink is the most popular stream processing product in the big data community. It is widely used in the real-time computing field.

Flink is positioned in the real-time computing field, but it also provides strong support for offline computing due to the characteristics of its underlying architecture design. Flink provides a friendly programming model for quick development and deployment. Spark is similar to Flink, so I will not describe it in detail here.

Many professional extract, load, and transform (ELT) products are available. For more information, see the BI materials. I will not provide much detail here.

The preceding sections describe several popular data synchronization tools. Some tools support offline synchronization while other tools support offline and real-time synchronization. In fact, more tools are available. Each product has its own limitations and advantages. Therefore, do not treat them as the same, nor use them all together. Balance your tools based on your actual business and technology needs.

Each tool is specifically positioned and needs to be evaluated objectively. The requirements for offline data synchronization in business systems are diverse and there is no perfect product that meets the requirements of all scenarios.

For example, DataX is a direct synchronization tool without data processing features. Its MySQL module supports incremental synchronization, but continuous batch-by-batch synchronization has to be triggered manually. This causes some inconvenience, but the data throughput is very good.

Logstash has no official JDBC-based output plug-in for writing to databases. This may lead some people to jump to the conclusion that Logstash is not a good tool because it cannot synchronize data between multiple databases. In fact, Logstash is a great tool that suits many data synchronization scenarios. It provides a scheduling mechanism for continuous batch-by-batch synchronization that is unavailable in DataX, which greatly reduces your workload.
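The idea behind continuous batch-by-batch synchronization can be sketched with a tracking value: each batch reads only the rows whose key is greater than the last key already synchronized. The Logstash JDBC input plug-in exposes a comparable idea through its tracking column and `sql_last_value`. The sketch below is not how Logstash or DataX is implemented. It uses an in-memory SQLite table as a stand-in for the source database, and the table `orders` and the functions `fetch_batch` and `sync_all` are hypothetical.

```python
import sqlite3
from typing import Any, Dict, List, Tuple


def fetch_batch(conn: sqlite3.Connection, last_value: int,
                batch_size: int) -> Tuple[List[Dict[str, Any]], int]:
    """Read the next batch of rows whose id is greater than last_value."""
    rows = conn.execute(
        "SELECT id, status FROM orders WHERE id > ? ORDER BY id LIMIT ?",
        (last_value, batch_size),
    ).fetchall()
    docs = [{"id": row[0], "status": row[1]} for row in rows]
    new_last = docs[-1]["id"] if docs else last_value  # advance the tracking value
    return docs, new_last


def sync_all(conn: sqlite3.Connection, batch_size: int = 2) -> List[Dict[str, Any]]:
    """Pull batches until the source is exhausted and return everything read."""
    last_value = 0
    synced: List[Dict[str, Any]] = []
    while True:
        docs, last_value = fetch_batch(conn, last_value, batch_size)
        if not docs:
            break
        synced.extend(docs)  # a real job would write this batch to Elasticsearch here
    return synced


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, status TEXT)")
conn.executemany("INSERT INTO orders VALUES (?, ?)",
                 [(1, "closed"), (2, "closed"), (3, "open")])
print(len(sync_all(conn)))
```

A scheduler would call `fetch_batch` periodically and persist the tracking value between runs. That automation is the part that has to be done by hand when batches are triggered manually.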

A wide range of offline data synchronization scenarios are encountered in business systems. A single data synchronization tool cannot handle all these scenarios. To use multiple data synchronization tools, you must create a hybrid system.

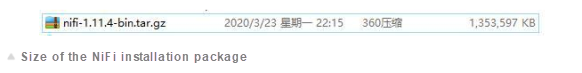

For example, NiFi provides a lot of powerful features and can read data from local files. However, the NiFi installation package may occupy several gigabytes of your disk space. If the size of the data to be synchronized is small or even smaller than the NiFi installation package, select a standalone tool such as Logstash.

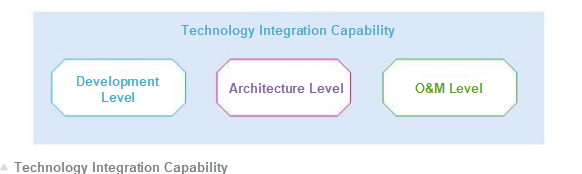

Each tool has its own architecture design concept and unique technical characteristics. To master and integrate them well, you have to invest a certain amount of time and effort. Otherwise, you may run into many problems. For example, at the development level, Flink is easy for beginners and allows you to develop data synchronization code. However, as a platform product, Flink itself is highly complex, especially in terms of architecture and O&M.

Different people approach data synchronization in different ways. Readily-available tools can quickly respond to demands, while re-written programs can better meet custom demands. Therefore, select tools based on your preferences and needs.

Elasticsearch has many excellent features, allowing it to handle almost any scenario, including real-time data and historical data scenarios. Elasticsearch saves you a lot of time.

This article summarizes our practices when combining DBs and Elasticsearch in business systems. This article is intended for reference. This is an original article. If you wish to reproduce it, please indicate the source.

Currently, Elasticsearch is used by almost all information technology companies in China, from small studios to large companies with thousands of employees. It is widely used and highly regarded in many fields. Elasticsearch is easy to get started with. However, you need to invest a lot of time to become an Elasticsearch expert. Therefore, we have designed special courses to help more individual and enterprise users master Elasticsearch.

The courses start with data synchronization, including offline and real-time data synchronization, methods of importing DB data to Elasticsearch, and methods of handling different types of data. For more information, see Practices for DB and Elasticsearch Data Synchronization. The special courses concentrate more on sample code from case studies than on theory.

Li Meng is an Elastic Stack user and a certified Elasticsearch engineer. Since his first explorations into Elasticsearch in 2012, he has gained in-depth experience in the development, architecture, and operation and maintenance (O&M) of the Elastic Stack and has carried out a variety of large and mid-sized projects. He provides enterprises with Elastic Stack consulting, training, and tuning services. He has years of practical experience and is an expert in various technical fields, such as big data, machine learning, and system architecture.

Declaration: This article is reproduced with authorization from Li Meng, the original author. The author reserves the right to hold users legally liable in the case of unauthorized use.
