AnalyticDB for MySQL is highly compatible with the MySQL protocol, supports millisecond-level data retrieval, and answers queries within seconds. It provides real-time multi-dimensional analysis and business exploration for large volumes of data. AnalyticDB for MySQL Data Lakehouse Edition combines low-cost offline data processing with high-performance online analysis, bringing a database-like experience to data lakes. It helps enterprises reduce costs and improve efficiency by building an enterprise-level data analysis platform.

Introduction to APS (AnalyticDB Pipeline Service)

As part of AnalyticDB for MySQL Data Lakehouse Edition, the APS data channel component provides real-time data streaming for low-cost, low-latency data ingestion and warehousing. This article focuses on the challenges of using APS to ingest SLS data into the lake quickly with Exactly-Once consistency, and on how they were solved. Flink, a widely recognized big data processing framework, serves as the underlying engine of the data tunnel. The lake of AnalyticDB for MySQL is built on Hudi, a mature data lake foundation used by many large enterprises. Building on these proven technologies, AnalyticDB for MySQL Data Lakehouse Edition provides an integrated solution that deeply combines the lake and the warehouse.

Challenges of Achieving Exactly-Once Consistency in Data Ingestion

In the data tunnel, events such as upgrades or scaling can cause exceptions that restart the pipeline. A restart replays previously processed data from the source, which produces duplicate data on the target. One way to address this is to configure a business primary key and rely on Hudi's Upsert capability for idempotent writes. However, SLS data can reach several GB per second (for example, 4 GB/s for one business), and at that throughput Hudi Upsert is too costly to meet the requirements. Because SLS data is append-only by nature, the data is written in Hudi's Append Only mode for high throughput, and other mechanisms are used to prevent data duplication and loss.

Stream computing generally provides one of the following consistency guarantees:

| Semantics | Description |
| --- | --- |
| At-Least-Once | Data is not lost during processing, but may be duplicated. |
| At-Most-Once | Data is not duplicated during processing, but may be lost. |
| Exactly-Once | All data is processed exactly once, without duplication or loss. |

Exactly-Once is the most demanding of these semantics. Within a stream computing engine, Exactly-Once refers to the consistency of the engine's internal state. Business scenarios, however, require end-to-end Exactly-Once consistency: after a failover, the data on the target must still match the source, with nothing duplicated or lost.

To achieve Exactly-Once consistency, we must consider failover: when a task restarts after a failure, the system must recover to a consistent state. Flink is known for stateful stream processing because its checkpoint mechanism saves state to backend storage and restores a consistent state from that storage on restart. However, Flink only guarantees the consistency of its own state during recovery. In a complete system consisting of the source, Flink, and the target, data can still be lost or duplicated, which breaks end-to-end consistency. The following string concatenation example illustrates data duplication.

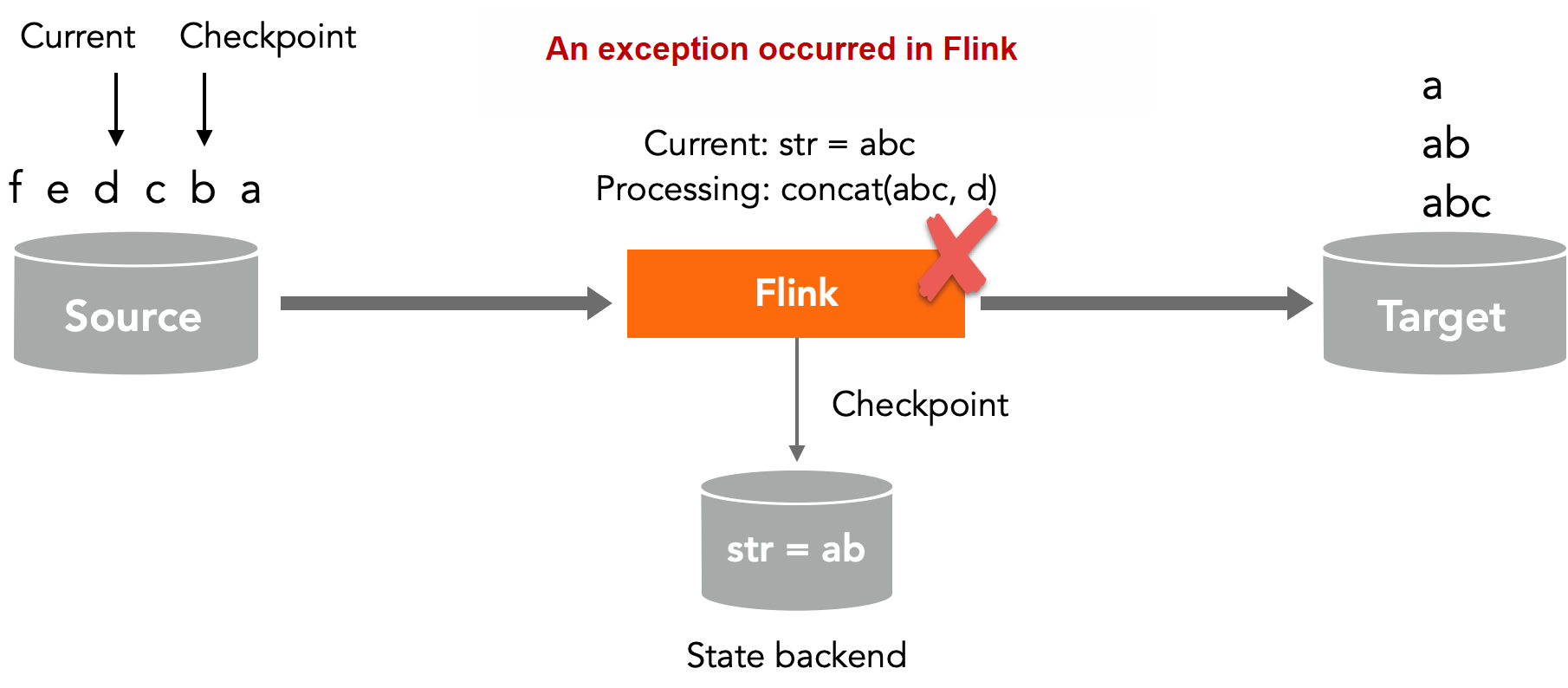

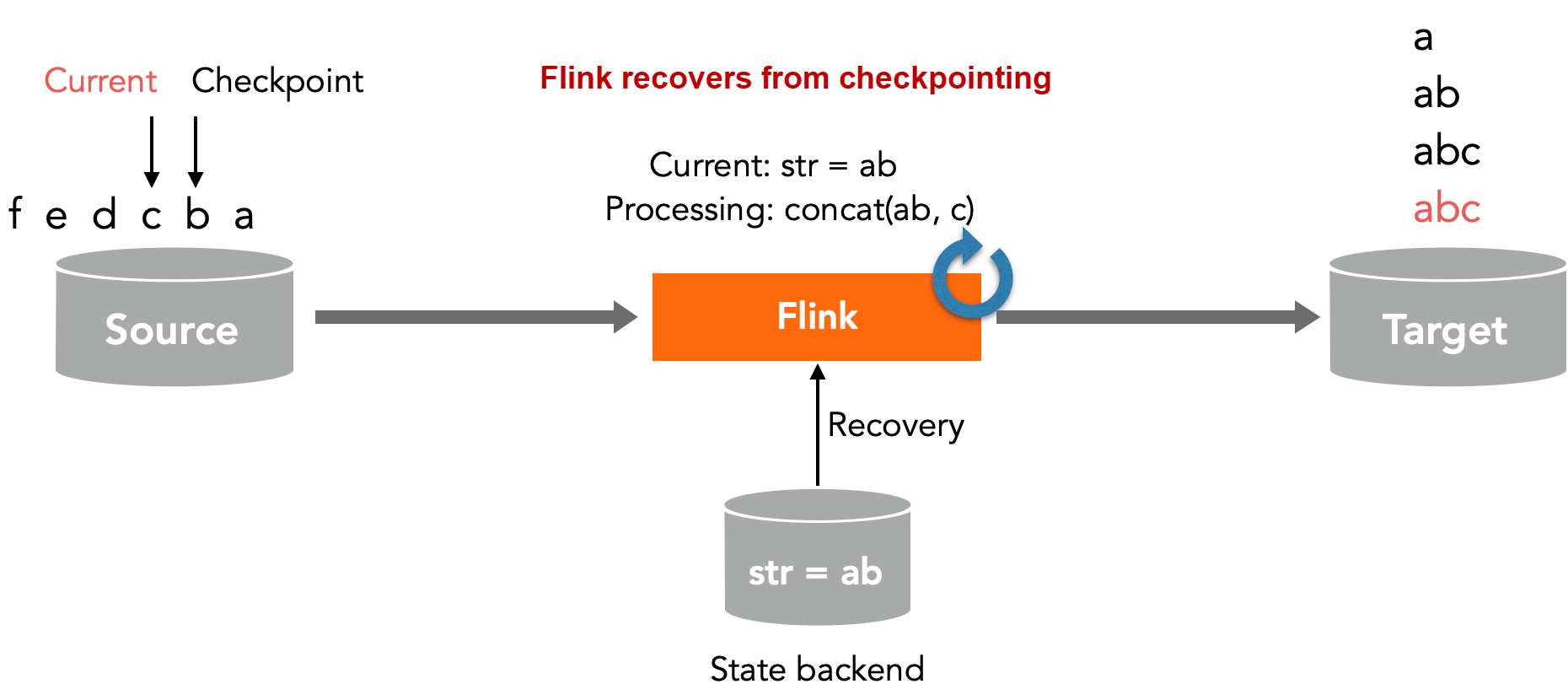

The following figure illustrates string concatenation. The logic reads characters one by one from the source and appends each one to the accumulated string. After each character, the current string is output to the target, so the target eventually contains the non-repeating strings a, ab, and abc.

In this example, the Flink checkpoint stores the completed concatenation ab and the corresponding source offset (indicated by the checkpoint arrow). The current arrow indicates the offset currently being processed. At this moment, a, ab, and abc have already been output to the target. When an abnormal restart occurs, Flink restores its state from the checkpoint, rolls back to the checkpointed offset, reprocesses the character c, and outputs abc to the target again, so abc appears twice.

Because Flink restores its state from the checkpoint, its state never contains duplicated characters (such as abb or abcc) or lost characters (such as ac), so Flink itself is Exactly-Once. However, the target contains two copies of abc, so end-to-end Exactly-Once is not guaranteed.
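To make the example concrete, here is a minimal Python simulation of the scenario. It is a sketch, not Flink code: the function `run` and the `(offset, state)` checkpoint tuple are hypothetical. The checkpoint is taken after ab, the job crashes after emitting abc, and the restart resumes from the checkpoint. Flink's own state ends up correct, but the sink's side effects are not rolled back.

```python
from typing import List, Optional, Tuple

# (next source offset, concatenated state): what the checkpoint stores
Checkpoint = Tuple[int, str]


def run(source: str, sink: List[str], checkpoint: Checkpoint,
        checkpoint_at: int, crash_after: Optional[int] = None) -> Checkpoint:
    """Concatenate characters from `source`, emitting every intermediate string.

    A checkpoint is taken once `checkpoint_at` characters have been consumed.
    If `crash_after` is set, the job "crashes" once that many characters have
    been consumed and only the last checkpoint survives.
    """
    offset, state = checkpoint
    while offset < len(source):
        state += source[offset]
        offset += 1
        sink.append(state)  # side effect on the target; a restore cannot undo it
        if offset == checkpoint_at:
            checkpoint = (offset, state)
        if crash_after is not None and offset == crash_after:
            return checkpoint
    return (offset, state)


sink: List[str] = []
restored = run("abc", sink, (0, ""), checkpoint_at=2, crash_after=3)
final = run("abc", sink, restored, checkpoint_at=2)  # restart from the checkpoint
print(restored)  # (2, 'ab')
print(final)     # (3, 'abc'): the engine's own state is exactly-once
print(sink)      # ['a', 'ab', 'abc', 'abc']: the target sees abc twice
```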

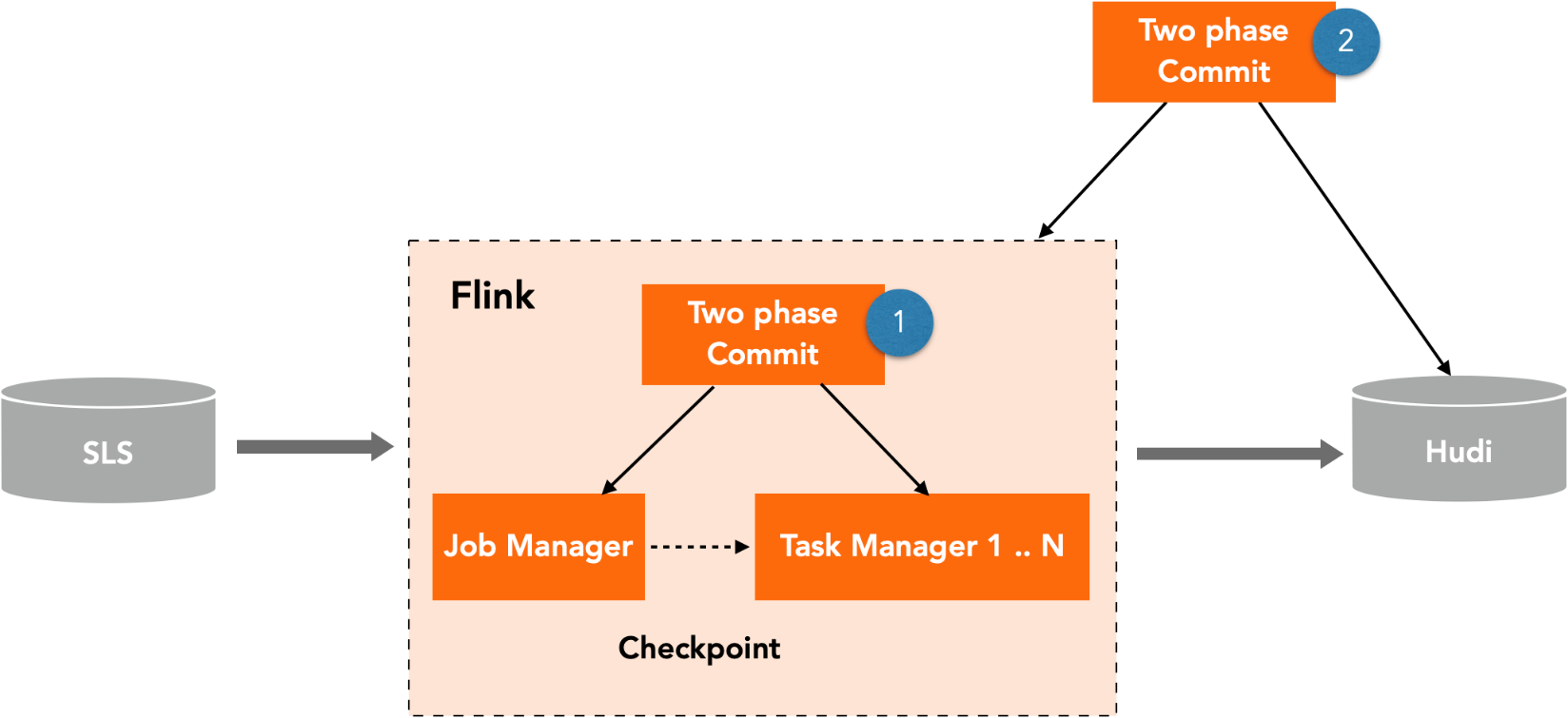

Flink is a complex distributed system that includes operators such as sources and sinks, which run in parallel across slots. In such a system, Exactly-Once consistency is commonly achieved with the two-phase commit protocol, and Flink's checkpointing is an implementation of two-phase commit.
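For readers less familiar with the protocol, the following is a minimal, generic two-phase commit sketch in Python. The `Participant` class and `two_phase_commit` function are hypothetical and are not Flink's internal API: the coordinator asks every participant to prepare (precommit) and commits only if all of them vote yes; otherwise everyone aborts.

```python
from typing import List


class Participant:
    """A party in the transaction; names and behavior are illustrative only."""

    def __init__(self, name: str, can_prepare: bool = True):
        self.name = name
        self.can_prepare = can_prepare  # simulate a participant that fails to precommit
        self.state = "idle"

    def prepare(self) -> bool:
        # Phase 1: make pending work durable, then vote yes or no.
        self.state = "prepared" if self.can_prepare else "failed"
        return self.can_prepare

    def commit(self) -> None:
        self.state = "committed"

    def abort(self) -> None:
        self.state = "aborted"


def two_phase_commit(participants: List[Participant]) -> bool:
    # Phase 1 (precommit): ask every participant to prepare and collect votes.
    votes = [p.prepare() for p in participants]
    if all(votes):
        # Phase 2 (commit): all voted yes, so every participant commits.
        for p in participants:
            p.commit()
        return True
    # A single "no" vote aborts the transaction on every participant.
    for p in participants:
        p.abort()
    return False


ok = two_phase_commit([Participant("source"), Participant("sink")])
mixed = [Participant("source"), Participant("sink", can_prepare=False)]
failed = two_phase_commit(mixed)
print(ok, failed, [p.state for p in mixed])  # True False ['aborted', 'aborted']
```

In Flink's checkpointing, operators snapshotting their state when the checkpoint barrier arrives plays roughly the role of phase 1, and the checkpoint-complete notification (`CheckpointListener.notifyCheckpointComplete`) plays the role of phase 2.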

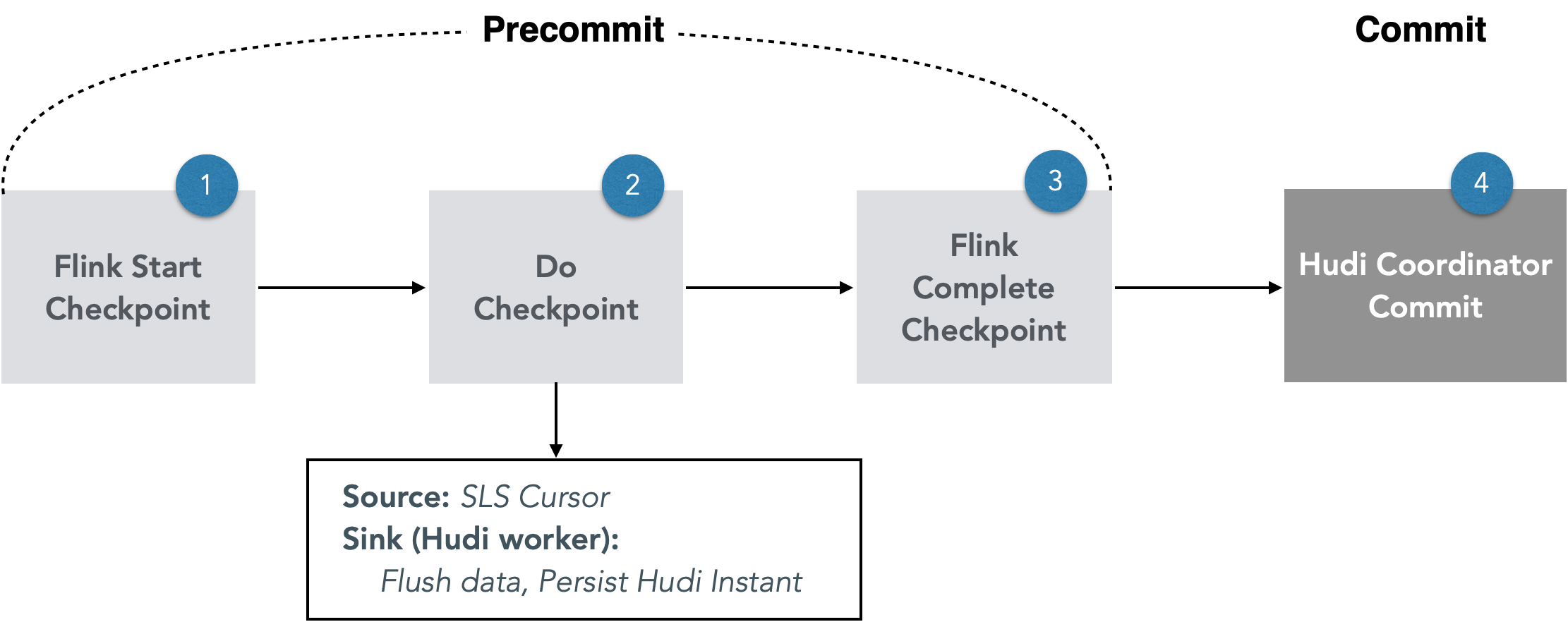

In an end-to-end scenario, Flink and Hudi together form another distributed system, and achieving Exactly-Once consistency in it requires a second set of two-phase commit protocols. (SLS is not discussed here because Flink does not change the state of SLS in this scenario; it only uses the ability of SLS to replay from an offset.) Therefore, end-to-end Exactly-Once consistency relies on two two-phase commits: Flink's own, and the one between Flink and Hudi (see the following figure).

Flink's two-phase checkpointing is not explained in detail here. The following sections focus on the two-phase commit between Flink and Hudi: how the precommit and commit phases are defined, and how to recover from faults so that Flink and Hudi end up in the same state. For example, if Flink has completed a checkpoint but Hudi has not yet committed, how is a consistent state restored?

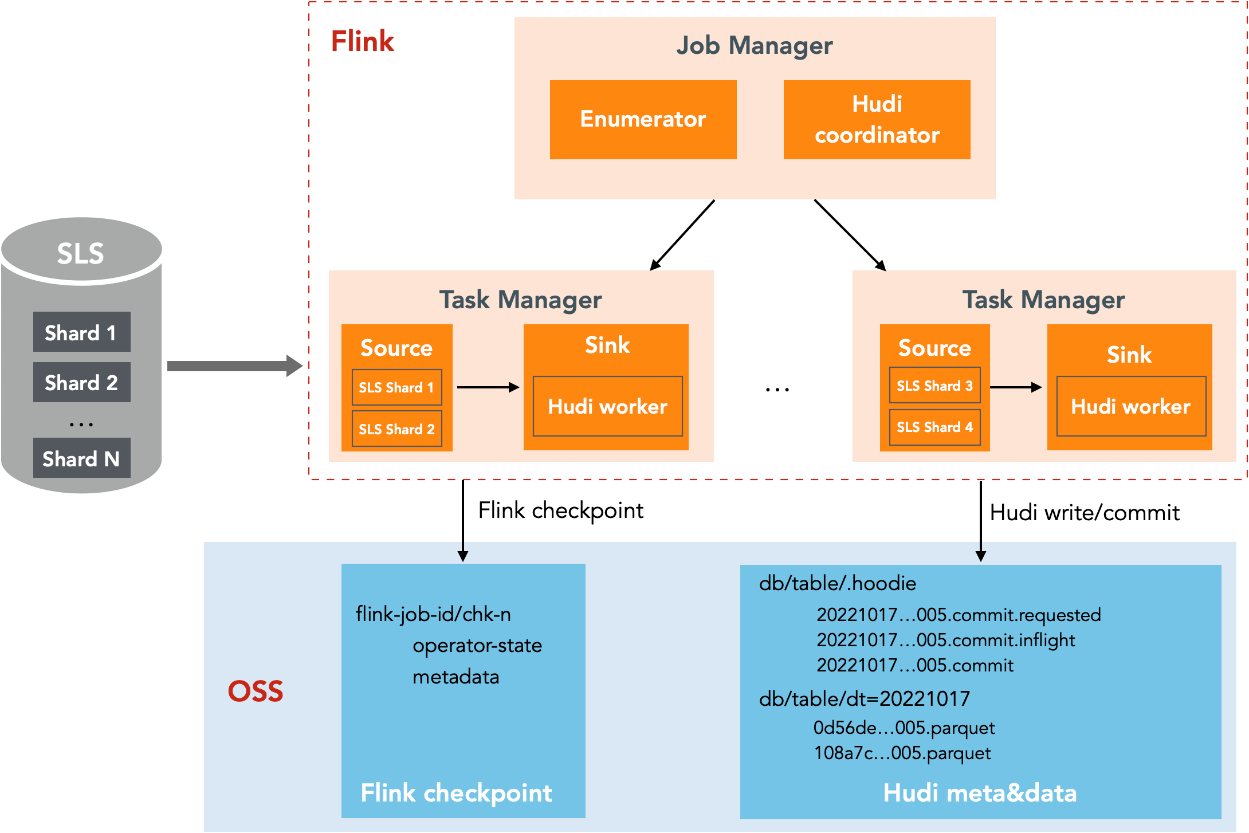

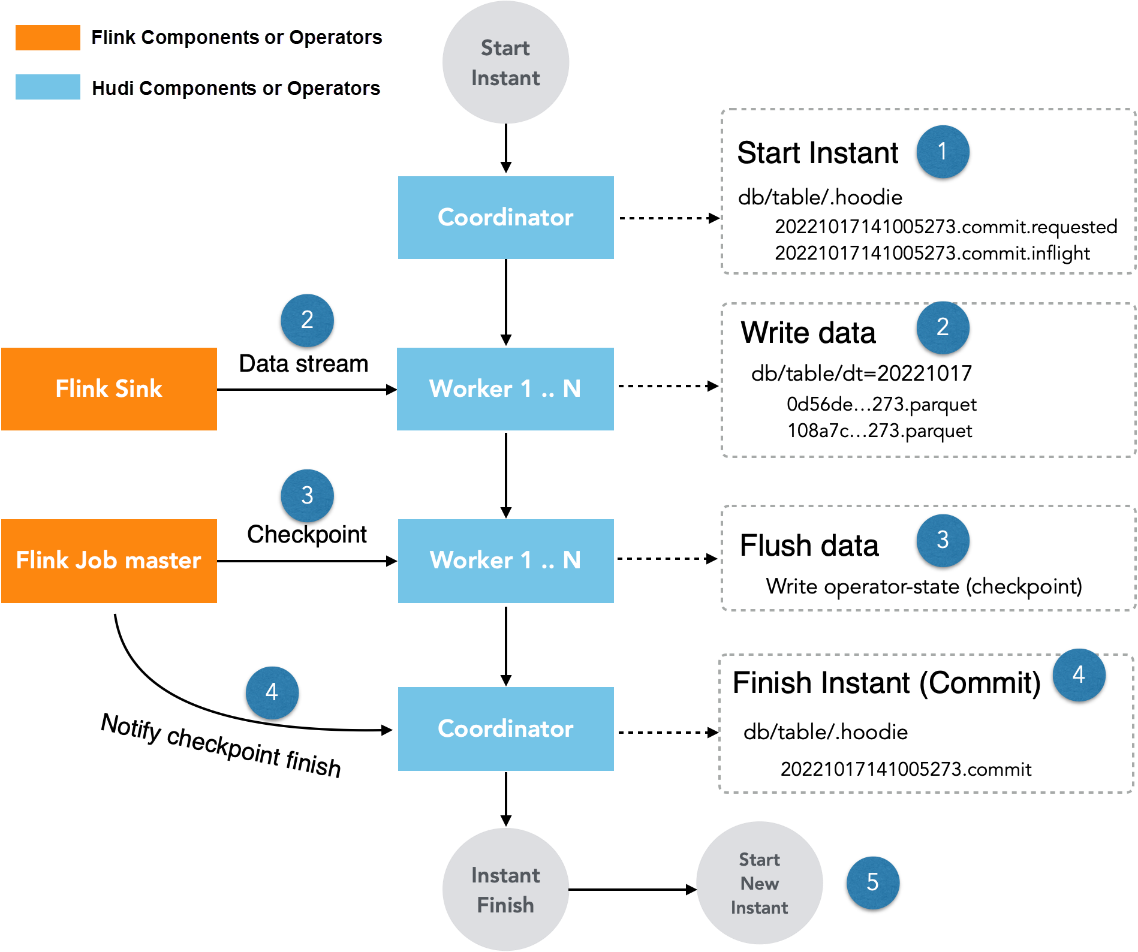

The following describes how to achieve Exactly-Once consistency when ingesting SLS data into the lake. In this architecture, Hudi components are deployed on Flink's JobManager and TaskManagers. Flink reads SLS as the data source and writes the data to a Hudi table. Because SLS stores data in multiple shards, multiple Flink sources read it in parallel. After reading, the sink uses Hudi workers to write the data to the Hudi table. For simplicity, the figure omits the repartitioning and hotspot-scattering logic. Both Flink checkpoints and Hudi data are stored in OSS.

We will not explain how to implement a source that consumes SLS data here. Instead, we introduce the two consumption modes of SLS, consumer group mode and general consumption mode, and their differences.

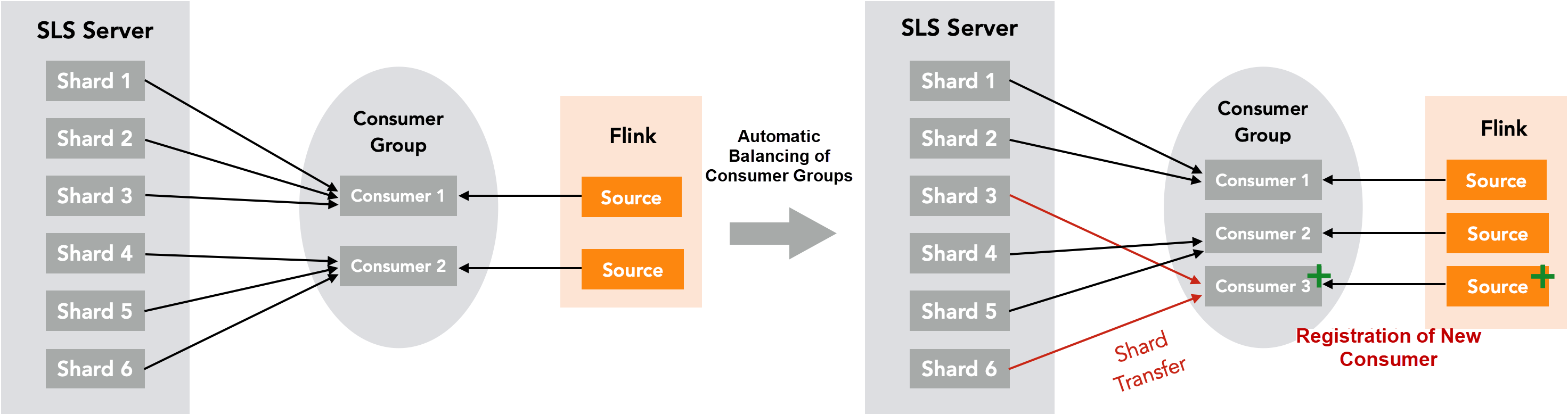

As the name suggests, in consumer group mode multiple consumers register with the same consumer group, and SLS automatically assigns shards to them for reading. The advantage of this mode is that the SLS consumer group handles load balancing. On the left of the figure below, two consumers are registered in the consumer group, so SLS distributes the six shards evenly between them. When a new consumer registers (right of the figure), SLS automatically rebalances by migrating some shards from the existing consumers to the new one. This is called a shard transfer.

This mode balances load automatically and reallocates shards to consumers when SLS shards are split or merged. However, it causes problems in our scenario. To ensure Exactly-Once consistency, we store the current consumer offset of each shard in Flink checkpoints, and at runtime the source on each slot holds the current offsets of its shards. When a shard transfer occurs, how can we guarantee that the operator on the old slot stops consuming and that its offset is handed over to the new slot at the same moment? This introduces a new consistency problem. Moreover, in a large system with hundreds of SLS shards and hundreds of Flink slots, some sources are likely to register with SLS before others, so shard transfers are inevitable.
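The sketch below illustrates why late registration triggers shard transfers. It assumes a hypothetical rebalancing policy (move shards from the most loaded consumer to the newcomer until it has a fair share); SLS's actual balancing algorithm is not described in this article. `add_consumer` and the consumer names are illustrative only.

```python
from typing import Dict, List, Tuple

Assignment = Dict[str, List[int]]
Transfer = Tuple[int, str, str]  # (shard, old owner, new owner)


def add_consumer(assignment: Assignment, newcomer: str) -> Tuple[Assignment, List[Transfer]]:
    """Register `newcomer` and move shards to it until it has a fair share.

    Hypothetical rebalancing policy for illustration, not SLS's algorithm.
    """
    result = {c: list(shards) for c, shards in assignment.items()}
    result[newcomer] = []
    total = sum(len(shards) for shards in result.values())
    target = total // len(result)  # minimum fair share per consumer
    transfers: List[Transfer] = []
    while len(result[newcomer]) < target:
        # Take a shard from the currently most loaded consumer.
        donor = max(result, key=lambda c: len(result[c]))
        shard = result[donor].pop()
        result[newcomer].append(shard)
        transfers.append((shard, donor, newcomer))
    return result, transfers


before = {"consumer-1": [1, 2, 3], "consumer-2": [4, 5, 6]}
after, transfers = add_consumer(before, "consumer-3")
print(transfers)  # [(3, 'consumer-1', 'consumer-3'), (6, 'consumer-2', 'consumer-3')]
```

Each entry in `transfers` is a point where the offset held by the old slot would have to be handed over to the new slot atomically, which is the consistency problem described above.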

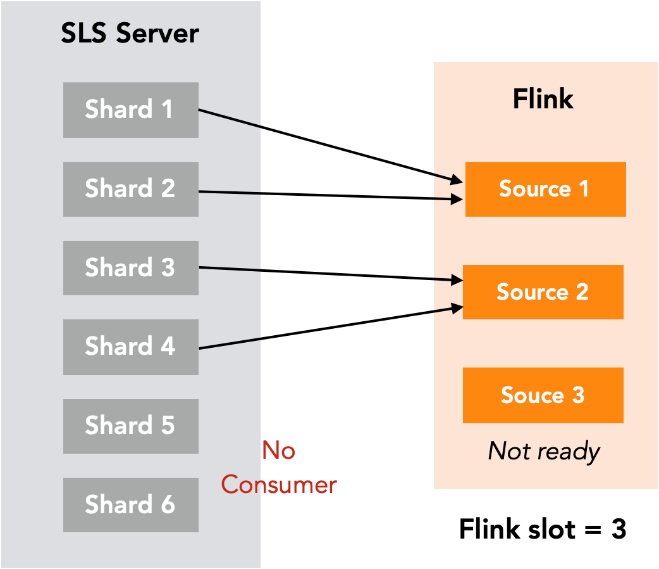

In general consumption mode, the source uses the SLS SDK to specify the shards and offsets to consume, instead of having shards allocated by an SLS consumer group, so shard transfers do not occur. As shown in the figure below, the Flink job has three slots, so each source consumes two of the six shards, and the assignment is computed accordingly. Even if Source 3 is not yet ready, Shard 5 and Shard 6 are not assigned to Source 1 or Source 2. Shards still need to be migrated for load balancing (for example, when some TaskManagers are overloaded), but in this case we trigger the migration ourselves, so its state is controllable and inconsistency is avoided.
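A static assignment like the one in the figure can be computed from the shard list, the source parallelism, and the subtask index alone. The following is a minimal sketch assuming contiguous blocks of shards per source, which matches the figure (Source 3 gets Shard 5 and Shard 6); the article does not state the exact formula APS uses, and `shards_for_subtask` is a hypothetical name.

```python
from typing import List


def shards_for_subtask(shard_ids: List[int], parallelism: int, subtask: int) -> List[int]:
    """Return the contiguous block of shards owned by one source subtask.

    The result depends only on the shard list, the parallelism, and the
    0-based subtask index, so it does not change when subtasks start late.
    """
    ordered = sorted(shard_ids)
    base, extra = divmod(len(ordered), parallelism)
    # The first `extra` subtasks each take one additional shard.
    start = subtask * base + min(subtask, extra)
    size = base + (1 if subtask < extra else 0)
    return ordered[start:start + size]


layout = [shards_for_subtask([1, 2, 3, 4, 5, 6], 3, i) for i in range(3)]
print(layout)  # [[1, 2], [3, 4], [5, 6]]
```

Because every source computes its own shards deterministically, a slow-starting Source 3 never causes its shards to be handed to Source 1 or Source 2.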

This section describes the concepts behind Hudi commits and how Hudi works with Flink to achieve Exactly-Once consistency through a two-phase commit and fault tolerance.

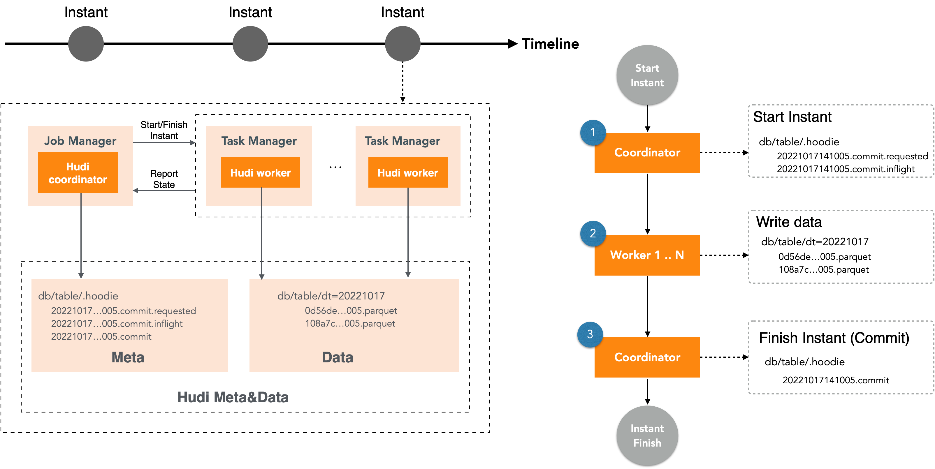

Timeline and Instant

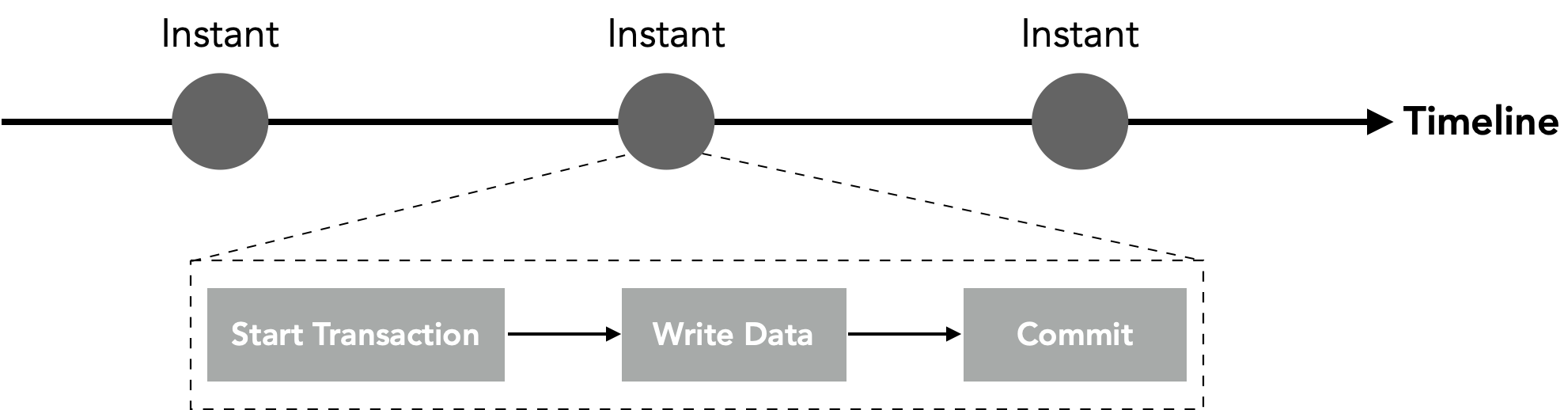

Hudi maintains a timeline of the actions performed on a table. Each instant on the timeline records an action initiated on the table at a specific time, together with the state of that action. An instant can be understood as a data version. Actions include Commit, Rollback, and Clean, and Hudi guarantees their atomicity, so an instant in Hudi is similar to a transaction and a version in a database. In the figure, the database-style steps Start Transaction, Write Data, and Commit express how an instant is executed. The actions have the following meanings:

• Commit: Atomically writes records to a dataset.

• Rollback: Rolls back when the commit fails, which deletes dirty files generated during writing.

• Clean: Deletes old versions and files that are no longer needed in the dataset.

An instant can be in one of three states:

• Requested: The operation has been scheduled but not executed. It can be understood as a Start Transaction.

• Inflight: The operation is in progress, which can be understood as Write Data.

• Completed: The operation is completed, which can be interpreted as Commit.
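The lifecycle above is a simple forward-only state machine. The Python sketch below is a toy model of it; the `State` enum, `NEXT` table, and `Instant` class are hypothetical and are not Hudi's own classes.

```python
from enum import Enum


class State(Enum):
    REQUESTED = "requested"  # scheduled, not executed ("Start Transaction")
    INFLIGHT = "inflight"    # being executed ("Write Data")
    COMPLETED = "completed"  # finished ("Commit")


# The only forward transitions an instant can make.
NEXT = {State.REQUESTED: State.INFLIGHT, State.INFLIGHT: State.COMPLETED}


class Instant:
    def __init__(self, time: str, action: str):
        self.time = time      # instant time, e.g. "20221017160500"
        self.action = action  # "commit", "rollback", or "clean"
        self.state = State.REQUESTED

    def advance(self) -> None:
        if self.state not in NEXT:
            raise ValueError(f"instant {self.time} is already completed")
        self.state = NEXT[self.state]


instant = Instant("20221017160500", "commit")
instant.advance()  # requested -> inflight
instant.advance()  # inflight -> completed
print(instant.state)  # State.COMPLETED
```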

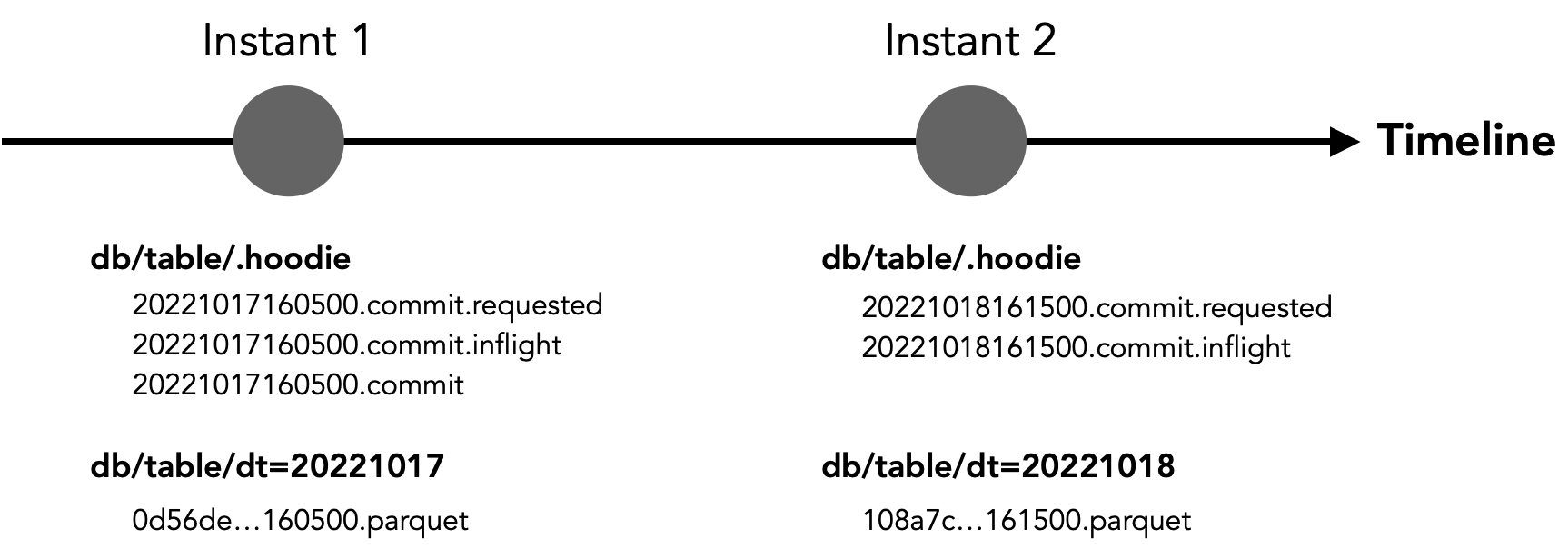

The time, action, and state of an instant are all recorded in metadata files. The following figure shows two successive instants on the timeline. For Instant 1, the metadata file in the .hoodie directory shows that the start time is 2022-10-17 16:05:00 and the action is Commit, and the 20221017160500.commit file indicates that the commit has completed. The Parquet data files written by this instant appear in the partition directory of the table. Instant 2 occurs on the next day; its action has been executed but not yet committed.
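The file name alone encodes the instant time and action. The following Python sketch parses names like `20221017160500.commit`. It assumes a simplified `<yyyyMMddHHmmss>.<action>[.<state>]` layout in which a name without a state suffix marks a completed instant; the exact suffixes differ by action and Hudi version, so check them against the release in use. `parse_instant_file` and `InstantFile` are hypothetical names.

```python
from datetime import datetime
from typing import NamedTuple


class InstantFile(NamedTuple):
    time: datetime
    action: str
    state: str


def parse_instant_file(name: str) -> InstantFile:
    """Parse a timeline file name such as "20221017160500.commit".

    Assumed layout: <yyyyMMddHHmmss>.<action>[.<state>]; a name with no
    state suffix is treated as a completed instant.
    """
    stamp, _, rest = name.partition(".")
    action, _, state = rest.partition(".")
    return InstantFile(
        time=datetime.strptime(stamp, "%Y%m%d%H%M%S"),
        action=action,
        state=state or "completed",
    )


info = parse_instant_file("20221017160500.commit")
print(info.time, info.action, info.state)  # 2022-10-17 16:05:00 commit completed
```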

Commit Process of Hudi

The process is shown in the following figure. Hudi has two types of roles: the coordinator, which initiates an instant and completes the commit, and the workers, which write the data.

The following figure shows how Hudi works with Flink to write and commit data.

The actual commit process can be simplified as described above. In the figure, steps 1 to 3 represent Flink's checkpointing logic. If an exception occurs during these steps, the checkpoint fails, and the job restarts and resumes from the previous checkpoint. This is equivalent to a failure in the precommit phase of a two-phase commit, which rolls back the transaction. If an exception occurs between steps 3 and 4, Flink and Hudi become inconsistent: Flink considers the checkpoint complete, but Hudi has not committed it. If this case is not handled, data is lost, because the SLS offsets moved forward when Flink completed the checkpoint while the corresponding data was never committed in Hudi. Fault tolerance therefore focuses on resolving the inconsistency that arises in this window.
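The data-loss window can be reproduced with a toy model. This Python sketch assumes a simplified mapping of the figure's steps (steps 1 and 2 write an inflight instant, step 3 completes the Flink checkpoint, step 4 commits the Hudi instant) and a restart that does not recommit pending writes. `run_cycle` is a hypothetical name, and the model is not Hudi's Flink writer.

```python
from typing import List, Optional


def run_cycle(records: List[str], crash_after_step: Optional[int] = None) -> List[str]:
    """Simulate one checkpoint cycle and a restart that does NOT recommit.

    Returns the records that end up committed (visible) in the Hudi table.
    Assumed step mapping: steps 1-2 write an inflight instant, step 3
    completes the Flink checkpoint, step 4 commits the Hudi instant.
    """
    offset = 0               # SLS offset stored in the last completed checkpoint
    table: List[str] = []    # committed Hudi records
    pending = list(records)  # steps 1-2: inflight instant, not yet visible
    if crash_after_step is None or crash_after_step >= 3:
        offset = len(records)  # step 3: checkpoint completes, offset moves forward
    if crash_after_step is None or crash_after_step >= 4:
        table.extend(pending)  # step 4: Hudi commit makes the batch visible
        return table
    # Failure before step 4: inflight files are discarded and the job resumes
    # from `offset`, so only records after it are replayed and committed.
    table.extend(records[offset:])
    return table


print(run_cycle(["r1", "r2"], crash_after_step=2))  # ['r1', 'r2']: checkpoint failed, data replayed
print(run_cycle(["r1", "r2"], crash_after_step=3))  # []: offset advanced, batch never committed
```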

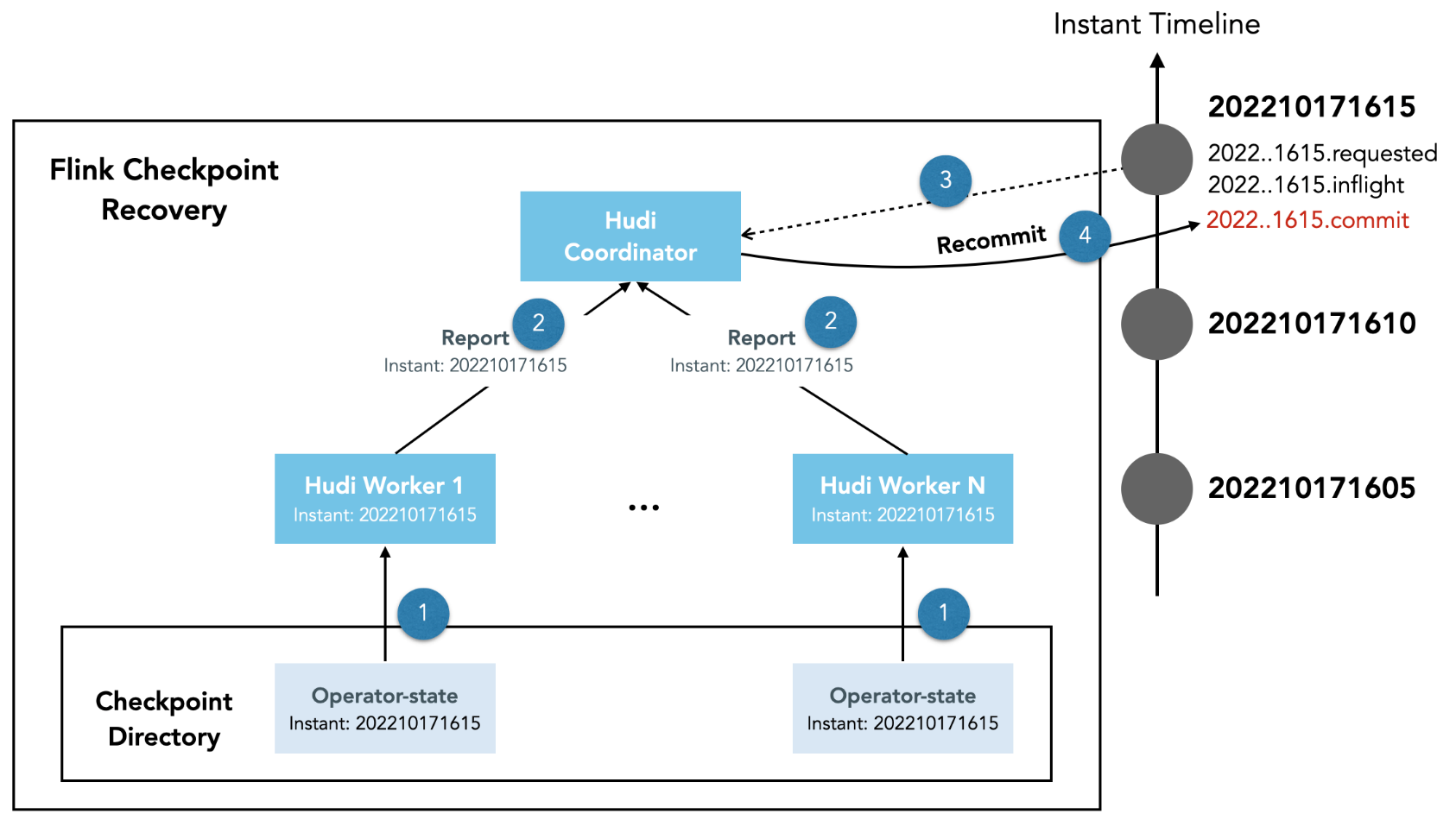

The solution: when the Flink job restarts and recovers from the checkpoint, if the latest instant in Hudi contains uncommitted writes, they must be recommitted. The recommit procedure is shown in the following figure.

When the job restarts, can some Hudi workers be on the latest instant while others are still on an old instant? No, because Flink's checkpointing is equivalent to the precommit phase of the two-phase commit. If the checkpoint completed, Hudi has precommitted and all workers are on the latest instant. If the checkpoint failed, all Hudi workers return to the previous checkpoint on restart, which is the same as returning to the old instant.
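Since all workers share a single pending instant on restart, the recovery decision reduces to one comparison. The sketch below assumes (hypothetically) that each completed Flink checkpoint records the instant whose writes it precommitted; `on_restore` is an illustrative name, not a Hudi or Flink API.

```python
from typing import Optional


def on_restore(checkpointed_instant: Optional[str], pending_instant: Optional[str]) -> str:
    """Decide what to do with Hudi's latest uncommitted instant after a restart.

    Assumption (hypothetical): each completed Flink checkpoint records the
    instant whose writes it precommitted.
    """
    if pending_instant is None:
        return "none"  # Hudi has no uncommitted writes
    if pending_instant == checkpointed_instant:
        # Failure between steps 3 and 4: the checkpoint completed, so the
        # writes are precommitted; finish the commit instead of losing them.
        return "recommit"
    # Failure during steps 1-3: the checkpoint never completed, so discard the
    # writes; the data is replayed from the checkpointed SLS offsets.
    return "rollback"


print(on_restore("20221018160500", "20221018160500"))  # recommit
```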

In failover handling for lake ingestion, the source persists SLS sequence numbers in the checkpoint so that already processed data is not replayed, which prevents duplication. The sink uses the two-phase commit and recommit mechanisms implemented by Flink and Hudi to ensure that no data is lost. Together, these achieve Exactly-Once consistency. Based on actual measurements, the performance overhead of this mechanism is about 3% to 5%, so it delivers high throughput and real-time lake ingestion at minimal cost while ensuring Exactly-Once consistency. In a massive log ingestion project, the daily throughput reaches 3 GB/s, the peak throughput reaches 5 GB/s, and the tunnel runs stably. In addition, the online and offline integration engine of AnalyticDB for MySQL Data Lakehouse Edition is used to implement real-time data ingestion and integrated online/offline analysis.
