By Yanliu, Hanfeng, and Fengze

Lakehouse, an emerging concept in big data, integrates stream and batch processing and merges data lakes with data warehouses. Alibaba Cloud AnalyticDB for MySQL has developed a next-generation Lakehouse platform using Apache Hudi, facilitating one-click data ingestion from various sources like logs and CDC into data lakes, along with integrated analysis capabilities of both online and offline computing engines. This article mainly explores how AnalyticDB for MySQL uses Apache Hudi to ingest complete and incremental data from multiple CDC tables into data lakes.

Users of data lakes and traditional data warehouses often encounter several challenges:

• Full data warehousing or direct analysis connected to source databases significantly burdens the source databases, highlighting the need for load reduction to avoid failures.

• Data warehousing is usually delayed by T+1 day, while data lake ingestion can be quicker at just T+10 minutes.

• Storing vast amounts of data in transactional databases or traditional data warehouses is costly, necessitating more affordable data archiving solutions.

• Traditional data lakes lack support for updates and tend to have many small files.

• High operational and maintenance costs for self-managed big data platforms drive the demand for productized, cloud-native, end-to-end solutions.

• Storage in common data warehouses is not openly accessible, leading to a need for self-managed, open-source, and controllable storage systems.

• There are various other pain points and needs.

To address these issues, AnalyticDB Pipeline Service uses Apache Hudi for the full and incremental ingestion of multiple CDC tables. It offers efficient full data loading during ingestion and analysis, real-time incremental data writing, ACID transactions, multi-versioning, automated small file merging and optimization, automatic metadata validation and evolution, efficient columnar analysis format, optimized indexing, and storage for very large partition tables, effectively resolving the aforementioned customer pain points.

AnalyticDB for MySQL uses Apache Hudi as the storage foundation for ingesting CDC and logs into data lakes. Hudi was originally developed by Uber to address several pain points in their big data system. These pain points include:

• Limited scalability of HDFS: A large number of small files put significant strain on the NameNode, causing it to become a bottleneck in the HDFS system.

• Faster data processing on HDFS: Uber required real-time data processing and was no longer satisfied with a T+1 data delay.

• Support for updates and deletions: Uber's data was partitioned by day, and they needed efficient ways to update and delete old data to improve import efficiency.

• Faster ETL and data modeling: Uber wanted downstream data processing tasks to only read relevant incremental data instead of the full dataset from the data lake.

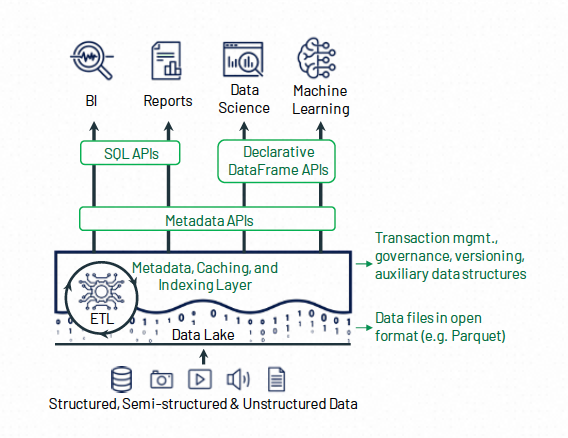

To address these challenges, Uber developed Hudi (Hadoop Upserts Deletes and Incrementals) and contributed it to the Apache Software Foundation. As the name suggests, Hudi's core capabilities initially focused on efficient updates, deletions, and incremental reading APIs. Hudi, Iceberg, and Delta Lake share similar overall functions and architectures, consisting of three main components:

In terms of data objects, Lakehouse typically employs open-source columnar storage formats like Parquet and ORC, with little difference in this respect. For auxiliary data, Hudi offers efficient write indexes, such as Bloom filters and bucket indexes, making it better suited for scenarios with extensive CDC data updates.

Before constructing the data lake ingestion for multiple CDC tables using Hudi, the Alibaba Cloud AnalyticDB for MySQL team researched various industry implementations for reference. Here's a brief overview of some industry solutions:

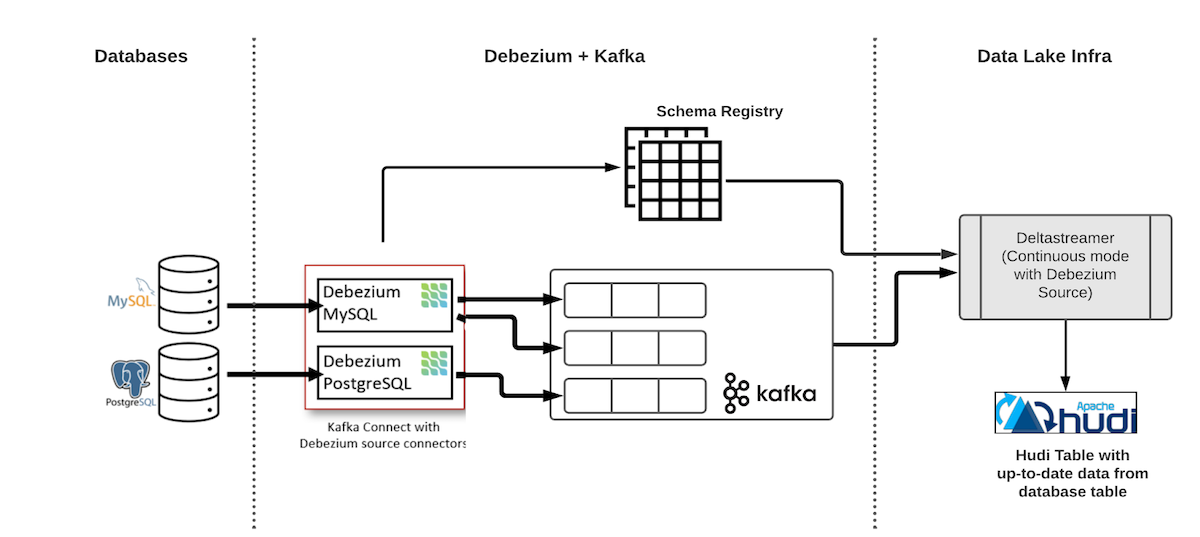

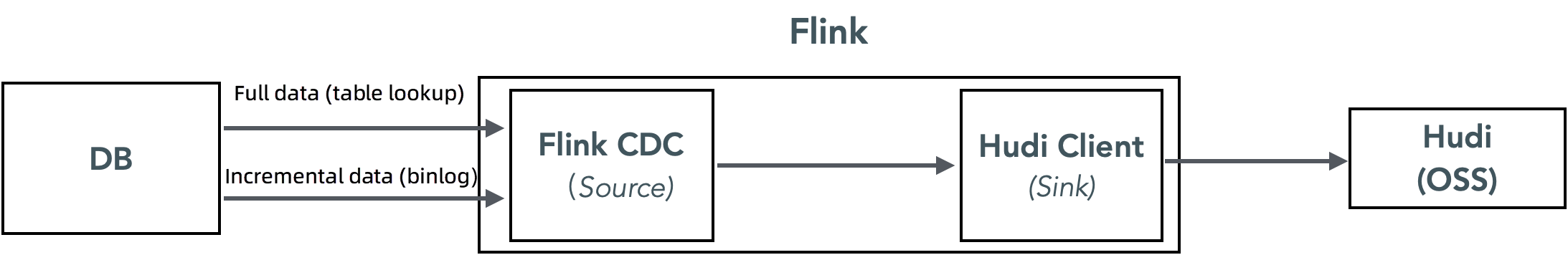

The following figure shows the architecture of end-to-end ingestion of CDC data to data lakes by using Hudi.

The first component in the diagram is the Debezium deployment, comprising the Kafka cluster, the Schema Registry (Confluent or Apicurio), and the Debezium connector. It continuously reads binary log data from the database and writes it into Kafka.

Downstream in the diagram is the Hudi consumer. In this instance, Hudi's DeltaStreamer tool is utilized to consume data from Kafka and write data into the Hudi data lake. In practice, for similar single-table CDC ingestion scenarios, one could replace the binary log source from Debezium + Kafka with Flink CDC + Kafka or choose Spark or Flink as the computing engine according to business needs.
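To make the consumer side of this pipeline concrete, here is a minimal sketch of how Debezium-style change events map to upserts and deletes on a keyed table. It is not DeltaStreamer code: the in-memory dictionary stands in for a Hudi table, and the `apply_change_event` helper and the `id` key field are assumptions made for illustration. The `op`, `before`, and `after` fields follow the Debezium change event envelope.

```python
from typing import Any, Dict

Row = Dict[str, Any]


def apply_change_event(table: Dict[Any, Row], event: Dict[str, Any],
                       key_field: str = "id") -> None:
    """Apply one Debezium-style change event to an in-memory keyed table."""
    op = event["op"]
    if op in ("c", "r", "u"):
        # create, snapshot read, and update all become an upsert on the key
        row = event["after"]
        table[row[key_field]] = row
    elif op == "d":
        # delete removes the row identified by the old image
        table.pop(event["before"][key_field], None)
    else:
        raise ValueError(f"unsupported op: {op!r}")


events = [
    {"op": "r", "before": None, "after": {"id": 1, "name": "a"}},
    {"op": "c", "before": None, "after": {"id": 2, "name": "b"}},
    {"op": "u", "before": {"id": 1, "name": "a"}, "after": {"id": 1, "name": "a2"}},
    {"op": "d", "before": {"id": 2, "name": "b"}, "after": None},
]

orders: Dict[Any, Row] = {}  # hypothetical stand-in for one Hudi table
for event in events:
    apply_change_event(orders, event)
```

Because snapshot reads and incremental changes both reduce to the same upsert, a consumer written this way does not need to know which phase an event came from.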

While this method effectively synchronizes CDC data, there is a challenge: every table requires an independent ingestion link to the data lake. This approach poses several issues when synchronizing multiple tables in a database:

Currently, Hudi supports multi-table data lake ingestion through a single link, but this solution is not yet mature enough for production use.

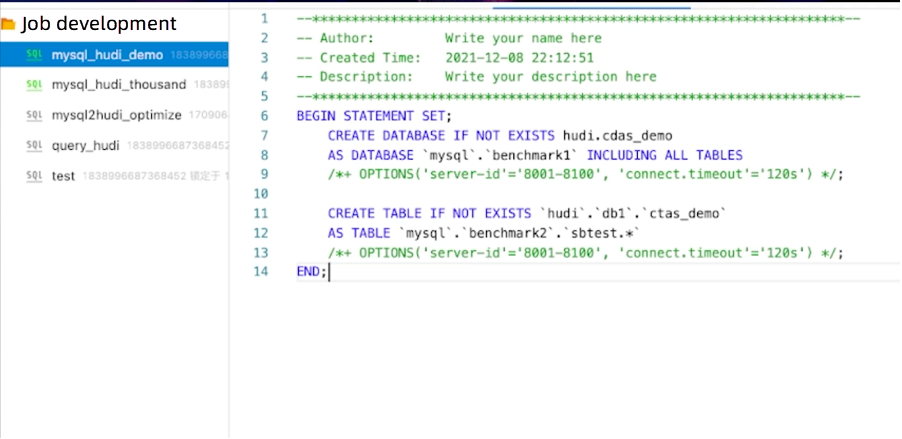

Alibaba Cloud Realtime Compute for Apache Flink (Flink VVP) is a fully managed serverless service built on Apache Flink. It is readily available and supports various billing methods. It provides a comprehensive platform for development, operation, maintenance, and management, offering powerful capabilities for the entire project lifecycle, including draft development, data debugging, operation and monitoring, Autopilot, and intelligent diagnostics.

Alibaba Cloud Flink supports the data lake ingestion of multiple tables (binlog > Flink CDC > downstream consumer). You can consume the binary logs of multiple tables in a Flink task and write them to the downstream consumer.

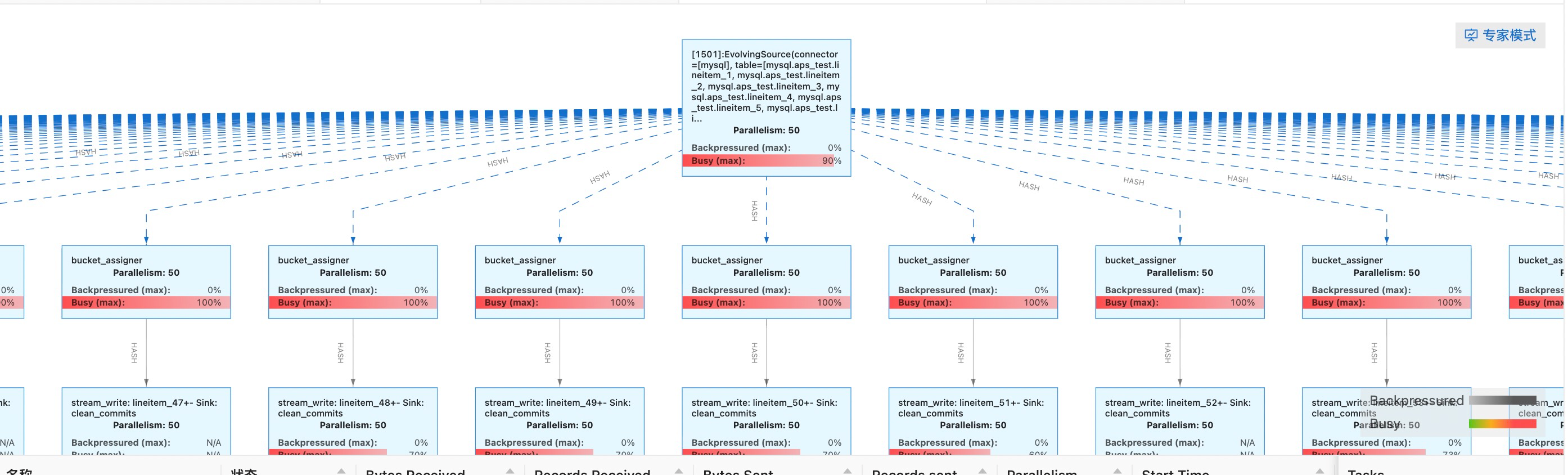

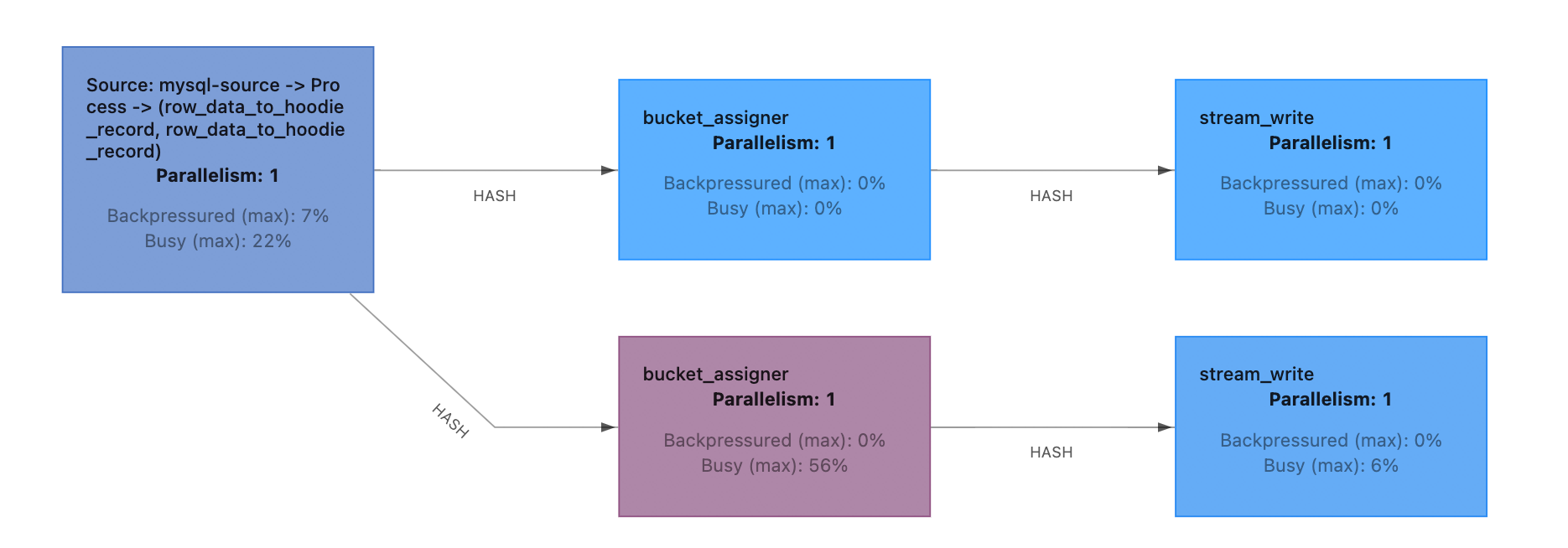

The following figure shows the topology after the task starts. A binary log source operator distributes data to all downstream Hudi sink operators.

You can use Flink VVP to implement the data lake ingestion of multiple CDC tables. However, this solution has the following problems:

After comprehensive consideration, we decided to use a solution similar to the data lake ingestion of multiple CDC tables based on Flink VVP. We provide a service-oriented feature of full and incremental ingestion of multiple CDC tables on AnalyticDB for MySQL.

The main design objectives for the data lake ingestion of multiple CDC tables in AnalyticDB for MySQL are as follows:

• Support one-click ingestion of data from multiple tables to Hudi, reducing consumer management costs.

• Provide a service-oriented management interface where users can start, stop, and edit data lake ingestion tasks, and support features such as unified prefixes for database and table names and primary key mapping.

• Minimize costs and reduce the components required for data lake ingestion.

Based on these design objectives, we initially chose the technical solution of using Flink CDC as the binary log and full data source, directly writing to Hudi without any intermediate cache.

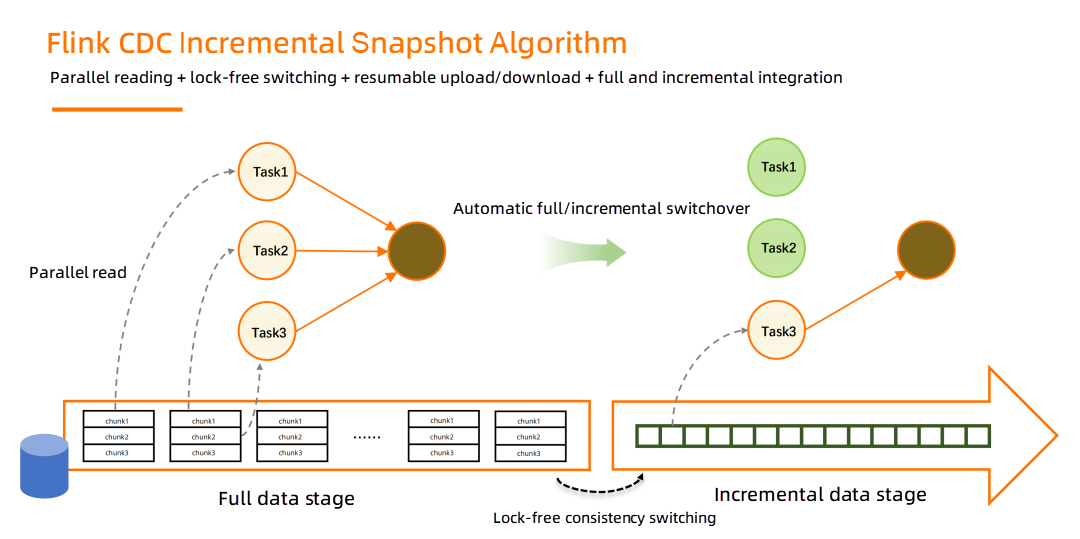

Flink CDC is a source connector for Apache Flink, capable of reading both snapshot and incremental data from databases like MySQL. With Flink CDC 2.0, enhancements such as full lock-free reading, concurrent full data reading, and resumable checkpointing have been implemented to better achieve unified stream and batch processing.

By using Flink CDC, there's no need to worry about switching between full and incremental data. It allows the use of Hudi's universal upsert interface for data consumption, with Flink CDC handling the switchover and offset management for multiple tables, thereby reducing task management overhead. Since Hudi does not natively support multi-table data consumption, it is necessary to develop code to write data from Flink CDC to multiple downstream Hudi tables.
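The routing code mentioned above can be pictured roughly as follows. This is a simplified sketch, not the AnalyticDB for MySQL implementation: the `MultiTableRouter` class, the record layout (`database`, `table`, `row`), and the `ods_` prefix are all hypothetical, and the per-table lists stand in for per-table Hudi writers.

```python
from collections import defaultdict
from typing import Any, Dict, List, Tuple


class MultiTableRouter:
    """Groups change records by source table and maps them to target table names."""

    def __init__(self, table_prefix: str = "") -> None:
        self.table_prefix = table_prefix
        # One buffer per target table; a real job would hold one writer per table.
        self.buffers: Dict[Tuple[str, str], List[Dict[str, Any]]] = defaultdict(list)

    def target_table(self, database: str, table: str) -> Tuple[str, str]:
        # Apply the unified table-name prefix configured for the ingestion task.
        return database, f"{self.table_prefix}{table}"

    def route(self, record: Dict[str, Any]) -> Tuple[str, str]:
        target = self.target_table(record["database"], record["table"])
        self.buffers[target].append(record["row"])
        return target


router = MultiTableRouter(table_prefix="ods_")
records = [
    {"database": "shop", "table": "orders", "row": {"id": 1}},
    {"database": "shop", "table": "users", "row": {"id": 7}},
    {"database": "shop", "table": "orders", "row": {"id": 2}},
]
for record in records:
    router.route(record)
```

A single source can therefore feed any number of target tables, at the price of keeping one writer (and its buffers) alive per table inside the same task.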

The benefits of this implementation are:

• Shorter data ingestion pathways, fewer components to maintain, and lower costs as there's no reliance on independently deployed binary log source components like Kafka or Alibaba Cloud DTS.

• Existing industry solutions that can be referenced, where the Flink CDC + Hudi combination for single-table data lake ingestion is a well-established solution, and Alibaba Cloud Flink VVP also supports writing multiple tables to Hudi.

The following section describes the practical experience of AnalyticDB for MySQL based on this architecture selection.

Currently, the process of writing CDC data to Hudi through Flink is as follows:

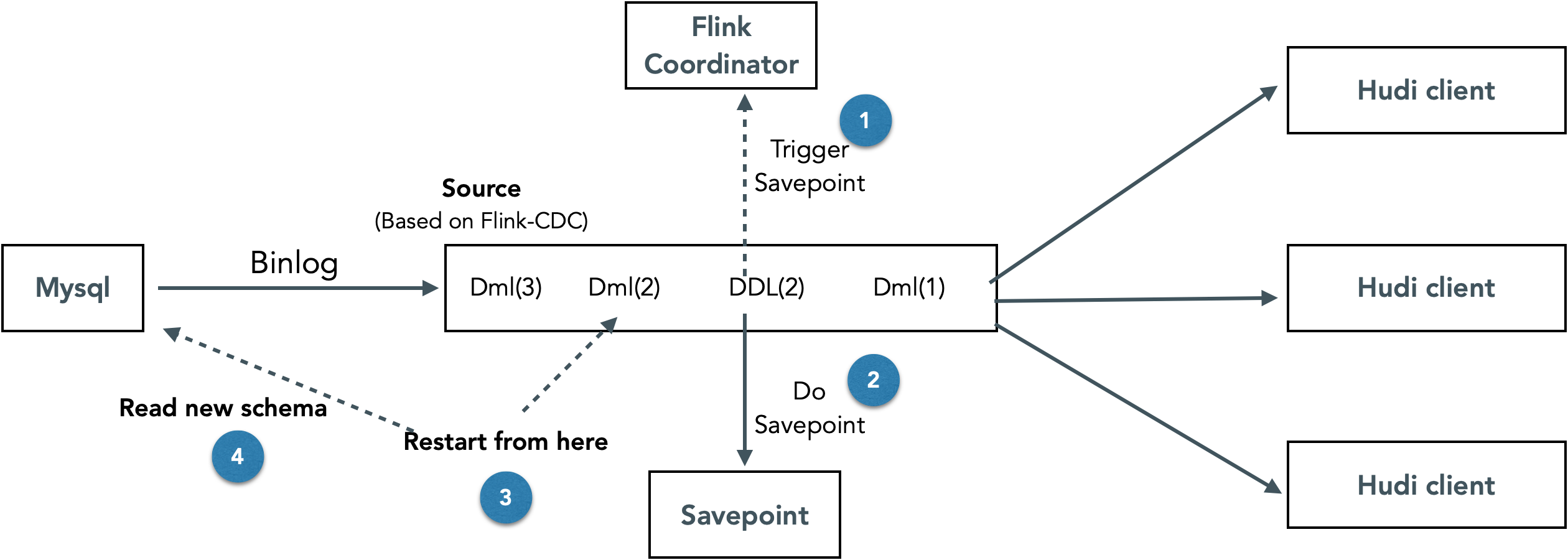

In steps 2 to 4, the write schema is required, and it is determined before the task is deployed in the current implementation. There is no capability to dynamically change schemas during the task runtime.

To solve this problem, we designed and implemented a solution that dynamically and non-intrusively updates the schema in the data lake ingestion link based on Flink + Hudi. The overall idea is to identify DDL binary log events in Flink CDC. When a DDL event occurs, the consumption of incremental data is stopped, and the task is restarted with the new schema after the savepoint is completed.
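The control flow of this stop, savepoint, and restart cycle can be sketched as below. This is only an illustration under simplifying assumptions: the savepoint is modeled as a plain event offset, the only DDL handled is adding a column, and the event layout (`type`, `row`, `add_column`) and the function name are hypothetical.

```python
from typing import Any, Dict, List, Tuple


def run_with_schema_evolution(
    events: List[Dict[str, Any]], initial_schema: List[str]
) -> Tuple[List[tuple], List[str], int]:
    """Consume events, restarting with a new write schema whenever a DDL event appears."""
    schema = list(initial_schema)
    written: List[tuple] = []
    restarts = 0
    offset = 0  # position of the next event to consume
    while offset < len(events):
        event = events[offset]
        offset += 1
        if event["type"] == "ddl":
            # Stop consuming, remember where to resume (the "savepoint"),
            # then restart the writer with the evolved schema.
            savepoint_offset = offset
            schema = schema + [event["add_column"]]
            restarts += 1
            offset = savepoint_offset
            continue
        # DML rows are projected onto the schema that is active right now.
        written.append(tuple(event["row"].get(col) for col in schema))
    return written, schema, restarts


events = [
    {"type": "dml", "row": {"id": 1}},
    {"type": "ddl", "add_column": "email"},
    {"type": "dml", "row": {"id": 2, "email": "a@example.com"}},
]
written, schema, restarts = run_with_schema_evolution(events, ["id"])
```

Every DDL event costs one restart of the whole loop, which is the sketch-level counterpart of restarting the entire task.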

The advantage of this implementation is that the schemas in the link can be dynamically updated without manual intervention. The disadvantage is that the consumption of all databases and tables needs to stop and then restart. Frequent DDL operations have a great impact on link performance.

First, let's briefly introduce the process of full read and full/incremental switchover in Flink CDC 2.0. In the full data stage, Flink CDC divides a single table into multiple chunks based on the degree of parallelism and distributes them to TaskManagers for parallel reading. After the full reading is completed, lock-free switching to incremental data can be implemented while ensuring consistency. This achieves a true integration of streaming and batch processing.
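As a rough illustration of the chunked full read, the sketch below splits a numeric primary key range into chunks and hands them to parallel readers in round-robin order. It assumes an evenly distributed integer key and a fixed chunk size; how Flink CDC actually picks chunk boundaries is not covered here, and both function names are hypothetical.

```python
from typing import List, Tuple

Chunk = Tuple[int, int]  # half-open key range [start, end)


def split_into_chunks(min_key: int, max_key: int, chunk_size: int) -> List[Chunk]:
    """Split the inclusive key range [min_key, max_key] into half-open chunks."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    chunks: List[Chunk] = []
    start = min_key
    while start <= max_key:
        end = min(start + chunk_size, max_key + 1)
        chunks.append((start, end))
        start = end
    return chunks


def assign_round_robin(chunks: List[Chunk], parallelism: int) -> List[List[Chunk]]:
    """Distribute chunks over `parallelism` readers in round-robin order."""
    assignment: List[List[Chunk]] = [[] for _ in range(parallelism)]
    for index, chunk in enumerate(chunks):
        assignment[index % parallelism].append(chunk)
    return assignment


chunks = split_into_chunks(min_key=1, max_key=10, chunk_size=4)
readers = assign_round_robin(chunks, parallelism=2)
```

A table with fewer chunks than readers leaves some readers idle, which is one way to picture the resource waste discussed for small tables.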

During the import process, we encountered two problems:

1. The data written in the full data stage is in log files, but for faster querying, it needs to be compacted into Parquet files, which leads to write amplification.

Since Hudi is not aware of the full and incremental switchover, we must use Hudi's upsert interface in the full data stage to deduplicate data. However, the upsert operation on Hudi bucket indexes generates log files, and compaction is required to obtain Parquet files, resulting in write amplification. Moreover, if compaction is performed multiple times during the full import, the write amplification becomes more severe.

Can we sacrifice read performance and write only log files? No. A growing number of log files not only degrades read performance but also slows down OSS file listing, which in turn slows down writing, because both the log files and the base files in the current file slice are listed during each write.

Solution: Increase the checkpoint interval or use different compaction policies in the full data stage and incremental data stage (or perform no compaction in the full data stage).
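To see why repeated compaction during the full import hurts, consider a toy model (an assumption for illustration, not a measurement): data arrives in equal-size batches, each batch is written once as log data, and every compaction rewrites all data ingested so far into base files. The function name and parameters below are hypothetical.

```python
def write_amplification(num_batches: int, compact_every: int) -> float:
    """Bytes written divided by bytes ingested, with each batch counted as 1 unit."""
    if num_batches <= 0 or compact_every <= 0:
        raise ValueError("num_batches and compact_every must be positive")
    written = 0
    for batch in range(1, num_batches + 1):
        written += 1  # the batch itself, written as log data
        if batch % compact_every == 0:
            written += batch  # compaction rewrites everything ingested so far
    return written / num_batches


once_at_end = write_amplification(num_batches=10, compact_every=10)
every_second = write_amplification(num_batches=10, compact_every=2)
every_batch = write_amplification(num_batches=10, compact_every=1)
```

Under these assumptions, compacting once at the end writes the data twice, while compacting after every batch writes it 6.5 times, which is why deferring or disabling compaction in the full data stage pays off.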

2. In Flink CDC, fully imported data is parallel within tables but serial between tables, and the maximum concurrency for writing to Hudi is determined by the number of buckets. Sometimes, the available concurrency resources in the cluster cannot be fully utilized.

In Flink CDC, when importing a single table, if the number of concurrent reads and writes is less than the cluster's concurrency, resources may be wasted. If the cluster has a large number of available resources, you may need to increase the number of Hudi buckets to increase the concurrent writing. However, when importing multiple small tables in series, concurrency is not fully utilized, resulting in resource waste. Supporting concurrent import of small tables would improve the performance of the full import.

Solution: Increase the number of Hudi buckets to improve import performance.
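The relationship between bucket count and write concurrency can be sketched as follows. This assumes a simple hash-modulo bucket assignment and that each bucket is written by one task at a time; CRC32 is a stand-in hash chosen for the example, not the hash function Hudi uses, and both helper names are hypothetical.

```python
import zlib


def bucket_id(record_key: str, num_buckets: int) -> int:
    """Map a record key to a bucket with a stable hash (CRC32 as a stand-in)."""
    return zlib.crc32(record_key.encode("utf-8")) % num_buckets


def effective_write_parallelism(write_tasks: int, num_buckets: int) -> int:
    """Tasks beyond the number of buckets have nothing to write."""
    return min(write_tasks, num_buckets)


few_buckets = effective_write_parallelism(write_tasks=16, num_buckets=4)
many_buckets = effective_write_parallelism(write_tasks=16, num_buckets=32)
touched = {bucket_id(f"order-{i}", 4) for i in range(1000)}
```

With 4 buckets, 12 of the 16 write tasks sit idle; with 32 buckets, all 16 tasks can be kept busy.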

1) Checkpoint backpressure tuning

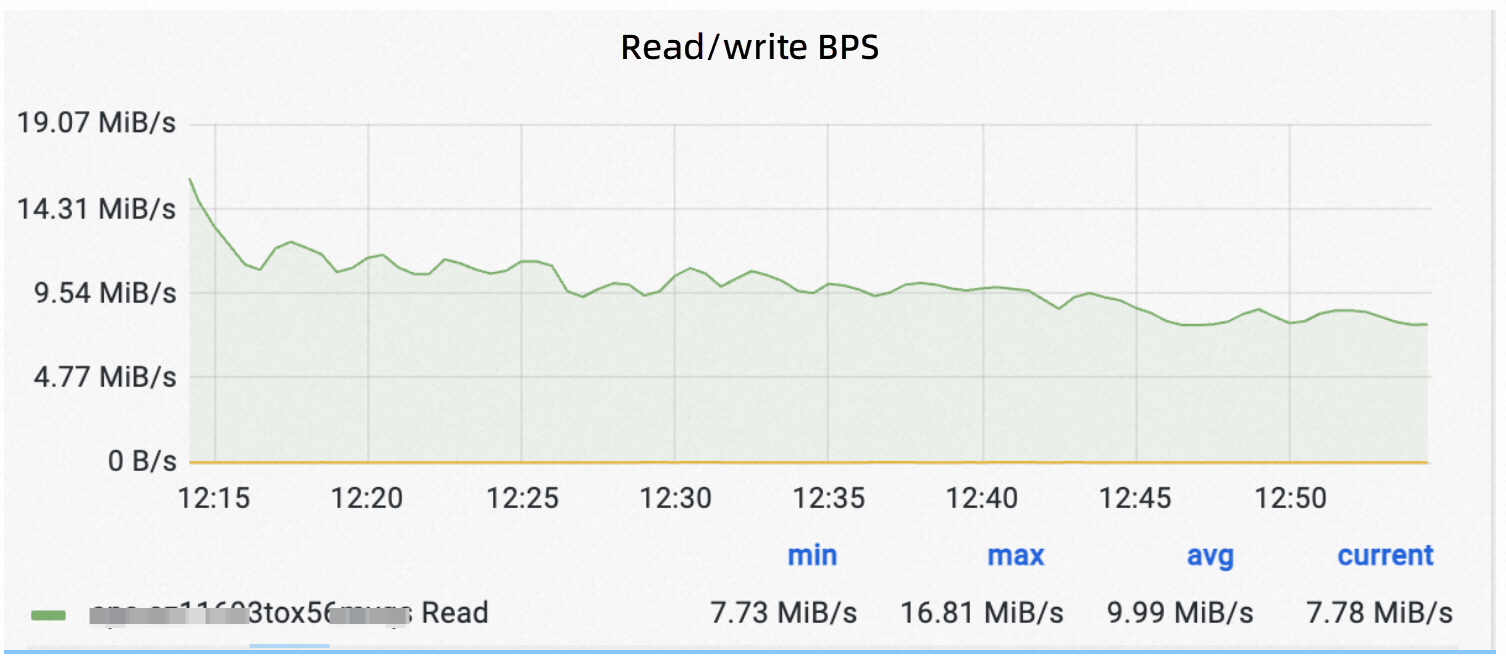

During full and incremental import, we found that Hudi checkpoints in the link caused backpressure, which led to jitter in write throughput:

As shown, the write traffic fluctuated greatly.

We checked the write link in detail and found that the backpressure was mainly caused by data flushing during the Hudi checkpoint. When traffic is relatively heavy, about 3 GB of data might need to be flushed at a single checkpoint, causing a write pause.

The fix is to reduce the buffer size of the Hudi stream write (write task max size), so that data is flushed in smaller portions throughout the checkpoint interval instead of all at once at the checkpoint.

As shown in the preceding figure, after the buffer size was adjusted, the change in write traffic caused by backpressure resulting from checkpoints was well mitigated.
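The effect of the buffer size on the checkpoint-time flush can be reproduced with a small simulation. It is a simplified model, not Hudi's buffering logic: the writer flushes early whenever the buffer reaches `buffer_limit_mb` (a stand-in for the write task max size) and flushes whatever remains at each checkpoint. The function name, parameters, and numbers are assumptions for illustration.

```python
from typing import List


def checkpoint_flush_sizes(batch_mb: int, batches_per_checkpoint: int,
                           checkpoints: int, buffer_limit_mb: int) -> List[int]:
    """Return the amount of data (MB) that must be flushed at each checkpoint."""
    buffered = 0
    flushed_at_checkpoint: List[int] = []
    for _ in range(checkpoints):
        for _ in range(batches_per_checkpoint):
            buffered += batch_mb
            if buffered >= buffer_limit_mb:
                buffered = 0  # early flush between checkpoints
        flushed_at_checkpoint.append(buffered)  # remainder flushed at the barrier
        buffered = 0
    return flushed_at_checkpoint


large_buffer = checkpoint_flush_sizes(100, 30, checkpoints=2, buffer_limit_mb=4096)
small_buffer = checkpoint_flush_sizes(100, 30, checkpoints=2, buffer_limit_mb=700)
```

In this model the large buffer leaves 3000 MB to flush at every checkpoint, while the small buffer leaves only 200 MB, because most of the data has already been flushed between checkpoints.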

To alleviate the backpressure of checkpoints, we also made some other optimizations:

• Reducing the number of Hudi buckets to reduce the number of files that need to be flushed during the checkpoint. This conflicts with increasing the number of buckets in the full data stage, so the bucket count should be chosen after weighing both effects.

• Using an off-link Spark job to run compaction promptly, which avoids the heavy file-listing overhead caused by a large backlog of log files during log writing.

2) Providing appropriate write metrics to help troubleshoot performance issues

While tuning the Flink link, we found that Flink-Hudi write metrics were largely missing. When troubleshooting, we had to analyze performance through cumbersome means such as inspecting on-site logs, dumping memory, and performing CPU profiling. As a result, we developed a set of in-house Flink stream write metrics to help quickly locate performance problems.

The main indicators include:

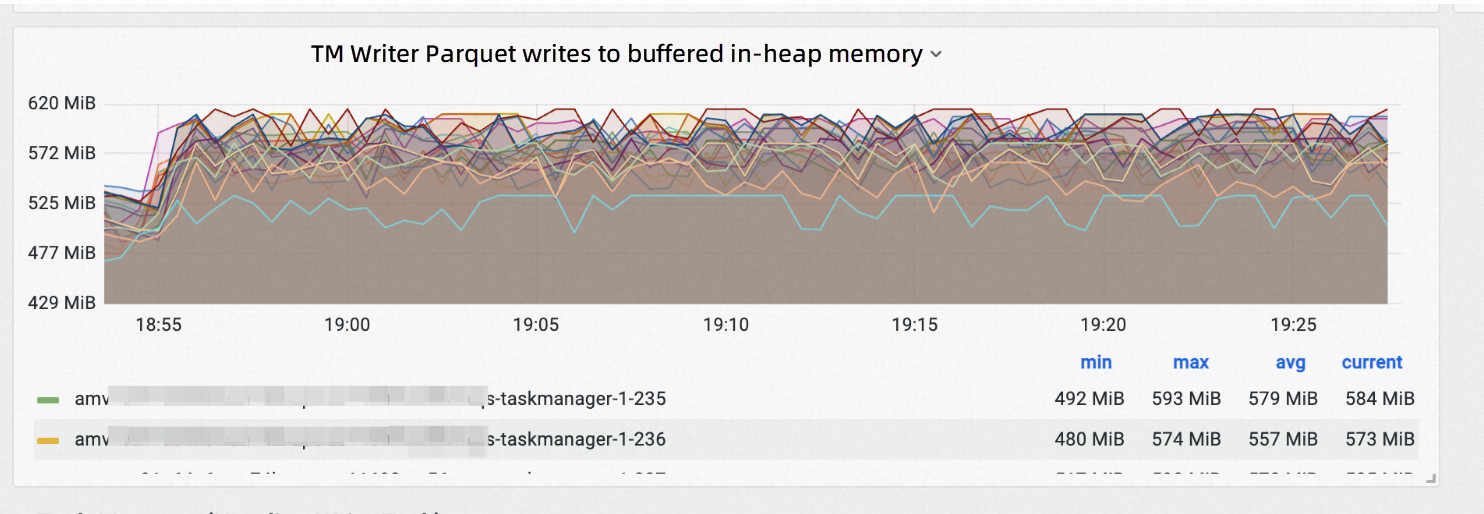

• The size of the buffer occupied by the current stream write operators

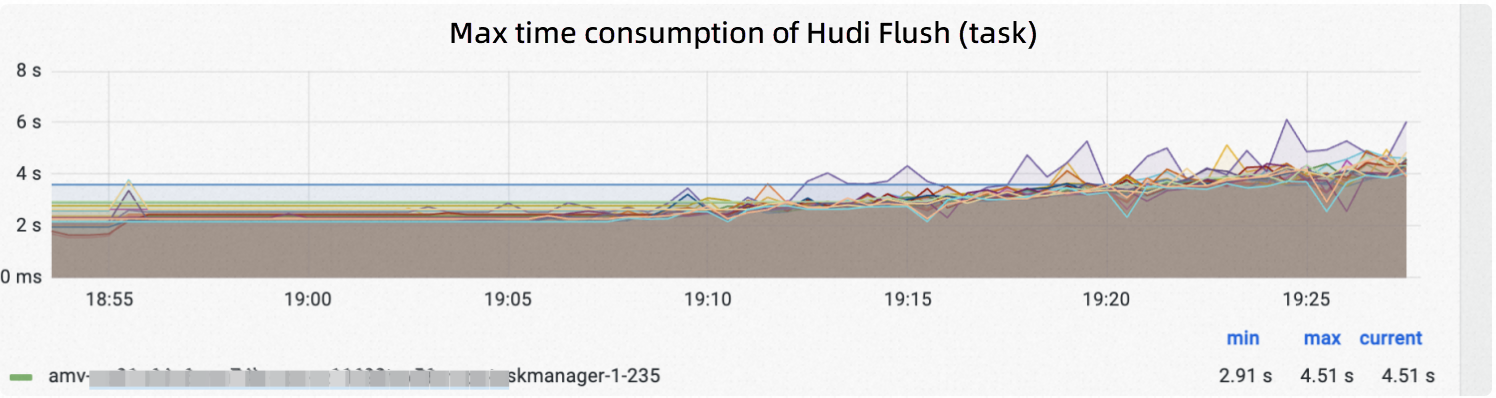

• Time consumption of flush buffer

• Time consumption of requesting OSS to create an object

• Number of active write files

Size of the in-heap memory buffer occupied by stream write or append write:

Time consumption of flushing Parquet/Avro log to disk:

Changes in the indicator values can help us quickly locate the problem. For example, the Hudi flush time in the preceding figure shows an upward trend. From this we could quickly find that log file listing had slowed down because compaction was not keeping up, resulting in a backlog of log files. After compaction resources were increased, the flush time stayed stable.

We are also continuously contributing Flink-Hudi metrics-related code to the community. For details, please refer to HUDI-2141.
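As an illustration of how such indicators can be collected and watched, the sketch below times an operation and flags a metric whose recent average has risen well above its earlier average. It is not the metrics code contributed to Hudi: the `WriteMetrics` class, the metric name, and the window and ratio thresholds are all hypothetical.

```python
import time
from contextlib import contextmanager
from statistics import mean
from typing import Dict, Iterator, List


class WriteMetrics:
    """Collects samples per metric and detects a simple upward trend."""

    def __init__(self) -> None:
        self.samples: Dict[str, List[float]] = {}

    def record(self, name: str, value: float) -> None:
        self.samples.setdefault(name, []).append(value)

    @contextmanager
    def timed(self, name: str) -> Iterator[None]:
        # Record the elapsed wall-clock time of the wrapped block in milliseconds.
        start = time.perf_counter()
        try:
            yield
        finally:
            self.record(name, (time.perf_counter() - start) * 1000.0)

    def is_trending_up(self, name: str, window: int = 5, ratio: float = 1.5) -> bool:
        # Compare the latest window of samples with the window before it.
        values = self.samples.get(name, [])
        if len(values) < 2 * window:
            return False
        older = mean(values[-2 * window:-window])
        recent = mean(values[-window:])
        return older > 0 and recent > older * ratio


metrics = WriteMetrics()
for cost_ms in [100, 100, 100, 100, 100, 200, 200, 200, 200, 200]:
    metrics.record("flush_cost_ms", cost_ms)
flush_is_slowing = metrics.is_trending_up("flush_cost_ms")

with metrics.timed("create_object_cost_ms"):
    pass  # the call being measured would go here
```

A check like `is_trending_up` turns a slowly climbing flush time into an explicit signal, instead of something that is only noticed when reading a dashboard.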

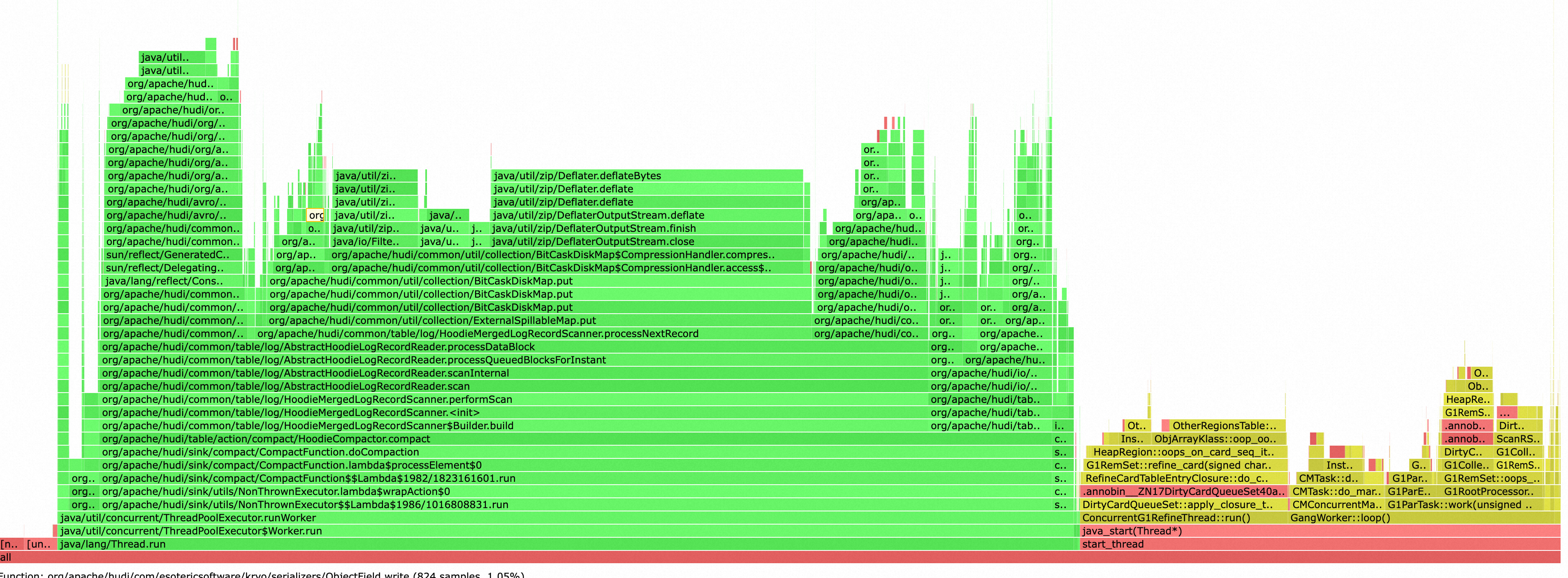

3) Compaction tuning

To simplify the configuration, we initially adopted the in-link compaction solution, but we soon found that compaction seriously preempted write resources and made the load unstable, which greatly affected the performance and stability of the write link. As shown in the following figure, compaction and GC occupied almost all CPU resources of the TaskManager.

Therefore, we now deploy TableService separately from the write link and use Spark offline tasks to run TableService, so that TableService and the write link do not affect each other. In addition, TableService consumes serverless resources and is charged on demand. The write link no longer runs compaction, so it can stably occupy a relatively small amount of resources. Overall, resource usage and performance stability have improved.

To facilitate the management of TableService of multiple tables in databases, we have developed a utility that can run multiple TableServices of multiple tables in a single Spark task, which has been contributed to the community. For more information, see PR.
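The idea of running table services for many tables in one job can be sketched as below. This is not the utility contributed to the community: `run_table_services` and the `service` callable are hypothetical, a thread pool stands in for the Spark job, and the example compaction function only simulates success and failure.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, Iterable


def run_table_services(tables: Iterable[str], service: Callable[[str], None],
                       max_workers: int = 4) -> Dict[str, str]:
    """Run `service` for every table and report a status per table."""
    results: Dict[str, str] = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {table: pool.submit(service, table) for table in tables}
        for table, future in futures.items():
            try:
                future.result()
                results[table] = "ok"
            except Exception as exc:
                # A failure on one table must not block the others.
                results[table] = f"failed: {exc}"
    return results


def fake_compaction(table: str) -> None:
    # Hypothetical stand-in for compacting one table.
    if table == "db.broken":
        raise RuntimeError("no pending compaction plan")


statuses = run_table_services(["db.orders", "db.users", "db.broken"], fake_compaction)
```

Collecting a status per table keeps a single problematic table from hiding the progress of the rest, which matters when one job is responsible for a whole database.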

After several rounds of development and tuning, writing multiple Flink CDC tables into Hudi became basically usable. We believe the most critical stability and performance optimizations were:

• Separating compaction from the write link to improve resource usage for both writing and compaction.

• Developing a set of Flink-Hudi metrics, and combining them with source code and logs to fine-tune the Hoodie stream write.

However, this architecture scheme still has the following problems that cannot be easily solved:

If we continue to implement multi-table CDC ingestion into the lake based on this solution, we can try the following directions:

However, based on internal discussions and verifications, we believe that it is challenging to continue implementing multi-table full and incremental CDC ingestion based on the Flink + Hudi framework. In this scenario, we should consider replacing it with the Spark engine. The main considerations are as follows:

Subsequent practices have confirmed the accuracy of our judgment. After transitioning to the Spark engine, the feature set, extensibility, performance, and stability of full and incremental multi-table CDC ingestion have all seen improvements. In future articles, we will share our experiences with implementing full and incremental multi-table CDC ingestion based on Spark + Hudi. Stay tuned!
