By Xian Wei

You can add virtual nodes to a Kubernetes cluster by manually applying a YAML file. However, this approach is not user-friendly, and nodes added this way cannot be continuously upgraded and managed as components. In this blog post, we discuss how virtual nodes work and how you can use Helm to simplify the deployment and management of ack-virtual-node.

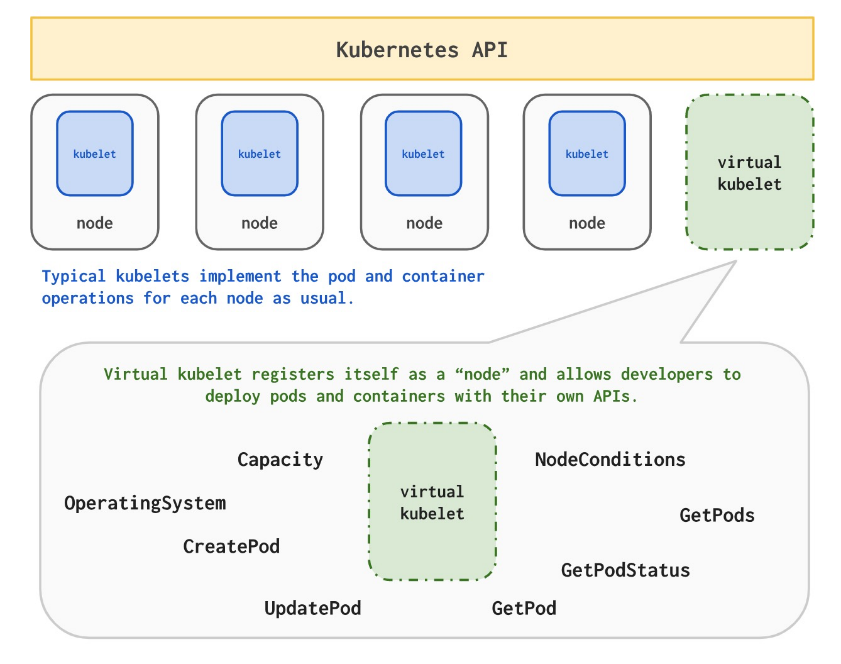

First, let's briefly review how virtual nodes work.

Virtual nodes are based on the community's Virtual Kubelet technology. They connect Kubernetes seamlessly to Elastic Container Instance (ECI), so that Kubernetes clusters can easily obtain a high level of elasticity without being limited by the computing capacity of cluster nodes.

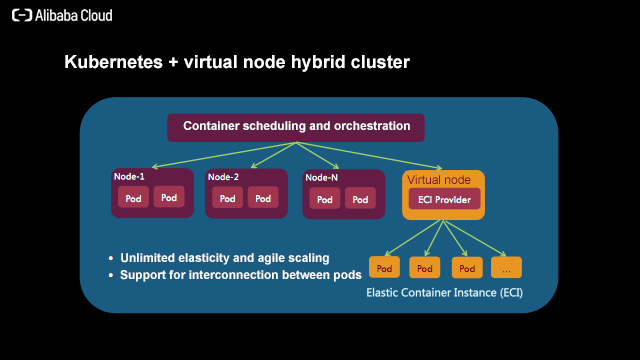

ECI-based virtual nodes not only enhance the elasticity of Kubernetes clusters but also extend their capabilities with features such as GPU container instances, EIP mounting, and large container instances. This lets users manage multiple kinds of computing workloads in one Kubernetes cluster and cover a variety of scenarios.

In a hybrid cluster, pods on real nodes are interconnected with ECI pods on virtual nodes.

Note that ECI pods on virtual nodes can be billed on demand, which differs from how pods on real nodes are billed. For the ECI billing rules, see Pricing overview. ECI pod specifications range from 0.25c to 64c; for details, see Limits.

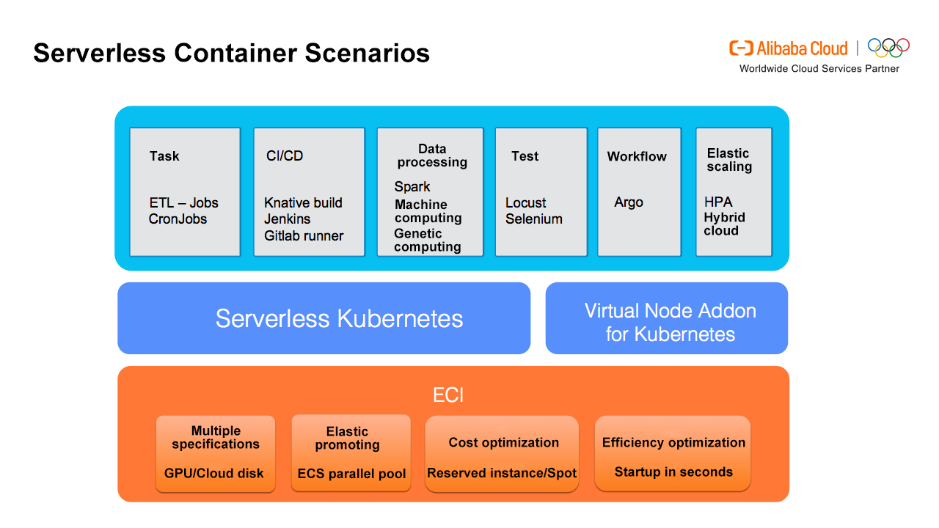

Virtual nodes and Serverless Kubernetes are both built on ECI and both provide serverless containers. They are suitable for a variety of serverless workload scenarios, and can greatly reduce O&M costs, lower users' overall computing costs, and improve computing efficiency.

Now let's look at how you can use Helm to simplify the deployment and management of ack-virtual-node.

To install the ack-virtual-node plug-in, you can follow these steps:

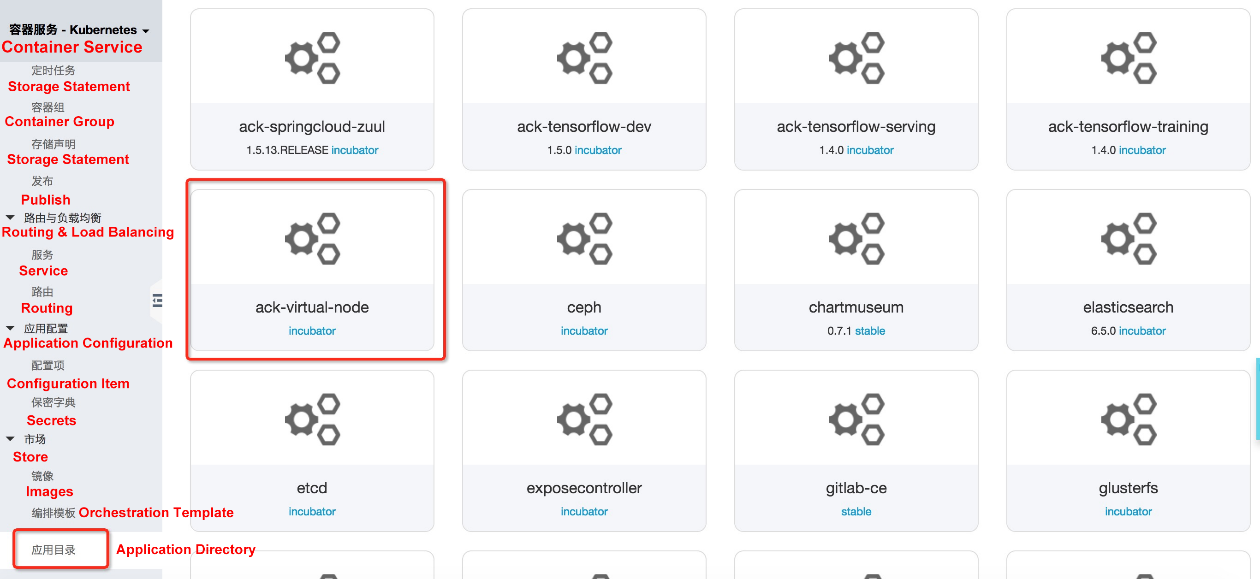

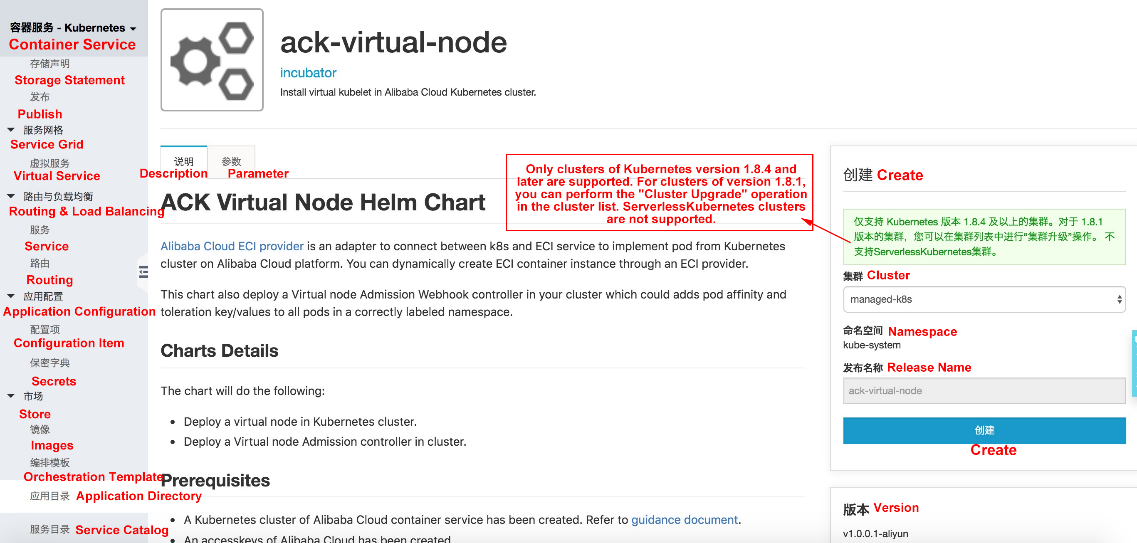

1. Log on to the Container Service console, and create a hosted Kubernetes cluster. Select ack-virtual-node on the Application Directory page.

https://cs.console.aliyun.com/#/k8s/catalog/detail/incubator_ack-virtual-node

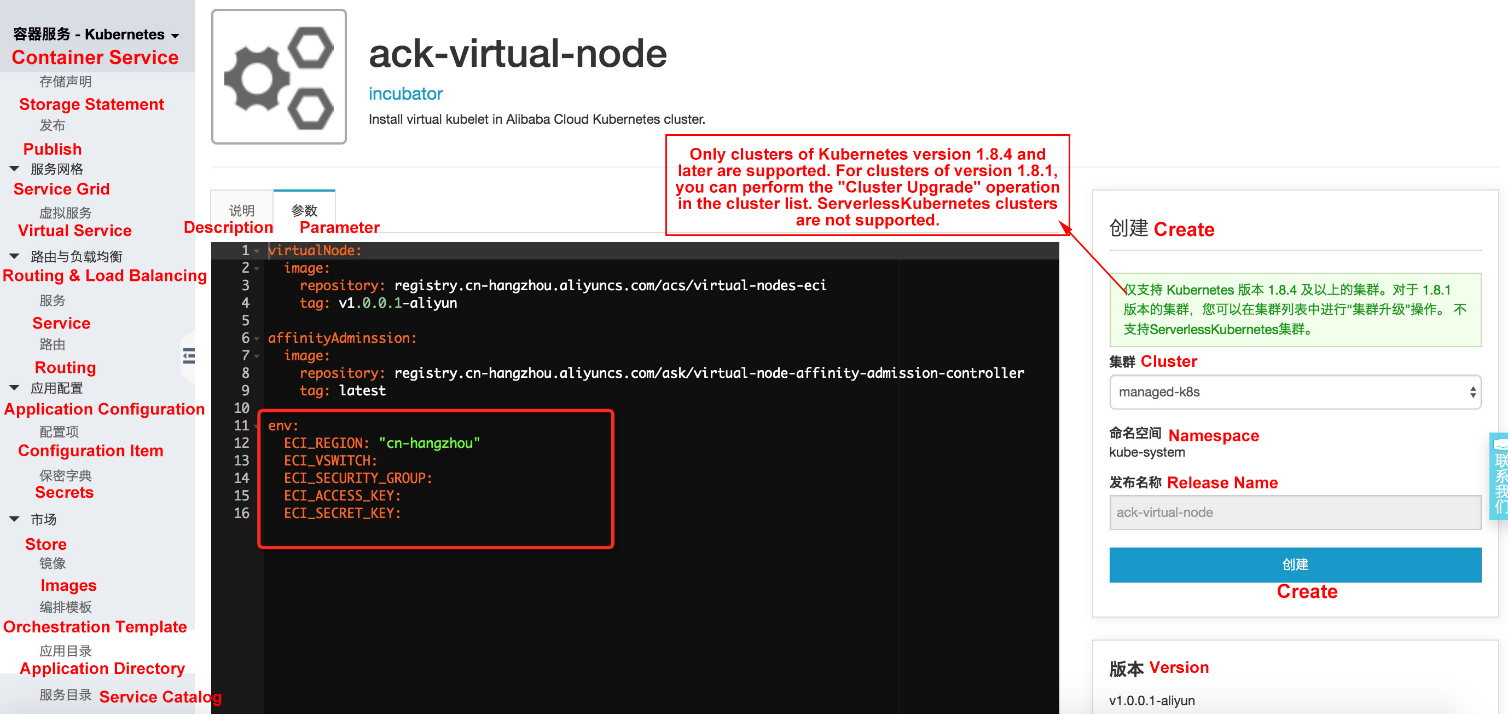

2. Configure the virtual node parameters, including Region, AK information, vswitchId, and securityGroupId. Use the same configuration as the Kubernetes cluster (the network configuration can be viewed on the Cluster Information page).

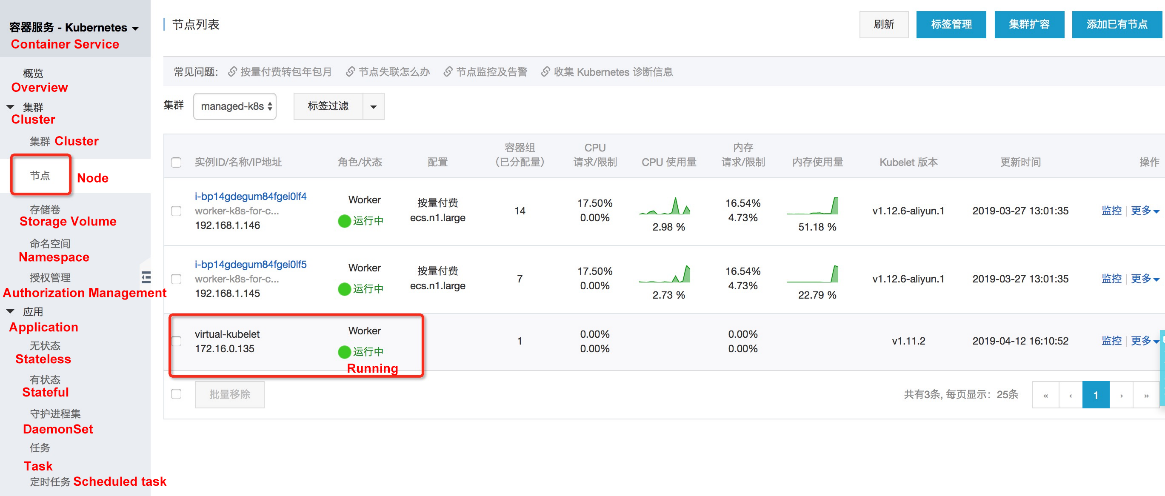

3. After the chart is successfully installed, a node named virtual-kubelet appears on the Node page.

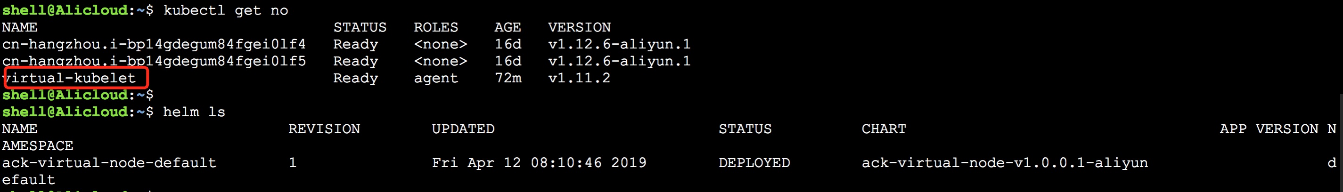

4. Use kubectl to view the node and the Helm deployment status. Later, you can also upgrade and manage ack-virtual-node through Helm.

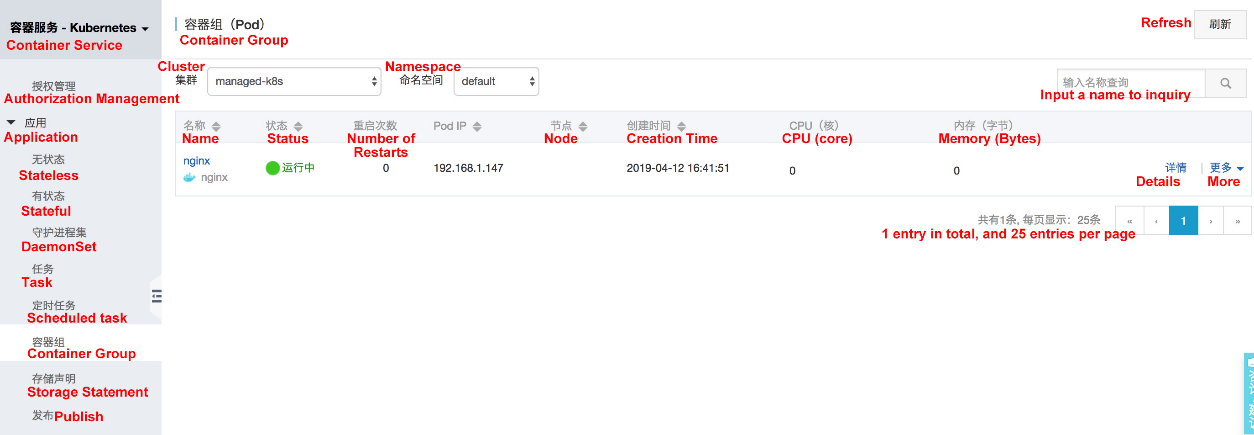
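The parameters from step 2 could be kept in a Helm values file so that later upgrades reuse the same settings. The following is only a minimal sketch: the key names simply mirror the parameter names listed above and are assumptions, not the chart's confirmed schema, so check the chart's own values.yaml before relying on them. All values are placeholders.

```yaml
# Hypothetical values file for ack-virtual-node.
# Key names are illustrative assumptions; verify them against the chart's values.yaml.
region: "<region-id>"                    # same region as the Kubernetes cluster
accessKey: "<access-key-id>"             # AK information
secretKey: "<access-key-secret>"
vswitchId: "<vswitch-id>"                # shown on the Cluster Information page
securityGroupId: "<security-group-id>"   # shown on the Cluster Information page
```

Because such a file would contain AK information, it should be handled like any other secret.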

When a virtual node exists in the cluster, a pod can be scheduled to the virtual node, and Virtual Kubelet (VK) will create a corresponding ECI pod. Currently, an ECI pod can be created in one of three ways:

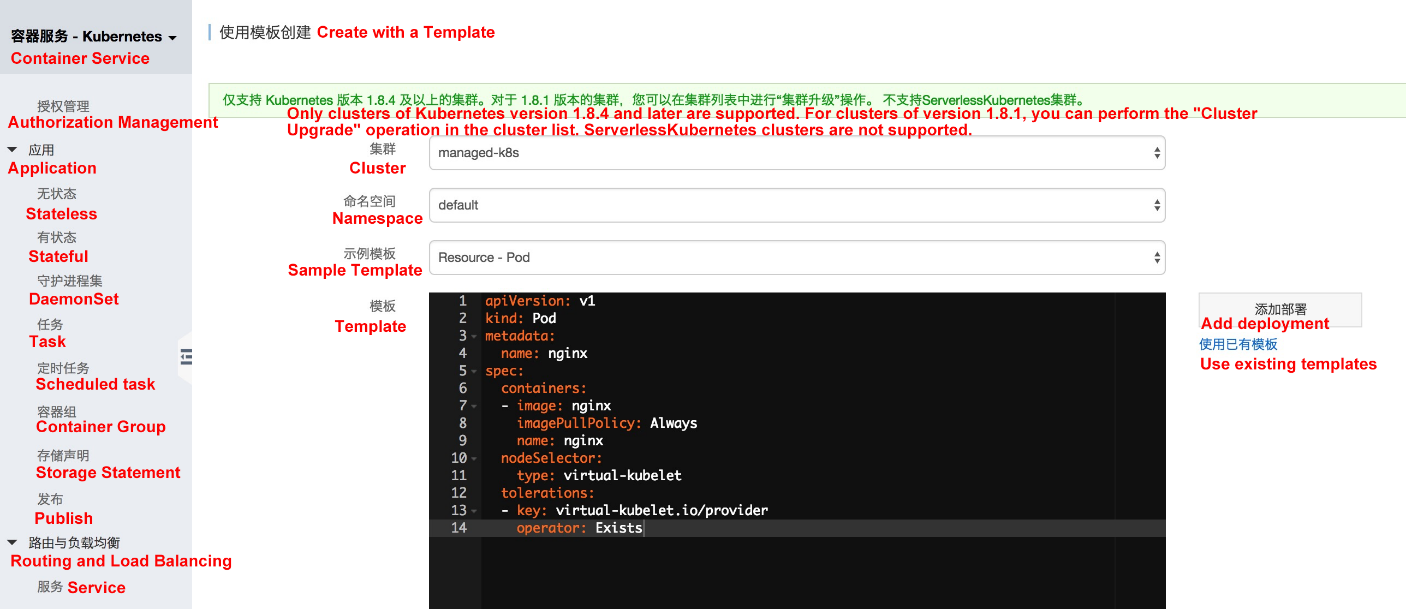

Create the following nginx pod, and set the correct nodeSelector and tolerations to ensure that the pod is scheduled to the virtual node.

    apiVersion: v1
    kind: Pod
    metadata:
      name: nginx
    spec:
      containers:
      - image: nginx
        imagePullPolicy: Always
        name: nginx
      nodeSelector:
        type: virtual-kubelet
      tolerations:
      - key: virtual-kubelet.io/provider
        operator: Exists
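The same nodeSelector and tolerations should also work in the pod template of a workload controller. As a hedged sketch, the Deployment below reuses the scheduling fields from the nginx pod; the Deployment name, the `app` label, and the replica count are hypothetical values chosen for illustration.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-on-vk            # hypothetical name
spec:
  replicas: 2                  # illustrative replica count
  selector:
    matchLabels:
      app: nginx-on-vk         # hypothetical label
  template:
    metadata:
      labels:
        app: nginx-on-vk
    spec:
      containers:
      - name: nginx
        image: nginx
        imagePullPolicy: Always
      # Same scheduling fields as the single nginx pod
      nodeSelector:
        type: virtual-kubelet
      tolerations:
      - key: virtual-kubelet.io/provider
        operator: Exists
```

Each replica is scheduled to the virtual node in the same way as the single pod.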

Alternatively, set nodeName to bind the pod directly to the virtual node:

    apiVersion: v1
    kind: Pod
    metadata:
      name: nginx
    spec:
      containers:
      - image: nginx
        imagePullPolicy: Always
        name: nginx
      nodeName: virtual-kubelet

Finally, you can add the label "virtual-node-affinity-injection=enabled" to a namespace. The admission controller in the system then automatically adds nodeAffinity and tolerations to pods in that namespace, so users do not need to configure tolerations for the pods manually. This greatly simplifies the use of virtual nodes, and the YAML file does not need to be adapted to them.

    kubectl create ns vk
    kubectl label namespace vk virtual-node-affinity-injection=enabled
    kubectl -n vk run nginx --image nginx

In this way, an ingress application can be created on a virtual node, and the YAML file can be run in the specified namespace without any modification.

    # kubectl -n vk apply -f https://raw.githubusercontent.com/AliyunContainerService/serverless-k8s-examples/master/ingress/ingress-cafe-demo.yaml
    deployment "coffee" created
    service "coffee-svc" created
    deployment "tea" created
    service "tea-svc" created
    ingress "cafe-ingress" created

    # kubectl -n vk get pod -o wide
    NAME                      READY   STATUS    RESTARTS   AGE   IP              NODE
    coffee-56668d6f78-7mdvc   1/1     Running   0          2m    192.168.1.170   virtual-kubelet
    coffee-56668d6f78-tpslg   1/1     Running   0          2m    192.168.1.169   virtual-kubelet
    tea-85f8bf86fd-5fl2v      1/1     Running   0          2m    192.168.1.172   virtual-kubelet
    tea-85f8bf86fd-8n9n8      1/1     Running   0          2m    192.168.1.171   virtual-kubelet
    tea-85f8bf86fd-jv7kj      1/1     Running   0          2m    192.168.1.173   virtual-kubelet

    # kubectl -n vk get ing
    NAME           HOSTS              ADDRESS       PORTS   AGE
    cafe-ingress   cafe.example.com   120.55.8.82   80      2m

    # curl -H "Host:cafe.example.com" 120.55.8.82/tea
    Server address: 192.168.1.173:80
    Server name: vk-tea-85f8bf86fd-jv7kj
    Date: 13/May/2019:05:54:32 +0000
    URI: /tea
    Request ID: 84d2afa2d3a74d7af38f94de21d11d37

    # curl -H "Host:cafe.example.com" 120.55.8.82/coffee
    Server address: 192.168.1.169:80
    Server name: vk-coffee-56668d6f78-tpslg
    Date: 13/May/2019:05:54:36 +0000
    URI: /coffee
    Request ID: 280df5e9f29d22d8174540f8dfe77861
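The namespace labeling used for this demo can also be written declaratively, which may be convenient when manifests are kept in version control. The sketch below assumes the same `vk` namespace and pairs it with a plain nginx pod that carries no nodeSelector, nodeName, or tolerations, since those scheduling fields are expected to be injected by the admission controller.

```yaml
# Namespace with the injection label, equivalent to
# "kubectl create ns vk" followed by "kubectl label namespace vk ...".
apiVersion: v1
kind: Namespace
metadata:
  name: vk
  labels:
    virtual-node-affinity-injection: enabled
---
# Unmodified pod: no virtual-node-specific scheduling fields are set here.
apiVersion: v1
kind: Pod
metadata:
  name: nginx
  namespace: vk
spec:
  containers:
  - name: nginx
    image: nginx
```

Both documents can be saved in one file and applied together with `kubectl apply -f`.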
