Etcd is an open source distributed key-value (kv) storage system that was recently accepted as an incubating project by the Cloud Native Computing Foundation (CNCF). Etcd is used in a wide range of scenarios; for example, Kubernetes uses it as the ledger that stores the cluster's metadata.

This article first introduces the background of the optimization, then explains how etcd's internal storage works and how the optimization is implemented. It closes with the measured results.

Alibaba runs very large clusters, which places special demands on the data storage capacity of etcd.

The storage size that etcd previously supported could not meet our requirements. We therefore developed a solution based on an etcd proxy that dumped the data to Tair (another kv storage system, similar to Redis). Although this solved the storage capacity problem, the drawbacks were obvious.

Because the proxy has to move the data around, operation latency is much higher than with native storage. In addition, the extra Tair component makes operation and maintenance costly.

So we asked ourselves: what limits the storage capacity of etcd, and can we optimize it?

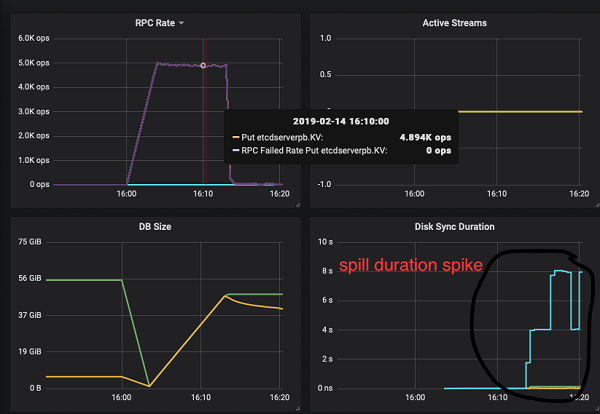

After raising this question, we ran a stress test that kept injecting data into etcd. When the amount of data stored in etcd exceeded 40GB, after a compact (the operation that deletes historical versions of data that are no longer needed), the latency of put operations soared and many put operations timed out. Monitoring showed that the time spent in boltdb's internal spill operation (defined below) increased significantly, from a typical 1ms to 8s. Repeated stress tests gave the same result. All etcd read and write operations became much slower than normal, which is unacceptable.

The etcd monitoring diagram shows that when the amount of etcd data is around 50GB, the spill operation latency increases to 8s.

The etcd storage layer can be thought of as two parts: an in-memory, btree-based index layer and a boltdb-based disk storage layer. Here we focus on the underlying boltdb layer, because it is the target of this optimization.

The boltdb project introduces itself as follows:

Bolt was originally a port of LMDB so it is architecturally similar.

Both use a B+tree, have ACID semantics with fully serializable transactions, and support lock-free MVCC using a single writer and multiple readers.

Bolt is a relatively small code base (<3KLOC) for an embedded, serializable, transactional key/value database so it can be a good starting point for people interested in how databases work.

As this description says, boltdb is short and succinct, and it can be embedded into other software as a database. For example, etcd embeds boltdb as its engine for storing k/v data.

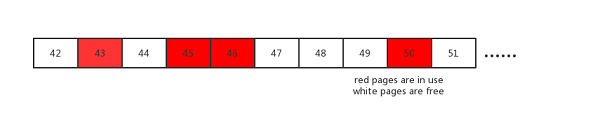

Internally, boltdb uses a B+ tree to store data, and the leaf nodes store the actual key/value pairs. It keeps all data in a single file, maps the file into memory with the mmap syscall for reads, and updates the file with the write syscall. The basic unit of data is a page, whose size defaults to 4K. When pages are deleted, boltdb does not return the freed disk space to the OS directly. Instead, it keeps the pages in a pool of free pages for later reuse. This pool is called the freelist in boltdb. For example:

The red pages 43, 45, 46, and 50 are in use, while pages 42, 44, 47, 48, 49, and 51 are free for later use.

When user data is written to etcd frequently, the internal B+ tree structure is adjusted (for example, by rebalancing or splitting nodes). The spill operation is a key step in which boltdb persists user data to disk, and it occurs after the tree structure has been adjusted. It releases unused pages to the freelist and requests free pages from the freelist to store data.

Through a more in-depth investigation of the spill operation, we found the performance bottleneck: the following code in the spill operation takes the most time.

```go
// arrayAllocate returns the starting page id of a contiguous list of pages of a given size.
// If a contiguous block cannot be found then 0 is returned.
func (f *freelist) arrayAllocate(txid txid, n int) pgid {
	...
	var initial, previd pgid
	for i, id := range f.ids {
		if id <= 1 {
			panic(fmt.Sprintf("invalid page allocation: %d", id))
		}

		// Reset initial page if this is not contiguous.
		if previd == 0 || id-previd != 1 {
			initial = id
		}

		// If we found a contiguous block then remove it and return it.
		if (id-initial)+1 == pgid(n) {
			if (i + 1) == n {
				f.ids = f.ids[i+1:]
			} else {
				copy(f.ids[i-n+1:], f.ids[i+1:])
				f.ids = f.ids[:len(f.ids)-n]
			}
			...
			return initial
		}

		previd = id
	}
	return 0
}
```

From the internal working mechanism described above, we know that boltdb keeps free pages in the freelist for later use. The code above is the freelist function that reassigns those pages. It tries to allocate n consecutive pages and returns the id of the first one. f.ids in the code is a sorted array that records the ids of the free pages. In the previous figure, for example, f.ids=[42,44,47,48,49,51].

This method performs a linear scan whenever n consecutive pages are requested. When the freelist is heavily fragmented, for example when most of its free runs are only 1 or 2 pages long, a request for 4 consecutive pages makes the algorithm scan for a very long time. In addition, after the search it calls copy to shift the remaining elements of the array. When the array has many elements, that is, when a large amount of data is stored, this operation is very slow.

From the above analysis, we know that a linear scan for free pages is naive and very slow at large data sizes. Udi Manber, former chief scientist at Yahoo, once said that the three most important algorithms at Yahoo are hashing, hashing, and hashing!
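To make the cost of the scan concrete, here is a minimal standalone sketch of the same idea, not the boltdb code itself. The function name `allocateLinear`, the plain `[]uint64` free list, and the `scanned` counter are assumptions made for illustration; the sketch also omits the transaction bookkeeping that the original elides.

```go
package main

import "fmt"

// allocateLinear is a simplified, hypothetical version of the linear scan.
// ids must be sorted in ascending order and contain no page id below 2.
// It returns the first page id of a run of n contiguous pages, the free
// list without that run, and the number of entries it inspected.
// It returns 0 as the start if no such run exists.
func allocateLinear(ids []uint64, n int) (start uint64, rest []uint64, scanned int) {
	if n <= 0 {
		return 0, ids, 0
	}
	var initial, prev uint64
	for i, id := range ids {
		scanned++
		// Restart the run if this page is not adjacent to the previous one.
		if prev == 0 || id-prev != 1 {
			initial = id
		}
		// Found n contiguous pages: cut them out of the list.
		if id-initial+1 == uint64(n) {
			rest = append(append([]uint64{}, ids[:i-n+1]...), ids[i+1:]...)
			return initial, rest, scanned
		}
		prev = id
	}
	return 0, ids, scanned
}

func main() {
	// The free list from the example figure.
	ids := []uint64{42, 44, 47, 48, 49, 51}
	start, rest, scanned := allocateLinear(ids, 3)
	fmt.Println(start, rest, scanned) // 47 [42 44 51] 5

	// A heavily fragmented list: every other page is free, so no run of
	// two pages exists and the whole list is scanned without success.
	fragmented := make([]uint64, 0, 1000000)
	for id := uint64(2); len(fragmented) < cap(fragmented); id += 2 {
		fragmented = append(fragmented, id)
	}
	start, _, scanned = allocateLinear(fragmented, 2)
	fmt.Println(start, scanned) // 0 1000000
}
```

The second call shows the worst case: the number of inspected entries grows with the length of the free list, and it is paid again on every allocation.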

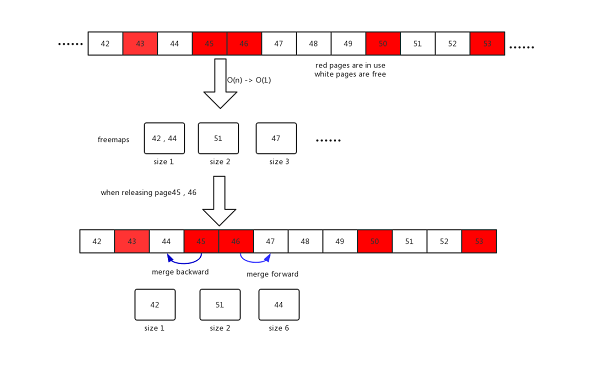

Therefore, in our optimization scheme, runs of consecutive pages of the same size are organized into a set, and a hash map maps each run size to its set. See the freemaps data structure in the new freelist structure below. When the user needs n consecutive pages, a lookup in freemaps returns the first page of a suitable run.

```go
type freelist struct {
	...
	freemaps    map[uint64]pidSet // key is the size of continuous pages(span), value is a set which contains the starting pgids of same size
	forwardMap  map[pgid]uint64   // key is start pgid, value is its span size
	backwardMap map[pgid]uint64   // key is end pgid, value is its span size
	...
}
```

In addition, when a page is released, we need to merge it with its neighbors into as large a continuous run as possible. The previous algorithm used a time-consuming approach (O(nlgn)). We replaced it with a hash-based approach as well. The new approach uses two more data structures, forwardMap and backwardMap, whose meanings are explained in the comments above.

When a page is released, it tries to merge with the previous page by querying backwardMap and tries to merge with the following page by querying forwardMap. The specific algorithm is shown in the following mergeWithExistingSpan function.

```go
// mergeWithExistingSpan merges pid to the existing free spans, try to merge it backward and forward
func (f *freelist) mergeWithExistingSpan(pid pgid) {
	prev := pid - 1
	next := pid + 1

	preSize, mergeWithPrev := f.backwardMap[prev]
	nextSize, mergeWithNext := f.forwardMap[next]
	newStart := pid
	newSize := uint64(1)

	if mergeWithPrev {
		// merge with previous span
		start := prev + 1 - pgid(preSize)
		f.delSpan(start, preSize)

		newStart -= pgid(preSize)
		newSize += preSize
	}

	if mergeWithNext {
		// merge with next span
		f.delSpan(next, nextSize)
		newSize += nextSize
	}

	f.addSpan(newStart, newSize)
}
```

An example of the new algorithm is shown below. When pages 45 and 46 are released, they are merged with page 44 and with pages 47, 48, and 49 into one new large span.

The new algorithm borrows from the segregated freelist algorithm used in memory management, which tcmalloc also uses. It reduces the time complexity of page allocation from O(n) to O(1) and of page release from O(nlgn) to O(1). The optimization effect is significant.
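The merge of pages 45 and 46 can be traced with a small standalone sketch. The type `spanIndex` and the bodies of `addSpan` and `delSpan` are assumptions written for this illustration (the article only shows how they are called); `release` follows the same steps as mergeWithExistingSpan.

```go
package main

import "fmt"

type pgid uint64

// spanIndex is a hypothetical, stripped-down stand-in for the three maps
// in the freelist struct.
type spanIndex struct {
	freemaps    map[uint64]map[pgid]struct{} // span size -> set of start pgids
	forwardMap  map[pgid]uint64              // start pgid -> span size
	backwardMap map[pgid]uint64              // end pgid -> span size
}

func newSpanIndex() *spanIndex {
	return &spanIndex{
		freemaps:    make(map[uint64]map[pgid]struct{}),
		forwardMap:  make(map[pgid]uint64),
		backwardMap: make(map[pgid]uint64),
	}
}

// addSpan records a free span of size pages starting at start.
func (s *spanIndex) addSpan(start pgid, size uint64) {
	if s.freemaps[size] == nil {
		s.freemaps[size] = make(map[pgid]struct{})
	}
	s.freemaps[size][start] = struct{}{}
	s.forwardMap[start] = size
	s.backwardMap[start+pgid(size)-1] = size
}

// delSpan forgets the free span of size pages starting at start.
func (s *spanIndex) delSpan(start pgid, size uint64) {
	delete(s.freemaps[size], start)
	if len(s.freemaps[size]) == 0 {
		delete(s.freemaps, size)
	}
	delete(s.forwardMap, start)
	delete(s.backwardMap, start+pgid(size)-1)
}

// release frees one page and merges it with its free neighbours using
// one lookup in backwardMap and one lookup in forwardMap.
func (s *spanIndex) release(pid pgid) {
	newStart, newSize := pid, uint64(1)
	if prevSize, ok := s.backwardMap[pid-1]; ok {
		s.delSpan(pid-pgid(prevSize), prevSize)
		newStart -= pgid(prevSize)
		newSize += prevSize
	}
	if nextSize, ok := s.forwardMap[pid+1]; ok {
		s.delSpan(pid+1, nextSize)
		newSize += nextSize
	}
	s.addSpan(newStart, newSize)
}

func main() {
	s := newSpanIndex()
	// Free pages 42, 44, 47-49 and 51, as in the earlier freelist example.
	s.addSpan(42, 1)
	s.addSpan(44, 1)
	s.addSpan(47, 3)
	s.addSpan(51, 1)

	s.release(45) // merges with 44, giving the span 44-45
	s.release(46) // merges with 44-45 and 47-49, giving the span 44-49

	fmt.Println(s.forwardMap[44], s.backwardMap[49]) // 6 6
}
```

Each release touches a fixed number of map entries regardless of how many free pages exist, which is where the O(1) release cost comes from.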
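The allocation side can be sketched in the same spirit. The helper names `allocate`, `add`, and `remove` are hypothetical, and the sketch tracks only freemaps, leaving out forwardMap and backwardMap. The exact-size lookup is the O(1) path described in this article; the fallback that carves a smaller run out of a larger span is an assumption of this sketch about how a request with no exact match could be served.

```go
package main

import "fmt"

type pgid uint64

// pidSet is a set of starting page ids.
type pidSet map[pgid]struct{}

// add records a free span of size pages starting at start.
func add(freemaps map[uint64]pidSet, size uint64, start pgid) {
	if size == 0 {
		return
	}
	if freemaps[size] == nil {
		freemaps[size] = pidSet{}
	}
	freemaps[size][start] = struct{}{}
}

// remove forgets a free span and drops its size class once it is empty.
func remove(freemaps map[uint64]pidSet, size uint64, start pgid) {
	delete(freemaps[size], start)
	if len(freemaps[size]) == 0 {
		delete(freemaps, size)
	}
}

// allocate returns the start of n contiguous free pages and removes them
// from freemaps. It returns 0 if no span is large enough.
func allocate(freemaps map[uint64]pidSet, n uint64) pgid {
	if n == 0 {
		return 0
	}
	// Fast path: a span of exactly n pages exists, found with one lookup.
	if set, ok := freemaps[n]; ok {
		for start := range set {
			remove(freemaps, n, start)
			return start
		}
	}
	// Assumed fallback: take n pages from a larger span, keep the rest.
	for size, set := range freemaps {
		if size < n {
			continue
		}
		for start := range set {
			remove(freemaps, size, start)
			add(freemaps, size-n, start+pgid(n))
			return start
		}
	}
	return 0
}

func main() {
	freemaps := map[uint64]pidSet{}
	add(freemaps, 1, 42) // page 42
	add(freemaps, 6, 44) // pages 44-49

	fmt.Println(allocate(freemaps, 4)) // 44, leaving pages 48-49 as a span of 2
	fmt.Println(allocate(freemaps, 2)) // 48, exact-size match
	fmt.Println(allocate(freemaps, 3)) // 0, only page 42 is left
}
```

Unlike the array scan, the exact-size path never walks individual page ids, so its cost does not depend on how many pages are free.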

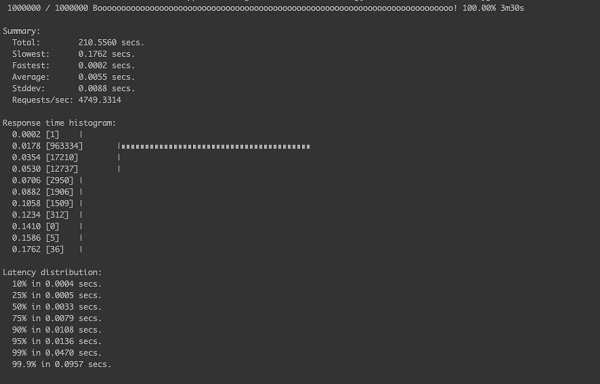

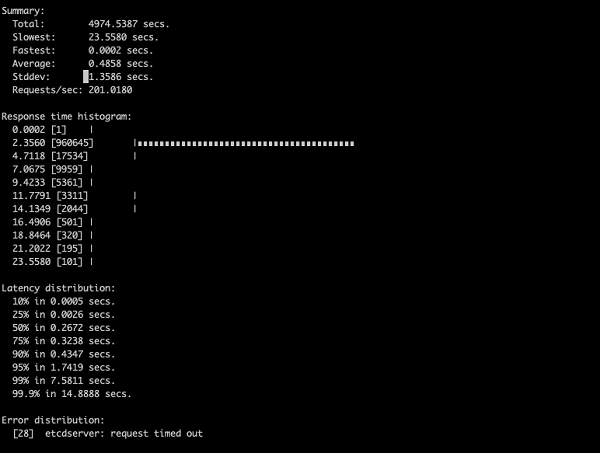

The following tests were run on a single-node etcd cluster to exclude other factors such as the network. The only difference between the runs is whether the old or the new algorithm is used. The test simulates 100 clients putting 1 million kv pairs into etcd at the same time. The key/value content is random, and we limit the rate to 5000 op/s. The test tool is the official etcd benchmark tool. The latency results are shown in the following pictures.

Some operations timed out and did not complete the test.

The shorter the completion time, the better the performance. The performance boost factor is the ratio of each solution's completion time to that of the new hash algorithm.

| Scenario | Completion Time | Performance boost |
|---|---|---|
| New hash algorithm | 210s | baseline |
| Old array algorithm | 4974s | 24x |

With larger amounts of data or more parallelism, the new algorithm's advantage is even greater.

The new optimization reduces the time complexity of the internal freelist allocation algorithm in etcd from O(n) to O(1) and of the release part from O(nlgn) to O(1), which solves the performance problem of etcd at large database sizes. Etcd's performance is no longer bound by its storage size: read and write operations when etcd stores 100GB of data can be as smooth as when it stores 2GB.

The new algorithm is fully backward compatible: you get the benefit without data migration or data format changes!

So far, the optimization has been tested repeatedly at Alibaba for more than 2 months, and the results are good and stable. It has been contributed to the open source community, and you can use it in the new versions of boltdb and etcd.
