By De Shi, from the Alibaba Cloud ApsaraDB for MongoDB and InfluxDB teams.

Alibaba Cloud InfluxDB® is a time-series database service built on the open-source InfluxDB from InfluxData. It offers more powerful time-series computing capabilities and more stable continuous operation. In addition to the existing single-node version, the Alibaba Cloud InfluxDB team also plans to release a high-availability multi-node version in the near future.

The existing open-source InfluxDB supports only single-node scenarios. The cluster versions that were open-sourced expose only part of InfluxDB's functionality and are not maintained by the community. Judging from the published documents for the commercial version of InfluxDB, its meta cluster probably synchronizes metadata with the Raft consensus algorithm, while data replication is asynchronous. This separation has its advantages, but it also causes a series of consistency problems. In some published documents, the official InfluxDB team also admits that this data replication solution is not very satisfactory.

Therefore, after considering multiple options, our team decided to use the widely used and mature Etcd/Raft library as the core component to implement the Raft kernel of Alibaba Cloud InfluxDB. This solution synchronizes all user writes and consistency requests directly through Raft (without splitting meta synchronization and data writing into separate paths in the consistency implementation), ensuring that our high-availability multi-node version can achieve strong consistency.

I feel privileged to have participated in the development of this high-availability multi-node version. During development, we had to solve many challenges. One of them was a series of complex problems around the Raft log module: when porting the Etcd Raft framework, we found that the log module built for Etcd was a poor fit for a time-series database and had to be taken out. This article discusses different Raft log implementation solutions in the industry and describes the Raft HybridStorage solution we developed internally.

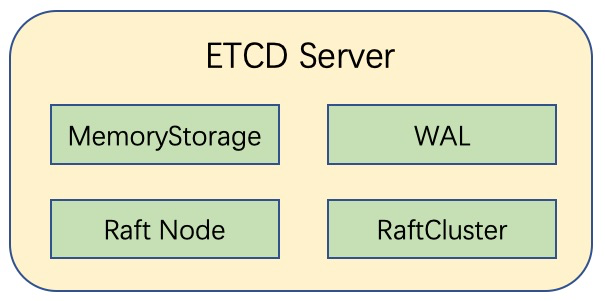

Because our solution is built on Etcd/Raft, it is worth first looking at how Etcd implements the Raft log module.

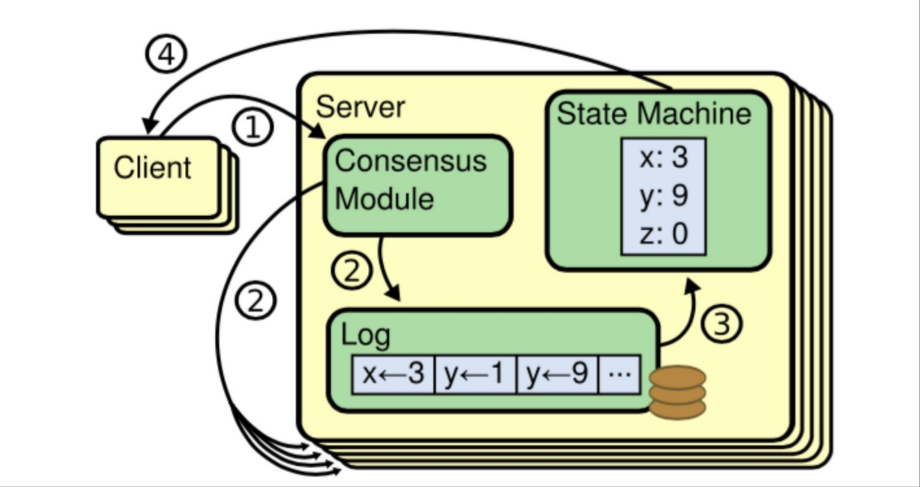

The official Raft website summarizes the protocol in the diagram shown here. Further details about the Raft protocol are not provided in this article.

The Raft log in Etcd mainly consists of two parts: WAL and MemoryStorage.

A WAL (short for write-ahead log) is the log file used in the Etcd Raft process. All the log entries received in the Raft process are recorded in the WAL. This file is append-only: it cannot be overwritten or rewritten.

MemoryStorage mainly stores a relatively recent segment of the log entries in the Raft process, which may include entries that have reached consensus as well as entries that have not. Because it is maintained in memory, it can be rewritten and replaced flexibly. MemoryStorage has two ways of clearing entries and releasing memory. The first is the compact operation, which clears the logs before the applied index to release memory. The second is the regular snapshot operation, which creates and persists the state of the global Etcd data at the moment of the snapshot and then clears the logs in memory.
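The compact operation described above can be sketched in a few lines of Go. This is a minimal illustration, not Etcd's code: the `Entry` and `memLog` types and the `compactTo` method are hypothetical names introduced here, and the sketch assumes the in-memory entries are contiguous by index.

```go
package main

import "errors"

// Entry is a simplified stand-in for a Raft log entry (hypothetical type).
type Entry struct {
	Term  uint64
	Index uint64
	Data  []byte
}

// memLog is a hypothetical in-memory log holding a contiguous run of entries.
type memLog struct {
	entries []Entry // entries[0].Index is the oldest index still in memory
}

var errOutOfRange = errors.New("compact index is outside the in-memory log")

// compactTo drops every entry whose index is lower than keepFrom.
// Callers are expected to pass an index no higher than the applied index.
func (m *memLog) compactTo(keepFrom uint64) error {
	if len(m.entries) == 0 {
		return errOutOfRange
	}
	first := m.entries[0].Index
	last := m.entries[len(m.entries)-1].Index
	if keepFrom < first || keepFrom > last {
		return errOutOfRange
	}
	// Copy the tail into a fresh slice so the old backing array can be freed.
	kept := make([]Entry, last-keepFrom+1)
	copy(kept, m.entries[keepFrom-first:])
	m.entries = kept
	return nil
}
```

Copying the retained tail, rather than re-slicing, is what actually lets the garbage collector reclaim the memory held by the discarded entries.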

In the latest Etcd 3.3 code repository, Etcd elevates the Raft WAL and MemoryStorage to the server level, placing them at the same level as Raft nodes, Raft node IDs, and other node information of Raft clusters (*membership.RaftCluster). This is significantly different from the code architecture of older Etcd versions, where the Raft WAL and MemoryStorage are only member variables of Raft nodes.

Generally, the WAL and MemoryStorage of a Raft log work together: during a write, data is first written to the WAL to ensure persistence and is then appended to MemoryStorage so that hot data can be read and written efficiently.
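The ordering matters: an entry should only become visible in memory once it is durable. The sketch below illustrates that ordering under stated assumptions. All names (`durableLog`, `recordingWAL`, `raftLog`, `appendEntry`) are hypothetical, and `recordingWAL` is an in-memory stand-in for a file-backed WAL that exists only to keep the example self-contained.

```go
package main

import "errors"

// Entry is a simplified stand-in for a Raft log entry (hypothetical type).
type Entry struct {
	Term  uint64
	Index uint64
	Data  []byte
}

// durableLog is the persistence side of the log (hypothetical interface).
type durableLog interface {
	Append(e Entry) error
}

// recordingWAL stands in for a file-backed WAL so the sketch stays runnable.
type recordingWAL struct {
	saved []Entry
	fail  bool // when true, Append simulates a disk error
}

func (w *recordingWAL) Append(e Entry) error {
	if w.fail {
		return errors.New("simulated disk failure")
	}
	w.saved = append(w.saved, e)
	return nil
}

// raftLog pairs the durable log with an in-memory tail of recent entries.
type raftLog struct {
	wal durableLog
	mem []Entry
}

// appendEntry persists the entry first and only then exposes it in memory.
func (l *raftLog) appendEntry(e Entry) error {
	if err := l.wal.Append(e); err != nil {
		return err // memory stays untouched when persistence fails
	}
	l.mem = append(l.mem, e)
	return nil
}
```

If the durable write fails, the in-memory view does not advance, so readers never see an entry that would be lost after a restart.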

The data stored in both the WAL and MemoryStorage uses the same data structure: raftpb.Entry. A log entry mainly contains the following information.

| Name | Description |
| --- | --- |
| Term | The term number of a leader |
| Index | Current log index |
| Type | Log type |
| Data | The content of the log |

In addition, the WAL of the Etcd Raft log also stores additional Etcd-specific information, such as the record type and a checksum.
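To make the idea of an entry plus a checksum concrete, here is a minimal sketch of one possible on-disk record layout. It is an assumption made for illustration and is not Etcd's actual WAL format: the `Entry` type mirrors the four fields in the table, and `encodeRecord`, `decodeRecord`, and the fixed 24-byte header are hypothetical.

```go
package main

import (
	"encoding/binary"
	"errors"
	"hash/crc32"
)

// Entry mirrors the four fields listed in the table (simplified, hypothetical).
type Entry struct {
	Term  uint64
	Index uint64
	Type  uint32
	Data  []byte
}

const headerSize = 8 + 8 + 4 + 4 // term, index, type, data length

var errCorrupt = errors.New("record is truncated or fails its checksum")

// encodeRecord lays out: header | data | CRC32 over header and data.
func encodeRecord(e Entry) []byte {
	buf := make([]byte, headerSize, headerSize+len(e.Data)+4)
	binary.BigEndian.PutUint64(buf[0:8], e.Term)
	binary.BigEndian.PutUint64(buf[8:16], e.Index)
	binary.BigEndian.PutUint32(buf[16:20], e.Type)
	binary.BigEndian.PutUint32(buf[20:24], uint32(len(e.Data)))
	buf = append(buf, e.Data...)
	var tail [4]byte
	binary.BigEndian.PutUint32(tail[:], crc32.ChecksumIEEE(buf))
	return append(buf, tail[:]...)
}

// decodeRecord verifies the checksum before trusting any field.
func decodeRecord(rec []byte) (Entry, error) {
	if len(rec) < headerSize+4 {
		return Entry{}, errCorrupt
	}
	body, tail := rec[:len(rec)-4], rec[len(rec)-4:]
	if crc32.ChecksumIEEE(body) != binary.BigEndian.Uint32(tail) {
		return Entry{}, errCorrupt
	}
	if int(binary.BigEndian.Uint32(body[20:24])) != len(body)-headerSize {
		return Entry{}, errCorrupt
	}
	return Entry{
		Term:  binary.BigEndian.Uint64(body[0:8]),
		Index: binary.BigEndian.Uint64(body[8:16]),
		Type:  binary.BigEndian.Uint32(body[16:20]),
		Data:  append([]byte(nil), body[headerSize:]...),
	}, nil
}
```

The checksum lets a reader detect a torn or corrupted record at the tail of the file after a crash, instead of replaying garbage into the state machine.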

CockroachDB is an open-source distributed database that can store and manage large amounts of data in the way NoSQL systems do. It also retains the ACID guarantees and SQL support of traditional databases, and offers features such as cross-region support, decentralization, high concurrency, multi-replica strong consistency, and high availability.

The consistency mechanism of CockroachDB is also based on the Raft protocol: multiple replicas of a single range synchronize data through Raft. The Raft protocol serializes all requests as Raft log entries, which the leader synchronizes to the followers. Once an entry has been written to the Raft logs of a majority of the replicas, it is marked as committed and applied to the state machine.
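The majority rule can be illustrated with a short sketch. It is a simplification for illustration only, not CockroachDB's or Etcd's code: the `commitIndex` function and its `matchIndex` argument are hypothetical names, and the sketch omits the additional Raft rule that a leader only commits entries from its own term this way.

```go
package main

import "sort"

// commitIndex returns the highest log index stored on a majority of replicas.
// matchIndex holds, for every replica including the leader, the last index
// known to be persisted in that replica's Raft log.
func commitIndex(matchIndex []uint64) uint64 {
	if len(matchIndex) == 0 {
		return 0
	}
	sorted := append([]uint64(nil), matchIndex...)
	// Sort in descending order so position k holds the (k+1)-th largest value.
	sort.Slice(sorted, func(i, j int) bool { return sorted[i] > sorted[j] })
	quorum := len(sorted)/2 + 1
	// At least quorum replicas have reached sorted[quorum-1] or beyond.
	return sorted[quorum-1]
}
```

With three replicas whose logs end at indexes 10, 7, and 9, two of them hold index 9, so everything up to 9 can be considered committed while index 10 still waits for a second copy.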

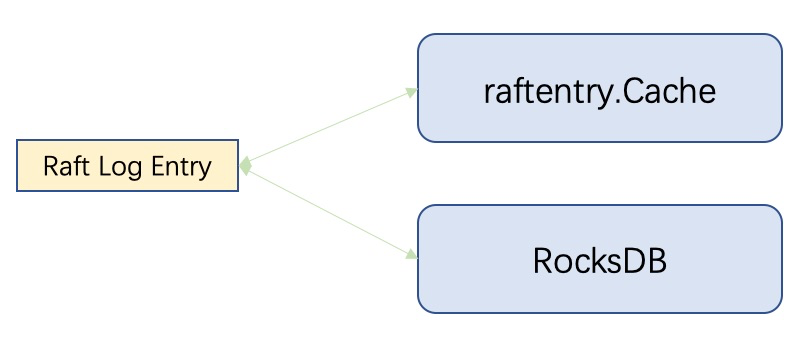

After analyzing the critical code of the CockroachDB Raft mechanism, we can see that it is ported from the Etcd Raft framework. However, CockroachDB removes the file storage part of the Etcd Raft log and writes all Raft logs into RocksDB. In addition, CockroachDB has its own hot data cache, raftentry.Cache. The read and write capabilities of raftentry.Cache and RocksDB (including the RocksDB read cache) together ensure the performance of log reads and writes.

Additionally, the snapshots created in the Raft process are saved directly to RocksDB. I think the reason for this implementation is probably that RocksDB is already the underlying data store of CockroachDB. Using RocksDB directly to read and write the WAL and to access snapshots is relatively simple and avoids having to develop a separate Raft log module for CockroachDB.

For the high-availability multi-node version of Alibaba Cloud InfluxDB, we use Etcd/Raft as the core component and, based on what we learned while porting it and on the actual requirements of InfluxDB, remove the native snapshot process. We also abandoned the native log files (WALs) and use our own solution instead.

Why did we remove the snapshot feature? In the original Raft process, a snapshot is taken at regular intervals to keep the Raft log from growing without bound, and the snapshot is used to answer requests for log entries older than the snapshot index. However, in our single-ring architecture that uses Raft, a snapshot would have to cover the whole of InfluxDB. This would consume a lot of resources and severely affect performance. In addition, locking the entire InfluxDB during the process is unacceptable. Therefore, we decided not to use the snapshot feature for the moment. Instead, we retain a fixed (large) number of Raft log files for backup.

Our own Raft log file module regularly clears the earliest logs to prevent excessive disk overhead. If a node is unavailable for a short time and the log files on the healthy nodes still cover what it missed, the node catches up by replaying those logs. But what if a node is down for a long time and the healthy nodes have already cleared the log files it needs? In that case, we use the InfluxDB backup and restore tool to bring the outdated node to a relatively recent state that the retained Raft logs still cover, and then let it catch up from the logs.
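The retention and recovery decision described above can be expressed as a small sketch. It only illustrates the reasoning and is not our production code: the `retainedLog` type, the `trimOldest` and `planRecovery` functions, and the entry-count retention policy are all hypothetical simplifications.

```go
package main

// recoveryPlan says how a lagging node should catch up (hypothetical type).
type recoveryPlan int

const (
	replayFromLog     recoveryPlan = iota // healthy nodes still hold the missing entries
	restoreFromBackup                     // entries were cleared; restore a backup first
)

// retainedLog describes the index range a healthy node still has on disk.
type retainedLog struct {
	FirstIndex uint64
	LastIndex  uint64
}

// trimOldest keeps at most maxEntries of the newest entries and clears the rest.
func trimOldest(log retainedLog, maxEntries uint64) retainedLog {
	if log.LastIndex < log.FirstIndex {
		return log // empty log, nothing to clear
	}
	if log.LastIndex-log.FirstIndex+1 > maxEntries {
		log.FirstIndex = log.LastIndex - maxEntries + 1
	}
	return log
}

// planRecovery decides how a lagging node catches up. nextIndex is the first
// entry the lagging node is missing.
func planRecovery(log retainedLog, nextIndex uint64) recoveryPlan {
	if nextIndex >= log.FirstIndex {
		return replayFromLog
	}
	return restoreFromBackup
}
```

For example, if a healthy node keeps entries 901 to 1000, a follower that needs entry 950 can replay from the log, while a follower that needs entry 500 must first be restored from a backup whose state reaches at least index 900.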

In our scenarios, the WAL module of Etcd is not suitable for InfluxDB. The Etcd WAL is purely append-only. When a node recovers from a fault and a healthy node answers the log requests of the lagging node, the healthy node has to scan the file and pick out the latest entry among those that share an index but have different terms. In addition, a single InfluxDB entry may contain more than 20 MB of time series data, so keeping superseded entries on disk is a very high overhead for a time series database, which is not a KV store.

Our in-house Raft log module is HybridStorage, a solution that provides hybrid access to memory and files. The memory retains the latest hot data, and the files ensure that all logs are stored on disk. Append operations on the memory and on the files are kept consistent with each other.

The design idea of HybridStorage is as follows:

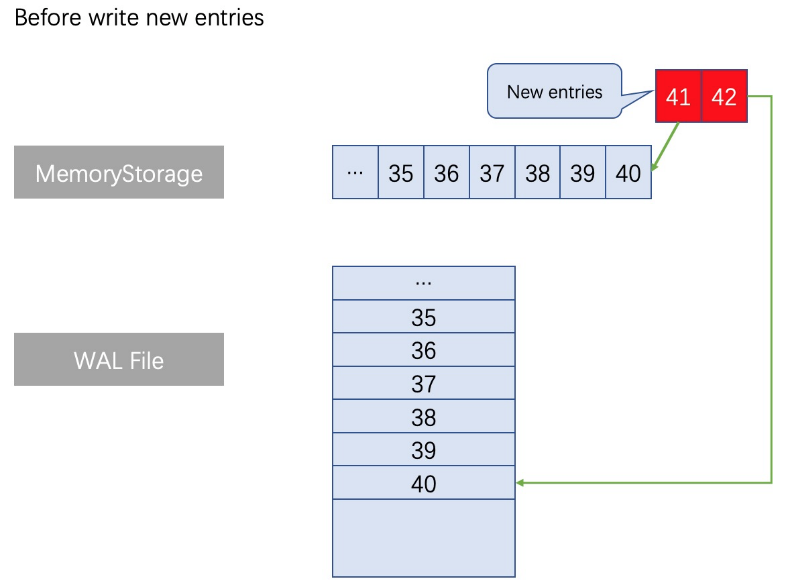

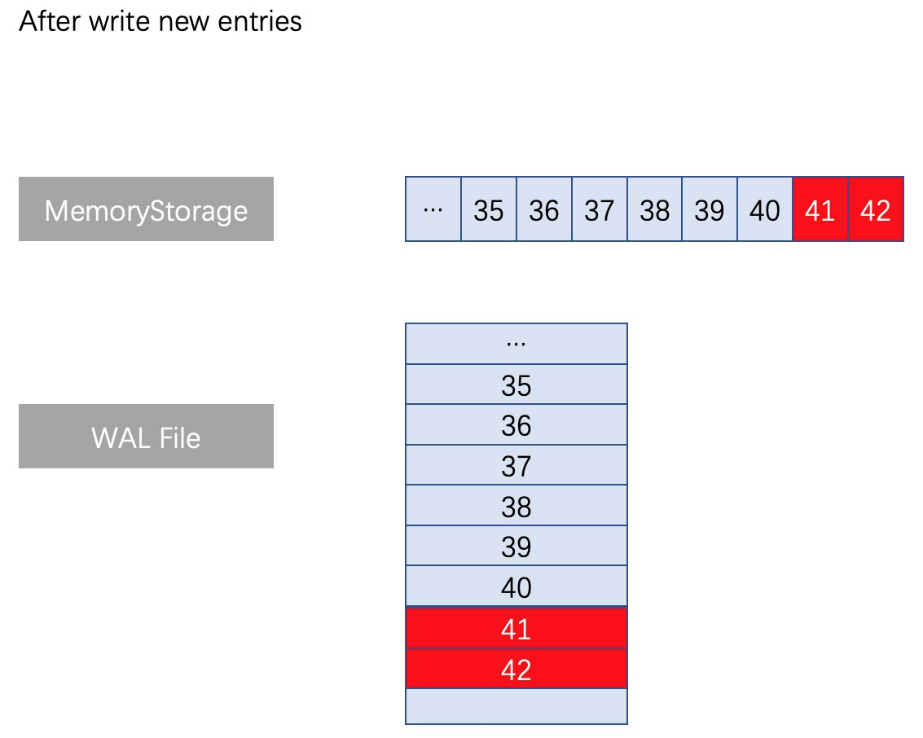

Generally, when log entries with new indexes are added to HybridStorage, every addition or removal of entries in the in-memory log is mirrored by the same operation on the file. The following figure shows the normal writing process:

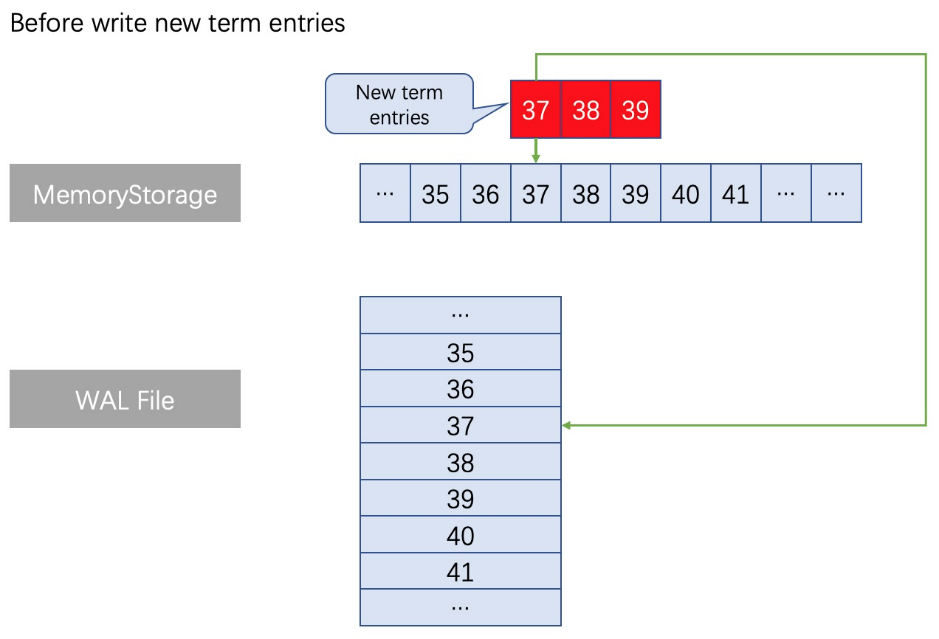

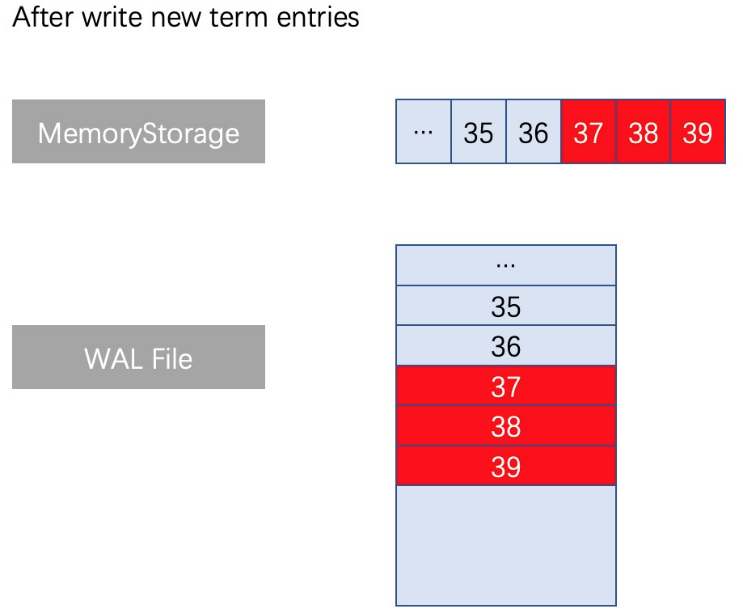

When log entries with the same index and different terms appear, a truncate operation is executed, removing all logs from the corresponding file location to the end of the file. After this, the log entries with the latest term number are written in append mode again. The operation is logically clear, and the file is never updated in the middle.

For example, suppose an append operation delivers a set of Raft logs in which entries with the same indexes (37, 38, 39) but different terms appear. MemoryStorage handles this by finding the memory location that corresponds to the index (memory location 37), discarding all the old logs from that location onward (in the Raft mechanism, those old logs are invalid in this case and do not need to be retained), and then splicing in all the new logs of this append operation. The internally developed WAL carries out the same steps: it finds the location that corresponds to the index (file location 37) in the WAL file, deletes all file content from that location onward, and writes the latest logs.
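The truncate-then-append behavior described above can be sketched as follows. This is a simplified illustration of the idea, not the actual HybridStorage implementation: the `hybridLog` type, its record layout, and its methods are hypothetical. The sketch also keeps every entry in memory, assumes each batch is consecutive by index, and recreates the file on open instead of recovering it.

```go
package main

import (
	"encoding/binary"
	"errors"
	"os"
)

// Entry is a simplified stand-in for a Raft log entry (hypothetical type).
type Entry struct {
	Term  uint64
	Index uint64
	Data  []byte
}

// hybridLog mirrors an in-memory log and an on-disk file, entry for entry.
type hybridLog struct {
	file    *os.File
	entries []Entry // in-memory copy (a real module would keep only hot data)
	offsets []int64 // offsets[i] is the file offset where entries[i] starts
	size    int64   // logical end of the file
}

func openHybridLog(path string) (*hybridLog, error) {
	// O_TRUNC keeps the sketch short; recovering an existing file is omitted.
	f, err := os.OpenFile(path, os.O_RDWR|os.O_CREATE|os.O_TRUNC, 0644)
	if err != nil {
		return nil, err
	}
	return &hybridLog{file: f}, nil
}

// truncateFrom discards entries[pos:] in memory and the matching file tail.
func (h *hybridLog) truncateFrom(pos int) error {
	if err := h.file.Truncate(h.offsets[pos]); err != nil {
		return err
	}
	h.size = h.offsets[pos]
	h.entries, h.offsets = h.entries[:pos], h.offsets[:pos]
	return nil
}

// Append stores a batch. Entries already present with the same term are
// skipped; at the first same-index, different-term entry the old tail is
// truncated and the rest of the batch is appended in its place.
func (h *hybridLog) Append(batch []Entry) error {
	for len(batch) > 0 && len(h.entries) > 0 {
		first := h.entries[0].Index
		next := h.entries[len(h.entries)-1].Index + 1
		idx := batch[0].Index
		if idx < first || idx > next {
			return errors.New("batch does not connect to the existing log")
		}
		if idx == next {
			break // plain append, nothing to reconcile
		}
		pos := int(idx - first)
		if h.entries[pos].Term == batch[0].Term {
			batch = batch[1:] // already stored
			continue
		}
		if err := h.truncateFrom(pos); err != nil {
			return err
		}
		break
	}
	for _, e := range batch {
		rec := make([]byte, 20+len(e.Data))
		binary.BigEndian.PutUint64(rec[0:8], e.Term)
		binary.BigEndian.PutUint64(rec[8:16], e.Index)
		binary.BigEndian.PutUint32(rec[16:20], uint32(len(e.Data)))
		copy(rec[20:], e.Data)
		if _, err := h.file.WriteAt(rec, h.size); err != nil {
			return err
		}
		h.offsets = append(h.offsets, h.size)
		h.entries = append(h.entries, e)
		h.size += int64(len(rec))
	}
	return h.file.Sync()
}
```

Because the only mutations are "cut the tail" and "append at the end", the file never contains superseded entries and a reader never has to choose between two entries with the same index.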

For the Etcd solution, the Raft log has two parts: file and memory. The file part only uses the append mode, so not every log entry in it is valid. When log entries with different terms and the same index appear, only the entries with the latest term are valid. Together with the snapshot mechanism, this design is very suitable for KV storage systems such as Etcd. However, for the high-availability version of InfluxDB, a snapshot can consume a lot of resources and affect performance, and the entire InfluxDB is locked in the process. In addition, an entry in a Raft process may contain more than 20 MB of time series data. Therefore, this solution is not suitable.

The CockroachDB solution appears to rely on RocksDB for simplicity, and because its underlying storage engine is RocksDB anyway, this is a reasonable choice. However, introducing RocksDB would be too heavyweight for a time series database that only needs it for the Raft consistency protocol.

Our internally developed Raft HybridStorage is more in line with the scenario of Alibaba Cloud InfluxDB®. The module design is lightweight and simple: the memory retains the hot data cache, and the file uses a mode similar to the append-only mode of Etcd. When log entries with the same index and different terms appear, the truncate operation deletes the redundant and invalid data and reduces disk pressure.

This article compares two common Raft log implementation solutions in the industry and introduces the HybridStorage solution developed by the Alibaba Cloud InfluxDB® team. In subsequent development, the team will continue to optimize the internally developed Raft HybridStorage, for example with asynchronous writing, log file indexing, and read logic optimization. We hope to continue to provide even better solutions.

Interested in reading more similar topics? Stay tuned for more helpful articles and tutorials from the team of engineers at Alibaba Cloud ApsaraDB for MongoDB.
