By Beilou

Recently, analytic databases have been very popular among the business and technology communities, and many innovative products from start-ups have sprung up. On the one hand, this is because enterprises are increasingly relying on data to bring about growth in demand. On the other hand, the development of cloud-native technology has empowered the evolution and transformation of existing technology systems. The success of products such as Snowflake has proved that it is necessary to use cloud native to reengineer the technology system of analytic databases. This may also bring about new market opportunities.

PolarDB MySQL is a database system that came into being due to the cloud. In addition to on-cloud OLTP scenarios, many customers also have requirements for real-time data analysis performance of PolarDB. To meet this demand, the PolarDB technical team proposed an In-Memory Column Index (IMCI) technical solution to achieve hundreds of times of acceleration in complex analysis and query scenarios.

This article introduces the idea of the technical route behind IMCI and the hard choice we've made between several schemes. At the time of writing, the column storage analysis of PolarDB MySQL is yet to be launched publicly, so stay tuned!

MySQL is an open-source database designed mainly for OLTP scenarios. The research and development in the open-source community focus on strengthening its transaction processing capabilities, including single-core performance, multi-core extensibility, and cluster capabilities, to improve availability. As for the capabilities required to process complex queries under large data volumes, such as processing subqueries by the optimizer, high-performance operator HashJoin, and SQL parallel execution, the technology communities have always placed them on a relatively low priority. Therefore, the improvement of MySQL data analysis capability is slow.

As MySQL becomes the most popular open-source database system in the world, users store a large amount of data and run key business logic in it. Real-time data analysis has become a growing demand. When the standalone MySQL cannot meet the requirements, users are expecting a better solution.

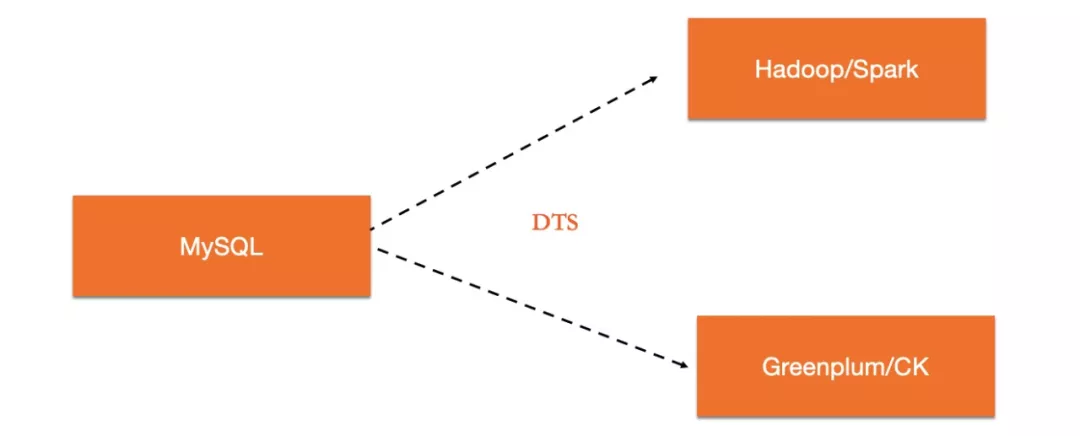

There are many dedicated analytical database products to choose from. One feasible option is to use two systems to meet the OLTP and OLAP requirements, respectively, and to synchronize data in real time through data synchronization tools between the two systems. Users can even add a layer of proxy to automatically route TP load to MySQL and analytic load to OLAP database. By doing so, they can mask the application layer from the deployment topology of the underlying database.

Such an architecture is flexible. For example, both TP database and AP database can choose the best scheme, respectively. This architecture has also completed the isolation of TP and AP loads. Its shortcomings are also obvious. First, it is technically necessary to maintain the two sets of database systems. Second, due to the differences in the processing mechanisms of the two systems, it is also very challenging to ensure real-time consistency of upstream and downstream data. Moreover, due to synchronization latency, data stored in downstream AP systems are often outdated, which fails to meet the requirement of real-time analysis.

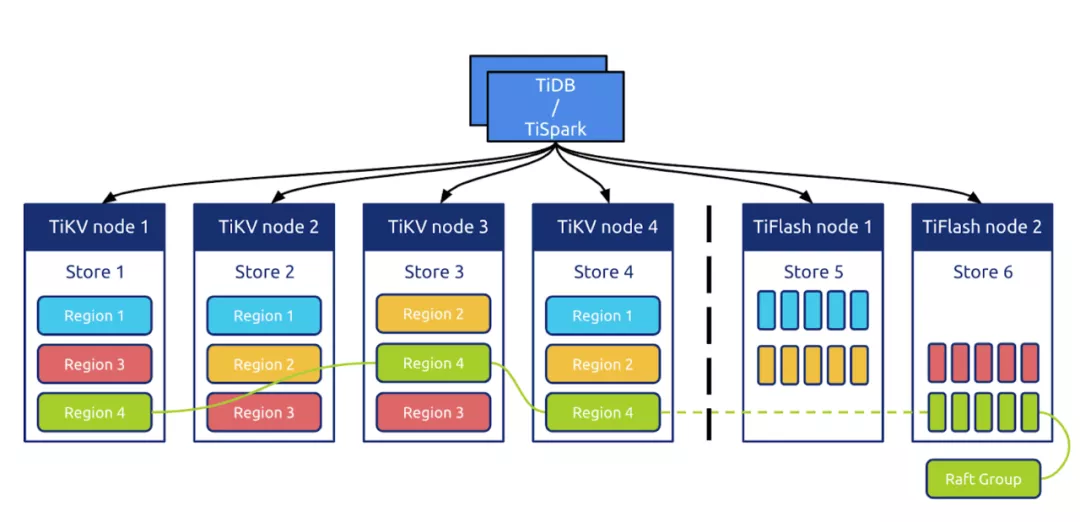

Many of the emerging database products that have emerged with the rise of the Internet are compatible with the MySQL protocol. So they are alternatives to MySQL. Most of these distributed database products adopt the distributed Share Nothing solution. One of the core features is using the distributed consistency protocol to ensure data consistency between multiple replicas of a single partition. Since one copy of data is completely independent among multiple replicas, it is easy to use different formats for storage on different replicas to serve different query loads. A typical example is TiDB. Starting from version 4.0, TiDB uses column storage (TiFlash) on one of the replicas in a Raft Group to respond to AP loads. TiDB also uses its intelligent routing capability to automatically select data sources. This allows that one database system can serve both OLTP and OLAP loads.

This practice has been applied in many research and industry fields and has increasingly become a de facto standard scheme for integrated HTAP in distributed data fields. However, the premise is that users have the requirement to migrate to the corresponding NewSQL database system. This often brings various compatibility and adaptation issues.

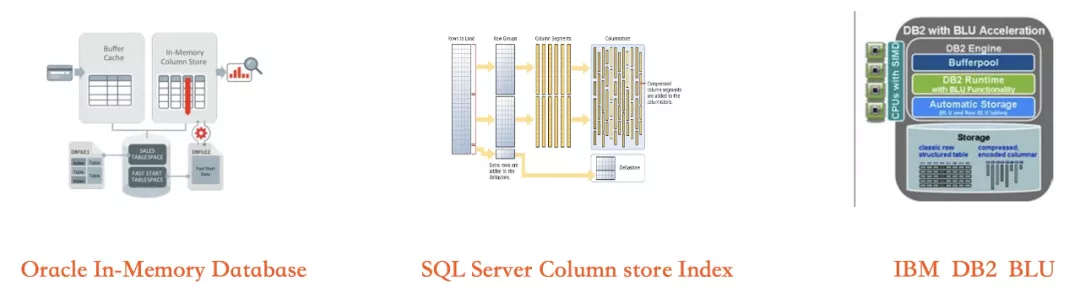

The solution that is more advanced than the multi-replica divergent design is that one database instance uses row-column hybrid storage to respond to both TP and AP loads. This is a solution adopted by all traditional commercial databases such as Oracle, SQL Server, and DB2.

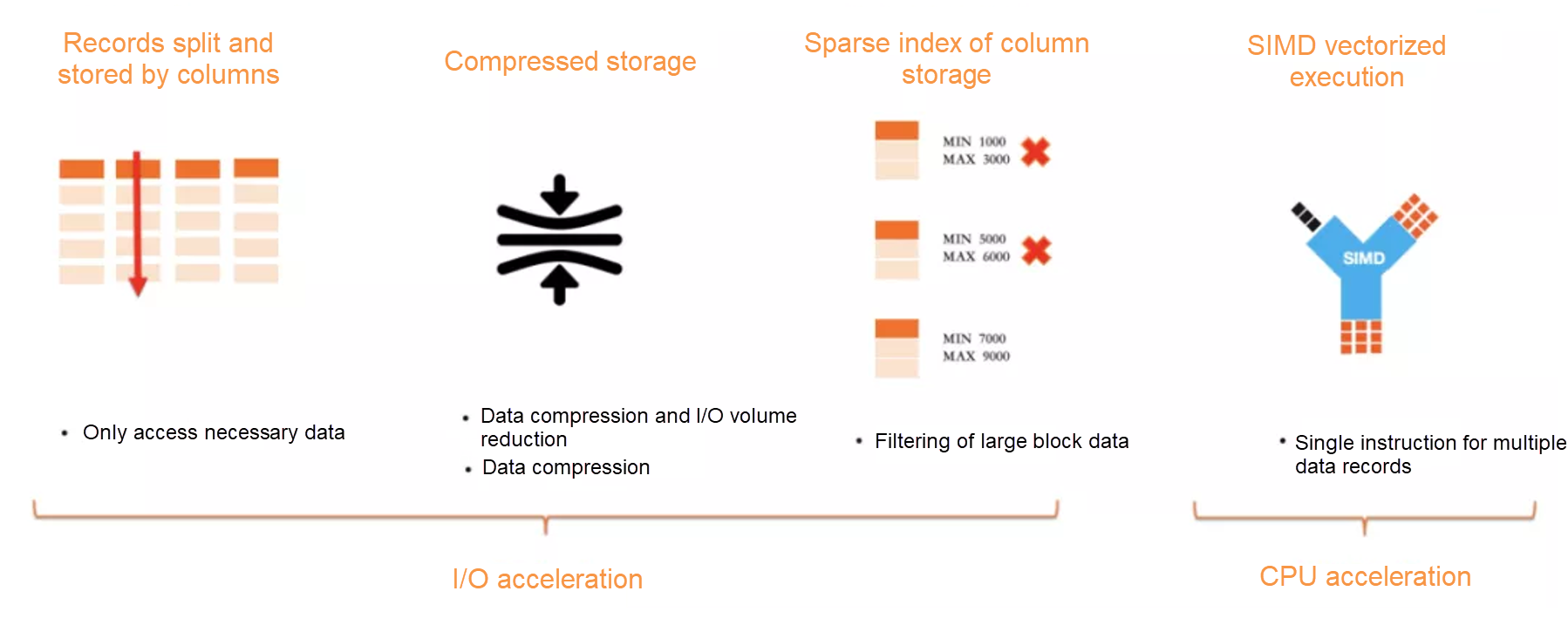

Three leading commercial database enterprises have adopted the technical route of row-column hybrid storage combined with in-memory computing at the same time. This has its underlying technical logic: column storage has better I/O efficiency (compression, DataSkipping, column clipping) and CPU computing efficiency (cache-friendly). Therefore, to achieve the highest analysis performance, column storage must be used. However, the index accuracy issue caused by index sparseness of column storage determines that it cannot become the storage format for TP scenarios. Thus, row-column hybrid storage becomes a required solution. However, in the row-column hybrid storage architecture, there is a performance gap between row storage indexes and column storage indexes when handling random updates. The low read-write latency of DRAM must be used to compensate for the low efficiency of column storage updates. Therefore, with the capabilities of low-latency OLTP and high-performance real-time data analysis, hybrid row-column storage combined with in-memory computing is the only choice.

Let's again take a look at the above three methods. From building block scheme to the divergent design method and the integrated row-column hybrid storage, the integration level is getting higher, and the user experience is getting better. However, the challenges in implementing the kernels are also increasing. The role of basic software is to leave the complexity to itself and simplicity to users, so an integrated approach is in line with technological trends.

The capability stack of PolarDB MySQL is similar to that of open-source MySQL. It is good at handling TP scenarios but weak at AP scenarios. PolarDB provides a storage capacity that one instance can store up to 100 TB of data, and its transaction processing capability far exceeds user-created MySQL. Therefore, PolarDB users tend to store more data on a single instance and run some complex aggregate queries on the data. With the write-once-read-many architecture of PolarDB, users can add read-only RO nodes to run complex read-only queries. This avoids the interference of analytic queries on the TP load.

There are many reasons for the poor performance of MySQL implementation architecture when executing complex queries. Compared with the dedicated OLAP system, its performance bottleneck is reflected in the following aspects:

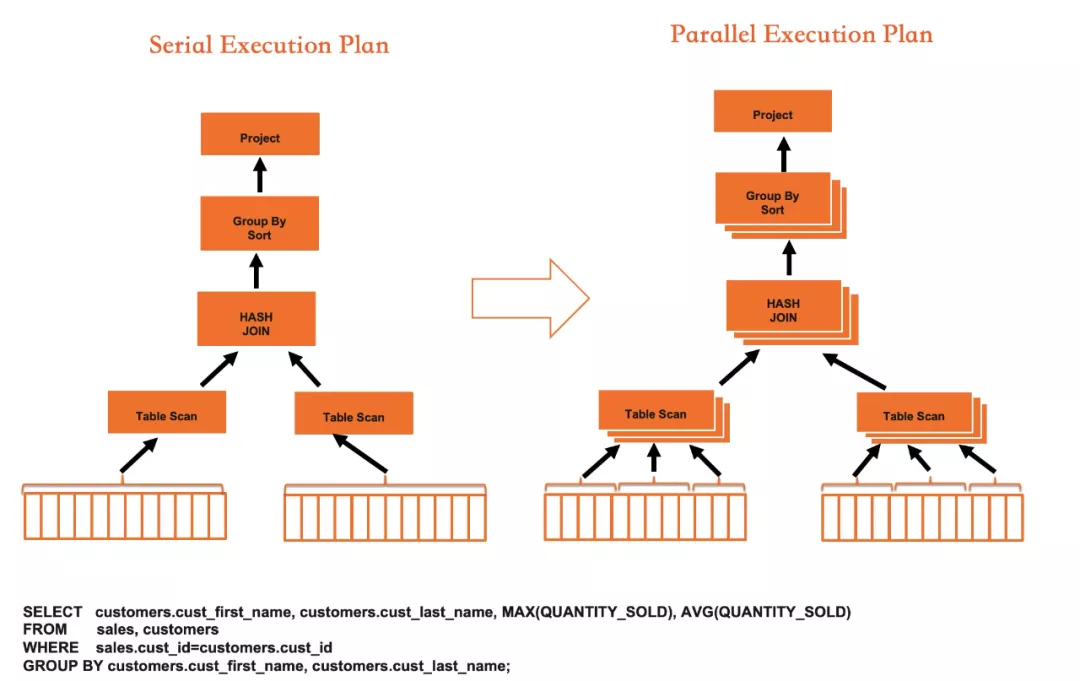

The Parallel Query framework developed by the PolarDB team can automatically start parallel execution when the amount of queried data reaches a certain threshold. The data is distributed to different threads at the storage layer for parallel computing, and the results are aggregated to the main thread. At last, the main thread summarizes and returns the results to users. This framework has improved query efficiency.

The parallel query feature allows PolarDB to break through the limitation of single-core execution performance. By taking advantage of the parallel processing capability of multi-core CPUs, the time consumption of some SQL queries on PolarDB decreases exponentially.

The parallel execution framework breaks through the limitation of CPU extension capability and brings significant performance improvement. However, limited by the efficiency of row storage and row executor, the single-core execution performance has a ceiling. The peak performance still falls behind that of a dedicated OLAP system. To further improve the analysis performance of PolarDB MySQL, we need to introduce column storage:

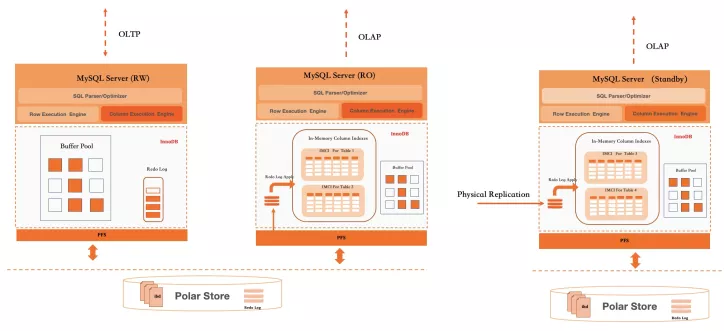

In-Memory Column Index brings column storage and in-memory computing capabilities to PolarDB. This allows users to simultaneously run TP and AP hybrid loads on a set of PolarDB databases. This also ensures the current excellent OLTP performance of PolarDB and greatly improves the performance of PolarDB in running complex queries on large data volumes.

In-Memory Column Index uses row-column hybrid storage and combines the shared storage-based write-once-read-many architecture of PolarDB. It includes the following key technical innovations:

The combination of several key technologies makes PolarDB a true HTAP database system. Its performance in running complex queries on large amounts of data can equal that of industry-leading commercial database systems such as Oracle and SQL Server.

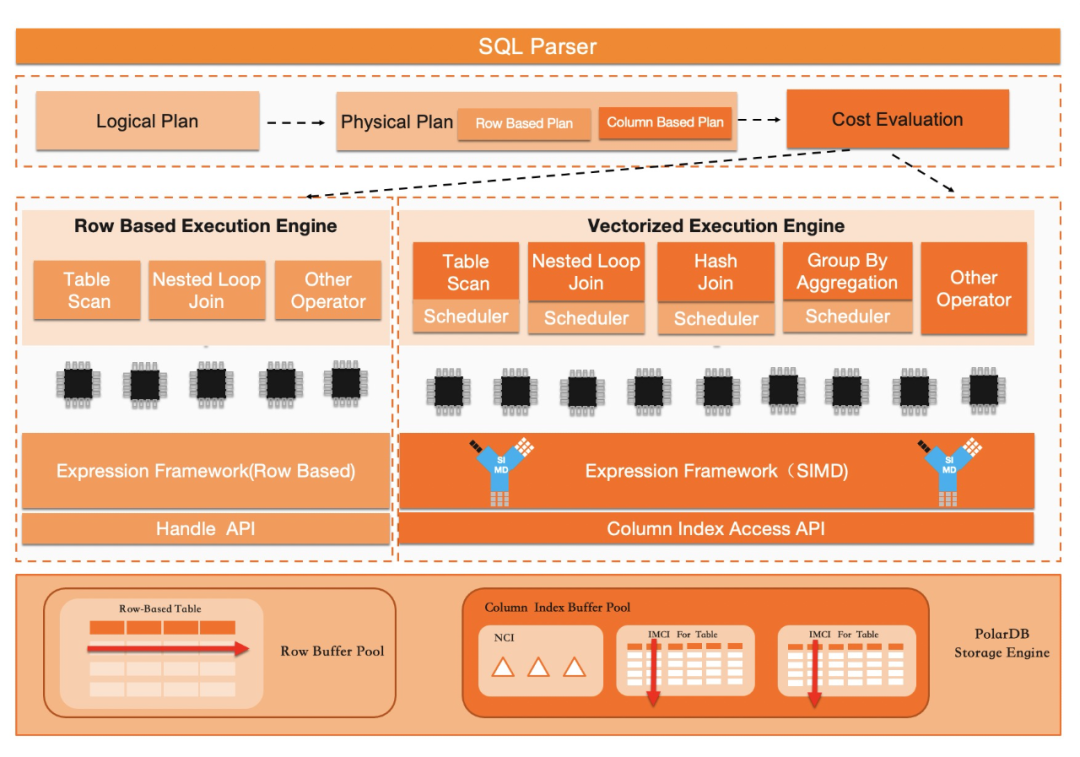

PolarDB has a set of native row-oriented optimizer components. After the engine layer adds support for the column storage, the optimizer components need to be enhanced. The optimizer needs to be able to determine whether a query should be scheduled to row storage or column storage for execution. We achieve this through a whitelist mechanism and a framework of execution cost calculation. The system ensures acceleration of supported SQL and is compatible with running unsupported SQL.

We use a whitelist mechanism to achieve the compatibility goal. The reasons for using the whitelist are as follows: First, considering the limitation of the available system resources (mainly memory resources), columnar indexes are generally not created on all tables. When a query statement needs to use a column that does not exist in the column storage, the statement cannot be executed on the column storage. Second, considering the performance, we have rewritten a set of column-oriented SQL execution engines, involving all physical execution operators and expression computing. The coverage of supported scenarios is inadequate compared with the native row storage of MySQL. When the issued SQL contains some operator fragments or column types that IMCI execution engine cannot support, the execution engine needs to be able to identify, intercept, and switch back to the row storage for execution.

The purpose of plan conversion is to convert the way of expression AST of the native logical execution plan in MySQL to the logical plan of IMCI. After a logical plan of IMCI is generated, a physical plan is generated after a series of optimizations. Plan conversion is simple and direct. You only need to traverse the execution plan tree and convert AST optimized by MySQL into a tree structure with relation operators as its nodes in IMCI. This resembles a translation process. However, in this process, other operations will be performed. For example, implicit conversion of types is made to be compatible with the flexible type system of MySQL.

With two sets of execution engines (row storage and column storage), the optimizer has more choices when selecting the execution plan. It can compare the cost of row-storage execution plan with that of column-storage execution and use the execution plan with the lower cost.

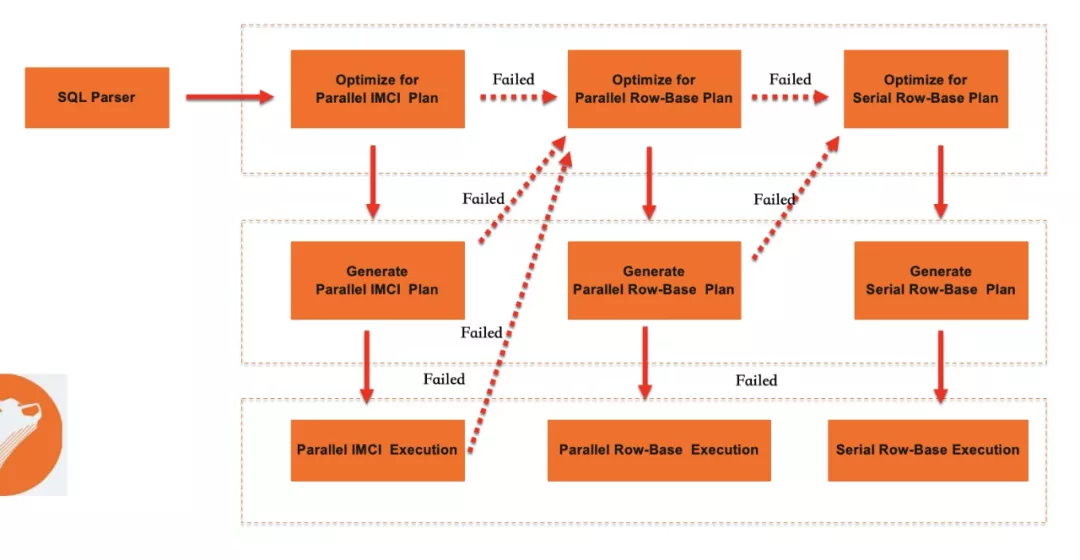

In PolarDB, besides the native row-storage serial execution of MySQL, there is also the parallel query based on row storage that can give full play to multi-core computing power. Therefore, the optimizer actually chooses between row-storage serial execution, row-storage parallel query, and IMCI. In the current iteration phase, the optimizer executes as follows:

The above strategy is based on such a judgment: in terms of execution performance, row-storage serial execution < row-storge parallel execution < IMCI. In terms of SQL compatibility: IMCI < row-storage parallel execution < row-storage serial execution. However, the actual situation is more complicated. For example, in some cases, parallel Index Join based on row-storage ordered index coverage has a lower cost than Sort Merge join based on column storage. IMCI column-storage execution may be selected under the current strategy.

IMCI execution engine is an implementation with column-oriented optimization and is completely independent of the existing MySQL row executor. The purpose of rewriting the executor is to eliminate the two key bottlenecks leading to the low efficiency issue of the existing row-storage execution engine when executing analytic SQL. The bottlenecks are overhead of virtual function access caused by row-based access and inability to execute in parallel.

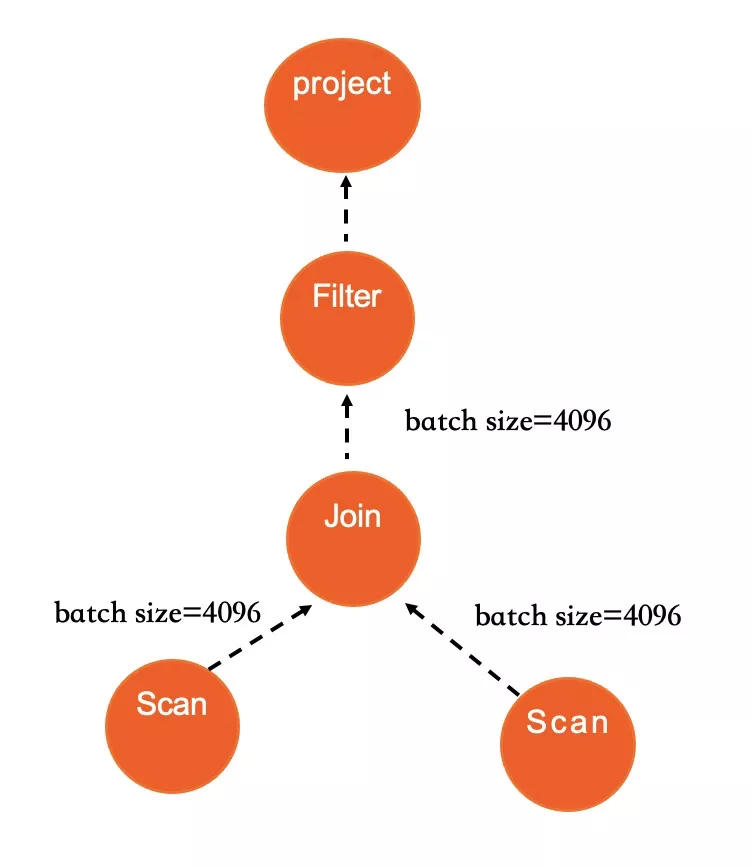

The IMCI executor engine uses the classic volcano model but also uses column storage and vector execution to improve execution performance.

In terms of the volcano model, in the relational algebra corresponding to the syntax tree generated by SQL, each operation will be abstracted into an operator. The execution engine will construct the entire SQL into an operator tree. The query tree will call the Next() interface from top to bottom, and the data will be pulled from bottom to top. The advantage of this method is that its computing model is simple and straightforward. It is achieved by abstracting different physical operators into iterators. Each operator only cares about its own internal logic. This reduces the coupling between operators and makes it easier to write a logically correct execution engine.

Vectorization execution solves the problem of single-core execution efficiency, while parallel execution breaks through the single-core computing bottleneck. The combination of the two makes the IMCI execution speed much faster than traditional MySQL row execution.

In AP scenarios, SQL often contains many computing processes that involve one or more values, operators, or functions. This belongs to the category of expression computing. The evaluation of expressions is a computing-intensive task, so the computing efficiency of expressions is a key factor affecting overall performance.

The traditional expression computing system of MySQL uses row-by-row operation method. It is generally called iterator model implementation. The iterator abstracts the entire table, and the expressions are implemented as a tree structure. Therefore, the implementation code is easy to understand, and the entire process is very clear.

However, this abstraction brings performance loss. This is because, during the iteration of the iterator, the acquisition of each row of data will trigger multiple layers of function calls. The row-by-row acquisition of data brings too much I/O and is not friendly to the cache. MySQL uses a tree iterator model as it is constrained by the access methods of the storage engine. This makes it difficult to optimize complex logical computing.

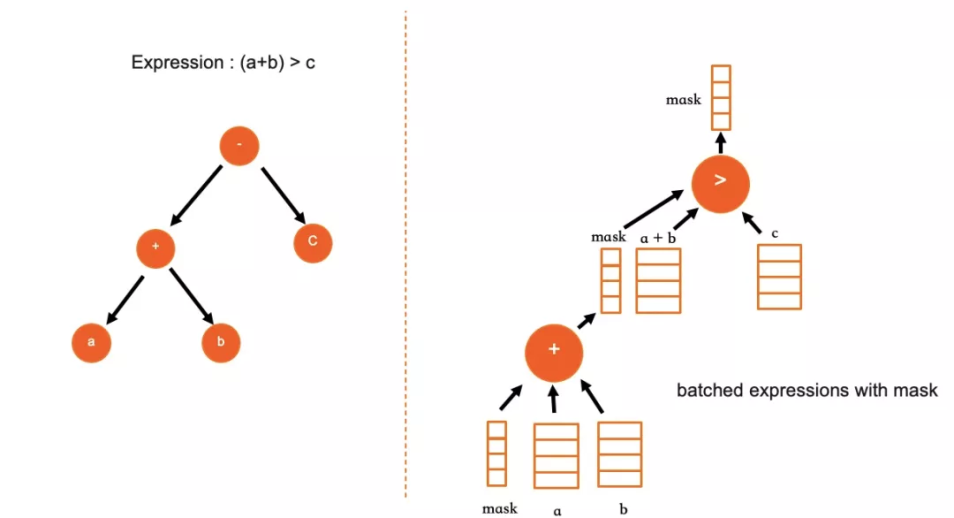

For column storage, since the data in each column is stored separately and sequentially, the expression computing in a specific column can be performed in batches. For each expression, its unit of input and output is batch. In the batch processing mode, the computing process can be accelerated by using SIMD instructions. The new expression system has two key optimizations.

Transactional applications and analytic applications have completely different requirements for storage engines. The former requires that indexes can be accurately located to each row and support efficient addition, deletion, and modification. The latter requires support for efficient batch scanning and processing. The design requirements for storage engines in these two scenarios are completely different and sometimes even contradictory.

Thus, it is very challenging to design an integrated storage engine that can serve both OLTP and OLAP loads. At present, only several large enterprises that have decades of experience in research and development do well in HTAP storage engines. For example, Oracle has In-Memory Column Store, SQL Server has In-Memory Column Index, and DB2 has BLU. Others, like TiDB, can only meet HTAP requirements by adjusting one replica in a multi-replica cluster to column storage.

The integrated HTAP storage engine generally uses a row-column hybrid storage scheme. In other words, both row storage and column storage exist in the engine at the same time. Row storage serves TP, and column storage serves AP. Compared with the separate deployment of OLTP database and OLAP database to meet business requirements, a single HTAP engine has the following advantages:

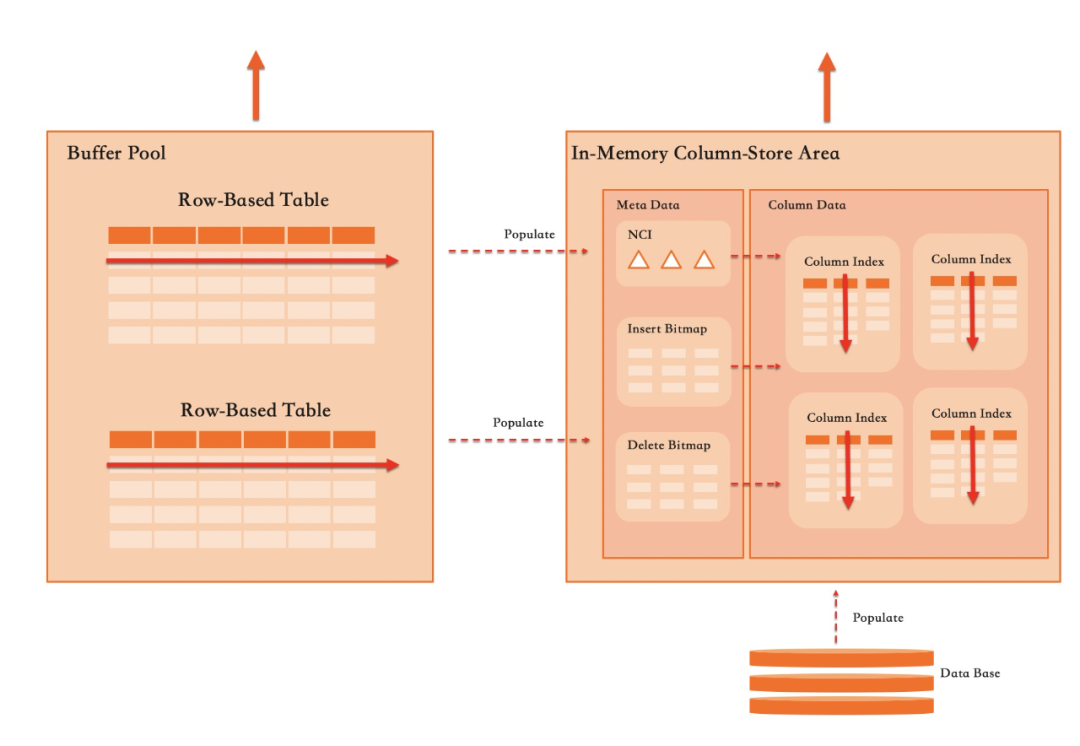

PolarDB uses a row-column hybrid storage technology, similar to that of commercial databases such as Oracle and SQL Server. We call it In-Memory Column Index.

It is technically difficult to implement a row-column hybrid storage engine while adding column-storage support to a mature OLTP load-optimized storage engine like InnoDB faces different situations:

The effect of the above conditions is mixed and has affected the design of the row-column hybrid storage solution for PolarDB.

Under the architecture of the plug-in storage engine framework of MySQL, the simplest solution to add column storage support is to implement a separate storage engine, like ColumnStore of Infobright and MariaDB. PolarDB uses the solution of implementing column storage as a secondary index of InnoDB. This is mainly based on the following considerations:

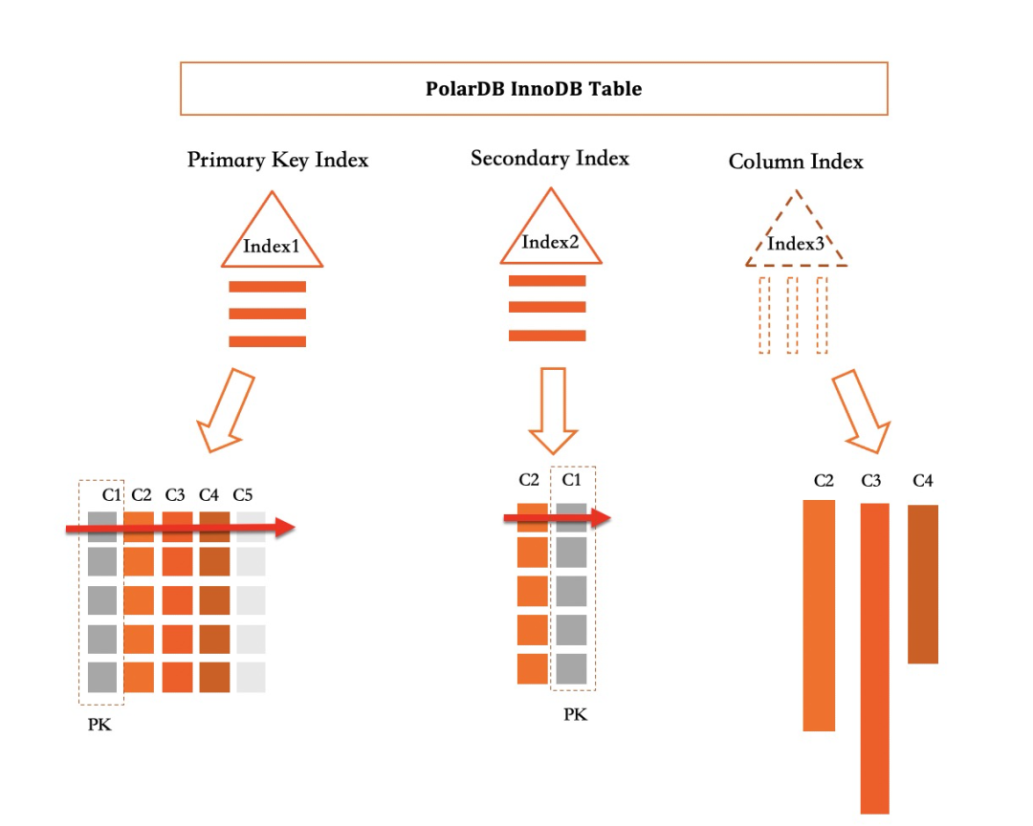

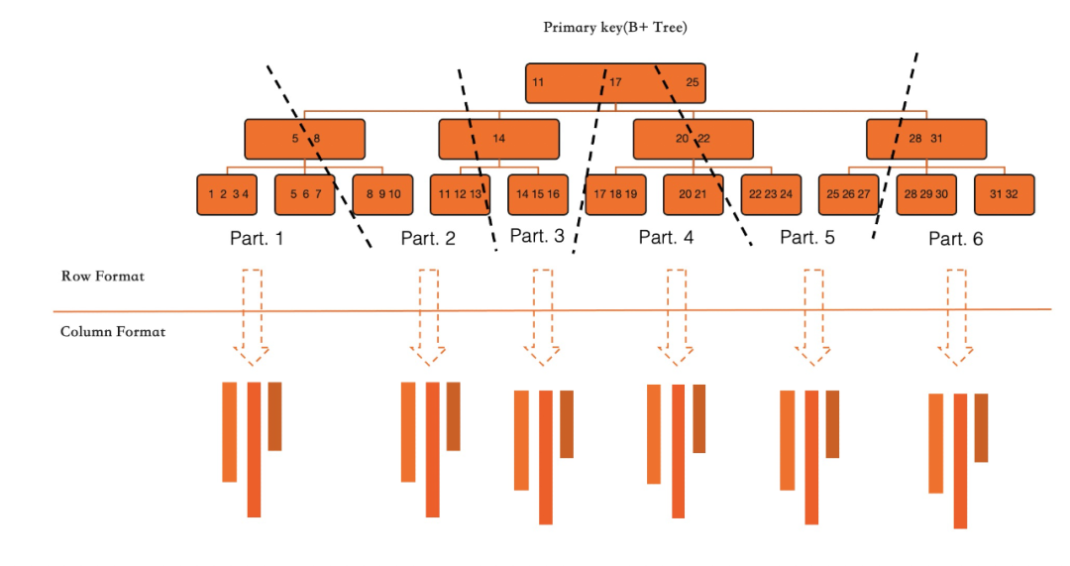

As shown in the above figure, all primary indexes and secondary indexes in PolarDB are implemented as a B+ tree. The column index is an index by definition, but it is a virtual index used to capture the addition, deletion, and modification operations on the covered columns by the index.

For the above table, the primary index contains five columns (C1, C2, C3, C4, C5) of data, and the secondary index contains two columns (C2, C1) of data. In the common secondary index, C2 and C1 are encoded into one row and stored in the B+ tree. The column storage index contains three columns (C2, C3, C4) of data. In actual physical storage, the three columns are split and stored independently. Each column is converted into column storage format according to their writing order.

Another advantage of implementing column storage as a secondary index is that the engineering implementation of the executor is very simple. The concept of covering index already exists in MySQL, that is, all columns required by a query are stored in a secondary index. The data in this secondary index can be directly used to meet the query requirements. Compared with the primary index, the use of a secondary index can greatly reduce the amount of data read and thus improve the query performance. When all columns required for a query are covered by column indexes, the acceleration of column storage can improve query performance by dozens or even hundreds of times.

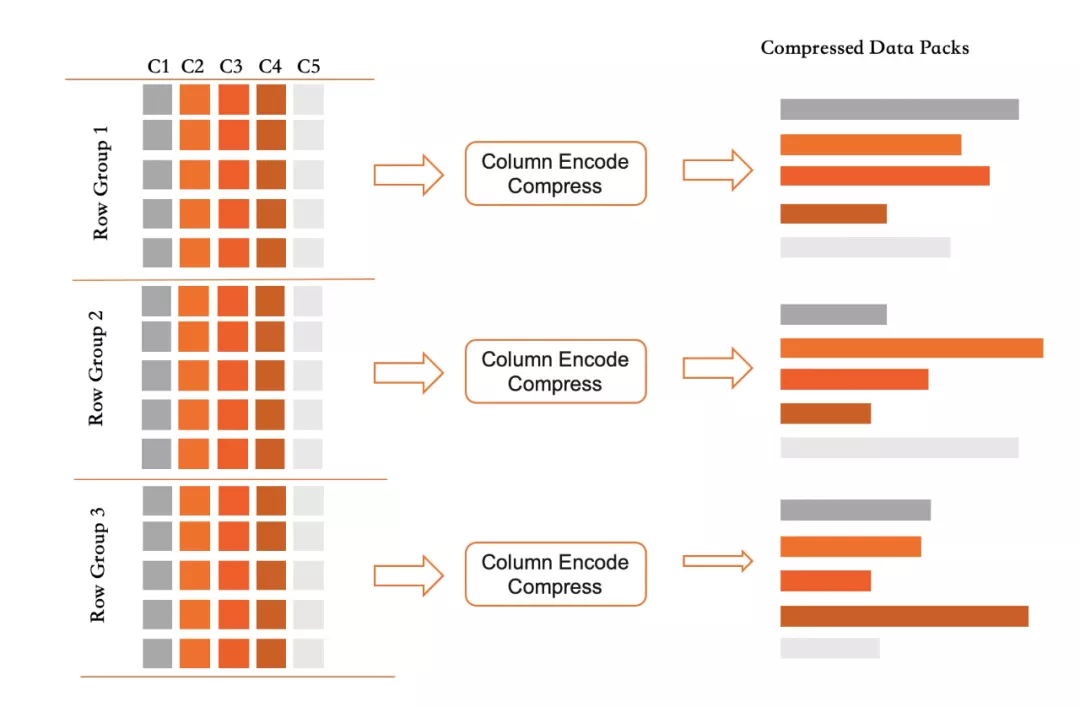

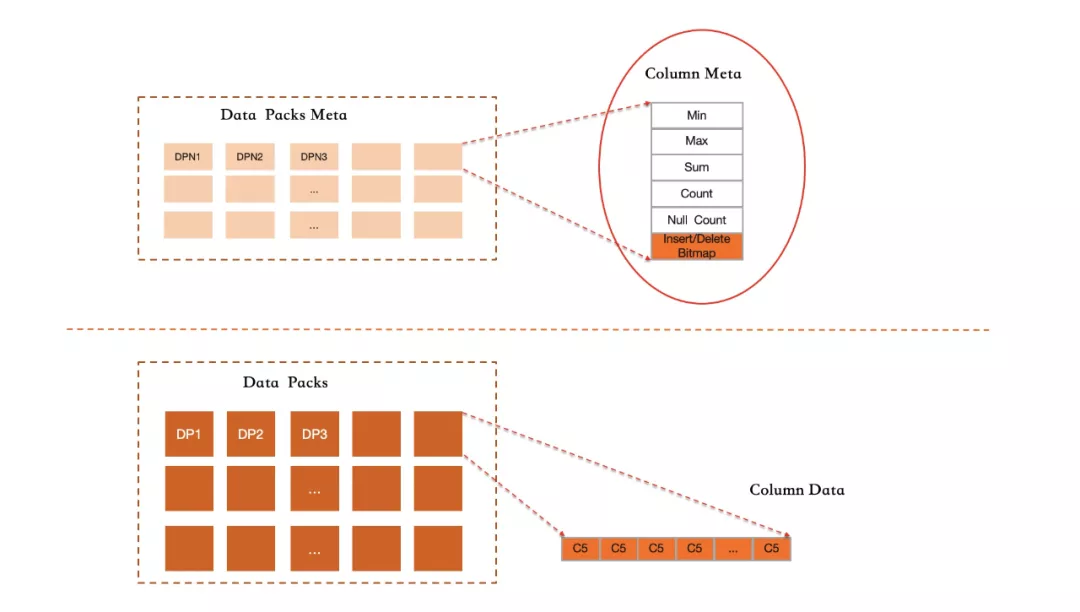

For each column in ColumnIndex, its storage uses the unordered and append write format, combined with label deletion and background asynchronous compaction to realize space reclamation. Its specific implementation has the following key points:

This data organization method meets the requirement of batch scanning and filtering by columns of analytic queries. Besides, the impact on TP transaction operation is very small. The write operation only needs to append write to the memory by column, and the delete operation only needs to set a deletion label. The update operation is a deletion label combined with an append write. Column storage can support transaction-level updates while not reducing the OLTP performance.

The row-to-column operation occurs in two cases. The first case is to use DDL statements to create column indexes for some columns (generally, this is because the business has new analytic requirements for an existing table). You need to scan the data in the entire table to create column indexes. Another case is that, during the transaction operation, row-to-column operations are performed on columns involved in this process in real time.

In the case of the full-table row-to-column, we use parallel scanning to scan primary keys of InnoDB and convert all the columns involved into column storage format in turn. This operation is very fast, only limited by the I/O throughput speed and available CPU resources to the server. This operation is an online-DDL process and does not block the running of online services.

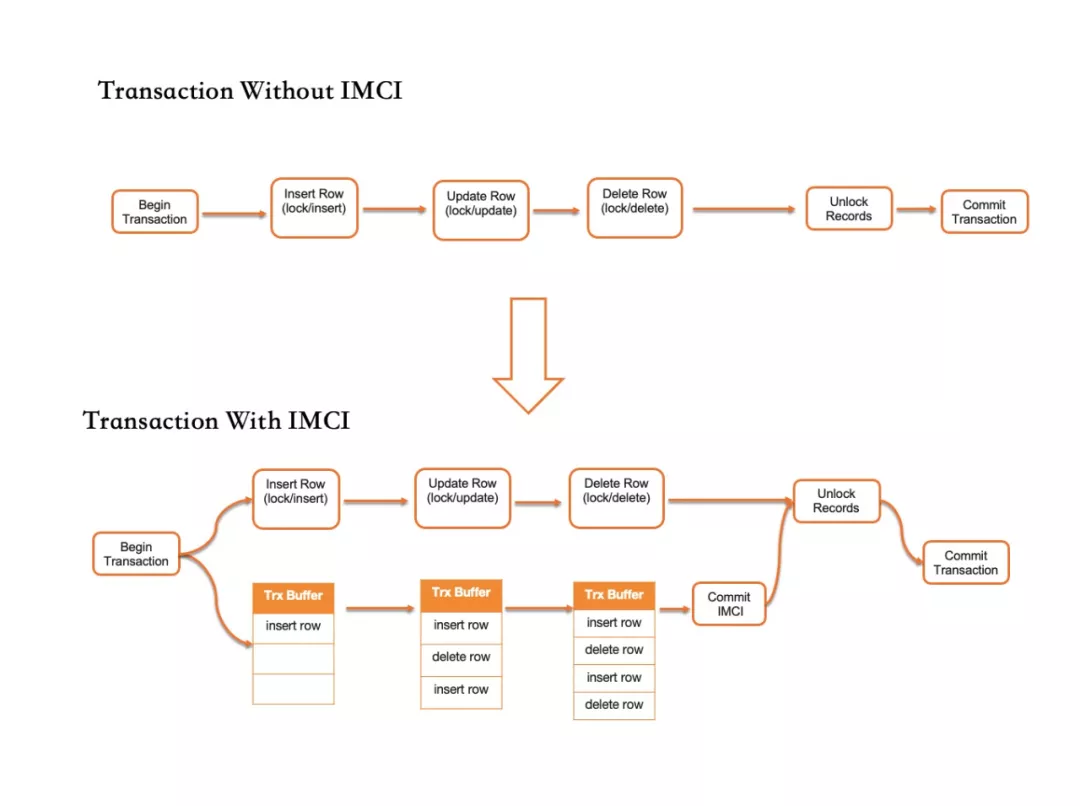

After the column index is established on a table, all updated transactions will update the row-stored and column-stored data simultaneously to ensure the transactional consistency of the two. The following figure demonstrates the difference when IMCI function is disabled and enabled. When the IMCI function is not enabled, updates to all rows by the transaction are locked first. Then, the data page is modified. All locked records are scaled before the transaction is submitted. After the IMCI function is enabled, the transaction system will create an update cache for column storage. When all data pages are modified, the modification operations of the column storage are recorded. Before the transaction submission ends, the update cache will be applied to the column storage system.

Under this implementation, the column storage provides the same level of transaction isolation as the row storage does. For each write operation, each row in RowGroup records the ID of the transaction that modifies the row. For each deletion label operation, the transaction ID of the operation is also recorded. By writing and deleting transaction IDs, AP queries can obtain a globally consistent snapshot in a very lightweight way.

As can be seen from the storage format of the preceding columns, all Datapacks in IMCI are unordered and adopt append write method. So, it is impossible to filter out data that does not meet the requirement as accurately as normal ordered indexes of InnoDB. In IMCI, we use statistics to filter data blocks to reduce the unit price of data access.

The rough index scheme based on statistics is not very friendly for some queries that need to accurately locate some data. However, in a row-column hybrid storage engine, column indexes only need to assist in accelerating queries that involve the scanning of a large amount of data. In this scenario, columns have significant advantages. For SQL that only access a small amount of data, the optimizer usually calculates based on the cost model to get a lower-cost solution based on row store.

The row-column hybrid storage of PolarDB supports both AP queries and TP queries in one instance. However, many businesses have high OLTP loads, and sudden OLAP loads may interfere with the response latency of TP businesses. Therefore, it is necessary to support load isolation in HTAP databases. With the write-once-read-many architecture of PolarDB, we can easily isolate AP loads and TP loads. Under the technical architecture of PolarDB, we have the following deployment modes:

In addition to the above different deployment architectures that support resource isolation, in PolarDB, Automatic Degree of Parallelism (Auto DOP) is supported in some large queries that need to be executed in parallel. This mechanism takes into account the current system load and available CPU and memory resources and limits the resources used by a single query. This aims to avoid a single query consuming too many resources and affecting the processing of other requests.

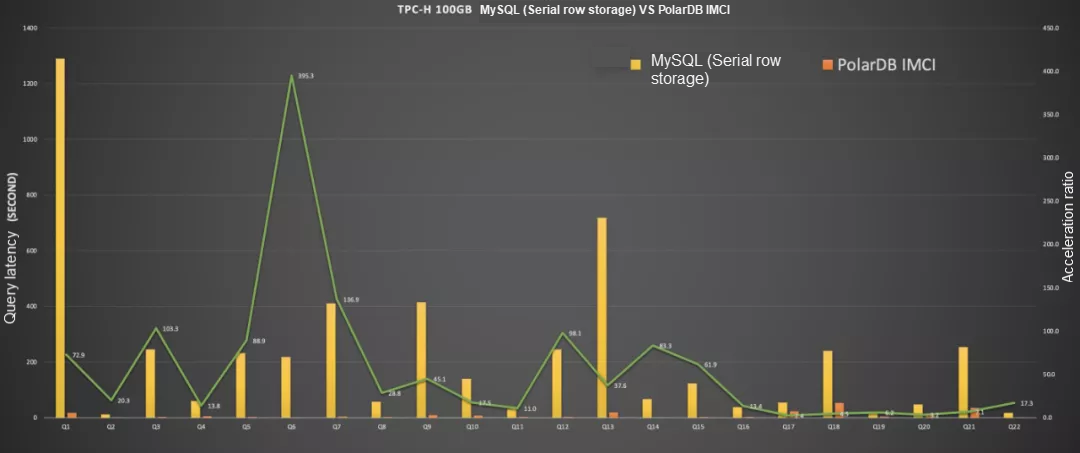

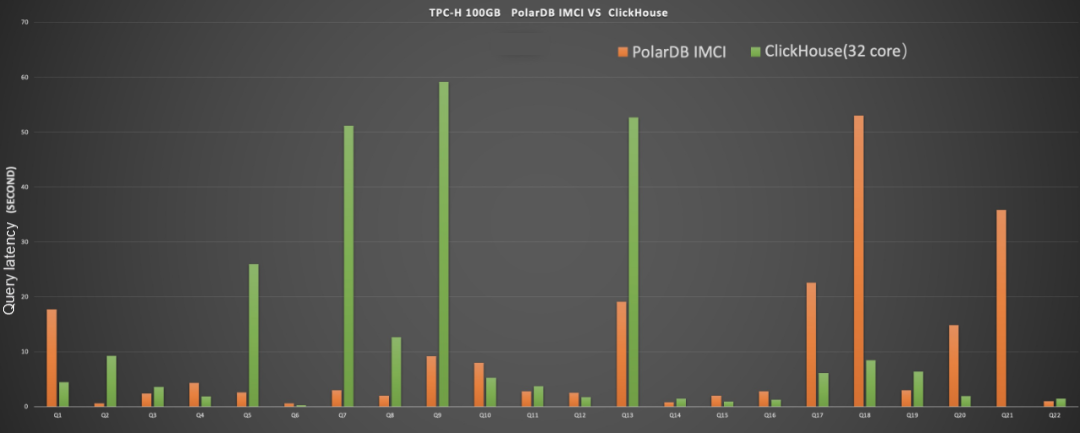

To verify the effect of IMCI technology, we tested the PolarDB MySQL IMCI in TPC-H scenarios. We compared it with the native execution engine of row storage of MySQL and ClickHouse. ClickHouse has the highest standalone performance among OLAP engines. The test conditions are as follows:

In the TPC-H scenario, the IMCI processing latency of all 22 queries is dozens to hundreds of times lower than that of native MySQL. As shown in the figure, Q6 is nearly 400 times better. This reflects the great advantage of IMCI.

The performance of IMCI in TPC-H scenario is basically at the same level as the performance of ClickHouse, currently the hottest analytic database in the technical community. The performance of processing latency for some SQL requests varies. Users can use IMCI to replace ClickHouse, and its data management is more convenient.

IMCI is the first step for PolarDB to enter the data analysis market, and its iteration will not stop. In the future, we will dive deep into the following directions to bring a better experience to our customers.

ApsaraDB - January 4, 2024

ApsaraDB - November 28, 2022

ApsaraDB - November 21, 2023

ApsaraDB - April 10, 2024

ApsaraDB - November 18, 2025

ApsaraDB - April 20, 2026

PolarDB for MySQL

PolarDB for MySQL

Alibaba Cloud PolarDB for MySQL is a cloud-native relational database service 100% compatible with MySQL.

Learn More PolarDB for PostgreSQL

PolarDB for PostgreSQL

Alibaba Cloud PolarDB for PostgreSQL is an in-house relational database service 100% compatible with PostgreSQL and highly compatible with the Oracle syntax.

Learn More PolarDB for Xscale

PolarDB for Xscale

Alibaba Cloud PolarDB for Xscale (PolarDB-X) is a cloud-native high-performance distributed database service independently developed by Alibaba Cloud.

Learn More Quick BI

Quick BI

A new generation of business Intelligence services on the cloud

Learn MoreMore Posts by ApsaraDB