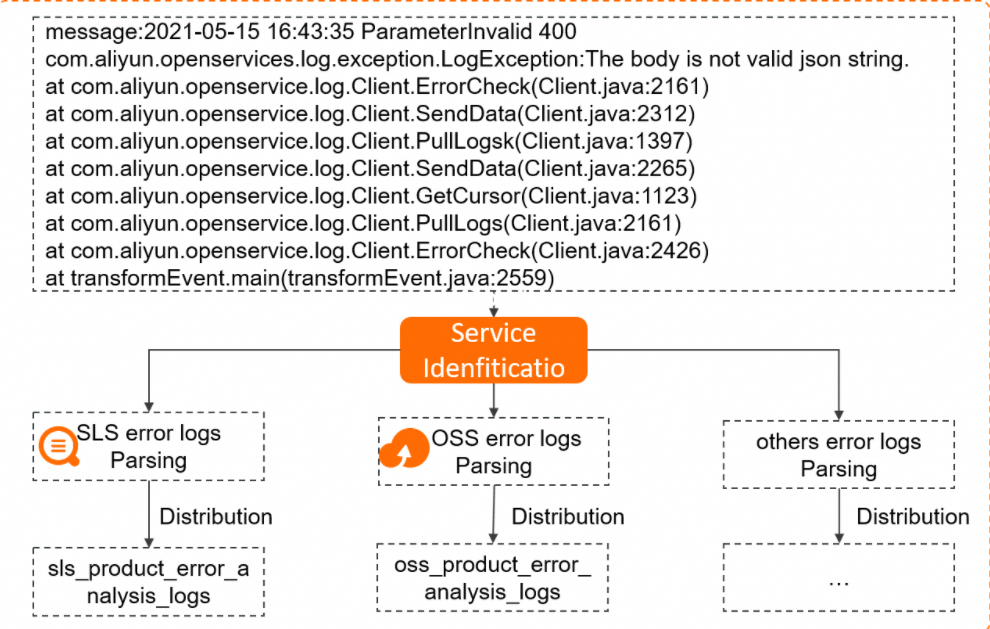

In high-concurrency big data scenarios, analyzing Java error logs effectively helps reduce the operations and maintenance (O&M) costs of your Java applications. Simple Log Service can collect the Java error logs that your application generates when it calls Alibaba Cloud services, and then parse the collected logs by using the data transformation feature.

Prerequisites

You have collected the Java error logs of SLS, OSS, SLB, and RDS and stored them in a Logstore named cloud_product_error_log. For more information, see Use Logtail to collect logs.

Scenarios

For example, you have developed a Java application named Application A that uses multiple Alibaba Cloud services, such as OSS and Simple Log Service. You have created a Logstore named cloud_product_error_log in the China (Hangzhou) region to store Java error logs that are generated when you call the API operations of the Alibaba Cloud services. To efficiently fix Java errors, you can use Simple Log Service to analyze the Java error logs at regular intervals.

To meet these requirements, you can parse the log time, error code, status code, service name, error message, request method, and error line number from the collected logs. Then, you can send the parsed logs to the Logstore of each cloud service for error analysis.

The following example shows a raw log:

__source__:192.0.2.10

__tag__:__client_ip__:203.0.113.10

__tag__:__receive_time__:1591957901

__topic__:

message: 2021-05-15 16:43:35 ParameterInvalid 400

com.aliyun.openservices.log.exception.LogException:The body is not valid json string.

at com.aliyun.openservices.log.Client.ErrorCheck(Client.java:2161)

at com.aliyun.openservices.log.Client.SendData(Client.java:2312)

at com.aliyun.openservices.log.Client.PullLogsk(Client.java:1397)

at com.aliyun.openservices.log.Client.SendData(Client.java:2265)

at com.aliyun.openservices.log.Client.GetCursor(Client.java:1123)

at com.aliyun.openservices.log.Client.PullLogs(Client.java:2161)

at com.aliyun.openservices.log.Client.ErrorCheck(Client.java:2426)

at transformEvent.main(transformEvent.java:2559)

Overall Procedure

Logtail collects the error logs of Application A and stores them in the cloud_product_error_log Logstore. Then, the error logs are transformed, and the transformed logs are sent to the Logstore of each cloud service for error analysis. The overall procedure is as follows:

-

Design a data transformation statement: Analyze the transformation logic and write a transformation statement.

-

Create a data transformation job: Send logs to different Logstores of cloud services for error analysis.

-

Query and analyze data: Analyze error logs in the Logstore of each cloud service.

Step 1: Design a Data Transformation Statement

Transformation Procedure

To make the error logs easy to analyze, perform the following operations:

-

Extract the log time, error code, status code, service name, error message, request method, and error line number from the message field.

-

Send error logs to the Logstore of each cloud service.

Transformation Logic Analysis

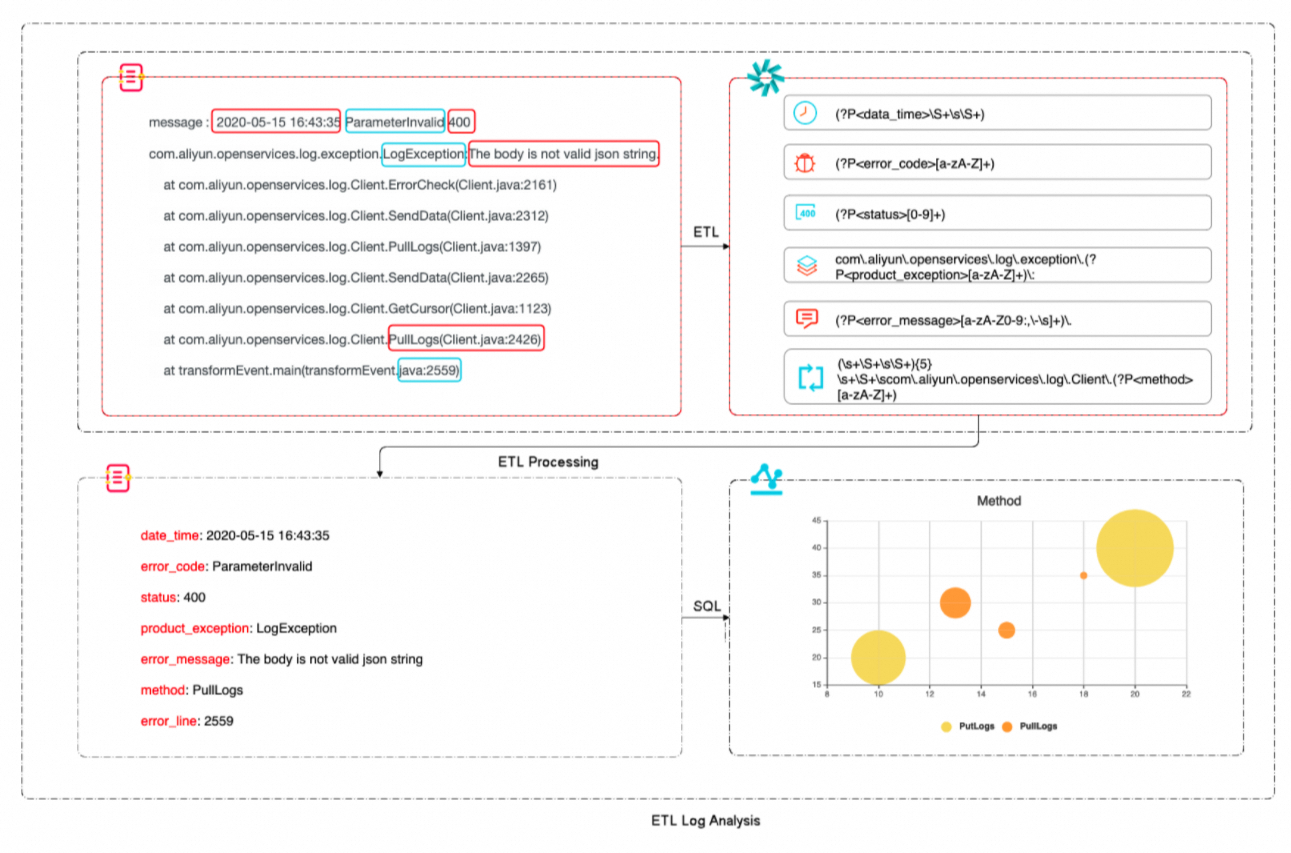

Identify where the log time, error code, status code, service name, error message, request method, and error line number appear in the message field of the raw log. Then, design a regular expression that extracts each of these fields.
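You can test a pattern locally before you paste it into the statement. The following Python sketch applies the Simple Log Service pattern from the transformation statement to the sample message. It uses the standard `re` module, which supports the same `(?P<name>...)` named-group syntax. The sketch makes two assumptions. First, the stack frames use the `com.aliyun.openservices.log` package, which is the package in the exception line and the one the pattern expects. Second, the frame-skipping quantifier is `{6}` rather than `{5}`, because the sample has seven `Client` frames before `transformEvent.main`. `parse_sls_error` is a hypothetical helper. Local `re` behavior may also differ in edge cases from the data transformation engine.

```python
import re

# The `message` value of the sample raw log. Assumption: the stack frames use
# the com.aliyun.openservices.log package, which the pattern requires.
MESSAGE = (
    "2021-05-15 16:43:35 ParameterInvalid 400\n"
    "com.aliyun.openservices.log.exception.LogException:"
    "The body is not valid json string.\n"
    "at com.aliyun.openservices.log.Client.ErrorCheck(Client.java:2161)\n"
    "at com.aliyun.openservices.log.Client.SendData(Client.java:2312)\n"
    "at com.aliyun.openservices.log.Client.PullLogsk(Client.java:1397)\n"
    "at com.aliyun.openservices.log.Client.SendData(Client.java:2265)\n"
    "at com.aliyun.openservices.log.Client.GetCursor(Client.java:1123)\n"
    "at com.aliyun.openservices.log.Client.PullLogs(Client.java:2161)\n"
    "at com.aliyun.openservices.log.Client.ErrorCheck(Client.java:2426)\n"
    "at transformEvent.main(transformEvent.java:2559)"
)

# The Simple Log Service pattern from the statement, split for readability.
SLS_PATTERN = re.compile(
    r"(?P<data_time>\S+\s\S+)\s(?P<error_code>[a-zA-Z]+)\s(?P<status>[0-9]+)"
    r"\scom\.aliyun\.openservices\.log\.exception\.(?P<product_exception>[a-zA-Z]+)"
    r"\:(?P<error_message>[a-zA-Z0-9:,\-\s]+)\."
    # Skip six "at ..." frames; the seventh Client frame supplies the method.
    r"(\s+\S+\s\S+){6}"
    r"\s+\S+\scom\.aliyun\.openservices\.log\.Client\.(?P<method>[a-zA-Z]+)\S+"
    r"\s+\S+\stransformEvent\.main\(transformEvent\.java\:(?P<error_line>[0-9]+)\)"
)


def parse_sls_error(message):
    """Return the named groups as a dict, or None if the pattern does not match."""
    match = SLS_PATTERN.search(message)
    return match.groupdict() if match else None


print(parse_sls_error(MESSAGE))
```

On the sample message, the sketch extracts `data_time` 2021-05-15 16:43:35, `error_code` ParameterInvalid, `status` 400, `product_exception` LogException, `error_message` The body is not valid json string, `method` ErrorCheck, and `error_line` 2559. With `{5}`, the same pattern does not match this eight-frame stack trace.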

Syntax Details

-

Use the regex_match function to check whether the message field contains LogException or OSSException. For more information, see regex_match.

-

Use the e_switch function to route each log. If the message field matches LogException, the log is parsed with the rule for Simple Log Service error logs. If it matches OSSException, the log is parsed with the rule for OSS error logs. For more information, see e_switch.

-

Use the e_regex function to parse error logs for each cloud service. For more information, see e_regex.

-

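Use the e_drop_fields function to delete the message field and the e_output function to deliver the log to the Logstore of the corresponding product. Within each branch, the e_compose function runs these operations in sequence. For more information, see e_drop_fields and e_output.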

-

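The regular expressions use named groups, such as (?P<data_time>...), to name the extracted fields. For more information, see Regular expressions - Group.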
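The control flow of the statement can be approximated in plain Python to make the order of operations explicit. This sketch is an analogy, not how Simple Log Service runs the job. Each branch pairs a condition, which plays the role of `regex_match`, with an extraction pattern, which plays the role of `e_regex`. After extraction, the `message` field is dropped (`e_drop_fields`) and a target name is returned (`e_output`). The first matching branch wins, as with the condition-operation pairs of `e_switch`. The extraction patterns are abbreviated prefixes of the statement's patterns, and `BRANCHES` and `transform` are hypothetical names. The sketch does not model how the service handles a log that matches no branch; it simply returns such a log unchanged with no target.

```python
import re

# Each branch: (condition, extraction pattern, target name).
# The extraction patterns are abbreviated prefixes of the statement's patterns.
BRANCHES = [
    (re.compile(r"LogException"),
     re.compile(r"(?P<data_time>\S+\s\S+)\s(?P<error_code>[a-zA-Z]+)\s(?P<status>[0-9]+)"),
     "sls-error"),
    (re.compile(r"OSSException"),
     re.compile(r"(?P<data_time>\S+\s\S+)\scom\.aliyun\.oss\.(?P<product_exception>[a-zA-Z]+)"),
     "oss-error"),
]


def transform(event):
    """Mimic e_switch/e_compose: the first branch whose condition matches wins."""
    message = event.get("message", "")
    for condition, extractor, target in BRANCHES:
        if condition.search(message):          # regex_match(v("message"), ...)
            out = dict(event)
            match = extractor.search(message)  # e_regex("message", ...)
            if match:
                out.update(match.groupdict())
            out.pop("message", None)           # e_drop_fields("message")
            return target, out                 # e_output(target)
    return None, event  # no branch matched: left unchanged in this sketch
```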

Transformation Syntax Analysis

The following data transformation statement uses regular expressions to parse Simple Log Service error logs and OSS error logs:

e_switch(

regex_match(v("message"), r"LogException"),

e_compose(

e_regex(

"message",

r"(?P<data_time>\S+\s\S+)\s(?P<error_code>[a-zA-Z]+)\s(?P<status>[0-9]+)\scom\.aliyun\.openservices\.log\.exception\.(?P<product_exception>[a-zA-Z]+)\:(?P<error_message>[a-zA-Z0-9:,\-\s]+)\.(\s+\S+\s\S+){6}\s+\S+\scom\.aliyun\.openservices\.log\.Client\.(?P<method>[a-zA-Z]+)\S+\s+\S+\stransformEvent\.main\(transformEvent\.java\:(?P<error_line>[0-9]+)\)",

),

e_drop_fields("message"),

e_output("sls-error"),

),

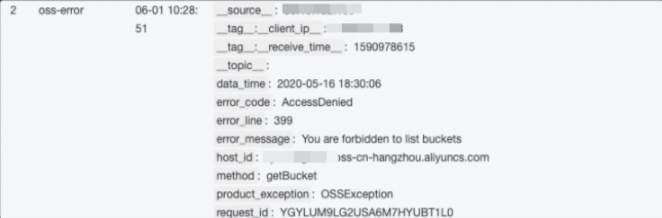

regex_match(v("message"), r"OSSException"),

e_compose(

e_regex(

"message",

r"(?P<data_time>\S+\s\S+)\scom\.aliyun\.oss\.(?P<product_exception>[a-zA-Z]+)\:(?P<error_message>[a-zA-Z0-9,\s]+)\.\n\[ErrorCode\]\:\s(?P<error_code>[a-zA-Z]+)\n\[RequestId\]\:\s(?P<request_id>[a-zA-Z0-9]+)\n\[HostId\]\:\s(?P<host_id>[a-zA-Z-.]+)\n\S+\n\S+(\s\S+){3}\n\s+\S+\s+(.+)(\s+\S+){24}\scom\.aliyun\.oss\.OSSClient\.(?P<method>[a-zA-Z]+)\S+\s+\S+\stransformEvent\.main\(transformEvent\.java:(?P<error_line>[0-9]+)\)",

),

e_drop_fields("message"),

e_output("oss-error"),

),

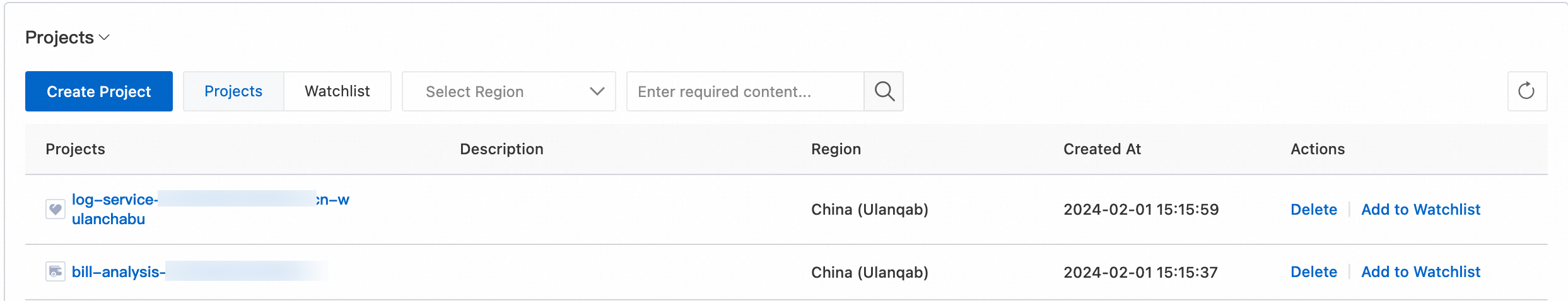

)

Step 2: Create a Data Transformation Job

-

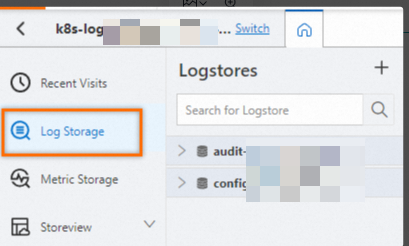

Go to the data transformation page.

In the Projects section, click the project you want.

On the tab, click the Logstore that you want.

-

On the query and analysis page, click Data Transformation.

-

In the top-right corner of the page, select a time range.

Make sure that log data exists on the Raw Logs tab.

-

In the code editor, enter the following data transformation statement:

e_switch(

regex_match(v("message"), r"LogException"),

e_compose(

e_regex(

"message",

r"(?P<data_time>\S+\s\S+)\s(?P<error_code>[a-zA-Z]+)\s(?P<status>[0-9]+)\scom\.aliyun\.openservices\.log\.exception\.(?P<product_exception>[a-zA-Z]+)\:(?P<error_message>[a-zA-Z0-9:,\-\s]+)\.(\s+\S+\s\S+){6}\s+\S+\scom\.aliyun\.openservices\.log\.Client\.(?P<method>[a-zA-Z]+)\S+\s+\S+\stransformEvent\.main\(transformEvent\.java\:(?P<error_line>[0-9]+)\)",

),

e_drop_fields("message"),

e_output("sls-error"),

),

regex_match(v("message"), r"OSSException"),

e_compose(

e_regex(

"message",

r"(?P<data_time>\S+\s\S+)\scom\.aliyun\.oss\.(?P<product_exception>[a-zA-Z]+)\:(?P<error_message>[a-zA-Z0-9,\s]+)\.\n\[ErrorCode\]\:\s(?P<error_code>[a-zA-Z]+)\n\[RequestId\]\:\s(?P<request_id>[a-zA-Z0-9]+)\n\[HostId\]\:\s(?P<host_id>[a-zA-Z-.]+)\n\S+\n\S+(\s\S+){3}\n\s+\S+\s+(.+)(\s+\S+){24}\scom\.aliyun\.oss\.OSSClient\.(?P<method>[a-zA-Z]+)\S+\s+\S+\stransformEvent\.main\(transformEvent\.java:(?P<error_line>[0-9]+)\)",

),

e_drop_fields("message"),

e_output("oss-error"),

),

)

-

Click Preview Data.

-

Create a data transformation job.

-

Click Save as Transformation Job.

-

In the Create Data Transformation Job panel, configure the parameters and click OK. The following table describes the parameters.

| Parameter | Description |
| --- | --- |
| Job Name | The name of the data transformation job. Example: test. |
| Authorization Method | Select Default Role to read data from the source Logstore. |
| Storage Target: Target Name | The name of the storage destination. Example: sls-error or oss-error. The name must match a name passed to e_output in the statement, so configure one storage target for sls-error and one for oss-error. |
| Storage Target: Target Region | The region where the destination project resides. Example: China (Hangzhou). |
| Storage Target: Target Project | The name of the destination project that stores the data transformation results. |
| Storage Target: Target Logstore | The name of the destination Logstore that stores the data transformation results. Example: sls-error or oss-error. |
| Storage Target: Authorization Method | Select Default Role to write transformation results to the destination Logstore. |
| Processing Range: Time Range | Select All. |
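The statement sends logs with `e_output("sls-error")` and `e_output("oss-error")`. The job therefore presumably needs storage targets whose Target Name values match those strings, as the examples in the table suggest. The following Python sketch is a hypothetical pre-flight check that lists any `e_output` name that has no configured target. `STATEMENT`, `TARGETS`, and `missing_targets` are illustrative names, and `<your-project>` is a placeholder for your destination project.

```python
import re

# Abbreviated copy of the statement; only the e_output calls matter here.
STATEMENT = '''
e_switch(
    regex_match(v("message"), r"LogException"),
    e_compose(e_drop_fields("message"), e_output("sls-error")),
    regex_match(v("message"), r"OSSException"),
    e_compose(e_drop_fields("message"), e_output("oss-error")),
)
'''

# Hypothetical storage targets: Target Name -> (Target Project, Target Logstore).
TARGETS = {
    "sls-error": ("<your-project>", "sls-error"),
    "oss-error": ("<your-project>", "oss-error"),
}


def missing_targets(statement, targets):
    """Return e_output names used in the statement that have no configured target."""
    used = set(re.findall(r'e_output\(\s*(?:name\s*=\s*)?"([^"]+)"', statement))
    return sorted(used - set(targets))


print(missing_targets(STATEMENT, TARGETS))  # [] when every name is configured
```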

After you create a data transformation job, Simple Log Service creates a dashboard for the job by default. You can view the metrics of the job on the dashboard.

On the Exception Details chart, you can view the logs that failed to be parsed and adjust the regular expression accordingly.

-

If a log that fails to be parsed is reported with the WARNING severity, the data transformation job continues to run.

-

If an error is reported with the ERROR severity, the data transformation job stops. In this case, identify the cause of the error and modify the regular expression until the job can parse all required types of error logs.
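The difference between WARNING and ERROR resembles a common choice in batch parsing: record the failure and continue, or stop at the first failure. The following Python sketch is only a local analogy, not the service's implementation. `parse_batch` and its `strict` flag are hypothetical. The non-strict mode collects the failed messages, which gives you the examples you need to refine the regular expression.

```python
import logging
import re

logger = logging.getLogger("transform_sketch")


def parse_batch(messages, pattern, strict=False):
    """Parse each message with a compiled pattern.

    strict=False: log a warning for each failure and continue, similar to a
    WARNING-level report that lets the job keep running.
    strict=True: raise on the first failure, similar to an ERROR-level report
    that stops the job.
    """
    parsed, failed = [], []
    for message in messages:
        match = pattern.search(message)
        if match:
            parsed.append(match.groupdict())
            continue
        if strict:
            raise ValueError(f"cannot parse: {message[:60]!r}")
        logger.warning("cannot parse: %r", message[:60])
        failed.append(message)
    return parsed, failed
```

For example, with the pattern `(?P<status>[0-9]{3})`, the message `status 400` is parsed, and `no code here` is returned in the failed list.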


Step 3: Analyze Error Log Data

After the raw error logs are transformed, you can analyze them. This example analyzes only the Java error logs of Simple Log Service.

In the Projects section, click the project that you want.

On the tab, click the Logstore that you want. In this example, click the sls-error Logstore.

-

Enter a query statement in the search box.

-

Count errors per method call.

* | SELECT COUNT(method) AS m_ct, method GROUP BY method

-

Find the most frequent error messages for the PutLogs method.

* | SELECT error_message, COUNT(error_message) AS ct_msg, method WHERE method LIKE 'PutLogs' GROUP BY error_message, method ORDER BY ct_msg DESC

-

Count occurrences of each error code.

* | SELECT error_code, COUNT(error_code) AS count_code GROUP BY error_code

-

Build an error timeline to view API call errors in near real time.

* | SELECT date_parse(data_time, '%Y-%m-%d %H:%i:%s') AS date_time, status, product_exception, error_line, error_message, method ORDER BY date_time DESC


-

Click 15 Minutes (Relative) to set the query and analysis time range.

You can select a relative time or a time frame. You can also specify a custom time range.

Note: The margin of error for query results is up to 1 minute.

-

Click Query/Analyze to view the query and analysis results.
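To sanity-check the SQL results on a small sample, you can express the same aggregations in Python over parsed records. This sketch assumes that each record is a dict containing the fields extracted in Step 1 (`data_time`, `error_code`, `error_message`, `method`). The function names are hypothetical, and the code does not call Simple Log Service.

```python
from collections import Counter
from datetime import datetime


def errors_per_method(rows):
    """Like COUNT(method) ... GROUP BY method."""
    return Counter(row["method"] for row in rows)


def top_error_message(rows, method):
    """Most frequent error_message for one method as (message, count), or None."""
    counts = Counter(row["error_message"] for row in rows if row["method"] == method)
    return counts.most_common(1)[0] if counts else None


def errors_per_code(rows):
    """Like COUNT(error_code) ... GROUP BY error_code."""
    return Counter(row["error_code"] for row in rows)


def timeline(rows):
    """Rows sorted newest first by data_time, such as '2021-05-15 16:43:35'."""
    return sorted(
        rows,
        key=lambda row: datetime.strptime(row["data_time"], "%Y-%m-%d %H:%M:%S"),
        reverse=True,
    )
```

Python's `strptime` uses `%M` for minutes, but the MySQL-style format specifiers of the SQL date functions use `%i`. In both, `%m` means month.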