Realtime Compute for Apache Flink provides built-in profiling tools that help you diagnose performance bottlenecks in running deployments at the JVM level. Use flame graphs to pinpoint CPU hot spots, inspect memory allocation across JVM spaces, and analyze thread behavior through sampling and thread dumps.

Prerequisites

Before you begin, make sure that you have:

Namespace-level permissions for Realtime Compute for Apache Flink granted to your Alibaba Cloud account or Resource Access Management (RAM) user. For details, see Grant namespace permissions.

Limitations

Only Ververica Runtime (VVR) 4.0.11 or later supports deployment performance monitoring.

Performance data is available only for running deployments. Historical deployment data is not retained.

Choose a tool

Start with the tool that matches the symptom you are investigating.

| Symptom | Tool | What it shows |
| --- | --- | --- |
| High CPU usage or slow throughput | Flame Graph | CPU-intensive methods and their call stacks |
| Suspected memory pressure | Flame Graph (Alloc mode) or Memory | Memory allocation by function, or JVM memory usage by space |
| Thread contention or suspected deadlocks | Flame Graph (Lock mode) or Threads | Lock contention patterns, or per-thread stack traces via sampling |
| Need a full snapshot of all thread states | Thread Dump | All thread stacks at a single point in time |

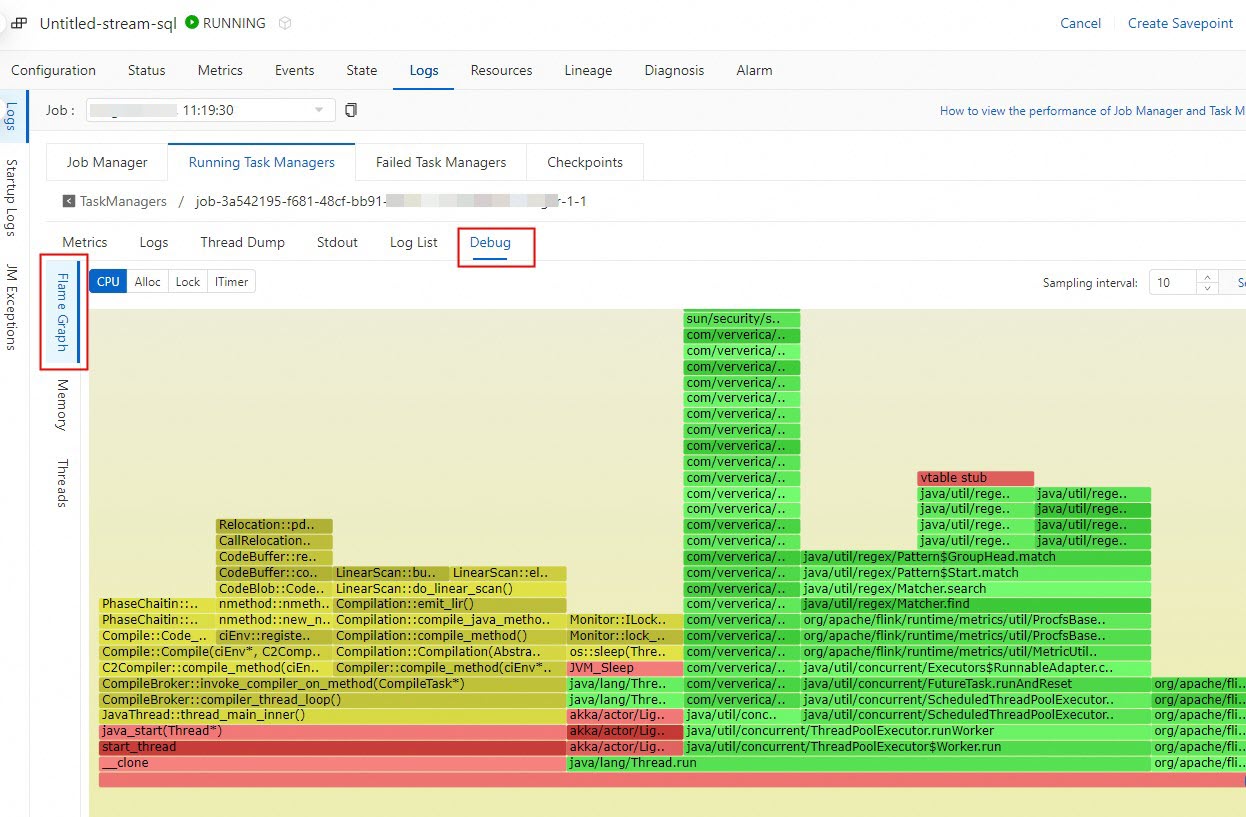

Flame Graph

Flame graphs visualize call stacks as layered horizontal bars. Each bar represents a stack frame: the bottom layer is the application entry point, and higher layers represent deeper function calls. Wider bars indicate more CPU time consumed by that function, making them the primary signal for performance bottlenecks.

For background on flame graph concepts, see Flame Graphs in the Apache Flink documentation.
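To make the width rule concrete, here is a minimal Python sketch of how sampled stacks could be folded into frame widths. The function name `frame_widths` and the sample stacks are hypothetical illustrations. They are not output from the console and do not describe the profiler's actual implementation.

```python
from collections import Counter


def frame_widths(samples):
    """Return each frame's width as a fraction of all samples.

    `samples` is a list of call stacks, each ordered from the entry
    point (bottom of the graph) to the innermost call (top).
    """
    total = len(samples)
    counts = Counter()
    for stack in samples:
        # A frame is identified by its full path from the root, so the same
        # method reached through different callers gets a separate bar.
        for depth in range(1, len(stack) + 1):
            counts[tuple(stack[:depth])] += 1
    return {path: n / total for path, n in counts.items()}


# Hypothetical samples, not taken from a real deployment.
samples = [
    ["Task.run", "MapOperator.processElement", "Parser.parse"],
    ["Task.run", "MapOperator.processElement", "Parser.parse"],
    ["Task.run", "MapOperator.processElement", "Serializer.write"],
    ["Task.run", "Mailbox.poll"],
]

widths = frame_widths(samples)

# Print the frames layer by layer, widest first within each layer.
for path, share in sorted(widths.items(), key=lambda kv: (len(kv[0]), -kv[1])):
    print("  " * (len(path) - 1) + f"{path[-1]}: {share:.0%}")
```

In this sketch the entry point always spans the full width because every sample passes through it, while a frame higher in the stack is only as wide as the share of samples that reached it.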

Flame graph modes

| Mode | What it captures |
| --- | --- |
| CPU | Stack traces from actively running threads. Wider frames indicate higher CPU usage. |
| Alloc | Memory allocated by each function. Identifies functions that generate the most heap pressure. |
| Lock | Lock contention and deadlock patterns. Highlights functions waiting to acquire locks. |
| ITimer | CPU consumption across all threads within a sampling interval. Similar to CPU mode but does not require |

Identify bottlenecks with flame graphs

Look for wide frames. A wide frame means the corresponding function consumes a significant share of CPU time relative to other functions. This is the most common indicator of a hot spot.

Check frame frequency. Stack frames that appear repeatedly across samples indicate functions that are called frequently, which may cause cumulative performance degradation.

Interpret vertical position. Wide frames near the bottom of the graph suggest issues in the main application path or entry-point code. Wide frames near the top suggest issues within a specific function deep in the call stack.

Optimize the hot spots. After identifying problematic functions, review the corresponding code. Common optimizations include reducing loop iterations, improving data structures, and minimizing synchronization operations.

Compare before and after. After applying optimizations, generate a new flame graph and compare it against the previous one to verify that the bottleneck is resolved.
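The before-and-after comparison can also be done numerically. The sketch below assumes you have the profile data in the "collapsed" stack text format used by common flame graph tooling (frames separated by semicolons, followed by a sample count). Whether the console lets you export data in this format is an assumption, and the function names and sample data are hypothetical.

```python
from collections import Counter


def inclusive_share(collapsed_text):
    """Map each method to the fraction of samples in which it appears."""
    per_method = Counter()
    total = 0
    for line in collapsed_text.strip().splitlines():
        if not line.strip():
            continue
        # The sample count is the last space-separated token on the line.
        stack, _, count = line.strip().rpartition(" ")
        n = int(count)
        total += n
        # Count a method once per stack, even if recursion repeats it.
        for method in set(stack.split(";")):
            per_method[method] += n
    return {m: n / total for m, n in per_method.items()}


def compare(before, after):
    """Return (method, before_share, after_share), largest drop first."""
    b, a = inclusive_share(before), inclusive_share(after)
    rows = [(m, b.get(m, 0.0), a.get(m, 0.0)) for m in set(b) | set(a)]
    return sorted(rows, key=lambda r: r[2] - r[1])


# Hypothetical profiles, not taken from a real deployment.
before = """
Task.run;Map.process;Parser.parse 60
Task.run;Map.process;Serializer.write 30
Task.run;Mailbox.poll 10
"""
after = """
Task.run;Map.process;Parser.parse 20
Task.run;Map.process;Serializer.write 30
Task.run;Mailbox.poll 10
"""

rows = compare(before, after)
for method, old, new in rows:
    print(f"{method:20s} {old:6.1%} -> {new:6.1%}")
```

Shares are relative to the total sample count of each profile, so a method that did not change can still show a larger share after another method shrinks. Compare absolute sample counts as well if the two profiles cover different durations.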

Flame graphs are generated from sampled data and may not capture the complete execution context. For more accurate diagnosis, use flame graphs alongside the other performance tools described in this topic.

Non-Java functions appear as "unknown" in flame graphs. For details, see the async-profiler discussion.

Memory

The Memory tab shows memory usage across different JVM spaces (heap, non-heap, metaspace, and others). Use this view to identify memory leaks, excessive garbage collection, or JVM spaces approaching their limits.

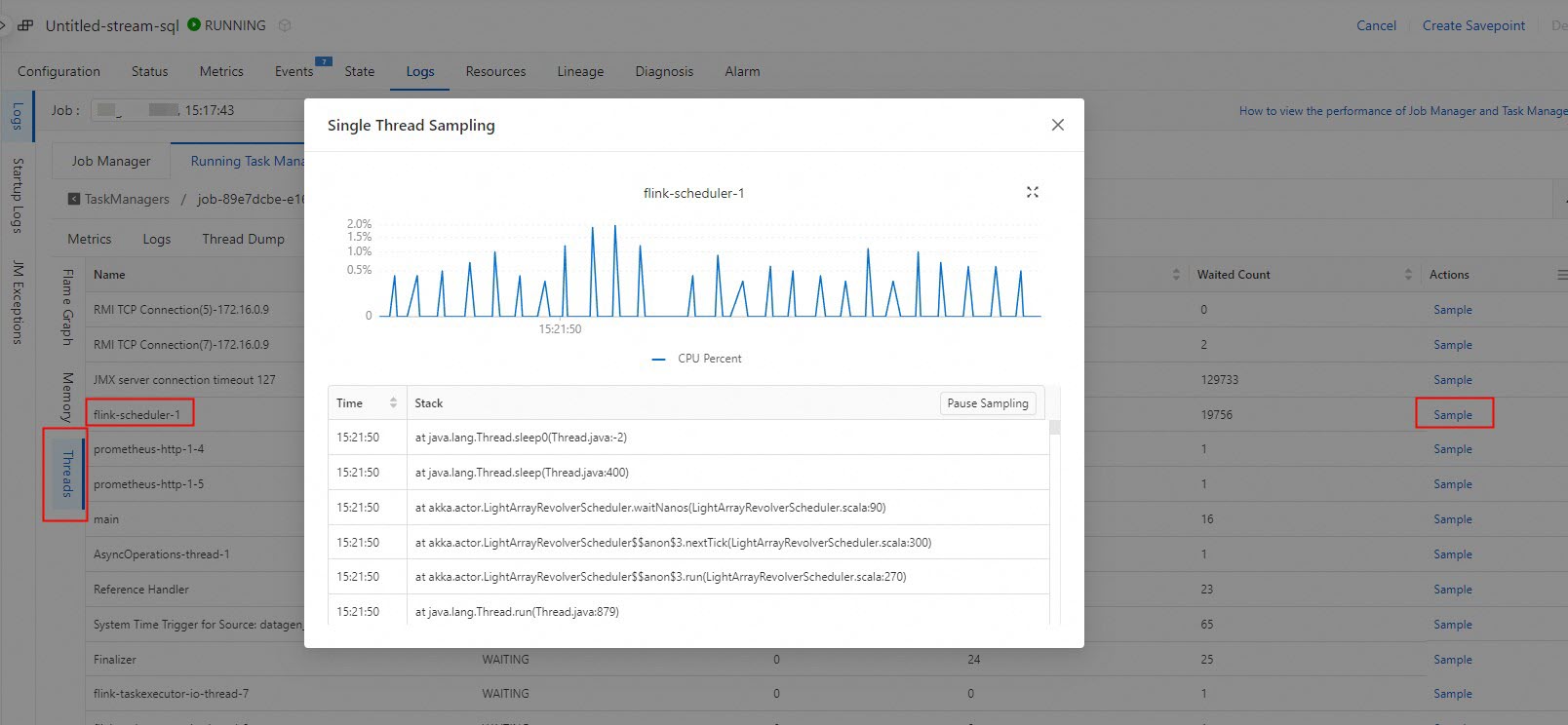
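If you record the values shown on the Memory tab, a check for spaces approaching their limits could look like the following sketch. The function name `spaces_near_limit`, the 85% threshold, and all numbers are hypothetical and chosen only for illustration.

```python
def spaces_near_limit(usage, threshold=0.85):
    """Return (space, ratio) pairs whose used/max ratio meets the threshold.

    `usage` maps a space name to a (used_bytes, max_bytes) tuple.
    """
    flagged = []
    for space, (used, maximum) in usage.items():
        if maximum <= 0:
            # The JVM reports -1 when a pool has no defined maximum,
            # so a ratio cannot be computed for it.
            continue
        ratio = used / maximum
        if ratio >= threshold:
            flagged.append((space, ratio))
    return sorted(flagged, key=lambda item: item[1], reverse=True)


MIB = 1024 * 1024

# Hypothetical readings, not taken from a real deployment.
usage = {
    "heap": (1800 * MIB, 2048 * MIB),
    "metaspace": (120 * MIB, 256 * MIB),
    "non-heap": (300 * MIB, -1),
}

flagged = spaces_near_limit(usage)
for space, ratio in flagged:
    print(f"{space}: {ratio:.1%} of maximum")
```

A single high reading is not proof of a leak. Usage that stays near the limit across several readings, even after garbage collection, is the stronger signal.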

Threads

Thread sampling captures stack traces from individual threads over a time window, helping you understand what each thread is doing during a performance issue.

Sample a thread

Open the Debug tab for the component that you want to inspect:

JobManager: On the Logs tab, click the Job Manager tab, then click Debug.

TaskManager: On the Logs tab, click the Running Task Managers tab, click the value in the Path, ID column, then click Debug.

On the Threads tab, find the operator to inspect and click Sample in the Actions column. Wait for sampling to complete, then review the thread stacks.

The following example shows thread stacks that access Gemini state.

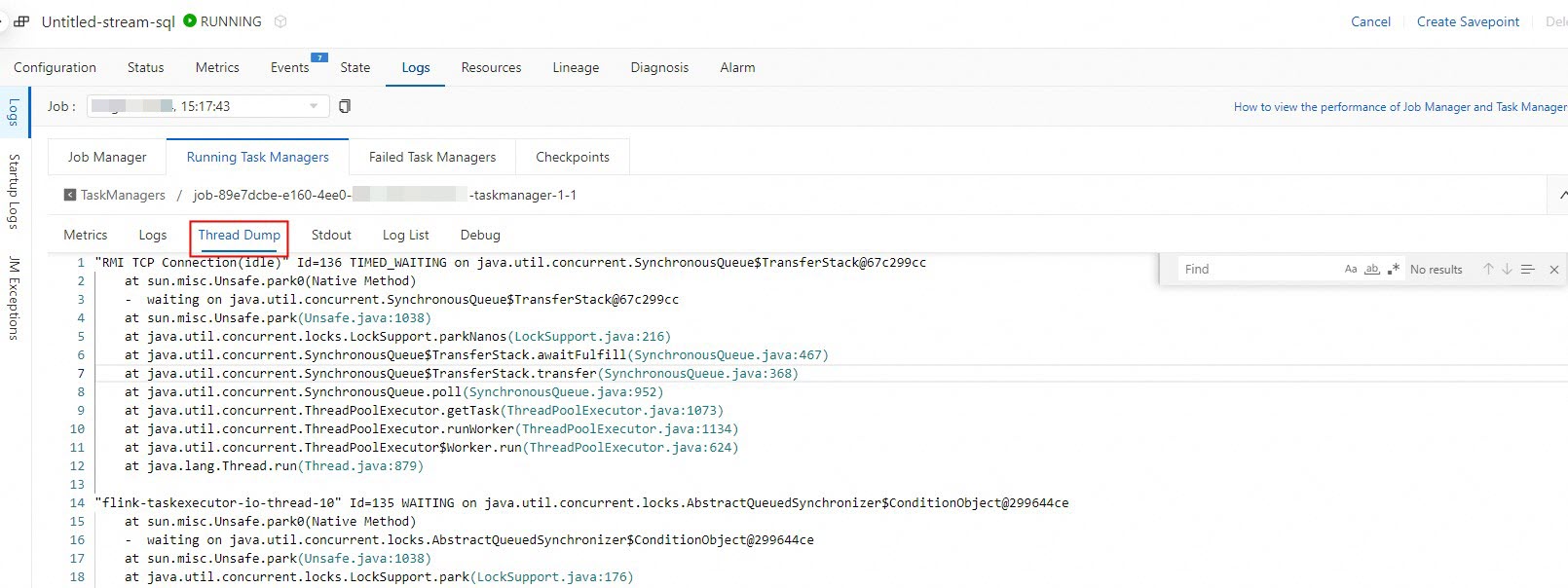

Thread Dump

A thread dump captures the state of every thread at a single point in time. Use thread dumps to detect deadlocks, identify blocked threads, or verify state backend interactions.

Capture a thread dump

On the Logs tab, click the Running Task Managers tab, then click the value in the Path, ID column.

Click the Thread Dump tab.

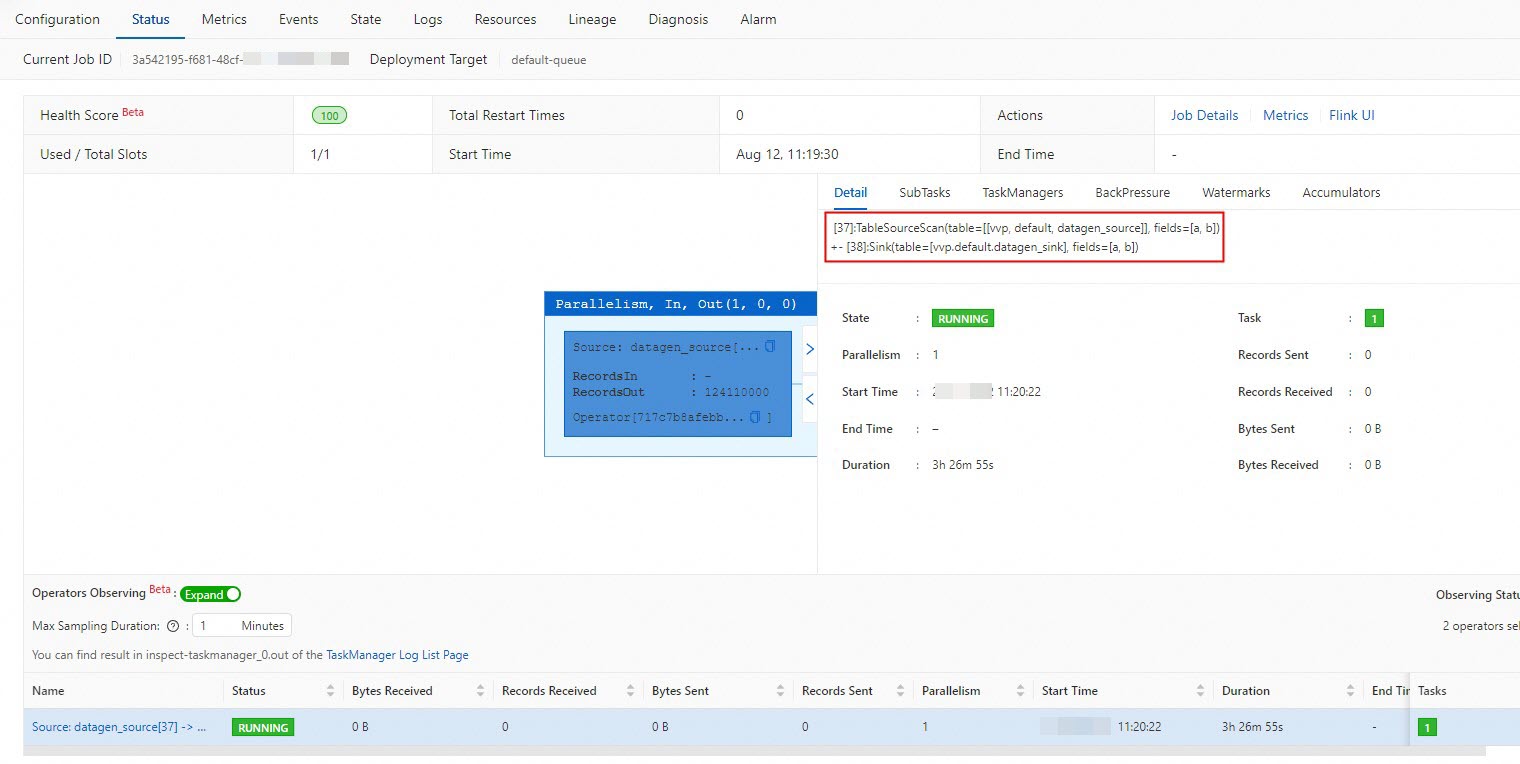

Search for the name of the operator that processes state data. You can find the operator name on the Status tab.

Check whether thread stacks that involve GeminiStateBackend or RocksDBStateBackend appear under the operator.
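If you copy the thread dump text out of the console, the same search can be scripted. The sketch below assumes each thread starts with a header line of the form `"name" Id=N STATE`, followed by `at ...` frame lines, which is the layout produced by Java's `ThreadInfo.toString()`. The exact layout shown in the console may differ. The sample dump, the operator name, and the marker strings `Gemini` and `RocksDB` are hypothetical.

```python
import re
from collections import Counter

# Header line, for example: "my-thread" Id=71 RUNNABLE
HEADER = re.compile(
    r'^"(?P<name>.+)"(?:\s+daemon)?(?:\s+prio=\d+)?\s+Id=(?P<id>\d+)\s+(?P<state>[A-Z_]+)'
)


def parse_thread_dump(text):
    """Split a dump into threads with a name, a state, and stack frames."""
    threads = []
    current = None
    for line in text.splitlines():
        match = HEADER.match(line)
        if match:
            current = {"name": match["name"], "state": match["state"], "frames": []}
            threads.append(current)
        elif current is not None and line.strip().startswith("at "):
            current["frames"].append(line.strip()[3:])
    return threads


def state_backend_threads(threads, operator, markers=("Gemini", "RocksDB")):
    """Return threads of `operator` whose stacks mention a state backend."""
    hits = []
    for thread in threads:
        if operator not in thread["name"]:
            continue
        if any(m in frame for frame in thread["frames"] for m in markers):
            hits.append(thread)
    return hits


# Hypothetical dump, not taken from a real deployment.
dump = '''
"KeyedProcess -> Sink: out (1/2)#0" Id=71 RUNNABLE
    at org.rocksdb.RocksDB.get(Native Method)
    at org.apache.flink.contrib.streaming.state.RocksDBValueState.value(RocksDBValueState.java:89)

"Source: events (1/2)#0" Id=70 TIMED_WAITING
    at java.lang.Thread.sleep(Native Method)

"Checkpoint Timer" Id=33 BLOCKED on java.lang.Object@1a2b3c
    at com.example.Cache.refresh(Cache.java:42)
'''

threads = parse_thread_dump(dump)
states = Counter(t["state"] for t in threads)
hits = state_backend_threads(threads, "KeyedProcess")

print(dict(states))
for thread in hits:
    print(thread["name"], thread["state"], thread["frames"][0])
```

Counting threads by state gives a quick view of how many are BLOCKED or waiting, and the filtered list narrows the dump to the operator threads that are inside state backend calls at the moment of the snapshot.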

References

Perform intelligent deployment diagnostics: Monitor deployment health and identify stability issues automatically.

Optimize Flink SQL: Improve deployment performance through configuration tuning and Flink SQL optimization.